Slides for Introduction to Stochastic Search and Optimization (ISSO) by J. C. Spall

CHAPTER 12

STATISTICAL METHODS FOR OPTIMIZATION IN DISCRETE PROBLEMS

• Organization of chapter in ISSO

– Basic problem in multiple comparisons

• Finite number of elements in search domain

– Tukey–Kramer test

– “Many-to-one” tests for sharper analysis

• Measurement noise variance known

• Measurement noise variance unknown (estimated)

– Ranking and selection methods

Background

• Statistical methods used here to solve optimization

problem

– Not just for evaluation purposes

• Extending standard pairwise t-test to multiple

comparisons

• Let $\Theta = \{\theta_1, \theta_2, \ldots, \theta_K\}$ be finite search space ($K$ possible options)

• Optimization problem is to find the $j$ such that $\theta^* = \theta_j$

• Only have noisy measurements of $L(\theta_i)$


Applications with Monte Carlo

Simulations

• Suppose wish to evaluate K possible options in a

real system

– Too difficult to use real system to evaluate options

• Suppose run Monte Carlo simulation(s) for each of

the K options

• Compare options based on a performance measure (or loss function) $L(\theta)$ representing average (mean) performance

– $\theta$ represents options that can be varied

– Monte Carlo simulations produce noisy

measurement of loss function L at each option
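The setting above can be sketched in a few lines of Python. This is only an illustration under stated assumptions: `simulate_loss`, the list `TRUE_LOSS`, and the unit-variance Gaussian noise are hypothetical stand-ins for a real Monte Carlo simulation, and the true mean losses would be unknown in practice.

```python
import random
from statistics import fmean

# Hypothetical true mean losses for K = 4 options (unknown in a real problem).
TRUE_LOSS = [1.0, 1.5, 0.8, 2.0]

def simulate_loss(option, rng):
    """One Monte Carlo run: a noisy measurement of the loss at the given option."""
    return TRUE_LOSS[option] + rng.gauss(0.0, 1.0)  # assumed N(0, 1) noise

def sample_means(n_options, n_runs, rng):
    """Average n_runs noisy measurements at each option."""
    return [fmean(simulate_loss(i, rng) for _ in range(n_runs))
            for i in range(n_options)]

rng = random.Random(0)
means = sample_means(len(TRUE_LOSS), 1000, rng)
# Naive choice: the option with the smallest sample mean.
best = min(range(len(means)), key=means.__getitem__)
```

Picking the smallest sample mean ignores the noise in the estimates; the tests on the following slides ask whether the observed differences are statistically meaningful.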


Statistical Hypothesis Testing

• Null hypothesis: All options in $\Theta = \{\theta_1, \theta_2, \ldots, \theta_K\}$ are effectively the same in the sense that $L(\theta_1) = L(\theta_2) = \cdots = L(\theta_K)$

• Challenge in multiple comparisons: alternative hypothesis is not unique

– Contrasts with standard pairwise t-test

• Analogous to standard t-test, hypothesis testing based on collecting sample values of $L(\theta_1), L(\theta_2), \ldots, L(\theta_K)$ and forming sample means $\bar{L}_1, \bar{L}_2, \ldots, \bar{L}_K$


Tukey–Kramer Test

• Tukey (1953) and Kramer (1956) independently

developed popular multiple comparisons analogue to

standard t-test

• Recall null hypothesis that all options in $\Theta = \{\theta_1, \theta_2, \ldots, \theta_K\}$ are effectively the same in the sense that $L(\theta_1) = L(\theta_2) = \cdots = L(\theta_K)$

• Tukey–Kramer test forms multiple acceptance intervals for the $K(K-1)/2$ differences of sample means $\delta_{ij} \equiv \bar{L}_i - \bar{L}_j$

– Intervals require sample variance calculation based on samples at all K options

• Null hypothesis is accepted if evidence suggests all differences $\delta_{ij}$ lie in their respective intervals

– Null hypothesis is rejected if evidence suggests at least one $\delta_{ij}$ lies outside its respective interval
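A minimal sketch of the accept/reject rule, assuming the standard Tukey–Kramer half-width $q\sqrt{(s^2/2)(1/n_i + 1/n_j)}$ with $s^2$ the pooled sample variance over all K options. The function name `tukey_kramer` and the toy data are hypothetical. The studentized-range critical value `q_crit` is supplied by the user; the value 4.34 below is the 5% point for 3 groups and 6 error degrees of freedom as read from a standard table, and should be checked against your own table or software.

```python
from itertools import combinations
from math import sqrt
from statistics import fmean

def tukey_kramer(samples, q_crit):
    """Check every pairwise difference of sample means against its acceptance interval.

    samples: list of K lists of noisy loss measurements (one list per option).
    q_crit:  studentized-range critical value for (K, N - K) degrees of freedom.
    Returns a list of tuples (i, j, diff, half_width, inside_interval).
    """
    k = len(samples)
    n_total = sum(len(s) for s in samples)
    means = [fmean(s) for s in samples]
    # Pooled sample variance uses the samples at all K options.
    sse = sum((y - m) ** 2 for s, m in zip(samples, means) for y in s)
    s2 = sse / (n_total - k)
    results = []
    for i, j in combinations(range(k), 2):
        diff = means[i] - means[j]
        half = q_crit * sqrt(s2 / 2.0 * (1.0 / len(samples[i]) + 1.0 / len(samples[j])))
        results.append((i, j, diff, half, abs(diff) <= half))
    return results

# Toy data (hypothetical): three options, three measurements each.
samples = [[1.0, 2.0, 3.0], [2.0, 3.0, 4.0], [10.0, 11.0, 12.0]]
results = tukey_kramer(samples, q_crit=4.34)
# Reject the null hypothesis if any difference falls outside its interval.
reject_null = not all(inside for *_, inside in results)
```

With equal sample sizes all intervals share one half-width, which is why a single interval can serve every pair in a balanced design.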


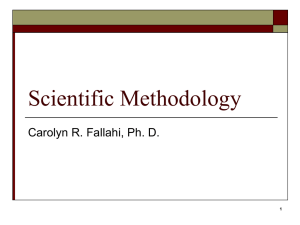

Example: Widths of 95% Acceptance Intervals Increasing with K in Tukey–Kramer Test ($n_1 = n_2 = \cdots = n_K = 10$)


Example of Tukey–Kramer Test

(Example 12.2 in ISSO)

• Goal: With K = 4, test null hypothesis $L(\theta_1) = L(\theta_2) = L(\theta_3) = L(\theta_4)$ based on 10 measurements at each $\theta_i$

• All (six) differences $\delta_{ij} = \bar{L}_i - \bar{L}_j$ must lie in acceptance intervals [–1.23, 1.23]

• Find that $\delta_{34} = 1.72$

– Have $\delta_{34} \notin$ [–1.23, 1.23]

• Since at least one $\delta_{ij}$ is not in acceptance interval, reject null hypothesis

• Conclude at least one $\theta_i$ likely better than others

– Further analysis required to find $\theta_i$ that is better


Multiple Comparisons Against One Candidate

• Assume prior information suggests one of K points is optimal, say $\theta_m$

• Reduces number of comparisons from $K(K-1)/2$ differences $\delta_{ij} = \bar{L}_i - \bar{L}_j$ to only $K-1$ differences $\delta_{mj}$

• Under null hypothesis, $L(\theta_m) \ge L(\theta_j)$ for all j

• Aim to reject null hypothesis

– Implies that $L(\theta_m) < L(\theta_j)$ for at least some j

• Tests based on critical values $\bar{\delta}_{mj} < 0$ for observed differences $\delta_{mj}$

• To show that $L(\theta_m) < L(\theta_j)$ for all j requires additional analysis


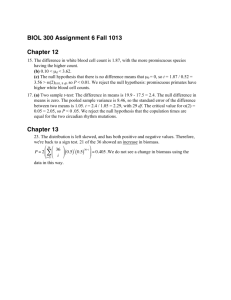

Example of Many-to-One Test with

Known Variances (Example 12.3 in ISSO)

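• Suppose K = 4, m = 2; need to compute 3 critical values $\bar{\delta}_{21}$, $\bar{\delta}_{23}$, and $\bar{\delta}_{24}$ for acceptance regions $\{\delta_{2j} \ge \bar{\delta}_{2j}\}$

• Valid to take $\bar{\delta}_{21} = \bar{\delta}_{23} = \bar{\delta}_{24}$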

• Under Bonferroni/Chebyshev: 3.96

• Under Bonferroni/normal noise: 1.10

• Under Slepian/normal noise: 1.09

• Note tighter (smaller) acceptance regions when assuming

normal noise
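To see why the normal-noise bounds are tighter, the following sketch computes a negative critical value under each of the three approaches for a known standard deviation of the observed difference. It is an illustration, not the calculation in ISSO: the function name `critical_values` and the input `sd_diff = 0.5` are hypothetical, the Chebyshev bound is assumed to be the one-sided form $P(X - \mu \le -k\sigma) \le 1/(1+k^2)$, and the Slepian case is assumed to use the per-comparison level $1 - (1-\alpha)^{1/(K-1)}$.

```python
from math import sqrt
from statistics import NormalDist

def critical_values(sd_diff, n_comparisons, alpha=0.05):
    """Negative critical values for one observed difference under three bounds.

    sd_diff:       known standard deviation of the observed difference
                   (assumed the same for every comparison).
    n_comparisons: number of many-to-one comparisons, K - 1.
    """
    per_test = alpha / n_comparisons  # Bonferroni split of the error probability
    # One-sided Chebyshev (assumed form): solve 1 / (1 + k^2) = per_test for k.
    cheb_k = sqrt(1.0 / per_test - 1.0)
    # Normal noise with the Bonferroni split.
    bonf_z = NormalDist().inv_cdf(1.0 - per_test)
    # Normal noise with the product (Slepian) bound (assumed form).
    slep_z = NormalDist().inv_cdf((1.0 - alpha) ** (1.0 / n_comparisons))
    return {
        "bonferroni_chebyshev": -cheb_k * sd_diff,
        "bonferroni_normal": -bonf_z * sd_diff,
        "slepian_normal": -slep_z * sd_diff,
    }

crit = critical_values(sd_diff=0.5, n_comparisons=3)  # K = 4, hypothetical sd
```

The distribution-free Chebyshev multiplier is several times larger than either normal multiplier, while the Slepian value is only slightly smaller in magnitude than the Bonferroni/normal value, matching the ordering on the slide.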


Widths of 95% Acceptance Intervals (< 0)

for Tukey-Kramer and Many-to-One Tests

($n_1 = n_2 = \cdots = n_K = 10$)

Ranking and Selection:

Indifference Zone Methods

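• Consider usual problem of determining best of K possible options, represented $\theta_1, \theta_2, \ldots, \theta_K$

• Have noisy loss measurements $y_k(\theta_i)$

• Suppose analyst is willing to accept any $\theta_i$ such that $L(\theta_i)$ is in indifference zone $[L(\theta^*), L(\theta^*) + \delta)$

• Analyst can specify $\delta$ such that

$P(\text{correct selection of } \theta_i = \theta^*) \ge 1 - \alpha$

whenever $L(\theta_i) - L(\theta^*) \ge \delta$ for all $\theta_i \ne \theta^*$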

• Can use independent sampling or common random

numbers (see Section 14.5 of ISSO)
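The probability of correct selection can be estimated empirically. The sketch below is not a specific procedure from ISSO: it assumes a simple rule (take a fixed number of independent measurements per option and pick the smallest sample mean), Gaussian noise with known `sigma`, and a configuration in which every non-optimal option sits exactly at the edge of the indifference zone. The name `estimate_pcs` and all numerical settings are hypothetical.

```python
import random
from statistics import fmean

def estimate_pcs(true_losses, sigma, n_per_option, n_trials, rng):
    """Estimate P(correct selection) for the 'smallest sample mean' rule."""
    best = min(range(len(true_losses)), key=true_losses.__getitem__)
    hits = 0
    for _ in range(n_trials):
        # Independent sampling: fresh noise for every option and measurement.
        means = [fmean(rng.gauss(loss, sigma) for _ in range(n_per_option))
                 for loss in true_losses]
        chosen = min(range(len(means)), key=means.__getitem__)
        hits += (chosen == best)
    return hits / n_trials

rng = random.Random(1)
delta = 1.0                           # width of the indifference zone (assumed)
losses = [0.0, delta, delta, delta]   # all other options exactly delta worse
pcs = estimate_pcs(losses, sigma=1.0, n_per_option=20, n_trials=2000, rng=rng)
```

Lowering `n_per_option` or `delta` reduces the estimated probability, which shows the trade-off an analyst faces when choosing the sample size needed to meet a target of $1 - \alpha$.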
