Lecture 13: Hypothesis testing in linear regression models BUEC 333

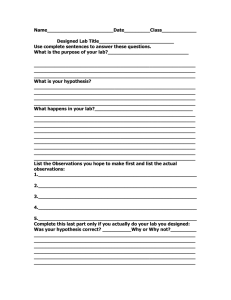

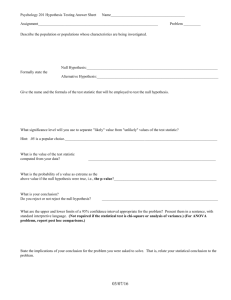

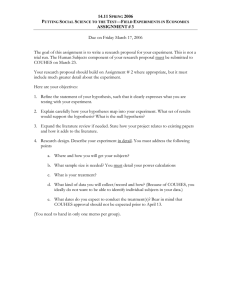

Professor David Jacks

Sampling and hypothesis testing

Previously, we considered the sampling distribution of the least squares estimator. Specifically, we saw that in the regression model

$$Y_i = \beta_0 + \beta_1 X_{1i} + \beta_2 X_{2i} + \dots + \beta_k X_{ki} + \varepsilon_i, \qquad \varepsilon_i \sim N(0, \sigma^2),$$

the OLS estimator $\hat{\beta}_j$ has a normal sampling distribution with mean $\beta_j$ and variance $\mathrm{Var}[\hat{\beta}_j]$. This follows from the fact that a linear function of a normally distributed variable is itself normally distributed (from $\varepsilon_i$ to $Y_i$ to $\hat{\beta}_j$).

We also revisited the Central Limit Theorem. The main implication of the CLT: as the sample size gets larger (technically, as $n \to \infty$), the sampling distribution of the least squares estimator is well approximated by a normal distribution. So even if the errors are not normal, the sampling distribution of the beta-hats is approximately normal in large samples.

The point was to get a sampling distribution for the OLS estimator so that we can do some hypothesis testing. Here, hypothesis testing is the same as always:

1.) we formulate the null and alternative hypotheses that we are interested in and choose a level of significance $\alpha$ for the test.

2.) we construct a test statistic that has a known sampling distribution.

3.) we compare the value of the test statistic to a critical value (associated with our $\alpha$) from the sampling distribution of the test statistic.

4.) if the test statistic is larger than the critical value, it is unlikely that the null is true and we reject it (with Type I error probability $\alpha$).

How this works

Consider the case of IQ tests, which are constructed so that the average score for adults is 100. We would like to know whether university students are smarter than average. In this example, we can take a sample of undergrads at SFU (say, $n = 6$) and try to determine whether the average IQ score for all students at the university is higher than 100 (above average).
The following scores are obtained for the 6 students in the sample: 110, 118, 110, 122, 110, and 150. It can easily be shown that the sample mean is 120 and the variance is 201.33 (dividing by $n$; dividing by $n - 1$ gives 241.6), so it seems to be the case that SFU students are smarter. But is this finding likely to hold in repeated samples?

Enter the t-test:

1.) We form our null and alternative hypotheses as $H_0: \mu \le 100$ versus $H_1: \mu > 100$, and specify a significance level of 5%.

2.) If $H_0$ is true, the t-statistic should be distributed as $t_{n-1} = t_5$.

Think about what this means: if we repeatedly sampled 6 students and calculated a t-statistic each time, a histogram of those values would sketch the sampling distribution of t. If the population mean truly is 100, the most likely value of t is zero, provided our sample of 6 students is truly representative of the population.

3.) & 4.) But we need to be more precise than "our sample does not look like it is representative of the population". That is, our computed t-statistic is large in magnitude, but how large is large enough? We can calculate how often a computed sample t will be far from the population mean of $t = 0$ based on our knowledge of the distribution.

The critical values of the t-distribution tell us exactly how often we should find computed t-statistics of large magnitude. But we also need to know the degrees of freedom (here, df = 5) to make this determination. Why? If we sample only a few students, our computed t-statistics are more likely to be far from zero.

Under a one-tailed test with a significance level of 5% and 5 degrees of freedom, you should find a critical value of $t = 2.02$. Is our computed t-statistic larger than this critical value?
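The comparison can be sketched in a few lines of Python. This is only an illustration under stated assumptions: it uses the standard one-sample t-statistic with the variance divided by n − 1, and the critical value 2.015 is typed in from a t-table rather than computed. The variable names are ours, not the lecture's.

```python
import math
import statistics

# IQ scores of the six sampled students (from the lecture example).
scores = [110, 118, 110, 122, 110, 150]
mu_null = 100        # boundary value of H0: mu <= 100
t_critical = 2.015   # assumed: one-tailed 5% critical value for 5 df, read from a t-table

n = len(scores)
mean = statistics.mean(scores)
var_n = statistics.pvariance(scores)   # divides by n
var_n1 = statistics.variance(scores)   # divides by n - 1

# One-sample t-statistic using the n - 1 variance.
std_err = math.sqrt(var_n1 / n)
t_stat = (mean - mu_null) / std_err

print(f"mean = {mean}, variance (n) = {var_n:.2f}, variance (n - 1) = {var_n1:.2f}")
print(f"t = {t_stat:.2f}, exceeds critical value: {t_stat > t_critical}")
```

With these six scores the statistic comes out at roughly 3.15, comfortably above the critical value.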
Obviously yes… Thus, we reject the null hypothesis that the population mean of IQ scores for students is 100 (or less) at the 5% level of significance.

More precisely, based on our knowledge of the t-distribution, we know that if the null is true, the computed t-statistic should be less than 2.02 in 95% of samples. But there will always be some uncertainty: if we conclude that the population mean intelligence (of students) is higher than 100, how often will we be wrong in this conclusion?

Hypothesis testing and regression

So what kind of hypotheses do we test in a regression context? Well, a lot of things…

1.) simple hypotheses about coefficients; e.g., $H_0: \beta_j = 0$ versus $H_1: \beta_j \ne 0$

2.) hypotheses related to the confidence intervals for coefficients; e.g., $\Pr[L \le \beta_j \le U] = 1 - \alpha$

As it turns out, these are very similar to what we did for population means.

We can also test more complicated hypotheses about the set of regression coefficients; e.g., $H_0: \beta_1 = 0$ and $\beta_2 = 0$ versus $H_1: \beta_1 \ne 0$ and/or $\beta_2 \ne 0$. In the remainder of this lecture, we will see how to do these tests. And in weeks to come, we will see how to test for correct specification, multicollinearity, serial correlation, and heteroskedasticity; that is, whether the classical assumptions are violated.

But before all that, we need to motivate the topic a little more; that is, we can do hypothesis testing, but to what ultimate purpose? Econometrics started out as an exercise in explicitly testing economic theory. For example, does quantity demanded decrease with price, or does international trade induce economic growth?

So far, we have developed the best means to come up with some reasonable guesses (i.e., estimates). Now, we can return to the essence of econometrics and ask what we can learn about the real world from the sample at our disposal.
In particular, hypothesis testing allows us to answer the question of whether our results are likely…

In the context of regression analysis, we can never prove that a theory is correct; all we can do is show that the sample data "fit" the theory. However, we can often reject a hypothesis or theory: "it is very unlikely this sample would have been observed if the theory were true."

Returning to the t-test

We know that if $\varepsilon_i \sim N(0, \sigma^2)$, then $\hat{\beta}_j \sim N(\beta_j, \mathrm{Var}[\hat{\beta}_j])$ for each $j = 0, 1, 2, \dots, k$. We also know how to standardize variables: subtract their mean and divide by their standard deviation so that the resulting distribution is centered around 0 with a variance of 1, or

$$Z = \frac{\hat{\beta}_j - \beta_j}{\sqrt{\mathrm{Var}[\hat{\beta}_j]}} \sim N(0, 1).$$

Suppose we want to test a simple hypothesis like $H_0: \beta_j = \beta_H$ versus $H_1: \beta_j \ne \beta_H$, where $\beta_H$ is some number (unspecified for now). If we knew $\mathrm{Var}[\hat{\beta}_j]$, we could base our test on Z. That is, if $H_0$ is true, then:

$$Z = \frac{\hat{\beta}_j - \beta_H}{\sqrt{\mathrm{Var}[\hat{\beta}_j]}} \sim N(0, 1).$$

If Z is far from zero, then it is unlikely that $H_0$ is true, and we would reject the null. And if Z is close to zero, then there is not enough evidence against $H_0$ to reject the null, so we would fail to reject. And, of course, we can judge whether a particular value of Z is "close" to or "far" from zero using the standard normal distribution.

From the Z to the t

For better or worse, we will never know $\mathrm{Var}[\hat{\beta}_j]$. It is a population quantity and, therefore, we cannot actually use Z for testing. But we can estimate $\mathrm{Var}[\hat{\beta}_j]$. And we have seen this before, in the form of the standard error of $\hat{\beta}_j$,

$$\mathrm{s.e.}(\hat{\beta}_j) = \sqrt{\widehat{\mathrm{Var}}[\hat{\beta}_j]}.$$

Luckily, this is easily calculated by a computer.

So instead, we can base our test on:

$$t = \frac{\hat{\beta}_j - \beta_H}{\mathrm{s.e.}(\hat{\beta}_j)}.$$

When (and only when) $\varepsilon_i \sim N(0, \sigma^2)$, t has a t-distribution with $n - k - 1$ degrees of freedom; or more compactly, $t \sim t_{n-k-1}$ when $\varepsilon_i \sim N(0, \sigma^2)$.
This can be shown using exactly the same kind of argument…

Comments on the t-test

So now we have a test statistic that we can use for testing simple (but informative) hypotheses about regression coefficients. We can (and will) test one- or two-sided hypotheses using this statistic. We can also build confidence intervals for regression coefficients using this statistic, like

$$\Pr[\hat{\beta}_j - t^*_{\alpha/2}\,\mathrm{s.e.}(\hat{\beta}_j) \le \beta_j \le \hat{\beta}_j + t^*_{\alpha/2}\,\mathrm{s.e.}(\hat{\beta}_j)] = 1 - \alpha.$$

All of this is operationally identical to the tests we did for population means. And just like when we were testing population means, we need normality (here, of the error terms) for the t-statistic to follow a t-distribution. If the errors are not normally distributed, we can rely on the CLT to help us out; consequently, in large samples we can still use…

Statistical significance

People often (always?) test whether a particular regression coefficient is "statistically significant". When they say this, they are testing whether $\beta_j$ is statistically different from zero; that is, they are considering $H_0: \beta_j = 0$ versus $H_1: \beta_j \ne 0$. We can test this hypothesis very easily using our trusty t-statistic as, in this case,

$$t = \frac{\hat{\beta}_j}{\mathrm{s.e.}(\hat{\beta}_j)}.$$

This particular hypothesis test is so common that every software package reports the result of this test (and the associated p-value) automatically. For example (slide 30):

          Source |       SS         df       MS          Number of obs =     149
    -------------+------------------------------        F(2, 146)     =  153.60
           Model |  1123.39127       2  561.695635      Prob > F      =  0.0000
        Residual |  533.896273     146  3.65682379      R-squared     =  0.6778
    -------------+------------------------------        Adj R-squared =  0.6734
           Total |  1657.28754     148  11.1978888      Root MSE      =  1.9123

         lntrade |     Coef.   Std. Err.       t    P>|t|    [95% Conf. Interval]
    -------------+---------------------------------------------------------------
       lngdpprod |  1.469513   .1002652    14.66   0.000     1.271355    1.667672
          lndist | -1.713246   .1351385   -12.68   0.000    -1.980326   -1.446165
           _cons |  18.09403   2.530274     7.15   0.000     13.09334    23.09473

1.) Just because we reject the null hypothesis $\beta_j = 0$ does not mean $X_j$ should be in the model.

2.) When n increases, you will automatically get smaller standard errors…adjust $\alpha$ accordingly.

3.) A larger value of the test statistic does not mean a particular independent variable is "more important" in explaining the dependent variable.

The F-test

Suppose we have a regression model with k independent variables. A very common hypothesis to test is $H_0: \beta_1 = \beta_2 = \dots = \beta_k = 0$ versus $H_1$: at least one $\beta_j \ne 0$, where $j = 1, 2, \dots, k$. That is, we are testing the joint hypothesis that all the slope coefficients are zero.

The F-test seeks to answer the question of whether the regression model fits the data well. In particular, the question of interest is whether the regression fits the data better than the sample mean. If $H_0$ is true, then $Y_i = \beta_0 + \varepsilon_i$, and the OLS estimator of $\beta_0$ in this model is simply $\hat{\beta}_0 = \bar{Y}$.

The test statistic for this hypothesis is

$$F = \frac{ESS / k}{RSS / (n - k - 1)} = \frac{ESS\,(n - k - 1)}{RSS \cdot k} = \frac{TSS \cdot R^2\,(n - k - 1)}{(TSS - TSS \cdot R^2)\,k} = \frac{R^2\,(n - k - 1)}{(1 - R^2)\,k}, \qquad F \sim F_{k,\,n-k-1}.$$

Once again, you can look up critical values for the F-distribution with $k$ and $(n - k - 1)$ degrees of freedom in the back of the text or online. This test is also routinely calculated by statistical software packages (refer back to slide 30 for an example of an F-test value as well as its p-value). But again, be careful.

Gravity: an extended example

A huge literature has emerged which tries to explain the volume of trade between countries (i.e., bilateral trade). The workhorse of this literature in empirical international trade is the gravity model. The gravity model relates bilateral volumes of trade to GDP (size) and measures of trade costs.

Two nations with similar economies, history, and institutions (Australia and Canada) and their trade with the United States.

Two nations with similar geography, history, and institutions (Denmark and Germany) and their trade with the United States.
But why gravity?

$$F_g = G\,\frac{M_1 M_2}{d^2} \qquad \text{and} \qquad Trade_{12} = B\,\frac{GDP_1 \cdot GDP_2}{d^n}$$

Here, B is a "catch-all" term which will include…

A typical model might look like the following:

$$\ln(trade_{ij} \cdot trade_{ji}) = \beta_0 + \beta_1 \ln(GDP_i \cdot GDP_j) + \beta_2 \ln(distance_{ij}) + \varepsilon_{ij}$$

Consider countries i and j and form all pairs. But before estimation, we should establish our priors about the signs of the estimated coefficients. That is, $\beta_1 > 0$ and $\beta_2 < 0$.

Consider the sign of the coefficient on $GDP_1 \cdot GDP_2$…we expect this to positively affect the amount of trade any given pair of countries will have (ceteris paribus).

1.) Form the null and alternative hypotheses and specify a level of significance ($\alpha$) of 5%: $H_0: \beta_1 \le 0$ versus $H_1: \beta_1 > 0$.

2.) If $H_0$ is true, the t-statistic should be distributed as $t_{n-k-1} = t_{146}$, and

$$t = \frac{\hat{\beta}_j - \beta_H}{\mathrm{s.e.}(\hat{\beta}_j)} = \frac{1.4695 - 0.00}{0.1003} = 14.66.$$

We can already note that the value of the t-statistic is very large ("large enough" values are generally in the range of two to three). This suggests that it is very unlikely that the null is true.

3.) We can improve on this intuition by comparing the value of the test statistic to the critical value from the sampling distribution of the test statistic. In this case, with n = 149 and df = 146, the critical value of a one-sided t-test at the 5% level of significance is 1.66. This tells us that, if the null is true, there is a 5% probability of observing an estimated t-value greater than 1.66.

4.) Since the test statistic is so much larger than the critical value, it is unlikely that the null is true and we reject it (with Type I error probability $\alpha$).
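The four steps of this one-sided test can be sketched as follows. The coefficient and standard error are the unrounded values reported for lngdpprod in the regression output; the critical value 1.66 is the one quoted in the lecture, not computed here; and the helper name `t_statistic` is hypothetical.

```python
def t_statistic(beta_hat, beta_null, std_err):
    """Return (estimate - hypothesized value) / standard error."""
    return (beta_hat - beta_null) / std_err

# Values reported for lngdpprod in the regression output.
beta_hat = 1.469513
std_err = 0.1002652
n, k = 149, 2
df = n - k - 1              # degrees of freedom: 146
t_crit_one_sided = 1.66     # 5% one-sided critical value quoted in the lecture

# H0: beta1 <= 0 versus H1: beta1 > 0, evaluated at the boundary value 0.
t = t_statistic(beta_hat, 0.0, std_err)
reject = t > t_crit_one_sided

print(f"df = {df}, t = {t:.2f}, reject H0: {reject}")
```

Because the alternative is one-sided, only large positive values of t count as evidence against the null, so the comparison uses `t` itself rather than its absolute value.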
Another way of seeing this is the CI for $\beta_1$:

$$\Pr[\hat{\beta}_j - t^*_{\alpha/2}\,\mathrm{s.e.}(\hat{\beta}_j) \le \beta_j \le \hat{\beta}_j + t^*_{\alpha/2}\,\mathrm{s.e.}(\hat{\beta}_j)] = 1 - \alpha$$

$$\Pr[1.4695 - 1.96 \times 0.1003 \le \beta_j \le 1.4695 + 1.96 \times 0.1003] = 95\%$$

The interval, roughly [1.27, 1.67], does not contain zero.

Another potential hypothesis relates to the OLS estimate on $GDP_1 \cdot GDP_2$…a certain set of models of international trade suggest $\beta_1 = 2$.

1.) Form the null and alternative hypotheses and specify a level of significance ($\alpha$) of 5%: $H_0: \beta_1 = 2$ versus $H_1: \beta_1 \ne 2$.

2.) If $H_0$ is true, the t-statistic should be distributed as $t_{n-k-1} = t_{146}$, and

$$t = \frac{\hat{\beta}_j - \beta_H}{\mathrm{s.e.}(\hat{\beta}_j)} = \frac{1.4695 - 2.00}{0.1003} \approx -5.29.$$

Again, the t-statistic is large in absolute value.

3.) We can improve on that intuition by comparing the value of the test statistic to the critical value from the sampling distribution of the test statistic. In this case, with n = 149 and df = 146, the critical value of a two-sided t-test at the 5% level of significance is 1.96. This tells us that, if the null is true, there is a 5% probability of observing an estimated t-value greater than 1.96 in absolute value.

4.) Since the test statistic is so much larger (in absolute value) than the critical value, it is unlikely that the null is true and we reject it (with Type I error probability $\alpha$).

Another way of seeing this is the CI for $\beta_1$:

$$\Pr[\hat{\beta}_j - t^*_{\alpha/2}\,\mathrm{s.e.}(\hat{\beta}_j) \le \beta_j \le \hat{\beta}_j + t^*_{\alpha/2}\,\mathrm{s.e.}(\hat{\beta}_j)] = 1 - \alpha$$

$$\Pr[1.4695 - 1.96 \times 0.1003 \le \beta_j \le 1.4695 + 1.96 \times 0.1003] = 95\%$$

The same interval, roughly [1.27, 1.67], does not contain 2 either.

Finally, we can consider the overall significance of our regression model by evaluating the F-test. Under the null of the F-test, no explanatory variable has any effect…if F is large, then the unconstrained model fits the data much better than the constrained model where $Y_i = \beta_0 + \varepsilon_i$. In this case, where F = 153.60 and the associated p-value is 0.0000, we reject the null.

Conclusion

For purposes of hypothesis testing in the CLRM, there are precisely two ways to get at well-behaved sampling distributions for test statistics:

1.) assume normal population errors, or

2.) invoke the Central Limit Theorem.

Once these are in place, it all boils down to calculating values…
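As a closing illustration of "calculating values", the sketch below reproduces the main numbers of the gravity example from the figures in the regression output: the two-sided test of β1 = 2, the 95% confidence interval for β1, and the F-statistic computed both from the sums of squares and from R². The critical value 1.96 is the one used in the lecture, and all variable names are our own.

```python
# Figures taken from the regression output in the lecture.
ess, rss = 1123.39127, 533.896273    # model (explained) and residual sums of squares
n, k = 149, 2
beta_hat, std_err = 1.4695, 0.1003   # lngdpprod coefficient and standard error (rounded)
t_crit_two_sided = 1.96              # 5% two-sided critical value used in the lecture

# Two-sided test of H0: beta1 = 2.
t = (beta_hat - 2.0) / std_err
reject_beta_eq_2 = abs(t) > t_crit_two_sided

# 95% confidence interval for beta1.
ci_low = beta_hat - t_crit_two_sided * std_err
ci_high = beta_hat + t_crit_two_sided * std_err

# F-statistic computed two equivalent ways.
df_resid = n - k - 1
f_from_ss = (ess / k) / (rss / df_resid)
r_squared = ess / (ess + rss)
f_from_r2 = (r_squared / (1 - r_squared)) * (df_resid / k)

print(f"t = {t:.2f}, reject beta1 = 2: {reject_beta_eq_2}")
print(f"95% CI for beta1: [{ci_low:.4f}, {ci_high:.4f}]")
print(f"F (sums of squares) = {f_from_ss:.2f}, F (R-squared) = {f_from_r2:.2f}")
```

The two F computations agree because R²/(1 − R²) equals ESS/RSS. The interval differs slightly from the one printed by the software, which uses the exact t critical value for 146 degrees of freedom rather than 1.96.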