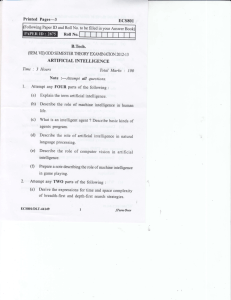

Intro to AI: Definitions, History & Turing Test
