
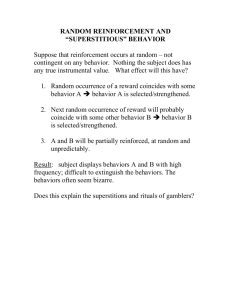


AN ABSTRACT OF THE THESIS OF

Scott Proper for the degree of Doctor of Philosophy in Computer Science presented on December 1, 2009.

Title: Scaling Multiagent Reinforcement Learning

Abstract approved:

Prasad Tadepalli

Reinforcement learning in real-world domains suffers from three curses of dimensionality: explosions in state and action spaces, and high stochasticity or “outcome space” explosion. Multiagent domains are particularly susceptible to these problems. This thesis describes ways to mitigate these curses in several different multiagent domains, including real-time delivery of products using multiple vehicles with stochastic demands, a multiagent predator-prey domain, and a domain based on a real-time strategy game.

This thesis presents several approaches that mitigate each of these curses. To mitigate the problem of state-space explosion, “tabular linear functions” (TLFs) are introduced that generalize tile coding and linear value functions and allow learning of complex nonlinear functions in high-dimensional state spaces. It is also shown how to adapt TLFs to relational domains, creating a “lifted” version called relational templates. To mitigate the problem of action-space explosion, the replacement of complete joint action space search with a form of hill climbing is described. To mitigate the problem of outcome space explosion, a more efficient calculation of the expected value of the next state is shown, and two real-time dynamic programming algorithms based on afterstates, ASH-learning and ATR-learning, are introduced.

Lastly, two approaches are presented that scale by treating a multiagent domain as being formed of several coordinating agents. “Multiagent H-learning” and “Multiagent ASH-learning” are described, in which coordination is achieved through a method called “serial coordination”. This technique has the benefit of addressing each of the three curses of dimensionality simultaneously by reducing the space of states and actions each local agent must consider.

The second approach to multiagent coordination presented is “assignment-based decomposition”, which divides the action selection step into an assignment phase and a primitive action selection phase. Like the multiagent approach, assignment-based decomposition addresses all three curses of dimensionality simultaneously by reducing the space of states and actions each group of agents must consider. This method is capable of much more sophisticated coordination than serial coordination.

Experimental results are presented which show successful application of all methods described. These results demonstrate that the scaling techniques described in this thesis can greatly mitigate the three curses of dimensionality and allow solutions for multiagent domains to scale to large numbers of agents, and complex state and outcome spaces.

© Copyright by Scott Proper

December 1, 2009

All Rights Reserved

Scaling Multiagent Reinforcement Learning

by

Scott Proper

A THESIS

submitted to

Oregon State University

in partial fulfillment of

the requirements for the

degree of

Doctor of Philosophy

Presented December 1, 2009

Commencement June 2010

Doctor of Philosophy thesis of Scott Proper presented on December 1, 2009.

APPROVED:

Major Professor, representing Computer Science

Director of the School of Electrical Engineering and Computer Science

Dean of the Graduate School

I understand that my thesis will become part of the permanent collection of

Oregon State University libraries. My signature below authorizes release of my

thesis to any reader upon request.

Scott Proper, Author

ACKNOWLEDGEMENTS

My deepest thanks are extended to all those who have supported me, in particular my major professor, Prasad Tadepalli. Without his assistance and support throughout my graduate education, this thesis could never have been completed. In addition, I would like to thank the members of my committee, Tom Dietterich, Alan Fern, Ron Metoyer, and Jack Higginbotham, for their patience and support.

I would also like to thank Neville Mehta, Aaron Wilson, Sriraam Natarajan, and Ronald Bjarnason for their friendship and many useful discussions throughout my research.

Very special thanks to my parents, Anna Collins-Proper and Datus Proper, for their love, support, encouragement, and understanding throughout my life. It is because of them that I have had the opportunity to take my education this far.

Finally, I gratefully acknowledge the support of the Defense Advanced Research Projects Agency under grant number FA8750-05-2-0249 and the National Science Foundation under grant number IIS-0329278.

TABLE OF CONTENTS

1 Introduction
1.1 Outline of the Thesis
1.2 Thesis Organization
2 Background
2.1 Reinforcement Learning
2.2 Markov Decision Processes
2.3 Dynamic Programming
2.3.1 Total Reward Optimization
2.3.2 Discounted Reward Optimization
2.3.3 Average Reward Optimization
2.4 Model-free Reinforcement Learning
2.5 Model-based Reinforcement Learning
2.6 Multiagent Reinforcement Learning
3 The Three Curses of Dimensionality
3.1 Function Approximation
3.1.1 Tabular Linear Functions
3.1.2 Relational Templates
3.2 Hill Climbing for Action Space Search
3.3 Reducing Result-Space Explosion
3.3.1 Efficient Expectation Calculation
3.3.2 ASH-learning
3.3.3 ATR-learning
3.4 Experimental Results
3.4.1 The Product Delivery Domain
3.4.2 The Real-Time Strategy Domain
3.4.3 ASH-learning Experiments
3.4.4 ATR-learning Experiments
3.5 Summary
4 Multiagent Learning
4.1 Multiagent H-learning
4.1.1 Decomposition of the State Space
4.1.2 Decomposition of the Action Space
4.1.3 Serial Coordination
4.2 Multiagent ASH-learning
4.3 Experimental Results
4.3.1 Team Capture domain
4.3.2 Experiments
4.4 Summary
5 Assignment-based Decomposition
5.1 Model-free Assignment-based Decomposition
5.2 Model-based Assignment-based Decomposition
5.3 Assignment Search Techniques
5.4 Advantages of Assignment-based Decomposition
5.5 Coordination Graphs
5.5.1 The Max-plus Algorithm
5.5.2 Dynamic Coordination
5.6 Experimental Results
5.6.1 Multiagent Predator-Prey Domain
5.6.2 Model-free Reinforcement Learning Experiments
5.6.3 Model-based Reinforcement Learning Experiments
5.7 Summary
6 Assignment-level Learning
6.1 HRL Semantics
6.2 Function Approximation Semantics
6.3 Experimental Results
6.3.1 Four-state MDP Domain
6.3.2 Real-Time Strategy Game Domain
6.4 Summary
7 Conclusions
7.1 Summary of Contributions
7.2 Discussion and Future Work
Bibliography

LIST OF FIGURES

2.1 Schematic diagram for reinforcement learning.
2.2 The relationship between model-free (direct) and model-based (indirect) reinforcement learning.
3.1 Progression of states ($s$, $s'$, and $s''$) and afterstates ($s_a$ and $s'_{a'}$).
3.2 The product delivery domain, with depot (square) and five shops (circles). Numbers indicate probability of customer visit each time step.
3.3 Comparison of complete search, Hill climbing, H- and ASH-learning for the truck-shop tiling approximation.
3.4 Comparison of complete search, Hill climbing, H- and ASH-learning for the linear inventory approximation.
3.5 Comparison of hand-coded algorithm vs. ASH-learning with complete search for the truck-shop tiling, linear inventory, and all feature-pairs tiling approximations.
3.6 Comparison of 3 agents vs 1 task domains.
3.7 Comparison of training on various source domains transferred to the 3 Archers vs. 1 Tower domain.
3.8 Comparison of training on various source domains transferred to the Infantry vs. Knight domain.
4.1 DBN showing the creation of afterstates $s_{a_1}, \ldots, s_{a_m}$ and the final state $s'$ by the actions of agents $a_1, \ldots, a_m$ and the environment $E$.
4.2 An example of the team capture domain for 2 pieces per side on a 4x4 grid.
4.3 The tiles used to create the function approximation for the team capture domain.
4.4 Comparison of multiagent, joint agent, H- and ASH-learning for the two vs. two Team Capture domain.
4.5 Comparison of ASH-learning approaches and hand-coded algorithm for the four vs. four Team Capture domain.
4.6 Comparison of multiagent ASH-learning to hand-coded algorithm for the ten vs. ten Team Capture domain.
5.1 A possible coordination graph for a 4-agent domain. Q-values indicate an edge-based decomposition of the graph.
5.2 Messages passed using Max-plus. Each step, every node passes a message to each neighbor.
5.3 A possible state in an 8 vs. 4 toroidal grid predator-prey domain. All eight predators (black) are in a position to possibly capture all four prey (white).
5.4 Comparison of various Q-learning approaches for the product delivery domain.
5.5 Examination of the optimality of policy found by assignment-based decomposition for product delivery domain.
5.6 Comparison of action selection and search methods for the 4 vs 2 Predator-Prey domain.
5.7 Comparison of action selection and search methods for the 8 vs 4 Predator-Prey domain.
5.8 Comparison of 6 agents vs 2 task domains.
5.9 Comparison of 12 agents vs 4 task domains.
6.1 Information typically examined by assignment-based decomposition.
6.2 Information examined by assignment-based decomposition with assignment-level learning.
6.3 A 4-state MDP with two tasks.
6.4 Comparison of various strategies for assignment-level learning.
6.5 Comparison of assignment-based decomposition with and without assignment-level learning for the 3 vs 2 real-time strategy domain.
6.6 Comparison of 6 archers vs. 2 glass cannons, 2 halls domain.
6.7 Comparison of 6 agents vs 4 tasks domain.

LIST OF TABLES

3.1 Various relational templates used in experiments. See Table 3.2 for descriptions of relational features, and Section 3.4.2 for a description of the domain.
3.2 Meaning of various relational features.
3.3 Different unit types.
3.4 Comparison of execution times for one run.
4.1 Comparison of execution times in seconds for one run of each algorithm. Column labels indicate number of pieces. “–” indicates a test requiring impractically large computation time.
5.1 Running times (in seconds), parameters required, and terms summed over for five algorithms applied to the product delivery domain.
5.2 Experiment data and run times. Columns list domain size, units involved (Archers, Infantry, Towers, Ballista, or Knights), use of transfer learning, assignment search type (“flat” indicates no assignment search), relational templates used for state and afterstate value functions, and average time to complete a single run.
7.1 The contributions of several methods discussed in this paper towards mitigating the three curses of dimensionality.

List of Algorithms

2.1 The Q-learning algorithm.
2.2 The R-learning algorithm.
2.3 The H-learning algorithm. The agent executes each step when in state $s$.
3.1 The ASH-learning algorithm. The agent executes steps 1-7 when in state $s'$.
3.2 The ATR-learning algorithm, using the update of Equation 3.14.
4.1 The multiagent H-learning algorithm with serial coordination. Each agent $a$ executes each step when in state $s$.
4.2 The multiagent ASH-learning algorithm. Each agent $a$ executes each step when in state $s'$.
5.1 The assignment-based decomposition Q-learning algorithm.
5.2 The ATR-learning algorithm with assignment-based decomposition, using the update of Equations 5.3 and 5.5.
5.3 The centralized anytime Max-plus algorithm.
5.4 The assignment-based decomposition Q-learning algorithm using coordination graphs.
6.1 The assignment-based decomposition with assignment-level learning Q-learning algorithm.
6.2 The ATR-learning algorithm with assignment-based decomposition and assignment-level learning.

DEDICATION

I dedicate this thesis to my mother, Anna,

and to my father, Datus, in memoriam.

Chapter 1 – Introduction

1.1 Outline of the Thesis

Reinforcement Learning (RL) is a method of teaching a computer to learn how to act in a given environment or “domain” via trial and error. By repeatedly taking actions and observing the results along with an associated reward or cost signal, a computer agent may learn to act in an environment in such a way as to maximize its reward. This kind of technique allows a computer to learn how to solve problems that might be impractical to solve any other way. Reinforcement learning provides a convenient framework to model a variety of stochastic optimization problems [23], which are optimization problems involving probabilistic (random) elements. Often, reinforcement learning is performed by learning a value function over states or state-action pairs of the domain. This value function maps states or state-action pairs to values, which allow an agent to determine the relative utility of a given state or action. Typically, the value function is stored in a table, such that each single state or state-action pair is mapped to a value. However, table-based approaches to large RL problems suffer from three “curses of dimensionality”: explosions in state, action, and outcome spaces [16]. In this thesis, I propose and demonstrate several ways to mitigate these curses in a variety of multiagent domains, including product delivery and routing, multiple predator and prey simulations, and real-time strategy games.

The three main computational obstacles to dealing with large reinforcement learning problems may be described as follows. First, the state space (and the time required for convergence) grows exponentially in the number of variables. Second, the space of possible actions is exponential in the number of agents, so even one-step look-ahead search is computationally expensive. Lastly, exact computation of the expected value of the next state is costly, as the number of possible future states (outcomes) can be exponential in the number of state variables. These three obstacles are referred to as the three “curses of dimensionality”.

I introduce methods that effectively address each of the above difficulties, both individually and together, in several different domains. To mitigate the exploding state-space problem, I introduce “tabular linear functions” (TLFs), which can be viewed as linear functions over some features, whose weights are functions of other features. TLFs generalize tables, linear functions, and tile coding, and provide a fairly flexible mechanism for specifying the space of potential value functions. I show particular uses of these functions in a product delivery domain that achieve a compact representation of the value function and faster learning. I also introduce a “lifted” relational version of TLFs called “relational templates”, which I show to facilitate transfer learning in certain domains.
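To make the definition concrete, the following Python sketch shows one way a TLF could be represented. It is an illustration under stated assumptions, not the thesis's implementation: the class name, the `key` function (standing in for the "other features" that index the weight table), the `features` list, and the simple gradient update are all hypothetical choices made here.

```python
from typing import Any, Callable, Dict, Hashable, List, Mapping, Sequence

State = Mapping[str, Any]


class TabularLinearFunction:
    """V(s) = sum_i w[key(s)][i] * phi_i(s).

    A linear function over the features phi_i whose weight vector is looked
    up in a table indexed by the discrete features key(s). (Hypothetical sketch.)
    """

    def __init__(self, key: Callable[[State], Hashable],
                 features: Sequence[Callable[[State], float]]) -> None:
        self.key = key
        self.features = list(features)
        # One weight vector per distinct value of the discrete key.
        self.weights: Dict[Hashable, List[float]] = {}

    def value(self, s: State) -> float:
        w = self.weights.get(self.key(s))
        if w is None:
            return 0.0  # unseen table row: all of its weights are still zero
        return sum(wi * phi(s) for wi, phi in zip(w, self.features))

    def update(self, s: State, target: float, alpha: float) -> None:
        """One gradient step toward target; only the active table row changes."""
        error = target - self.value(s)
        w = self.weights.setdefault(self.key(s), [0.0] * len(self.features))
        for i, phi in enumerate(self.features):
            w[i] += alpha * error * phi(s)


# Special cases: a single constant feature gives a plain table (or tile coding
# when key(s) returns a tile index); a constant key gives a plain linear function.
table = TabularLinearFunction(key=lambda s: s["shop"], features=[lambda s: 1.0])
linear = TabularLinearFunction(key=lambda s: None, features=[lambda s: s["x"]])
```

In a product-delivery setting one might, for example, key the table on a truck's location and keep a linear term in a shop's inventory; those feature choices are illustrative only.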

Second, to reduce the computational cost of searching the action space, which is exponential in the number of agents, I introduce a simple hill climbing algorithm that effectively scales to a larger number of agents without sacrificing solution quality.
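A minimal Python sketch of this idea, assuming the hill climber starts from some joint action and repeatedly changes one agent's action at a time. The `score` function is a hypothetical stand-in for whatever one-step lookahead value the learner assigns to a joint action; the exact neighborhood and starting point used in the thesis may differ.

```python
from itertools import product
from typing import Callable, Sequence, Tuple

JointAction = Tuple[int, ...]


def hill_climb(n_agents: int, actions: Sequence[int],
               score: Callable[[JointAction], float],
               start: JointAction) -> JointAction:
    """Change one agent's action at a time, keeping any change that raises
    the score, until a full sweep over all agents yields no improvement.

    Each sweep evaluates n_agents * len(actions) joint actions instead of the
    len(actions) ** n_agents needed by complete search; the result is a local
    optimum, not necessarily the global one.
    """
    current = tuple(start)
    best = score(current)
    improved = True
    while improved:
        improved = False
        for i in range(n_agents):
            for a in actions:
                candidate = current[:i] + (a,) + current[i + 1:]
                v = score(candidate)
                if v > best:
                    current, best, improved = candidate, v, True
    return current


def complete_search(n_agents: int, actions: Sequence[int],
                    score: Callable[[JointAction], float]) -> JointAction:
    """Exhaustive baseline over the full joint action space."""
    return max(product(actions, repeat=n_agents), key=score)
```

Because every accepted move strictly increases the score over a finite set, the loop always terminates.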


Third, for model-based reinforcement learning algorithms, the expected value of the next state must be calculated at every step. Unfortunately, many domains have a high stochastic branching factor (number of possible next states) when the state is a Cartesian product of several random state variables. I provide two solutions to this problem. First, I take advantage of the factoring of the action model and the partial linearity of the value function to decompose this computation. Second, I introduce two new algorithms called ASH-learning and ATR-learning, which are “afterstate” versions of model-based reinforcement learning in the average-reward and total-reward settings, respectively. These algorithms learn by distinguishing between the action-dependent and action-independent effects of an agent’s action [23]. I show experimental results in a product delivery domain and a real-time strategy game that demonstrate that my methods are effective in ameliorating the three curses of dimensionality that limit the applicability of reinforcement learning.
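The decomposition can be illustrated with a small Python sketch. For illustration only, it assumes that the next-state variables are independent and that the value function is fully additive over them, $V(s') = \sum_i v_i(x'_i)$; the thesis exploits only partial linearity, and the names `dists` and `v` are hypothetical.

```python
from itertools import product
from typing import Dict, List

# dists[i][x] = probability that state variable i takes value x at the next step.
# v[i][x]     = contribution of variable i having value x to the next-state value.
Dist = Dict[int, float]


def expected_value_bruteforce(dists: List[Dist], v: List[Dict[int, float]]) -> float:
    """Enumerate the full Cartesian product of outcomes (exponential in the
    number of variables), treating the variables as independent."""
    total = 0.0
    for outcome in product(*(d.items() for d in dists)):
        prob, value = 1.0, 0.0
        for i, (x, px) in enumerate(outcome):
            prob *= px
            value += v[i][x]
        total += prob * value
    return total


def expected_value_factored(dists: List[Dist], v: List[Dict[int, float]]) -> float:
    """Linearity of expectation: E[sum_i v_i(x_i)] = sum_i E[v_i(x_i)].
    The cost is the sum, not the product, of the variables' domain sizes."""
    return sum(sum(px * v[i][x] for x, px in d.items())
               for i, d in enumerate(dists))
```

With ten binary variables, the brute-force version visits 1024 outcomes while the factored version reads 20 probabilities.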

The above methods address each curse of dimensionality individually. A method that addresses all three curses simultaneously would be very useful. To this end, I introduce multiagent versions of the H-learning and ASH-learning algorithms. By decomposing the state and action spaces of a joint agent into those of several weakly coordinating agents, we can simultaneously address each of the three curses of dimensionality. Results are demonstrated in a “Team Capture” domain.

When implementing a multiagent RL solution, coordination between agents is the main difficulty, and it determines the tradeoff between speed and solution quality. The weak “serial coordination” introduced by the multiagent H-learning and ASH-learning methods may not be enough. To improve coordination, I introduce both a model-free and a model-based version of the assignment-based decomposition architecture. In this architecture, the action space is divided into a task assignment level and a task execution level. At the task assignment level, each task is assigned a group of agents. At the task execution level, each agent is assigned primitive actions to perform based on its task. Since task execution is mostly local, I learn a low-dimensional, relational template-based value function for it. Since task assignment is global, I use various exact and approximate search algorithms to perform it. While this two-level decomposition resembles that of hierarchical multiagent reinforcement learning [14], in contrast to that work I do not require that a value function be stored at the root level. In the worst case, such a root-level value function must range over the joint state space of all agents, which is intractable. I demonstrate results showing that searching at the assignment level over values given by a lower-level value function allows my system to scale up to 12 agents, and potentially well beyond.
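A minimal Python sketch of exact assignment search, assuming (hypothetically) that the worth of each task, given the group of agents assigned to it, can be read off a lower-level value function, abstracted here as `task_value`. The thesis also uses approximate search methods; this brute-force version only shows what is being searched over.

```python
from itertools import product
from typing import Callable, Dict, FrozenSet, Optional, Sequence, Tuple

Assignment = Dict[str, FrozenSet[str]]


def best_assignment(agents: Sequence[str], tasks: Sequence[str],
                    task_value: Callable[[str, FrozenSet[str]], float]
                    ) -> Tuple[Optional[Assignment], float]:
    """Exhaustive assignment search: give every agent exactly one task and
    return the assignment with the highest total task value.

    Only task_value (a stand-in for the learned lower-level value function)
    is consulted; no value function over the joint state is stored.
    The cost grows as len(tasks) ** len(agents).
    """
    best: Optional[Assignment] = None
    best_score = float("-inf")
    for choice in product(tasks, repeat=len(agents)):
        groups = {t: frozenset(a for a, c in zip(agents, choice) if c == t)
                  for t in tasks}
        score = sum(task_value(t, g) for t, g in groups.items())
        if score > best_score:
            best, best_score = groups, score
    return best, best_score
```

For instance, if capturing one prey requires two agents and another requires one, a `task_value` that rewards adequately staffed tasks and charges a small cost per agent leads the search to a 2-and-1 split of three agents.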

Assignment-based decomposition is flexible enough to allow a value function at all levels of the decision-making process, although, as described above, one is not required. Later in this thesis, I show how such a value function may be added to the assignment level and how doing so may improve assignment decisions under certain circumstances. However, because an assignment-level value function must be global over all agents, using one limits the total number of agents that can be handled at the top level of the decision-making process.

In this thesis, I also explore the usefulness of transfer learning when applied to the scaling problem. Transfer learning is a research problem in machine learning that focuses on storing knowledge gained while solving one problem and applying it to a different but related problem. I explore this in the context of a real-time strategy game by exploiting the benefits of relational templates. I present three kinds of transfer learning results in real-time strategy games. First, I show how my approach enables transfer of value function knowledge between different but similarly-sized multiagent domains. Second, I show transfer across domains with different numbers of agents and tasks. Combining these two types of transfer learning, I then show how knowledge gained from learning in several small domains may be transferred to solve problems in large domains with multiple types of agents and tasks. Thus, transfer learning may be applied to scale reinforcement learning for multiagent domains.

1.2 Thesis Organization

The rest of the thesis is organized as follows:

Chapter 2 introduces reinforcement learning and Markov Decision Processes. It describes two previously-studied reinforcement learning methods: a model-free discounted learning method called Q-learning and a model-based average-reward learning method called H-learning. These algorithms form the basis of algorithms described in later chapters.

Chapter 3 discusses the three curses of dimensionality and some methods for mitigating them individually. These methods include two kinds of function approximation, which mitigate the first curse of dimensionality (exploding state space); a hill climbing action selection approach, which I use to mitigate the second curse (exploding action space); and the ASH-learning and ATR-learning algorithms, which mitigate the third curse (exploding outcome space) for model-based RL algorithms. Finally, I introduce the product delivery domain and describe experimental results for the above techniques.

Chapter 4 describes a method for learning in multiagent domains which simultaneously addresses each of the three curses of dimensionality. Decomposed versions of the H-learning and ASH-learning algorithms are provided. The “Team Capture” domain is explained, and experimental results for this work are presented.

Chapter 5 introduces assignment-based decomposition, a more sophisticated coordination technique for multiagent domains, for both model-free (Q-learning) and model-based (ATR-learning) reinforcement learning algorithms. I also show how to use coordination graphs together with assignment-based decomposition. Finally, I describe a new “Predator-Prey” domain and the results of my experiments on this and other domains using assignment-based decomposition.

Chapter 6 introduces assignment-level learning, which adds a value function over certain “global” features of the state to the top assignment-level decision. This allows assignment-based decomposition to solve certain problems it might otherwise have difficulty with, but limits the scalability of the algorithm.

Finally, in Chapter 7, I summarize my results and discuss potential future work.


Chapter 2 – Background

This chapter outlines the background of Reinforcement Learning (RL) and describes two previously studied reinforcement learning methods: Q-learning and H-learning. These two algorithms form a basis upon which I build several new reinforcement learning algorithms described in later chapters.

2.1 Reinforcement Learning

Reinforcement learning is the problem faced by a learning agent that must learn to act by trial-and-error interactions with its environment. In the standard reinforcement learning paradigm, an agent is connected to its environment via perception and action, as shown in Figure 2.1.

Figure 2.1: Schematic diagram for reinforcement learning.

In each step of interaction, the agent senses the environment and then selects an action to change the state of the environment. This state transition generates a reinforcement signal (a reward or penalty) that is received by the agent. While taking actions by trial and error, the agent may incrementally learn a “value function” over states or state-action pairs, which indicates their utility to that agent.

The goal of reinforcement learning methods is to arrive, by performing actions and observing their outcomes, at a policy, i.e. a mapping from states to actions, which maximizes some measure of the accumulated reward over time. RL methods differ according to the exact measure and optimization criterion they use to select actions. These methods explore the environment by trial and error over time to arrive at a desired policy.
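The interaction cycle of Figure 2.1 can be sketched in a few lines of Python. The `Corridor` environment, its reward of -1 per step, and the `reset`/`step` interface are hypothetical choices for illustration. No learning happens here; the loop only shows how a policy's accumulated reward arises from repeated sense-act-reward steps.

```python
from typing import Callable, Tuple


class Corridor:
    """Hypothetical toy environment: positions 0..n-1, the agent starts at 0,
    actions +1/-1 move it (clipped at the ends), every step costs -1, and
    reaching position n-1 ends the episode."""

    def __init__(self, n: int = 4) -> None:
        self.n = n
        self.pos = 0

    def reset(self) -> int:
        self.pos = 0
        return self.pos

    def step(self, action: int) -> Tuple[int, float, bool]:
        self.pos = min(max(self.pos + action, 0), self.n - 1)
        return self.pos, -1.0, self.pos == self.n - 1


def run_episode(env: Corridor, policy: Callable[[int], int],
                max_steps: int = 100) -> float:
    """Sense the state, act, receive a reward; return the accumulated reward."""
    s = env.reset()
    total = 0.0
    for _ in range(max_steps):
        a = policy(s)             # the agent selects an action in state s
        s, r, done = env.step(a)  # the environment changes state and emits r
        total += r
        if done:
            break
    return total
```

A policy that always moves right reaches the end of a length-4 corridor in three steps, accumulating a reward of -3.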

2.2 Markov Decision Processes

The agent’s environment is modeled as a Markov Decision Process (MDP). An MDP is a tuple $\langle S, A, P, R \rangle$, where $S$ is a finite set of $n$ discrete states and $A$ is a finite set of actions available to the agent. The set of actions applicable in a state $s$ is denoted by $A(s)$; these actions are called admissible. The actions are stochastic and Markovian in that an action $a$ in a given state $s \in S$ results in a state $s'$ with fixed probability $P(s' \mid s, a)$. This probability matrix is called the action model of $s$. The reward function $R: S \times A \to \mathbb{R}$ returns the reward $R(s, a)$ after taking action $a$ in state $s$; it is also called the reward model of $s$. The action and reward models of an MDP together are called its “domain model”. Each action is assumed to take one time step. An agent’s policy is defined as a mapping $\pi: S \to A$, such that the agent executes action $\pi(s) \in A(s)$ when in state $s$. A stationary policy is one which does not change with time. A deterministic policy always maps the same state to the same action. For the remainder of this thesis, “policy” refers to a stationary deterministic policy.
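One way to hold the tuple $\langle S, A, P, R \rangle$ in code is sketched below in Python. The nested-dictionary layout, the class and method names, and the use of `random.Random.choices` for sampling are assumptions made for illustration.

```python
import random
from dataclasses import dataclass
from typing import Dict, Hashable, List, Tuple

State = Hashable
Action = Hashable


@dataclass
class MDP:
    """A finite MDP <S, A, P, R>; the admissible actions A(s) are the keys of P[s]."""
    states: List[State]
    P: Dict[State, Dict[Action, Dict[State, float]]]  # P[s][a][s2] = P(s2 | s, a)
    R: Dict[State, Dict[Action, float]]               # R[s][a] = R(s, a)

    def admissible(self, s: State) -> List[Action]:
        return list(self.P[s])

    def is_valid(self, tol: float = 1e-9) -> bool:
        """Every action model P(. | s, a) must be a probability distribution."""
        return all(abs(sum(dist.values()) - 1.0) < tol
                   for s in self.states for dist in self.P[s].values())

    def step(self, s: State, a: Action, rng: random.Random) -> Tuple[State, float]:
        """Sample s2 ~ P(. | s, a) and return it together with R(s, a)."""
        dist = self.P[s][a]
        s2 = rng.choices(list(dist), weights=list(dist.values()))[0]
        return s2, self.R[s][a]


# A stationary deterministic policy is simply a fixed state -> action map.
Policy = Dict[State, Action]
```

Checking that `pi[s] in mdp.admissible(s)` for every state confirms that a policy only chooses admissible actions.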

Instead of directly learning a policy, in RL the agent may learn a value function that estimates the value of each state. At any time, RL methods use one-step lookahead with the current value function to choose the best action in each state by some kind of maximization. The policies that RL methods learn are therefore called “greedy” with respect to their value functions. In addition to such greedy actions, RL methods also take some directed or random (exploratory) actions. These exploratory actions ensure that all reachable states are explored with sufficient frequency, so that a learning method does not get stuck in a local maximum. There are several exploration strategies. The random exploration strategy takes random actions with a fixed probability, giving high probabilities to actions with high values [1]. Counter-based exploration prefers to execute actions that lead to less frequently visited states [26]. Recency-based exploration prefers actions which have not been executed recently in a given state [22]. In this thesis, I use an $\epsilon$-greedy strategy in all my experiments, which takes a random action with probability $\epsilon$ and a greedy action with probability $1 - \epsilon$.
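A minimal Python sketch of $\epsilon$-greedy action selection, assuming the learner has already computed a value for each admissible action in the current state (for example by one-step lookahead). The function name and the dictionary input are hypothetical.

```python
import random
from typing import Dict, Hashable


def epsilon_greedy(values: Dict[Hashable, float], epsilon: float,
                   rng: random.Random) -> Hashable:
    """Pick an action given the one-step lookahead value of each admissible
    action: with probability epsilon a uniformly random action (which may
    happen to be the greedy one), otherwise an action of maximal value."""
    actions = list(values)
    if rng.random() < epsilon:
        return rng.choice(actions)
    return max(actions, key=values.__getitem__)
```

Because the random branch can also land on the greedy action, the greedy action is actually chosen with probability $1 - \epsilon + \epsilon/|A(s)|$.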


2.3 Dynamic Programming

Given a complete and accurate model of an MDP in the form of the action and reward models $P(s' \mid s, a)$ and $R(s, a)$, it is possible to solve the decision problem off-line by applying Dynamic Programming (DP) algorithms [2, 3, 18]. The recurrence relation of DP differs according to the optimization criterion: total reward optimization, discounted reward optimization, or average reward optimization.

2.3.1 Total Reward Optimization

Suppose that an agent using a policy $\pi$ goes through states $s_0, \ldots, s_t$ in time 0 through $t$, with some probability. The expected cumulative sum of rewards received by following a policy $\pi$ starting from any state $s_0$ is given by:

$$V^{\pi}(s_0) = \lim_{t \to \infty} E\left(\sum_{k=0}^{t-1} R(s_k, \pi(s_k))\right) \tag{2.1}$$

When there is an absorbing goal state $g$ which is reachable from every state under every stationary policy, and from which there are no transitions to other states, the value function for a given policy $\pi$ can be computed using the following recurrence relation:

V π (g) = 0

∀s 6= g V π (s) = R(s, π(s)) + E(

X

(2.2)

P (s0 |s, π(s))V π (s0 ))

(2.3)

s0 ∈S

An optimal total reward policy π ∗ maximizes the above value function over all

11

∗

states s0 and policies π, i.e. V π (s0 ) ≥ V π (s0 ).

Under the above conditions, the value function for the optimal total reward policy $\pi^*$ can be computed by:

$$V^{\pi^*}(g) = 0 \tag{2.4}$$

$$\forall s \neq g: \quad V^{\pi^*}(s) = \max_{a \in A(s)} \left\{ R(s, a) + \sum_{s' \in S} P(s'|s, a)\, V^{\pi^*}(s') \right\} \tag{2.5}$$

where $\pi^*$ indicates the optimal policy, and thus $V^{\pi^*}(s)$ is the value function corresponding to the optimal policy. $A(s)$ is the set of actions available in state $s$.
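Equations 2.4 and 2.5 can be solved by repeatedly applying the right-hand side as an update (value iteration). The Python below is a minimal sketch, assuming a small tabular model stored in a hypothetical nested dictionary `model[s][a] = (R(s, a), [(s', P(s'|s, a)), ...])`; as stated above, it relies on the goal being reachable under every policy for the iteration to converge. Updating values in place within a sweep is a design choice, not something prescribed by the text.

```python
from typing import Dict, List, Tuple

# Hypothetical tabular model: model[s][a] = (R(s, a), [(s_next, P(s_next | s, a)), ...])
Model = Dict[str, Dict[str, Tuple[float, List[Tuple[str, float]]]]]


def total_reward_value_iteration(model: Model, goal: str, tol: float = 1e-9,
                                 max_sweeps: int = 10_000) -> Dict[str, float]:
    """Approximate V^{pi*} of Eqs. 2.4-2.5 by repeated in-place Bellman backups."""
    v = {s: 0.0 for s in model}
    v[goal] = 0.0  # Eq. 2.4: the absorbing goal state has value 0
    for _ in range(max_sweeps):
        delta = 0.0
        for s, actions in model.items():
            if s == goal:
                continue
            # Eq. 2.5: best one-step reward plus expected value of the next state
            new_v = max(r + sum(p * v[s2] for s2, p in outcomes)
                        for r, outcomes in actions.values())
            delta = max(delta, abs(new_v - v[s]))
            v[s] = new_v
        if delta < tol:
            break
    return v
```

For example, with a per-step reward of −1, a state A that can either walk to B (which then walks to the goal) or jump straight to the goal for −3 gets value −2.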

2.3.2 Discounted Reward Optimization

Total reward is a good candidate to optimize, but if the agent has an infinite horizon and there is no absorbing goal state, the total reward can grow without bound. One way to make this total finite is to discount future rewards exponentially. In other words, one unit of reward received after one time step is considered equivalent to a reward of γ < 1 received immediately. We now maximize the discounted cumulative sum of rewards received by following a policy. The discounted total reward received by following a policy π from state $s_0$ is given by:

$$f_{\gamma}^{\pi}(s_0) = \lim_{t \to \infty} E\left( \sum_{k=0}^{t-1} \gamma^{k} R\big(s_k, \pi(s_k)\big) \right) \tag{2.6}$$

where γ < 1 is the discount factor. Discounting by γ < 1 makes $f_{\gamma}^{\pi}(s_0)$ finite when rewards are bounded.

The value function above can be computed for any state by solving the following set of simultaneous recurrence relations:

$$f^{\pi}(s) = R\big(s, \pi(s)\big) + \gamma \sum_{s' \in S} P\big(s'|s, \pi(s)\big)\, f^{\pi}(s') \tag{2.7}$$

An optimal discounted policy $\pi^*$ maximizes the above value function over all states $s$ and policies π. It can be shown to satisfy the following recurrence relation [1, 3]:

$$f^{\pi^*}(s) = \max_{a \in A(s)} \left\{ R(s, a) + \gamma \sum_{s' \in S} P(s'|s, a)\, f^{\pi^*}(s') \right\} \tag{2.8}$$
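Because γ < 1 makes the update of Equation 2.8 a contraction, iterating it from any starting values converges to $f^{\pi^*}$. The following is a minimal Python sketch under the same hypothetical nested-dictionary model format as above (every state must offer at least one action); it also extracts a greedy policy from the converged values.

```python
from typing import Dict, List, Tuple

# Hypothetical tabular model: model[s][a] = (R(s, a), [(s_next, P(s_next | s, a)), ...])
Model = Dict[str, Dict[str, Tuple[float, List[Tuple[str, float]]]]]


def discounted_value_iteration(model: Model, gamma: float,
                               tol: float = 1e-10) -> Tuple[Dict[str, float], Dict[str, str]]:
    """Iterate Eq. 2.8 to a fixed point; return f^{pi*} and a greedy policy."""
    if not 0.0 <= gamma < 1.0:
        raise ValueError("gamma must lie in [0, 1)")
    f = {s: 0.0 for s in model}

    def backup(s: str, a: str) -> float:
        # R(s, a) + gamma * sum_{s'} P(s' | s, a) f(s'), using the current f
        r, outcomes = model[s][a]
        return r + gamma * sum(p * f[s2] for s2, p in outcomes)

    while True:
        new_f = {s: max(backup(s, a) for a in model[s]) for s in model}
        delta = max(abs(new_f[s] - f[s]) for s in model)
        f = new_f
        if delta < tol:
            break
    policy = {s: max(model[s], key=lambda a: backup(s, a)) for s in model}
    return f, policy
```

With γ = 0.9, a state that can collect reward 1 forever by staying put has value 1/(1 − 0.9) = 10.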

2.3.3 Average Reward Optimization

For average reward optimization, we seek to optimize the average reward per time step computed over time $t$ as $t \to \infty$, which is called the gain [18]. For a given starting state $s_0$ and policy π, the gain is given by Equation 2.9, where $r^{\pi}(s_0, t)$ is the total reward in $t$ steps when policy π is used starting at state $s_0$, and $E(r^{\pi}(s_0, t))$ is its expected value:

$$\rho^{\pi}(s_0) = \lim_{t \to \infty} \frac{1}{t} E\big(r^{\pi}(s_0, t)\big) \tag{2.9}$$

The goal of average reward learning is to learn a policy that achieves near-optimal gain by executing actions, receiving rewards, and learning from them. A policy that optimizes the gain is called a gain-optimal policy. The expected total reward in time $t$ for optimal policies depends on the starting state $s$ and can be written as the sum $\rho(s) \cdot t + h_t(s)$, where $\rho(s)$ is its gain. The Cesàro limit (or expected value) of the second term $h_t(s)$ as $t \to \infty$ is called the bias of state $s$ and is denoted by $h(s)$. In communicating MDPs, where every state is reachable from every other state, the optimal gain $\rho^*$ is independent of the starting state [18]. This is because as $t \to \infty$, we can expect to visit every state infinitely often (including the starting state), and the contribution of the starting state will be included as a part of the average reward. $\rho^*$ and the biases of the states satisfy the following Bellman equation:

$$h(s) = \max_{a \in A(s)} \left\{ r(s, a) + \sum_{s'=1}^{N} p(s'|s, a)\, h(s') \right\} - \rho^* \tag{2.10}$$
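Equation 2.10 determines $h$ only up to an additive constant, so a common way to solve it with a known model is relative value iteration, which pins the bias of one reference state to 0. The Python below is a minimal sketch of that standard technique (it is not an algorithm from this thesis), assuming a hypothetical tabular model format and an MDP that is unichain and aperiodic so that the iteration converges. At the fixed point $h(\text{ref}) = 0$, so Equation 2.10 at the reference state gives $\rho^*$ directly.

```python
from typing import Dict, List, Tuple

# Hypothetical tabular model: model[s][a] = (r(s, a), [(s_next, p(s_next | s, a)), ...])
Model = Dict[str, Dict[str, Tuple[float, List[Tuple[str, float]]]]]


def relative_value_iteration(model: Model, ref: str, tol: float = 1e-10,
                             max_iters: int = 100_000) -> Tuple[float, Dict[str, float]]:
    """Approximate rho* and h of Eq. 2.10, normalizing so that h(ref) = 0."""
    h = {s: 0.0 for s in model}
    rho = 0.0
    for _ in range(max_iters):
        # th(s) = max_a { r(s, a) + sum_{s'} p(s' | s, a) h(s') }
        th = {s: max(r + sum(p * h[s2] for s2, p in outs) for r, outs in model[s].values())
              for s in model}
        rho = th[ref]                            # gain estimate read off the reference state
        new_h = {s: th[s] - rho for s in model}  # subtract rho as in Eq. 2.10
        delta = max(abs(new_h[s] - h[s]) for s in model)
        h = new_h
        if delta < tol:
            break
    return rho, h
```

In a two-state example where staying in `y` earns 2 per step and staying in `x` earns only 1, the optimal gain is 2 and the bias of `x` is −2 relative to `y`.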

2.4 Model-free Reinforcement Learning

There are two main roles for experience in a reinforcement learning agent: it may be used to learn the policy directly, or it may be used to learn a model, which can then be used for planning and for learning a value function or policy. This relationship is visualized in Figure 2.2. Using experience to learn the policy directly is called "direct RL" [23] or "model-free" reinforcement learning. In this case, the model is learned implicitly as a part of the value function or policy. The case in which the model is learned explicitly is called "indirect RL" or "model-based" reinforcement learning, and is covered in Section 2.5. Both model-free and model-based RL have advantages: for example, model-free methods are not affected by biases in the structure or design of the model.

Figure 2.2: The relationship between model-free (direct) and model-based (indirect) reinforcement learning.

Initialize $Q(s, a)$ arbitrarily
Initialize $s$ to any starting state
for each step do
    Choose action $a$ from $s$ using the ε-greedy policy derived from $Q$
    Take action $a$, observe reward $r$ and next state $s'$
    $Q(s, a) \leftarrow Q(s, a) + \alpha\left[r + \gamma \max_{a' \in A(s')} Q(s', a') - Q(s, a)\right]$
    $s \leftarrow s'$
end

Algorithm 2.1: The Q-learning algorithm.

In this section I describe two common model-free algorithms: Q-learning and R-learning. Q-learning can be a discounted or total reward algorithm; the discounted form is described here. The objective is to find an optimal policy $\pi^*$ that maximizes the expected discounted future reward for each state $s$. The MDP is assumed to have an infinite horizon, and so future rewards are discounted exponentially with a discount factor $\gamma \in [0, 1)$. The optimal action-value function or Q-function gives the expected discounted future reward for any state $s$ when executing action $a$ and then following the optimal policy. The Q-function satisfies the following recurrence relation:

$$Q^*(s, a) = R(s, a) + \gamma \sum_{s'} P(s'|s, a) \max_{a'} Q^*(s', a') \tag{2.11}$$

The optimal policy for a state $s$ is the action $\arg\max_a Q^*(s, a)$ that maximizes the expected future discounted reward. The most common form of the Q-learning algorithm is shown in Algorithm 2.1.
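Algorithm 2.1 translates almost line for line into a tabular implementation. The Python below is a minimal sketch, assuming a hypothetical environment callback `step(s, a) -> (reward, next_state)` and the same action set in every state; Q-values start at 0 and greedy ties go to the first listed action.

```python
import random
from collections import defaultdict
from typing import Callable, DefaultDict, List, Tuple

State = int
Action = int
# Hypothetical environment interface: step(s, a) -> (reward, next_state)
Step = Callable[[State, Action], Tuple[float, State]]


def q_learning(step: Step, actions: List[Action], s0: State, n_steps: int,
               alpha: float = 0.1, gamma: float = 0.9, epsilon: float = 0.1,
               seed: int = 0) -> DefaultDict[Tuple[State, Action], float]:
    """Tabular Q-learning following Algorithm 2.1 (same action set in every state)."""
    rng = random.Random(seed)
    q: DefaultDict[Tuple[State, Action], float] = defaultdict(float)  # Q(s, a) starts at 0
    s = s0
    for _ in range(n_steps):
        # epsilon-greedy choice derived from Q (ties go to the first listed action)
        if rng.random() < epsilon:
            a = rng.choice(actions)
        else:
            a = max(actions, key=lambda b: q[(s, b)])
        r, s_next = step(s, a)
        # Off-policy target: r + gamma * max_{a'} Q(s', a')
        target = r + gamma * max(q[(s_next, b)] for b in actions)
        q[(s, a)] += alpha * (target - q[(s, a)])
        s = s_next
    return q
```

Because the target uses the maximum over next actions rather than the action actually taken, the learned values converge toward $Q^*$ even under heavy exploration, which is what makes Q-learning off-policy.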

R-learning [19] is an off-policy model-free average reward reinforcement learning algorithm. As with all average reward algorithms, the objective is to find an optimal policy $\pi^*$ that maximizes the reward per time step. The MDP is assumed to have an infinite horizon, but unlike in discounted methods, the value functions for a policy are defined relative to the average expected reward per time step under the policy.

R-learning is a standard TD control method similar to Q-learning. It maintains a value function for each state-action pair and a running estimate of the average reward $\rho$, which is an approximation of $\rho^*$, the true optimal average reward. See the complete algorithm in Algorithm 2.2. Note that I do not conduct any experiments using this algorithm in this thesis; it is included here because of its similarity to other methods I discuss, in particular H-learning, which is discussed in the next section.
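Since R-learning is not used in the experiments, only its core update is sketched here: one step of Algorithm 2.2 applied to a dictionary-backed Q-table. The function name and table layout are hypothetical, and every state is assumed to offer the same action list. Note that $\rho$ changes only when the updated action is greedy in $s$.

```python
from collections import defaultdict
from typing import DefaultDict, Hashable, List, Tuple

QTable = DefaultDict[Tuple[Hashable, Hashable], float]


def r_learning_update(q: QTable, rho: float, s: Hashable, a: Hashable, r: float,
                      s_next: Hashable, actions: List[Hashable],
                      alpha: float, beta: float) -> float:
    """Apply one R-learning update (Algorithm 2.2) in place to q; return the new rho."""
    best_next = max(q[(s_next, b)] for b in actions)
    # Relative TD update: r - rho + max_{a'} Q(s', a') - Q(s, a)
    q[(s, a)] += alpha * (r - rho + best_next - q[(s, a)])
    best_here = max(q[(s, b)] for b in actions)
    if q[(s, a)] == best_here:
        # The action is greedy after the update, so adjust the average-reward estimate.
        rho += beta * (r - rho + best_next - best_here)
    return rho
```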

2.5 Model-based Reinforcement Learning

Model-based RL has several advantages over model-free methods. Indirect methods can often make fuller use of limited experience, and thus converge to a better policy given fewer interactions with the environment. Having a model also provides more options: for example, one can choose to learn the model first and then use planning approaches to learn a policy off-line. This allows the luxury of testing several different forms of function approximation to determine which works best with a given problem, while minimizing the amount of experience data that must be gathered. In addition, a value function learned using a model usually needs to store a value only for each state, not for each state-action pair as with model-free methods such as Q-learning. If the model is compact, this can result in many fewer parameters being required to store the value function.

Initialize $\rho$ and $Q(s, a)$ arbitrarily
Initialize $s$ to any starting state
for each step do
    Choose action $a$ from $s$ using the ε-greedy policy derived from $Q$
    Take action $a$, observe reward $r$ and next state $s'$
    $Q(s, a) \leftarrow Q(s, a) + \alpha\left[r - \rho + \max_{a' \in A(s')} Q(s', a') - Q(s, a)\right]$
    if $Q(s, a) = \max_{a \in A(s)} Q(s, a)$ then
        $\rho \leftarrow \rho + \beta\left[r - \rho + \max_{a' \in A(s')} Q(s', a') - \max_{a \in A(s)} Q(s, a)\right]$
    $s \leftarrow s'$
end

Algorithm 2.2: The R-learning algorithm.

In this thesis, I explore several model-based RL algorithms based on "H-learning", which is an average reward learning algorithm. H-learning is model-based in that it uses explicitly represented action models $p(s'|s, u)$ and $r(s, u)$. In previous work, H-learning has been found to be more robust and faster than its model-free counterpart, R-learning [19, 24].

At every step, the H-learning algorithm updates the parameters of the value function in the direction of reducing the temporal difference error (TDE), i.e., the difference between the r.h.s. and the l.h.s. of the Bellman Equation 2.10:

$$TDE(s) = \max_{a \in A(s)} \left\{ r(s, a) + \sum_{s'=1}^{N} p(s'|s, a)\, h(s') \right\} - \rho - h(s) \tag{2.12}$$

One issue that still needs to be addressed in average-reward RL is the estimation of $\rho^*$, the optimal gain. Since it is unknown, H-learning uses $\rho$, an estimate of the average reward of the current greedy policy, instead. From Equation 2.10, it can be seen that $r(s, a) + h(s') - h(s)$ gives an unbiased estimate of $\rho^*$ when action $a$ is greedy in state $s$ and $s'$ is the next state. We may thus update $\rho$ as follows whenever a greedy action is taken:

$$\rho \leftarrow (1 - \alpha)\rho + \alpha\big(r(s, a) - h(s) + h(s')\big) \tag{2.13}$$

See Algorithm 2.3 for the complete algorithm.

Find an action $u \in U(s)$ that maximizes $R(s, u) + \sum_{q=1}^{N} p(q|s, u)\, h(q)$
Take an exploratory action or a greedy action in the current state $s$. Let $a$ be the action taken, $s'$ be the resulting state, and $r_{imm}$ be the immediate reward received.
Update the model parameters for $p(s'|s, a)$ and $R(s, a)$
if a greedy action was taken then
    $\rho \leftarrow (1 - \alpha)\rho + \alpha\big(R(s, a) - h(s) + h(s')\big)$
    $\alpha \leftarrow \frac{\alpha}{\alpha + 1}$
end
$h(s) \leftarrow \max_{u \in U(s)} \left\{ R(s, u) + \sum_{q=1}^{N} p(q|s, u)\, h(q) \right\} - \rho$
$s \leftarrow s'$

Algorithm 2.3: The H-learning algorithm. The agent executes each step when in state $s$.
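The class below is a minimal tabular sketch of Algorithm 2.3 in Python, not the thesis implementation. It assumes a small discrete state space, a count-based maximum-likelihood model for $p(s'|s, a)$ and $R(s, a)$, and a hypothetical callback `env_step(s, a) -> (reward, next_state)`. Scoring unvisited state-action pairs as 0 is an additional assumption that Algorithm 2.3 does not specify.

```python
import random
from collections import defaultdict
from typing import Callable, DefaultDict, Dict, Hashable, List, Tuple

# Hypothetical environment interface: env_step(s, a) -> (reward, next_state)
EnvStep = Callable[[Hashable, Hashable], Tuple[float, Hashable]]


class HLearner:
    """Tabular H-learning sketch (Algorithm 2.3) with a count-based learned model."""

    def __init__(self, actions: List[Hashable], epsilon: float = 0.1, seed: int = 0):
        self.actions = actions
        self.epsilon = epsilon
        self.alpha = 1.0                     # step size for rho, decays as alpha / (alpha + 1)
        self.rho = 0.0                       # average-reward estimate
        self.h: DefaultDict[Hashable, float] = defaultdict(float)    # bias values h(s)
        self.n: DefaultDict[Tuple, int] = defaultdict(int)           # visits to (s, a)
        self.r_sum: DefaultDict[Tuple, float] = defaultdict(float)   # summed rewards for (s, a)
        self.next_counts: DefaultDict[Tuple, Dict[Hashable, int]] = defaultdict(dict)
        self.rng = random.Random(seed)

    def r_hat(self, s, a) -> float:
        n = self.n[(s, a)]
        return self.r_sum[(s, a)] / n if n else 0.0

    def backup(self, s, a) -> float:
        """R(s, a) + sum_q p(q | s, a) h(q) under the learned model (0 if unvisited)."""
        n = self.n[(s, a)]
        if n == 0:
            return 0.0
        return self.r_hat(s, a) + sum(c / n * self.h[q] for q, c in self.next_counts[(s, a)].items())

    def step(self, s, env_step: EnvStep):
        greedy = max(self.actions, key=lambda u: self.backup(s, u))
        a = self.rng.choice(self.actions) if self.rng.random() < self.epsilon else greedy
        r, s_next = env_step(s, a)
        # Update the model parameters for p(s' | s, a) and R(s, a).
        self.n[(s, a)] += 1
        self.r_sum[(s, a)] += r
        counts = self.next_counts[(s, a)]
        counts[s_next] = counts.get(s_next, 0) + 1
        if a == greedy:
            # Eq. 2.13, using the model reward R(s, a) as in Algorithm 2.3
            self.rho = (1 - self.alpha) * self.rho + self.alpha * (self.r_hat(s, a) - self.h[s] + self.h[s_next])
            self.alpha = self.alpha / (self.alpha + 1)
        self.h[s] = max(self.backup(s, u) for u in self.actions) - self.rho
        return s_next
```

As a toy check, a single self-looping state with two actions worth rewards 1 and 2 should drive $\rho$ toward 2 once the better action has been explored.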

2.6 Multiagent Reinforcement Learning

The term "multiagent" may have several meanings. In this thesis, when referring to a description of a domain, "multiagent" means a factored joint action space, i.e. the actions available to the entire agent may be expressed as a Cartesian product of $n$ sets $a_1, a_2, \ldots, a_n$, each set corresponding to the actions of a separate, independent agent. All domains described in this thesis are multiagent domains in this sense.

When referring to algorithms for solving multiagent domains, there are two general categories: a "joint agent" approach, which treats the full set (or joint set) of all agent actions as a large list of actions that must be exhaustively searched to find the correct joint action, and a "multiagent" approach, which takes full advantage of the factored nature of the action space and typically searches, for each agent, only the local space of actions available to that agent. The joint agent approach is often slow, but an exhaustive search of the action space may sometimes find solutions that a multiagent approach could not. However, joint agent approaches are unlikely to scale to large numbers of agents. In this thesis, I discuss methods for scaling joint agent approaches in Chapter 3, and multiagent approaches in Chapters 4 and 5. Typically, the challenge of multiagent approaches is to introduce enough coordination between agents to compensate for the absence of an exhaustive search of the action space.

Within multiagent algorithms, there are two broad approaches: first, a centralized multiagent approach used to mitigate difficulties in scaling due to a large joint action space, or second, a distributed multiagent approach that is required due to the constraints of the domain, for example, when some method is needed to coordinate the actions of multiple robots acting in the world. The main difference between these approaches is that communication and sharing of data between agents is easy or effortless in the case of a centralized approach emphasizing scaling. For domains requiring a distributed multiagent approach, communication usually carries some sort of cost. In this thesis, I focus entirely on the benefits of a multiagent approach to scaling, and do not consider problems of communication between agents.

I present a brief example of a typical multiagent RL algorithm here, by adapting Q-learning to a multiagent context. In a multiagent approach, the global Q-function $Q(s, a)$ is approximated as a sum of agent-specific action-value functions: $Q(s, a) = \sum_{i=1}^{n} Q_i(s_i, a_i)$ [12]. Further, I approximate each agent-specific action-value as a function only of each agent's local state $s_i$. A "selfish" agent-based version of multiagent Q-learning [23] updates each agent's Q-value independently using the update function:

$$Q_i(s_i, a_i) \leftarrow Q_i(s_i, a_i) + \alpha \left[ R_i(s, a) + \gamma Q_i(s'_i, a^*_i) - Q_i(s_i, a_i) \right] \tag{2.14}$$

where $\alpha \in [0, 1]$ is the learning rate. The notation $Q_i$ indicates only that the Q-value is agent-based. The parameters used to store the Q-function may either be unique to that agent or shared between all agents. The term $R_i$ indicates that the reward is factored, i.e. a separate reward signal is given to each agent.
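The decomposition and the selfish update of Equation 2.14 can be sketched in a few lines of Python. This is an illustrative sketch only: it assumes each agent has a local state and a factored reward, and it takes $a^*_i$ to be agent $i$'s greedy action in $s'_i$, which is an assumption since the text does not define $a^*_i$ here. Passing the same table object for every agent gives the shared-parameter variant mentioned above.

```python
from collections import defaultdict
from typing import DefaultDict, Hashable, List, Sequence, Tuple

QTable = DefaultDict[Tuple[Hashable, Hashable], float]


def joint_q(tables: Sequence[QTable], local_states: Sequence[Hashable],
            joint_action: Sequence[Hashable]) -> float:
    """Q(s, a) approximated as sum_i Q_i(s_i, a_i)."""
    return sum(q[(s_i, a_i)] for q, s_i, a_i in zip(tables, local_states, joint_action))


def selfish_q_update(tables: Sequence[QTable], local_states: Sequence[Hashable],
                     joint_action: Sequence[Hashable], rewards: Sequence[float],
                     next_local_states: Sequence[Hashable], actions: List[Hashable],
                     alpha: float, gamma: float) -> None:
    """Apply Eq. 2.14 once per agent, taking a*_i as agent i's greedy action in s'_i (assumption)."""
    for q, s_i, a_i, r_i, s2_i in zip(tables, local_states, joint_action, rewards, next_local_states):
        best_next = max(q[(s2_i, b)] for b in actions)
        q[(s_i, a_i)] += alpha * (r_i + gamma * best_next - q[(s_i, a_i)])
```

With a shared table the agents' updates are applied one after another, so later agents see earlier agents' changes within the same step.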

Chapter 3 – The Three Curses of Dimensionality

Reinforcement learning algorithms suffer from three "curses of dimensionality": explosions in state and action spaces, and a large number of possible next states of an action due to stochasticity (or "outcome space" explosion) [16]. In this chapter, I explore several methods for mitigating each of these three curses individually. To mitigate the explosion in the state space, I introduce two related methods of function approximation, Tabular Linear Functions (TLFs) and relational templates. To mitigate the explosion in the action space common to multiagent algorithms, I suggest an approximate search of the action space using hill climbing. To help mitigate the explosion in the number of result states, I introduce ASH-learning and ATR-learning, which are a hybrid model-free/model-based approach using afterstates.

3.1 Function Approximation

Unfortunately, table-based reinforcement learning does not scale to large state spaces such as those explored in this thesis, due to limitations of both space and convergence speed. The value function needs to be approximated using a more compact representation to make it scale with size. Linear function approximators are among the simplest and fastest means of approximation. However, since the value function is usually highly nonlinear in the primitive features of the domain, the user needs to carefully hand-design high-level features so that the value function can be approximated by a function which is linear in them [27].

In the following sections I introduce two related function approximation schemes, "Tabular Linear Functions" (TLFs) and "Relational Templates", which generalize linear functions, tables, and tile coding. A TLF typically expresses a tradeoff between the small number of parameters used by typical linear functions and the expressiveness of a complete table. Like a table, a TLF may express a nonlinear value function, but like a linear function, it may store the value function compactly.

3.1.1 Tabular Linear Functions

A tabular linear function is a linear function of a set of "linear" features of the state, where the weights of the linear function are arbitrary functions of other discretized (or "nominal") features. Hence the weights can be stored in a table indexed by the nominal features, and when multiplied with the linear features of the state and summed, they produce the final value function.

More formally, a tabular linear function (TLF) is represented by Equation 3.1, which is a sum of $n$ terms. Each term is a product of a linear feature $\phi_i$ and a weight $\theta_i$. The features $\phi_i$ need not be distinct from each other, although they usually are. Each weight $\theta_i$ is a function of $m_i$ nominal features $f_{i,1}, \ldots, f_{i,m_i}$.

$$v(s) = \sum_{i=1}^{n} \theta_i\big(f_{i,1}(s), \ldots, f_{i,m_i}(s)\big)\, \phi_i(s) \tag{3.1}$$

A TLF reduces to a linear function when there are no nominal features, i.e. when $\theta_1, \ldots, \theta_n$ are scalar values. One can also view any TLF as a purely linear function where there is a term for every possible set of values of the nominal features:

$$v(s) = \sum_{i=1}^{n} \sum_{k \in K} \theta_{i,k}\, \phi_i(s)\, I\big(f_i(s) = k\big) \tag{3.2}$$

Here $I(f_i(s) = k)$ is 1 if $f_i(s) = k$ and 0 otherwise, $f_i(s)$ is the vector of values $f_{i,1}(s), \ldots, f_{i,m_i}(s)$ in Equation 3.1, and $K$ is the set of all possible vectors. TLFs reduce to a table when there is a single term and no linear features, i.e., $n = 1$ and $\phi_1 = 1$ for all states. They reduce to tile coding or coarse coding when there are no linear features but there are multiple terms, i.e., $\phi_i = 1$ for all $i$ and $n > 1$. The nominal features of each term can be viewed as defining a tiling, or partition of the state space into regions; the tilings of different terms overlap, and the terms are simply added up to yield the final value of the state [23].

Most forms of TLF are created using prior knowledge about the domain (see Section 3.4.1). What if such prior knowledge does not exist? It is still possible to take advantage of tabular linear functions by constraining them with some syntactic restrictions. For example, we can define a set of terms over all possible pairs (or triples) of primitive state features. We then sum over all $\binom{n}{2}$ terms, where $n$ indicates the number of primitive state features. For example, if we have four features $f_1, \ldots, f_4$, we will then have 6 possible tuples of 2 features each:

$$v(s) = \theta_1(f_1, f_2) + \theta_2(f_1, f_3) + \theta_3(f_1, f_4) + \theta_4(f_2, f_3) + \theta_5(f_2, f_4) + \theta_6(f_3, f_4) \tag{3.3}$$

Since this is also an instance of tile coding, I call this all feature-pairs tiling.
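All feature-pairs tiling is easy to sketch: one table per pair of primitive features, indexed by the pair's values. The Python below is a minimal sketch, assuming integer-valued primitive features given as a tuple; the class name is hypothetical. Because every linear feature is 1 here, the TLF update of Equation 3.4 reduces to adding $\beta \cdot TDE(s)$ to each matching entry.

```python
from collections import defaultdict
from itertools import combinations
from typing import DefaultDict, Dict, Sequence, Tuple

Pair = Tuple[int, int]


class FeaturePairsTiling:
    """All feature-pairs tiling: one table per pair of primitive features (Eq. 3.3)."""

    def __init__(self, n_features: int):
        self.pairs = list(combinations(range(n_features), 2))    # C(n, 2) terms
        self.tables: Dict[Pair, DefaultDict[Tuple[int, int], float]] = {
            p: defaultdict(float) for p in self.pairs
        }

    def value(self, x: Sequence[int]) -> float:
        # v(s) = sum over pairs (i, j) of theta_ij(f_i(s), f_j(s))
        return sum(self.tables[(i, j)][(x[i], x[j])] for i, j in self.pairs)

    def update(self, x: Sequence[int], tde: float, beta: float) -> None:
        # phi = 1 for every term, so each matching entry moves by beta * TDE(s)
        for i, j in self.pairs:
            self.tables[(i, j)][(x[i], x[j])] += beta * tde
```

After one update at state (1, 0, 2, 1), a different state such as (1, 0, 0, 0) already receives a share of the value through the one pair it has in common, $(f_1, f_2) = (1, 0)$; this sharing is the generalization that tile coding provides.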

An advantage of TLFs is that they provide a flexible but simple framework to consider and incorporate different assumptions about the functional form of the value function and the set of relevant features.

In general, the value function is represented in the parameterized functional form of Equation 3.1, with weights $\theta_1, \ldots, \theta_n$ and linear features $\phi_1, \ldots, \phi_n$. Each weight $\theta_i$ is a function of $m_i$ nominal features $f_{i,1}, \ldots, f_{i,m_i}$. Each $\theta_i$ is then updated using the following equation:

$$\theta_i\big(f_{i,1}(s), \ldots, f_{i,m_i}(s)\big) \leftarrow \theta_i\big(f_{i,1}(s), \ldots, f_{i,m_i}(s)\big) + \beta\, TDE(s)\, \nabla_{\theta_i} v(s) \tag{3.4}$$

where $\nabla_{\theta_i} v(s) = \phi_i(s)$ and $\beta$ is the learning rate.

This update adjusts the value function to reduce the temporal difference error in state $s$. It is very similar to the update used for ordinary linear value functions. Unlike with a normal linear value function, however, only those table entries that match the current state's nominal features are updated, in proportion to the value of the linear feature $\phi_i(s)$.
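The TLF representation of Equation 3.1 and its update in Equation 3.4 can be sketched as follows. This is an illustrative Python sketch, not the thesis implementation: each term pairs a hypothetical nominal-feature function returning the tuple $(f_{i,1}(s), \ldots, f_{i,m_i}(s))$ with a linear-feature function $\phi_i(s)$, states are assumed to be dictionaries of feature values, and the TDE is assumed to be computed elsewhere.

```python
from collections import defaultdict
from dataclasses import dataclass, field
from typing import Any, Callable, DefaultDict, Dict, List, Tuple

State = Dict[str, Any]    # hypothetical: a state maps feature names to values


@dataclass
class TLFTerm:
    nominal: Callable[[State], Tuple[Any, ...]]    # s -> (f_{i,1}(s), ..., f_{i,m_i}(s))
    linear: Callable[[State], float]               # s -> phi_i(s)
    table: DefaultDict[Tuple[Any, ...], float] = field(default_factory=lambda: defaultdict(float))


class TabularLinearFunction:
    def __init__(self, terms: List[TLFTerm]):
        self.terms = terms

    def value(self, s: State) -> float:
        # Eq. 3.1: v(s) = sum_i theta_i(f_{i,1}(s), ..., f_{i,m_i}(s)) * phi_i(s)
        return sum(t.table[t.nominal(s)] * t.linear(s) for t in self.terms)

    def update(self, s: State, tde: float, beta: float) -> None:
        # Eq. 3.4: only the entry selected by s's nominal features changes,
        # in proportion to the gradient phi_i(s).
        for t in self.terms:
            t.table[t.nominal(s)] += beta * tde * t.linear(s)
```

For instance, a term whose weight is indexed by a (hypothetical) vehicle location and multiplied by an inventory level, plus a constant term with no nominal features ($\phi = 1$), gives a value that is linear in inventory but with a slope that depends on location.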

Table 3.1: Various relational templates used in experiments. See Table 3.2 for descriptions of relational features, and Section 3.4.2 for a description of the domain.

| No. | Description |
|---|---|
| #1 | ⟨Distance(A, B), AgentHP(B), TaskHP(A), UnitsInrange(B)⟩ |
| #2 | ⟨UnitType(B), TaskType(A), Distance(A, B), AgentHP(B), TaskHP(A), UnitsInrange(A)⟩ |
| #3 | ⟨UnitType(B), Distance(A, B), AgentHP(B), TaskHP(A), UnitsInrange(A)⟩ |
| #4 | ⟨TaskType(A), Distance(A, B), AgentHP(B), TaskHP(A), UnitsInrange(A)⟩ |
| #5 | ⟨Distance(A, B), AgentHP(B), TaskHP(A), UnitsInrange(B), TasksInrange(B)⟩ |
| #6 | ⟨UnitType(B), TaskType(A), Distance(A, B), AgentHP(B), TaskHP(A), UnitsInrange(A), TasksInrange(B)⟩ |
| #7 | ⟨UnitType(B), Distance(A, B), AgentHP(B), TaskHP(A), UnitsInrange(A), TasksInrange(B)⟩ |
| #8 | ⟨TaskType(A), Distance(A, B), AgentHP(B), TaskHP(A), UnitsInrange(A), TasksInrange(B)⟩ |
| #9 | ⟨UnitX(A), UnitY(A), UnitX(B), UnitY(B)⟩ |

3.1.2 Relational Templates

Many domains are object-oriented, where the state consists of multiple objects or units of different classes, each with multiple attributes. Relational templates are a "lifted" version of tabular linear functions, generalizing them to object-oriented domains [7].

Table 3.2: Meaning of various relational features.

| Feature | Constraint | Meaning |
|---|---|---|
| Distance(A, B) | Task(A) ∧ Agent(B) | Manhattan distance between units |
| AgentHP(B) | Agent(B) | Hit points of an agent |
| TaskHP(A) | Task(A) | Hit points of a task |
| UnitsInrange(A) | Task(A) | Count of the number of agents able to attack a task |
| TasksInrange(B) | Agent(B) | Count of the number of enemies able to attack an agent |
| UnitX(B) | Agent(B) | X-coordinate of an agent |
| UnitY(B) | Agent(B) | Y-coordinate of an agent |
| UnitType(B) | Agent(B) | Type (archery or infantry) of an agent |
| TaskType(A) | Task(A) | Type (tower, ballista, or knight) of a task |

A relational template is defined by a set of relational features over shared variables (see Table 3.1). Each relational feature may have certain constraints on the objects that can be passed to it; for example, in Table 3.2 each feature has a type constraint on its variables. Each template is instantiated in a state by binding its variables to units of the correct type. An instantiated template $i$ defines a table $\theta_i$ indexed by the values of its features in the current state. In general, each template may give rise to multiple instantiations in the same state. The value $v(s)$ of a state $s$ is the sum of the values represented by all instantiations of all templates:

$$v(s) = \sum_{i=1}^{n} \sum_{\sigma \in I(i, s)} \theta_i\big(f_{i,1}(s, \sigma), \ldots, f_{i,m_i}(s, \sigma)\big) \tag{3.5}$$

where $i$ is a particular template, $I(i, s)$ is the set of possible instantiations of $i$ in state $s$, and $\sigma$ is a particular instantiation of $i$ that binds the variables of the template to units in the state. The relational features $f_{i,1}(s, \sigma), \ldots, f_{i,m_i}(s, \sigma)$ map state $s$ and instantiation $\sigma$ to discrete values which index into the table $\theta_i$. All instantiations of each template $i$ share the same table $\theta_i$, which is updated for each $\sigma$ using the following equation:

$$\theta_i\big(f_{i,1}(s, \sigma), \ldots, f_{i,m_i}(s, \sigma)\big) \leftarrow \theta_i\big(f_{i,1}(s, \sigma), \ldots, f_{i,m_i}(s, \sigma)\big) + \alpha\, TDE(s, \sigma) \tag{3.6}$$

where $\alpha$ is the learning rate. This update adjusts the value $v(s)$ to reduce the temporal difference error in state $s$. In some domains, the number of objects can grow or shrink over time: this merely changes the number of instantiations of a template.
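The following Python sketch illustrates Equations 3.5 and 3.6 for templates over two variables, with A bound to tasks and B bound to agents as in the type constraints of Table 3.2. The unit records, the feature functions (`distance`, `agent_hp`, loosely modeled on Distance(A, B) and AgentHP(B)), and the use of a single state-level TDE for every instantiation in place of $TDE(s, \sigma)$ are all simplifying assumptions for illustration.

```python
from collections import defaultdict
from itertools import product
from typing import Any, Callable, DefaultDict, Dict, List, Sequence, Tuple

Unit = Dict[str, Any]                       # hypothetical unit record
Feature = Callable[[Unit, Unit], Any]       # relational feature f(A, B)


class RelationalTemplate:
    """Template over variables A (tasks) and B (agents); all instantiations share one table."""

    def __init__(self, features: Sequence[Feature]):
        self.features = list(features)
        self.table: DefaultDict[Tuple[Any, ...], float] = defaultdict(float)

    def instantiations(self, units: List[Unit]) -> List[Tuple[Unit, Unit]]:
        # Type constraints Task(A) and Agent(B): bind A to tasks and B to agents.
        tasks = [u for u in units if u["kind"] == "task"]
        agents = [u for u in units if u["kind"] == "agent"]
        return list(product(tasks, agents))

    def key(self, a: Unit, b: Unit) -> Tuple[Any, ...]:
        return tuple(f(a, b) for f in self.features)


def value(templates: Sequence[RelationalTemplate], units: List[Unit]) -> float:
    """Eq. 3.5: sum the table entries of every instantiation of every template."""
    return sum(t.table[t.key(a, b)] for t in templates for a, b in t.instantiations(units))


def update(templates: Sequence[RelationalTemplate], units: List[Unit],
           tde: float, alpha: float) -> None:
    """Eq. 3.6, simplified so that every instantiation uses the state-level TDE(s)."""
    for t in templates:
        for a, b in t.instantiations(units):
            t.table[t.key(a, b)] += alpha * tde


def distance(a: Unit, b: Unit) -> int:
    return abs(a["x"] - b["x"]) + abs(a["y"] - b["y"])     # Manhattan distance


def agent_hp(a: Unit, b: Unit) -> int:
    return b["hp"]
```

Adding a unit simply adds instantiations: a new agent whose feature values match an entry that has already been trained contributes that entry's value immediately, without any new parameters.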

One template is more refined than another if it has a superset of features. The refinement relationship defines a hierarchy over the templates, with the base template forming the root and the most refined templates at the leaves. The values in the tables of any intermediate template in this hierarchy can be computed from its child template by summing up the entries in its table that refine a given entry in the parent template. Hence, we could avoid maintaining the intermediate template tables explicitly. However, this adds to the complexity of action selection and updates, so my implementation explicitly maintains all templates.

3.2 Hill Climbing for Action Space Search

The second curse of dimensionality in reinforcement learning is the exponential growth of the joint action space and of the corresponding time required to search this action space. To mitigate this problem, one may implement a simple form of hill climbing, which can greatly speed up the action selection process with minimal loss in the quality of the resulting policy.

In my experiments, hill climbing was used only during training. This is possible only when using an off-policy learning method, such as H-learning (Algorithm 2.3). I found that full exploitation of the policy during training is not necessarily conducive to improved performance during testing. It is only important that potentially high-value states are explored as often as necessary to learn their true values; this kind of good exploration is a property shared by both complete and hill climbing searches of the action space.

I performed hill climbing by noting that every joint action $\vec{a}$ is a vector of sub-actions, each by a single agent, i.e., $\vec{a} = (a_1, \ldots, a_k)$. This vector is initialized with all neutral actions. The definition of "neutral action" varies with the domain: a "wait" action would be a typical example for some domains. Starting at $a_1$, I consider a small neighborhood of actions (one for each possible action $a_1$ may take, other than the action it is currently set to), and $a_1$ is set to the best action. This process is repeated for each of the remaining sub-actions $a_2, \ldots, a_k$. The process then starts over at $a_1$, repeating until $\vec{a}$ has converged to a local optimum.
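The coordinate-wise search above can be sketched in Python as follows. The scoring function stands in for whatever the learner uses to rank joint actions (for example, immediate reward plus expected value of the next state); it, the function name, and the integer encoding of sub-actions are assumptions for illustration. Each sweep evaluates only $k(|A_i| - 1)$ joint actions instead of the $|A_i|^k$ required by exhaustive search.

```python
from typing import Callable, List, Sequence, Tuple

JointAction = Tuple[int, ...]


def hill_climb(n_agents: int, sub_actions: Sequence[int], neutral: int,
               score: Callable[[JointAction], float], max_sweeps: int = 100) -> JointAction:
    """Coordinate-wise hill climbing over a factored joint action (a_1, ..., a_k)."""
    a: List[int] = [neutral] * n_agents      # start with every agent taking the neutral action
    best = score(tuple(a))
    for _ in range(max_sweeps):
        improved = False
        for i in range(n_agents):
            # Neighborhood: change only agent i's sub-action; keep the best one found.
            for b in sub_actions:
                if b == a[i]:
                    continue
                candidate = a.copy()
                candidate[i] = b
                v = score(tuple(candidate))
                if v > best:
                    a, best, improved = candidate, v, True
        if not improved:                     # local optimum: no single-agent change helps
            break
    return tuple(a)
```

When the score decomposes over agents the search finds the global optimum, but a score that rewards only a coordinated change by several agents at once can leave it stuck at the neutral joint action; this is the kind of loss that exhaustive search avoids.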

3.3 Reducing Result-Space Explosion

The third curse of dimensionality arises from the difficulty of efficiently calculating the expected value of the next state in domains with many possible resulting states. This is sometimes called the "result-space" explosion. Such domains often arise when there are many objects in the domain that are not agent-controlled, yet exhibit some unpredictable behavior. For example, in a real-time strategy game (see Section 3.4.2) there may be many enemy agents, each of which is acting according to some unknown or stochastic policy.

It should be noted that while model-free reinforcement learning may also suffer from having too many result states (thus requiring a lower learning rate and a longer time to converge), in this thesis I am primarily concerned with the time required to actually calculate the expected value of the next state. Model-free algorithms have a significant advantage here, as an explicit calculation of this value is usually needed only when using model-based reinforcement learning. One of the drawbacks of model-based methods is that they require stepping through all possible next states of a given action to compute the expected value of the next state, which is very time-consuming. Optimizing this step improves the speed of the algorithm considerably. Consider that we need to compute the term $\sum_{s'=1}^{N} p(s'|s, u)\, h(s')$ in Equation 2.12 to compute the Bellman error and update the parameters. Since the number of possible next states is often exponential in domain parameters such as the number of enemy agents, doing this calculation by brute force is expensive. I present three possible solutions to this problem in the next sections.

3.3.1 Efficient Expectation Calculation

For this first method to apply, the value function must be linear in any features whose values change stochastically. For example, in the product delivery domain (see Section 3.4.1), the only features whose values change stochastically are the shop inventory levels. Hence this solution may be applied to the linear inventory function approximation of my domain.

Under the above assumption, we can rewrite the exponential-size calculation $\sum_{s'=1}^{N} p(s'|s, u)\, h(s')$ in Equation 2.12 as $\sum_{s'=1}^{N} p(s'|s, u) \left( \sum_{l=1}^{n} \theta_l \phi_{l,s'} \right)$, which can be rewritten as $\sum_{l=1}^{n} \theta_l \sum_{s'=1}^{N} p(s'|s, u)\, \phi_{l,s'}$ and simplified to $\sum_{l=1}^{n} \theta_l E(\phi_{l,s'}|s, u)$, where $E(\phi_{l,s'}|s, u) = \sum_{s'=1}^{N} p(s'|s, u)\, \phi_{l,s'}$ represents the expected value of the feature $\phi_l$ in the next state under action $u$. $E(\phi_{l,s'}|s, u)$ is directly estimated by on-line sampling and stored in a factored form. Instead of taking time proportional to the number of possible next states, this only takes time proportional to the number of features, which is exponentially smaller. For example, if the current inventory level of shop $l$ is 2, the probability of the inventory going down by 1 in this step is 0.2, and the probability of its going down by 2 or more is 0, then $E(\phi_{l,s'}|s, u) = 2 - 1 \times 0.2 = 1.8$. By substituting $\sum_{l=1}^{n} \theta_l E(\phi_{l,s'}|s, u)$ for $\sum_{s'=1}^{N} p(s'|s, u)\, h(s')$ in Equation 2.12, we obtain the following temporal difference error:

$$TDE(s) = \max_{u \in U(s)} \left\{ r(s, u) + \sum_{l=1}^{n} \theta_l E(\phi_{l,s'}|s, u) \right\} - \rho - h(s) \tag{3.7}$$
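The saving comes from linearity of expectation, and a small numerical check makes it concrete. The Python below is a sketch under stated assumptions: each shop's inventory drop in one step follows a hypothetical distribution `drops[l]`, inventory is floored at 0, and shops are treated as independent only so that the brute-force joint enumeration can be built. The factored sum touches each shop's outcomes once, while the brute-force version enumerates the product of all shops' outcomes; both give the same expectation.

```python
from itertools import product
from typing import Dict, Sequence

# Hypothetical model: drops[l] maps "inventory decrease in this step" -> probability for shop l.
Drops = Dict[int, float]


def expected_level(level: int, drops: Drops) -> float:
    """E(phi_{l,s'} | s, u) for one shop; inventory is floored at 0 (assumption)."""
    return sum(p * max(level - d, 0) for d, p in drops.items())


def factored_expectation(theta: Sequence[float], levels: Sequence[int],
                         drops: Sequence[Drops]) -> float:
    """sum_l theta_l * E(phi_{l,s'} | s, u): time linear in the number of features."""
    return sum(t * expected_level(x, d) for t, x, d in zip(theta, levels, drops))


def brute_force_expectation(theta: Sequence[float], levels: Sequence[int],
                            drops: Sequence[Drops]) -> float:
    """sum_{s'} p(s' | s, u) h(s') by enumerating every joint outcome (independent shops assumed)."""
    total = 0.0
    for outcome in product(*(d.items() for d in drops)):
        p, h_next = 1.0, 0.0
        for t, x, (drop, p_drop) in zip(theta, levels, outcome):
            p *= p_drop
            h_next += t * max(x - drop, 0)
        total += p * h_next
    return total
```

`expected_level(2, {0: 0.8, 1: 0.2})` reproduces the worked example above, 2 − 1 × 0.2 = 1.8 (up to floating-point rounding).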

3.3.2 ASH-learning

A second method for optimizing the calculation of the expectation is a different algorithm I call ASH-learning, which stands for Afterstate H-learning. This is based on the notion of afterstates [23], also called "post-decision states" [16]. Afterstates are created by conceptually splitting the effects of an agent's action into "action-dependent" effects and "action-independent" (or environmental) effects. The afterstate is the state that results by taking into account the action-dependent effects, but not the action-independent effects.

Figure 3.1: Progression of states ($s$, $s'$, and $s''$) and afterstates ($s_a$ and $s'_{a'}$).

If we consider Figure 3.1, we can view the progression of states and afterstates as $s \xrightarrow{a} s_a \rightarrow s' \xrightarrow{a'} s'_{a'} \rightarrow s''$. The "a" subscript used here indicates that $s_a$ is the afterstate of state $s$ and action $a$. The action-independent effects of the environment have created state $s'$ from afterstate $s_a$. The agent chooses action $a'$, leading to afterstate $s'_{a'}$ and receiving reward $r(s', a')$. The environment again stochastically selects a state, and so on. The h-values may now be redefined in these terms:

$$h(s_a) = E\big(h(s')\big) \tag{3.8}$$

$$h(s') = \max_{u \in U(s')} \left\{ r(s', u) + \sum_{s'_u=1}^{N} p(s'_u|s', u)\, h(s'_u) \right\} - \rho^* \tag{3.9}$$

If we substitute Equation 3.9 into Equation 3.8, we obtain this Bellman equation:

$$h(s_a) = E\left( \max_{u \in U(s')} \left\{ r(s', u) + \sum_{s'_u=1}^{N} p(s'_u|s', u)\, h(s'_u) \right\} \right) - \rho^* \tag{3.10}$$

Here the $s'_u$ notation indicates the afterstate obtained by taking action $u$ in state $s'$. I estimate the expectation of the max above via sampling in the ASH-learning algorithm (Algorithm 3.1). Since this avoids looping through all possible next states, the algorithm is much faster. In the domains explored in this chapter, the afterstate is deterministic given the agent's actions, but the stochastic effects due to the environment are unknown. Using afterstates to learn the expectation of the value of the next state takes advantage of this knowledge. For such domains with deterministic agent actions, we do not need to learn $p(s'_u|s', u)$, providing a significant savings in memory, computation time, and code complexity. By storing only the values of states rather than state-action pairs, this method also retains the advantages of model-based H-learning.

1  Find an action $u \in U(s')$ that maximizes $\left\{ r(s', u) + \sum_{s'_u=1}^{N} p(s'_u|s', u)\, h(s'_u) \right\}$
2  Take an exploratory action or a greedy action in the state $s'$. Let $a'$ be the action taken, $s'_{a'}$ be the afterstate, and $s''$ be the resulting state.
3  Update the model parameters $p(s'_{a'}|s', a')$ and $r(s', a')$ using the immediate reward received.
4  if a greedy action was taken then
5      $\rho \leftarrow (1 - \alpha)\rho + \alpha\big(r(s', a') - h(s_a) + h(s'_{a'})\big)$
6      $\alpha \leftarrow \frac{\alpha}{\alpha + 1}$
7  end
8  $h(s_a) \leftarrow (1 - \beta)h(s_a) + \beta\left( \max_{u \in U(s')} \left\{ r(s', u) + \sum_{s'_u=1}^{N} p(s'_u|s', u)\, h(s'_u) \right\} - \rho \right)$
9  $s' \leftarrow s''$
10 $s_a \leftarrow s'_{a'}$

Algorithm 3.1: The ASH-learning algorithm. The agent executes steps 1-7 when in state $s'$.

The temporal difference error for the ASH-learning algorithm would be:

$$TDE(s_a) = \max_{u \in U(s')} \left\{ r(s', u) + \sum_{s'_u=1}^{N} p(s'_u|s', u)\, h(s'_u) \right\} - \rho - h(s_a) \tag{3.11}$$

which I use in Equation 3.4 when using TLFs for function approximation.
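For the deterministic-afterstate case described above, Algorithm 3.1 needs no transition model at all, only a reward model and a table over afterstates. The Python class below is a minimal tabular sketch under those assumptions. The `afterstate(s, u)` function and the reward callback are hypothetical. The code's `s` plays the role of $s'$ in Algorithm 3.1, `sa` is the previous afterstate $s_a$, and the caller is responsible for sampling the environment's next state from the returned afterstate $s'_{a'}$.

```python
import random
from collections import defaultdict
from typing import Callable, DefaultDict, Hashable, List, Tuple


class ASHLearner:
    """Tabular ASH-learning sketch (Algorithm 3.1) for deterministic afterstates."""

    def __init__(self, actions: List[Hashable], afterstate: Callable[[Hashable, Hashable], Hashable],
                 beta: float = 0.1, epsilon: float = 0.1, seed: int = 0):
        self.actions = actions
        self.afterstate = afterstate          # hypothetical deterministic map (s, u) -> s_u
        self.beta = beta
        self.epsilon = epsilon
        self.alpha = 1.0                      # rho step size, decays as alpha / (alpha + 1)
        self.rho = 0.0
        self.h: DefaultDict[Hashable, float] = defaultdict(float)   # h over afterstates
        self.r_sum: DefaultDict[Tuple, float] = defaultdict(float)
        self.r_n: DefaultDict[Tuple, int] = defaultdict(int)
        self.rng = random.Random(seed)

    def r_hat(self, s, u) -> float:
        n = self.r_n[(s, u)]
        return self.r_sum[(s, u)] / n if n else 0.0

    def q(self, s, u) -> float:
        # r(s, u) + h(s_u): no sum over outcomes because s_u is deterministic
        return self.r_hat(s, u) + self.h[self.afterstate(s, u)]

    def greedy(self, s) -> Hashable:
        return max(self.actions, key=lambda u: self.q(s, u))

    def act(self, sa: Hashable, s: Hashable, reward: Callable[[Hashable, Hashable], float]) -> Hashable:
        """One step in state s, reached from afterstate sa; returns the new afterstate."""
        g = self.greedy(s)
        a = self.rng.choice(self.actions) if self.rng.random() < self.epsilon else g
        r = reward(s, a)
        self.r_sum[(s, a)] += r               # update the reward model r(s, a)
        self.r_n[(s, a)] += 1
        s_a = self.afterstate(s, a)
        if a == g:
            self.rho = (1 - self.alpha) * self.rho + self.alpha * (self.r_hat(s, a) - self.h[sa] + self.h[s_a])
            self.alpha = self.alpha / (self.alpha + 1)
        # Sampled version of Eq. 3.10: move h(sa) toward max_u {r(s, u) + h(s_u)} - rho
        target = max(self.q(s, u) for u in self.actions) - self.rho
        self.h[sa] = (1 - self.beta) * self.h[sa] + self.beta * target
        return s_a
```

In a toy check where every afterstate leads back to the same state and the two actions earn 0 and 1, the learner should settle on the second action with $\rho$ close to 1.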

ASH-learning generalizes model-based H-learning and model-free R-learning [19]. ASH-learning reduces to H-learning if the afterstate is set to be the next state, treating all effects as action-dependent. Doing this, the expectation of the maximum in Equation 3.10 drops out, and we have the Bellman equation for H-learning in Equation 2.10.

To reduce ASH-learning to R-learning, two steps are required. First, we define the afterstate to be the state-action pair, which makes the action-dependent effects implicit. Second, note that for Equations 3.8 and 3.9, we assume the reward $r(s, a)$ in Figure 3.1 is given prior to the afterstate $s_a$. It is also valid to instead assume the reward is given after the afterstate. This is a conceptual difference only, but combined with the first step above, this small change allows us to reduce Equation 3.11 to the Bellman equation for R-learning:

$$h(s, a) = E\left( r(s, a) + \max_{u \in U(s')} \{ h(s', u) \} \right) - \rho^* \tag{3.12}$$

The transition probabilities $p(s'_u|s', u)$ drop out because the afterstate $(s', u)$ is deterministic given the state and action. Under these circumstances, ASH-learning reduces to model-free R-learning.

While in theory the afterstate may be defined as anything from the current state-action pair to the next state, in practice it is most useful when it has low stochasticity and small dimensionality. This is the case, for example, when an agent's actions are completely deterministic and stochasticity is due to the actions of the environment (possibly including other agents).
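To illustrate this kind of decomposition, the sketch below splits one shop's transition into a deterministic afterstate (the agent's unload) followed by a stochastic environment step (a customer purchase), anticipating the product delivery domain of Section 3.4.1. The single-shop setting, the function names, and the capacity of 4 (matching inventory levels 0-4) are illustrative assumptions rather than the thesis's implementation.

```python
import random


def afterstate(inventory: int, unload: int, capacity: int = 4) -> int:
    """Deterministic effect of the agent's action: unloading raises the inventory."""
    return min(capacity, inventory + unload)


def outcome_distribution(after: int, p_customer: float) -> dict:
    """p(s' | s_a): once the afterstate is known, only the customer visit is random."""
    if after == 0:
        return {0: 1.0}  # an empty shop cannot lose inventory
    return {after - 1: p_customer, after: 1.0 - p_customer}


def sample_next_state(after: int, p_customer: float, rng: random.Random) -> int:
    """Sample s' from the afterstate, as a simulator would."""
    if after > 0 and rng.random() < p_customer:
        return after - 1
    return after
```

With this split, a value function needs entries only for afterstates, and each afterstate has at most two successors for the shop regardless of which action produced it.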

3.3.3 ATR-learning

1 Initialize afterstate value function $av(\cdot)$

2 Initialize $s$ to a starting state

3 for each step do

4 Find action $u$ that maximizes $r(s, u) + av(s_u)$

5 Take an exploratory action or a greedy action in the state $s$. Let $a$ be the joint action taken, $r$ the reward received, $s_a$ the corresponding afterstate, and $s'$ the resulting state.

6 Update the model parameters $r(s, a)$.

7 $av(s_a) \leftarrow av(s_a) + \alpha\left(\max_{u \in A}\{ r(s', u) + av(s'_u) \} - av(s_a)\right)$

8 $s \leftarrow s'$

9 end

Algorithm 3.2: The ATR-learning algorithm, using the update of Equation 3.14.

In this section, I adapt ASH-learning to finite-horizon domains. Instead of using average reward, I calculate total reward. I call this variation of afterstate total-reward learning "ATR-learning". I define the afterstate-based value function of ATR-learning as $av(s_a)$, which satisfies the following Bellman equation:

$$av(s_a) = \sum_{s' \in S} p(s' \mid s_a) \max_{u \in A}\{ r(s', u) + av(s'_u) \} \qquad (3.13)$$

As with ASH-learning, I use sampling to avoid the expensive calculation of the expectation above. At every step, the ATR-learning algorithm updates the parameters of the value function in the direction of reducing the temporal difference error (TDE), i.e., the difference between the r.h.s. and the l.h.s. of the above Bellman equation:

$$\mathrm{TDE}(s_a) = \max_{u \in A}\{ r(s', u) + av(s'_u) \} - av(s_a). \qquad (3.14)$$

The ATR-learning algorithm is shown in Algorithm 3.2.
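The sampled update of Equation 3.14 can be sketched in tabular form as follows. The sketch assumes deterministic afterstates computed by a caller-supplied `afterstate(s, u)`, a reward model `r(s, u)` given as a function, and a dictionary `av` in place of a function approximator; these names are hypothetical. Giving a terminal state (one with no actions) a backup of zero is an assumption about how the finite horizon ends.

```python
from typing import Callable, Dict, Hashable, Iterable


def atr_td_error(av: Dict[Hashable, float],
                 r: Callable[[Hashable, Hashable], float],
                 actions: Callable[[Hashable], Iterable[Hashable]],
                 afterstate: Callable[[Hashable, Hashable], Hashable],
                 s_next: Hashable, prev_after: Hashable) -> float:
    """Eq. 3.14 with one sampled next state s': max_u {r(s',u) + av(s'_u)} - av(s_a)."""
    best = max(
        (r(s_next, u) + av.get(afterstate(s_next, u), 0.0) for u in actions(s_next)),
        default=0.0,  # terminal state: no further reward (assumption)
    )
    return best - av.get(prev_after, 0.0)


def atr_update(av, alpha, r, actions, afterstate, s_next, prev_after) -> float:
    """Line 7 of Algorithm 3.2: av(s_a) <- av(s_a) + alpha * TDE(s_a)."""
    delta = atr_td_error(av, r, actions, afterstate, s_next, prev_after)
    av[prev_after] = av.get(prev_after, 0.0) + alpha * delta
    return delta
```

Because only the single observed $s'$ enters the update, the sum over $s' \in S$ in Equation 3.13 is never computed explicitly; averaging over many updates plays its role.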

3.4 Experimental Results

In this section, I describe the two domains used in my experiments: a product delivery domain and a real-time strategy game domain. These domains illustrate the three curses of dimensionality, each having multiple agents and large numbers of states, actions, and possible result states.

While discussing each domain, I show how it exhibits each curse of dimensionality. In general, the number of agents has the largest influence on the number of states, actions, and result states: these are usually exponential in the number of agents. If the environment includes several random actors (for example, customers or enemy agents), they also increase the number of possible result states.

Finally, I present my experiments involving these domains and the techniques described so far.

3.4.1 The Product Delivery Domain

I simplify many of the complexities of the real-world delivery problem, including varieties of products, seasonal changes in demand, constraints on the availability of labor and on routing, the extra costs due to serving multiple shops in the same trip, etc. Rather than building a deployable solution for a real-world problem, my goal is to scale the methods of reinforcement learning to address the combinatorial core of the product delivery problem. This approach is consistent with many similar efforts in the operations research literature [4]. While RL has been applied separately to inventory control [28] and vehicle routing [16,20,21] in the past, I am not aware of any applications of RL to the integrated problem of real-time delivery of products that includes both.

Figure 3.2: The product delivery domain, with depot (square) and five shops (circles). Numbers indicate the probability of a customer visit at each time step.

I assume a supplier of a single product that needs to be delivered from a warehouse to several shops using several trucks. The goal is to ensure that the stores remain supplied while minimizing truck movements. I experimented with the instance of the problem shown in Figure 3.2. To simplify matters further, I assumed it takes one unit of time to move from any location to an adjacent location or to execute an unload action.

The shop inventory levels and truck load levels are discretized into 5 levels, 0-4. It is easy to see that the size of the state space is exponential in the number of trucks and the number of shops, which illustrates the first curse of dimensionality. I experimented with 4 trucks, 5 shops, and 10 possible truck locations. Counting 5 inventory levels for each of the 5 shops, 5 load levels for each of the 4 trucks, and 10 locations for each of the 4 trucks gives a state-space size of $(5^5)(5^4)(10^4) = 19{,}531{,}250{,}000$.
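The state-space count can be reproduced with a few lines of Python. The function name and its default arguments are illustrative; the formula simply multiplies the number of values taken by each state variable.

```python
def state_space_size(n_trucks: int, n_shops: int,
                     n_levels: int = 5, n_locations: int = 10) -> int:
    """Product of the domains of all state variables in the delivery domain."""
    shop_inventories = n_levels ** n_shops      # each shop: inventory level 0-4
    truck_loads = n_levels ** n_trucks          # each truck: load level 0-4
    truck_locations = n_locations ** n_trucks   # each truck: one of 10 locations
    return shop_inventories * truck_loads * truck_locations


print(state_space_size(n_trucks=4, n_shops=5))  # 19531250000
```

Each additional truck multiplies the count by $5 \times 10 = 50$, and each additional shop multiplies it by 5.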

Each truck has 9 actions available at each time step: unload 1, 2, 3, or 4 units, move in one of up to four directions, or wait. A policy for this domain seeks to address the problems of inventory control, vehicle assignment, and routing simultaneously. The set of possible joint actions in any one state is the Cartesian product of the available actions of all trucks, and it is exponential in the number of trucks. Thus, just picking a greedy joint action with respect to the value function requires an exponential-size search at each learning step, illustrating the second curse of dimensionality. In my experiments with 4 trucks, $9^4 = 6561$ joint actions must be considered at each step. Although this is feasible, a faster approach is needed to scale to larger numbers of trucks, since the action search occurs at every step of the learning algorithm. Trucks are loaded automatically upon reaching the depot. A small negative reward of −0.1 is given for every "move" action of a truck to reflect the fuel cost.
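The exhaustive greedy search amounts to an argmax over a Cartesian product. In the sketch below, the per-truck action labels are hypothetical (the text says only that a truck may unload 1 to 4 units, move in up to four directions, or wait; the compass names assume a location with all four neighbors), and `score` stands in for whatever evaluates a joint action, such as $r(s, u)$ plus the value of the resulting afterstate.

```python
from itertools import product
from typing import Callable, Sequence, Tuple

# Hypothetical labels for the 9 per-truck actions.
TRUCK_ACTIONS = ("unload1", "unload2", "unload3", "unload4",
                 "north", "south", "east", "west", "wait")
MOVES = {"north", "south", "east", "west"}
MOVE_COST = -0.1  # fuel cost per truck move, from the text


def greedy_joint_action(n_trucks: int,
                        score: Callable[[Tuple[str, ...]], float]) -> Tuple[str, ...]:
    """Exhaustive argmax over all 9**n_trucks joint actions."""
    return max(product(TRUCK_ACTIONS, repeat=n_trucks), key=score)


def move_cost(joint_action: Sequence[str]) -> float:
    """Immediate fuel cost of a joint action."""
    return MOVE_COST * sum(a in MOVES for a in joint_action)


print(sum(1 for _ in product(TRUCK_ACTIONS, repeat=4)))  # 6561
```

With only the move cost as the score, the search returns the first joint action that contains no moves; a realistic score would also include the learned value of the afterstate. Either way, the loop visits $9^n$ joint actions for $n$ trucks.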

The consumption at each shop is modeled by decreasing the inventory level by 1 unit with some probability; these events are independent, and the probability varies from shop to shop. This can be viewed as a purchase by a customer. In general, the number of possible next states for a state and an action is exponential in the number of shops, since each shop's inventory may or may not decrease, thus illustrating the third and final curse of dimensionality. With my assumption of 5 shops, each of which may or may not be visited by a customer at each time step, this gives up to $2^5 = 32$ possible next states at each time step. I call this the stochastic branching factor: the maximum number of possible next states for any state-action pair. I also give a penalty of −5 if a customer enters a store and finds the shelves empty.
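The stochastic branching can be made concrete by enumerating every customer-visit pattern. In this sketch the visit probabilities are placeholders (the per-shop values from Figure 3.2 are not reproduced here), the function name is hypothetical, and the −5 stockout penalty is the one given in the text. Each outcome carries its probability, so an expectation over next states can be computed exactly by summing probability times value.

```python
from itertools import product
from typing import Iterator, Sequence, Tuple

STOCKOUT_PENALTY = -5.0  # customer finds the shelves empty (from the text)


def customer_outcomes(inventory: Sequence[int], visit_probs: Sequence[float]
                      ) -> Iterator[Tuple[Tuple[int, ...], float, float]]:
    """Enumerate all 2**n customer-visit patterns for n shops.

    Yields (next_inventory, probability, reward) per pattern. Visits are
    independent; a visit to a non-empty shop removes one unit, and a visit to
    an empty shop incurs the stockout penalty instead.
    """
    for visits in product((False, True), repeat=len(inventory)):
        prob, reward, nxt = 1.0, 0.0, []
        for level, p, visited in zip(inventory, visit_probs, visits):
            prob *= p if visited else 1.0 - p
            if visited and level == 0:
                reward += STOCKOUT_PENALTY
            nxt.append(level - 1 if visited and level > 0 else level)
        yield tuple(nxt), prob, reward


outcomes = list(customer_outcomes((0, 1, 2, 3, 4), [0.1, 0.2, 0.3, 0.4, 0.5]))
print(len(outcomes))  # 32
```

Because an empty shop stays empty whether or not it is visited, different visit patterns can lead to the same next state; in this example the 32 patterns yield only 16 distinct next states, which is why the branching factor is "up to" 32.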

3.4.2 The Real-Time Strategy Domain