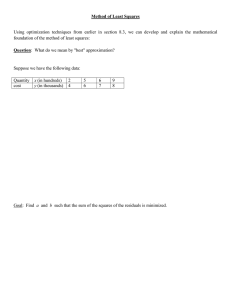


7. Regression Analysis

7.1 Simple linear regression for normal-theory Gauss-Markov models

Model 1:

$$Y_i = \beta_0 + \beta_1 X_i + \epsilon_i, \qquad \epsilon_i \sim NID(0, \sigma^2), \quad i = 1, \ldots, n.$$

Matrix formulation:

$$\begin{bmatrix} Y_1 \\ Y_2 \\ \vdots \\ Y_n \end{bmatrix} = \begin{bmatrix} 1 & X_1 \\ 1 & X_2 \\ \vdots & \vdots \\ 1 & X_n \end{bmatrix} \begin{bmatrix} \beta_0 \\ \beta_1 \end{bmatrix} + \begin{bmatrix} \epsilon_1 \\ \epsilon_2 \\ \vdots \\ \epsilon_n \end{bmatrix} \qquad \text{or} \qquad \mathbf{Y} = X\boldsymbol{\beta} + \boldsymbol{\epsilon}$$

The OLS estimator (b.l.u.e.) for $\boldsymbol{\beta}$ is

$$\mathbf{b} = (X^TX)^{-1}X^T\mathbf{Y} \qquad (\text{when does this exist?})$$

Here

$$X^TX = \begin{bmatrix} n & \sum_{i=1}^n X_i \\ \sum_{i=1}^n X_i & \sum_{i=1}^n X_i^2 \end{bmatrix}, \qquad X^T\mathbf{Y} = \begin{bmatrix} \sum_{i=1}^n Y_i \\ \sum_{i=1}^n X_iY_i \end{bmatrix}$$

Then

$$(X^TX)^{-1} = \frac{1}{n\sum_{i=1}^n X_i^2 - \left(\sum_{i=1}^n X_i\right)^2} \begin{bmatrix} \sum_{i=1}^n X_i^2 & -\sum_{i=1}^n X_i \\ -\sum_{i=1}^n X_i & n \end{bmatrix} = \frac{1}{n\sum_{i=1}^n (X_i - \bar{X})^2} \begin{bmatrix} \sum_{i=1}^n X_i^2 & -n\bar{X} \\ -n\bar{X} & n \end{bmatrix}$$

and

$$\mathbf{b} = \begin{bmatrix} b_0 \\ b_1 \end{bmatrix} = (X^TX)^{-1}X^T\mathbf{Y} = \frac{1}{n\sum_{i=1}^n (X_i - \bar{X})^2} \begin{bmatrix} \sum_{i=1}^n X_i^2 \sum_{i=1}^n Y_i - n\bar{X}\sum_{i=1}^n X_iY_i \\ -n\bar{X}\sum_{i=1}^n Y_i + n\sum_{i=1}^n X_iY_i \end{bmatrix} = \begin{bmatrix} \bar{Y} - b_1\bar{X} \\[4pt] \dfrac{\sum_{i=1}^n (X_i - \bar{X})Y_i}{\sum_{i=1}^n (X_i - \bar{X})^2} \end{bmatrix}$$

Covariance matrix:

$$Var(\mathbf{b}) = Var\left((X^TX)^{-1}X^T\mathbf{Y}\right) = (X^TX)^{-1}X^T(\sigma^2 I)X(X^TX)^{-1} = \sigma^2(X^TX)^{-1}$$

$$= \sigma^2 \begin{bmatrix} \dfrac{1}{n} + \dfrac{\bar{X}^2}{\sum_{i=1}^n (X_i - \bar{X})^2} & \dfrac{-\bar{X}}{\sum_{i=1}^n (X_i - \bar{X})^2} \\[10pt] \dfrac{-\bar{X}}{\sum_{i=1}^n (X_i - \bar{X})^2} & \dfrac{1}{\sum_{i=1}^n (X_i - \bar{X})^2} \end{bmatrix}$$

Estimate the covariance matrix for $\mathbf{b}$ as

$$S_{\mathbf{b}} = MSE\,(X^TX)^{-1}$$

where

$$MSE = \frac{SSE}{n-2} = \frac{1}{n-2}\,\mathbf{Y}^T(I - P_X)\mathbf{Y}.$$
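As a rough numerical check of the closed-form expressions for $b_0$, $b_1$ and $S_{\mathbf{b}}$, the sketch below regresses the evolved heat on the first explanatory variable (x1) of the cement data that appears later in this section. The choice of x1 as the single regressor and all variable names are illustrative assumptions, not part of the original notes.

```python
from math import sqrt

# Cement data (see the SAS program later in this section):
# x = percent tricalcium aluminate (x1), y = evolved heat.
x = [7, 1, 11, 11, 7, 11, 3, 1, 2, 21, 1, 11, 10]
y = [78.5, 74.3, 104.3, 87.6, 95.9, 109.2, 102.7,
     72.5, 93.1, 115.9, 83.8, 113.2, 109.4]
n = len(x)

xbar = sum(x) / n
ybar = sum(y) / n
sxx = sum((xi - xbar) ** 2 for xi in x)               # sum (X_i - Xbar)^2
sxy = sum((xi - xbar) * yi for xi, yi in zip(x, y))   # sum (X_i - Xbar) Y_i

# Closed-form OLS estimates
b1 = sxy / sxx
b0 = ybar - b1 * xbar

# The same estimates from (X^T X)^{-1} X^T Y written out for the 2x2 case
sum_x = sum(x)
sum_x2 = sum(xi * xi for xi in x)
sum_y = sum(y)
sum_xy = sum(xi * yi for xi, yi in zip(x, y))
det = n * sum_x2 - sum_x ** 2                         # equals n * sxx
b0_mat = (sum_x2 * sum_y - sum_x * sum_xy) / det
b1_mat = (-sum_x * sum_y + n * sum_xy) / det

# MSE = SSE / (n - 2) and S_b = MSE (X^T X)^{-1}
sse = sum((yi - b0 - b1 * xi) ** 2 for xi, yi in zip(x, y))
mse = sse / (n - 2)
s_b = [[mse * (1 / n + xbar ** 2 / sxx), -mse * xbar / sxx],
       [-mse * xbar / sxx, mse / sxx]]

print(f"b0 = {b0:.4f}, b1 = {b1:.4f}")
print(f"se(b0) = {sqrt(s_b[0][0]):.4f}, se(b1) = {sqrt(s_b[1][1]):.4f}")
```

The two routes to $\mathbf{b}$ agree up to rounding, and the determinant $n\sum X_i^2 - (\sum X_i)^2 = n\sum (X_i - \bar{X})^2$ is nonzero exactly when the $X_i$ are not all equal.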

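Analysis of Variance:

With $P_X = X(X^TX)^{-1}X^T$ and $P_{\mathbf{1}} = \mathbf{1}(\mathbf{1}^T\mathbf{1})^{-1}\mathbf{1}^T$,

$$\sum_{i=1}^n Y_i^2 = \mathbf{Y}^T\mathbf{Y} = \mathbf{Y}^T(I - P_X + P_X - P_{\mathbf{1}} + P_{\mathbf{1}})\mathbf{Y}$$

$$= \underbrace{\mathbf{Y}^T(I - P_X)\mathbf{Y}}_{SSE} + \underbrace{\mathbf{Y}^T(P_X - P_{\mathbf{1}})\mathbf{Y}}_{R(\beta_1 \mid \beta_0)} + \underbrace{\mathbf{Y}^TP_{\mathbf{1}}\mathbf{Y}}_{R(\beta_0)}$$

The first term is SSE. The second term is the "corrected model" sum of squares; call this $R(\beta_1 \mid \beta_0)$. The third term is the correction for the "mean"; call this $R(\beta_0)$.

(i) By Cochran's Theorem, these three sums of squares are multiples of independent chi-squared random variables.

(ii) By Result 4.7, $\frac{1}{\sigma^2}SSE \sim \chi^2_{(n-2)}$ if the model is correctly specified.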

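Reduction in residual sum of squares:

$$R(\beta_{k+1}, \ldots, \beta_{k+q} \mid \beta_0, \beta_1, \ldots, \beta_k) = \underbrace{\mathbf{Y}^T(I - P_{X_1})\mathbf{Y}}_{\substack{\text{sum of squared residuals} \\ \text{for the smaller model}}} - \underbrace{\mathbf{Y}^T(I - P_X)\mathbf{Y}}_{\substack{\text{sum of squared residuals} \\ \text{for the larger model}}}$$

Here

$$X = [\, X_1 \mid X_2 \,]$$

where $X_1$ contains the columns corresponding to $\beta_0, \beta_1, \ldots, \beta_k$ and $X_2$ contains the columns corresponding to $\beta_{k+1}, \ldots, \beta_{k+q}$.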

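Correction for the overall mean:

$$R(\beta_0) = \mathbf{Y}^TP_{\mathbf{1}}\mathbf{Y} = \mathbf{Y}^T(I - I + P_{\mathbf{1}})\mathbf{Y} = \mathbf{Y}^TI\,\mathbf{Y} - \mathbf{Y}^T(I - P_{\mathbf{1}})\mathbf{Y} = \sum_{i=1}^n (Y_i - 0)^2 - \sum_{i=1}^n (Y_i - \bar{Y})^2$$

The second sum is the sum of squared residuals from fitting the model $Y_i = \mu + \epsilon_i$. The OLS estimator for $\mu = E(Y_i)$ is

$$\hat{\mu} = (\mathbf{1}^T\mathbf{1})^{-1}\mathbf{1}^T\mathbf{Y} = (n)^{-1}\sum_{i=1}^n Y_i = \bar{Y}$$

An alternative formula is

$$R(\beta_0) = \mathbf{Y}^TP_{\mathbf{1}}\mathbf{Y} = \mathbf{Y}^T\mathbf{1}(\mathbf{1}^T\mathbf{1})^{-1}\mathbf{1}^T\mathbf{Y} = \left(\sum_{i=1}^n Y_i\right)(n)^{-1}\left(\sum_{i=1}^n Y_i\right) = (n)^{-1}\left(\sum_{i=1}^n Y_i\right)^2 = n\bar{Y}^2$$

with df $= rank(P_{\mathbf{1}}) = rank(\mathbf{1}) = 1$.

Reduction in the residual sum of squares for regression on $X$:

$$R(\beta_1 \mid \beta_0) = \mathbf{Y}^T(P_X - P_{\mathbf{1}})\mathbf{Y} = \mathbf{Y}^T(P_X - I + I - P_{\mathbf{1}})\mathbf{Y} = \mathbf{Y}^T(I - P_{\mathbf{1}} - (I - P_X))\mathbf{Y}$$

$$= \underbrace{\mathbf{Y}^T(I - P_{\mathbf{1}})\mathbf{Y}}_{\substack{\text{sum of squared residuals for} \\ \text{fitting the model } Y_i = \mu + \epsilon_i}} - \underbrace{\mathbf{Y}^T(I - P_X)\mathbf{Y}}_{\substack{\text{sum of squared residuals for} \\ \text{fitting the model } Y_i = \beta_0 + \beta_1X_i + \epsilon_i}}$$

ANOVA table:

| Source of variation | d.f. | Sum of Squares |
|---|---|---|
| Regression on $X$ | $1$ | $R(\beta_1 \mid \beta_0) = \mathbf{Y}^T(P_X - P_{\mathbf{1}})\mathbf{Y}$ |
| Residuals | $n - 2$ | $\mathbf{Y}^T(I - P_X)\mathbf{Y}$ |
| Corrected total | $n - 1$ | $\mathbf{Y}^T(I - P_{\mathbf{1}})\mathbf{Y}$ |
| Correction for the mean | $1$ | $\mathbf{Y}^TP_{\mathbf{1}}\mathbf{Y} = n\bar{Y}^2$ |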
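The entries of this ANOVA table can be computed without forming any projection matrix, because each quadratic form reduces to a sum of squared residuals. The following is a minimal sketch, again using x1 and y from the cement data given later in this section as an assumed single-regressor example; the variable names are hypothetical.

```python
# x = percent tricalcium aluminate (x1), y = evolved heat (cement data)
x = [7, 1, 11, 11, 7, 11, 3, 1, 2, 21, 1, 11, 10]
y = [78.5, 74.3, 104.3, 87.6, 95.9, 109.2, 102.7,
     72.5, 93.1, 115.9, 83.8, 113.2, 109.4]
n = len(x)

xbar = sum(x) / n
ybar = sum(y) / n
sxx = sum((xi - xbar) ** 2 for xi in x)
b1 = sum((xi - xbar) * yi for xi, yi in zip(x, y)) / sxx
b0 = ybar - b1 * xbar

ss_uncorrected = sum(yi * yi for yi in y)             # Y^T Y
r_b0 = n * ybar ** 2                                  # Y^T P_1 Y
ss_corrected = sum((yi - ybar) ** 2 for yi in y)      # Y^T (I - P_1) Y
sse = sum((yi - b0 - b1 * xi) ** 2
          for xi, yi in zip(x, y))                    # Y^T (I - P_X) Y
r_b1_given_b0 = ss_corrected - sse                    # Y^T (P_X - P_1) Y

mse = sse / (n - 2)
f_stat = (r_b1_given_b0 / 1) / mse                    # F statistic for H0: beta_1 = 0

print(f"{'Source':<26}{'d.f.':>5}{'Sum of Squares':>18}")
print(f"{'Regression on X':<26}{1:>5}{r_b1_given_b0:>18.4f}")
print(f"{'Residuals':<26}{n - 2:>5}{sse:>18.4f}")
print(f"{'Corrected total':<26}{n - 1:>5}{ss_corrected:>18.4f}")
print(f"{'Correction for the mean':<26}{1:>5}{r_b0:>18.4f}")
print(f"F = {f_stat:.4f} on 1 and {n - 2} d.f.")
```

The three pieces $R(\beta_0)$, $R(\beta_1 \mid \beta_0)$ and SSE add up to $\mathbf{Y}^T\mathbf{Y}$, and $R(\beta_1 \mid \beta_0)$ also equals $b_1^2\sum (X_i - \bar{X})^2$.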

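F-tests

From Result 4.7 we have

$$\frac{1}{\sigma^2}R(\beta_0) = \frac{1}{\sigma^2}\mathbf{Y}^TP_{\mathbf{1}}\mathbf{Y} \sim \chi^2_1(\delta^2)$$

where

$$\delta^2 = \frac{1}{\sigma^2}\boldsymbol{\beta}^TX^TP_{\mathbf{1}}X\boldsymbol{\beta} = \frac{1}{\sigma^2}\boldsymbol{\beta}^TX^TP_{\mathbf{1}}^TP_{\mathbf{1}}X\boldsymbol{\beta} = \frac{1}{\sigma^2}(P_{\mathbf{1}}X\boldsymbol{\beta})^T(P_{\mathbf{1}}X\boldsymbol{\beta}) = \frac{n}{\sigma^2}(\beta_0 + \beta_1\bar{X})^2$$

Also use Result 4.7 to show that

$$\frac{1}{\sigma^2}SSE = \frac{1}{\sigma^2}\mathbf{Y}^T(I - P_X)\mathbf{Y} \sim \chi^2_{(n-2)}$$

Use Result 4.8 to show that $SSE = \mathbf{Y}^T(I - P_X)\mathbf{Y}$ is distributed independently of $R(\beta_0) = \mathbf{Y}^TP_{\mathbf{1}}\mathbf{Y}$. This follows from

$$(I - P_X)P_{\mathbf{1}} = 0.$$

Consequently,

$$F = \frac{R(\beta_0)}{MSE} \sim F_{(1,\,n-2)}(\delta^2)$$

and this becomes a central F-distribution when the null hypothesis is true.

Hypothesis test: Reject $H_0: \beta_0 + \beta_1\bar{X} = 0$ if

$$F = \frac{R(\beta_0)}{MSE} > F_{(1,\,n-2),\,\alpha}$$

Test the null hypothesis $H_0: \beta_1 = 0$. Use

$$F = \frac{R(\beta_1 \mid \beta_0)/1}{MSE} = \frac{[\mathbf{Y}^T(P_X - P_{\mathbf{1}})\mathbf{Y}]/[1 \cdot \sigma^2]}{[\mathbf{Y}^T(I - P_X)\mathbf{Y}]/[(n-2)\sigma^2]} \sim F_{(1,\,n-2)}(\delta^2)$$

where

$$\delta^2 = \frac{1}{\sigma^2}\boldsymbol{\beta}^TX^T(P_X - P_{\mathbf{1}})X\boldsymbol{\beta} = \frac{1}{\sigma^2}\boldsymbol{\beta}^TX^T(P_X - P_{\mathbf{1}})^T(P_X - P_{\mathbf{1}})X\boldsymbol{\beta}$$

The null hypothesis is

$$H_0: (P_X - P_{\mathbf{1}})X\boldsymbol{\beta} = \mathbf{0}$$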

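Here, writing $\mathbf{x} = (X_1, \ldots, X_n)^T$ for the second column of $X$,

$$\begin{aligned}
(P_X - P_{\mathbf{1}})X\boldsymbol{\beta} &= (P_X - P_{\mathbf{1}})[\mathbf{1} \mid \mathbf{x}]\boldsymbol{\beta} \\
&= [(P_X - P_{\mathbf{1}})\mathbf{1} \mid (P_X - P_{\mathbf{1}})\mathbf{x}]\boldsymbol{\beta} \\
&= [P_X\mathbf{1} - P_{\mathbf{1}}\mathbf{1} \mid P_X\mathbf{x} - P_{\mathbf{1}}\mathbf{x}]\boldsymbol{\beta} \\
&= [\mathbf{1} - \mathbf{1} \mid \mathbf{x} - \bar{X}\mathbf{1}]\boldsymbol{\beta} \\
&= \begin{bmatrix} 0 & X_1 - \bar{X} \\ 0 & X_2 - \bar{X} \\ \vdots & \vdots \\ 0 & X_n - \bar{X} \end{bmatrix}\boldsymbol{\beta} = \beta_1(\mathbf{x} - \bar{X}\mathbf{1})
\end{aligned}$$

If any $X_i \neq X_j$, then we cannot have both

$$X_i - \bar{X} = 0 \quad \text{and} \quad X_j - \bar{X} = 0.$$

Consequently, if any $X_i \neq X_j$ then

$$(P_X - P_{\mathbf{1}})X\boldsymbol{\beta} = \mathbf{0} \quad \text{if and only if} \quad \beta_1 = 0.$$

Hence, the null hypothesis is $H_0: \beta_1 = 0$.

Note that

$$\delta^2 = \frac{1}{\sigma^2}\,\beta_1^2\sum_{i=1}^n (X_i - \bar{X})^2$$

Maximize the power of the F-test for $H_0: \beta_1 = 0$ vs. $H_A: \beta_1 \neq 0$ by maximizing

$$\delta^2 = \frac{1}{\sigma^2}\,\beta_1^2\sum_{i=1}^n (X_i - \bar{X})^2$$

Reparameterize the model:

$$Y_i = \alpha + \beta_1(X_i - \bar{X}) + \epsilon_i$$

with $\epsilon_i \sim NID(0, \sigma^2)$, $i = 1, \ldots, n$.

Interpretation of parameters:

$\alpha = E(Y)$ when $X = \bar{X}$.

$\beta_1$ is the change in $E(Y)$ when $X$ is increased by one unit.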
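Returning to the remark on power: for fixed $\beta_1$ and $\sigma^2$, the noncentrality parameter $\delta^2 = \frac{1}{\sigma^2}\beta_1^2\sum (X_i - \bar{X})^2$ depends on the design only through the spread of the $X_i$. The sketch below compares three hypothetical designs on the interval [0, 10]; the designs and the values of $\beta_1$ and $\sigma^2$ are invented purely for illustration.

```python
def noncentrality(xs, beta1, sigma2):
    """delta^2 = beta1^2 * sum (X_i - Xbar)^2 / sigma^2 for the test of H0: beta_1 = 0."""
    xbar = sum(xs) / len(xs)
    return beta1 ** 2 * sum((xi - xbar) ** 2 for xi in xs) / sigma2

# Three hypothetical designs with n = 10 observations each
endpoints = [0.0] * 5 + [10.0] * 5                # half the runs at each end of the range
evenly_spread = [10.0 * i / 9 for i in range(10)]
clustered = [4.0 + 0.2 * i for i in range(10)]    # all runs near the middle

beta1, sigma2 = 0.5, 4.0                          # assumed values, for illustration only
d_end = noncentrality(endpoints, beta1, sigma2)
d_even = noncentrality(evenly_spread, beta1, sigma2)
d_clu = noncentrality(clustered, beta1, sigma2)

for name, d in [("endpoints", d_end), ("evenly spread", d_even), ("clustered", d_clu)]:
    print(f"{name:<14} delta^2 = {d:.3f}")
```

Placing observations at the extremes of the range gives the largest $\delta^2$ and therefore the most powerful F-test for $\beta_1$, although such a design gives no information about departures from a straight-line model.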

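Matrix formulation:

$$\begin{bmatrix} Y_1 \\ \vdots \\ Y_n \end{bmatrix} = \begin{bmatrix} 1 & X_1 - \bar{X} \\ \vdots & \vdots \\ 1 & X_n - \bar{X} \end{bmatrix} \begin{bmatrix} \alpha \\ \beta_1 \end{bmatrix} + \begin{bmatrix} \epsilon_1 \\ \vdots \\ \epsilon_n \end{bmatrix} \qquad \text{or} \qquad \mathbf{Y} = W\boldsymbol{\gamma} + \boldsymbol{\epsilon}$$

Clearly,

$$W = X\begin{bmatrix} 1 & -\bar{X} \\ 0 & 1 \end{bmatrix} = XF \qquad \text{and} \qquad X = W\begin{bmatrix} 1 & \bar{X} \\ 0 & 1 \end{bmatrix} = WG$$

For this reparameterization, the columns of $W$ are orthogonal and

$$W^TW = \begin{bmatrix} n & 0 \\ 0 & \sum_{i=1}^n (X_i - \bar{X})^2 \end{bmatrix}, \qquad (W^TW)^{-1} = \begin{bmatrix} \dfrac{1}{n} & 0 \\ 0 & \dfrac{1}{\sum_{i=1}^n (X_i - \bar{X})^2} \end{bmatrix}, \qquad W^T\mathbf{Y} = \begin{bmatrix} \sum_{i=1}^n Y_i \\ \sum_{i=1}^n (X_i - \bar{X})Y_i \end{bmatrix}$$

Then,

$$\hat{\boldsymbol{\gamma}} = \begin{bmatrix} \hat{\alpha} \\ \hat{\beta}_1 \end{bmatrix} = (W^TW)^{-1}W^T\mathbf{Y} = \begin{bmatrix} \bar{Y} \\[4pt] \dfrac{\sum_{i=1}^n (X_i - \bar{X})Y_i}{\sum_{i=1}^n (X_i - \bar{X})^2} \end{bmatrix}$$

and

$$Var(\hat{\boldsymbol{\gamma}}) = \sigma^2(W^TW)^{-1} = \begin{bmatrix} \dfrac{\sigma^2}{n} & 0 \\ 0 & \dfrac{\sigma^2}{\sum_{i=1}^n (X_i - \bar{X})^2} \end{bmatrix}$$

Hence, $\bar{Y}$ and $\hat{\beta}_1 = \dfrac{\sum_{i=1}^n (X_i - \bar{X})Y_i}{\sum_{i=1}^n (X_i - \bar{X})^2}$ are uncorrelated (independent for the normal-theory Gauss-Markov model).

Analysis of Variance

The reparameterization does not change the ANOVA table.

$$P_X = X(X^TX)^{-1}X^T = W(W^TW)^{-1}W^T = P_W$$

and

$$\begin{aligned}
R(\beta_0) + R(\beta_1 \mid \beta_0) + SSE &= \mathbf{Y}^TP_{\mathbf{1}}\mathbf{Y} + \mathbf{Y}^T(P_X - P_{\mathbf{1}})\mathbf{Y} + \mathbf{Y}^T(I - P_X)\mathbf{Y} \\
&= \mathbf{Y}^TP_{\mathbf{1}}\mathbf{Y} + \mathbf{Y}^T(P_W - P_{\mathbf{1}})\mathbf{Y} + \mathbf{Y}^T(I - P_W)\mathbf{Y} \\
&= R(\alpha) + R(\beta_1 \mid \alpha) + SSE
\end{aligned}$$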
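A small numerical illustration of the centered parameterization, sketched with x1 and y from the cement data given later in this section (an assumed single-regressor example with hypothetical variable names): the two columns of $W$ are orthogonal, $\hat{\alpha} = \bar{Y}$, the slope estimate is unchanged, and both parameterizations give the same fitted values.

```python
x = [7, 1, 11, 11, 7, 11, 3, 1, 2, 21, 1, 11, 10]
y = [78.5, 74.3, 104.3, 87.6, 95.9, 109.2, 102.7,
     72.5, 93.1, 115.9, 83.8, 113.2, 109.4]
n = len(x)
xbar = sum(x) / n

# Second column of W: centered regressor X_i - Xbar
w = [xi - xbar for xi in x]

# Off-diagonal entry of W^T W: 1^T (x - Xbar 1), zero up to rounding
off_diagonal = sum(w)

# Because W^T W is diagonal, each estimate is a ratio of sums
alpha_hat = sum(y) / n
beta1_hat = sum(wi * yi for wi, yi in zip(w, y)) / sum(wi * wi for wi in w)

# Original parameterization, from the 2x2 normal equations
sum_x, sum_x2 = sum(x), sum(xi * xi for xi in x)
sum_y, sum_xy = sum(y), sum(xi * yi for xi, yi in zip(x, y))
det = n * sum_x2 - sum_x ** 2
b0 = (sum_x2 * sum_y - sum_x * sum_xy) / det
b1 = (n * sum_xy - sum_x * sum_y) / det

fitted_w = [alpha_hat + beta1_hat * wi for wi in w]   # W gamma-hat
fitted_x = [b0 + b1 * xi for xi in x]                 # X b

print(f"alpha-hat = {alpha_hat:.4f}, beta1-hat = {beta1_hat:.4f}")
print(f"b0 = {b0:.4f}, b1 = {b1:.4f}")
```

Since $P_W = P_X$, the residuals and every entry of the ANOVA table are the same for the two parameterizations; only the intercept and its interpretation change, with $b_0 = \hat{\alpha} - \hat{\beta}_1\bar{X}$.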

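7.2 Multiple regression analysis for the normal-theory Gauss-Markov model

$$Y_i = \beta_0 + \beta_1X_{1i} + \cdots + \beta_rX_{ri} + \epsilon_i$$

where $\epsilon_i \sim NID(0, \sigma^2)$ for $i = 1, \ldots, n$.

Matrix formulation:

$$\mathbf{Y} = X\boldsymbol{\beta} + \boldsymbol{\epsilon}, \qquad \boldsymbol{\epsilon} \sim N(\mathbf{0}, \sigma^2I)$$

where

$$X = \begin{bmatrix} 1 & X_{11} & X_{21} & \cdots & X_{r1} \\ 1 & X_{12} & X_{22} & \cdots & X_{r2} \\ \vdots & \vdots & \vdots & & \vdots \\ 1 & X_{1n} & X_{2n} & \cdots & X_{rn} \end{bmatrix} = [\, \mathbf{1} \mid X_1 \mid X_2 \mid \cdots \mid X_r \,], \qquad \boldsymbol{\beta} = \begin{bmatrix} \beta_0 \\ \beta_1 \\ \vdots \\ \beta_r \end{bmatrix}$$

Suppose $rank(X) = r + 1$, then

(i) the OLS estimator (b.l.u.e.) for $\boldsymbol{\beta}$ is $\mathbf{b} = (X^TX)^{-1}X^T\mathbf{Y}$

(ii) $Var(\mathbf{b}) = \sigma^2(X^TX)^{-1}$

(iii) $\hat{\mathbf{Y}} = X\mathbf{b} = X(X^TX)^{-1}X^T\mathbf{Y} = P_X\mathbf{Y}$

(iv) $\mathbf{e} = \mathbf{Y} - \hat{\mathbf{Y}} = (I - P_X)\mathbf{Y}$

(v) By Result 4.7,

$$\frac{1}{\sigma^2}SSE = \frac{1}{\sigma^2}\mathbf{e}^T\mathbf{e} = \frac{1}{\sigma^2}\mathbf{Y}^T(I - P_X)\mathbf{Y} \sim \chi^2_{(n-r-1)}$$

(vi) $MSE = \dfrac{SSE}{n - r - 1}$ is an unbiased estimator of $\sigma^2$.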
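Results (i), (iii), (iv) and (vi) can be traced numerically on the cement data used in the SAS and S-PLUS examples later in this section. The sketch below solves the normal equations $(X^TX)\mathbf{b} = X^T\mathbf{Y}$ by Gaussian elimination in plain Python; the helper names `solve` and `fit_ols` are hypothetical, and a production fit would normally use a QR-based routine rather than the normal equations.

```python
# Cement data: columns are x1, x2, x3, x4, y
DATA = [
    (7, 26, 6, 60, 78.5), (1, 29, 15, 52, 74.3), (11, 56, 8, 20, 104.3),
    (11, 31, 8, 47, 87.6), (7, 52, 6, 33, 95.9), (11, 55, 9, 22, 109.2),
    (3, 71, 17, 6, 102.7), (1, 31, 22, 44, 72.5), (2, 54, 18, 22, 93.1),
    (21, 47, 4, 26, 115.9), (1, 40, 23, 34, 83.8), (11, 66, 9, 12, 113.2),
    (10, 68, 8, 12, 109.4),
]

def solve(a, b):
    """Solve a z = b by Gaussian elimination with partial pivoting."""
    n = len(b)
    m = [row[:] + [bi] for row, bi in zip(a, b)]      # augmented matrix
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(col + 1, n):
            factor = m[r][col] / m[col][col]
            for c in range(col, n + 1):
                m[r][c] -= factor * m[col][c]
    z = [0.0] * n
    for r in range(n - 1, -1, -1):                    # back substitution
        z[r] = (m[r][n] - sum(m[r][c] * z[c] for c in range(r + 1, n))) / m[r][r]
    return z

def fit_ols(X, y):
    """Return (b, fitted values, residuals) for Y = X b + e via the normal equations."""
    p = len(X[0])
    xtx = [[sum(row[j] * row[k] for row in X) for k in range(p)] for j in range(p)]
    xty = [sum(row[j] * yi for row, yi in zip(X, y)) for j in range(p)]
    b = solve(xtx, xty)
    fitted = [sum(bj * xj for bj, xj in zip(b, row)) for row in X]
    resid = [yi - fi for yi, fi in zip(y, fitted)]
    return b, fitted, resid

X = [[1.0, *row[:4]] for row in DATA]                 # model matrix [1 | X1 | X2 | X3 | X4]
y = [row[4] for row in DATA]
n, r = len(y), 4

b, fitted, resid = fit_ols(X, y)
sse = sum(e * e for e in resid)                       # e^T e
mse = sse / (n - r - 1)                               # unbiased estimate of sigma^2

print("b   =", [round(bj, 5) for bj in b])
print(f"SSE = {sse:.5f}, MSE = {mse:.5f}")
```

The estimates can be compared with the Parameter Estimates and Analysis of Variance output from PROC REG shown later in this section.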

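ANOVA

| Source of variation | d.f. | Sum of squares |
|---|---|---|
| Model (regression on $X_1, \ldots, X_r$) | $r$ | $R(\beta_1, \ldots, \beta_r \mid \beta_0) = \mathbf{Y}^T(P_X - P_{\mathbf{1}})\mathbf{Y}$ |
| Error (or residuals) | $n - r - 1$ | $\mathbf{Y}^T(I - P_X)\mathbf{Y}$ |
| Corrected total | $n - 1$ | $\mathbf{Y}^T(I - P_{\mathbf{1}})\mathbf{Y}$ |
| Correction for the mean | $1$ | $R(\beta_0) = \mathbf{Y}^TP_{\mathbf{1}}\mathbf{Y} = n\bar{Y}^2$ |

Reduction in the residual sum of squares obtained by regression on $X_1, X_2, \ldots, X_r$ is denoted by

$$R(\beta_1, \beta_2, \ldots, \beta_r \mid \beta_0) = \mathbf{Y}^T(I - P_{\mathbf{1}})\mathbf{Y} - \mathbf{Y}^T(I - P_X)\mathbf{Y} = \mathbf{Y}^T(P_X - P_{\mathbf{1}})\mathbf{Y}$$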

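Use Cochran's theorem or Results 4.7 and 4.8 to show that SSE is distributed independently of

$$R(\beta_1, \beta_2, \ldots, \beta_r \mid \beta_0) = SS_{model}$$

and that

$$\frac{1}{\sigma^2}R(\beta_1, \ldots, \beta_r \mid \beta_0) \sim \chi^2_{(r)}(\delta^2) \qquad \text{and} \qquad \frac{1}{\sigma^2}SSE \sim \chi^2_{(n-r-1)}$$

Then

$$F = \frac{R(\beta_1, \ldots, \beta_r \mid \beta_0)/r}{MSE} \sim F_{(r,\,n-r-1)}(\delta^2)$$

where

$$\begin{aligned}
\delta^2 &= \frac{1}{\sigma^2}\boldsymbol{\beta}^TX^T(P_X - P_{\mathbf{1}})X\boldsymbol{\beta} \\
&= \frac{1}{\sigma^2}\boldsymbol{\beta}^TX^T(P_X - I + I - P_{\mathbf{1}})X\boldsymbol{\beta} \\
&= \frac{1}{\sigma^2}\left[\boldsymbol{\beta}^TX^T(I - P_{\mathbf{1}})X\boldsymbol{\beta} - \boldsymbol{\beta}^TX^T(I - P_X)X\boldsymbol{\beta}\right] \qquad \left((I - P_X)X \text{ is a matrix of zeros}\right) \\
&= \frac{1}{\sigma^2}\boldsymbol{\beta}^TX^T(I - P_{\mathbf{1}})X\boldsymbol{\beta} \\
&= \frac{1}{\sigma^2}\boldsymbol{\beta}^TX^T(I - P_{\mathbf{1}})(I - P_{\mathbf{1}})X\boldsymbol{\beta} \\
&= \frac{1}{\sigma^2}\left[(I - P_{\mathbf{1}})X\boldsymbol{\beta}\right]^T(I - P_{\mathbf{1}})X\boldsymbol{\beta}
\end{aligned}$$

Note that $(I - P_{\mathbf{1}})X$ is

$$[(I - P_{\mathbf{1}})\mathbf{1} \mid (I - P_{\mathbf{1}})X_1 \mid \cdots \mid (I - P_{\mathbf{1}})X_r] = [\, \mathbf{0} \mid X_1 - \bar{X}_{1\cdot}\mathbf{1} \mid \cdots \mid X_r - \bar{X}_{r\cdot}\mathbf{1} \,]$$

$$\Longrightarrow \quad (I - P_{\mathbf{1}})X\boldsymbol{\beta} = \sum_{j=1}^r \beta_j(X_j - \bar{X}_{j\cdot}\mathbf{1})$$

Then, the noncentrality parameter is

$$\begin{aligned}
\delta^2 &= \frac{1}{\sigma^2}\left[\sum_{j=1}^r \beta_j^2(X_j - \bar{X}_{j\cdot}\mathbf{1})^T(X_j - \bar{X}_{j\cdot}\mathbf{1}) + \sum_{j \neq k} \beta_j\beta_k(X_j - \bar{X}_{j\cdot}\mathbf{1})^T(X_k - \bar{X}_{k\cdot}\mathbf{1})\right] \\
&= \frac{1}{\sigma^2}\,\boldsymbol{\beta}_*^T\left[\sum_{i=1}^n (\mathbf{x}_i - \bar{\mathbf{x}})(\mathbf{x}_i - \bar{\mathbf{x}})^T\right]\boldsymbol{\beta}_*
\end{aligned}$$

where

$$\boldsymbol{\beta}_* = \begin{bmatrix} \beta_1 \\ \vdots \\ \beta_r \end{bmatrix}, \qquad \bar{\mathbf{x}} = \begin{bmatrix} \bar{X}_{1\cdot} \\ \vdots \\ \bar{X}_{r\cdot} \end{bmatrix}, \qquad \mathbf{x}_i = \begin{bmatrix} X_{1i} \\ \vdots \\ X_{ri} \end{bmatrix}$$

If $\sum_{i=1}^n (\mathbf{x}_i - \bar{\mathbf{x}})(\mathbf{x}_i - \bar{\mathbf{x}})^T$ is positive definite, then the null hypothesis corresponding to $\delta^2 = 0$ is

$$H_0: \boldsymbol{\beta}_* = \mathbf{0} \quad (\text{or } \beta_1 = \beta_2 = \cdots = \beta_r = 0)$$

Reject $H_0: \boldsymbol{\beta}_* = \mathbf{0}$ if

$$F = \frac{\mathbf{Y}^T(P_X - P_{\mathbf{1}})\mathbf{Y}/r}{\mathbf{Y}^T(I - P_X)\mathbf{Y}/(n - r - 1)} > F_{(r,\,n-r-1),\,\alpha}$$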

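Sequential sums of squares (Type I sums of squares in PROC GLM or PROC REG in SAS).

Define

$$\begin{aligned}
\mathbf{X}_0 &= \mathbf{1} & P_0 &= \mathbf{X}_0(\mathbf{X}_0^T\mathbf{X}_0)^{-1}\mathbf{X}_0^T \\
\mathbf{X}_1 &= [\mathbf{1} \mid X_1] & P_1 &= \mathbf{X}_1(\mathbf{X}_1^T\mathbf{X}_1)^{-}\mathbf{X}_1^T \\
\mathbf{X}_2 &= [\mathbf{1} \mid X_1 \mid X_2] & P_2 &= \mathbf{X}_2(\mathbf{X}_2^T\mathbf{X}_2)^{-}\mathbf{X}_2^T \\
&\;\;\vdots & &\;\;\vdots \\
\mathbf{X}_r &= [\mathbf{1} \mid X_1 \mid \cdots \mid X_r] & P_r &= \mathbf{X}_r(\mathbf{X}_r^T\mathbf{X}_r)^{-}\mathbf{X}_r^T
\end{aligned}$$

Here the bold $\mathbf{X}_j$ is the matrix containing the first $j + 1$ columns of $X$, so $P_0 = P_{\mathbf{1}}$ and $P_r = P_X$.

Then

$$\begin{aligned}
\mathbf{Y}^T\mathbf{Y} &= \mathbf{Y}^TP_0\mathbf{Y} + \mathbf{Y}^T(P_1 - P_0)\mathbf{Y} + \mathbf{Y}^T(P_2 - P_1)\mathbf{Y} + \cdots + \mathbf{Y}^T(P_r - P_{r-1})\mathbf{Y} + \mathbf{Y}^T(I - P_r)\mathbf{Y} \\
&= R(\beta_0) + R(\beta_1 \mid \beta_0) + R(\beta_2 \mid \beta_0, \beta_1) + \cdots + R(\beta_r \mid \beta_0, \beta_1, \ldots, \beta_{r-1}) + SSE
\end{aligned}$$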
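Each sequential sum of squares is the drop in the residual sum of squares when one more column is added to the model, so the decomposition can be computed by fitting the nested models in order. The sketch below does this for the cement data of this section in plain Python; `solve` and `sse_for` are hypothetical helper names, and solving the normal equations directly is a simplification chosen to keep the example self-contained.

```python
# Cement data: columns are x1, x2, x3, x4, y
DATA = [
    (7, 26, 6, 60, 78.5), (1, 29, 15, 52, 74.3), (11, 56, 8, 20, 104.3),
    (11, 31, 8, 47, 87.6), (7, 52, 6, 33, 95.9), (11, 55, 9, 22, 109.2),
    (3, 71, 17, 6, 102.7), (1, 31, 22, 44, 72.5), (2, 54, 18, 22, 93.1),
    (21, 47, 4, 26, 115.9), (1, 40, 23, 34, 83.8), (11, 66, 9, 12, 113.2),
    (10, 68, 8, 12, 109.4),
]

def solve(a, b):
    """Solve a z = b by Gaussian elimination with partial pivoting."""
    n = len(b)
    m = [row[:] + [bi] for row, bi in zip(a, b)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(col + 1, n):
            factor = m[r][col] / m[col][col]
            for c in range(col, n + 1):
                m[r][c] -= factor * m[col][c]
    z = [0.0] * n
    for r in range(n - 1, -1, -1):
        z[r] = (m[r][n] - sum(m[r][c] * z[c] for c in range(r + 1, n))) / m[r][r]
    return z

def sse_for(X, y):
    """Residual sum of squares Y^T (I - P) Y for the model with matrix X."""
    p = len(X[0])
    xtx = [[sum(row[j] * row[k] for row in X) for k in range(p)] for j in range(p)]
    xty = [sum(row[j] * yi for row, yi in zip(X, y)) for j in range(p)]
    b = solve(xtx, xty)
    return sum((yi - sum(bj * xj for bj, xj in zip(b, row))) ** 2
               for row, yi in zip(X, y))

X = [[1.0, *row[:4]] for row in DATA]
y = [row[4] for row in DATA]
names = ["Intercept", "x1", "x2", "x3", "x4"]

# Start from the empty model (residual SS = Y^T Y) and add one column at a time
type1 = {}
sse_prev = sum(yi * yi for yi in y)
for j, name in enumerate(names, start=1):
    sse_j = sse_for([row[:j] for row in X], y)        # model with the first j columns
    type1[name] = sse_prev - sse_j                    # Y^T (P_j - P_{j-1}) Y
    sse_prev = sse_j
sse = sse_prev

for name in names:
    print(f"{name:<10}{type1[name]:>14.5f}")
print(f"{'SSE':<10}{sse:>14.5f}")
```

The values can be compared with the Type I SS column of the PROC REG output and with the sequential table printed by `anova(cement.out)` later in this section. Changing the order of the columns changes the individual Type I sums of squares but not their total.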

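Use Cochran's theorem to show

- these sums of squares are distributed independently of each other;
- each $\frac{1}{\sigma^2}R(\beta_i \mid \beta_0, \ldots, \beta_{i-1})$ has a chi-squared distribution with one degree of freedom.

Use Result 4.7 to show $\frac{1}{\sigma^2}SSE \sim \chi^2_{(n-r-1)}$.

Then

$$F = \frac{R(\beta_j \mid \beta_0, \ldots, \beta_{j-1})/1}{MSE} \sim F_{(1,\,n-r-1)}(\delta^2)$$

where

$$\begin{aligned}
\delta^2 &= \frac{1}{\sigma^2}\boldsymbol{\beta}^TX^T(P_j - P_{j-1})X\boldsymbol{\beta} \\
&= \frac{1}{\sigma^2}\boldsymbol{\beta}^TX^T(P_j - P_{j-1})^T(P_j - P_{j-1})X\boldsymbol{\beta} \\
&= \frac{1}{\sigma^2}\left[(P_j - P_{j-1})X\boldsymbol{\beta}\right]^T(P_j - P_{j-1})X\boldsymbol{\beta}
\end{aligned}$$

Hence, this is a test of

$$H_0: (P_j - P_{j-1})X\boldsymbol{\beta} = \mathbf{0} \qquad \text{vs} \qquad H_a: (P_j - P_{j-1})X\boldsymbol{\beta} \neq \mathbf{0}$$

Note that

$$\begin{aligned}
(P_j - P_{j-1})X &= (P_j - P_{j-1})[\, \mathbf{1} \mid X_1 \mid \cdots \mid X_{j-1} \mid X_j \mid \cdots \mid X_r \,] \\
&= [\, (P_j - P_{j-1})\mathbf{1} \mid (P_j - P_{j-1})X_1 \mid \cdots \mid (P_j - P_{j-1})X_{j-1} \mid (P_j - P_{j-1})X_j \mid \cdots \mid (P_j - P_{j-1})X_r \,] \\
&= [\, O_{n \times j} \mid (P_j - P_{j-1})X_j \mid \cdots \mid (P_j - P_{j-1})X_r \,]
\end{aligned}$$

Then

$$(P_j - P_{j-1})X\boldsymbol{\beta} = \sum_{k=j}^r \beta_k(P_j - P_{j-1})X_k = \beta_j(P_j - P_{j-1})X_j + \sum_{k=j+1}^r \beta_k(P_j - P_{j-1})X_k$$

and the null hypothesis is

$$H_0: \mathbf{0} = \beta_j(P_j - P_{j-1})X_j + \sum_{k=j+1}^r \beta_k(P_j - P_{j-1})X_k$$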

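Type II sums of squares in SAS (these are also Type III and Type IV sums of squares for regression problems).

$$R(\beta_j \mid \beta_0 \text{ and all other } \beta_k\text{'s}) = \mathbf{Y}^T(P_X - P_{-j})\mathbf{Y}$$

where

$$P_{-j} = X_{-j}(X_{-j}^TX_{-j})^{-}X_{-j}^T$$

and $X_{-j}$ is obtained by deleting the $(j + 1)$-th column of $X$.

From the previous discussion:

$$F = \frac{\mathbf{Y}^T(P_X - P_{-j})\mathbf{Y}/1}{MSE} \sim F_{(1,\,n-r-1)}(\delta^2)$$

where

$$\delta^2 = \frac{1}{\sigma^2}\boldsymbol{\beta}^TX^T(P_X - P_{-j})X\boldsymbol{\beta} = \frac{1}{\sigma^2}\beta_j^2X_j^T(P_X - P_{-j})X_j$$

This F-test provides a test of

$$H_0: \beta_j = 0 \qquad \text{vs} \qquad H_A: \beta_j \neq 0$$

if $(P_X - P_{-j})X_j \neq \mathbf{0}$.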
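Since $\mathbf{Y}^T(P_X - P_{-j})\mathbf{Y}$ is the increase in the residual sum of squares when the $(j+1)$-th column is deleted from $X$, Type II sums of squares can be obtained by refitting the model with each column removed in turn. The following plain-Python sketch applies this to the cement data of this section; `solve` and `sse_for` are hypothetical helper names, and the normal equations are solved directly only to keep the example self-contained.

```python
# Cement data: columns are x1, x2, x3, x4, y
DATA = [
    (7, 26, 6, 60, 78.5), (1, 29, 15, 52, 74.3), (11, 56, 8, 20, 104.3),
    (11, 31, 8, 47, 87.6), (7, 52, 6, 33, 95.9), (11, 55, 9, 22, 109.2),
    (3, 71, 17, 6, 102.7), (1, 31, 22, 44, 72.5), (2, 54, 18, 22, 93.1),
    (21, 47, 4, 26, 115.9), (1, 40, 23, 34, 83.8), (11, 66, 9, 12, 113.2),
    (10, 68, 8, 12, 109.4),
]

def solve(a, b):
    """Solve a z = b by Gaussian elimination with partial pivoting."""
    n = len(b)
    m = [row[:] + [bi] for row, bi in zip(a, b)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(col + 1, n):
            factor = m[r][col] / m[col][col]
            for c in range(col, n + 1):
                m[r][c] -= factor * m[col][c]
    z = [0.0] * n
    for r in range(n - 1, -1, -1):
        z[r] = (m[r][n] - sum(m[r][c] * z[c] for c in range(r + 1, n))) / m[r][r]
    return z

def sse_for(X, y):
    """Residual sum of squares Y^T (I - P) Y for the model with matrix X."""
    p = len(X[0])
    xtx = [[sum(row[j] * row[k] for row in X) for k in range(p)] for j in range(p)]
    xty = [sum(row[j] * yi for row, yi in zip(X, y)) for j in range(p)]
    b = solve(xtx, xty)
    return sum((yi - sum(bj * xj for bj, xj in zip(b, row))) ** 2
               for row, yi in zip(X, y))

X = [[1.0, *row[:4]] for row in DATA]
y = [row[4] for row in DATA]
names = ["Intercept", "x1", "x2", "x3", "x4"]
n, r = len(y), 4

sse_full = sse_for(X, y)                              # Y^T (I - P_X) Y
mse = sse_full / (n - r - 1)

type2, f_stats = {}, {}
for j, name in enumerate(names):
    X_minus_j = [row[:j] + row[j + 1:] for row in X]  # delete column j of X
    type2[name] = sse_for(X_minus_j, y) - sse_full    # Y^T (P_X - P_{-j}) Y
    f_stats[name] = type2[name] / mse                 # 1 numerator d.f.

for name in names:
    print(f"{name:<10}{type2[name]:>12.5f}{f_stats[name]:>12.5f}")
```

Each F statistic here is the square of the corresponding t value in the parameter estimates table, which is why the Type II tests and the t-tests for individual coefficients give the same p-values.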

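| Variable | Type I sums of squares | Type II sums of squares |
|---|---|---|
| $X_1$ | $R(\beta_1 \mid \beta_0) = \mathbf{Y}^T(P_1 - P_0)\mathbf{Y}$ | $R(\beta_1 \mid \text{other } \beta\text{'s}) = \mathbf{Y}^T(P_X - P_{-1})\mathbf{Y}$ |
| $X_2$ | $R(\beta_2 \mid \beta_0, \beta_1) = \mathbf{Y}^T(P_2 - P_1)\mathbf{Y}$ | $R(\beta_2 \mid \text{other } \beta\text{'s}) = \mathbf{Y}^T(P_X - P_{-2})\mathbf{Y}$ |
| $\vdots$ | $\vdots$ | $\vdots$ |
| $X_r$ | $R(\beta_r \mid \beta_0, \beta_1, \ldots, \beta_{r-1}) = \mathbf{Y}^T(P_r - P_{r-1})\mathbf{Y}$ | $R(\beta_r \mid \beta_0, \ldots, \beta_{r-1}) = \mathbf{Y}^T(P_X - P_{-r})\mathbf{Y}$ |
| Residuals | $SSE = \mathbf{Y}^T(I - P_X)\mathbf{Y}$ | $SSE = \mathbf{Y}^T(I - P_X)\mathbf{Y}$ |
| Corrected total | $\mathbf{Y}^T(I - P_{\mathbf{1}})\mathbf{Y}$ | $\mathbf{Y}^T(I - P_{\mathbf{1}})\mathbf{Y}$ |

When $X_1, X_2, \ldots, X_r$ are all uncorrelated, then

(i) $R(\beta_j \mid \beta_0 \text{ and any other } \beta\text{'s}) = R(\beta_j \mid \beta_0)$. There is only one ANOVA table.

(ii) $R(\beta_j \mid \beta_0) = \hat{\beta}_j^2\sum_{i=1}^n (X_{ji} - \bar{X}_{j\cdot})^2$

(iii) $F = \dfrac{R(\beta_j \mid \beta_0)}{MSE} \sim F_{(1,\,n-r-1)}(\delta^2)$, where $\delta^2 = \dfrac{1}{\sigma^2}\beta_j^2\sum_{i=1}^n (X_{ji} - \bar{X}_{j\cdot})^2$, provides a test of $H_0: \beta_j = 0$ versus $H_A: \beta_j \neq 0$.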

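Testable Hypothesis

For any testable hypothesis, reject $H_0: C\boldsymbol{\beta} = \mathbf{d}$ in favor of the general alternative $H_A: C\boldsymbol{\beta} \neq \mathbf{d}$ if

$$F = \frac{(C\mathbf{b} - \mathbf{d})^T[C(X^TX)^{-}C^T]^{-1}(C\mathbf{b} - \mathbf{d})/m}{\mathbf{Y}^T(I - P_X)\mathbf{Y}/(n - rank(X))} > F_{(m,\,n-rank(X)),\,\alpha}$$

where

$$m = \text{number of rows in } C = rank(C)$$

and

$$\mathbf{b} = (X^TX)^{-}X^T\mathbf{Y}$$

Confidence interval for an estimable function $\mathbf{c}^T\boldsymbol{\beta}$:

$$\mathbf{c}^T\mathbf{b} \pm t_{(n-rank(X)),\,\alpha/2}\sqrt{MSE\;\mathbf{c}^T(X^TX)^{-}\mathbf{c}}$$

Use $\mathbf{c}^T = (0\;\,0\;\cdots\;0\;\,1\;\,0\;\cdots\;0)$, with the 1 in the $j$-th position, to construct a confidence interval for $\beta_{j-1}$.

Use $\mathbf{c}^T = (1, x_1, x_2, \ldots, x_r)$ to construct a confidence interval for

$$E(Y \mid X_1 = x_1, \ldots, X_r = x_r) = \beta_0 + \beta_1x_1 + \cdots + \beta_rx_r$$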

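Prediction Intervals

Predict a future observation at $X_1 = x_1, \ldots, X_r = x_r$, i.e., predict

$$Y = \beta_0 + \beta_1x_1 + \cdots + \beta_rx_r + \epsilon$$

Estimate the conditional mean $\beta_0 + \beta_1x_1 + \cdots + \beta_rx_r$ as $b_0 + b_1x_1 + \cdots + b_rx_r$, and estimate $\epsilon$ with its mean $E(\epsilon) = 0$.

A $(1 - \alpha) \times 100\%$ prediction interval is

$$(\mathbf{c}^T\mathbf{b} + 0) \pm t_{(n-rank(X)),\,\alpha/2}\sqrt{MSE\,[1 + \mathbf{c}^T(X^TX)^{-}\mathbf{c}]}$$

where $\mathbf{c}^T = (1\;\,x_1\;\cdots\;x_r)$.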
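To show how the confidence interval for a conditional mean and the prediction interval differ only in the extra "1 +" under the square root, the sketch below evaluates both at the covariate values of the first run in the cement data of this section. The helper `solve` is a hypothetical name, and the t quantile $t_{(8),\,0.025} = 2.306$ is taken from a standard t table rather than computed, since the Python standard library has no t distribution.

```python
from math import sqrt

# Cement data: columns are x1, x2, x3, x4, y
DATA = [
    (7, 26, 6, 60, 78.5), (1, 29, 15, 52, 74.3), (11, 56, 8, 20, 104.3),
    (11, 31, 8, 47, 87.6), (7, 52, 6, 33, 95.9), (11, 55, 9, 22, 109.2),
    (3, 71, 17, 6, 102.7), (1, 31, 22, 44, 72.5), (2, 54, 18, 22, 93.1),
    (21, 47, 4, 26, 115.9), (1, 40, 23, 34, 83.8), (11, 66, 9, 12, 113.2),
    (10, 68, 8, 12, 109.4),
]

def solve(a, b):
    """Solve a z = b by Gaussian elimination with partial pivoting."""
    n = len(b)
    m = [row[:] + [bi] for row, bi in zip(a, b)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(col + 1, n):
            factor = m[r][col] / m[col][col]
            for c in range(col, n + 1):
                m[r][c] -= factor * m[col][c]
    z = [0.0] * n
    for r in range(n - 1, -1, -1):
        z[r] = (m[r][n] - sum(m[r][c] * z[c] for c in range(r + 1, n))) / m[r][r]
    return z

X = [[1.0, *row[:4]] for row in DATA]
y = [row[4] for row in DATA]
n, p = len(y), len(X[0])                              # rank(X) = p = 5 here

xtx = [[sum(row[j] * row[k] for row in X) for k in range(p)] for j in range(p)]
xty = [sum(row[j] * yi for row, yi in zip(X, y)) for j in range(p)]
b = solve(xtx, xty)
sse = sum((yi - sum(bj * xj for bj, xj in zip(b, row))) ** 2 for row, yi in zip(X, y))
mse = sse / (n - p)

T_QUANTILE = 2.306            # t_{(8), 0.025} from a t table (assumed, not computed)

c = [1.0, 7, 26, 6, 60]       # c^T = (1, x1, x2, x3, x4) for run 1
estimate = sum(ci * bi for ci, bi in zip(c, b))       # c^T b
q = sum(ci * zi for ci, zi in zip(c, solve(xtx, c)))  # c^T (X^T X)^{-1} c

se_mean = sqrt(mse * q)                               # for E(Y | x)
se_pred = sqrt(mse * (1 + q))                         # for a future Y at x

conf_int = (estimate - T_QUANTILE * se_mean, estimate + T_QUANTILE * se_mean)
pred_int = (estimate - T_QUANTILE * se_pred, estimate + T_QUANTILE * se_pred)

print(f"c'b = {estimate:.4f}, se(mean) = {se_mean:.4f}, se(pred) = {se_pred:.4f}")
print(f"95% confidence interval: ({conf_int[0]:.3f}, {conf_int[1]:.3f})")
print(f"95% prediction interval: ({pred_int[0]:.3f}, {pred_int[1]:.3f})")
```

The point estimate and `se_mean` can be compared with the Predicted Value and Std Error Mean Predict columns for observation 1 in the PROC REG output later in this section. The prediction interval is always wider than the confidence interval because it also accounts for the variance of the new error term.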

/* A SAS program to perform a regression
   analysis of the effects of the
   composition of Portland cement on the
   amount of heat given off as the cement
   hardens. Posted as cement.sas */

data set1;
  input run x1 x2 x3 x4 y;
  /* label y  = evolved heat (calories)
           x1 = tricalcium aluminate
           x2 = tricalcium silicate
           x3 = tetracalcium aluminate ferrate
           x4 = dicalcium silicate; */
  cards;
 1  7 26  6 60  78.5
 2  1 29 15 52  74.3
 3 11 56  8 20 104.3
 4 11 31  8 47  87.6
 5  7 52  6 33  95.9
 6 11 55  9 22 109.2
 7  3 71 17  6 102.7
 8  1 31 22 44  72.5
 9  2 54 18 22  93.1
10 21 47  4 26 115.9
11  1 40 23 34  83.8
12 11 66  9 12 113.2
13 10 68  8 12 109.4
run;

proc print data=set1 uniform split='*';
  var y x1 x2 x3 x4;
  label y  = 'Evolved*heat*(calories)'
        x1 = 'Percent*tricalcium*aluminate'
        x2 = 'Percent*tricalcium*silicate'
        x3 = 'Percent*tetracalcium*aluminate*ferrate'
        x4 = 'Percent*dicalcium*silicate';
run;

/* Regress y on all four explanatory
   variables and check residual plots
   and collinearity diagnostics */

proc reg data=set1 corr;
  model y = x1 x2 x3 x4 / p r ss1 ss2
                          covb collin;
  output out=set2 residual=r
                  predicted=yhat;
run;

/* Regress y on two of explanatory
   variables and check residual plots
   and collinearity diagnostics */

proc reg data=set1 corr;
  model y = x1 x2 / p r ss1 ss2
                    covb collin;
  output out=set2 residual=r
                  predicted=yhat;
run;

/* Examine smaller regression models
   corresponding to subsets of the
   explanatory variables */

proc reg data=set1;
  model y = x1 x2 x3 x4 /
        selection=rsquare cp aic
        sbc mse stop=4 best=6;
run;

/* Use the GLM procedure to identify
   all estimable functions */

proc glm data=set1;
  model y = x1 x2 x3 x4 / ss1 ss2 e1 e2 e p;
run;

                                             Percent
        Evolved     Percent     Percent   tetracalcium    Percent
         heat     tricalcium  tricalcium    aluminate    dicalcium
Obs   (calories)   aluminate   silicate      ferrate      silicate

  1      78.5          7          26            6            60
  2      74.3          1          29           15            52
  3     104.3         11          56            8            20
  4      87.6         11          31            8            47
  5      95.9          7          52            6            33
  6     109.2         11          55            9            22
  7     102.7          3          71           17             6
  8      72.5          1          31           22            44
  9      93.1          2          54           18            22
 10     115.9         21          47            4            26
 11      83.8          1          40           23            34
 12     113.2         11          66            9            12
 13     109.4         10          68            8            12

The REG Procedure
Model: MODEL1
Dependent Variable: y

Correlation

Variable        x1        x2        x3        x4         y
x1          1.0000    0.2286   -0.8241   -0.2454    0.7309
x2          0.2286    1.0000   -0.1392   -0.9730    0.8162
x3         -0.8241   -0.1392    1.0000    0.0295   -0.5348
x4         -0.2454   -0.9730    0.0295    1.0000   -0.8212
y           0.7309    0.8162   -0.5348   -0.8212    1.0000

Analysis of Variance

                            Sum of          Mean
Source              DF     Squares        Square
Model                4  2664.52051     666.13013
Error                8    47.67641       5.95955
Corrected Total     12  2712.19692

Root MSE           2.44122    R-Square    0.9824
Dependent Mean    95.41538    Adj R-Sq    0.9736
Coeff Var          2.55852

Parameter Estimates

                   Parameter    Standard
Variable     DF     Estimate       Error   t Value   Pr > |t|
Intercept     1     63.16602    69.93378      0.90     0.3928
x1            1      1.54305     0.74331      2.08     0.0716
x2            1      0.50200     0.72237      0.69     0.5068
x3            1      0.09419     0.75323      0.13     0.9036
x4            1     -0.15152     0.70766     -0.21     0.8358

Variable     DF     Type I SS    Type II SS
Intercept     1        118353       4.86191
x1            1    1448.75413      25.68225
x2            1    1205.70283       2.87801
x3            1       9.79033       0.09319
x4            1       0.27323       0.27323

Collinearity Diagnostics

                         Condition
Number    Eigenvalue         Index
  1          4.11970       1.00000
  2          0.55389       2.72721
  3          0.28870       3.77753
  4          0.03764      10.46207
  5       0.00006614     249.57825

Collinearity Diagnostics

          ---------------Proportion of Variation---------------
Number    Intercept          x1          x2          x3          x4
  1        0.000005     0.00037     0.00002     0.00021     0.00036
  2        8.812E-8     0.01004     0.00001     0.00266     0.00010
  3        3.060E-7    0.000581     0.00032     0.00159     0.00168
  4        0.000127     0.05745     0.00278     0.04569     0.00088
  5         0.99987     0.93157     0.99687     0.94985     0.99730

        Dep Var    Predicted      Std Error     Student     Cook's
Obs           y        Value   Mean Predict    Residual          D

  1     78.5000      78.4929         1.8109     0.00432      0.000
  2     74.3000      72.8005         1.4092       0.752      0.057
  3    104.3000     105.9744         1.8543      -1.054      0.303
  4     87.6000      89.3333         1.3265      -0.846      0.060
  5     95.9000      95.6360         1.4598       0.135      0.002
  6    109.2000     105.2635         0.8602       1.723      0.084
  7    102.7000     104.1289         1.4791      -0.736      0.063
  8     72.5000      75.6760         1.5604      -1.692      0.395
  9     93.1000      91.7218         1.3244       0.672      0.038
 10    115.9000     115.6010         2.0431       0.224      0.023
 11     83.8000      81.8034         1.5924       1.079      0.172
 12    113.2000     112.3007         1.2519       0.429      0.013
 13    109.4000     111.6675         1.3454      -1.113      0.108

(The printed -2 to 2 character plot of the studentized residuals is not reproduced here.)

The REG Procedure
Model: MODEL1
R-Square Selection Method

Regression Models for Dependent Variable: y

Number in                                        Variables
  Model      R-Square         AIC          SBC   in Model

    1          0.6744     58.8383     59.96815   x4
    1          0.6661     59.1672     60.29712   x2
    1          0.5342     63.4964     64.62630   x1
    1          0.2860     69.0481     70.17804   x3
------------------------------------------------------
    2          0.9787     25.3830     27.07785   x1 x2
    2          0.9726     28.6828     30.37766   x1 x4
    2          0.9353     39.8308     41.52565   x3 x4
    2          0.8470     51.0247     52.71951   x2 x3
    2          0.6799     60.6172     62.31201   x2 x4
    2          0.5484     65.0933     66.78816   x1 x3
------------------------------------------------------
    3          0.9824     24.9187     27.17852   x1 x2 x4
    3          0.9823     24.9676     27.22742   x1 x2 x3
    3          0.9814     25.6553     27.91511   x1 x3 x4
    3          0.9730     30.4953     32.75514   x2 x3 x4
------------------------------------------------------
    4          0.9824     26.8933     29.71808   x1 x2 x3 x4

This output was produced by the e option
in the model statement of the GLM procedure.
It indicates that all five regression
parameters are estimable.

The GLM Procedure

General Form of Estimable Functions

Effect        Coefficients
Intercept     L1
x1            L2
x2            L3
x3            L4
x4            L5

This output was produced by the e1 option
in the model statement of the GLM procedure.
It describes the null hypotheses that are
tested with the sequential Type I sums of
squares.

Type I Estimable Functions

              ----------------Coefficients----------------
Effect        x1            x2            x3            x4
Intercept     0             0             0             0
x1            L2            0             0             0
x2            0.6047*L2     L3            0             0
x3            -0.8974*L2    0.0213*L3     L4            0
x4            -0.6984*L2    -1.0406*L3    -1.0281*L4    L5

Type II Estimable Functions

              ----Coefficients----
Effect        x1     x2     x3     x4
Intercept     0      0      0      0
x1            L2     0      0      0
x2            0      L3     0      0
x3            0      0      L4     0
x4            0      0      0      L5

> # The commands are posted as: cement.spl
> #
> # The data file is stored under the name
> # cement.txt. It has variable names on the
> # first line. We will enter the data into
> # a data frame.

> cement<-read.table("c:\\cement.txt",header=T)
> cement
   run X1 X2 X3 X4     Y
 1   1  7 26  6 60  78.5
 2   2  1 29 15 52  74.3
 3   3 11 56  8 20 104.3
 4   4 11 31  8 47  87.6
 5   5  7 52  6 33  95.9
 6   6 11 55  9 22 109.2
 7   7  3 71 17  6 102.7
 8   8  1 31 22 44  72.5
 9   9  2 54 18 22  93.1
10  10 21 47  4 26 115.9
11  11  1 40 23 34  83.8
12  12 11 66  9 12 113.2
13  13 10 68  8 12 109.4

> # Compute correlations and round the results
> # to four decimal places
> round(cor(cement[-1]),4)
        X1      X2      X3      X4       Y
X1  1.0000  0.2286 -0.8241 -0.2454  0.7309
X2  0.2286  1.0000 -0.1392 -0.9730  0.8162
X3 -0.8241 -0.1392  1.0000  0.0295 -0.5348
X4 -0.2454 -0.9730  0.0295  1.0000 -0.8212
Y   0.7309  0.8162 -0.5348 -0.8212  1.0000

> # Create a scatterplot matrix with smooth
> # curves. Unix users should first use
> # motif( ) to open a graphics window
> points.lines <- function(x, y)
+ {
+   points(x, y)
+   lines(loess.smooth(x, y, 0.90))
+ }
> par(din=c(7,7),pch=18,mkh=.15,cex=1.2,lwd=3)
> pairs(cement[ ,-1], panel=points.lines)

[Figure: scatterplot matrix of X1, X2, X3, X4 and Y, with a loess smooth curve in each panel]

> # Use the lm( ) function to
> # fit a linear regression model
> cement.out <- lm(Y~X1+X2+X3+X4, cement)
> summary(cement.out)

Call: lm(formula = Y ~ X1+X2+X3+X4, data=cement)
Residuals:
    Min     1Q Median    3Q   Max
 -3.176 -1.674  0.264 1.378 3.936

Coefficients:
              Value Std. Error t value Pr(>|t|)
(Intercept) 63.1660    69.9338  0.9032   0.3928
         X1  1.5431     0.7433  2.0759   0.0716
         X2  0.5020     0.7224  0.6949   0.5068
         X3  0.0942     0.7532  0.1250   0.9036
         X4 -0.1515     0.7077 -0.2141   0.8358

Residual standard error: 2.441 on 8 d.f.
Multiple R-Squared: 0.9824
F-statistic: 111.8 on 4 and 8 degrees of freedom,
the p-value is 4.707e-007

Correlation of Coefficients:
   (Intercept)     X1     X2     X3
X1     -0.9678
X2     -0.9978 0.9510
X3     -0.9769 0.9861 0.9624
X4     -0.9983 0.9568 0.9979 0.9659

> anova(cement.out)
Analysis of Variance Table

Response: Y

Terms added sequentially (first to last)
      Df Sum of Sq  Mean Sq  F Value  Pr(F)
   X1  1  1448.754 1448.754 243.0978 0.0000
   X2  1  1205.703 1205.703 202.3144 0.0000
   X3  1     9.790    9.790   1.6428 0.2358
   X4  1     0.273    0.273   0.0458 0.8358
Resid  8    47.676    5.960

> # Create a function to evaluate an orthogonal
> # projection matrix. Then create a function
> # to compute type II sums of squares.
> # This uses the ginverse( ) function

> #=======================================
> # project( )
> #--------------
> # calculate orthogonal projection matrix
> #=======================================
> project <- function(X)
+ {X%*%ginverse(crossprod(X))%*%t(X)}
> #=======================================

> #========================================
> # typeII.SS( )
> #------------------
> # calculate Type II sum of squares
> #
> # input lmout = object made by the
> #               lm( ) function
> #       y     = dependent variable
> #========================================
> typeII.SS <- function(lmout,y)
+ {
+ # generate the model matrix
+   model <- model.matrix(lmout)
+ # create list of parameter names
+   par.name <- dimnames(model)[[2]]
+ # compute number of parameters
+   n.par <- dim(model)[2]
+ # Compute residual mean square
+   SS.res <- deviance(lmout)
+   df2    <- lmout$df.resid
+   MS.res <- SS.res/df2
+   result <- NULL     # store results
+
+ # Compute Type II SS
+   for (i in 1:n.par) {
+     A <- project(model)-project(model[,-i])
+     SS.II <- t(y) %*% A %*% y
+ # d.f. = rank of full model minus rank of
+ # the model with the i-th column deleted
+     df1 <- qr(project(model))$rank -
+            qr(project(model[ ,-i]))$rank
+     MS.II <- SS.II/df1
+     F.stat <- MS.II/MS.res
+     p.val <- 1-pf(F.stat,df1,df2)
+     temp <- cbind(df1,SS.II,MS.II,F.stat,p.val)
+     result <- rbind(result,temp)
+   }
+
+   result<-rbind(result,c(df2,SS.res,MS.res,NA,NA))
+   dimnames(result)<-list(c(par.name,"Residual"),
+     c("Df","Sum of Sq","Mean Sq","F Value","Pr(F)"))
+   cat("Analysis of Variance
+     (TypeII Sum of Squares) \n")
+   round(result,6)
+ }
> #==========================================

> typeII.SS(cement.out, cement$Y)
Analysis of Variance (TypeII Sum of Squares)
        Df Sum of Sq   Mean Sq  F Value    Pr(F)
(Inter)  1  4.861907  4.861907 0.815818 0.392790
     X1  1 25.682254 25.682254 4.309427 0.071568
     X2  1  2.878010  2.878010 0.482924 0.506779
     X3  1  0.093191  0.093191 0.015637 0.903570
     X4  1  0.273229  0.273229 0.045847 0.835810
  Resid  8 47.676412  5.959551       NA       NA

> # Venables and Ripley have supplied functions
> # studres( ) and stdres( ) to compute
> # studentized and standardized residuals.
> # Use the library( ) function to attach the
> # MASS library before using these functions.

> library(MASS)
> cement.res <- cbind(cement$Y,
+                     cement.out$fitted,
+                     cement.out$resid,
+                     studres(cement.out),
+                     stdres(cement.out))
> dimnames(cement.res) <- list(cement$run,
+     c("Response","Predicted","Residual",
+       "Stud. Res.","Std. Res."))
> round(cement.res,4)

   Response Predicted Residual Stud. Res. Std. Res.
 1     78.5   78.4929   0.0071     0.0040    0.0043
 2     74.3   72.8005   1.4995     0.7299    0.7522
 3    104.3  105.9744  -1.6744    -1.0630   -1.0545
 4     87.6   89.3333  -1.7333    -0.8291   -0.8458
 5     95.9   95.6360   0.2640     0.1264    0.1349
 6    109.2  105.2635   3.9365     2.0324    1.7230
 7    102.7  104.1289  -1.4289    -0.7128   -0.7358
 8     72.5   75.6760  -3.1760    -1.9745   -1.6917
 9     93.1   91.7218   1.3782     0.6472    0.6721
10    115.9  115.6010   0.2990     0.2100    0.2237
11     83.8   81.8034   1.9966     1.0919    1.0790
12    113.2  112.3007   0.8993     0.4061    0.4291
13    109.4  111.6675  -2.2675    -1.1326   -1.1131

> # Produce plots for model diagnostics
> # including Cook's D.
> par(mfrow=c(3,2))
> plot(cement.out)

[Figure: six diagnostic plots for the fit Y ~ X1 + X2 + X3 + X4: residuals vs. fitted values; sqrt(abs(residuals)) vs. fitted values; Y vs. fitted values; residuals vs. quantiles of the standard normal; residual-fit spread plot (fitted values and residuals vs. f-value); Cook's distance vs. index. Observations 6, 8 and 13 are labeled in the residual plots.]

> # Search for a simpler model
> cement.stp <- step(cement.out,
+     scope=list(upper = ~X1 + X2 + X3 + X4,
+                lower = ~ 1), trace=F)

> cement.stp$anova
Stepwise Model Path
Analysis of Deviance Table

Initial Model:
Y ~ X1 + X2 + X3 + X4

Final Model:
Y ~ X1 + X2

    Step Df Deviance Resid. Df Resid. Dev      AIC
1                            8   47.67641 107.2719
2   - X3  1 0.093191         9   47.76960  95.4460
3   - X4  1 9.970363        10   57.73997  93.4973