Using qualitative and mixed methods in (rapid) evaluations


Michael Woolcock

Lead Social Development Specialist

Development Research Group, World Bank

Santo Domingo

November 14, 2011

Primary source material

• Bamberger, Michael, Vijayendra Rao and Michael Woolcock (2010) “Using Mixed Methods in Monitoring and Evaluation: Experiences from International Development”, in Abbas Tashakkori and Charles Teddlie (eds.) Handbook of Mixed Methods (2nd revised edition) Thousand Oaks, CA: Sage Publications, pp. 613-641

• Barron, Patrick, Rachael Diprose and Michael Woolcock (2011) Contesting Development: Participatory Projects and Local Conflict Dynamics in Indonesia New Haven: Yale University Press

• Woolcock, Michael (2009) ‘Toward a Plurality of Methods in Project Evaluation: A Contextualized Approach to Understanding Impact Trajectories and Efficacy’ Journal of Development Effectiveness 1(1): 1-14

Overview

• What is a ‘rapid assessment’?

• The limits of rapid assessment

• Ten reasons to use qualitative methods

• Three challenges in evaluation:

– Allocating development resources

– Assessing project effectiveness (in general)

– Assessing complex ‘social’ projects (in particular)

• Discussion of options, strategies for assessing projects using mixed methods

• Some examples of mixed methods evaluations

What is a rapid assessment?

• An evaluation done when time and resources are highly constrained

• Same principles apply as for comprehensive evaluations…

– But, if anything, need to be more skilled!

• Strategy likely to entail deploying a range of methods and tools

– So need to be conversant across disciplines

• Setting reasonable expectations is crucial

– As is anticipating political pressures for certain results

The limits of rapid assessments

• Better than nothing…

• …but ‘quick and dirty’ risks being just that

• Difficult to discern nature/extent of impact trajectory

• Difficult to draw strong causal inference

• Primacy effects: initial ‘impressions’ endure (perhaps very erroneously)

• Best as complement/prelude to, not substitute for, comprehensive evaluation

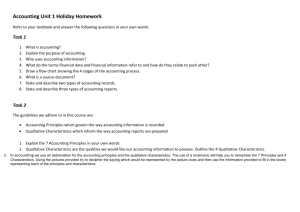

Ten Reasons to Use Qualitative Methods in (Rapid) Assessments

1. Understanding Political, Social Change

• ‘Process’ often as important as ‘product’

• Modernization of rules, social relations, meaning systems

2. Examining Dynamics (not just ‘Demographics’) of Group Membership

• How are boundaries defined, determined? How are leaders determined?

3. Accessing Sensitive Issues and Stigmatized/Marginalized Groups

• E.g., conflict and corruption; sex workers

4. Explaining Context Idiosyncrasies

• Beyond “context matters” to understanding how and why, at different units of analysis

• ‘Contexts’ not merely “out there” but “in here”; the Bank produces legible contexts

5. Unpacking Understandings of Concepts and (‘Fixed’) Categories

• Surveys assume everyone understands questions and categories the same way; do they?

• Qualitative methods can be used to correct and/or complement orthodox surveys

6. Facilitating Researcher-Respondent Interaction

• Enhance two-way flow of information

• Cross-checking; providing feedback

7. Exploring Alternative Approaches to Understanding ‘Causality’

• Econometrics: robustness tests on large N datasets; controlling for various contending factors

• History: single/rare event processes

• Anthropology: deep knowledge of contexts

• Exploring inductive approaches

• Cf. ER doctors, courtroom lawyers, solving jigsaws

8. Observing ‘Unobservables’

• Project impact not just a function of easily measured factors; unobserved factors—such as motivation, political ties—also important

9. Exploring Characteristics of ‘Outliers’

• Not necessarily ‘noise’ or ‘exceptional’; can be highly instructive (cf. illness informs health)

10. Resolving Apparent Anomalies

• Nice when inter- and intra-method results align, but sometimes they don’t; who/which is ‘right’?

Three challenges

• How to allocate development resources?

• How to assess project effectiveness in general?

• How to assess complex social development projects in particular?

Allocating development resources

• How to allocate finite resources to projects believed likely to have a positive development impact?

• Allocations made for good and bad reasons, only a part of which is ‘evidence-based’…

– … but most of which is ‘theory-based’, i.e., done because of an implicit (if not explicit) belief that Intervention A will ‘cause’ Impact B in Place C net of Factors D and E for Reasons F and G

• E.g., micro-credit will raise the income of villagers in Flores, independently of their education and wealth, because it enhances their capacity to respond to shocks (floods, illness) and enables larger-scale investment in productive assets (seeds, fertilizer)


• Imperatives of large development agencies strongly favor one-size-fits-all policy solutions (despite protestations to the contrary!). An ideal project yields…

– predictable, readily-measurable, quick, photogenic, noncontroversial, context-independent results

• roads, electrification, immunization

• Want ‘best practices’ that ‘work’ that can be readily scaled up and replicated

• Projects that diverge from this structure enter the resource allocation game at a distinct disadvantage…

– … but the obligation to demonstrate impact (rightly) remains; just need to enter the fray well armed

• empirically, theoretically, strategically

Key principles

• Ask interesting and important questions, then assemble the best combination of methods to answer them

– Not, “What questions can I answer with this data?”

– Not, “I don’t have a randomized design, so therefore I can’t say anything defensible”

• Generate data to help projects ‘learn’, in real time

– Be useful, here and now

– Make ‘M’ as cool as ‘E’

• Help to more carefully identify the conditions under which given interventions ‘work’

– Individual methods, per se, are not inherently ‘rigorous’; they become so to the extent they appropriately match the problems they confront, the constraints they overcome

– Focus on understanding SD as much as determining LATE

How to Assess Project Effectiveness?

• Need to disentangle the effect of a given intervention over and above other factors occurring simultaneously

– Distinguishing between the ‘signal’ and ‘noise’

• Is my job creation program reducing unemployment, or is it just the booming economy?

• An intervention itself may have many components

– TTLs are most immediately concerned about which aspect is the most important, or the binding constraint

– (Important as this is, it is not the same thing as assessing impact)

• Need to be able to make defensible causal claims about project efficacy even (especially) when apparently ‘rigorous’ econometric methods aren’t suitable/available

– Thus need to change both the terms and content of debate


Making knowledge claims in project evaluation and development research

• Construct validity

– How well does my instrument assess the underlying concepts (‘poverty’, ‘participation’, ‘conflict’, ‘empowerment’)?

• Internal validity

– How well have I addressed various sources of bias (most notably selection effects) influencing the relationship between IV and DV?

• i.e., what is my identification strategy?

• External validity

– How well can I extrapolate my findings? If my project works ‘here’, will it also work ‘there’? If it works with ‘them’, will it work with ‘these’? Will bigger be better?

We observe an outcome indicator…

[Figure: outcome $Y$ against time; the intervention begins at $t = 0$, when the indicator is at $Y_0$]

…and its value rises after the program

[Figure: the observed outcome rises from $Y_0$ at $t = 0$ to $Y_1$ (observed) at $t = 1$]

However, we need to identify the counterfactual (i.e., what would have happened otherwise)…

[Figure: from $Y_0$ at $t = 0$, the observed path reaches $Y_1$ and the counterfactual path reaches $Y_1^{*}$ at $t = 1$]

… since only then can we determine the impact of the intervention

$$\text{Impact} = Y_1 - Y_1^{*}$$
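The distinction between the observed change and the impact can be made concrete with a minimal sketch. All numbers are hypothetical, and the counterfactual Y1* is approximated here by the change seen in a comparison area (a simple difference-in-differences under a parallel-trends assumption). That choice is an assumption made for illustration, not a method prescribed in these slides.

```python
def before_after_change(y0: float, y1: float) -> float:
    """Naive change in the outcome, Y1 - Y0 (this is not the impact)."""
    return y1 - y0


def impact(y1_observed: float, y1_counterfactual: float) -> float:
    """Impact as defined above: Y1 - Y1*."""
    return y1_observed - y1_counterfactual


def counterfactual_from_comparison(y0: float, c0: float, c1: float) -> float:
    """Assumed counterfactual: without the project, the project area would
    have changed by the same amount as the comparison area."""
    return y0 + (c1 - c0)


# Hypothetical employment rates (percent) before and after a jobs program.
y0, y1 = 60.0, 70.0  # project area
c0, c1 = 58.0, 65.0  # comparison area; a booming economy lifts both

y1_star = counterfactual_from_comparison(y0, c0, c1)
naive = before_after_change(y0, y1)
estimated_impact = impact(y1, y1_star)

print(f"Observed change: {naive}")
print(f"Counterfactual Y1*: {y1_star}")
print(f"Estimated impact: {estimated_impact}")
```

In this illustration most of the observed rise would have happened anyway, which is the 'booming economy' problem raised earlier; the estimate is only as credible as the comparison area chosen.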

Why are ‘complex’ interventions so hard to evaluate? A simple example

• You are the inventor of ‘BrightSmile’, a new toothpaste that you are sure makes teeth whiter and reduces cavities without any harmful side effects. How would you ‘prove’ this to public health officials and (say) Colgate?


• Hopefully (!), you would be able to:

– Randomly assign participants to a ‘treatment’ and ‘control’ group (and then have them switch after a certain period); make sure both groups brushed the same way, with the same frequency, using the same amount of paste and the same type of brush; ensure nobody (except an administrator, who did not do the data analysis) knew who was in which group
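The assignment step in this design can be sketched in a few lines. The participant IDs, group sizes and seed are hypothetical, and the sketch covers only random assignment and the later switch; standardizing brushing behavior and blinding are logistical tasks that code does not solve.

```python
import random


def assign_groups(participants, seed=0):
    """Randomly split participants into two groups of (near) equal size."""
    rng = random.Random(seed)  # fixed seed so the assignment can be audited
    shuffled = list(participants)
    rng.shuffle(shuffled)
    half = len(shuffled) // 2
    return {"treatment": shuffled[:half], "control": shuffled[half:]}


def crossover(groups):
    """Second period: the two groups switch roles."""
    return {"treatment": groups["control"], "control": groups["treatment"]}


participants = [f"P{i:02d}" for i in range(1, 21)]  # hypothetical IDs
period_1 = assign_groups(participants, seed=42)
period_2 = crossover(period_1)

print(period_1["treatment"])
print(period_2["treatment"])
```

In a blinded trial, only the administrator would hold this mapping; analysts would see coded group labels.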

Demonstrating ‘impact’ of BrightSmile vs. SD projects

• Enormously difficult—methodologically, logistically and empirically—to formally identify ‘impact’; equally problematic to draw general ‘policy implications’, especially for other countries

• Prototypical “complex” CDD/J4P project:

– Open project menu: unconstrained content of intervention

– Highly participatory: communities control resources and decision-making

– Decentralized: local providers and communities given high degree of discretion in implementation

– Emphasis on building capabilities and the capacity for collective action

– Context-specific; project is (in principle) designed to respond to and reflect local cultural realities

– Project’s impact may be ‘non-additive’ (e.g., stepwise, exponential, high initially then tapering off…)


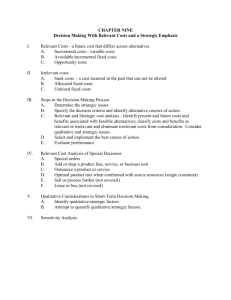

‘Complexity’ and Evaluation

[Figure: a spectrum of intervention types, running from ‘Simple’ to ‘Chaotic’]

• ‘Simple’: nets, pills, roads

• ‘Complicated’: agriculture, microcredit

• ‘Complex’: education, health

• ‘Chaotic’: local justice reform

Dimensions of the spectrum (from the ‘Simple’ end to the ‘Chaotic’ end):

• Theory (predictive precision, cumulative knowledge, subject/object gap): High → Low

• Mechanisms (# causal pathways, # of ‘people-based’ transactions, # feedback loops): Few → Many

• Outcomes (plausible range, measurement precision): Narrow → Wide

How does J4P work over time? (or, what is its ‘functional form’?)

[Figure: ten candidate impact trajectories, panels A-J, each drawn against time]

• Panels A-D: ‘Governance’?; CCTs?; ‘AIDS awareness’?; Bridges?

• Panels E-H: Unintended consequences?; Shocks? (‘Impulse response function’); ‘Empowerment’?; Land titling?

• Panels I-J: Unknown… Unknowable?
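Why the functional form matters for a rapid assessment can be shown with a small sketch. The four shapes, the ten-period horizon and the measurement dates below are hypothetical stand-ins chosen for illustration; they are not estimates for J4P or any other project.

```python
import math


def linear(t: float) -> float:
    """Impact accrues at a constant rate."""
    return t / 10


def step(t: float) -> float:
    """No visible impact until a threshold is crossed, then a jump."""
    return 1.0 if t >= 6 else 0.0


def j_curve(t: float) -> float:
    """Things get worse before they get better."""
    s = t / 10
    return 2 * s * (s - 0.5)


def tapering(t: float) -> float:
    """High initial gains that level off."""
    return 1 - math.exp(-t / 2)


TRAJECTORIES = {
    "linear": linear,
    "step": step,
    "j_curve": j_curve,
    "tapering": tapering,
}


def snapshot(t: float) -> dict:
    """Measured impact of each hypothetical project at time t."""
    return {name: round(f(t), 2) for name, f in TRAJECTORIES.items()}


early = snapshot(2)   # e.g. a rapid assessment soon after launch
late = snapshot(10)   # e.g. an evaluation at the end of the horizon

print("early:", early)
print("late: ", late)
```

All four hypothetical projects end up at roughly the same level, yet an early snapshot would rank them very differently, and would judge the J-curve project harmful.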

So, what can we do when…

• Inputs are variables (not constants)?

– Facilitation/participation vs. tax cuts (seeds, pills, etc.)

– Teaching vs. text books

– Therapy vs. medicine

• Adapting to context is an explicit, desirable feature?

– Each context/project nexus is thus idiosyncratic

• Time, resources are very limited?

• Outcomes are inherently hard to define and measure?

– E.g., empowerment, collective action, conflict mediation, social capital


Use mixed methods

• Combinations of methods to complement strengths and weaknesses of each

• Understanding context, process

• Enhancing construct, internal and external validity

– Scaling up, replication

• Especially as it pertains to making causal claims

– Econometrics vs history vs anthropology vs law

• Link to explicit theory of change

Other uses for Mixed Methods

1. When existing time and resources preclude doing or using formal survey/census data

• Examples: St Lucia and Colombia

2. When it’s unclear what “intervention” might be responsible for observed outcomes

– That is, no clear ex ante hypotheses; working inductively from matched comparison cases

• Examples:

– Putnam (1993) on regional governance in Italy

– Mahoney (2010) on governance in Central America

– Collins (2001) on “good to great” US companies

– Varshney (2002) on sources of ethnic violence in India


Practical examples (1)

1. Poverty in Guatemala (GUAPA)

– ‘Parallel’

– Quan: expanded LSMS

• first social capital module

• large differences by region, gender, income, ethnicity

• pervasive elite capture

– Qual: 10 villages (5 different ethnic groups)

• perceptions of exclusion, access to services

• fear of reprisal, of children being stolen

• legacy of shocks (political and natural)

• links to LSMS data

Practical examples (2)

2. Poverty in Delhi slums (Jha, Rao and Woolcock 2007)

– ‘Sequential’

– Qual: 4 migrant communities

• near, far, recent, long-term

– Quan: 800 randomly selected representative households

– From survival to mobility

• role of norms (sharing, status) and networks (kinship, politics)

• housing, employment transitions

• property rights

– Understanding ‘governance’

• managing collective action

• crucial role of service provision


Practical examples (3)

3. ‘Justice for the Poor’ Initiative

– Origins in Indonesia

• Draws on the approach and findings from large local conflict study

– Integrated qualitative and quantitative approach

– Results show importance of understanding

• Rules of the game (meta-rules)

• Dynamics of difference (politics of ‘us’-‘them’ relations)

• Efficacy of intermediaries (legitimacy, enforceability)

• Extension to Cambodia…

– Research on collective disputes (e.g., land), to inform IDA grant in 2007

• …and now into Africa and East Asia

– Sierra Leone, Kenya, Vanuatu, East Timor, PNG…


J4P: Core Research Design

• Enormous investment in recruiting, training, keeping local field staff

• Training centers on techniques, ethics, data management and analysis

• Where possible, use existing quantitative data sources to (a) complement qualitative work, and (b) help with sampling

• Sampling based on basic comparative method:

– Maximum difference between contexts

– Focus on outliers (‘exceptions to the rule’)

• Rough rule of thumb: analysis takes three times as long as data collection

– Analysis can’t be “outsourced”: research team needs to be involved at all stages
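The sampling logic above (maximum difference between contexts, plus a focus on outliers) can be sketched using existing quantitative data. The district names, scores and the simple least-squares fit are hypothetical illustrations, not the procedure actually used in the J4P research.

```python
from statistics import mean

# Hypothetical districts: (context capacity score, outcome indicator)
districts = {
    "A": (1.0, 2.0),
    "B": (2.0, 4.1),
    "C": (3.0, 6.0),
    "D": (4.0, 3.0),
    "E": (5.0, 10.0),
}


def max_difference_pair(data):
    """Pick the two sites that differ most on the context score."""
    ranked = sorted(data, key=lambda name: data[name][0])
    return ranked[0], ranked[-1]


def largest_outlier(data):
    """Pick the site furthest from a least-squares line of outcome on context."""
    xs = [x for x, _ in data.values()]
    ys = [y for _, y in data.values()]
    x_bar, y_bar = mean(xs), mean(ys)
    slope = sum((x - x_bar) * (y - y_bar) for x, y in data.values()) / sum(
        (x - x_bar) ** 2 for x in xs
    )
    intercept = y_bar - slope * x_bar
    residuals = {
        name: y - (intercept + slope * x) for name, (x, y) in data.items()
    }
    return max(residuals, key=lambda name: abs(residuals[name]))


contrast_sites = max_difference_pair(districts)
exception_site = largest_outlier(districts)

print("Maximum-difference pair:", contrast_sites)
print("Exception to the rule:", exception_site)
```

The survey data only nominates candidate sites; deciding whether an 'exception to the rule' is instructive still requires the qualitative fieldwork.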


Practical examples (4)

• Assessing the Kecamatan Development Program (KDP, now PNPM) in Indonesia, the world’s largest CDD program

[Book cover: Contesting Development: Participatory Projects and Local Conflict Dynamics in Indonesia, by Patrick Barron, Rachael Diprose and Michael Woolcock. Yale University Press, 2011]

Summary of methods used in Barron, Diprose and Woolcock (2011)

[Figure: methods, arranged along a ‘Depth’ axis: PODES, GDS; Case Studies; Key Informant Survey; Newspaper Analysis]

[Figure labels, type of impact: Forums (places); Facilitators (people); Group Relations; Behavioral; Normative]

Summary of findings

[Table: program impact ratings (from -- to +++) cross-tabulated by Context Capacity (Low, High) and Program Functionality (Low, High). Ratings in extracted order: -- ++ --* 0 0 0 0 ++ 0 + 0 +++ 0 0 +++ + 0 0 + +++]

Implications for policy and practice

• Non-linear trajectories of change

– J-curves, step-functions, ?? (not a straight line)

• Social relations

– As resources, as constraints

– Virtues and limits of traditional dispute resolution

• Capacity to engage

– Facilitators as street-level diplomats (“Habermasian bureaucrats”)

– Low capacity a donor problem as much as a client ‘gap’

• State-society relations, “good governance” a product of

– Good struggles

• ‘Interim institutions’ forged, legitimated through equitable contests

– Good failures

• Learning organizations as platforms of innovation, feedback, accountability

• Tolerating, rewarding lots of experiments (many won’t work)

Concluding thoughts

• The virtues and limits of measurement

– Tension between simplifying versus complicating reality

• Triangulation

– Integrating more data, better data, more diverse data as “substitutes” and “complements”

• Surveys as tool for adaptation and guidance

– Not prescription for uniformity or control

– One size (literally) does not fit all

– Encouraging comparability across time and space
