Document

advertisement

exercise in the previous class

Y1

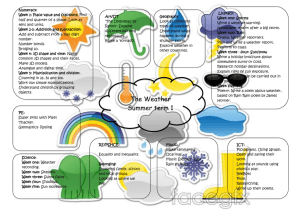

sunny rain

12

sunny 45

X

15

28

rain

Y2

sunny rain

57

sunny 0

X

43

0

rain

Q1: Compute P(X=Y1) and P(X=Y2): P(X=Y1) = 0.73 and P(X=Y2) = 0

Q2:Compute I(X; Y1) and I(X; Y2): H(X) =ℋ 0.57 = 0.986 bit

to compute H(X|Y1), determine some probabilities;

Y1

P(Y1=sunny)= 0.6, P(Y1=rain)=0.4

P(X|Y1) sunny rain H(X|Y1=sunny) = ℋ 0.75 = 0.811

sunny 0.75 0.30 H(X|Y1=rain) = ℋ 0.30 = 0.881

X

0.25 0.70 H(X|Y1) = 0.6×0.811+0.4×0.881=0.839

rain

I(X; Y1) = H(X) – H(X|Y1) = 0.986 – 0.839= 0.147 bit

1

exercise in the previous class (cnt’d)

Y1

sunny rain

12

sunny 45

X

15

28

rain

Y2

sunny rain

57

sunny 0

X

43

0

rain

Q2:Compute I(X; Y1) and I(X; Y2): H(X) =ℋ 0.57 = 0.986 bit

to compute H(X|Y2), determine some probabilities;

Y2

P(Y2=sunny)= 0.43, P(Y2=rain)=0.57

P(X|Y2) sunny rain H(X|Y2=sunny) = ℋ 0 = 0

1

sunny 0

H(X|Y2=rain) = ℋ 1 = 0

X

1

0

rain

H(X|Y2) = 0.43×0+0.57×0=0

I(X; Y2) = H(X) – H(X|Y2) = 0.986 – 0= 0.986 bit

Q3: Which is the better forecasting?

Y2 gives more information.

2

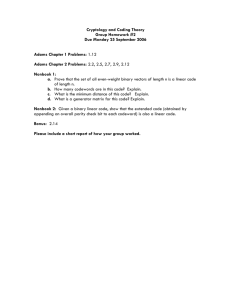

chapter 2:

compact representation of information

3

the purpose of chapter 2

We learn how to encode symbols from information source.

source coding

data compression

0101101

encoder

source

the purpose of source encoding:

to give representations which are good for communication

to discard (捨てる) redundancy (冗長性)

We want a source coding scheme which gives ...

as precise (正確) encoding as possible

as compact encoding as possible

4

plan of the chapter

basic properties needed for source coding

uniquely decodable

immediately decodable

Huffman code

construction of Huffman code

today

extensions of Huffman code

theoretical limit of the “compression”

related topics

5

words and terms

Meanwhile, we consider symbol-by-symbol encodings only.

M...the set of symbols generated by an information source.

For each symbol in M, associate a sequence (系列) over {0, 1}.

codewords (符号語): sequences associated to symbols in M

code (符号): the set of codewords

alphabet: {0, 1} in this case... binary code

M

C

sunny 00

cloudy 010

rainy 101

three codewords; 00, 010 and 101

code C = {00, 010 and 101}

011 is NOT a codeword, for example

6

encoding and decoding

encode ... to determine the codeword for a given symbol

decode ... to determine the symbol for a given codeword

sunny

cloudy

rainy

encode

decode

00

010

101

encode = 符号化

decode = 復号(化)

NO separation symbols between codewords;

010 00 101 101 ... NG,

01000101101 ... OK

Why?

{0, 1 and “space”} ... the alphabet have three symbols, not two

7

uniquely decodable codes

A code must be uniquely decodable (一意復号可能).

Different symbol sequences are encoded to different 0-1

sequences.

uniquely decodable codewords are all different,

but the converse (逆) does not hold in general.

a1

a2

a3

a4

C1

00

10

01

11

yes

C2

0

01

011

111

yes

C3

0

10

11

01

no

C4

0

10

11

0

no

with the code C3...

a1 a3 a1

0110

a4 a2

8

more than uniqueness

consider a scenario of using C2...

a1, a4, a4, a1 is encoded to 01111110.

The 0-1 sequence is transmitted by 1 bit/sec.

When does the receiver find that

the first symbol is a1?

a1

a2

a3

a4

C1

00

10

01

11

C2

0

01

011

111

seven seconds later, the receiver obtains 0111111:

if 0 comes next, then 0 - 111 - 111 - 0 a1, a4, a4, a1

if 1 comes next, then 01 - 111 - 111 a2, a4, a4

We cannot finalize the first symbol even in the seven seconds later.

buffer to save data, latency (遅延) of decoding...

9

immediately decodable codes

A code must be uniquely decodable, and if possible,

it should be immediately decodable (瞬時復号可能).

Decoding is possible without looking ahead the sequence.

If you find a codeword pattern, then decode it immediately.

important property from an engineering viewpoint.

formally writing...

If 𝒔 ∈ 0,1 ∗ is written as 𝑐1 𝒔𝟏 = 𝒔 with 𝑐1 ∈ 𝐶 and 𝒔1 ∈ 0,1 ∗ ,

then there is no 𝑐2 ∈ 𝐶 and 𝒔2 ∈ 0,1 ∗ such that 𝑐2 𝒔𝟐 = 𝒔.

c1

s1

≠

c2

s2

10

prefix condition

If a code is NOT immediately decodable, then there is 𝒔 ∈ 0,1

such that 𝒔 = 𝑐1 𝒔𝟏 = 𝑐2 𝒔𝟐 with different 𝑐1 and 𝑐2 .

c1

s1

=

c2

s2

∗

the codeword 𝑐1 is a prefix (語頭) of 𝑐2

(c1 is the same as the beginning part of c2)

Lemma:

A code C is immediately decodable if and only if

no codeword in C is a prefix of other codewords.

(prefix condition, 語頭条件)

a1

a2

a

“0” is a prefix of “01” and “011” 3

“01” is a prefix of “011” a4

C2

0

01

011

111

11

break: prefix condition and user interface

The prefix condition is important in engineering design.

bad example: strokes for character writing on Palm PDA

graffiti (ver. 1)

basically one stroke only

graffiti (ver. 2)

some needs of two strokes

prefix condition violated

“– –”, and “=”, “– 1” and “+”

12

how to achieve the prefix condition

easy ways to construct codes with the prefix condition:

let all codewords have the same length

put a special pattern at the end of each codeword

C = {011, 1011, 01011, 10011} ... “comma code”

... too straightforward

select codewords by using a tree structure (code tree)

for binary codes, we use binary trees

for k-ary codes, we use trees with degree k

a code tree

with degree 3

13

construction of codes (k-ary case)

how to construct a k-ary code with M codewords

1.

construct a k-ary tree T with M leaf nodes

2.

for each branch (枝) of T, assign a label in {0, ..., k – 1}

sibling (兄弟) branches cannot have the same label

3.

for each of leaf nodes of T, traverse T from the root to the leaf,

with concatenating (連接する) labels on branches

the obtained sequence is the codeword of the node

14

example

construct a binary code with four codewords

Step 1

Step 2

0

0

0

1

0

1

Step 3

0

1

1

0

1

1

00

01

10

11

the constructed code is {00, 01, 10, 11}

15

example (cnt’d)

other constructions;

we can choose different trees, different labeling...

0

1

0

1

0

1

0

C1={0, 10, 110, 111}

0

1

1

0

0

C2={0, 11, 101, 100}

1

1

0

0

1

0

1

1 0

C3={01, 000, 1011, 1010}

The prefix condition is always guaranteed.

Immediately decodable codes are constructed.

16

the “best” among immediately decodable codes

0

1

0

1

0

1

C1={0, 10, 110, 111}

0

1

0

1

0

1

0 1 0

C3={01, 000, 1011, 1010}

C1 seems to give more compact representation than C3.

codeword length = [1, 2, 3, 3]

Can we construct more compact immediately decodable codes?

codeword length = [1, 1, 1, 1]?

codeword length = [1, 2, 2, 3]?

What is the criteria (基準) ?

codeword length = [2, 2, 2, 3]?

17

Kraft’s inequality

Theorem:

A) If a k-ary code {c1, ..., cM} with |ci| = li is immediately decodable,

then 𝑘 −𝑙1 + ⋯ + 𝑘 −𝑙𝑀 ≤ 1 (Kraft’s inequality) holds.

B) If 𝑘 −𝑙1 + ⋯ + 𝑘 −𝑙𝑀 ≤ 1, then we can construct

a k-ary immediately decodable code {c1, ..., cM} with |ci| = li.

proof omitted in this class ... use results of graph theory

[trivia] The result is given in the Master’s thesis of L. Kraft.

18

back to the examples

Can we construct more compact immediately decodable codes?

codeword length = [1, 2, 2, 3]?

…2−1

+

2−2

+

2−2

+ 2−3

9

8

= >1

We cannot construct an immediately decodable code.

codeword length = [2, 2, 2, 3]?

7

8

…2−2 + 2−2 + 2−2 + 2−3 = < 1

We can construct an immediately decodable code,

by simply constructing a code tree....

19

to the next step

basic properties needed for source coding

uniquely decodable

immediately decodable

Huffman code

construction of Huffman code

today

extensions of Huffman code

theoretical limit of the “compression”

related topics

20

the measure of efficiency

We want to construct a good source coding scheme.

easy to use ... immediately decodable

efficient ... what is the efficiency?

We try to minimize...

the expected length of a codeword for representing one symbol:

𝑀

symbol probability codeword length

𝑝𝑖 𝑙𝑖

a1

p1

c1

l1

𝑖=1

a2

p2

c2

l2

average codeword length

:

:

:

:

aM

pM

cM

lM

21

computing the average codeword length

symbol probability C1 C2 C3

a1

0.4

0 111 00

a2

0.3

10 110 01

a3

0.2

110 10 10

a4

0.1

111 0 11

C1: 0.4×1+ 0.3×2+ 0.2×3+ 0.1×3 = 1.9

C2: 0.4×3+ 0.3×3+ 0.2×2+ 0.1×1 = 2.6

C3: 0.4×2+ 0.3×2+ 0.2×2+ 0.1×2 = 2.0

It is expected that...

C1 gives the most compact representation in typical cases.

22

Huffman code

Huffman algorithm gives a clever way to construct

a code with small average codeword length.

1.

2.

prepare isolated M nodes, each attached with

a probability of a symbol (node = size-one tree)

David Huffman

1925-1999

repeat the following operation until all trees are joined to one

a. select two trees T1 and T2 having the smallest probabilities

b. join T1 and T2 by introducing a new parent node

c. the sum of probabilities of T1 and T2 is given to the new tree

23

example

“merger of small companies”

0.15

0.6

A

0.25

B

0.1

C

0.05

D

0.6

A

1.0

1 0.4

0

1 0.15

0

0 1

0.25 0.1

0.05

B

C

D

0.6

A

0.25

B

0.1

C

0.05

D

0.4

0.15

0.6

A

0.25

B

0.1

C

0.05

D

24

exercise

A

B

C

D

E

prob. codewords

0.2

0.1

0.3

0.3

0.1

compare the average length with the equal-length codes...

25

exercise

A

B

C

D

E

F

prob. codewords

0.3

0.2

0.2

0.1

0.1

0.1

compare the average length with the equal-length codes...

26

different construction, same efficiency

We may have multiple options on the code construction:

several nodes have the same small probabilities

labels can be assigned differently to branches

Different option results in a different Huffman code, but...

the average length does not depend on the chosen option.

0.4 0.2 0.2 0.1 0.1

a1 a2 a3 a4 a5

0.4 0.2 0.2 0.1 0.1

a 1 a2 a3 a4 a5

27

summary of today’s class

basic properties needed for source coding

uniquely decodable

immediately decodable

Huffman code

construction of Huffman code

today

extensions of Huffman code

theoretical limit of the “compression”

related topics

28

exercise

Construct a binary Huffman code for

the information source given in the table.

Compute the average codeword length

of the constructed code.

Can you construct a 4-ary Huffman code

for the source?

A

B

C

D

E

F

G

H

prob.

0.363

0.174

0.143

0.098

0.087

0.069

0.045

0.021

29