Multivariate Analysis: Advantages & Definitions

Advantages of Multivariate Analysis

Close resemblance to how the researcher thinks.

Easy visualisation and interpretation of data.

More information is analysed simultaneously, giving greater power.

Relationship between variables is understood better.

Focus shifts from individual factors taken singly to the relationships among variables.

Definitions - I

The independent (or explanatory or predictor) variable is conventionally plotted on the X axis.

The dependent (or outcome or response) variable is conventionally plotted on the Y axis.

In OBSERVATIONAL studies the researcher observes the effects of explanatory variables on the outcome.

In INTERVENTION studies the researcher manipulates the explanatory variable (e.g. dose of a drug) to influence the outcome.

Definitions - II

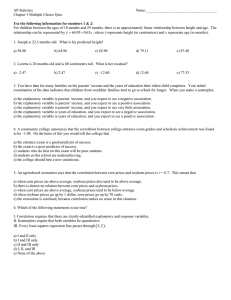

Scatter plot helps to visualise the relationship between two variables.

The figure shows a scatter plot with a regression line. For a given value of X there is a spread of Y values.

The regression line represents the mean value of Y at each value of X.

Figure: Scatterplot of deuterium against test weighing, showing the fitted regression line and its 95% CI (X axis: Test weigh; Y axis: Deuterium).

Deuterium = -67.3413 + 1.16186 Test weigh

S = 234.234, R-Sq = 59.3%, R-Sq(adj) = 56.0%
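As a small worked example, the fitted equation reported with the figure can be used to read off the mean deuterium value for a chosen test-weigh value. The function name `predict_deuterium` and the input value 1500 (one of the X axis ticks) are illustrative assumptions, not part of the original output.

```python
# Coefficients copied from the fitted equation reported with the figure.
INTERCEPT = -67.3413
SLOPE = 1.16186


def predict_deuterium(test_weigh: float) -> float:
    """Return the fitted (mean) deuterium value for a given test-weigh value."""
    return INTERCEPT + SLOPE * test_weigh


# 1500 is an arbitrary illustrative X value taken from the axis ticks.
print(round(predict_deuterium(1500), 2))  # 1675.45
```

Individual observations are spread around this fitted value, which is why the output also reports S and R-Sq.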

Definitions - III

INTERCEPT is the value of Y for X = 0. It denotes the point where the regression line meets the Y axis.

SLOPE is a measure of the change in the value of Y for a unit change in X.

Figure: diagram labelling the X axis, the Y axis, the intercept and the slope.
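The intercept and slope defined above can be computed directly from data with the usual least-squares formulas. The sketch below is a minimal illustration in plain Python; the function name `fit_line` and the five data points are made up for the example.

```python
def fit_line(xs, ys):
    """Least-squares intercept and slope for a simple linear regression."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    # Slope: co-variation of X and Y divided by the variation of X.
    s_xy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    s_xx = sum((x - mean_x) ** 2 for x in xs)
    slope = s_xy / s_xx
    # Intercept: the fitted line passes through (mean_x, mean_y).
    intercept = mean_y - slope * mean_x
    return intercept, slope


# Toy data, invented purely for illustration.
xs = [1, 2, 3, 4, 5]
ys = [2, 4, 5, 4, 5]

intercept, slope = fit_line(xs, ys)
print(round(intercept, 3), round(slope, 3))
```

Here the slope says how much the fitted Y changes when X increases by one unit, and the intercept is the fitted Y at X = 0.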

Basic Assumptions

Y increases or decreases linearly with an increase or decrease in X.

For any given value of X the values of Y are distributed Normally.

The variance of Y at any given value of X is the same for all values of X.

The deviation in any one value of Y has no effect on other values of Y for any given X.
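One compact way to write these four assumptions is the usual simple linear regression model. The error term $\varepsilon_i$ and the variance $\sigma^2$ are notation assumed here for illustration; $\alpha$ and $\beta$ denote the intercept and slope.

$$
Y_i = \alpha + \beta X_i + \varepsilon_i, \qquad \varepsilon_i \sim N(0, \sigma^2) \ \text{independently for } i = 1, \dots, n
$$

Linearity corresponds to the term $\alpha + \beta X_i$, Normality to the distribution of $\varepsilon_i$, equal variance to $\sigma^2$ not depending on $X_i$, and the last assumption to the independence of the $\varepsilon_i$.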

The Residuals

The difference between the observed value of Y and the value on the regression line (fitted value) is the residual.

The statistical programme minimizes the sum of the squares of the residuals. In a good fit the data points are all crowded around the regression line.
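The least-squares idea can be checked numerically: the fitted line gives a smaller sum of squared residuals than any other line. The sketch below uses invented toy data, for which the least-squares intercept and slope are 2.2 and 0.6; the function name `rss` and the two alternative lines are assumptions chosen only for illustration.

```python
def rss(xs, ys, intercept, slope):
    """Sum of squared residuals (observed Y minus fitted Y) for a given line."""
    return sum((y - (intercept + slope * x)) ** 2 for x, y in zip(xs, ys))


# Toy data, invented purely for illustration.
xs = [1, 2, 3, 4, 5]
ys = [2, 4, 5, 4, 5]

# Residuals around the least-squares line for this data (2.2 + 0.6 X).
residuals = [y - (2.2 + 0.6 * x) for x, y in zip(xs, ys)]
best = rss(xs, ys, 2.2, 0.6)

# Two arbitrary alternative lines: each gives a larger sum of squares.
worse_slope = rss(xs, ys, 2.2, 0.7)
worse_intercept = rss(xs, ys, 2.5, 0.6)

print([round(r, 2) for r in residuals])
print(round(best, 2), round(worse_slope, 2), round(worse_intercept, 2))
```

The residuals around the least-squares line also sum to zero, apart from floating-point rounding.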

Analysis of Variance - I

The variation of Y values around the regression line is a measure of how X and Y relate to each other.

The variation is quantified by analysis of variance, presented as an Analysis of Variance table.

The total sum of squares, $S_{yy}$, represents the total variation of Y values around their mean.

Analysis of Variance - II

The Total Sum of Squares ($S_{yy}$) is made up of two parts:

(i) Sum of Squares explained by the regression

(ii) Residual Sum of Squares

Sum of Squares ÷ its degrees of freedom = Mean Sum of Squares (MSS)

MSS due to regression ÷ Residual MSS = F ratio
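The decomposition and the F ratio can be sketched for a simple (one-predictor) regression as follows. The function name `anova_table`, the dictionary keys and the toy data are illustrative assumptions; the degrees of freedom used are 1 for the regression and n - 2 for the residual, as is standard for a single explanatory variable.

```python
def anova_table(xs, ys):
    """Sums of squares, mean sums of squares and F ratio for a
    simple (one-predictor) linear regression."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    slope = (sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
             / sum((x - mean_x) ** 2 for x in xs))
    intercept = mean_y - slope * mean_x
    fitted = [intercept + slope * x for x in xs]

    s_yy = sum((y - mean_y) ** 2 for y in ys)               # total
    ss_reg = sum((f - mean_y) ** 2 for f in fitted)         # explained by regression
    ss_res = sum((y - f) ** 2 for y, f in zip(ys, fitted))  # residual

    df_reg, df_res = 1, n - 2  # one slope; n minus the two fitted parameters
    mss_reg = ss_reg / df_reg
    mss_res = ss_res / df_res
    return {
        "S_yy": s_yy, "SS_reg": ss_reg, "SS_res": ss_res,
        "MSS_reg": mss_reg, "MSS_res": mss_res, "F": mss_reg / mss_res,
    }


# Toy data, invented purely for illustration.
xs = [1, 2, 3, 4, 5]
ys = [2, 4, 5, 4, 5]

table = anova_table(xs, ys)
for name, value in table.items():
    print(name, round(value, 3))
```

For this toy data the total of 6.0 splits into 3.6 explained by the regression and 2.4 residual, giving an F ratio of 4.5.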

Reading the output

Regression Equation

Residual Sum of Squares (RSS)

Values of α and β.

$R^2$

S (standard deviation of the residuals)

Testing for β ≠ 0

Analysis of Variance Table

F test

Outliers

Remote from the rest
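Several of the items listed above can be computed by hand, which helps when reading the output of a statistical programme. The sketch below is a minimal illustration on invented toy data: the function name `summary_stats` and the dictionary keys are assumptions, and the t statistic for the slope is shown without a p-value because that would need the t distribution with n - 2 degrees of freedom.

```python
import math


def summary_stats(xs, ys):
    """R-squared, adjusted R-squared, S and the t statistic for the slope
    in a simple (one-predictor) linear regression."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    s_xx = sum((x - mean_x) ** 2 for x in xs)
    s_yy = sum((y - mean_y) ** 2 for y in ys)
    slope = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys)) / s_xx
    intercept = mean_y - slope * mean_x
    rss = sum((y - (intercept + slope * x)) ** 2 for x, y in zip(xs, ys))

    r_sq = 1 - rss / s_yy                          # share of variation explained
    r_sq_adj = 1 - (1 - r_sq) * (n - 1) / (n - 2)  # adjusted for one predictor
    s = math.sqrt(rss / (n - 2))                   # residual standard deviation
    se_slope = s / math.sqrt(s_xx)                 # standard error of the slope
    t_slope = slope / se_slope                     # tests whether the slope is 0
    return {"R_sq": r_sq, "R_sq_adj": r_sq_adj, "S": s, "t_slope": t_slope}


# Toy data, invented purely for illustration.
xs = [1, 2, 3, 4, 5]
ys = [2, 4, 5, 4, 5]

stats = summary_stats(xs, ys)
for name, value in stats.items():
    print(name, round(value, 3))
```

With a single explanatory variable the square of the slope's t statistic equals the F ratio from the Analysis of Variance table, so the two tests agree.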