ch5.5 (MBE).

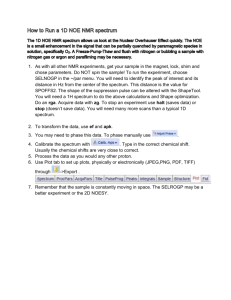

advertisement

MBE Vocoder Page 0 of 34 Outline Introduction to vocoders MBE vocoder – MBE Parameters – Parameter estimation – Analysis and synthesis algorithm AMBE IMBE Page 1 of 34 Vocoders - analyzer 1. 2. Speech analyzed first by segmenting speech using a window (e.g. Hamming window) Excitation and system parameters are calculated for each segment 1. 2. 3. Excitation parameters : voiced/unvoiced, pitch period System parameters: spectral envelope / system impulse response Sending this parameters Page 2 of 34 Vocoders - Synthesizer Excitation Signal White noise/ unvoiced Pulse train/voiced Page 3 of 34 System parameters Synthesized voice Vocoders But usually vocoders have poor quality – Fundamental limitation in speech models – Inaccurate parameter estimation – Incapability of pulse train/ white noise to produce all voice • speech synthesized entirely with a periodic source exhibits a “buzzy” quality, and speech synthesized entirely with a noise source exhibits a “hoarse” quality Potential solution to buzziness of vocoders is to use of mixed excitation models In these vocoders periodic and noise like excitations are mixed with a calculated ratio and this ration will be sent along the parameters Page 4 of 34 Multi Band Excitation Speech Model Due to stationary nature of a speech signal, a window w(n) is usually applied to signal sw (n) w(n) s(n) The Fourier transform of a windowed segment sw ( )can be modeled as the product of a spectral envelope H w ( ) and an excitation spectrum | Ew ( ) | sˆw ( ) H w ( ) | Ew ( ) | In most models H w ( ) is a smoothed version of the original speech spectrum sw ( ) Page 5 of 34 MBE model (Cont’d) the spectral envelope must be represented accurately enough to prevent degradations in the spectral envelope from dominating. – quality improvements achieved by the addition of a frequency dependent voiced/unvoiced mixture function. In previous simple models, the excitation spectrum is totally specified by the fundamental frequency w0 and a voiced/unvoiced decision for the entire spectrum. In MBE model, the excitation spectrum is specified by the fundamental frequency w0 and a frequency dependent voiced/unvoiced mixture function. Page 6 of 34 Multi Banding In general, a continuously varying frequency dependent voiced/unvoiced mixture function would require a large number of parameters to represent it accurately. The addition of a large number of parameters would severely decrease the utility of this model in such applications as bit-rate reduction. To further reduce the number of these binary parameters, the spectrum is divided into multiple frequency bands and a binary voiced/unvoiced parameter is allocated to each band. MBE model differs from previous models in that the spectrum is divided into a large number of frequency bands (typically 20 or more), whereas previous models used three frequency bands at most . Page 7 of 34 Multi Banding V/UV Original information spectrum Noise Spectral spectrum envelope Excitation spectrum Periodic spectrum Synthetic Page 8 of 34 spectrum MBE Parameters The parameters used in MBE model are: 1. 2. 3. 4. Page 9 of 34 spectral envelope the fundamental frequency the V/UV information for each harmonic and the phase of each harmonic declared voiced. The phases of harmonics in frequency bands declared unvoiced are not included since they are not required by the synthesis algorithm Parameter Estimation In many approaches (LPC based algorithms) the algorithms for estimation of excitation parameters and estimation of spectral envelope parameters operate independently. These parameters are usually estimated based on heuristic criterion without explicit consideration of how close the synthesized speech will be to the original speech. – This can result in a synthetic spectrum quite different from the original spectrum. In MBE the excitation and spectral envelope parameters are estimated simultaneously so that the synthesized spectrum is closest in the least squares sense to the spectrum of the original speech “analysis by synthesis” Page 10 of 34 Parameter Estimation (Cont’d) the estimation process has been divided into two major steps. 1. In the first step, the pitch period and spectral envelope parameters are estimated to minimize the error between the original spectrum and the synthetic spectrum. 2. Then, the V/UV decisions are made based on the closeness of fit between the original and the synthetic spectrum at each harmonic of the estimated fundamental. Page 11 of 34 Parameter Estimation (cont’d) The parameters estimated by minimizing the following error criterion: – Where The error in an interval 1 2 s 2 w ( ) sˆw ( ) d sˆw ( ) H w ( ) | Ew ( ) | m 1 2 2 bm s w ( ) Am EW ( ) d am bm is minimized at: Am Sw( ) Ew( ) d am bm Ew( ) am Page 12 of 34 2 d Pitch Estimation and Spectral Envelope An efficient method for obtaining a good approximation for the periodic transform P ( w ) in this interval is to precompute samples of the Fourier transform of the window w (n) and center it around the harmonic frequency associated with this interval. For unvoiced frequency intervals, the envelope parameters are estimated by substituting idealized white noise (unity across the band) for |E (a)| in previous formulas which reduces to averaging the original spectrum in each frequency interval. For unvoiced regions, only the magnitude of A, is estimated since the phase of A, is not required for speech synthesis. Page 13 of 34 More about pitch estimation Experimentally, the error E tends to vary slowly with the pitch period P the initial estimate is obtained by evaluating the error for integer pitch periods Since integer multiples of the correct pitch period have spectra with harmonics at the correct frequencies, the error E will be comparable for the correct pitch period and its integer multiples. Page 14 of 34 More about pitch estimation (Cont’d) Error/Pitch Speech segment Original Original and spectrum Synthetic P=42.48 Original and Synthetic Page 15 of 34 P=42 V/UV Decision The voiced/unvoiced decision for each harmonic is made by comparing the normalized error over each harmonic of the estimated fundamental to a threshold When the normalized error over mth harmonic is below the threshold, this frame will be marked as voiced else unvoiced Page 16 of 34 m m 1 2 bm Sw( ) am 2 d Analysis Algorithm Flowchart start Window Speech segment Compute error vs. pitch period Autocorrelation approach Page 17 of 34 Select initial pitch period (Dynamic programming Pitch tracker) Refine initial pitch period (frequency domain approach) Make V/UV decision for each Frequency band Select V/UV spectral Envelope parameters For each freq. band Stop Speech Synthesis The voiced signal can be synthesized as the sum of sinusoidal oscillators with frequencies at the harmonics of the fundamental and amplitudes set by the spectral envelope parameters (The time domain method). The unvoiced signal can be synthesized as the sum of bandpass filtered white noise The frequency domain method was selected for synthesizing the unvoiced portion of the synthetic speech. Page 18 of 34 Synthesis algorithm block diagram V/UV Decision Voiced envelope Separate samples Envelope Voiced/Unvoiced samples Envelope samples Unvoiced envelope samples Unvoiced envelope samples White noise sequence Page 19 of 34 Voiced envelope samples Unvoiced envelope Bank of Harmonic oscillators Voiced speech samples Linear interpolation STFT Replace envelope Weighted Unvoiced Overlap-add speech MBE Synthesis algorithm First, the spectral envelope samples are separated into voiced or unvoiced spectral envelope samples depending on whether they are in frequency bands declared voiced or unvoiced Voiced envelope samples include both magnitude and phase, whereas unvoiced envelope samples include only the magnitude. Voiced speech is synthesized from the voiced envelope samples by summing the outputs of a band of sinusoidal oscillators running at the harmonics of the fundamental frequency sˆv (t ) Am (t ) cos( m (t )) m Page 20 of 34 MBE Synthesis algorithm (Voiced) The phase function m is determined by an initial phase 0 and a frequency track m (t ) as follows: t m (t ) m ( )d 0 0 The frequency track m (t ) is linearly interpolated between the mth harmonic of the current frame and that of the next frame by: m (t ) m 0 (0) Page 21 of 34 (S t ) t m 0 ( S ) m S S MBE Synthesis algorithm (Unvoiced) Unvoiced speech is synthesized from the unvoiced envelope samples by first synthesizing a white noise sequence. For each frame, the white noise sequence is windowed and an FFT is applied to produce samples of the Fourier transform In each unvoiced frequency band, the noise transform samples are normalized to have unity magnitude. The unvoiced spectral envelope is constructed by linearly interpolating between the envelope samples |Am(t)|. The normalized noise transform is multiplied by the spectral envelope to produce the synthetic transform. The synthetic transforms are then used to synthesize unvoiced speech using the weighted overlap-add method. Page 22 of 34 MBE Synthesis (Cont’d) The final synthesized speech is generated by summing the voiced and unvoiced synthesized speech signals Voiced speech + Unvoiced speech Page 23 of 34 Synthesized speech Bit Allocation Page 24 of 34 Parameter Bits Fundamental Frequency 9 Harmonic Magnitude 139-94 Harmonic Phase 0-45 Voiced/Unvoiced Bits 12 Total 160 Advanced MBE (AMBE) MBE coding rate at 2400 bps AMBE coding rate at 1200/2400 bps Four new features 1. 2. 3. 4. Enhanced V/UV decision Initial pitch detection Refined pitch determination Dual rate coding Page 25 of 34 Enhanced V/UV decision divide the whole speech frequency band into 4 subbands and 2 subbands for 2.4 kbps and 1.2 kbps respectively. That is to say only 4 bits and 2 bits are used to encode U/V decisions for 2.4 kb/s and 1.2 kb/s vocoder respectively. Page 26 of 34 Initial pitch detection MBE takes 2 steps to detect the refined initial pitch period – Spectrum matching technique to find the initial pitch period – Using DTW-based (Discrete Time Wrapping) technique to smooth the estimation Computational complexity is very high In MBE, a modified three-level center clipped autocorrelation method is used to detect the initial pitch period, and also use a simple smoothing method to correct the pitch errors. Page 27 of 34 Redefined pitch determination To find the best pitch the basic method is to compute the error between the original speech spectrum and the shaped voiced speech spectrum by first supposing a pitch period The supposed pitch of which the spectrum error is minimum is chosen as the last pitch To reduce the computational complexity, AMBE uses a 256- point FFT to get the speech spectrum, and 5-point window spectrum is used to form the voiced harmonic spectrum. To get the refined pitch, AMBE perform seven times of spectrum matching process. In every time. AMBE first set a supposed pitch, then shape a harmonic spectrum over the overall frequency band according to the supposed pitch and window spectrum, and an error can be calculated by subtracting the shaped spectrum from speech spectrum. After the seven times of matching process, the refined pitch can easily be determined Page 28 of 34 Dual rate coding Page 29 of 34 Parameter 2400 bps 1200 bps Pitch quantization 8 6 V/UV decision 4 2 Amplitude quantization 41 19 total 53 27 Improved MBE (IMBE) A 2400 bps coder based on MBE Substantially better than U.S government standard LPC-10e The parameters of the MBE speech model : – the fundamental frequency – voiced/unvoiced information – the spectral envelope. Page 30 of 34 IMBE algorithm estimate the excitation and system parameters which minimize the distance between the original and synthetic speech spectra (analysis by synthesis) Once these parameters are estimated, voiced/unvoiced decisions are made by comparing the spectral error over a series of harmonics to a prescribed threshold Page 31 of 34 IMBE block diagram Page 32 of 34 IMBE algorithm block diagram IMBE Coding IMBE offered in 2.4, 4.8 and 8.0 kbps Analysis and synthesis routines are the same except the bit allocation The fundamental frequency needs accuracy of about l Hz. and requires about 9 bits per frame. The V/UV decisions are encoded with one bit per decision. The remaining bits are allocated to error control and the spectral envelope information. Page 33 of 33