Introduction to ML

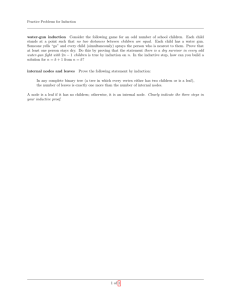


INTRODUCTION TO ARTIFICIAL INTELLIGENCE
Massimo Poesio
LECTURE: Intro to Machine Learning

MOTIVATION
• Cognition involves making decisions on the basis of knowledge
• Example: word sense disambiguation

SENSES OF “line”
• Product: “While he wouldn’t estimate the sale price, analysts have estimated that it would exceed $1 billion. Kraft also told analysts it plans to develop and test a line of refrigerated entrees and desserts, under the Chillery brand name.”
• Formation: “C-LD-R L-V-S V-NNA reads a sign in Caldor’s book department. The 1,000 or so people fighting for a place in line have no trouble filling in the blanks.”
• Text: “Newspaper editor Francis P. Church became famous for an 1897 editorial, addressed to a child, that included the line ‘Yes, Virginia, there is a Santa Claus.’”
• Cord: “It is known as an aggressive, tenacious litigator. Richard D. Parsons, a partner at Patterson, Belknap, Webb and Tyler, likes the experience of opposing Sullivan & Cromwell to ‘having a thousand-pound tuna on the line.’”
• Division: “Today, it is more vital than ever. In 1983, the act was entrenched in a new constitution, which established a tricameral parliament along racial lines, with separate chambers for whites, coloreds and Asians but none for blacks.”
• Phone: “On the tape recording of Mrs. Guba’s call to the 911 emergency line, played at the trial, the baby sitter is heard begging for an ambulance.”

WHY LEARNING
• It is often hard to articulate the knowledge required for such cognitive tasks
  – The senses of a word
  – How to decide between them
• We humans are not aware of this knowledge
• Better (from both a cognitive and a system-building perspective): develop theories of how this information is acquired

HUME ON INDUCTION
• Piece of bread number 1 was nourishing when I ate it.
• Piece of bread number 2 was nourishing when I ate it.
• Piece of bread number 3 was nourishing when I ate it.
• …
• Piece of bread number 100 was nourishing when I ate it.
• Therefore, all pieces of bread will be nourishing if I eat them.
-- David Hume

WHEN IS INDUCTION SAFE?
• If asked why we believe the sun will rise tomorrow, we shall naturally answer, ‘Because it has always risen every day.’ We have a firm belief that it will rise in the future, because it has risen in the past.
• The real question is: Do any number of cases of a law being fulfilled in the past afford evidence that it will be fulfilled in the future?
• It has been argued that we have reason to know the future will resemble the past, because what was the future has constantly become the past, and has always been found to resemble the past, so that we really have experience of the future, namely of times which were formerly future, which we may call past futures. But such an argument really begs the very question at issue. We have experience of past futures, but not of future futures, and the question is: Will future futures resemble past futures?
-- Bertrand Russell, On Induction

WHAT IS LEARNING
• Memorizing something
• Learning facts through observation and exploration
• Developing motor and/or cognitive skills through practice
• Organizing new knowledge into general, effective representations

A DEFINITION OF LEARNING: LEARNING AS IMPROVEMENT
Learning denotes changes in the system that are adaptive in the sense that they enable the system to do the task or tasks drawn from the same population more efficiently and more effectively the next time.
-- Herb Simon

LEARNING A FUNCTION
• Given a set of input/output pairs, find a function that does a good job of expressing the relationship between them:
  – Word sense disambiguation as a function from words in context (the input) to their senses (the output)
  – Categorizing email messages as a function from emails to their category (spam, useful)
  – A checkers-playing strategy as a function from moves to their values (winning, losing)

WAYS OF LEARNING A FUNCTION
• SUPERVISED learning: given a set of example input/output pairs, find a rule that does a good job of predicting the output associated with an input
• UNSUPERVISED learning or CLUSTERING: given a set of examples but no labels, group the examples into “natural” clusters
• REINFORCEMENT LEARNING: an agent interacting with the world makes observations, takes actions, and is rewarded or punished; the agent learns to choose the actions that maximize its reward

SUPERVISED LEARNING OF WORD SENSE DISAMBIGUATION
• Training experience: a CORPUS of examples annotated with their correct word sense (according, e.g., to WordNet)

UNSUPERVISED LEARNING OF WORD SENSE DISAMBIGUATION
• Identify distinctions between the contexts in which a word like LINE is encountered, and hypothesize sense distinctions on their basis
  – Cord: fish, fishermen, tuna
  – Product: companies, products, finance
• (This is the way children learn much of what they know)

PROBLEMS IN LEARNING A FUNCTION
• Memory
• Averaging
• Generalization

EXAMPLE OF LEARNING PROBLEM
• Playing checkers

TRAINING EXPERIENCE
• Supervised learning: given sample input and output pairs for a useful target function
  – Checkers boards labeled with the correct move, e.g. extracted from records of expert play

CHOOSING A TARGET FUNCTION
• What function is to be learned, and how will it be used by the performance system?
• For checkers, assume we are given a function for generating the legal moves for a given board position and want to decide the best move.
  – Could learn a function: ChooseMove(board, legal-moves) → best-move
  – Or could learn an evaluation function, V(board) → ℝ, that gives each board position a score for how favorable it is. V can be used to pick a move by applying each legal move, scoring the resulting board position, and choosing the move that results in the highest-scoring board position.

Ideal Definition of V(b)
• If b is a final winning board, then V(b) = 100
• If b is a final losing board, then V(b) = −100
• If b is a final draw board, then V(b) = 0
• Otherwise, V(b) = V(b′), where b′ is the highest-scoring final board position that can be achieved starting from b and playing optimally until the end of the game (assuming the opponent plays optimally as well).
  – This can be computed by a complete minimax search of the finite game tree.

Linear Function for Representing V(b)
• In checkers, use a linear approximation $\hat{V}$ of the evaluation function:
$$\hat{V}(b) = w_0 + w_1\,bp(b) + w_2\,rp(b) + w_3\,bk(b) + w_4\,rk(b) + w_5\,bt(b) + w_6\,rt(b)$$
  – bp(b): number of black pieces on board b
  – rp(b): number of red pieces on board b
  – bk(b): number of black kings on board b
  – rk(b): number of red kings on board b
  – bt(b): number of black pieces threatened (i.e. which can be immediately taken by red on its next turn)
  – rt(b): number of red pieces threatened

Obtaining Training Values
• Direct supervision may be available for the target function.
  – <<bp=3, rp=0, bk=1, rk=0, bt=0, rt=0>, 100> (win for black)

Learning Algorithm
• Uses training values for the target function to induce a hypothesized definition that fits these examples and hopefully generalizes to unseen examples.
• In statistics, learning to approximate a continuous function is called regression.
• Attempts to minimize some measure of error (loss function), such as the mean squared error over the set B of training boards:
$$E = \frac{1}{|B|} \sum_{b \in B} \left[ V_{train}(b) - \hat{V}(b) \right]^2$$

A SPATIAL WAY OF LOOKING AT LEARNING
• Learning a function can also be viewed as learning how to discriminate between different types of objects in a space

A SPATIAL VIEW OF LEARNING
[Figure: examples plotted as points in a space, labeled SPAM and NON-SPAM]
The task of the learner is to learn a function that divides the space of examples into black and red.
[Figures: a more difficult example; one solution; another solution]

TRAINING AND TESTING
• Induction gives us the ability to classify unseen experiences
• To assess how well a learning algorithm does this, we need to evaluate its performance on data different from those used to train it: the TEST DATA

VARIETIES OF LEARNING
• Different functions
• Different ways of searching for the function

Various Function Representations
• Numerical functions
  – Linear regression
  – Neural networks
  – Support vector machines
• Symbolic functions
  – Decision trees
  – Rules in propositional logic
  – Rules in first-order predicate logic
• Instance-based functions
  – Nearest-neighbor
  – Case-based
• Probabilistic Graphical Models
  – Naïve Bayes
  – Bayesian networks
  – Hidden Markov Models (HMMs)
  – Probabilistic Context-Free Grammars (PCFGs)
  – Markov networks

Various Search Algorithms
• Gradient descent
  – Perceptron
  – Backpropagation
• Dynamic Programming
  – HMM Learning
  – PCFG Learning
• Divide and Conquer
  – Decision tree induction
  – Rule learning
• Evolutionary Computation
  – Genetic Algorithms (GAs)
  – Genetic Programming (GP)
  – Neuro-evolution

History of Machine Learning
• 1950s
  – Samuel’s checker player
  – Selfridge’s Pandemonium
• 1960s:
  – Neural networks: Perceptron
  – Pattern recognition
  – Learning in the limit theory
  – Minsky and Papert prove limitations of Perceptron
• 1970s:
  – Symbolic concept induction
  – Winston’s arch learner
  – Expert systems and the knowledge acquisition bottleneck
  – Quinlan’s ID3
  – Michalski’s AQ and soybean diagnosis
  – Scientific discovery with BACON
  – Mathematical discovery with AM

History of Machine Learning (cont.)
• 1980s:
  – Advanced decision tree and rule learning
  – Explanation-based Learning (EBL)
  – Learning and planning and problem solving
  – Utility problem
  – Analogy
  – Cognitive architectures
  – Resurgence of neural networks (connectionism, backpropagation)
  – Valiant’s PAC Learning Theory
  – Focus on experimental methodology
• 1990s
  – Data mining
  – Adaptive software agents and web applications
  – Text learning
  – Reinforcement learning (RL)
  – Inductive Logic Programming (ILP)
  – Ensembles: Bagging, Boosting, and Stacking
  – Bayes Net learning

History of Machine Learning (cont.)
• 2000s
  – Support vector machines
  – Kernel methods
  – Graphical models
  – Statistical relational learning
  – Transfer learning
  – Sequence labeling
  – Collective classification and structured outputs
  – Computer Systems Applications
    – Compilers
    – Debugging
    – Graphics
    – Security (intrusion, virus, and worm detection)
  – E-mail management
  – Personalized assistants that learn
  – Learning in robotics and vision

READINGS
• English:
  – T. Mitchell, Machine Learning, McGraw-Hill, ch. 1
• Italian:
  – R. Basili & A. Moschitti, Apprendimento automatico, in F. Bianchini et al., Instrumentum vocale

THANKS
• I used material from
  – MIT OpenCourseWare
  – Ray Mooney’s ML course at UTexas
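Returning to the checkers example: the slides stop at the definitions, so the following is only a minimal sketch (not part of the original lecture) of how the pieces fit together. It shows the linear evaluation function V̂(b), choosing a move by scoring each resulting board, the mean squared error E, and plain batch gradient descent (one of the search methods listed in the lecture) to reduce E. Boards are represented directly by their feature tuples (bp, rp, bk, rk, bt, rt); the function names, move labels, learning rate and second training board are hypothetical, chosen only for illustration.

```python
from typing import Sequence

# A board b is represented only by its features (bp, rp, bk, rk, bt, rt),
# in the order used on the "Linear Function for Representing V(b)" slide.
Features = tuple[int, int, int, int, int, int]


def v_hat(weights: Sequence[float], f: Features) -> float:
    """Linear evaluation w0 + w1*bp + w2*rp + w3*bk + w4*rk + w5*bt + w6*rt."""
    return weights[0] + sum(w * x for w, x in zip(weights[1:], f))


def choose_move(weights: Sequence[float], successors: dict[str, Features]) -> str:
    """Return the move whose resulting board scores highest under v_hat.

    `successors` (hypothetical) maps each legal move to the features of the
    board it produces; a real player would build it with a move generator.
    """
    return max(successors, key=lambda move: v_hat(weights, successors[move]))


def mse(weights: Sequence[float], examples: list[tuple[Features, float]]) -> float:
    """E = (1/|B|) * sum over b in B of (V_train(b) - v_hat(b))**2."""
    return sum((v - v_hat(weights, f)) ** 2 for f, v in examples) / len(examples)


def gradient_step(weights: Sequence[float], examples: list[tuple[Features, float]],
                  lr: float = 0.01) -> list[float]:
    """One batch gradient-descent step on E; the learning rate is an assumption."""
    n = len(examples)
    grad = [0.0] * len(weights)
    for f, v in examples:
        err = v - v_hat(weights, f)
        x = (1,) + tuple(f)  # constant input 1 pairs with the bias weight w0
        for i, xi in enumerate(x):
            grad[i] += -2.0 * err * xi / n  # partial derivative dE/dw_i
    return [w - lr * g for w, g in zip(weights, grad)]


# Usage: fit the seven weights to two training boards.
training = [
    ((3, 0, 1, 0, 0, 0), 100.0),   # <<bp=3,rp=0,bk=1,rk=0,bt=0,rt=0>, 100>, win for black
    ((0, 3, 0, 1, 0, 0), -100.0),  # hypothetical mirror-image board, loss for black
]
w = [0.0] * 7
for _ in range(200):
    w = gradient_step(w, training)
print(mse(w, training))
print(choose_move(w, {"move_a": (3, 0, 1, 0, 0, 0), "move_b": (0, 3, 0, 1, 0, 0)}))
```

With only two training boards and seven weights the fit is trivially exact, so this illustrates the mechanics rather than generalization. Mitchell's chapter 1 uses the closely related LMS rule, which updates the weights after each training example instead of after a full pass over B.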