Chapter 5


1

1977: DRAM faster than microprocessors

2

Since 1980, CPU has outpaced DRAM ...

3

How do architects address this gap?

• Programmers want unlimited amounts of memory

with low latency

• Fast memory technology is more expensive per bit

than slower memory

• Solution: organize memory system into a hierarchy

– Entire addressable memory space available in largest,

slowest memory

– Incrementally smaller and faster memories, each

containing a subset of the memory below it, proceed in

steps up toward the processor

• Temporal and spatial locality ensure that nearly all references can be found in the smaller memories

– Gives the illusion of a large, fast memory being presented to the processor

4

Memory Hierarchy

5

Advantage of memory hierarchy

6

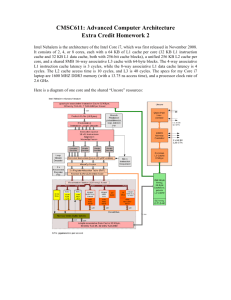

Memory Hierarchy Design

• Memory hierarchy design becomes more

crucial with recent multi-core processors:

– Aggregate peak bandwidth grows with # cores:

• Intel Core i7 can generate two references per core per clock

• Four cores and 3.2 GHz clock

– 25.6 billion 64-bit data references/second +

– 12.8 billion 128-bit instruction references

– = 409.6 GB/s!

• DRAM bandwidth is only 6% of this (25 GB/s)

• Requires:

– Multi-port, pipelined caches

– Two levels of cache per core

– Shared third-level cache on chip
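The peak-bandwidth arithmetic above can be checked with a small sketch. The function and parameter names are hypothetical, and it assumes 1 GB = 10^9 bytes, two 8-byte data references and one 16-byte instruction reference per core per clock, which is how the slide's figures break down.

```c
#include <assert.h>

/* Peak demand bandwidth in GB/s (1 GB = 1e9 bytes).
   All names here are illustrative, not from the slides. */
double peak_bandwidth_gbs(int cores, double clock_ghz,
                          double data_refs_per_clock, int data_ref_bytes,
                          double inst_refs_per_clock, int inst_ref_bytes)
{
    assert(cores > 0 && clock_ghz > 0.0);
    /* references per second (in billions) times bytes per reference */
    double data = cores * clock_ghz * data_refs_per_clock * data_ref_bytes;
    double inst = cores * clock_ghz * inst_refs_per_clock * inst_ref_bytes;
    return data + inst;
}
```

Calling `peak_bandwidth_gbs(4, 3.2, 2.0, 8, 1.0, 16)` reproduces the slide's total of roughly 409.6 GB/s, against which 25 GB/s of DRAM bandwidth is about 6%.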

7

Locality in Caches

• A principle that makes a memory hierarchy a good idea

• If an item is referenced:

– Temporal locality: it will tend to be referenced again soon

– Spatial locality: nearby items will tend to be referenced soon

8

Memory Hierarchy Basics

• Block (aka line): unit of copying

– May be multiple words

• If accessed data is present in upper level

– Hit: access satisfied by upper level

• Hit ratio: hits/accesses

• If accessed data is absent

– Miss: block copied from lower level

• Time taken: miss penalty

• Miss ratio: misses/accesses

= 1 – hit ratio

– Then accessed data supplied from upper level

– Takes advantage of spatial locality

9

Memory Technology

• Static RAM (SRAM): 0.5ns – 2.5ns, $2000 – $5000 per GB

• Dynamic RAM (DRAM): 50ns – 70ns, $20 – $75 per GB

• Magnetic disk: 5ms – 20ms, $0.20 – $2 per GB

• Ideal memory

– Access time of SRAM

– Capacity and cost/GB of disk

10

Cache

• Two issues

– How do we know if a data item is in the cache?

– If it is, how do we find it?

• Our first example

– Block size is one word of data

– "Direct mapped"

• Our initial focus: two levels (upper, lower)

– Block: minimum unit of data

– Hit: data requested is in the upper level

– Miss: data requested is not in the upper level

Direct Mapped Cache:

For each item of data at the lower level, there is exactly one location in the cache where it might be.

As a result, many items at the lower level share the same location in the upper level.

11

Direct Mapped Cache

• Location determined by address

• Direct mapped: only one choice

– (Block address) modulo (#Blocks in cache)

• #Blocks is a power of 2

• Use low-order address bits

Chapter 5 — Large and Fast: Exploiting Memory Hierarchy — 12

Tags and Valid Bits

• How do we know which particular block is stored in a cache location?

– Store the block address as well as the data

– Actually, only the high-order bits are needed

– Called the tag

• What if there is no data in a location?

– Valid bit: 1 = present, 0 = not present

– Initially 0


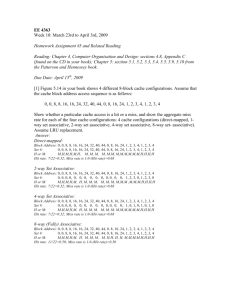

Cache Example

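8 blocks, 1 word/block, direct mapped

Initial state

| Index | V | Tag | Data |
|-------|---|-----|------|
| 000 | N | | |
| 001 | N | | |
| 010 | N | | |
| 011 | N | | |
| 100 | N | | |
| 101 | N | | |
| 110 | N | | |
| 111 | N | | |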


Cache Example

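| Word addr | Binary addr | Hit/miss | Cache block |
|-----------|-------------|----------|-------------|
| 22 | 10 110 | Miss | 110 |

| Index | V | Tag | Data |
|-------|---|-----|------|
| 000 | N | | |
| 001 | N | | |
| 010 | N | | |
| 011 | N | | |
| 100 | N | | |
| 101 | N | | |
| 110 | Y | 10 | Mem[10110] |
| 111 | N | | |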


Cache Example

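| Word addr | Binary addr | Hit/miss | Cache block |
|-----------|-------------|----------|-------------|
| 26 | 11 010 | Miss | 010 |

| Index | V | Tag | Data |
|-------|---|-----|------|
| 000 | N | | |
| 001 | N | | |
| 010 | Y | 11 | Mem[11010] |
| 011 | N | | |
| 100 | N | | |
| 101 | N | | |
| 110 | Y | 10 | Mem[10110] |
| 111 | N | | |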


Cache Example

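| Word addr | Binary addr | Hit/miss | Cache block |
|-----------|-------------|----------|-------------|
| 22 | 10 110 | Hit | 110 |
| 26 | 11 010 | Hit | 010 |

| Index | V | Tag | Data |
|-------|---|-----|------|
| 000 | N | | |
| 001 | N | | |
| 010 | Y | 11 | Mem[11010] |
| 011 | N | | |
| 100 | N | | |
| 101 | N | | |
| 110 | Y | 10 | Mem[10110] |
| 111 | N | | |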


Cache Example

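| Word addr | Binary addr | Hit/miss | Cache block |
|-----------|-------------|----------|-------------|
| 16 | 10 000 | Miss | 000 |
| 3 | 00 011 | Miss | 011 |
| 16 | 10 000 | Hit | 000 |

| Index | V | Tag | Data |
|-------|---|-----|------|
| 000 | Y | 10 | Mem[10000] |
| 001 | N | | |
| 010 | Y | 11 | Mem[11010] |
| 011 | Y | 00 | Mem[00011] |
| 100 | N | | |
| 101 | N | | |
| 110 | Y | 10 | Mem[10110] |
| 111 | N | | |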


Cache Example

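| Word addr | Binary addr | Hit/miss | Cache block |
|-----------|-------------|----------|-------------|
| 18 | 10 010 | Miss | 010 |

| Index | V | Tag | Data |
|-------|---|-----|------|
| 000 | Y | 10 | Mem[10000] |
| 001 | N | | |
| 010 | Y | 10 | Mem[10010] |
| 011 | Y | 00 | Mem[00011] |
| 100 | N | | |
| 101 | N | | |
| 110 | Y | 10 | Mem[10110] |
| 111 | N | | |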
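The walk-through above (word addresses 22, 26, 22, 26, 16, 3, 16, 18) can be replayed with a minimal sketch of an 8-block, one-word-per-block direct-mapped cache. The type and function names are hypothetical, and only the valid bit and tag are modeled, not the data.

```c
#include <assert.h>
#include <stdbool.h>
#include <stddef.h>
#include <stdint.h>

#define NUM_BLOCKS 8u   /* must be a power of 2 */

struct cache_line {
    bool     valid;
    uint32_t tag;
};

struct dm_cache {
    struct cache_line lines[NUM_BLOCKS];
};

/* Start with every valid bit cleared, as in the initial state. */
void dm_cache_init(struct dm_cache *c)
{
    assert(c != NULL);
    for (unsigned i = 0; i < NUM_BLOCKS; i++) {
        c->lines[i].valid = false;
        c->lines[i].tag = 0;
    }
}

/* Access one word address; returns true on a hit. On a miss the
   indexed entry is overwritten with the new block's tag. */
bool dm_cache_access(struct dm_cache *c, uint32_t word_addr)
{
    assert(c != NULL);
    uint32_t index = word_addr % NUM_BLOCKS;  /* low-order address bits  */
    uint32_t tag   = word_addr / NUM_BLOCKS;  /* high-order address bits */
    struct cache_line *line = &c->lines[index];

    if (line->valid && line->tag == tag)
        return true;

    line->valid = true;
    line->tag = tag;
    return false;
}
```

The final access to address 18 misses because it maps to index 010, which still holds the block for address 26; the sketch replaces that entry just as the last table shows.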


Address Subdivision


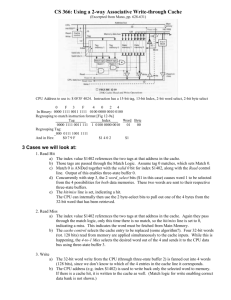

Direct mapped cache

• Taking advantage of spatial locality (16KB cache, 256 blocks, 16 words/block)

• For a 32-bit byte address:

– Cache size is $2^n$ blocks, so n bits are used for the index

– Block size is $2^m$ words ($2^{m+2}$ bytes), so m bits are used to address the word within a block and two bits are used for the byte part of the address

– Size of the tag field is 32 – (n + m + 2)

21

Block Size vs. Performance

22

Block Size vs. Cache Measures

• Increasing block size generally increases miss penalty and decreases miss rate

[Figure: miss penalty, miss rate, and average memory access time, each plotted against block size]

23

Number of Bits?

• How many bits are required for a direct-mapped cache with 16 KB of data and 4-word blocks, assuming a 32-bit address?

• Cache size is $2^n$ blocks; block size is $2^m$ words

• The 16 KB cache contains 4K words ($2^{12}$)

• There are 1024 blocks ($2^{10}$) because the block size is 4 words, so n = 10 and m = 2

• Each block has 4 * 32, or 128, bits of data plus the tag and the valid bit

• Cache size = $2^{10}$ * (bits for words in block + valid bit + tag)

• Cache size = $2^{10}$ * (128 + 1 + tag)

• How many bits are used for the tag?

• 32 – (n + m + 2) = 32 – (10 + 2 + 2) = 18 bits

• Cache size = $2^{10}$ * (128 + 1 + 18) = $2^{10}$ * 147, or 147 Kbits
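The bit count above generalizes to any n and m. The following is a sketch under the slide's assumptions (32-bit byte addresses, 32-bit words, one valid bit per block); the function name is hypothetical.

```c
#include <assert.h>
#include <stdint.h>

/* Total storage bits for a direct-mapped cache with 2^n blocks of
   2^m words each, assuming 32-bit words and 32-bit byte addresses. */
uint64_t dm_cache_total_bits(unsigned n, unsigned m)
{
    assert(n + m + 2 <= 32);
    uint64_t blocks    = (uint64_t)1 << n;
    uint64_t data_bits = ((uint64_t)1 << m) * 32;   /* words * 32 bits */
    uint64_t tag_bits  = 32 - (n + m + 2);
    return blocks * (data_bits + tag_bits + 1);     /* +1 for the valid bit */
}
```

For n = 10 and m = 2 this gives 1024 * 147 = 150528 bits, the 147 Kbits computed above, so the cache needs noticeably more storage than its 128 Kbits of data.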

24

Four Questions for Memory Hierarchy

Designers

• Q1: Where can a block be placed in the upper

level? (Block placement)

• Q2: How is a block found if it is in the upper level?

(Block identification)

• Q3: Which block should be replaced on a miss?

(Block replacement)

• Q4: What happens on a write?

(Write strategy)

25

Q1: Where can a block be placed in the

upper level?

• Direct Mapped: Each block has only one place that it can appear

in the cache.

• Fully associative: Each block can be placed anywhere in the

cache.

– Must search entire cache for block (costly in terms of time)

– Can search in parallel with additional hardware (costly in terms

of space)

• Set associative: Each block can be placed in a restricted set of

places in the cache.

– Compromise between direct mapped and fully associative

– If there are n blocks in a set, the cache placement is called n-way set associative

26

Associativity Examples

Fully associative:

Block 12 can go anywhere

Direct mapped:

Block no. = (Block address) mod

(No. of blocks in cache)

Block 12 can go only into block 4

(12 mod 8)

Set associative:

Set no. = (Block address) mod

(No. of sets in cache)

Block 12 can go anywhere in set 0

(12 mod 4)

27

Direct Mapped Cache

28

2 Way Set Associative Cache

29

Fully Associative Cache

30

An implementation of a

four-way set associative cache

31

Performance

32

Q2: How Is a Block Found If It Is in the

Upper Level?

• The address can be divided into two main parts

– Block offset: selects the data from the block

offset size = log2(block size)

– Block address: tag + index

• Index: selects the set in the cache

index size = log2(#blocks/associativity)

• Tag: compared to the tag in the cache to determine a hit

tag size = address size – index size – offset size

[Figure: address fields, from high-order to low-order bits: Tag, Index, Block offset]

33

Set Associative Cache Problem

• Design an 8-way set associative cache that has 16 blocks and 32 bytes per block. Assume 32-bit addressing. Calculate the following:

– How many bits are used for the block offset?

– How many bits are used for the set (index) field?

– How many bits are used for the tag?

• Offset size = log2(block size) = log2(32) = 5 bits

• Index size = log2(#blocks/associativity) = log2(16/8) = log2(2) = 1 bit

• Tag size = address size – index size – offset size = 32 – 1 – 5 = 26 bits
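The same three formulas can be sketched as a small helper. The struct and function names are hypothetical, and the sketch assumes the block size and the number of sets are both powers of two.

```c
#include <assert.h>

struct addr_fields {
    unsigned offset_bits;
    unsigned index_bits;
    unsigned tag_bits;
};

/* log2 of a value that must be a power of two */
static unsigned log2_pow2(unsigned x)
{
    assert(x != 0 && (x & (x - 1)) == 0);
    unsigned bits = 0;
    while (x > 1) {
        x >>= 1;
        bits++;
    }
    return bits;
}

/* Split an address of addr_bits bits into offset, index, and tag widths. */
struct addr_fields split_address(unsigned addr_bits, unsigned num_blocks,
                                 unsigned associativity, unsigned block_bytes)
{
    assert(associativity != 0 && num_blocks % associativity == 0);
    struct addr_fields f;
    f.offset_bits = log2_pow2(block_bytes);
    f.index_bits  = log2_pow2(num_blocks / associativity);  /* number of sets */
    f.tag_bits    = addr_bits - f.index_bits - f.offset_bits;
    return f;
}
```

With `split_address(32, 16, 8, 32)` the fields come out as 5, 1, and 26 bits. Setting the associativity equal to the number of blocks gives a fully associative cache with no index bits at all.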

34

Q3: Which Block Should be Replaced on a

Miss?

• Easy for Direct Mapped

• Set Associative or Fully Associative:

– Random - easier to implement

– Least Recently Used (LRU) - harder to implement - may approximate

• Miss rates for caches with different size, associativity and replacement algorithm:

| Size | 2-way LRU | 2-way Random | 4-way LRU | 4-way Random | 8-way LRU | 8-way Random |
|------|-----------|--------------|-----------|--------------|-----------|--------------|
| 16 KB | 5.18% | 5.69% | 4.67% | 5.29% | 4.39% | 4.96% |
| 64 KB | 1.88% | 2.01% | 1.54% | 1.66% | 1.39% | 1.53% |
| 256 KB | 1.15% | 1.17% | 1.13% | 1.13% | 1.12% | 1.12% |

35

Q4: What Happens on a Write?

• Write through: The information is written to both the block in the cache and to the block in the lower-level memory.

• Write back: The information is written only to the block in the cache. The modified cache block is written to main memory only when it is replaced.

• Is block clean or dirty? (add a dirty bit to each block)

• Pros and Cons of each:

– Write through

• Read misses cannot result in writes to memory

• Easier to implement

• Always combine with write buffers to avoid memory latency

– Write back

• Less memory traffic

• Perform writes at the speed of the cache

36

Q4: What Happens on a Write?

37

Q4: What Happens on a Write?

• Since data does not have to be brought into the cache on a write miss, there are two options:

– Write allocate

• The block is brought into the cache on a write miss

• Used with write-back caches

• Hope subsequent writes to the block hit in cache

– No-write allocate

• The block is modified in memory, but not brought into the cache

• Used with write-through caches

• Writes have to go to memory anyway, so why bring the block into the cache?

38

Hits vs. misses

• Read hits

– This is what we want!

• Read misses

– Stall the CPU, fetch block from memory, deliver to cache, restart

• Write hits

– Can replace data in cache and memory (write-through)

– Write the data only into the cache (write-back the cache later)

• Write misses

– Read the entire block into the cache, then write the word

39

Cache Misses

• On cache hit, CPU proceeds normally

• On cache miss

– Stall the CPU pipeline

– Fetch block from next level of hierarchy

– Instruction cache miss

• Restart instruction fetch

– Data cache miss

• Complete data access

40

Performance

• Simplified model

– Execution time = (execution cycles + stall cycles) * clock cycle time

– Stall cycles = # of instructions * miss ratio * miss penalty

• Two ways of improving performance

– Decreasing the miss ratio

– Decreasing the miss penalty

• What happens if we increase block size?

41

Cache Measures

• Hit rate: fraction found in the cache

– Miss rate = 1 - Hit Rate

• Hit time: time to access the cache

• Miss penalty: time to replace a block from lower level,

– access time: time to access lower level

– transfer time: time to transfer block

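$$\text{CPU time} = (\text{CPU execution cycles} + \text{Memory stall cycles}) \times \text{Cycle time}$$

$$\text{Memory stall cycles} = \frac{\text{Memory accesses}}{\text{Program}} \times \text{Miss rate} \times \text{Miss penalty} = \frac{\text{Instructions}}{\text{Program}} \times \frac{\text{Misses}}{\text{Instruction}} \times \text{Miss penalty}$$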

42

Cost of Misses

• Average memory access time =

Hit time + Miss rate * Miss penalty

• Note that speculative and multithreaded

processors may execute other instructions

during a miss

– Reduces performance impact of misses

43

Assume 75% instruction, 25% data access

44

Assume 75% instruction, 25% data access

• Which has a lower miss rate: a 16-KB instruction cache with a

16-KB data cache or a 32-KB unified cache?

– 16KB Instruction cache miss rate: 0.64%

– 16KB Data cache miss rate: 6.47%

– 32KB Unified cache miss rate: 1.99%

Miss rate of separate caches = (75% * 0.64%) + (25% * 6.47%)

= 2.10%

45

Assume 75% instruction, 25% data access

• What is the average memory access time for the separate instruction and data caches and for the unified cache, assuming write-through caches with a write buffer? Ignore stalls due to the write buffer. A hit takes 1 clock cycle and a miss penalty costs 50 clock cycles. A load or store hit on the unified cache takes an extra clock cycle.

– 16KB Instruction cache miss rate: 0.64%

– 16KB Data cache miss rate: 6.47%

– 32KB Unified cache miss rate: 1.99%

Average Access Time = % inst * (Hit time + instruction miss rate * miss penalty) + % data * (Hit time + data miss rate * miss penalty)

• Split

Average memory access time = 75% * (1 + 0.64% * 50) + 25% * (1 + 6.47% * 50)

= 75% * 1.32 + 25% * 4.235

= 2.05 cycles

• Unified

Average memory access time = 75% * (1 + 1.99% * 50) + 25% * (1 + 1 + 1.99% * 50)

= (75% * 1.995) + (25% * 2.995)

= 2.24 cycles
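The split-versus-unified comparison can be reproduced with a short sketch. The function names are hypothetical; the formula is the average access time expression used in the example, with miss rates given as fractions rather than percentages.

```c
#include <assert.h>

/* Average memory access time in clock cycles for one kind of access. */
double amat(double hit_time, double miss_rate, double miss_penalty)
{
    assert(miss_rate >= 0.0 && miss_rate <= 1.0);
    return hit_time + miss_rate * miss_penalty;
}

/* Weight instruction and data access times by the access mix. */
double amat_mixed(double inst_fraction, double inst_amat, double data_amat)
{
    assert(inst_fraction >= 0.0 && inst_fraction <= 1.0);
    return inst_fraction * inst_amat + (1.0 - inst_fraction) * data_amat;
}
```

For the split caches, `amat_mixed(0.75, amat(1, 0.0064, 50), amat(1, 0.0647, 50))` evaluates to about 2.05 cycles. For the unified cache, the data term uses a hit time of 2 to model the extra cycle, and the result is about 2.24 cycles, so the split design wins despite its slightly higher overall miss rate.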

46

Cost of Misses, CPU time

47

48

49

Improving Cache Performance

• Average memory-access time = Hit time + Miss rate x Miss penalty

• Improve performance by:

1. Reducing the miss rate,

2. Reducing the miss penalty, or

3. Reducing the time to hit in the cache.

50

Types of misses

• Compulsory

– Very first access to a block (cold-start miss)

• Capacity

– Cache cannot contain all blocks needed

• Conflict

– Too many blocks mapped onto the same

set

51

How do you solve

• Compulsory misses?

– Larger blocks, with a side effect!

• Capacity misses?

– Not many options: enlarge the cache, or otherwise face "thrashing", where the computer runs at the speed of the lower-level memory or slower!

• Conflict misses?

– Fully associative cache, at the cost of extra hardware, and it may slow the processor!

52

Basic cache optimizations:

– Larger block size

• Reduces compulsory misses

• Increases capacity and conflict misses, increases miss

penalty

– Larger total cache capacity to reduce miss rate

• Increases hit time, increases power consumption

– Higher associativity

• Reduces conflict misses

• Increases hit time, increases power consumption

– Higher number of cache levels

• Reduces overall memory access time

53

3. Reducing Misses via Victim Cache

• Add a small fully associative victim cache to place data discarded from

regular cache

• When data not found in cache, check victim cache

• 4-entry victim cache removed 20% to 95% of conflicts for a 4 KB direct

mapped data cache

• Get access time of direct mapped with reduced miss rate

54

4. Reducing Misses by HW Prefetching of

Instruction & Data

• E.g., Instruction Prefetching

– Alpha 21064 fetches 2 blocks on a miss

– Extra block placed in stream buffer

– On miss check stream buffer

– Norman Jouppi [1990 HP] 1 data stream buffer got 25% of misses from 4KB cache; 4 streams got 43%

• Works with data blocks too:

– Palacharla & Kessler [1994] for scientific programs for 8 streams got 50% to 70% of misses from 2 64KB, 4-way set associative caches

• Prefetching relies on extra memory bandwidth that can be used without penalty

55

5. Reducing Misses by

SW Prefetching Data

• Data Prefetch

– Load data into register (HP PA-RISC loads)

– Cache Prefetch: load into cache (MIPS IV, PowerPC, SPARC v. 9)

– Special prefetching instructions cannot cause faults; a form of speculative execution

• Issuing Prefetch Instructions takes time

– Is cost of prefetch issues < savings in reduced misses?

56

Multi-Level Caches

• Second level cache accessed on first level cache miss

• On first level miss, only pay the cost of accessing second level instead of main memory

• Different design considerations

– Primary Cache: Minimize hit time to yield shorter clock cycle or fewer pipeline stages

– Secondary Cache: Reduce miss rate to reduce penalty of main memory accesses

• Primary cache is generally smallest

– May use smaller block size and lower associativity to reduce the miss penalty

• Secondary cache is much larger

– May use larger block size and higher associativity

• Intel Core i7-980X Gulftown (cache sizes)

– 32KB Level 1 Data Cache per core

– 32KB Level 1 Instruction Cache per core

– 256KB Level 2 Cache per core

– 12MB Level 3 Cache (shared by all cores)

57

Multi-Level Cache Performance Example

• Suppose we have a processor with a base CPI of 1.0, assuming all references hit the primary cache, and a clock rate of 4 GHz. Assume a main memory access time of 100 ns, including all the miss handling. Suppose the miss rate per instruction at the primary cache is 2%.

• How much faster will the processor be if we add a secondary cache that has a 5 ns access time for either a hit or a miss, and is large enough to reduce the miss rate to main memory to 0.5%?

Main memory miss penalty = 100 ns * 4 GHz = 400 clock cycles

Total CPI = Base CPI + Memory-stall cycles per instruction

= 1.0 + 2% * 400

= 9.0

58

Multi-Level Cache Performance Example

Main Memory Miss Penalty = 400 clock cycles

1st Level Total CPI = 9.0

Secondary cache access = 5 ns * 4 GHz = 20 clock cycles

Total CPI = 1 + primary stalls per instruction + secondary stalls per instruction

= 1 + (2% * 20) + (0.5% * 400)

= 3.4

Speedup = 9.0/3.4

= 2.6
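The two CPI calculations can be sketched as follows. The function names are hypothetical; miss rates are per instruction and expressed as fractions, and penalties are converted from nanoseconds to cycles by multiplying by the clock rate in GHz.

```c
#include <assert.h>

/* Convert a latency in nanoseconds to clock cycles at the given clock rate. */
double ns_to_cycles(double ns, double clock_ghz)
{
    assert(ns >= 0.0 && clock_ghz > 0.0);
    return ns * clock_ghz;
}

/* CPI with only a primary cache in front of main memory. */
double cpi_one_level(double base_cpi, double l1_miss_rate, double mem_penalty)
{
    return base_cpi + l1_miss_rate * mem_penalty;
}

/* CPI with a secondary cache: every primary miss pays the L2 access,
   and the fraction that also misses in L2 pays the main memory penalty. */
double cpi_two_level(double base_cpi, double l1_miss_rate, double l2_penalty,
                     double global_miss_rate, double mem_penalty)
{
    return base_cpi + l1_miss_rate * l2_penalty + global_miss_rate * mem_penalty;
}
```

With a 4 GHz clock, `ns_to_cycles(100, 4)` is 400 and `ns_to_cycles(5, 4)` is 20, so the two functions return about 9.0 and 3.4, and their ratio gives the speedup of roughly 2.6.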

59

Interactions with Advanced CPUs

Out-of-order CPUs can execute

instructions during cache miss

Pending store stays in load/store unit

Dependent instructions wait in reservation

stations

Independent instructions continue

Effect of miss depends on program data

flow

Much harder to analyse

Use system simulation


7. Reducing Misses by Compiler

Optimizations

• Instructions

– Reorder procedures in memory so as to reduce misses

– Profiling to look at conflicts

– McFarling [1989] reduced cache misses by 75% on 8KB direct mapped cache with 4 byte blocks

• Data

– Merging Arrays: improve spatial locality by single array of compound elements vs. 2 arrays

– Loop Interchange: change nesting of loops to access data in order stored in memory

– Loop Fusion: Combine 2 independent loops that have same looping and some variables overlap

– Blocking: Improve temporal locality by accessing "blocks" of data repeatedly vs. going down whole columns or rows

61

Merging Arrays Example

• Problem: referencing multiple arrays in the same dimension, with the same index, at the same time can lead to conflict misses.

• Solution: Merge the independent arrays into a compound array.

    /* Before: two separate arrays */
    int val[SIZE];
    int key[SIZE];

    /* After: one array of structures, so val and key for the
       same index sit next to each other in memory */
    struct merge {
        int val;
        int key;
    };
    struct merge merged_array[SIZE];

62

Miss Rate Reduction Techniques: Compiler

Optimizations

– Loop Interchange
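As an illustration of loop interchange, here is a minimal sketch; the array sizes and function names are hypothetical. C stores two-dimensional arrays row by row, so the second version walks memory sequentially while the first strides through it `COLS` elements at a time.

```c
#include <assert.h>
#include <stddef.h>

enum { ROWS = 64, COLS = 128 };   /* hypothetical sizes */

/* Before: the inner loop walks down a column, so consecutive
   accesses are COLS ints apart in memory. */
void scale_column_order(int x[ROWS][COLS])
{
    assert(x != NULL);
    for (size_t j = 0; j < COLS; j++)
        for (size_t i = 0; i < ROWS; i++)
            x[i][j] = 2 * x[i][j];
}

/* After: the loops are interchanged, so the inner loop walks along
   a row and touches adjacent words in each cache block. */
void scale_row_order(int x[ROWS][COLS])
{
    assert(x != NULL);
    for (size_t i = 0; i < ROWS; i++)
        for (size_t j = 0; j < COLS; j++)
            x[i][j] = 2 * x[i][j];
}
```

Both functions compute the same result; only the order of memory accesses changes, which is why the transformation is safe here.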

63

Miss Rate Reduction Techniques: Compiler

Optimizations

– Loop Fusion
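As an illustration of loop fusion, here is a minimal sketch with hypothetical array names and size. The two loops in the first version have the same bounds and both read `a` and `c`, so fusing them lets the second statement reuse values while they are still in the cache.

```c
#include <assert.h>
#include <stddef.h>

enum { N = 256 };   /* hypothetical size */

/* Before: two passes; by the time the second loop runs, the start of
   a and c may already have been evicted from the cache. */
void unfused(const double b[N], const double c[N], double a[N], double d[N])
{
    assert(a != NULL && b != NULL && c != NULL && d != NULL);
    for (size_t i = 0; i < N; i++)
        a[i] = b[i] * c[i];
    for (size_t i = 0; i < N; i++)
        d[i] = a[i] + c[i];
}

/* After: one pass; a[i] and c[i] are reused immediately. */
void fused(const double b[N], const double c[N], double a[N], double d[N])
{
    assert(a != NULL && b != NULL && c != NULL && d != NULL);
    for (size_t i = 0; i < N; i++) {
        a[i] = b[i] * c[i];
        d[i] = a[i] + c[i];
    }
}
```

Fusion is only valid when no iteration of the second loop depends on a later iteration of the first, which holds in this sketch because each `d[i]` uses only `a[i]` and `c[i]`.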

64

Blocking

• Problem: When accessing multiple multi-dimensional

arrays (e.g., for matrix multiplication), capacity misses

occur if not all of the data can fit into the cache.

• Solution: Divide the matrix into smaller sub-matrices (or

blocks) that can fit within the cache

• The size of the block chosen depends on the size of the

cache

• Blocking can only be used for certain types of algorithms
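Blocking for matrix multiplication can be sketched as below. The matrix size `N`, the block size `B`, and the function names are hypothetical; in practice `B` would be chosen so that the sub-matrices in use fit in the cache. The sketch assumes `N` is a multiple of `B`.

```c
#include <assert.h>
#include <stddef.h>

enum { N = 64, B = 16 };   /* hypothetical sizes; N must be a multiple of B */
static_assert(N % B == 0, "N must be a multiple of B");

/* Unblocked: for each x[i][j], one full row of y and one full column
   of z are read, so large matrices keep falling out of the cache. */
void matmul_naive(double x[N][N], double y[N][N], double z[N][N])
{
    for (size_t i = 0; i < N; i++)
        for (size_t j = 0; j < N; j++) {
            double r = 0.0;
            for (size_t k = 0; k < N; k++)
                r += y[i][k] * z[k][j];
            x[i][j] = r;
        }
}

/* Blocked: work on a B x B sub-matrix of z at a time and accumulate
   partial sums into x, so the sub-matrix is reused while it is cached. */
void matmul_blocked(double x[N][N], double y[N][N], double z[N][N])
{
    for (size_t i = 0; i < N; i++)
        for (size_t j = 0; j < N; j++)
            x[i][j] = 0.0;

    for (size_t jj = 0; jj < N; jj += B)
        for (size_t kk = 0; kk < N; kk += B)
            for (size_t i = 0; i < N; i++)
                for (size_t j = jj; j < jj + B; j++) {
                    double r = 0.0;
                    for (size_t k = kk; k < kk + B; k++)
                        r += y[i][k] * z[k][j];
                    x[i][j] += r;
                }
}
```

The blocked version performs the same multiplications and additions, but in a different order, so floating-point results can differ in the last bits for general inputs.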

65

Summary of Compiler Optimizations to

Reduce Cache Misses

[Figure: performance improvement (scale 1 to 3) from merged arrays, loop interchange, loop fusion, and blocking on the benchmarks vpenta (nasa7), gmty (nasa7), tomcatv, btrix (nasa7), mxm (nasa7), spice, cholesky (nasa7), and compress]

66

Memory Technology

• Performance metrics

– Latency is concern of cache

– Bandwidth is concern of multiprocessors

and I/O

– Access time

• Time between read request and when desired word arrives

• DRAM used for main memory, SRAM

used for cache

67

Latches and Flip-flops

[Figure: D latch with inputs D and C and outputs Q and its complement]

68

Latches and Flip-flops

[Figure: D flip-flop built from two D latches, with inputs D and C and outputs Q and its complement]

69

Latches and Flip-flops

Latch: state changes whenever the inputs change and the clock is asserted

Flip-flop: state changes only on a clock edge (edge-triggered methodology)

70

SRAM

71

SRAM vs. DRAM

• Which one has a better memory density?

– Static RAM (SRAM): the value stored in a cell is kept on a pair of inverting gates

– Dynamic RAM (DRAM): the value kept in a cell is stored as a charge in a capacitor

– DRAMs use only a single transistor per bit of storage; by comparison, SRAMs require four to six transistors per bit

• Which one is faster?

– In DRAMs, the charge is stored on a capacitor, so it cannot be kept indefinitely and must periodically be refreshed (hence "dynamic")

– Every ~ 8 ms

– Each row can be refreshed simultaneously

– Must be re-written after being read

72

Memory Technology

• Amdahl:

– Memory capacity should grow linearly with processor

speed (followed this trend for about 20 years)

– Unfortunately, memory capacity and speed have not kept pace with processors

– Fourfold improvement every 3 years (originally)

– Doubled capacity from 2006-2010

73

Memory Optimizations

74

Memory Technology

• Some optimizations:

– Synchronous DRAM

• Added clock to DRAM interface

• Burst mode with critical word first

– Wider interfaces

• 4 bit transfer mode originally

• In 2010, up to 16-bit buses

– Double data rate (DDR)

• Transfer data on both rising and falling edge

75

Memory Optimizations

76

Memory Optimizations

• Graphics memory:

– Achieve 2-5 X bandwidth per DRAM vs.

DDR3

• Wider interfaces (32 vs. 16 bit)

• Higher clock rate

– Possible because they are attached via soldering instead of socketed Dual Inline Memory Modules (DIMM)

• Reducing power in SDRAMs:

– Lower voltage

– Low power mode (ignores clock, continues

to refresh)

77

Virtual Machines

• First developed in 1960s

• Regained popularity recently

– Need for isolation and security in modern

systems

– Failures in security and reliability of standard operating systems

– Sharing of single computer among many

unrelated users (datacenter, cloud)

– Dramatic increase in raw speed of processors

• Overhead of VMs now more acceptable

78

Virtual Machines

• Emulation methods that provide a standard

software interface

– IBM VM/370, VMware, ESX Server, Xen

• Create the illusion of having an entire computer to

yourself including a copy of the OS

• Allows different ISAs and operating systems to be

presented to user programs

– “System Virtual Machines”

– SVM software is called “virtual machine

monitor” or “hypervisor”

– Individual virtual machines run under the

monitor are called “guest VMs”

79

Impact of VMs on Virtual Memory

• Each guest OS maintains its own set of

page tables

– VMM adds a level of memory between

physical and virtual memory called “real

memory”

– VMM maintains shadow page table that

maps guest virtual addresses to physical

addresses

• Requires VMM to detect guest’s changes to its own page

table

• Occurs naturally if accessing the page table pointer is a

privileged operation

80

Chapter 5 – Virtual Memory

81

Review: Major Components of a Computer

82

Virtual Memory

• Use main memory as a “cache” for secondary (disk)

storage

– Managed jointly by CPU hardware and the

operating system (OS)

– Allows efficient and safe sharing of memory among

multiple programs

– Provides the ability to easily run programs larger

than the size of the physical memory

– Simplifies loading a program for execution by

providing ability for code relocation (i.e., the code

can be loaded anywhere in main memory)

83

Virtual Memory

• How does it work?

– Programs share main memory (DRAM)

– Each program gets a private virtual address space

containing its frequently used code and data

– Each virtual allocation is protected from other

programs

– Exploit locality of memory transactions

• CPU and OS translate virtual addresses to physical

addresses

– VM “block” is called a page

– VM translation “miss” is called a page fault

84

Virtual Memory

• Main memory can act as a cache for the secondary storage (disk)

• Advantages:

– Illusion of having more physical memory

– Program relocation

– Protection

85

Two Programs Sharing Physical Memory

• A program’s address space is divided into pages (all one fixed size)

or segments (variable sizes)

• The starting location of each page, in main memory and disk, is

contained in the program’s “page table”

Program 1

virtual address space

main memory

Program 2

virtual address space

86

Pages: Virtual Memory Blocks

• Page faults: the data is not in memory, retrieve it from

disk

– Takes millions of clock cycles, thus pages should be

fairly large (e.g., 4KB)

– Reducing page faults is important (LRU is worth the

price)

– Handled by the OS instead of hardware. Overhead is small compared to disk time

– Using write-through is too expensive so we use

write-back

87

Virtual Memory

• Page offset

– Determines the page size

• The number of bits for the virtual page number does not need to match the number of bits used for the physical page number

• Having a large number of virtual pages presents the illusion of an unbounded amount of virtual memory

[Figure: a 32-bit virtual address (bits 31–12: virtual page number; bits 11–0: page offset) is translated into a 30-bit physical address (bits 29–12: physical page number; bits 11–0: page offset)]

88

Page Tables (Fully Associative Search Time Impractical)

• Page Table

– Used for address translation

– Indexed with page number from Virtual Memory

– Provides Corresponding Physical Page number

– Each program has its own page table
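A page-table lookup can be sketched as follows, assuming 4KB pages (a 12-bit page offset) and a flat, single-level table. The struct layout and the names `pte` and `translate` are hypothetical; real page table entries carry more state, such as protection and dirty bits.

```c
#include <assert.h>
#include <stdbool.h>
#include <stddef.h>
#include <stdint.h>

#define PAGE_OFFSET_BITS 12u                    /* 4KB pages */
#define PAGE_SIZE        (1u << PAGE_OFFSET_BITS)

/* One page table entry: a valid bit and a physical page number. */
struct pte {
    bool     valid;
    uint32_t ppn;
};

/* Translate a virtual address using a flat page table.
   Returns true and stores the physical address on success;
   returns false to signal a page fault. */
bool translate(const struct pte *page_table, size_t num_pages,
               uint32_t vaddr, uint32_t *paddr)
{
    assert(page_table != NULL && paddr != NULL);
    uint32_t vpn    = vaddr >> PAGE_OFFSET_BITS;   /* indexes the table   */
    uint32_t offset = vaddr & (PAGE_SIZE - 1u);    /* copied through as-is */

    if (vpn >= num_pages || !page_table[vpn].valid)
        return false;

    *paddr = (page_table[vpn].ppn << PAGE_OFFSET_BITS) | offset;
    return true;
}
```

The page offset passes through unchanged; only the page number is looked up. Because the table is indexed directly by the virtual page number, no tag or search is needed, which is how a page table gets fully associative placement without a fully associative search.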

89

A Program’s State

• Page Table

• PC

• Registers

• Must be saved if another program (process) wishes to

use the processor

• Rather than reloading page table, processor uses a

page table register to point to the table a process wants

to use

90

Page Tables

91

Page Faults

• Replacement Policy

• Handle with Hardware or Software?

– Software: the overhead of handling a fault in software is small relative to the external memory (disk) access time

• LRU

– Costly to keep track of every page

– Mechanism?

• Keep a reference bit per page, set when the page is accessed

• OS periodically clears the reference bits

92

Making Address Translation Fast

• Page tables are in memory

• Memory access by a program: accesses can take twice as long

– Obtain physical address

– Get data

• Make use of locality of reference

– Temporal & Spatial (Words in a page)

• Solution

– Special cache

– Keep track of recently used translations

– Translation Lookaside Buffer (TLB)

• Translation cache

• Your piece of paper where you record the location of books you need from the library

93

Making Address Translation Fast

• A cache for address translations: translation lookaside buffer

94

TLBs and caches

[Figure: flowchart for processing a read or write through the TLB and cache]

• Virtual address → TLB access

• TLB hit?

– No: TLB miss exception

– Yes: physical address is available

• Write?

– No (read): try to read data from cache

• Cache hit? No: cache miss stall while read block. Yes: deliver data to the CPU

– Yes (write): write access bit on?

• No: write protection exception

• Yes: try to write data to cache

• Cache hit? No: cache miss stall while read block. Yes: write data into cache, update the dirty bit, and put the data and the address into the write buffer

95

TLBs and Caches

96

Modern Systems

•

97

Modern Systems

| | Intel Nehalem | AMD Barcelona |
|---|---|---|
| Address sizes | 48 bits (vir); 44 bits (phy) | 48 bits (vir); 48 bits (phy) |
| Page size | 4KB | 4KB |
| TLB organization | L1 TLB for instructions and L1 TLB for data per core; both are 4-way set assoc.; LRU | L1 TLB for instructions and L1 TLB for data per core; both are fully assoc.; LRU |
| | L1 ITLB has 128 entries, L1 DTLB has 64 entries | L1 ITLB and DTLB each have 48 entries |
| | L2 TLB (unified) is 4-way set assoc.; LRU | L2 TLB for instructions and L2 TLB for data per core; each are 4-way set assoc.; round robin LRU |
| | L2 TLB has 512 entries | Both L2 TLBs have 512 entries |
| | TLB misses handled in hardware | TLB misses handled in hardware |

98

Modern Systems

•

Things are getting complicated!

99

Some Issues

• Processor speeds continue to increase very fast, much faster than either DRAM or disk access times

[Figure: performance (log scale, 1 to 100,000) versus year for CPU and memory]

• Design challenge: dealing with this growing disparity

– Prefetching? 3rd level caches and more? Memory design?

100

“Power Wall + Memory Wall + ILP Wall = Brick Wall”

– David Patterson

101

Cache Control

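• How do we control our cache?

– Can create a finite-state machine (FSM)

[Figure: FSM controller. Combinational control logic takes inputs from the datapath and the current state from the state register, and produces the datapath control outputs and the next state]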

102

Simple FSM Controller

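• Idle

– On a valid CPU request, go to Compare Tag

• Compare Tag: if Valid && Hit, set Valid, set Tag, and if Write, set Dirty

– On a cache hit, mark the cache ready and return to Idle

– On a cache miss with a clean old block, go to Allocate

– On a cache miss with a dirty old block, go to Write-Back

• Allocate: read the new block from memory

– While memory is not ready, stay in Allocate

– When memory is ready, return to Compare Tag

• Write-Back: write the old block to memory

– While memory is not ready, stay in Write-Back

– When memory is ready, go to Allocate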

103

Cache Coherency

• Multicore processors generally share a common physical address space (clusters can as well)

• Caching shared data can create memory problems

• Different cores can have different values for the same memory location. This creates a cache coherence problem

[Figure: Processor Cores 0 to 3, each with its own cache (Cache 0 to Cache 3), all connected to Main Memory (DRAM)]

104

Cache Coherency

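• Coherence

– Defines what values can be returned by a read

• Consistency

– Determines when a written value will be returned by a read

| Time step | Event | Cache contents for CPU A | Cache contents for CPU B | Memory contents for location X |
|-----------|-------|--------------------------|--------------------------|--------------------------------|
| 0 | | | | 0 |
| 1 | CPU A reads X | 0 | | 0 |
| 2 | CPU B reads X | 0 | 0 | 0 |
| 3 | CPU A stores 1 into X | 1 | 0 | 1 |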

105

Enforcing Coherency

• Snooping

– Every cache containing a data block from memory also has the sharing status of the data block

– All caches accessible through a broadcast medium (bus or network)

– Each cache controller monitors the medium to determine whether it has a copy of data that is requested by other processors

• Write Invalidate Protocol

– Processor has exclusive access to data before writing

– Invalidate copies in other caches on a write

– No other readable or writable copies should exist when a write occurs

– Writes are serialized

106

Write Invalidation on a Snooping Bus

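| Processor activity | Bus activity | Contents of CPU A's cache | Contents of CPU B's cache | Contents of memory location X |
|--------------------|--------------|---------------------------|---------------------------|-------------------------------|
| | | | | 0 |
| CPU A reads X | Cache miss for X | 0 | | 0 |
| CPU B reads X | Cache miss for X | 0 | 0 | 0 |
| CPU A writes 1 to X | Invalidation for X | 1 | Invalid | 0 |
| CPU B reads X | Cache miss for X | 1 | 1 | 1 |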

107

Coherency Performance Issues

• Most protocols exchange full blocks between processors

– Only one word from a block may be written by a processor

– Coherency bandwidth demands may increase by

exchanging full blocks

• False Sharing can occur

– When two unrelated variables are located in the same cache

block, the full block is exchanged between processors even

though the processors are accessing different variables

– Can be reduced by compilers and programmers through

carefully laying out data
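One common way to lay out data against false sharing is to pad per-core variables so that each falls in its own cache block. The sketch below assumes a 64-byte block and an object that starts on a block boundary; the block size, struct names, and helper are hypothetical, and the real block size is hardware-dependent.

```c
#include <assert.h>
#include <stddef.h>

#define CACHE_BLOCK_BYTES 64   /* assumed block size, not a universal constant */

static_assert(sizeof(long) < CACHE_BLOCK_BYTES, "padding size must be positive");

/* Unpadded: a and b are adjacent, so they will usually share a block
   and a write to one invalidates the other core's copy of both. */
struct counters_shared {
    long a;   /* updated by one core     */
    long b;   /* updated by another core */
};

/* Padded: b starts one full block after a. */
struct counters_padded {
    long a;
    char pad[CACHE_BLOCK_BYTES - sizeof(long)];
    long b;
};

/* Nonzero when two byte offsets within a block-aligned object
   fall in the same cache block. */
int same_block(size_t off1, size_t off2)
{
    return off1 / CACHE_BLOCK_BYTES == off2 / CACHE_BLOCK_BYTES;
}
```

The padding trades memory for independence: the padded struct is larger, but writes to `a` and `b` no longer force the block to move between caches.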

108

Further Perspective with CUDA Enabled GPUs

• Constant cache optimized for temporal locality

• Texture cache optimized for spatial locality

• Coalescing can be exploited in the Global Memory (similar to spatial locality in a cache)

[Figure: a multiprocessor with a constant cache and a texture cache, backed by constant memory, texture memory, and global memory in DRAM]

109

Further Perspective with CUDA Enabled GPUs

[Figure: global memory accesses by the first warp in a thread block. The first half-warp, threads (0,0) through (15,0), accesses global memory elements [0] through [15]; the second half-warp, threads (0,1) through (15,1), accesses elements [16] through [31]]

110