EC 485: Time Series Analysis in a Nutshell


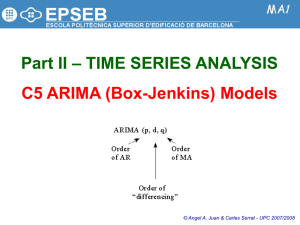

Data Preparation:
1) Plot the data and examine them for stationarity.
2) Examine the ACF for stationarity.
3) If the series is not stationary, take first differences.
4) If the variance appears non-constant, take logarithms before first differencing.
5) Examine the ACF after these transformations to determine whether the series is now stationary.

Model Identification and Estimation:
1) Examine the ACF and PACF of your (now) stationary series to get some ideas about which ARIMA(p,d,q) models to estimate.
2) Estimate these models.
3) Examine the parameter estimates, the SBC statistic, and the test of white noise for the residuals.

Forecasting:
1) Use the best model to construct forecasts.
2) Graph your forecasts against actual values.
3) Calculate the Mean Squared Error for the forecasts.

Data Preparation:
1) Plot the data and examine them. Do a visual inspection to determine whether your series is nonstationary.
2) Examine the Autocorrelation Function (ACF) for stationarity. The ACF for a nonstationary series will show large autocorrelations that diminish only very slowly at large lags. (At this stage you can ignore the partial autocorrelations, and you can always ignore what SAS calls the inverse autocorrelations.)
3) If the series is not stationary, take first differences. SAS will do this automatically in the IDENTIFY VAR=y(1) statement, where the variable to be "identified" is y and the 1 refers to first differencing.
4) If the variance appears non-constant, take the logarithm before first differencing. The log is created in a DATA step before the IDENTIFY statement:

    DATA one; SET one;      /* assumes the series y is in data set ONE */
      ly = LOG(y);
    RUN;
    PROC ARIMA DATA=one;
      IDENTIFY VAR=ly(1);   /* first difference of log(y) */
    RUN;

5) Examine the ACF after these transformations to determine whether the series is now stationary.

In this presentation, a variable measuring capacity utilization for the U.S. economy is modeled. The data are monthly from 1967:1 – 2004:03. It will be used as an example of how to carry out the three steps outlined above.
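The sample sizes that appear in the SAS output follow from simple month arithmetic: 1967:1 through 2004:03 is 447 monthly observations, and holding back the final six months (2003:10 – 2004:03) for out-of-sample forecasting leaves the 441 observations that PROC ARIMA reports. A minimal Python sketch of that split, for illustration only; `month_range` and `HOLDOUT` are hypothetical names, not part of SAS:

```python
def month_range(start, end):
    """All (year, month) pairs from start to end, inclusive."""
    year, month = start
    months = []
    while (year, month) <= end:
        months.append((year, month))
        # advance one month, rolling December over into January
        year, month = (year + 1, 1) if month == 12 else (year, month + 1)
    return months

full_sample = month_range((1967, 1), (2004, 3))
HOLDOUT = 6  # hypothetical name: months kept back for out-of-sample forecasts
estimation, holdout = full_sample[:-HOLDOUT], full_sample[-HOLDOUT:]

print(len(full_sample), len(estimation))          # 447 441
print(estimation[-1], holdout[0], holdout[-1])    # (2003, 9) (2003, 10) (2004, 3)
```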
We will remove the last 6 observations (2003:10 – 2004:03) so that we can construct out-of-sample forecasts and compare our models' ability to forecast.

Figure: Capacity Utilization 1967:1 – 2004:03 (in levels)

This plot of the raw data indicates non-stationarity, although there does not appear to be a strong trend.

The ARIMA Procedure

Name of Variable = cu; Mean of Working Series = 81.61519; Standard Deviation = 3.764998; Number of Observations = 441.

Autocorrelations:

| Lag | Covariance | Correlation |
|---|---|---|
| 0 | 14.175211 | 1.00000 |
| 1 | 13.884523 | 0.97949 |
| 2 | 13.485201 | 0.95132 |
| 3 | 13.007277 | 0.91761 |
| 4 | 12.434837 | 0.87722 |
| 5 | 11.820231 | 0.83387 |
| 6 | 11.191805 | 0.78953 |
| 7 | 10.561770 | 0.74509 |
| 8 | 9.900866 | 0.69846 |
| 9 | 9.215675 | 0.65013 |
| 10 | 8.479804 | 0.59821 |
| 11 | 7.713914 | 0.54418 |
| 12 | 6.928244 | 0.48876 |
| 13 | 6.160440 | 0.43459 |
| 14 | 5.422593 | 0.38254 |
| 15 | 4.717018 | 0.33277 |
| 16 | 4.051825 | 0.28584 |
| 17 | 3.390746 | 0.23920 |
| 18 | 2.751886 | 0.19413 |

This ACF is produced by SAS using the code:

    PROC ARIMA;
      IDENTIFY VAR=cu;
    RUN;

SAS also prints these autocorrelations as a bar chart, along with an inverse autocorrelation plot, which you can ignore, and a partial autocorrelation plot, which we will use in the modeling stage. This ACF clearly indicates a non-stationary series: the autocorrelations diminish only very slowly.

Figure: First differences of Capacity Utilization 1967:1 – 2004:03

This graph of first differences appears to be stationary.
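The transformations in steps 3 to 5 can also be sketched outside SAS. The hypothetical helpers below take (optionally log) first differences and compute sample autocorrelations $r_k = c_k / c_0$, where $c_k$ is the lag-k autocovariance with divisor $n$. We assume this matches the convention behind the Covariance and Correlation columns SAS prints; for example, 13.884523 / 14.175211 rounds to the printed 0.97949. The toy inputs are illustrative, not the capacity-utilization data.

```python
import math

def first_difference(series, take_log=False):
    """First differences of a series; with take_log=True, differences of ln(series)."""
    values = [math.log(v) for v in series] if take_log else list(series)
    return [curr - prev for prev, curr in zip(values, values[1:])]

def sample_acf(series, max_lag):
    """Sample autocorrelations r_0..r_max_lag using autocovariances with divisor n."""
    n = len(series)
    mean = sum(series) / n
    dev = [v - mean for v in series]

    def autocov(k):
        return sum(dev[t] * dev[t + k] for t in range(n - k)) / n

    c0 = autocov(0)
    return [autocov(k) / c0 for k in range(max_lag + 1)]

# Toy data (hypothetical)
print(first_difference([1.0, 3.0, 6.0]))                       # [2.0, 3.0]
print(first_difference([100.0, 110.0, 121.0], take_log=True))  # two log-changes of about 0.0953
print(sample_acf([1.0, 2.0, 3.0, 4.0, 5.0], 1))                # [1.0, 0.4]
# Lag-1 correlation from the printed covariances of cu in levels
print(round(13.884523 / 14.175211, 5))                         # 0.97949
```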
Name of Variable = cu; Period(s) of Differencing = 1; Mean of Working Series = -0.03295; Standard Deviation = 0.584287; Number of Observations = 440; Observation(s) eliminated by differencing = 1.

Autocorrelations:

| Lag | Covariance | Correlation |
|---|---|---|
| 0 | 0.341391 | 1.00000 |
| 1 | 0.126532 | 0.37064 |
| 2 | 0.093756 | 0.27463 |
| 3 | 0.079004 | 0.23142 |
| 4 | 0.062319 | 0.18254 |
| 5 | 0.021558 | 0.06315 |
| 6 | 0.020578 | 0.06028 |
| 7 | 0.018008 | 0.05275 |
| 8 | 0.029300 | 0.08583 |
| 9 | 0.040026 | 0.11724 |
| 10 | 0.020880 | 0.06116 |
| 11 | 0.010021 | 0.02935 |
| 12 | -0.0071559 | -0.02096 |
| 13 | -0.026090 | -0.07642 |
| 14 | -0.031699 | -0.09285 |
| 15 | -0.032960 | -0.09654 |
| 16 | -0.023544 | -0.06897 |
| 17 | -0.021426 | -0.06276 |
| 18 | -0.0084132 | -0.02464 |

This ACF was produced in SAS using the code:

    PROC ARIMA;
      IDENTIFY VAR=cu(1);
    RUN;

where the (1) tells SAS to use first differences. This ACF shows the autocorrelations diminishing fairly quickly, so we conclude that the first difference of the capacity utilization rate is stationary.

In addition to the autocorrelation function (ACF) and partial autocorrelation function (PACF), SAS prints an autocorrelation check for white noise. Specifically, it prints the Ljung-Box statistics (labeled Chi-Square in the output) and their p-values. If the p-value is very small, as it is below, then we can reject the null hypothesis that all of the autocorrelations up to the stated lag are jointly zero.
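The statistic SAS labels Chi-Square is the Ljung-Box $Q = T(T+2)\sum_{k=1}^{m} r_k^2/(T-k)$, compared with a chi-square distribution. The sketch below recomputes Q at lag 6 from the first six autocorrelations of the differenced series (T = 440); it should come out close to the 136.45 that SAS reports for lag 6. The p-value helper uses the standard closed-form chi-square upper tail for integer degrees of freedom. Both function names are illustrative, and the result can differ from SAS in the last digit because the inputs are rounded.

```python
import math

def ljung_box(autocorrs, n):
    """Ljung-Box Q from autocorrelations r_1..r_m of a series with n observations."""
    return n * (n + 2) * sum(r * r / (n - k) for k, r in enumerate(autocorrs, start=1))

def chi2_sf(x, df):
    """P(X > x) for a chi-square variable with integer df >= 1 (closed-form series)."""
    half = x / 2.0
    if df % 2 == 0:
        term = total = 1.0
        for i in range(1, df // 2):
            term *= half / i                  # (x/2)^i / i!
            total += term
        return math.exp(-half) * total
    total = math.erfc(math.sqrt(half))        # the df = 1 tail
    term = math.sqrt(2.0 * x / math.pi) * math.exp(-half)
    for r in range(1, (df - 1) // 2 + 1):
        total += term
        term *= x / (2 * r + 1)               # next term of the odd-df series
    return total

r = [0.37064, 0.27463, 0.23142, 0.18254, 0.06315, 0.06028]  # lags 1-6, differenced cu
q = ljung_box(r, n=440)
print(round(q, 2), chi2_sf(q, df=6))  # about 136.45, with a p-value far below 0.0001
```

The same `chi2_sf` helper also reproduces p-values SAS prints for residual checks, e.g. a Chi-Square of 4.71 on 4 degrees of freedom gives roughly 0.318.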
For our capacity utilization data (first differences), the hypotheses at lag 6 are:

$H_0: \rho_1 = \rho_2 = \rho_3 = \rho_4 = \rho_5 = \rho_6 = 0$ (the data series is white noise)
$H_1$: at least one $\rho_k$ is non-zero

$\chi^2 = 136.45$ with a p-value of less than 0.0001, so we easily reject $H_0$.

Autocorrelation Check for White Noise:

| To Lag | Chi-Square | DF | Pr > ChiSq | Autocorrelations |
|---|---|---|---|---|
| 6 | 136.45 | 6 | <.0001 | 0.371, 0.275, 0.231, 0.183, 0.063, 0.060 |
| 12 | 149.50 | 12 | <.0001 | 0.053, 0.086, 0.117, 0.061, 0.029, -0.021 |
| 18 | 164.64 | 18 | <.0001 | -0.076, -0.093, -0.097, -0.069, -0.063, -0.025 |
| 24 | 221.29 | 24 | <.0001 | -0.059, -0.064, -0.118, -0.114, -0.145, -0.257 |

A check for white noise on your stationary series is important, because if your series is white noise there is nothing to model, and thus no point in carrying out any estimation or forecasting. We see here that the first difference of capacity utilization is not white noise, so we proceed to the modeling and estimation stage. Note: we can ignore the autocorrelation check for the data before differencing, because that series is non-stationary.

Model Identification and Estimation:
1) Examine the Autocorrelation Function (ACF) and Partial Autocorrelation Function (PACF) of your (now) stationary series to get some ideas about which ARIMA(p,d,q) models to estimate.

The "d" in ARIMA(p,d,q) stands for the number of times the data have been differenced to render them stationary. This was already determined in the previous section.

The "p" in ARIMA(p,d,q) measures the order of the autoregressive component. To get an idea of which orders to consider, examine the partial autocorrelation function. If the time series has an autoregressive order of 1, called AR(1), then we should see only the first partial autocorrelation coefficient as significant. If it is an AR(2), then we should see only the first and second partial autocorrelation coefficients as significant. (Note that they could be positive and/or negative; what matters is the statistical significance.)
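The link between the ACF and the PACF can be made concrete with the Durbin-Levinson recursion, which computes the partial autocorrelations $\phi_{kk}$ from the autocorrelations. The sketch below (plain Python; `pacf_from_acf` is an illustrative name) first applies it to the theoretical ACF of an AR(2) process, using for illustration the coefficients 0.31208 and 0.15929 that are estimated for the ARIMA(2,1,0) model later in these notes: the PACF is non-zero at lags 1 and 2 and zero afterwards. Applied to the first three sample autocorrelations of the differenced series, it reproduces the first three partial autocorrelations SAS prints (0.37064, 0.15912, 0.10330) up to rounding, which is consistent with (though not proof of) SAS computing them this way.

```python
def pacf_from_acf(rho, max_lag):
    """Partial autocorrelations phi_kk for k = 1..max_lag via Durbin-Levinson.

    rho[k] is the autocorrelation at lag k, with rho[0] = 1.
    """
    pacf, phi = [], []  # phi holds phi_{k-1,1}, ..., phi_{k-1,k-1}
    for k in range(1, max_lag + 1):
        num = rho[k] - sum(phi[j - 1] * rho[k - j] for j in range(1, k))
        den = 1.0 - sum(phi[j - 1] * rho[j] for j in range(1, k))
        phi_kk = num / den
        # update: phi_{k,j} = phi_{k-1,j} - phi_kk * phi_{k-1,k-j}
        phi = [phi[j - 1] - phi_kk * phi[k - j - 1] for j in range(1, k)] + [phi_kk]
        pacf.append(phi_kk)
    return pacf

# Theoretical AR(2) ACF: rho_1 = phi1 / (1 - phi2), rho_k = phi1*rho_{k-1} + phi2*rho_{k-2}
phi1, phi2 = 0.31208, 0.15929
rho = [1.0, phi1 / (1 - phi2)]
for k in range(2, 9):
    rho.append(phi1 * rho[k - 1] + phi2 * rho[k - 2])
print([round(v, 5) for v in pacf_from_acf(rho, 8)])
# lag 1 equals rho_1, lag 2 equals 0.15929, lags 3-8 are zero up to floating-point error

# Sample ACF of the differenced capacity utilization series, lags 0-3
sample = [1.0, 0.37064, 0.27463, 0.23142]
print([round(v, 5) for v in pacf_from_acf(sample, 3)])  # close to [0.37064, 0.15912, 0.1033]
```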
Generally, the PACF will have significant correlations up to lag p and will quickly drop to near-zero values after lag p.

Here is the PACF for the first-differenced capacity utilization series. Notice that the first two (maybe three) partial autocorrelations are statistically significant. This suggests an AR(2) or AR(3) model. There is a statistically significant partial autocorrelation at lag 24 (not printed here), but this can be ignored: even when the true value is zero, about 5% of sample (partial) autocorrelations will lie more than two standard errors away from zero.

Partial Autocorrelations:

| Lag | Correlation |
|---|---|
| 1 | 0.37064 |
| 2 | 0.15912 |
| 3 | 0.10330 |
| 4 | 0.04939 |
| 5 | -0.07279 |
| 6 | 0.00433 |
| 7 | 0.01435 |
| 8 | 0.06815 |
| 9 | 0.08346 |
| 10 | -0.02903 |
| 11 | -0.03996 |
| 12 | -0.07539 |
| 13 | -0.08379 |
| 14 | -0.03419 |
| 15 | -0.02101 |
| 16 | 0.01950 |
| 17 | -0.00768 |
| 18 | 0.01681 |

Model Identification and Estimation (cont'd):

The "q" measures the order of the moving average component. To get an idea of which orders to consider, we examine the autocorrelation function. If the time series is a moving average of order 1, called an MA(1), we should see only one significant autocorrelation coefficient, at lag 1. This is because an MA(1) process has a memory of only one period. If the time series is an MA(2), we should see only two significant autocorrelation coefficients, at lags 1 and 2, because an MA(2) process has a memory of only two periods. Generally, for a time series that is an MA(q), the autocorrelation function will have significant correlations up to lag q and will quickly drop to near-zero values after lag q.

For the capacity utilization time series, we see that the ACF decays, but only for the first 4 lags. Then it appears to drop off to zero abruptly.
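The "more than two standard errors" rule can be applied mechanically to the printed values for the differenced series (T = 440). The sketch below uses the usual $1/\sqrt{T}$ standard error for the PACF and Bartlett's approximation for the ACF, whose standard error at lag k grows with $r_1^2, \dots, r_{k-1}^2$. We assume Bartlett's formula is what SAS uses for the limits in its ACF plot; the function names are illustrative. With these inputs it flags lags 1 to 4 in the ACF and lags 1 to 3 in the PACF, in line with the MA(4) and AR(2)/AR(3) reading in the text.

```python
import math

def bartlett_limits(acf, n):
    """Approximate two-standard-error limits for r_1..r_m (Bartlett's formula)."""
    limits, cum = [], 0.0
    for r in acf:
        limits.append(2.0 * math.sqrt((1.0 + 2.0 * cum) / n))  # uses r_1..r_{k-1}
        cum += r * r
    return limits

def significant_lags(values, limits):
    """Lags (starting at 1) whose absolute value exceeds the corresponding limit."""
    return [k for k, (v, lim) in enumerate(zip(values, limits), start=1) if abs(v) > lim]

n = 440
acf = [0.37064, 0.27463, 0.23142, 0.18254, 0.06315, 0.06028, 0.05275, 0.08583, 0.11724,
       0.06116, 0.02935, -0.02096, -0.07642, -0.09285, -0.09654, -0.06897, -0.06276, -0.02464]
pacf = [0.37064, 0.15912, 0.10330, 0.04939, -0.07279, 0.00433, 0.01435, 0.06815, 0.08346,
        -0.02903, -0.03996, -0.07539, -0.08379, -0.03419, -0.02101, 0.01950, -0.00768, 0.01681]

acf_sig = significant_lags(acf, bartlett_limits(acf, n))
pacf_sig = significant_lags(pacf, [2.0 / math.sqrt(n)] * len(pacf))
print("ACF beyond 2 s.e.: ", acf_sig)    # [1, 2, 3, 4]
print("PACF beyond 2 s.e.:", pacf_sig)   # [1, 2, 3]
```

Note that with a constant $2/\sqrt{T} \approx 0.095$ limit, the ACF at lags 9 and 15 would also be flagged; the Bartlett limits widen with the lag and leave them inside.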
Because the ACF cuts off after lag 4, an MA(4) might be considered. Our initial guess is therefore ARIMA(2,1,4), where the 1 tells us that the data have been first-differenced to render them stationary.

2) Estimate the Models: Estimating the models in SAS is fairly straightforward. Go back to PROC ARIMA and add ESTIMATE statements. Here we will estimate four models: ARIMA(1,1,0), ARIMA(1,1,1), ARIMA(2,1,0), and ARIMA(2,1,4). Although we believe the last of these will be the best, it is instructive to also estimate three simpler models: a simple AR(1) on the differenced series, which is the ARIMA(1,1,0); a model with an AR(1) and an MA(1) term on the differenced series, which is the ARIMA(1,1,1); and a pure AR(2) on the differenced series, which is the ARIMA(2,1,0).

    PROC ARIMA;
      IDENTIFY VAR=cu(1);   /* d = 1 for all models */
      ESTIMATE p=1;         /* ARIMA(1,1,0) */
      ESTIMATE p=1 q=1;     /* ARIMA(1,1,1) */
      ESTIMATE p=2;         /* ARIMA(2,1,0) */
      ESTIMATE p=2 q=4;     /* ARIMA(2,1,4) */
    RUN;

3) Examine the parameter estimates, the SBC statistic, and the test of white noise for the residuals. Below you will see the results of estimating the 4 models discussed above. We are looking at the statistical significance of the parameter estimates. We also want to compare measures of overall fit, for which we will use the SBC statistic. It is based on the sum of squared residuals from estimating the model, and it balances the reduction in degrees of freedom against the reduction in the sum of squared residuals from adding more variables (lags of the time series). Other things equal, a lower sum of squared residuals means a better fit, but each added parameter also increases the penalty term. SAS calculates the SBC as:

$\mathrm{SBC} = -2\ln(L) + k\ln(T)$

where $k = p + q + 1$ is the number of parameters estimated, $T$ is the sample size, and $L$ is the likelihood, which essentially depends on the sum of squared residuals. The model with the lowest SBC is considered "best". SBC can be positive or negative. NOTE: SAS's formula differs slightly from the one in the textbook.
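The SBC ranking can be checked from the AIC and SBC values PROC ARIMA prints for the four models estimated below. Assuming SAS's AIC is $-2\ln(L) + 2k$, with the same $L$ and $k$ as in the SBC formula, each SBC should equal $\mathrm{AIC} + k(\ln T - 2)$ with $k = p + q + 1$ and $T = 440$. The sketch rebuilds SBC that way and picks the smallest; `sbc_from_aic` is an illustrative name.

```python
import math

T = 440  # number of residuals in each model

# (p, q, AIC, SBC) as printed by PROC ARIMA for each model
printed = {
    "ARIMA(1,1,0)": (1, 0, 714.6766, 722.8502),
    "ARIMA(1,1,1)": (1, 1, 700.9892, 713.2496),
    "ARIMA(2,1,0)": (2, 0, 705.3888, 717.6491),
    "ARIMA(2,1,4)": (2, 4, 702.8553, 731.4627),
}

def sbc_from_aic(aic, k, t):
    """Assumes AIC = -2 ln L + 2k, so SBC = -2 ln L + k ln T = AIC + k (ln T - 2)."""
    neg2_loglik = aic - 2 * k
    return neg2_loglik + k * math.log(t)

rebuilt = {}
for name, (p, q, aic, sbc) in printed.items():
    k = p + q + 1  # AR terms + MA terms + the mean
    rebuilt[name] = sbc_from_aic(aic, k, T)
    print(f"{name}: k = {k}, rebuilt SBC = {rebuilt[name]:.4f}, printed SBC = {sbc:.4f}")

best = min(rebuilt, key=rebuilt.get)
print("lowest SBC:", best)  # ARIMA(1,1,1)
```

The rebuilt values agree with the printed SBCs to about 0.001, which supports $k = p + q + 1$ (the mean counts as a parameter).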
This is the ARIMA(1,1,0) model, where $y_t$ denotes the first difference of capacity utilization:

$y_t = \beta_0 + \beta_1 y_{t-1} + \varepsilon_t$

Conditional Least Squares Estimation:

| Parameter | Estimate | Standard Error | t Value | Approx. p-value | Lag |
|---|---|---|---|---|---|
| MU | -0.03528 | 0.04115 | -0.86 | 0.3918 | 0 |
| AR1,1 | 0.37113 | 0.04440 | 8.36 | <.0001 | 1 |

Constant Estimate = -0.02219; Variance Estimate = 0.295778; Std Error Estimate = 0.543854; AIC = 714.6766; SBC = 722.8502; Number of Residuals = 440. (AIC and SBC do not include log determinant.)

AR1,1 is the estimate of $\beta_1$. MU is the estimated mean of the differenced series; the intercept $\beta_0$ is the Constant Estimate, $\beta_0 = \mu(1 - \beta_1) = -0.03528 \times (1 - 0.37113) \approx -0.02219$.

Autocorrelation Check of Residuals:

| To Lag | Chi-Square | DF | Pr > ChiSq | Autocorrelations |
|---|---|---|---|---|
| 6 | 17.95 | 5 | 0.0030 | -0.059, 0.103, 0.109, 0.114, -0.021, 0.029 |
| 12 | 22.89 | 11 | 0.0183 | 0.006, 0.040, 0.092, 0.017, 0.022, -0.008 |
| 18 | 27.95 | 17 | 0.0455 | -0.052, -0.048, -0.058, -0.022, -0.043, 0.020 |
| 24 | 50.98 | 23 | 0.0007 | -0.039, -0.008, -0.079, -0.037, -0.032, -0.198 |
| 30 | 62.85 | 29 | 0.0003 | -0.071, -0.045, -0.087, -0.026, -0.056, 0.082 |
| 36 | 68.07 | 35 | 0.0007 | -0.046, 0.056, -0.042, -0.027, -0.041, -0.040 |

Things to notice: the parameter estimate on the AR(1) term, $\beta_1$, is statistically significant, which is good. However, the autocorrelation check of the residuals tells us that the residuals from this ARIMA(1,1,0) are not white noise, with a p-value of 0.0030 at lag 6. We have left important information in the residuals that could be used. We need a better model.

This is the ARIMA(1,1,1) model:

$y_t = \beta_0 + \beta_1 y_{t-1} + \varepsilon_t + \lambda_1 \varepsilon_{t-1}$

Conditional Least Squares Estimation:

| Parameter | Estimate | Standard Error | t Value | Approx. p-value | Lag |
|---|---|---|---|---|---|
| MU | -0.04037 | 0.05586 | -0.72 | 0.4703 | 0 |
| MA1,1 | 0.46161 | 0.09410 | 4.91 | <.0001 | 1 |
| AR1,1 | 0.75599 | 0.06951 | 10.88 | <.0001 | 1 |

Constant Estimate = -0.00985; Variance Estimate = 0.286071; Std Error Estimate = 0.534856; AIC = 700.9892; SBC = 713.2496; Number of Residuals = 440. (AIC and SBC do not include log determinant.)

As before, MU is the mean of the differenced series and the Constant Estimate, $\mu(1 - \beta_1) \approx -0.00985$, is $\beta_0$; AR1,1 estimates $\beta_1$ and MA1,1 estimates the MA(1) coefficient. SAS writes the moving-average part as $(1 - \theta_1 B)\varepsilon_t$, so in the notation above $\lambda_1 = -\theta_1 \approx -0.46161$.

Autocorrelation Check of Residuals:

| To Lag | Chi-Square | DF | Pr > ChiSq | Autocorrelations |
|---|---|---|---|---|
| 6 | 4.71 | 4 | 0.3187 | -0.001, -0.012, 0.031, 0.045, -0.079, -0.034 |
| 12 | 10.53 | 10 | 0.3953 | -0.029, 0.032, 0.097, 0.031, 0.023, -0.012 |
| 18 | 16.75 | 16 | 0.4021 | -0.062, -0.061, -0.059, -0.016, -0.017, 0.045 |
| 24 | 35.15 | 22 | 0.0374 | -0.002, 0.014, -0.048, -0.008, -0.024, -0.190 |
| 30 | 45.51 | 28 | 0.0196 | -0.072, -0.028, -0.066, -0.017, -0.022, 0.104 |
| 36 | 49.89 | 34 | 0.0386 | -0.003, 0.070, -0.023, -0.025, -0.038, -0.040 |

Things to notice: the parameter estimates of the AR(1) term $\beta_1$ and of the MA(1) term $\lambda_1$ are statistically significant. Also, the autocorrelation check of the residuals tells us that the residuals from this ARIMA(1,1,1) are white noise: the Chi-Square statistics up to a lag of 18 have p-values greater than 10%, so we cannot reject the null hypothesis that the autocorrelations up to lag 18 are jointly zero (p-value = 0.4021). Also, the SBC statistic (713.2496) is smaller than for the ARIMA(1,1,0) (722.8502). So we might be done …

This is the ARIMA(2,1,0) model:

$y_t = \beta_0 + \beta_1 y_{t-1} + \beta_2 y_{t-2} + \varepsilon_t$

Conditional Least Squares Estimation:

| Parameter | Estimate | Standard Error | t Value | Approx. p-value | Lag |
|---|---|---|---|---|---|
| MU | -0.03783 | 0.04829 | -0.78 | 0.4338 | 0 |
| AR1,1 | 0.31208 | 0.04726 | 6.60 | <.0001 | 1 |
| AR1,2 | 0.15929 | 0.04726 | 3.37 | 0.0008 | 2 |

Constant Estimate = -0.02; Variance Estimate = 0.288946; Std Error Estimate = 0.537537; AIC = 705.3888; SBC = 717.6491; Number of Residuals = 440. (AIC and SBC do not include log determinant.)
Autocorrelation Check of Residuals:

| To Lag | Chi-Square | DF | Pr > ChiSq | Autocorrelations |
|---|---|---|---|---|
| 6 | 8.67 | 4 | 0.0700 | 0.017, -0.045, 0.085, 0.089, -0.045, -0.007 |
| 12 | 13.96 | 10 | 0.1747 | -0.010, 0.038, 0.096, 0.023, 0.019, -0.007 |
| 18 | 18.73 | 16 | 0.2832 | -0.054, -0.053, -0.052, -0.020, -0.025, 0.030 |
| 24 | 38.35 | 22 | 0.0167 | -0.016, -0.004, -0.063, -0.009, -0.022, -0.193 |
| 30 | 47.43 | 28 | 0.0123 | -0.067, -0.021, -0.070, -0.031, -0.034, 0.085 |
| 36 | 51.02 | 34 | 0.0305 | -0.019, 0.053, -0.029, -0.030, -0.033, -0.037 |

Although this model has statistically significant coefficient estimates, the check of the residuals up to lag 6 has a p-value of 0.0700, so the null hypothesis of white noise is rejected at the 10% level (though not at 5%), casting some doubt on this model. We won't place much meaning in the Chi-Square statistics for lags beyond 18. The SBC statistic (717.6491) is larger than for the ARIMA(1,1,1) (713.2496), which is not good.

This is the ARIMA(2,1,4) model:

$y_t = \beta_0 + \beta_1 y_{t-1} + \beta_2 y_{t-2} + \varepsilon_t + \lambda_1 \varepsilon_{t-1} + \lambda_2 \varepsilon_{t-2} + \lambda_3 \varepsilon_{t-3} + \lambda_4 \varepsilon_{t-4}$

Conditional Least Squares Estimation:

| Parameter | Estimate | Standard Error | t Value | Approx. p-value | Lag |
|---|---|---|---|---|---|
| MU | -0.03613 | 0.04697 | -0.77 | 0.4423 | 0 |
| MA1,1 | 0.48913 | 0.29916 | 1.64 | 0.1028 | 1 |
| MA1,2 | -0.43438 | 0.13474 | -3.22 | 0.0014 | 2 |
| MA1,3 | -0.17179 | 0.05634 | -3.05 | 0.0024 | 3 |
| MA1,4 | -0.11146 | 0.08044 | -1.39 | 0.1666 | 4 |
| AR1,1 | 0.78020 | 0.29788 | 2.62 | 0.0091 | 1 |
| AR1,2 | -0.44336 | 0.19274 | -2.30 | 0.0219 | 2 |

Constant Estimate = -0.02396; Variance Estimate = 0.284717; Std Error Estimate = 0.533589; AIC = 702.8553; SBC = 731.4627; Number of Residuals = 440. (AIC and SBC do not include log determinant.)

Autocorrelation Check of Residuals:

| To Lag | Chi-Square | DF | Pr > ChiSq | Autocorrelations |
|---|---|---|---|---|
| 6 | 0.00 | 0 | <.0001 | -0.000, 0.003, 0.005, 0.020, -0.009, 0.068 |
| 12 | 5.66 | 6 | 0.4624 | 0.028, 0.032, 0.072, 0.008, 0.022, -0.002 |
| 18 | 9.94 | 12 | 0.6212 | -0.049, -0.050, -0.054, -0.016, -0.024, 0.026 |
| 24 | 27.26 | 18 | 0.0743 | -0.029, -0.003, -0.063, -0.022, -0.022, -0.177 |
| 30 | 35.68 | 24 | 0.0590 | -0.058, -0.030, -0.070, -0.025, -0.048, 0.076 |
| 36 | 40.12 | 30 | 0.1025 | -0.027, 0.056, -0.034, -0.033, -0.040, -0.040 |

Two of the parameter estimates (MA1,1 and MA1,4) are not statistically significant, telling us the model is not "parsimonious", and the SBC statistic (731.4627) is larger than the SBC for the ARIMA(1,1,1) model. Ignore the first Chi-Square statistic: the degrees of freedom equal the number of lags minus p + q, and this model has p + q = 6 ARMA parameters, leaving 0 degrees of freedom at lag 6. The Chi-Square statistics at 12 and 18 lags are statistically insignificant, indicating white noise.

Forecasts:

    proc arima;
      identify var=cu(1);
      estimate p=1;   /* any of the four models goes here */
      forecast lead=6 id=date interval=month out=fore1;
    run;

SAS automatically converts the forecasts from first differences back into levels. We calculate the Mean Squared Error for the 6 out-of-sample forecasts. Graphs appear on the next four slides. We find that the fourth model produces forecasts with the smallest MSE.

Use the actual values for CU and the forecasted values below to generate a mean squared prediction error for each model estimated. The formula is

$\mathrm{MSE} = \frac{1}{6}\sum_{t=1}^{6} (\mathit{fcu}_t - \mathit{cu}_t)^2$

where fcu is a forecast and cu is the actual value. In the table, cu is missing (.) for the six held-out months and cu2 holds the actual values; fcu1 to fcu4 and sd1 to sd4 are the forecasts and their standard errors from the four models, in the order estimated above.

| Obs | date | cu | cu2 | fcu1 | sd1 | fcu2 | sd2 | fcu3 | sd3 | fcu4 | sd4 |
|---|---|---|---|---|---|---|---|---|---|---|---|
| 441 | SEP03 | 74.9 | 74.9 | 74.4778 | 0.54385 | 74.5294 | 0.53486 | 74.5596 | 0.53754 | 74.6211 | 0.53359 |
| 442 | OCT03 | . | 75.0 | 75.0263 | 0.54385 | 75.0215 | 0.53486 | 75.0048 | 0.53754 | 75.1540 | 0.53359 |
| 443 | NOV03 | . | 75.7 | 75.0509 | 0.92295 | 75.1034 | 0.87485 | 75.0813 | 0.88678 | 75.3396 | 0.87138 |
| 444 | DEC03 | . | 75.8 | 75.0379 | 1.23500 | 75.1555 | 1.19316 | 75.1018 | 1.22371 | 75.3883 | 1.18650 |
| 445 | JAN04 | . | 76.2 | 75.0109 | 1.49834 | 75.1851 | 1.49534 | 75.1004 | 1.52680 | 75.3511 | 1.50072 |
| 446 | FEB04 | . | 76.7 | 74.9787 | 1.72697 | 75.1976 | 1.78205 | 75.0833 | 1.80183 | 75.2766 | 1.81196 |
| 447 | MAR04 | . | 76.5 | 74.9445 | 1.93039 | 75.1972 | 2.05370 | 75.0577 | 2.05184 | 75.2110 | 2.08938 |

Granger Causality (Predictability) Test

$Y_t = \beta_0 + \beta_1 Y_{t-1} + \beta_2 Y_{t-2} + \dots + \beta_p Y_{t-p} + \alpha_1 X_{t-1} + \alpha_2 X_{t-2} + \dots + \alpha_p X_{t-p} + u_t$

We can test whether another variable X helps to predict our series $Y_t$. This can be done through a simple F-test of $H_0: \alpha_1 = \alpha_2 = \dots = \alpha_p = 0$. If these are jointly zero, then the variable X has no "predictive content" for variable Y. See textbook, Chapter 14.

Here Y is dcu and X is dinf, the change in annualized inflation, with p = 4 lags of each:

    data one; set one;                        /* assumes cu and cpi are already in data set ONE */
      dcu    = dif(cu);                       /* assumed definition: first difference of cu */
      lcpi   = lag(cpi);
      inf    = 12*100*(log(cpi) - log(lcpi)); /* annualized monthly inflation, in percent */
      dinf   = inf - lag(inf);
      ldinf  = lag(dinf);  l2dinf = lag2(dinf);  l3dinf = lag3(dinf);  l4dinf = lag4(dinf);
      ldcu   = lag(dcu);   l2dcu  = lag2(dcu);   l3dcu  = lag3(dcu);   l4dcu  = lag4(dcu);
    run;
    proc autoreg data=one;
      model dcu = ldcu l2dcu l3dcu l4dcu ldinf l2dinf l3dinf l4dinf;
      test ldinf=0, l2dinf=0, l3dinf=0, l4dinf=0;
    run;

The AUTOREG Procedure. Dependent Variable: dcu. Ordinary Least Squares Estimates:

SSE = 116.390576; DFE = 432; MSE = 0.26942; Root MSE = 0.51906; SBC = 718.852042; AIC = 682.050639; Regress R-Square = 0.2090; Total R-Square = 0.2090; Durbin-Watson = 1.9847.

| Variable | DF | Estimate | Standard Error | t Value | Approx. p-value |
|---|---|---|---|---|---|
| Intercept | 1 | 0.1455 | 0.0459 | 3.17 | 0.0016 |
| ldcu | 1 | 0.2585 | 0.0481 | 5.37 | <.0001 |
| l2dcu | 1 | 0.1118 | 0.0493 | 2.27 | 0.0240 |
| l3dcu | 1 | 0.0771 | 0.0486 | 1.59 | 0.1137 |
| l4dcu | 1 | 0.0534 | 0.0468 | 1.14 | 0.2545 |
| ldinf | 1 | 0.006012 | 0.009440 | 0.64 | 0.5246 |
| l2dinf | 1 | -0.0218 | 0.0101 | -2.15 | 0.0318 |
| l3dinf | 1 | -0.006926 | 0.0102 | -0.68 | 0.4959 |
| l4dinf | 1 | -0.0103 | 0.009566 | -1.08 | 0.2802 |

Test 1:

| Source | DF | Mean Square | F Value | Pr > F |
|---|---|---|---|---|
| Numerator | 4 | 1.295144 | 4.81 | 0.0008 |
| Denominator | 432 | 0.269423 | | |

Since Pr > F = 0.0008, we reject the null hypothesis that the coefficients on the four lags of dinf are jointly zero: dinf has predictive content for dcu.
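The out-of-sample comparison from the forecasting step can be reproduced from the forecast table. The plain-Python sketch below computes $\frac{1}{6}\sum (\mathit{fcu}_t - \mathit{cu}_t)^2$ over OCT03 to MAR04 for each model. It assumes fcu1 to fcu4 correspond to the four models in the order they were estimated; their sd values in the SEP03 row match each model's Std Error Estimate. It confirms that the ARIMA(2,1,4) forecasts have the smallest MSE (about 0.79), even though the ARIMA(1,1,1) has the lowest SBC.

```python
actual = [75.0, 75.7, 75.8, 76.2, 76.7, 76.5]  # cu2, OCT03-MAR04

# fcu1-fcu4, assumed to be the four models in the order they were estimated
forecasts = {
    "ARIMA(1,1,0)": [75.0263, 75.0509, 75.0379, 75.0109, 74.9787, 74.9445],
    "ARIMA(1,1,1)": [75.0215, 75.1034, 75.1555, 75.1851, 75.1976, 75.1972],
    "ARIMA(2,1,0)": [75.0048, 75.0813, 75.1018, 75.1004, 75.0833, 75.0577],
    "ARIMA(2,1,4)": [75.1540, 75.3396, 75.3883, 75.3511, 75.2766, 75.2110],
}

def mse(forecast, observed):
    """Mean squared forecast error: (1/h) * sum of (forecast - actual)^2."""
    return sum((f - a) ** 2 for f, a in zip(forecast, observed)) / len(observed)

mses = {name: mse(f, actual) for name, f in forecasts.items()}
for name, value in sorted(mses.items(), key=lambda item: item[1]):
    print(f"{name}: MSE = {value:.4f}")

best = min(mses, key=mses.get)
print("smallest MSE:", best)  # ARIMA(2,1,4)
```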