PPT - NDTAC


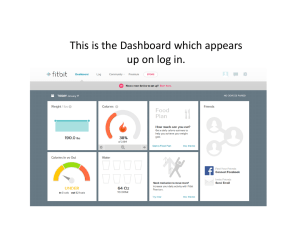

Reporting & Evaluation Workshop
Lauren Amos, Liann Seiter, and Dory Seidel

Workshop Objectives
• Learn how to use a data dashboard to support your Title I, Part D, reporting and evaluation responsibilities.

Agenda: Part I
1. Presentation: Data Quality Overview and Common Issues
2. Dashboard Demonstration: Exploring National CSPR Data With the NDTAC Dashboard
3. Activity: Use the Dashboard To Conduct a CSPR Data Quality Review of Your State Data
4. Small-Group Discussion

Agenda: Part II
1. Dashboard Demonstration: GPRA Measures Used To Inform Federal Decisionmaking
2. Activity: Use the Dashboard To Drive Decisionmaking With Your State Data
3. Whole-Group Discussion

Presentation: Data Quality Overview and Common Issues

Why Is Data Quality Important?
You need to trust your data because they inform:
• Data-driven decisionmaking
• Technical assistance (TA) needs
• Subgrantee monitoring
• Application reviews
• Program evaluation

What Makes “High-Quality Data”?
• Accurate
• Consistent
• Unbiased
• Understandable
• Transparent

Individual Programs: Where Data Quality Begins
Individual Programs → SA or LEA → SEA → ED
• If data quality is not a priority at the local level, problems become harder to identify as the data are rolled up; they can become hidden.
• If data issues are recognized late in the process, it is more difficult (and less cost-effective) to find where the issues are and correct them in time.

Role of the Part D Coordinator
Ultimately, coordinators cannot “make” the data high quality, but they can implement systems that make high-quality data likely:
• Understand the collection process.
• Provide TA in advance.
• Develop relationships.
• Develop multilevel verification processes.
• Track problems over time.
• Use the data.

Method #1: Use the Data!
The fastest way to motivate programs to improve data quality is to use the data they provide. The best way to increase data quality is to promote data use at the local level.

Should you use data that have data quality problems?
Yes! You can use these data to:
• Become familiar with the data and readily identify problems.
• Know when the data are ready to be used or how they can be used.
• Incentivize and motivate others.

Method #2: Incentivize and Motivate
1. Know who is involved in the process and their roles.
2. Identify what is important to you and your data coordinators.
3. Select motivational strategies that align with your priorities (and ideally encourage teamwork):
• Reward: Provide bonuses or incentives for good data quality (individual or team level).
• Control: Set goals, but allow freedom in how to get there.
• Belong: Communicate vision and goals at all levels.
• Compare: Publish rankings and make data visible.
• Learn: Provide training and tools on data quality and data usage.
• Punish: Withhold funding (from individuals or from everyone).

Method #3: Prioritize
Consider targeting only:
• Top problem areas among all subgrantees
• Most crucial data for the State
• Struggling programs

Method #4: Know the Data Quality Pitfalls
Recognize and respond proactively to the things that can hinder progress:
• Changes to indicators
• Changes to submission processes
• Staff turnover
• Funding availability

Method #5: Renew, Reuse, Recycle
• Develop materials up front.
• Look to existing resources and make them your own.
Where to look:
• NDTAC
• ED
• Your ND community
• The Web

NDTAC: Tools for Proactive TA
• Consolidated State Performance Report (CSPR) Guide
  – Sample CSPR tables
  – In-depth instructions
  – Data quality checklists
• Data Collection List
• CSPR Tools and Other Resources
• EDFacts File Specifications

Tools for Reviewing Data and Motivating Providers
• EDFacts summary reports
  – Review the quality of data entered via EDFacts
• ED Data Express
  – Compare data at the SA and LEA levels to the nation or similar States
• Data Dashboard
  – Conduct data quality reviews or performance evaluations
  – Make data-driven decisions

Common CSPR Data Quality Issues
• Academic and vocational outcomes are over the age-eligible ranges.
• The sum of students by race, age, or gender does not equal the unduplicated count of students.
• In reading or math, the number of long-term students testing below grade level exceeds the number of long-term students.
• In reading or math, the number of students demonstrating results (sum of rows 3–7) does not equal the number of students with complete pre- and posttests (row 2).
• Average length of stay is 0 or more than 365 days.

Dashboard Demonstration: Exploring National CSPR Data With the NDTAC Dashboard

Activity: Use the Dashboard To Conduct a CSPR Data Quality Review of Your State Data

Activity: CSPR Data Quality Review

Strategies for Improving Data Quality
• Technical assistance: newsletters, webinars, phone calls, one-pagers
• Monitoring: on-site verification (e.g., document reviews, demonstrations of the data collection process or system)
• Interim data collection and quality control (QC) reviews
• Data collection templates
• Annual CSPR data show
• Other ideas?

Whole-Group Discussion: Data Quality
• Is your current data collection tool sound?
• Do your subgrantees know how to use your data collection tool?
  – How do you know?
  – What TA do you provide to support their use of the tool?
• Do your facilities have a data collection system, process, or tool that allows them to track student-level data?
• How do your current subgrantee monitoring activities support CSPR data quality?

Dashboard Demonstration: GPRA Measures Used To Inform Federal Decisionmaking (or the Federal Perspective on State Performance)

Activity: GPRA Measures

Guiding Questions
1. GPRA Indicators
» In what areas is your State underperforming relative to the nation?
» What contextual factors may explain why?
» What questions do you have about your State’s performance in this area?
» Is this cause for concern?
» If so, what are your next steps?

Activity: Use the Dashboard To Drive Decisionmaking With Your State Data

Activity: Decisionmaking

Guiding Questions
2. Program Performance
» Are certain demographic groups overrepresented in a particular program? If so, are those programs taking measures to serve the unique needs of those populations?
» Are all students being served equally well, or are certain program types outperforming (or underperforming) their peers?
» Which program type(s) appear to be effective? Why might they appear to outperform the other program types (e.g., higher student performance upon entry, more highly qualified teachers, smaller student-teacher ratios, lower teacher attrition)?
» What questions do you have about your State’s performance in this area?
» Are there any causes for concern?
» If so, what are your next steps?

Whole-Group Discussion
1. Did these activities produce any surprises? Any major takeaways?
2. Are your subgrantees meeting the needs of your State’s youth who are neglected and delinquent?
3. Are youth needs being consistently met across program types?
4. What did the dashboard activity suggest about how well Title I, Part D (TIPD) funds are being used in your State?
5. Are the funds you’ve allocated to your subgrantees reflected in program outcomes?

Whole-Group Discussion (cont.)
1. "It's Complicated": What's your Facebook status with your EDFacts Coordinator?
  • What might you do to forge better ties with your State data person?
2. What are your next steps?
  • What might you do differently moving forward to improve data quality and data-driven decisionmaking in your State?
3. How can NDTAC support you in these next steps?
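
The Common CSPR Data Quality Issues slide in Part I lists consistency rules that a coordinator could check automatically before submission. What follows is a minimal Python sketch under stated assumptions: the ProgramReport record, its field names, and the check_report function are hypothetical. They are not taken from the CSPR Guide or the EDFacts file specifications, and the example numbers are made up. The sketch covers four of the five listed issues; the age-eligibility check is left out because it depends on CSPR table details these slides do not show.

```python
from dataclasses import dataclass

SUBJECTS = ("reading", "math")


@dataclass
class ProgramReport:
    """Hypothetical per-program CSPR summary. Field names are illustrative,
    not taken from the CSPR or EDFacts file specifications."""
    name: str
    unduplicated_count: int
    by_race: dict[str, int]
    by_age: dict[str, int]
    by_gender: dict[str, int]
    long_term_students: int
    long_term_below_grade: dict[str, int]      # keyed by subject
    complete_pre_post: dict[str, int]          # "row 2", keyed by subject
    results_rows_3_to_7: dict[str, list[int]]  # "rows 3-7", keyed by subject
    avg_length_of_stay_days: float


def check_report(r: ProgramReport) -> list[str]:
    """Return human-readable flags for four of the common CSPR issues."""
    issues: list[str] = []

    # 1. Each demographic breakdown should sum to the unduplicated count.
    for label, breakdown in (("race", r.by_race), ("age", r.by_age),
                             ("gender", r.by_gender)):
        total = sum(breakdown.values())
        if total != r.unduplicated_count:
            issues.append(f"{r.name}: sum by {label} ({total}) does not equal "
                          f"unduplicated count ({r.unduplicated_count})")

    for subject in SUBJECTS:
        # 2. Long-term students below grade level cannot exceed long-term students.
        below = r.long_term_below_grade.get(subject, 0)
        if below > r.long_term_students:
            issues.append(f"{r.name}: {subject} long-term students below grade "
                          f"level ({below}) exceed long-term students "
                          f"({r.long_term_students})")

        # 3. Rows 3-7 should sum to the row 2 count of complete pre-/posttests.
        shown = sum(r.results_rows_3_to_7.get(subject, []))
        tested = r.complete_pre_post.get(subject, 0)
        if shown != tested:
            issues.append(f"{r.name}: {subject} rows 3-7 sum ({shown}) does not "
                          f"equal row 2 ({tested})")

    # 4. Average length of stay should be above 0 and at most 365 days.
    if not 0 < r.avg_length_of_stay_days <= 365:
        issues.append(f"{r.name}: average length of stay "
                      f"({r.avg_length_of_stay_days} days) is out of range")

    return issues


# Illustrative record with deliberate errors (made-up numbers, not real data).
example = ProgramReport(
    name="Facility A",
    unduplicated_count=100,
    by_race={"group_1": 60, "group_2": 40},
    by_age={"13-15": 30, "16-18": 65},                  # sums to 95, not 100
    by_gender={"female": 20, "male": 80},
    long_term_students=50,
    long_term_below_grade={"reading": 55, "math": 40},  # reading 55 > 50
    complete_pre_post={"reading": 45, "math": 45},
    results_rows_3_to_7={"reading": [5, 10, 20, 5, 5],
                         "math": [5, 10, 20, 5, 0]},    # math sums to 40, not 45
    avg_length_of_stay_days=0,                          # 0 days is out of range
)

for issue in check_report(example):
    print(issue)
```

Running each subgrantee's submission through checks like these, and keeping the flags from year to year, is one way to act on the advice to develop multilevel verification processes and track problems over time.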