Pattern Classification

All materials in these slides were taken from Pattern Classification (2nd ed) by R. O. Duda, P. E. Hart and D. G. Stork, John Wiley & Sons, 2000, with the permission of the authors and the publisher

Chapter 4 (part 2): Non-Parametric Classification (Sections 4.3-4.5)

• Parzen Window (cont.)

• kn-Nearest-Neighbor Estimation

• The Nearest-Neighbor Rule

Pattern Classification, Chapter 4 (Part 2)

Parzen Windows (cont.)

• Parzen Windows – Probabilistic Neural Networks

• Compute a Parzen estimate based on n patterns

• Patterns with d features sampled from c classes

• The input unit is connected to n patterns

[Figure: the d input units x1, x2, …, xd are connected to the n pattern units p1, p2, …, pn through modifiable (trained) weights W11, …, Wdn]


[Figure: the n pattern units p1, p2, …, pk, …, pn are connected to the c category units 1, 2, …, c. Activations (emission of nonlinear functions)]


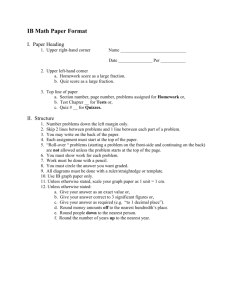

• Training the network

• Algorithm

1. Normalize each pattern x of the training set to unit length

2. Place the first training pattern on the input units

3. Set the weights linking the input units and the first pattern unit such that w1 = x1

4. Make a single connection from the first pattern unit to the category unit corresponding to the known class of that pattern

5. Repeat the process for all remaining training patterns, setting the weights such that wk = xk (k = 1, 2, …, n)

We finally obtain the following network


• Testing the network

• Algorithm

1. Normalize the test pattern x and place it at the input units

2. Each pattern unit computes the inner product to yield the net activation

$$net_k = w_k^t x$$

and emits a nonlinear function

$$f(net_k) = \exp\left[\frac{net_k - 1}{\sigma^2}\right]$$

3. Each output unit sums the contributions from all pattern units connected to it

$$P_n(x \mid \omega_j) = \sum_{i=1}^{n} \varphi_i \propto P(\omega_j \mid x)$$

4. Classify by selecting the maximum value of Pn(x | ωj) (j = 1, …, c)
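The training and testing steps above can be sketched in a few lines of Python. This is a minimal illustration, not the authors' implementation: the function names, the default window width `sigma=0.5`, and the toy data are all assumptions made for the example.

```python
import math

def normalize(x):
    """Scale x to unit Euclidean length (step 1 of training and testing)."""
    norm = math.sqrt(sum(v * v for v in x))
    return [v / norm for v in x]

def train_pnn(patterns, labels):
    """Training: pattern unit k stores w_k = x_k and a link to its category."""
    return [(normalize(x), label) for x, label in zip(patterns, labels)]

def classify_pnn(units, x, sigma=0.5):
    """Testing: sum exp((net_k - 1) / sigma^2) per category, pick the largest."""
    x = normalize(x)
    scores = {}
    for w, label in units:
        net = sum(wi * xi for wi, xi in zip(w, x))   # net_k = w_k^t x
        scores[label] = scores.get(label, 0.0) + math.exp((net - 1.0) / sigma ** 2)
    return max(scores, key=scores.get), scores

# Hypothetical toy data: two categories in two dimensions.
units = train_pnn([(1.0, 0.1), (0.9, 0.2), (0.1, 1.0), (0.2, 0.8)], [1, 1, 2, 2])
label, scores = classify_pnn(units, (0.8, 0.3))
print(label, scores)
```

Because training only copies normalized patterns into the weights, it needs a single pass over the data; all the computation happens at test time.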


• kn-nearest-neighbor estimation

• Goal: a solution for the problem of the unknown “best” window function

• Let the cell volume be a function of the training data

• Center a cell about x and let it grow until it captures kn samples (kn = f(n))

• These kn samples are called the kn nearest-neighbors of x

Two possibilities can occur:

• Density is high near x; therefore the cell will be small, which provides good resolution

• Density is low; therefore the cell will grow large and stop only when it reaches higher-density regions

We can obtain a family of estimates by setting kn = k1√n and choosing different values for k1


Illustration

For kn = n = 1, the estimate becomes:

$$p_n(x) = \frac{k_n}{n V_n} = \frac{1}{V_1} = \frac{1}{2|x - x_1|}$$
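As a rough sketch of the same estimate for general kn in one dimension, the cell can be taken as the smallest interval centered at x that reaches the kn-th nearest sample. The function name and the handling of a zero-length cell are assumptions for illustration.

```python
def knn_density_1d(x, samples, k):
    """k_n-nearest-neighbor density estimate p_n(x) = k / (n * V_n) in 1-D.

    V_n is the length of the smallest interval centered at x that reaches
    the k-th nearest sample, i.e. twice the distance to that sample.
    """
    n = len(samples)
    if not 1 <= k <= n:
        raise ValueError("k must satisfy 1 <= k <= n")
    distances = sorted(abs(x - s) for s in samples)
    volume = 2.0 * distances[k - 1]
    if volume == 0.0:
        return float("inf")   # x coincides with a sample: the estimate diverges
    return k / (n * volume)

# k_n = n = 1 reproduces 1 / (2 |x - x_1|).
print(knn_density_1d(0.0, [0.25], k=1))   # 2.0
```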


• Estimation of a-posteriori probabilities

• Goal: estimate P(ωi | x) from a set of n labeled samples

• Let’s place a cell of volume V around x and capture k samples

• If ki samples amongst the k turn out to be labeled ωi, then:

$$p_n(x, \omega_i) = \frac{k_i}{n V}$$

An estimate for Pn(ωi | x) is:

$$P_n(\omega_i \mid x) = \frac{p_n(x, \omega_i)}{\sum_{j=1}^{c} p_n(x, \omega_j)} = \frac{k_i}{k}$$


• ki/k is the fraction of the samples within the cell that are labeled ωi

• For minimum error rate, the most frequently represented category within the cell is selected

• If k is large and the cell sufficiently small, the performance will approach the best possible
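The ki/k estimate can be sketched as follows, assuming the cell is defined by the k samples nearest to x under Euclidean distance. The function name and the toy samples are hypothetical.

```python
from collections import Counter
import math

def knn_posteriors(x, samples, labels, k):
    """Estimate P_n(omega_i | x) = k_i / k from the k samples nearest to x."""
    order = sorted(range(len(samples)), key=lambda i: math.dist(x, samples[i]))
    counts = Counter(labels[i] for i in order[:k])
    return {label: count / k for label, count in counts.items()}

# Hypothetical labeled samples in two dimensions.
samples = [(0.0, 0.0), (0.1, 0.0), (0.0, 0.2), (1.0, 1.0), (1.2, 1.0)]
labels = ["a", "a", "b", "b", "b"]
post = knn_posteriors((0.05, 0.05), samples, labels, k=3)
decision = max(post, key=post.get)   # most frequently represented category
print(post, decision)
```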


• The nearest-neighbor rule

• Let Dn = {x1, x2, …, xn} be a set of n labeled prototypes

• Let x’ ∈ Dn be the closest prototype to a test point x; then the nearest-neighbor rule for classifying x is to assign it the label associated with x’

• The nearest-neighbor rule leads to an error rate greater than the minimum possible: the Bayes rate

• If the number of prototypes is large (unlimited), the error rate of the nearest-neighbor classifier is never worse than twice the Bayes rate (it can be demonstrated!)

• If n → ∞, it is always possible to find x’ sufficiently close so that: P(ωi | x’) ≅ P(ωi | x)


Example:

x = (0.68, 0.60)^t

Prototypes      Labels    A-posteriori probabilities estimated
(0.50, 0.30)    ω2        0.25
                ω3        0.75 = P(ωm | x)
(0.70, 0.65)    ω5        0.70
                ω6        0.30

Decision: ω5 is the label assigned to x
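The decision in this example can be checked with a short sketch, assuming Euclidean distance and writing the class labels as plain integers (ω5 becomes 5). The variable and function names are illustrative only.

```python
import math

def nearest_neighbor(x, prototypes):
    """Return the index of the prototype closest to x (Euclidean distance)."""
    return min(range(len(prototypes)), key=lambda i: math.dist(x, prototypes[i]))

x = (0.68, 0.60)
prototypes = [(0.50, 0.30), (0.70, 0.65)]
# Estimated posteriors attached to each prototype, keyed by class index.
posteriors = [{2: 0.25, 3: 0.75}, {5: 0.70, 6: 0.30}]

i = nearest_neighbor(x, prototypes)
label = max(posteriors[i], key=posteriors[i].get)
print(prototypes[i], label)
```

The nearest prototype is (0.70, 0.65), and the most probable label attached to it is 5.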


• If P(ωm | x) ≅ 1, then the nearest-neighbor selection is almost always the same as the Bayes selection


• The k-nearest-neighbor rule

• Goal: classify x by assigning it the label most frequently represented among the k nearest samples, using a voting scheme


Example:

k = 3 (odd value) and x = (0.10, 0.25)^t

Prototypes      Labels
(0.15, 0.35)    ω1
(0.10, 0.28)    ω2
(0.09, 0.30)    ω5
(0.12, 0.20)    ω2

Closest vectors to x (Euclidean distance) with their labels are:

{(0.10, 0.28, ω2); (0.09, 0.30, ω5); (0.12, 0.20, ω2)}

One voting scheme assigns the label ω2 to x since ω2 is the most frequently represented
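A minimal sketch of the majority-vote rule on this example, assuming Euclidean distance and integer class labels. The function name is hypothetical, and ties between equally frequent labels are not handled specially.

```python
from collections import Counter
import math

def knn_classify(x, prototypes, labels, k):
    """Return the majority label among the k prototypes nearest to x."""
    order = sorted(range(len(prototypes)), key=lambda i: math.dist(x, prototypes[i]))
    neighbors = [(prototypes[i], labels[i]) for i in order[:k]]
    votes = Counter(label for _, label in neighbors)
    return votes.most_common(1)[0][0], neighbors

prototypes = [(0.15, 0.35), (0.10, 0.28), (0.09, 0.30), (0.12, 0.20)]
labels = [1, 2, 5, 2]
label, neighbors = knn_classify((0.10, 0.25), prototypes, labels, k=3)
print(label, neighbors)
```

An odd k avoids ties in two-class problems; with more than two classes a tie-breaking rule would still be needed.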


4.6 Metrics and NN Classification

• Metrics = “distance” between patterns

• Four properties:

• Non-negativity: D(a, b) ≥ 0

• Reflexivity: D(a, b) = 0 iff a = b

• Symmetry: D(a, b) = D(b, a)

• Triangle inequality: D(a, b) + D(b, c) ≥ D(a, c)

• Minkowski metric

$$L_k(a, b) = \left( \sum_{i=1}^{d} |a_i - b_i|^k \right)^{1/k}$$

• Euclidean distance: k = 2

• Manhattan distance: k = 1
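The Minkowski metric translates directly into code. The sketch below is illustrative; the function name and the input checks are assumptions.

```python
def minkowski(a, b, k):
    """L_k(a, b) = (sum_i |a_i - b_i|^k)^(1/k); k=1 Manhattan, k=2 Euclidean."""
    if len(a) != len(b):
        raise ValueError("a and b must have the same dimension")
    if k < 1:
        raise ValueError("k must be >= 1 for L_k to satisfy the triangle inequality")
    return sum(abs(ai - bi) ** k for ai, bi in zip(a, b)) ** (1.0 / k)

a, b = (0.0, 0.0), (3.0, 4.0)
print(minkowski(a, b, 1))   # 7.0
print(minkowski(a, b, 2))   # 5.0
```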

[Figure: Distance 1.0 from (0,0,0) for different k]

[Figure: Scaling the coordinates]

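Tanimoto metric

• Used in taxonomy

$$D_{Tanimoto}(S_1, S_2) = \frac{n_1 + n_2 - 2 n_{12}}{n_1 + n_2 - n_{12}}$$

where n1 and n2 are the numbers of elements in S1 and S2, and n12 is the number of elements in both sets

• Identical

• D(S1, S2) = 0

• Overlap 50%

• D(S1, S2) = (1 + 1 - 2*0.5)/(1 + 1 - 0.5) = 1/1.5 ≈ 0.667

• No intersection

• D(S1, S2) = 1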
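For finite sets the three cases can be reproduced with a small sketch. The function name is hypothetical, and returning 0 for two empty sets is an assumption, since the formula is undefined there.

```python
def tanimoto_distance(s1, s2):
    """(n1 + n2 - 2*n12) / (n1 + n2 - n12), with n12 the size of the intersection."""
    s1, s2 = set(s1), set(s2)
    n1, n2, n12 = len(s1), len(s2), len(s1 & s2)
    if n1 + n2 - n12 == 0:
        return 0.0   # assumption: two empty sets are treated as identical
    return (n1 + n2 - 2 * n12) / (n1 + n2 - n12)

print(tanimoto_distance({1, 2}, {1, 2}))   # identical sets
print(tanimoto_distance({1, 2}, {2, 3}))   # half of each set overlaps
print(tanimoto_distance({1, 2}, {3, 4}))   # no intersection
```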

4.6.2 Tangent Distance

• Transform patterns to be as similar as possible

• Use a linear approximation to the arbitrary transforms

• Perform each of the transformations Fi(x’; ai) on each stored prototype x’

• Tangent vector TVi

• TVi = Fi(x’; ai) - x’

• Tangent distance

• $D_{tan}(x', x) = \min_a \|(x' + Ta) - x\|$, where T is the matrix whose columns are the tangent vectors; the points x’ + Ta form the tangent space
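Since x’ + Ta is linear in a, the minimization is a least-squares problem: the tangent distance is the length of the part of x - x’ that is orthogonal to the span of the tangent vectors. The sketch below computes it with Gram-Schmidt orthogonalization in plain Python; the function name, the tolerance `1e-12`, and the toy vectors are assumptions for illustration.

```python
import math

def tangent_distance(x_proto, x, tangents):
    """min over a of ||(x' + T a) - x||, with the tangent vectors as columns of T."""
    def dot(u, v):
        return sum(ui * vi for ui, vi in zip(u, v))

    basis = []                                # orthonormal basis of span(T)
    for t in tangents:
        t = list(t)
        for q in basis:                       # remove components along earlier directions
            c = dot(t, q)
            t = [ti - c * qi for ti, qi in zip(t, q)]
        norm = math.sqrt(dot(t, t))
        if norm > 1e-12:                      # skip linearly dependent tangent vectors
            basis.append([ti / norm for ti in t])

    residual = [xi - pi for xi, pi in zip(x, x_proto)]
    for q in basis:                           # project the tangent space out of x - x'
        c = dot(residual, q)
        residual = [ri - c * qi for ri, qi in zip(residual, q)]
    return math.sqrt(dot(residual, residual))

# Hypothetical 3-D example with two tangent vectors spanning the first two axes.
print(tangent_distance((0, 0, 0), (1, 2, 3), [(1, 0, 0), (1, 1, 0)]))   # 3.0
```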

[Figure: 100-dimensional patterns of a handwritten “8”, shifted by s pixels]

4.8 Reduced Coulomb Energy Networks

• Parzen window

• -> fixed window

• k-NN

• -> adjusts the region based on the density

• RCE network

• -> adjusts the size of the window during training according to the distance to the nearest point of a different category


RCE Training

1. begin init j = 0, λm = max radius

2. do j = j + 1

3. wij = xi (train weight)

4. x̂ = arg min D(x, x’) over x ∉ ωi (find nearest point not in ωi)

5. λj = min[D(x̂, x’) - ε, λm] (set radius)

6. if x ∈ ωk then ajk = 1 (connect pattern and category)

7. until j = n

8. end
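The training loop can be sketched as below, assuming Euclidean distance. The names `train_rce`, `lambda_max` (for λm) and `eps` (for ε) are illustrative, and each prototype is stored as a (weights, radius, label) triple rather than as network connections.

```python
import math

def train_rce(patterns, labels, lambda_max, eps=1e-3):
    """Return one prototype per training pattern as (weights, radius, label)."""
    prototypes = []
    for x, label in zip(patterns, labels):
        # Distances to all training points that belong to a different category.
        others = [math.dist(x, y) for y, l in zip(patterns, labels) if l != label]
        nearest_other = min(others) if others else float("inf")
        radius = min(nearest_other - eps, lambda_max)     # lambda_j
        prototypes.append((tuple(x), radius, label))      # w_j = x_j, a_jk = 1
    return prototypes

# Hypothetical one-dimensional layout embedded in 2-D.
protos = train_rce([(0, 0), (1, 0), (4, 0)], ["a", "a", "b"], lambda_max=3.0, eps=0.5)
print([radius for _, radius, _ in protos])   # [3.0, 2.5, 2.5]
```

Each radius stops just short of the nearest point of another category, so no training point falls inside a region of the wrong class.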

RCE Classification

1. begin init j = 0, k = 0, x = test pattern, Dt = {}

2. do j = j + 1

3. if D(x, xj’) < λj then Dt = Dt ∪ xj’

4. until j = n

5. if label of all xj’ ∈ Dt is the same

6. then return label of all xk ∈ Dt

7. else return “ambiguous” label

8. end

Here xj’ is prototype j, λj is the radius of xj’, and Dt is a set of stored prototypes
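A compact sketch of the classification procedure, assuming Euclidean distance and prototypes given as (center, radius, label) triples. The function name is hypothetical, and returning "ambiguous" when no prototype region contains x is an assumption, since the listing does not spell out that case.

```python
import math

def classify_rce(x, prototypes):
    """prototypes: iterable of (center, radius, label) triples."""
    # Labels of every prototype whose region contains x: D(x, x_j') < lambda_j.
    fired = {label for center, radius, label in prototypes
             if math.dist(x, center) < radius}
    if len(fired) == 1:
        return fired.pop()
    return "ambiguous"   # conflicting labels, or (by assumption) no region hit

# Hypothetical prototypes with non-overlapping regions.
prototypes = [((0.0, 0.0), 1.0, "a"), ((3.0, 0.0), 1.5, "b")]
print(classify_rce((0.5, 0.0), prototypes))    # a
print(classify_rce((2.0, 0.0), prototypes))    # b
print(classify_rce((10.0, 10.0), prototypes))  # ambiguous
```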


Summary

• 2 nonparametric estimation approaches

1. Densities are estimated (then used for classification)

2. Category is chosen directly

• Densities are estimated

• Parzen windows, probabilistic neural networks

• Category is chosen directly

• k-nearest-neighbor, reduced Coulomb energy networks


Class Exercises

• Ex. 13 p.159

• Ex. 3 p.201

• Write a C/C++/Java program that uses a k-nearest neighbor method to classify input patterns. Use the table on p.209 as your training sample.

• Experiment with the program using the following data, with k = 3:

x1 = (0.33, 0.58, -4.8)

x2 = (0.27, 1.0, -2.68)

x3 = (-0.44, 2.8, 6.20)

• Do the same thing with k = 11

• Compare the classification results between k = 3 and k = 11 (use the most dominant class voting scheme amongst the k classes)
