Field studies - Andrew L. Kun


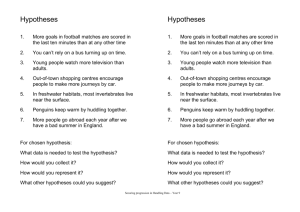

FIELD STUDIES

User studies. Ubicomp: people use technology, so we must conduct user studies. Also: focus groups, ethnographic studies, heuristic evaluations, etc.

User studies. Laboratory studies: controlled environment. Field (in-situ) studies: real world.

Field studies. Appropriate for ubicomp: abundant data; observe unexpected challenges; understand impact on lives. Trade-off: loss of control; significant time and effort.

Three common types: current behavior; proof of concept; experience with prototype.

How to think about user studies? Formulate hypotheses.

Research steps:
1. State problem(s)
2. State goal(s)
3. Propose hypotheses
4. Propose steps to test hypotheses
5. Explain how problem(s), goal(s) and hypotheses fit into existing knowledge
6. Produce results of testing hypotheses
7. Explain results
8. Evaluate research
9. State new problems

What is a hypothesis? Proposing an explanation.

Theory or hypothesis? “This is just a theory.” Some theories we live by (“just” not justified): Newton’s theory of motion; Einstein’s theory of relativity; evolutionary theory.

Hypothesis. Must be tentative. Must predict.

Hypothesis: some criteria of scientificity. Self-consistent; grounded (fits bulk of relevant knowledge); accounts for empirical evidence; empirically testable by objective procedures of science; general in some respect and to some extent.

On proposing hypotheses. Anomalous phenomena: strange and unfamiliar (Bermuda triangle); familiar yet not fully understood (cognitive load). Is there already an explanation?

Types of hypotheses. Incremental. Fundamental shift: Ptolemy (c. 90 – c. 168): geocentric cosmology; Copernicus (1473 – 1543): heliocentric cosmology. And then came… Kepler (1571 – 1630): elliptical orbits.

Fundamental shift example. Ulcer: Stress? Spicy food? Bacteria.
Types of proposed explanations: causes; correlation; causal mechanisms; underlying processes; laws; functions.

Proposing causal explanations. Studies show that using a cell phone while driving increases the probability of getting into an accident. Why is that so? Pick up ringing phone; dial number; see but don’t perceive.

Effects not always there. Cell phone + driving: usually no accident. Only one of the factors.

Remote and proximate causes. Cell phone + driving: attention shift → missed signal → accident. Remote cause → proximate cause → effect.

Correlation. A and B are correlated if: A → B; B → A; C → A and C → B; a combination of (some of) the above; coincidence.

Correlation vs. causal relation. Correlation doesn’t imply causal relation. Cannot determine cause direction (A → B or B → A).

Correlation. Positive, negative. None found ≠ none exists. Causal link → correlation: may provide initial evidence for causal link. Less explanatory value than facts about causal links.

Causal mechanisms. Mechanisms connecting remote causes and their effects. E.g.: damaged artery in heart → clotting; clotting → blocked artery; blocked artery → heart attack. Aspirin inhibits clotting → lower risk of heart attack.

Underlying processes. Photoelectric effect. Einstein: 1921 Nobel Prize in Physics.

Laws. General regularities in nature. Universal: F = ma. Non-universal: statistical laws.

Functions. What is the purpose of the phenomenon? Poster text: “FOR SALE. A prime lot of serfs or slaves, Gypsy (Tzigany). Through an auction at noon at the St. Elias Monastery on 8 May 1852, consisting of 18 men, 10 boys, 7 women & 3 girls in fine condition.”

Functions. William Harvey (1578 – 1657): heart pumps blood through circulatory system. No modern instruments! Experiments with a number of animals: various fish, snail, pigeon, etc.
Multiple methods together. Function → causal mechanism → underlying processes. National Ignition Facility (Dennis O’Brien @ UNH): ignition with lasers → laser, target chamber → physics of nuclear fusion.

Multiple methods together. Law → underlying processes. Isaac Newton (1643 – 1727), second law of motion: F = ma → graviton?

Ockham’s razor. Crop circles: pranksters or aliens?

Ockham’s razor. William of Ockham (c. 1288 – c. 1348). http://en.wikipedia.org/wiki/File:William_of_Ockham.png

Do I have a hypothesis? Yes. Do you realize you do?

Current behavior. Insights and inspiration: state problem(s), goal(s); propose hypotheses. Relatively long.

Current behavior – example 1. AJ Brush and Kori Inkpen, “Yours, mine and ours?...” (pdf) (2005 movie inspiring title). Home technology: users share, etc.

Current behavior – example 2. Shwetak Patel et al., “Farther Than You May Think…” (pdf). Hypothesis: mobile phone a proxy to user location.

Proof of concept. Technological advance: produce results: prototype; explain results: prototype. Relatively short.

Proof of concept – example 1. J. Sherwani et al., “Speech vs.
Touch-tone: Telephone Interfaces for Information Access by Low Literate Users” (pdf) (video). Hypothesis: speech is a better telephony interface than touch-tone for low literate users.

Proof of concept – example 2. John Krumm and Eric Horvitz, “Predestination:…” (pdf). Hypothesis: destinations from partial trajectories. Train/test algorithm on GPS tracks from 169 people. Used pre-existing data: Krumm and Horvitz, “The Microsoft Multiperson Location Survey”. Collecting original data a significant contribution. Leverage!

Experience with prototype. Users’ interaction with technology: produce results: prototype; explain results: prototype. Relatively long.

Prototype an example! Others don’t care about: raw usage information; usability problems; intricate implementation details; etc. Generalize! Scientific and good technical work.

Experience – example 1. C. Neustaedter et al., “A Digital Family Calendar in the Home:…” (pdf) (video). Hypothesis: at-a-glance awareness and remote access are significant benefits. 4 households, 4 weeks each. (Best Student Paper, Graphics Interface 2007)

Experience – example 2. Rafael Ballagas et al., “Gaming Tourism:…” (pdf) (video). Hypothesis: learning through a game. 18 participants: 2 alone + 8 pairs (8 x 2 = 16).

Study design. Who is the consumer? Manager(s); professor(s); for paper, proposal, thesis; funding agency; e.g. advisor’s collaborators; reviewers; e.g. thesis committee; researchers; industry, academic lab; report on progress, proposal for funding; public: friends, family, alumni, potential students, donors, potential employers.

Study design. How can I explain this to a layperson? What is key?
What can be omitted? How will I write this up? Paper; thesis; report; blog post. Start writing paper/thesis/report/blog post at the beginning of the study.

Study design. Test hypothesis/hypotheses.

Testing hypotheses via user studies. Laboratory studies. Good: control, easier to evaluate results. Bad: constraints. Field studies. Good: fewer constraints. Bad: less control, more difficult to evaluate results.

Criteria. Falsifiability: prediction fails = explanation isn’t correct. Account for other factors! Note: criterion is singular, criteria is plural.

Criteria. Verifiability: prediction successful = explanation is correct. Account for other factors!

The meat of it… Battleship Potemkin, 1925 film: rotten meat scene. Why larvae in meat? Francesco Redi (1626-1697), Generation of insects, 1668. Causal explanation: fly droppings.

Redi’s research. Hypothesis: worms derived from fly droppings. Testing hypothesis: two sets of flasks with meat: sealed and open. Prediction: worms only in open flask.

Falsifiability criterion. Can anything cause a failed prediction even if explanation is correct? Did the apparatus operate properly? Tight seal? Meat not initially spoiled? Other?

Verifiability criterion. Can anything result in successful prediction even if explanation is wrong? What if “active principle” in the air is responsible for spontaneous generation? Modify experiment: replace seal with veil: flies cannot reach meat; air in contact with meat. Modification helps meet verifiability criterion.

Verifiability criterion. Experimental vs. control group: only difference in level of one independent variable. Redi’s experiment: control: open flasks; experimental: veil-covered flasks.

Control: laboratory experiment. Meat in veil-covered flasks?
Creating control/experimental groups often impossible without careful design/control.

Study design. Test hypothesis/hypotheses. Formulate in terms of: independent variables (multiple conditions); dependent variables. Design: within-subjects; between-subjects; mixed design.

Within-subjects design: example. Police radio UI: hardware; speech. Blog post, video.

Within-subjects design: example. Results in graphical form: [two figures].

Example: between-subjects design. Classical example: testing a drug.

Mixed design: example 1. SUI characteristics study. Secondary task: speech control of radio. 2 x 2 x 2 design: SR accuracy: high/low; PTT button: yes/no – ambient recognition; dialog repair strategy: mis-/non-understanding.

Mixed design: example 2. Motivation: PTT vs. driving performance. Secondary task: speech control of radio. 2 x 3 x 3 design: SR accuracy: high/low; PTT activation: push-hold-release/push-release/no push; PTT button: ambient/fixed/glove. Condition grid: each PTT activation (push-hold-release, push-release, no-push) paired with each PTT button (ambient, fixed, glove), at high and low SR accuracy.

Control condition. Baseline: e.g. no technology vs. later introduced technology.

Considerations. What will subjects do? Normal behavior – may take long; scenarios. Augment existing or brand new? Augment: taking advantage of familiarity. New: more control (fewer inherited constraints). Simulate or implement? E.g. WoZ (Wizard of Oz).

Data to collect. Qualitative: insight into what participants did. How do participants compare? Did they do what they thought they did? Use quantitative data. Quantitative: how did people behave? But why? Use qualitative data.

Data to collect. At least three types of data: demographic; usage; reactions.

Data to collect. Run pilot experiments!

Collecting data: logging; surveys; experience sampling; diaries; interviews; unstructured observation – ethnography.

Logging. Plan ahead, not after the fact!
Testing hypotheses: don’t leave important data out; don’t save data you don’t need. Leverage logging: everything OK? E.g. Mike Farrar’s MS research: files appearing on server indicate apps OK; explicit communication with server: “I’m OK!”

Surveys: open-ended; multiple-choice; Likert-scale.

Surveys. Questions should allow positive and negative feedback. Text clear to others? Check! One question at a time, not “Fun and easy to use?” Length? Don’t bore subjects to death. Standard questions (e.g. QUIS)? Previously used questions?

Example: Mike Farrar’s study. Hypotheses: Initialize grammar (video): from previous tags; from tags by users with similar interests. Voice commands convenient way to tag photos (video). Keyboard users will use voice less. Low task completion: give up on voice.

Experience sampling (ESM). Short questionnaire. Timing: random; scheduled; event-based.

Experience sampling (ESM). How often? How many? Relate to quantitative data?

Diaries. Similar to ESM.

Interviews. Semi-structured: list of specific questions + follow-up questions. Bring data. E.g. Nancy A. Van House, “Flickr and Public Image Sharing:…”: interviews + photo elicitation.

Interviews. Neutral questions. Negative feedback is OK (this is hard): don’t argue!

Participants. Follow IRB rules.

Participants. Who to recruit? Representative of intended users. Not your friends, family, colleagues – bias! May need different types. Recruit sufficient numbers of each type.

Participant profile. Age (e.g. age significant for driving); gender; technology use and experience; other (eye tracker studies: no glasses).

Number of participants. Between-subjects usually requires more than within-subjects. Proof-of-concept: typically fewer and many types. Longer study: may be able to use fewer. Time commitment per participant is significant! Recruit (Craigslist), organize, train, run, transfer data, process data. Participants will drop out – recruit extra. Counterbalancing may not work out.

Compensation. Don’t try to save on this!
Driving simulator lab study cost example: 1 graduate student year at UNH ≈ $50k; software maintenance fees per year ≈ $20k; trip to conference ≈ $2k; PC or laptop ≈ $2k; $20 x 24 participants ≈ $0.5k (less than 1%).

Compensation. Must not affect data. E.g. in image tagging study, if we paid per picture: more data, but unrealistic, as interactions are for money, not for value of prototype.

Compensation. Leverage if you can. Latest driving simulator lab study in collaboration with Microsoft Research: use Microsoft software as compensation.

Data analysis. Test hypotheses. Use multiple data types. Tell a story.

Data analysis. Statistics: descriptive; inferential.

Descriptive statistics. Level of measurement: nominal; ordinal; interval.

Level of measurement. Nominal: unordered. E.g. categories yes/no. Valid to report: frequency.

Level of measurement. Ordinal: rank order preference without numeric difference. E.g. responses on Likert scale: Five of the eight participants strongly agreed or agreed with the following statement: “I prefer to have a GPS screen for navigation.” Valid to report: frequency; median. Some people report means, but what is the mean of “strongly agree” and “strongly disagree”?

Level of measurement. Interval: numerical differences significant. E.g. age, number of times an action occurred, etc. Valid to report: sum; mean; median; standard deviation (outliers?).

Outliers in interval data.

Inferential statistics. Significance tests: t-test; ANOVA; many others. Which to use: depends on data.

Significance test: example 1. To assess the effect of different navigation aids on visual attention, we performed a one-way ANOVA using PDT as the dependent variable. As expected, the time spent looking at the outside world was significantly higher when using spoken directions as compared to the standard PND directions, p<.01. Specifically, for spoken directions only, the average PDT was 96.9%, while it was 90.4% for the standard PND.
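To make the one-way ANOVA in example 1 concrete, here is a minimal sketch of how the F statistic is computed from grouped measurements. It is a plain between-groups ANOVA written with the Python standard library only; the slides do not say which ANOVA variant or software the study used, the function name `one_way_anova_f` is hypothetical, and the PDT values below are invented for illustration, not the study's data.

```python
from statistics import mean


def one_way_anova_f(groups):
    """Return (F, df_between, df_within) for a list of numeric groups.

    Plain between-groups one-way ANOVA; an illustrative sketch, not
    the analysis pipeline used in the study on the slide.
    """
    all_values = [x for g in groups for x in g]
    grand_mean = mean(all_values)
    group_means = [mean(g) for g in groups]

    # Variation of group means around the grand mean, weighted by group size.
    ss_between = sum(
        len(g) * (m - grand_mean) ** 2 for g, m in zip(groups, group_means)
    )
    # Variation of individual values around their own group mean.
    ss_within = sum(
        (x - m) ** 2 for g, m in zip(groups, group_means) for x in g
    )

    df_between = len(groups) - 1
    df_within = len(all_values) - len(groups)
    f_stat = (ss_between / df_between) / (ss_within / df_within)
    return f_stat, df_between, df_within


if __name__ == "__main__":
    # Invented PDT values [%] for two navigation aids (assumption).
    spoken_only = [97.1, 96.5, 97.4, 96.6]
    standard_pnd = [90.2, 91.0, 89.8, 90.6]
    f_stat, df_b, df_w = one_way_anova_f([spoken_only, standard_pnd])
    print(f"F({df_b}, {df_w}) = {f_stat:.1f}")
```

The sketch stops at the F statistic and its degrees of freedom; turning F into a p-value needs the F distribution, which a statistics package would normally supply.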
Significance test: example 2. … PDT on the PND screen changes with the distance from the previous intersection… significant main effect, p<.01…

[Figure: PDT on standard PND [%] versus distance from previous intersection [m], in 20 m bins from 60-80 to 140-160.]

Significance test: example 3. Randomization test: Kun et al. (pdf); idea from Veit et al. (pdf).

[Figure: Rstw [degrees^2] versus lag [seconds] for standard and spoken-only directions, with the p = 0.05 level marked.]
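The slides name a randomization test in example 3 but do not describe the procedure. As a rough illustration of the general idea only, the sketch below runs a generic two-sample randomization (permutation) test on the difference of means. It is not the method of Kun et al. or Veit et al.; the function name `randomization_test`, its parameters, and the sample values are all assumptions made for this example.

```python
import random
from statistics import mean


def randomization_test(a, b, n_resamples=10000, seed=0):
    """Two-sided randomization test on the difference of group means.

    Repeatedly shuffles the pooled values into two groups of the
    original sizes and counts how often the shuffled difference is at
    least as large as the observed one. Illustrative sketch only.
    """
    rng = random.Random(seed)  # fixed seed so runs are repeatable
    observed = abs(mean(a) - mean(b))
    pooled = list(a) + list(b)
    n_a = len(a)

    at_least_as_extreme = 0
    for _ in range(n_resamples):
        rng.shuffle(pooled)
        diff = abs(mean(pooled[:n_a]) - mean(pooled[n_a:]))
        if diff >= observed:
            at_least_as_extreme += 1

    # Add-one correction keeps the estimated p-value strictly above zero.
    return (at_least_as_extreme + 1) / (n_resamples + 1)


if __name__ == "__main__":
    # Invented measurements for two conditions (assumption).
    standard = [12.1, 14.3, 11.8, 13.5, 12.9, 14.0]
    spoken_only = [9.2, 10.1, 8.7, 9.9, 10.4, 9.0]
    print(randomization_test(standard, spoken_only, n_resamples=2000))
```

A randomization test makes few distributional assumptions, which suits small field-study samples, but the p-value is an estimate whose resolution depends on `n_resamples`.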