Cognitive Architectures and Virtual Intelligent Agents


Pat Langley

School of Computing and Informatics

Arizona State University

Tempe, Arizona USA

Thanks to D. Choi, G. Cleveland, T. Konik, N. Li, N. Nejati, C. Park, and D. Stracuzzi for their contributions. This talk reports research partly funded by grants from ONR and DARPA, which are not responsible for its contents.

The Nature of Intelligence

Cognitive science and AI, although distinct fields, agree on some core assumptions:

Intelligence arises from computational processes

A variety of functional abilities underlie intelligence

These abilities are general yet benefit from knowledge

Humans remain our best examples of intelligent systems

Progress toward understanding intelligence requires taking a position on two key methodological questions:

• What theoretical framework should one assume?

• What problems or testbeds should drive research?

Frameworks for Intelligent Systems

Because intelligence involves distinct abilities, it is tempting to develop them separately and then combine them.

This position is well represented in the AI community by:

Software engineering (e.g., Brugali, 2009)

Blackboard systems (Engelmore & Morgan, 1988)

Multi-agent systems (Weiss, 2000)

Such integrated approaches offer clear advantages in terms of modular development and division of labor.

But they are not the only way to create intelligent artifacts.

Cognitive Architectures

A cognitive architecture (Newell, 1990) is an infrastructure for intelligent systems that:

makes strong theoretical assumptions about the representations and mechanisms underlying cognition

incorporates many ideas from psychology about the nature of the human mind

contains distinct modules, but these access and alter the same memories and representations

comes with a programming language that eases construction of knowledge-based systems

A cognitive architecture is all about mutual constraints, and it should provide a unified theory of intelligent behavior.

Testbeds for Intelligent Systems

We also need problems and testbeds that pose challenges to theories and evaluate their responses. Examples include:

Educational tasks (e.g., reasoning in math and physics)

Standardized tests (e.g., IQ, SAT, GRE exams)

Integrated robots (e.g., for search and rescue)

Synthetic characters in virtual worlds (e.g., for games)

Each problem class has advantages and disadvantages, but all have important roles to play.

In this talk, I will report work on synthetic characters that has driven our progress on cognitive architectures.

Synthetic Agents for Urban Driving


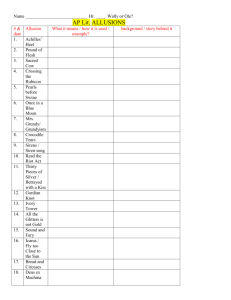

Outline for the Talk

• Frameworks and testbeds for intelligent systems

• Review of the ICARUS cognitive architecture

• Common and distinctive features

• Representation and organization of memory

• Performance and learning mechanisms

• Synthetic agents developed with ICARUS

• Complex synthetic environments

• Lessons learned from these efforts

• Plans for future architectural / testbed research

The ICARUS Architecture

ICARUS (Langley, 2006) is a computational theory of the human cognitive architecture that posits:

1. Short-term memories are distinct from long-term stores

2. Memories contain modular elements cast as symbolic structures

3. Long-term structures are accessed through pattern matching

4. Cognition occurs in retrieval/selection/action cycles

5. Learning involves monotonic addition of elements to memory

6. Learning is incremental and interleaved with performance

These assumptions are not novel; ICARUS shares them with architectures like Soar (Laird et al., 1987) and ACT-R (Anderson, 1993).

Distinctive Features of ICARUS

However, ICARUS also makes assumptions that distinguish it from these architectures:

1. Cognition is grounded in perception and action

2. Categories and skills are separate cognitive entities

3. Short-term elements are instances of long-term structures

4. Skills and concepts are organized in a hierarchical manner

5. Inference and execution are more basic than problem solving

Some of these tenets also appear in Bonasso et al.’s (2003) 3T, Freed’s (1998) APEX, and Sun et al.’s (2001) Clarion.

But only ICARUS combines them into a unified cognitive theory.

Research Goals for ICARUS

Our current objectives in developing ICARUS are to produce:

a computational theory of high-level cognition in humans

that is qualitatively consistent with results from psychology

that exhibits as many distinct cognitive functions as possible

that supports creation of intelligent agents in virtual worlds

We have not yet modeled quantitative results from experiments, but we hope to obtain such fits when ICARUS is more mature.

This would enable more direct comparison to architectures like ACT-R, Clarion, and EPIC (Meyer & Kieras, 1997).

Cascaded Integration in ICARUS

Like other unified cognitive architectures, ICARUS incorporates a number of distinct modules: conceptual inference, skill execution, problem solving, and learning.

ICARUS adopts a cascaded approach to integration in which lower-level modules produce results for higher-level ones.

ICARUS Beliefs and Goals for Urban Driving

Inferred beliefs:

(current-street me A)
(lane-to-right g599 g601)
(last-lane g599)
(under-speed-limit me)
(steering-wheel-not-straight me)
(in-lane me g599)
(on-right-side-in-segment me)
(building-on-left g288)
(building-on-left g427)
(building-on-left g431)
(building-on-right g287)
(increasing-direction me)
(current-segment me g550)
(first-lane g599)
(last-lane g601)
(slow-for-right-turn me)
(centered-in-lane me g550 g599)
(in-segment me g550)
(intersection-behind g550 g522)
(building-on-left g425)
(building-on-left g429)
(building-on-left g433)
(building-on-right g279)
(near-pedestrian me g567)

Top-level goals:

(not (near-pedestrian me ?any))
(on-right-side-in-segment me)
(not (over-speed-limit me))
(not (near-vehicle me ?other))
(in-lane me ?segment)
(not (running-red-light me))

ICARUS Concepts for Urban Driving

((in-rightmost-lane ?self ?clane)
 :percepts  ((self ?self) (segment ?seg)
             (line ?clane segment ?seg))
 :relations ((driving-well-in-segment ?self ?seg ?clane)
             (last-lane ?clane)
             (not (lane-to-right ?clane ?anylane))))

((driving-well-in-segment ?self ?seg ?lane)
 :percepts  ((self ?self) (segment ?seg) (line ?lane segment ?seg))
 :relations ((in-segment ?self ?seg) (in-lane ?self ?lane)
             (aligned-with-lane-in-segment ?self ?seg ?lane)
             (centered-in-lane ?self ?seg ?lane)
             (steering-wheel-straight ?self)))

((in-lane ?self ?lane)
 :percepts  ((self ?self segment ?seg) (line ?lane segment ?seg dist ?dist))
 :tests     ((> ?dist -10) (<= ?dist 0)))
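To make the role of the numeric tests concrete, here is a minimal Python sketch of how the in-lane concept could be evaluated against percepts. The dictionary-based percept format and the function name in_lane_beliefs are assumptions made for illustration; they are not ICARUS syntax or its actual matcher.

```python
def in_lane_beliefs(percepts):
    """Return (in-lane ?self ?lane) beliefs for lines that pass the distance tests."""
    selves = [p for p in percepts if p["type"] == "self"]
    lines = [p for p in percepts if p["type"] == "line"]
    beliefs = set()
    for me in selves:
        for line in lines:
            # Both percepts must share the same ?seg binding.
            same_segment = line["segment"] == me["segment"]
            # Mirrors the slide's tests: (> ?dist -10) and (<= ?dist 0).
            if same_segment and -10 < line["dist"] <= 0:
                beliefs.add(("in-lane", me["name"], line["name"]))
    return beliefs


# Hypothetical percepts; the identifiers reuse names from the belief slide.
percepts = [
    {"type": "self", "name": "me", "segment": "g550"},
    {"type": "line", "name": "g599", "segment": "g550", "dist": -4.0},
    {"type": "line", "name": "g601", "segment": "g550", "dist": 6.0},
]
print(in_lane_beliefs(percepts))
```

Only the line whose distance falls in the interval (-10, 0] yields a belief, so the agent is judged to be in lane g599 but not g601.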

Hierarchical Organization of Concepts

ICARUS organizes conceptual memory in a hierarchical manner.

[Figure: hierarchy of concepts, concept clauses, and percepts]

The same conceptual predicate can appear in multiple clauses to specify disjunctive and recursive concepts.

Conceptual Inference in ICARUS

Conceptual inference in ICARUS occurs from the bottom up.

[Figure: hierarchy of concepts, concept clauses, and percepts]

Starting with observed percepts, this process produces high-level beliefs about the current state.
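Bottom-up inference of this kind can be sketched as forward chaining to a fixed point. The Python below is an illustrative simplification, not the ICARUS matcher: it assumes primitive beliefs have already been derived from percepts, represents literals as tuples, and omits negated relations, so in-rightmost-lane is defined here without its (not (lane-to-right ...)) condition. All function names are hypothetical.

```python
def match(pattern, fact, bindings):
    """Unify a flat pattern with a ground fact; return extended bindings or None."""
    if len(pattern) != len(fact):
        return None
    new = dict(bindings)
    for p, f in zip(pattern, fact):
        if p.startswith("?"):
            if new.setdefault(p, f) != f:   # variable already bound elsewhere
                return None
        elif p != f:
            return None
    return new


def match_all(patterns, facts, bindings):
    """Yield every binding set that satisfies all patterns against the facts."""
    if not patterns:
        yield bindings
        return
    for fact in facts:
        extended = match(patterns[0], fact, bindings)
        if extended is not None:
            yield from match_all(patterns[1:], facts, extended)


def infer(concepts, primitive_beliefs):
    """Add concept instances until no new belief can be derived."""
    beliefs = set(primitive_beliefs)
    changed = True
    while changed:
        changed = False
        for head, relations in concepts:
            for b in list(match_all(relations, beliefs, {})):
                instance = tuple(b.get(term, term) for term in head)
                if instance not in beliefs:
                    beliefs.add(instance)
                    changed = True
    return beliefs


concepts = [
    (("driving-well-in-segment", "?self", "?seg", "?lane"),
     [("in-segment", "?self", "?seg"), ("in-lane", "?self", "?lane"),
      ("aligned-with-lane-in-segment", "?self", "?seg", "?lane"),
      ("centered-in-lane", "?self", "?seg", "?lane"),
      ("steering-wheel-straight", "?self")]),
    (("in-rightmost-lane", "?self", "?lane"),   # negated relation omitted
     [("driving-well-in-segment", "?self", "?seg", "?lane"),
      ("last-lane", "?lane")]),
]
primitive = {
    ("in-segment", "me", "g550"), ("in-lane", "me", "g599"),
    ("aligned-with-lane-in-segment", "me", "g550", "g599"),
    ("centered-in-lane", "me", "g550", "g599"),
    ("steering-wheel-straight", "me"), ("last-lane", "g599"),
}
beliefs = infer(concepts, primitive)
```

Each pass adds beliefs one level higher in the hierarchy, so driving-well-in-segment is inferred before the in-rightmost-lane belief that depends on it.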


ICARUS Skills for Urban Driving

((in-rightmost-lane ?self ?line)
 :percepts ((self ?self) (line ?line))
 :start    ((last-lane ?line))
 :subgoals ((driving-well-in-segment ?self ?seg ?line)))

((driving-well-in-segment ?self ?seg ?line)
 :percepts ((segment ?seg) (line ?line) (self ?self))
 :start    ((steering-wheel-straight ?self))
 :subgoals ((in-segment ?self ?seg)
            (centered-in-lane ?self ?seg ?line)
            (aligned-with-lane-in-segment ?self ?seg ?line)
            (steering-wheel-straight ?self)))

((in-segment ?self ?endsg)
 :percepts ((self ?self speed ?speed) (intersection ?int cross ?cross)
            (segment ?endsg street ?cross angle ?angle))
 :start    ((in-intersection-for-right-turn ?self ?int))
 :actions  ((steer 1)))

Hierarchical Organization of Skills

ICARUS organizes skills in a hierarchical manner, with each skill clause indexed by the goal it aims to achieve.

[Figure: hierarchy of goals, skill clauses, and operators]

The same goal can index multiple clauses to allow disjunctive, conditional, and recursive procedures.

Skill Execution in ICARUS

Skill execution occurs from the top down, starting from goals, to find applicable paths through the skill hierarchy.

[Figure: hierarchy of goals, skill clauses, and operators]

A skill clause is applicable if its goal is unsatisfied and if its conditions hold, given bindings from above.
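The applicability rule above can be sketched as a top-down search for a path that ends in executable actions. This Python version is a simplification under stated assumptions: goals and conditions are ground tuples rather than patterns with variables, and the SkillClause class and find_path function are hypothetical names, not part of ICARUS.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SkillClause:
    goal: tuple
    start: tuple = ()      # conditions that must hold to begin the clause
    subgoals: tuple = ()   # ordered subgoals (nonprimitive clause)
    actions: tuple = ()    # executable actions (primitive clause)


def find_path(goal, clauses, beliefs):
    """Return (path, actions) for the first applicable path, or None."""
    if goal in beliefs:
        return None                      # satisfied goals need no skill
    for clause in clauses:
        if clause.goal != goal:
            continue
        if not all(cond in beliefs for cond in clause.start):
            continue
        if clause.actions:
            return [clause.goal], list(clause.actions)
        for sub in clause.subgoals:
            if sub in beliefs:
                continue                 # skip subgoals that already hold
            below = find_path(sub, clauses, beliefs)
            if below is not None:
                return [clause.goal] + below[0], below[1]
            break                        # first unmet subgoal has no path
    return None


RIGHTMOST = ("in-rightmost-lane", "me", "g599")
DRIVING = ("driving-well-in-segment", "me", "g550", "g599")
IN_SEG = ("in-segment", "me", "g550")
clauses = [
    SkillClause(RIGHTMOST, start=(("last-lane", "g599"),), subgoals=(DRIVING,)),
    SkillClause(DRIVING, start=(("steering-wheel-straight", "me"),),
                subgoals=(IN_SEG,
                          ("centered-in-lane", "me", "g550", "g599"),
                          ("aligned-with-lane-in-segment", "me", "g550", "g599"),
                          ("steering-wheel-straight", "me"))),
    SkillClause(IN_SEG, start=(("in-intersection-for-right-turn", "me", "g522"),),
                actions=(("steer", 1),)),
]
beliefs = {("last-lane", "g599"), ("steering-wheel-straight", "me"),
           ("in-intersection-for-right-turn", "me", "g522")}
path, actions = find_path(RIGHTMOST, clauses, beliefs)
```

With these beliefs the path runs from in-rightmost-lane through driving-well-in-segment to in-segment, whose action is the one to execute on this cycle.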

Skill Execution in ICARUS

This process repeats on each cycle to produce goal-directed but reactive behavior (Nilsson, 1994).

However, ICARUS prefers to continue ongoing skills when they match, giving it a bias toward persistence over reactivity.

Skill Execution in ICARUS

If events proceed as expected, this iterative process eventually achieves the agent’s top-level goal.

At this point, another unsatisfied goal begins to drive behavior, invoking different skills to pursue it.

Execution and Problem Solving in ICARUS

[Figure: flowchart linking Problem (goal, beliefs), Skill Hierarchy, Reactive Execution, the test "impasse?" (no / yes), Problem Solving, Primitive Skills, and Generated Plan]

Problem solving involves means-ends analysis that chains backward over skills and concept definitions, executing skills whenever they become applicable.
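A stripped-down version of this backward chaining can be sketched in Python. The sketch makes several assumptions that the slides do not: skills are ground and have no delete effects, chaining runs only over skills (not concept definitions), and the three-skill domain is invented for illustration.

```python
def means_ends(goal, state, skills, depth=8):
    """Return the skills executed to reach goal, updating state; None on failure."""
    if goal in state:
        return []
    if depth == 0:
        return None                          # give up on overly deep chains
    for name, (effect, conditions) in skills.items():
        if effect != goal:
            continue
        trial, executed = set(state), []
        for cond in conditions:
            # Chain backward: an unmet condition becomes a subgoal.
            steps = means_ends(cond, trial, skills, depth - 1)
            if steps is None:
                break
            executed += steps
        else:
            # All conditions now hold, so the skill is applicable: execute it.
            trial.add(effect)
            state.clear()
            state.update(trial)
            return executed + [name]
    return None


# Hypothetical skills: name -> (goal achieved, start conditions).
skills = {
    "turn-right": (("in-segment", "me", "g550"), [("in-intersection", "me", "g522")]),
    "drive-to-intersection": (("in-intersection", "me", "g522"), [("engine-on", "me")]),
    "start-engine": (("engine-on", "me"), []),
}
state = set()
trace = means_ends(("in-segment", "me", "g550"), state, skills)
```

The trace lists skills in execution order even though the search reasons backward from the goal, which reflects the interleaving of chaining and execution described above.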

ICARUS Learns Skills from Problem Solving

[Figure: flowchart linking Problem (goal, beliefs), Skill Hierarchy, Reactive Execution, the test "impasse?" (no / yes), Problem Solving, Primitive Skills, Generated Plan, and Skill Learning]

Learning from Problem Solutions

ICARUS incorporates a mechanism for learning new skills that:

operates whenever problem solving overcomes an impasse

incorporates only information stored locally with goals

generalizes beyond the specific objects concerned

depends on whether chaining involved skills or concepts

supports cumulative learning and within-problem transfer

This skill creation process is fully interleaved with means-ends analysis and execution.

Learned skills carry out forward execution in the environment rather than backward chaining in the mind.
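The generalization step in this list can be illustrated with a short Python sketch that turns a solved goal and its ordered subgoals into a skill clause with variables. It covers only the replacement of specific objects with variables; how ICARUS chooses start conditions and distinguishes skill chaining from concept chaining is not modeled, and the function name and dictionary layout are assumptions.

```python
def generalize(goal, subgoals):
    """Build a skill clause from a solved goal, replacing constants with variables."""
    mapping = {}   # constant -> variable, shared across goal and subgoals

    def lift(literal):
        predicate, *args = literal
        lifted = [mapping.setdefault(a, f"?x{len(mapping) + 1}") for a in args]
        return (predicate, *lifted)

    return {"goal": lift(goal), "subgoals": [lift(s) for s in subgoals]}


learned = generalize(
    ("driving-well-in-segment", "me", "g550", "g599"),
    [("in-segment", "me", "g550"),
     ("centered-in-lane", "me", "g550", "g599")],
)
```

Because the same mapping is used throughout, objects shared between the goal and its subgoals become shared variables, so the learned clause applies to other segments and lanes.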

ICARUS Summary

ICARUS is a unified theory of the cognitive architecture that:

includes hierarchical memories for concepts and skills;

interleaves conceptual inference with reactive execution;

resorts to problem solving when it lacks routine skills;

learns such skills from successful resolution of impasses.

We have developed ICARUS agents for a variety of simulated physical environments.

However, each effort has revealed limitations that have led to important architectural extensions.

Creating Synthetic Agents in ICARUS

Synthetic Agents for ‘Urban Combat’

Urban Combat is a synthetic environment, built on the Quake engine, used in the DARPA Transfer Learning program.

Tasks for agents involved traversing an urban landscape with a variety of obstacles to capture a flag.

Our experiences in Urban Combat provided some clear lessons:

Storing, using, and learning route knowledge

Learning to handle obstacles through trial and error

Supporting different varieties of structural transfer across problems

Each of these insights has influenced our more recent work on ICARUS.


Transfer Studies with Urban Combat

Experiments with ICARUS agents on Urban Combat demonstrated varieties of structural transfer across multiple goal-directed tasks (Choi et al., ICCM-2007).

Transfer vs. Control Conditions

[Figure: source and target task maps, each marking a Start location and an IED]

Synthetic Agents for Urban Driving

We have developed an urban driving environment using Garage Games’ Torque game engine.


This domain involves a mixture of cognitive and sensori-motor behavior in a constrained but complex and dynamic setting.

Synthetic Agents for Urban Driving

The Torque urban driving environment supports tasks of graded complexity, including:

Keeping the car aligned at a constant speed

Changing speed to obey traffic signs and lights

Changing lanes/speed to avoid vehicles/pedestrians

Approaching and turning a corner

Driving repeatedly around a block

Delivering packages to specified addresses

We have developed ICARUS agents that address each of these tasks in a reasonably robust manner.

We have also demonstrated the ability of ICARUS to learn driving skills from problem solving (Langley & Choi, JMLR, 2006).

Synthetic Agents for Urban Driving

Our early efforts on urban driving motivated two key features of the ICARUS architecture:

Indexing skills by the goals they achieve

Multiple top-level goals with priorities
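One way to picture prioritized top-level goals is as an ordered list in which the first unsatisfied goal drives behavior. The Python sketch below assumes the list order encodes priority, which the slides do not specify, and uses hypothetical helper names; negated goals are treated as satisfied when no belief matches their pattern.

```python
def matches(pattern, fact):
    """True if a flat pattern with ?variables unifies with a ground fact."""
    if len(pattern) != len(fact):
        return False
    bindings = {}
    for p, f in zip(pattern, fact):
        if p.startswith("?"):
            if bindings.setdefault(p, f) != f:
                return False
        elif p != f:
            return False
    return True


def satisfied(goal, beliefs):
    if goal[0] == "not":
        return not any(matches(goal[1], b) for b in beliefs)
    return any(matches(goal, b) for b in beliefs)


def select_goal(goals, beliefs):
    """Return the highest-priority unsatisfied goal, or None if all hold."""
    for goal in goals:          # assumed to be ordered by decreasing priority
        if not satisfied(goal, beliefs):
            return goal
    return None


goals = [
    ("not", ("near-pedestrian", "me", "?any")),
    ("not", ("near-vehicle", "me", "?other")),
    ("on-right-side-in-segment", "me"),
    ("in-lane", "me", "?segment"),
    ("not", ("over-speed-limit", "me")),
]
beliefs = {("near-pedestrian", "me", "g567"),
           ("on-right-side-in-segment", "me"),
           ("in-lane", "me", "g599")}
urgent = select_goal(goals, beliefs)
```

Here the pedestrian goal is selected because the belief (near-pedestrian me g567) violates it; once that belief disappears, every goal in the list is satisfied.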

Recent work on Torque driving agents has led to additional changes to the architecture (Choi, 2010):

Long-term memory for generic goals

Reactive generation of specific short-term goals

Resource-based execution of multiple skills per cycle

Our use of this complex environment has driven our cognitive architecture research toward important new functionalities.

Synthetic Agents for American Football

We have also developed ICARUS agents to execute football plays in Rush 2008 from Knexus Research.

This domain is less complex in some ways but it still remains highly reactive and depends on spatio-temporal coordination among players on a team.


Synthetic Agents for American Football

Our results in Rush 2008 are interesting because ICARUS learned its football skills by observing other agents.

[Video: Oregon State football footage]

This and similar videos of the Oregon State football team provided training examples to drive this process.

Synthetic Agents for American Football

Our efforts on Rush 2008 have motivated additional extensions to the ICARUS architecture:

Time stamps on beliefs to support episodic traces

Ability to represent and recognize temporal concepts

Adapting means-ends analysis to explain observed behavior

Ability to control multiple agents in an environment
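The first two extensions can be pictured with a small Python sketch in which beliefs carry start and end time stamps and a temporal relation is tested over them. The classes, the "before" relation, and the football literals are illustrative assumptions; the slides do not describe how ICARUS actually encodes episodic traces or temporal concepts.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class TimedBelief:
    literal: tuple
    start: int
    end: Optional[int] = None    # None means the belief still holds


class BeliefTrace:
    """Keeps open beliefs plus a history of beliefs that have ended."""

    def __init__(self):
        self.open = {}
        self.closed = []

    def update(self, literals, cycle):
        # Close beliefs that were not inferred on this cycle.
        for literal in list(self.open):
            if literal not in literals:
                belief = self.open.pop(literal)
                belief.end = cycle - 1
                self.closed.append(belief)
        # Open beliefs that are newly inferred.
        for literal in literals:
            if literal not in self.open:
                self.open[literal] = TimedBelief(literal, start=cycle)


def before(first, second):
    """Temporal concept: first ended strictly before second began."""
    return first.end is not None and first.end < second.start


trace = BeliefTrace()
trace.update({("has-ball", "qb")}, cycle=1)
trace.update({("has-ball", "qb")}, cycle=2)
trace.update({("has-ball", "wr")}, cycle=3)
handoff = before(trace.closed[0], trace.open[("has-ball", "wr")])
```

Retaining closed beliefs is what makes it possible to recognize ordered events, such as one player holding the ball before another.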

We have used the extended ICARUS to learn hierarchical skills for 20 distinct football plays (Li et al., AIIDE-2009).

These results utilized Oregon State’s image-processing system, which extracted objects and simple events from the videos.

Synthetic Agents for Twig Scenarios

Most recently, we have used Horswill’s (2008) Twig simulator to develop humanoid ICARUS agents.

This low-fidelity environment supports a few object types, along with simple reactive behaviors for virtual characters.

Synthetic Agents for Twig Scenarios

We have developed a specific Twig scenario that involves four types of synthetic characters:

Innocents go from ball to ball, pausing to pick up any dolls they encounter nearby.

Collectors sit in chairs, buy dolls from anyone who offers at the right price, and secure these purchases in safes.

Producers stand by trees, produce new dolls, and guard them by blocking any other agents who approach.

Capitalists work in pairs, buying or stealing dolls from Innocents, stealing them from Producers, and selling them to Collectors.

We have developed ICARUS agents for these character types to demonstrate their interactions with each other.

This served as the final project for an ASU course on cognitive systems and intelligent agents (http://circas.asu.edu/cogsys/).


Reasoning about Others

We designed ICARUS to model intelligent embodied agents, but our work has emphasized independent action.

• The framework can deal with other agents, but only by viewing them as objects in the environment.

Yet humans can reason about the beliefs, goals, and intentions of others, then use their inferences to make decisions.

• One might even argue that such deep social cognition is a key feature of human intelligence.

Adding this capability to ICARUS will require extensions to its representation, performance processes, and learning methods.

Ongoing ICARUS Extensions

To provide ICARUS with the capability to reason about others’ mental states, we are:

• Extending its representation to allow embedded modal literals [e.g., (belief me (goal driver2 (in-right-lane driver2 seg6)))];

• Revising inference to support incremental abductive reasoning that explains events in terms of mental states;

• Extending skill execution and problem solving to pursue goals that change mental states (e.g., by communication); and

• Introducing learning mechanisms that make inference about others more efficient with experience.
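As a rough illustration of embedded modal literals, the Python sketch below parses the example literal from the list above into nested tuples and extracts the goals one agent attributes to another. The parser and the attributed_goals helper are hypothetical conveniences, not part of ICARUS.

```python
def parse(text):
    """Parse one s-expression into nested tuples of strings."""
    tokens = text.replace("(", " ( ").replace(")", " ) ").split()

    def read(pos):
        if tokens[pos] == "(":
            items, pos = [], pos + 1
            while tokens[pos] != ")":
                item, pos = read(pos)
                items.append(item)
            return tuple(items), pos + 1     # skip the closing parenthesis
        return tokens[pos], pos + 1

    expression, _ = read(0)
    return expression


def attributed_goals(beliefs, holder, other):
    """Return the goals that `holder` believes `other` to have."""
    return [b[2][2] for b in beliefs
            if b[0] == "belief" and b[1] == holder
            and isinstance(b[2], tuple)
            and b[2][0] == "goal" and b[2][1] == other]


literal = parse("(belief me (goal driver2 (in-right-lane driver2 seg6)))")
goals = attributed_goals([literal], "me", "driver2")
```

Nesting the goal inside the belief keeps the agent's own goals separate from those it ascribes to others, which is the distinction the extended representation needs to capture.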

We are developing these abilities to construct conversational agents for emergency medical assistance in the field.

Synthetic Environments / Architectural Testbeds

In an STTR collaboration, we are using SET Corp.’s CASTLE, a flexible physics/simulation engine, to:

• Create an improved urban driving environment that supports a richer set of objects, goals, and activities;

• Develop a ‘treasure hunt’ testbed in which players must follow complex instructions and coordinate with other agents;

• Create interfaces to ICARUS, ACT-R, and other architectures;

• Design tasks of graded complexity that can aid in evaluating architecture-based cognitive models.

Together, these will let the community evaluate its architectures on common problems in shared testbeds.

These will support both extended, open-ended operations and brief, hand-crafted scenarios.

CASTLE Screen Shots / Assumptions

Rigid body dynamics — solid objects, Newtonian physics, friction, joints, springs; no flexible objects or fluids

Z-buffer graphics — solid objects, textures, lights; no shadows, motion blur, or irradiance

Concluding Remarks

In this talk, I presented ICARUS, a unified theory of the human cognitive architecture that:

• Grounds high-level cognition in perception and action

• Treats categories and skills as separate cognitive entities

• Views short-term structures as instances of long-term ones

• Organizes skills and concepts in a hierarchical manner

• Combines reactive control with deliberative problem solving

In addition, I reported a number of ICARUS agents that control synthetic characters in simulated environments.

These efforts raised theoretical challenges, led to extensions, and revealed insights about the nature of intelligence.

Other Research on Modeling / Simulation

Quantitative process models of ecological systems (Bridewell et al., MLj, 2008)

Qualitative system-level models of human aging (Langley, NIA-2009)

[Figure: qualitative aging model spanning the lysosome and cytoplasm, with positive (+) and negative (-) influences among junk-protein, Fe, ROS, oxidized-protein, lipofuscin, membrane-damage, lytic-enzyme, and H2O2]