Ch. 3 Simple Linear Regression


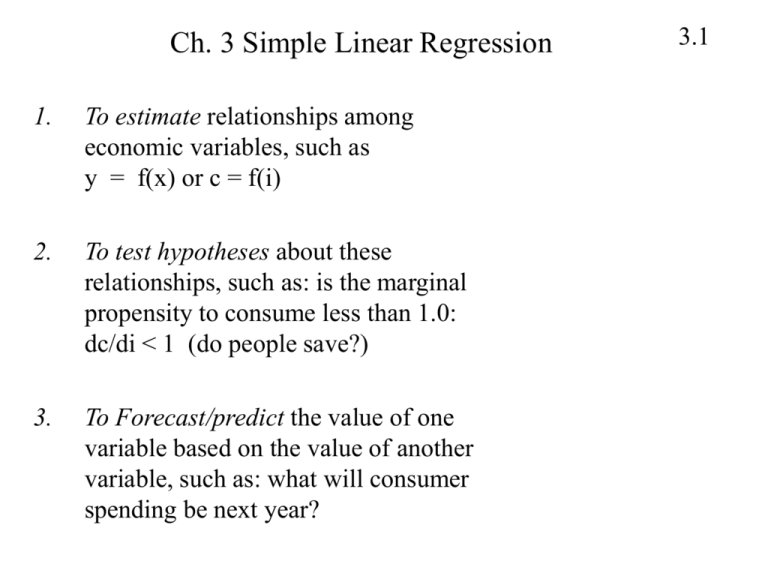

Purposes of regression analysis:

1. To estimate relationships among economic variables, such as y = f(x) or c = f(i).
2. To test hypotheses about these relationships, such as: is the marginal propensity to consume less than 1.0, that is, dc/di < 1 (do people save)?
3. To forecast or predict the value of one variable based on the value of another variable, such as: what will consumer spending be next year?

Population Regression Function

Y: the dependent variable, the variable whose behavior we seek to explain.
X: the independent variable, the variable that determines (in part) the variation in the dependent variable.

The function has two parts:

1) the deterministic portion: β1 + β2x
2) the stochastic portion: e

The Population Regression Function is:

$$y = \beta_1 + \beta_2 x + e$$

Example: Weekly Food Expenditures

yt = dollars spent each week on food items by household t
xt = weekly income of household t

Suppose we believe x and y have a linear, causal relationship whereby variation in x “causes” variation in y:

$$y = \beta_1 + \beta_2 x$$

However, it is unlikely that the relationship will be exact. We need to allow for an error term, e:

$$y = \beta_1 + \beta_2 x + e$$

The Error Term

The presence of the (unobservable) error term drives much of what we do. Suppose (incorrectly) that the relationship between X and Y did not have an error term, and suppose β1 = 30 and β2 = 0.15. Then for families with x = \$480:

$$y = \beta_1 + \beta_2 x = 30 + 0.15(480) = \$102$$

This means all families with a weekly income of \$480 would spend exactly \$102 per week on food. That is very unlikely. We are more likely to see variation in weekly food expenditures among the families who earn \$480 per week (or any other dollar amount).

The Error Term (con’t)

Y is a random variable because it is the sum of a non-random component (β1 + β2x) and a random variable (e).

The error term (e) picks up:

1. Omitted variables (unspecified factors) that influence the dependent variable.
2. Effects of a non-linear relationship between Y and X.
3. Unpredictable random behavior that may be unique to that observation.
(The model is one of behavior.)

Use Rules of Mathematical Expectation

From Chapter 2, you learned the following rules of mathematical expectation (the E(.) operator). Suppose that Z is a random variable and a is a constant (has no randomness):

$$E(Z + a) = E(Z) + a$$

Suppose that b is also a constant:

$$E(bZ + a) = bE(Z) + a$$

In both of these rules, the E(.) operator passes through constants and acts only on random variables.

Assumptions of the Model

1. The value of y, for each value of x, is y = β1 + β2x + e. The model is linear in the parameters (β1 and β2) and the error term is additive.
2. The average value of the random error e is E(e) = 0.
3. The variance of the random error e is var(e) = σ² = var(y) (homoskedasticity).
4. The error term is serially independent (uncorrelated with itself): cov(ei, ej) = cov(yi, yj) = 0 for i ≠ j (serial independence).

Assumptions of the Model (con’t)

5. The variable X is not random and must take at least two different values: cov(X, e) = 0 [or E(Xe) = 0, because E(e) = 0 by assumption 2].
6. (Optional) e is normally distributed with mean 0 and variance σ²: e ~ N(0, σ²).

[Figure: the probability density function f(yt) for yt at two levels of household income, x1 = 480 and x2 = 800.]

Goal of Regression Analysis

The economic model is: E(y|x) = β1 + β2x
The econometric model is: y = β1 + β2x + e

We want to estimate this mean. This mean is just a line with an intercept and slope.

β1 measures E(y|x = 0).
β2 measures the change in E(y) from a change in x:

$$\beta_2 = \frac{\Delta E(y|x)}{\Delta x} = \frac{dE(y|x)}{dx}$$

[Figure: probability density functions for e and y; f(e) is centered at 0 and f(y) is centered at β1 + β2x.]

The Framework

Population regression values: yt = β1 + β2xt + et (data on yt are generated by this econometric model).
Population regression line is the mean of y: E(yt|xt) = β1 + β2xt

The parameters of the model are:

β1, the intercept
β2, the slope
σ², the variance of the error term (et)

The Estimation

• The parameters β1, β2, and σ² are unknown to us and must be estimated.
• Take a sample of data on X and Y.
• The idea is to fit a line through the data points. Call this line the Sample Regression Line:

$$\hat{y}_t = b_1 + b_2 x_t$$

where b1 is the estimator for β1 and b2 is the estimator for β2.
• Define a residual as:

$$\hat{e}_t = y_t - \hat{y}_t$$

It measures the difference between the actual y value and the “fitted” (or “predicted”) value.

[Figure: scatter plot of the Table 3.1 Food Expenditure Data, with y = expenditure on the vertical axis and x = income on the horizontal axis.]

Estimating the Population Regression Line: The Least Squares Principle

• Any line through the data points will generate a set of residuals.
• We want the line that generates the smallest residuals.
• The smallest residuals are those whose sum of squares is the smallest. We call this the Method of Least Squares.

Obtaining the Least Squares Estimates

To obtain the least squares estimates of β1 and β2, we use calculus to find the values of the slope and intercept of the line that minimizes the sum of squared residuals. This will provide us with formulas, called the least squares estimators, that we can use in any regression problem.

Start from the model and solve for the error term:

$$y_t = \beta_1 + \beta_2 x_t + e_t \quad\Longrightarrow\quad e_t = y_t - \beta_1 - \beta_2 x_t$$

The sum of squared deviations:

$$S(\beta_1, \beta_2) = \sum_{t=1}^{T} e_t^2 = \sum_{t=1}^{T} (y_t - \beta_1 - \beta_2 x_t)^2$$

Minimize this sum with respect to β1 and β2 by taking two first derivatives: once with respect to β1 and again with respect to β2:

$$\frac{\partial S(\cdot)}{\partial \beta_1} = -2 \sum (y_t - \beta_1 - \beta_2 x_t)$$

$$\frac{\partial S(\cdot)}{\partial \beta_2} = -2 \sum (y_t - \beta_1 - \beta_2 x_t)\, x_t$$

When these two derivatives are set to zero, β1 and β2 become b1 and b2, because they no longer represent just any values of β1 and β2 but the special values that correspond to the minimum of S(.):

$$\text{\#1:}\quad -2 \sum (y_t - b_1 - b_2 x_t) = 0$$

$$\text{\#2:}\quad -2 \sum (y_t - b_1 - b_2 x_t)\, x_t = 0$$

We have two equations and two unknowns (b1 and b2). Therefore, solve these two equations for b1 and b2.
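Before solving by hand, it can help to see conditions #1 and #2 hold numerically. The sketch below is only an illustration under stated assumptions: the data are simulated (the sample size, parameter values, and error spread are hypothetical and are not the Table 3.1 data), and NumPy's `np.polyfit` stands in for the least squares solution.

```python
import numpy as np

# Hypothetical data: all numbers here are illustrative assumptions, not Table 3.1.
rng = np.random.default_rng(0)
T = 40
x = rng.uniform(200, 1200, size=T)   # weekly income
e = rng.normal(0, 30, size=T)        # random error with mean zero
y = 40.0 + 0.13 * x + e              # generated by y = beta1 + beta2*x + e


def sum_sq(beta1, beta2):
    """Sum of squared deviations S(beta1, beta2) for a candidate line."""
    return float(np.sum((y - beta1 - beta2 * x) ** 2))


# np.polyfit with degree 1 returns the least squares [slope, intercept].
b2, b1 = np.polyfit(x, y, 1)
resid = y - b1 - b2 * x

# Conditions #1 and #2 evaluated at (b1, b2); both should be numerically zero.
foc1 = -2 * np.sum(resid)
foc2 = -2 * np.sum(resid * x)
print(f"b1 = {b1:.4f}, b2 = {b2:.4f}")
print(f"condition #1: {foc1:.2e}, condition #2: {foc2:.2e}")

# Any other line gives a larger sum of squared deviations.
print(sum_sq(b1, b2) < sum_sq(b1 + 5, b2))
print(sum_sq(b1, b2) < sum_sq(b1, b2 + 0.01))
```

Because S(.) is a bowl-shaped (convex) function of the intercept and slope, moving either value away from the least squares pair raises the sum of squares, which is what the last two comparisons check.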
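Let’s start with #1:

$$
\begin{aligned}
-2 \sum (y_t - b_1 - b_2 x_t) &= 0 \\
\sum (y_t - b_1 - b_2 x_t) &= 0 \\
\sum y_t - \sum b_1 - b_2 \sum x_t &= 0 \\
\sum y_t - T b_1 - b_2 \sum x_t &= 0 \\
T b_1 &= \sum y_t - b_2 \sum x_t \\
b_1 &= \frac{\sum y_t}{T} - b_2 \frac{\sum x_t}{T}
\end{aligned}
$$

Now solve #2 for b2 and substitute in our formula for b1:

$$
\begin{aligned}
-2 \sum (y_t - b_1 - b_2 x_t)\, x_t &= 0 \\
\sum (y_t - b_1 - b_2 x_t)\, x_t &= 0 \\
\sum (y_t x_t - b_1 x_t - b_2 x_t x_t) &= 0 \\
\sum y_t x_t - b_1 \sum x_t - b_2 \sum x_t^2 &= 0 \\
\sum y_t x_t - \left( \frac{\sum y_t}{T} - b_2 \frac{\sum x_t}{T} \right) \sum x_t - b_2 \sum x_t^2 &= 0 \\
\sum y_t x_t - \frac{\sum y_t \sum x_t}{T} + b_2 \frac{\sum x_t \sum x_t}{T} - b_2 \sum x_t^2 &= 0 \\
\sum y_t x_t - \frac{\sum y_t \sum x_t}{T} + b_2 \left( \frac{\left(\sum x_t\right)^2}{T} - \sum x_t^2 \right) &= 0 \\
b_2 \left( \sum x_t^2 - \frac{\left(\sum x_t\right)^2}{T} \right) &= \sum y_t x_t - \frac{\sum y_t \sum x_t}{T}
\end{aligned}
$$

so that

$$b_2 = \frac{\sum y_t x_t - \dfrac{\sum y_t \sum x_t}{T}}{\sum x_t^2 - \dfrac{\left(\sum x_t\right)^2}{T}}
\quad\text{or:}\quad
b_2 = \frac{T \sum y_t x_t - \sum y_t \sum x_t}{T \sum x_t^2 - \left(\sum x_t\right)^2}$$

And from the solution to #1:

$$b_1 = \frac{\sum y_t}{T} - b_2 \frac{\sum x_t}{T} = \bar{y} - b_2 \bar{x}$$

Estimates for Food Expenditure Data

$$b_2 = 0.1283 \qquad b_1 = 40.7676$$

$$\hat{y}_t = 40.7676 + 0.1283\, x_t$$

How do we interpret these estimates?

[Figure: Regression of food expenditure on income. Scatter of Y against X with the fitted line ŷ = 0.1283x + 40.768.]

Regression Terminology

| Concept | Definition |
| --- | --- |
| Dependent variable (y) | The variable whose behavior we want to model |
| Independent variable (x) | The variable that we believe determines the dependent variable |
| Parameters (coefficients) β1 and β2 | Define the nature of the relationship between Y and X |
| Random error term (e) | We expect the relationship between X and Y to have a random element |
| Estimators b1 and b2 | Formulas explaining how to combine the sample data on X and Y to estimate the intercept and slope |
| Fitted line ŷt = b1 + b2xt | Predicted values for Y, using the estimates of intercept and slope |
| Residual êt = yt − ŷt | Difference between actual and predicted values of Y |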
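The least squares formulas for b1 and b2 derived above can be sketched in a few lines of Python. This is a minimal illustration, not the course's own code: the function name `least_squares` and the five-household sample are hypothetical (the sample is made up and is not the Table 3.1 data), and `np.polyfit` is used only as an independent cross-check.

```python
import numpy as np


def least_squares(x, y):
    """Return (b1, b2) from the closed-form least squares formulas."""
    x = np.asarray(x, dtype=float)
    y = np.asarray(y, dtype=float)
    T = len(x)
    # b2 = [T*sum(xy) - sum(x)*sum(y)] / [T*sum(x^2) - (sum(x))^2]
    b2 = (T * np.sum(x * y) - np.sum(x) * np.sum(y)) / (
        T * np.sum(x ** 2) - np.sum(x) ** 2
    )
    # b1 = ybar - b2 * xbar
    b1 = np.mean(y) - b2 * np.mean(x)
    return b1, b2


# Hypothetical sample of five households (illustrative, not Table 3.1).
x = np.array([300.0, 450.0, 600.0, 750.0, 900.0])   # weekly income
y = np.array([80.0, 95.0, 120.0, 135.0, 160.0])     # weekly food expenditure

b1, b2 = least_squares(x, y)
y_hat = b1 + b2 * x      # fitted (predicted) values
e_hat = y - y_hat        # residuals: actual minus fitted

print(f"b1 = {b1:.4f}, b2 = {b2:.4f}")               # b1 = 38.0000, b2 = 0.1333
print("matches np.polyfit:", np.allclose(np.polyfit(x, y, 1), [b2, b1]))
```

For this made-up sample the slope of about 0.1333 would be read as roughly 13 cents of additional weekly food spending per additional dollar of weekly income. The same reading applies to the estimates reported above: plugging x = 480 into the fitted line gives 40.7676 + 0.1283(480) ≈ 102.35 dollars of predicted weekly food expenditure.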