Phonetic features in ASR

Intensive course Dipartimento di Elettrotecnica ed Elettronica

Politecnico di Bari

22 – 26 March 1999

Jacques Koreman

Institute of Phonetics

University of the Saarland

P.O. Box 15 11 50

D - 66041 Saarbrücken

Germany

E-mail:

jkoreman@coli.uni-sb.de

Organisation of the course

• Tuesday – Friday:

- First half of each session: theory

- Second half of each session: practice

• Interruptions invited!!!

Overview of the course

1. Variability in the signal

2. Phonetic features in ASR

3. Deriving phonetic features from the acoustic signal by a Kohonen network

4. ICSLP’98: “Exploiting transitions and focussing on linguistic properties for ASR”

5. ICSLP’98: “Do phonetic features help to improve consonant identification in ASR?”

The goal of ASR systems

• Input: spectral description of microphone

signal, typically

- energy in band-pass filters

- LPC coefficients

- cepstral coefficients

• Output: linguistic units, usually phones or

phonemes (on the basis of which words can

be recognised)

Variability in the signal (1)

Main problem in ASR: variability in the input

signal

Example: /k/ has very different realisations

in different contexts. Its place of

articulation varies from velar before

back vowels to pre-velar before front

vowels

(own articulation of “keep”,“cool”)

Variability in the signal (2)

Main problem in ASR: variability in the input

signal

Example: /g/ in canonical form is sometimes

realised as a fricative or approximant,

e.g. intervocalically (OE. regen > E.

rain). In Danish, this happens to all

intervocalic voiced plosives; also,

voiceless plosives become voiced.

Variability in the signal (3)

Main problem in ASR: variability in the input

signal

Example: /h/ has very different realisations

in different contexts. It can be

considered as a voiceless realisation

of the surrounding vowels.

(spectrograms “ihi”, “aha”, “uhu”)

Variability in the signal (3a)

[ i: h i: ]   [ a: h a: ]   [ u: h u: ]

Variability in the signal (4)

Main problem in ASR: variability in the input

signal

Example: deletion of segments due to articulatory overlap. Friction is superimposed

on the vowel signal.

(spectrogram G.“System”)

Variability in the signal (4a)

p0 s i m a l z Y s p0 t ( [ b0 d e e m ]

Variability in the signal (5)

Main problem in ASR: variability in the input

signal

Example: the same vowel /a:/ is realised differently depending on its context.

(spectrogram “aba”, “ada”, “aga”)

Variability in the signal (5a)

[ a: b0 b a: ]   [ a: b0 d a: ]   [ a: b0 g a: ]

Modelling variability

• Hidden Markov models can represent the

variable signal characteristics of phones

(diagram: hidden Markov model running from S to E; transition labels 1, p1, p2, p3, 1-p1, 1-p2, 1-p3)
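The transition probabilities p1, p2, p3 named on this slide can be put into a transition matrix. The sketch below is only an illustration: it assumes (the slide does not say so) that each p is the probability of staying in a state and 1-p the probability of moving on, and the numeric values and the function name are invented.

```python
def left_to_right_transitions(self_loop_probs):
    """Transition matrix of a left-to-right HMM.

    Rows/columns are the emitting states followed by the exit state E.
    Assumption: state i repeats with probability p_i and advances with 1 - p_i.
    """
    n = len(self_loop_probs)
    A = [[0.0] * (n + 1) for _ in range(n + 1)]
    for i, p in enumerate(self_loop_probs):
        A[i][i] = p            # stay in the same state
        A[i][i + 1] = 1.0 - p  # move on to the next state (or to E)
    A[n][n] = 1.0              # E is absorbing
    return A


# Illustrative values for p1, p2, p3 (not taken from the course material).
probs = [0.6, 0.7, 0.5]
A = left_to_right_transitions(probs)

# Under this assumption the expected number of frames in state i is 1 / (1 - p_i),
# which is how one model can cover shorter and longer realisations of a phone.
expected_frames = [1.0 / (1.0 - p) for p in probs]
```

Because skipping states is not allowed in this sketch, every path from S to E visits all three states in order.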

Lexicon and language model (1)

• Linguistic knowledge about phone

sequences (lexicon, language model)

improves word recognition

• Without linguistic knowledge, low phone

accuracy

Lexicon and language model (2)

Using a lexicon and/or language model is not a

top-down solution to all problems: sometimes

pragmatic knowledge is needed.

Example: [rsp] can be “Recognise speech” or “Wreck a nice beach”

Lexicon and language model (3)

Using a lexicon and/or language model is not a

top-down solution to all problems: sometimes

pragmatic knowledge is needed.

Example: [p] can be “Get up at eight o’clock” or “Get a potato clock”

CONCLUSIONS

• The acoustic parameters (e.g. MFCC) are

very variable.

• We must try to improve phone accuracy by

extracting linguistic information.

• Rationale: word recognition rates will

increase if phone accuracy improves

• BUT: not all our problems can be solved

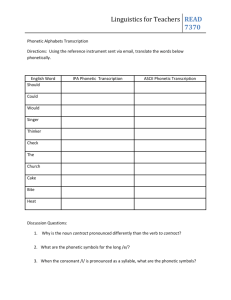

Practical:

Phonetic features in ASR

• Assumption: phone accuracy can be

improved by deriving phonetic features from

the spectral representation of the speech

signal

• What are phonetic features?

A phonetic description of sounds

• The articulatory organs

A phonetic description of sounds

• The articulation of consonants

velum (= soft palate)

tongue

A phonetic description of sounds

• The articulation of vowels

Phonetic features: IPA

• IPA (International Phonetic Alphabet) chart

- consonants and vowels

- only phonemic distinctions

(http://www.arts.gla.ac.uk/IPA/ipa.html)

The IPA chart (consonants)

The IPA chart (other consonants)

The IPA chart (non-pulm. cons.)

The IPA chart (vowels)

The IPA chart (diacritics)

IPA features (obstruents)

phone   lab den alv pal vel uvu glo plo fri nas lat apr tri voi
p0        0   0   0   0   0  -1  -1   1   0   0   0   0   0  -1
b0        0  -1   0   0   0  -1  -1   1   0   0   0   0   0   1
p         1  -1  -1  -1  -1  -1  -1   1  -1  -1  -1  -1  -1  -1
t        -1  -1   1  -1  -1  -1  -1   1  -1  -1  -1  -1  -1  -1
k        -1  -1  -1  -1   1  -1  -1   1  -1  -1  -1  -1  -1  -1
b         1  -1  -1  -1  -1  -1  -1   1  -1  -1  -1  -1  -1   1
d        -1  -1   1  -1  -1  -1  -1   1  -1  -1  -1  -1  -1   1
g        -1  -1  -1  -1   1  -1  -1   1  -1  -1  -1  -1  -1   1
f         1   1  -1  -1  -1  -1  -1  -1   1  -1  -1  -1  -1  -1
T        -1   1  -1  -1  -1  -1  -1  -1   1  -1  -1  -1  -1  -1
s        -1  -1   1  -1  -1  -1  -1  -1   1  -1  -1  -1  -1  -1
S        -1  -1   1   1  -1  -1  -1  -1   1  -1  -1  -1  -1  -1
C        -1  -1  -1   1  -1  -1  -1  -1   1  -1  -1  -1  -1  -1
x        -1  -1  -1  -1   1  -1  -1  -1   1  -1  -1  -1  -1  -1
vfri      1   1  -1  -1  -1  -1  -1  -1   1  -1  -1  -1  -1   1
vapr      1   1  -1  -1  -1  -1  -1  -1  -1  -1  -1   1  -1   1
Dfri     -1   1  -1  -1  -1  -1  -1  -1   1  -1  -1  -1  -1   1
z        -1  -1   1  -1  -1  -1  -1  -1   1  -1  -1  -1  -1   1
Z        -1  -1   1   1  -1  -1  -1  -1   1  -1  -1  -1  -1   1

IPA features (sonorants)

phone   lab den alv pal vel uvu glo plo fri nas lat apr tri voi
m         1  -1  -1  -1  -1  -1  -1  -1  -1   1  -1  -1  -1   1
n        -1  -1   1  -1  -1  -1  -1  -1  -1   1  -1  -1  -1   1
J        -1  -1  -1   1  -1  -1  -1  -1  -1   1  -1  -1  -1   1
N        -1  -1  -1  -1   1  -1  -1  -1  -1   1  -1  -1  -1   1
l        -1  -1   1  -1  -1  -1  -1  -1  -1  -1   1   1  -1   1
L        -1  -1  -1   1  -1  -1  -1  -1  -1  -1   1   1  -1   1
rret     -1  -1   1  -1  -1  -1  -1  -1  -1  -1  -1   1  -1   1
ralv     -1  -1   1  -1  -1  -1  -1  -1  -1  -1  -1  -1   1   1
Ruvu     -1  -1  -1  -1  -1   1  -1  -1  -1  -1  -1  -1   1   1
j        -1  -1  -1   1  -1  -1  -1  -1  -1  -1  -1   1  -1   1
w         1  -1  -1  -1   1  -1  -1  -1  -1  -1  -1   1  -1   1
h        -1  -1  -1  -1  -1  -1   1  -1   1  -1  -1  -1  -1  -1
...       0   0   0   0   0   0   0   0   0   0   0   0   0   0

A zero value is assigned to all vowel features (not listed here)

IPA features (vowels)

phone   mid ope fro cen rou
i        -1  -1   1  -1  -1
y        -1  -1   1  -1   1
u        -1  -1  -1  -1   1
e         1  -1   1  -1  -1
o         1  -1  -1  -1   1
V         1   1  -1  -1  -1
Uschwa    1  -1  -1   1   1
a        -1   1   1   1  -1
E         1   1   1  -1  -1
3         1   1   1   1  -1
@         1   1  -1   1  -1

I

Y

U

2

O

Q

{

A

9

m

i

d

-1

-1

-1

1

1

-1

-1

-1

1

6

-1

o

p

e

-1

-1

-1

-1

1

1

1

1

1

f

r

o

1

1

-1

1

-1

-1

1

-1

1

c r

e o

n u

1 -1

1 1

1 1

-1 1

-1 1

-1 1

-1 -1

-1 -1

-1 1

1 -1

1 -1

A zero value is assigned to all consonant features (not listed

here)

Phonetic features

• Phonetic features

- different systems (JFH, SPE, art. feat.)

- distinction between “natural classes”

which undergo the same phonological

processes

SPE features (obstruents)

phone   cns syl nas son low hig cen bac rou ant cor cnt voi lat str ten
p0        1  -1  -1  -1  -1   0   0   0  -1   0   0  -1  -1  -1  -1   1
b0        1  -1  -1  -1  -1   0   0   0  -1   0   0  -1   1  -1  -1  -1
p         1  -1  -1  -1  -1  -1   0  -1  -1   1  -1  -1  -1  -1  -1   1
b         1  -1  -1  -1  -1  -1   0  -1  -1   1  -1  -1   1  -1  -1  -1
tden      1  -1  -1  -1  -1  -1   0  -1  -1   1   1  -1  -1  -1  -1   1
t         1  -1  -1  -1  -1  -1   0  -1  -1   1   1  -1  -1  -1  -1   1
d         1  -1  -1  -1  -1  -1   0  -1  -1   1   1  -1   1  -1  -1  -1
k         1  -1  -1  -1  -1   1   0   1  -1  -1  -1  -1  -1  -1  -1   1
g         1  -1  -1  -1  -1   1   0   1  -1  -1  -1  -1   1  -1  -1  -1
f         1  -1  -1  -1  -1  -1   0  -1  -1   1  -1   1  -1  -1   1   1
vfri      1  -1  -1  -1  -1  -1   0  -1  -1   1  -1   1   1  -1   1  -1
T         1  -1  -1  -1  -1  -1   0  -1  -1   1   1   1  -1  -1  -1   1
Dfri      1  -1  -1  -1  -1  -1   0  -1  -1   1   1   1   1  -1  -1  -1
s         1  -1  -1  -1  -1  -1   0  -1  -1   1   1   1  -1  -1   1   1
z         1  -1  -1  -1  -1  -1   0  -1  -1   1   1   1   1  -1   1  -1
S         1  -1  -1  -1  -1   1   0  -1  -1  -1   1   1  -1  -1   1   1
Z         1  -1  -1  -1  -1   1   0  -1  -1  -1   1   1   1  -1   1  -1
C         1  -1  -1  -1  -1   1   0  -1  -1  -1  -1   1  -1  -1   1   1
x         1  -1  -1  -1  -1   1   0   1  -1  -1  -1   1  -1  -1   1   1

SPE features (sonorants)

phone   cns syl nas son low hig cen bac rou ant cor cnt voi lat str ten
m         1  -1   1   1  -1  -1   0  -1  -1   1  -1  -1   1  -1  -1   0
n         1  -1   1   1  -1  -1   0  -1  -1   1   1  -1   1  -1  -1   0
J         1  -1   1   1  -1   1   0  -1  -1  -1  -1  -1   1  -1  -1   0
N         1  -1   1   1  -1   1   0   1  -1  -1  -1  -1   1  -1  -1   0
l         1  -1  -1   1  -1  -1   0  -1  -1   1   1   1   1   1  -1   0
L         1  -1  -1   1  -1   1   0  -1  -1  -1  -1   1   1   1  -1   0
ralv      1  -1  -1   1  -1  -1   0  -1  -1   1   1   1   1  -1  -1   0
Ruvu      1  -1  -1   1  -1  -1   0   1  -1  -1  -1   1   1  -1  -1   0
rret      1  -1  -1   1  -1  -1   0  -1  -1  -1   1   1   1  -1  -1   0
j        -1  -1  -1   1  -1   1   0  -1  -1  -1  -1   1   1  -1  -1   0
vapr     -1  -1  -1   1  -1  -1   0  -1  -1   1  -1   1   1  -1  -1   0
w        -1  -1  -1   1  -1   1   0   1   1   1  -1   1   1  -1  -1   0
h        -1  -1  -1   1   1  -1   0  -1  -1  -1  -1   1  -1  -1  -1   0
XXX       0   0   0   0   0   0   0   0   0   0   0   0   0   0   0   0

SPE features (vowels)

phone   cns syl nas son low hig cen bac rou ant cor cnt voi lat str ten
i        -1   1  -1   1  -1   1  -1  -1  -1  -1  -1   1   1  -1  -1   1
I        -1   1  -1   1  -1   1  -1  -1  -1  -1  -1   1   1  -1  -1  -1
e        -1   1  -1   1  -1  -1  -1  -1  -1  -1  -1   1   1  -1  -1   1
E        -1   1  -1   1  -1  -1  -1  -1  -1  -1  -1   1   1  -1  -1  -1
{        -1   1  -1   1   1  -1  -1  -1  -1  -1  -1   1   1  -1  -1  -1
a        -1   1  -1   1   1  -1  -1  -1  -1  -1  -1   1   1  -1  -1   1
y        -1   1  -1   1  -1   1  -1  -1   1  -1  -1   1   1  -1  -1   1
Y        -1   1  -1   1  -1   1  -1  -1   1  -1  -1   1   1  -1  -1  -1
2        -1   1  -1   1  -1  -1  -1  -1   1  -1  -1   1   1  -1  -1   1
9        -1   1  -1   1  -1  -1  -1  -1   1  -1  -1   1   1  -1  -1  -1
A        -1   1  -1   1   1  -1  -1   1  -1  -1  -1   1   1  -1  -1  -1
Q        -1   1  -1   1   1  -1  -1   1   1  -1  -1   1   1  -1  -1  -1
V        -1   1  -1   1  -1  -1  -1   1  -1  -1  -1   1   1  -1  -1  -1
O        -1   1  -1   1  -1  -1  -1   1   1  -1  -1   1   1  -1  -1  -1
o        -1   1  -1   1  -1  -1  -1   1   1  -1  -1   1   1  -1  -1   1
U        -1   1  -1   1  -1   1  -1   1   1  -1  -1   1   1  -1  -1  -1
u        -1   1  -1   1  -1   1  -1   1   1  -1  -1   1   1  -1  -1   1
Uschwa   -1   1  -1   1  -1  -1   1  -1   1  -1  -1   1   1  -1  -1  -1
3        -1   1  -1   1  -1  -1   1  -1  -1  -1  -1   1   1  -1  -1   1
@        -1   1  -1   1  -1  -1   1  -1  -1  -1  -1   1   1  -1  -1  -1
6        -1   1  -1   1   1  -1   1  -1  -1  -1  -1   1   1  -1  -1  -1

CONCLUSION

• Different feature matrices have different

implications for relations between phones
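One way to see this conclusion is to count the features on which two phones differ under each matrix. The sketch below copies the rows for p, b and t from the IPA and SPE obstruent matrices on the preceding slides; the function name and the idea of using a simple feature count as a distance are illustrative assumptions, not part of the course material.

```python
IPA_FEATURES = ["lab", "den", "alv", "pal", "vel", "uvu", "glo",
                "plo", "fri", "nas", "lat", "apr", "tri", "voi"]
SPE_FEATURES = ["cns", "syl", "nas", "son", "low", "hig", "cen", "bac",
                "rou", "ant", "cor", "cnt", "voi", "lat", "str", "ten"]

# Rows copied from the obstruent matrices (order as in the feature lists).
IPA = {
    "p": [1, -1, -1, -1, -1, -1, -1, 1, -1, -1, -1, -1, -1, -1],
    "b": [1, -1, -1, -1, -1, -1, -1, 1, -1, -1, -1, -1, -1, 1],
    "t": [-1, -1, 1, -1, -1, -1, -1, 1, -1, -1, -1, -1, -1, -1],
}
SPE = {
    "p": [1, -1, -1, -1, -1, -1, 0, -1, -1, 1, -1, -1, -1, -1, -1, 1],
    "b": [1, -1, -1, -1, -1, -1, 0, -1, -1, 1, -1, -1, 1, -1, -1, -1],
    "t": [1, -1, -1, -1, -1, -1, 0, -1, -1, 1, 1, -1, -1, -1, -1, 1],
}


def differing_features(matrix, names, a, b):
    """Names of the features on which phones a and b have different values."""
    return [n for n, x, y in zip(names, matrix[a], matrix[b]) if x != y]


print(differing_features(IPA, IPA_FEATURES, "p", "b"))  # ['voi']
print(differing_features(IPA, IPA_FEATURES, "p", "t"))  # ['lab', 'alv']
print(differing_features(SPE, SPE_FEATURES, "p", "b"))  # ['voi', 'ten']
print(differing_features(SPE, SPE_FEATURES, "p", "t"))  # ['cor']
```

With the IPA matrix, p is closer to b than to t; with the SPE matrix it is the other way round, because voicing is coded by two features (voi, ten) and place by one (cor).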

Practical:

Kohonen networks

• Kohonen networks are unsupervised neural

networks

• Our Kohonen networks take vectors of

acoustic parameters (MFCC_E_D) as input

and output phonetic feature vectors

• Network size: 50 x 50 neurons

Training the Kohonen network

1. Self-organisation results in a phonotopic

map

2. Phone calibration attaches array of phones

to each winning neuron

3. Feature calibration replaces array of

phones by array of phonetic feature vectors

4. Averaging of phonetic feature vectors for

each neuron
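The four training steps can be sketched as follows. This is a toy illustration and not the authors' implementation: the map is 3 x 3 instead of 50 x 50, the input vectors are 2-dimensional stand-ins for MFCC_E_D frames, and the learning-rate and neighbourhood schedule, the function names and the two-feature matrix are all assumptions.

```python
import random


def nearest(weights, x):
    """Index of the neuron whose weight vector is closest to x."""
    return min(range(len(weights)),
               key=lambda k: sum((w - v) ** 2 for w, v in zip(weights[k], x)))


def self_organise(frames, rows, cols, epochs=20, seed=0):
    """Step 1: unsupervised training of a rows x cols map."""
    rng = random.Random(seed)
    dim = len(frames[0])
    weights = [[rng.random() for _ in range(dim)] for _ in range(rows * cols)]
    for epoch in range(epochs):
        rate = 0.5 * (1 - epoch / epochs)  # decaying learning rate
        radius = max(1, round((max(rows, cols) / 2) * (1 - epoch / epochs)))
        for x in frames:
            wr, wc = divmod(nearest(weights, x), cols)
            for k in range(rows * cols):
                r, c = divmod(k, cols)
                # Move the winner and its grid neighbours towards the frame.
                if abs(r - wr) <= radius and abs(c - wc) <= radius:
                    weights[k] = [w + rate * (v - w)
                                  for w, v in zip(weights[k], x)]
    return weights


def calibrate(weights, frames, labels, feature_matrix):
    """Steps 2-4: phone calibration, feature calibration, averaging."""
    phones = {}  # winning neuron -> array of phone labels
    for x, phone in zip(frames, labels):
        phones.setdefault(nearest(weights, x), []).append(phone)
    averaged = {}
    for k, plist in phones.items():
        vectors = [feature_matrix[p] for p in plist]  # phones -> feature vectors
        averaged[k] = [sum(col) / len(col) for col in zip(*vectors)]
    return averaged


# Toy data: two clusters of labelled frames standing in for two phones.
frames = [[0.1, 0.1], [0.2, 0.1], [0.9, 0.8], [0.8, 0.9]]
labels = ["p", "p", "b", "b"]
features = {"p": [1, -1], "b": [1, 1]}  # hypothetical [lab, voi] values
som = self_organise(frames, rows=3, cols=3)
neuron_features = calibrate(som, frames, labels, features)
```

A neuron that wins for frames of several phones ends up with averaged feature values between -1 and 1, which is how the many-to-one mapping arises.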

Mapping with the Kohonen network

• Acoustic parameter vector belonging to

one frame activates neuron

• Weighted average of phonetic feature

vector attached to winning neuron and

K-nearest neurons is output
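The mapping step might look like the sketch below. The slide gives neither the weighting scheme nor the sense of "nearest", so two assumptions are made here: the K neurons are those nearest to the frame in acoustic (weight) space, and the weights are inverse distances. Names and values are hypothetical.

```python
def map_frame(weights, neuron_features, x, k=3):
    """Weighted average of the feature vectors of the k calibrated
    neurons whose weight vectors are nearest to the frame x."""
    scored = []
    for idx, feats in neuron_features.items():
        dist = sum((w - v) ** 2 for w, v in zip(weights[idx], x)) ** 0.5
        scored.append((dist, feats))
    scored.sort(key=lambda item: item[0])  # the winning neuron comes first
    nearest_k = scored[:k]
    # Inverse-distance weighting (assumption); the small constant avoids
    # division by zero when the frame coincides with a weight vector.
    w = [1.0 / (d + 1e-9) for d, _ in nearest_k]
    total = sum(w)
    dim = len(nearest_k[0][1])
    return [sum(wi * f[j] for wi, (_, f) in zip(w, nearest_k)) / total
            for j in range(dim)]


# Three calibrated neurons with 2-dimensional weights and feature vectors.
weights = {0: [0.0, 0.0], 1: [1.0, 0.0], 2: [0.0, 1.0]}
neuron_features = {0: [1.0, -1.0], 1: [1.0, 1.0], 2: [-1.0, -1.0]}
out = map_frame(weights, neuron_features, [0.0, 0.0], k=2)
```

In this example the frame coincides with neuron 0, so the output is dominated by that neuron's feature vector.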

Advantages of Kohonen networks

• Reduction of feature dimensions possible

• Mapping onto linguistically meaningful

dimensions (phonetically less severe

confusions)

• Many-to-one mapping allows mapping of

different allophones (acoustic variability)

onto the same phonetic feature values

• Automatic and fast mapping

Disadvantages of Kohonen networks

• They need to be trained on manually

segmented and labelled material

• BUT: cross-language training has been

shown to be successful

Hybrid ASR system

(system diagram: MFCC’s + energy and delta parameters → Kohonen networks → phonetic features → hidden Markov modelling → phone; lexicon and language model also shown)

CONCLUSION

• Acoustic-phonetic mapping extracts

linguistically relevant information from the

variable input signal.

Practical:

ICSLP’98

Exploiting transitions and focussing on

linguistic properties for ASR

Jacques Koreman

William J. Barry

Bistra Andreeva

Institute of Phonetics, University of the Saarland

Saarbrücken, Germany

INTRODUCTION

Variation in the speech signal caused by coarticulation between sounds is one of the main challenges in

ASR.

• Exploit variation if you cannot reduce it

Coarticulatory variation causes vowel transitions

to be acoustically less homogeneous, but at the

same time provides information about neighbouring sounds which can be exploited (experiment 1).

• Reduce variation if you cannot exploit it

Some of the variation is not relevant for the phonemic identity of the sounds. Mapping of acoustic

parameters onto IPA-based phonetic features like

[± plosive] and [± alveolar] extracts only linguistically relevant properties before hidden Markov

modelling is applied (experiment 2).

INTRODUCTION

No lexicon or language model

The controlled experiments presented here reflect our general

aim of using phonetic knowledge to improve the ASR system

architecture. In order to evaluate the effect of the changes in

bottom-up processing, no lexicon or language model is used.

Both improve phone identification in a top-down manner by

preventing the identification of inadmissible words (lexical

gaps or phonotactic restrictions) or word sequences.

DATA

Texts

English, German, Italian and Dutch texts from the EUROM0

database, read by 2 male + 2 female speakers per language

DATA

Signals

• 12 mel-frequency cepstral coefficients (MFCC’s)

• energy

• corresponding delta parameters

Hamming window: 15 ms

step size: 5 ms

pre-emphasis: 0.97

16 kHz microphone signals
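The analysis settings on this slide translate into frame sizes as sketched below. Only pre-emphasis, framing and windowing are shown; the MFCC computation itself is omitted. The function names, the test signal and the convention of leaving the first sample unchanged in pre-emphasis are assumptions.

```python
import math

SAMPLE_RATE = 16000                  # 16 kHz microphone signals
WINDOW = SAMPLE_RATE * 15 // 1000    # 15 ms Hamming window -> 240 samples
STEP = SAMPLE_RATE * 5 // 1000       # 5 ms step size       -> 80 samples


def pre_emphasise(signal, coeff=0.97):
    """y[n] = x[n] - coeff * x[n-1]; the first sample is kept unchanged."""
    return [signal[0]] + [signal[n] - coeff * signal[n - 1]
                          for n in range(1, len(signal))]


def hamming(n):
    """Hamming window of n samples."""
    return [0.54 - 0.46 * math.cos(2 * math.pi * i / (n - 1)) for i in range(n)]


def frames(signal, window=WINDOW, step=STEP):
    """Cut the signal into overlapping, Hamming-windowed frames."""
    win = hamming(window)
    return [[s * w for s, w in zip(signal[start:start + window], win)]
            for start in range(0, len(signal) - window + 1, step)]


# One second of a 440 Hz sine as a stand-in for a speech signal.
one_second = [math.sin(2 * math.pi * 440 * t / SAMPLE_RATE)
              for t in range(SAMPLE_RATE)]
windowed = frames(pre_emphasise(one_second))

# 12 MFCCs + energy = 13 static parameters; with their deltas, 26 per frame.
VECTOR_SIZE = 2 * (12 + 1)
```

With a 5 ms step, consecutive 15 ms frames overlap by two thirds, and one second of signal yields 198 complete frames.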

DATA

Labels

• Intervocalic consonants labelled with SAMPA symbols,

except plosives and affricates, which are divided into

closure and frication subphone units

• 35-ms vowel transitions labelled as

i_lab, alv_O (experiment 1)

V_lab, alv_V (experiment 2)

where lab, alv = cons. generalized across place; V = generalized vowel

EXPERIMENT 1: SYSTEM

(system diagram: MFCC’s + energy + delta parameters for C or for Voffset - C - Vonset → hidden Markov modelling → consonant; lexicon and language model also shown)

EXPERIMENT 1: RESULTS

Identification rate (%)

            no V transitions   V transitions
consonant        13.17             15.83
place            26.57             41.97
manner           46.79             44.78

EXPERIMENT 1: CONCLUSIONS

When vowel transitions are used:

• consonant identification rate improves

• place better identified

• manner identified worse, because hidden Markov

models for vowel transitions generalize across

all consonants sharing the same place of articulation (solution: do not pool consonants sharing

the same place of articulation)

• vowel transitions can be exploited for identification of the

consonant, particularly its place of articulation

EXPERIMENT 2: SYSTEM

(system diagram: MFCC’s + energy and delta parameters for C → Kohonen networks → phonetic features → hidden Markov modelling → consonant; lexicon and language model also shown)

EXPERIMENT 2: RESULTS

Identification rate (%)

            no mapping   mapping
consonant      13.17      52.00
place          26.57      66.12
manner         46.79      77.70

EXPERIMENT 2: CONCLUSIONS

When acoustic-phonetic mapping is applied:

• consonant identification rate improves strongly

• place better identified

• manner better identified

• phonetic features better address linguistically relevant

information than acoustic parameters

EXPERIMENT 3: SYSTEM

(system diagram: MFCC’s + energy and delta parameters for C or for Voffset - C - Vonset → Kohonen networks → phonetic features → hidden Markov modelling → consonant; lexicon and language model also shown)

EXPERIMENT 3: RESULTS

Identification rate (%)

            mapping, no V transitions   mapping, V transitions
consonant            52.00                      52.23
place                66.12                      67.71
manner               77.70                      76.67

EXPERIMENT 3: CONCLUSIONS

When transitions are used for acoustic-phonetic

mapping:

• consonant identification rate does not improve

• place identification improves slightly

• manner identification rate decreases slightly

Vowel transitions do not increase the identification rate:

• because the baseline identification rate is already high

• because vowel transitions are undertrained in the Kohonen networks

INTERPRETATION (1)

• The greatest improvement in consonant identification is

achieved in experiment 2. By mapping acoustically different

realisations of consonants onto more similar phonetic

features, the input to hidden Markov modelling becomes more

homogeneous, leading to a higher consonant identification

rate.

• Using vowel transitions also leads to a higher consonant

identification rate in experiment 1. It was shown that

particularly the consonants’ place is identified better. Findings

confirm the importance of transitions as known from

perceptual experiments.

INTERPRETATION (2)

• The additional use of vowel transitions when acoustic-phonetic

mapping is applied does not improve the identification results.

Two possible explanations for this have been suggested:

- the identification rates are high anyway when mapping is applied, so that it is less likely that large improvements are found

- the generalized vowel transitions are undertrained in the Kohonen networks, because the intrinsically variable frames are spread over a larger area in the phonotopic map.

The latter interpretation is currently being verified by Sibylle

Kötzer by applying the methodology to a larger database

(TIMIT).

REFERENCES (1)

Bitar, N. & Espy-Wilson, C. (1995a). Speech parameterization

based on phonetic features: application to speech recognition.

Proc. 4th Eurospeech, 1411-1414.

Cassidy, S. & Harrington, J. (1995). The place of articulation

distinction in voiced oral stops: evidence from burst spectra

and formant transitions. Phonetica 52, 263-284.

Delattre, P., Liberman, A. & Cooper, F. (1955). Acoustic loci

and transitional cues for consonants. JASA 27(4), 769-773.

Furui, S. (1986). On the role of spectral transitions for speech

perception. JASA 80(4), 1016-1025.

Koreman, J., Andreeva, B. & Barry, W.J. (1998). Do phonetic

features help to improve consonant identification in ASR?

Proc. ICSLP.

REFERENCES (2)

Koreman, J., Barry, W.J. & Andreeva, B. (1997). Relational

phonetic features for consonant identification in a hybrid ASR

system. PHONUS 3, 83-109. Saarbrücken (Germany): Institute

of Phonetics, University of the Saarland.

Koreman, J., Erriquez, A. & Barry, W.J. (to appear). On

the selective use of acoustic parameters for consonant

identification. PHONUS 4. Saarbrücken (Germany): Institute

of Phonetics, University of the Saarland.

Stevens, K. & Blumstein, S. (1978). Invariant cues for place of

articulation in stop consonants.

JASA 64(5), 1358-1368.

SUMMARY

• Acoustic-phonetic mapping by a Kohonen

network improves consonant identification

rates.

Practical:

ICSLP’98

Do phonetic features help to improve

consonant identification in ASR?

Jacques Koreman

Bistra Andreeva

William J. Barry

Institute of Phonetics, University of the Saarland

Saarbrücken, Germany

INTRODUCTION

Variation in the acoustic signal is not a problem for human

perception, but causes inhomogeneity in the phone models for

ASR, leading to poor consonant identification. We should

“directly target the linguistic information in the signal

and ... minimize other extra-linguistic information

that may yield large speech variability”

(Bitar & Espy-Wilson 1995a, p. 1411)

Bitar & Espy-Wilson do this by using a knowledge-based event-seeking approach for extracting phonetic features from the

microphone signal on the basis of acoustic cues.

We propose an acoustic-phonetic mapping procedure on the basis

of a Kohonen network.

DATA

Texts

English, German, Italian and Dutch texts from the EUROM0

database, read by 2 male + 2 female speakers per language

DATA

Signals

• 12 mel-frequency cepstral coefficients (MFCC’s)

• energy

• corresponding delta parameters

Hamming window: 15 ms

step size: 5 ms

pre-emphasis: 0.97

16 kHz microphone signals

DATA (1)

Labels

The consonants were transcribed with SAMPA symbols, except:

• plosives and affricates are subdivided into a closure (“p0” = voiceless closure; “b0” = voiced closure) and a burst-plus-aspiration (“p”, “t”, “k”) or frication part (“f”, “s”, “S”, “z”, “Z”)

• Italian geminates were pooled with non-geminates to prevent

undertraining of geminate consonants

• The Dutch voiced velar fricative [ɣ], which only occurs in

some dialects, was pooled with its voiceless counterpart

[x] to prevent undertraining

DATA (2)

Labels

• SAMPA symbols are phonemic within a language, but can

represent different allophones cross-linguistically. These were

relabelled as shown in the table below:

SAMPA   allophone label   description         language
r       rapr              alv. approx.        English
r       ralv              alveolar trill      It., Dutch
        Ruvu              uvular trill        G., Dutch
v       vapr              labiod. approx.     German
v       vfri              vd. labiod. fric.   E., It., NL
w       vapr              labiod. approx.     Dutch
w       w                 bilab. approx.      Engl., It.

SYSTEM ARCHITECTURE

(system diagram: MFCC’s + energy and delta parameters for C → Kohonen networks → phonetic features → hidden Markov modelling → consonant; lexicon and language model also shown)

CONFUSIONS BASELINE

(by Attilio Erriquez)

phonetic categories:

manner, place, voicing

1 category wrong

2 categories wrong

3 categories wrong

CONFUSIONS MAPPING

(by Attilio Erriquez)

phonetic categories:

manner, place, voicing

1 category wrong

2 categories wrong

3 categories wrong

ACIS = (total of all correct identification percentages) / (number of consonants to be identified)

The Average Correct Identification Score compensates for the

number of occurrences in the database, giving each consonant

equal weight.

It is the total of all percentage numbers along the diagonal of

the confusion matrix divided by the number of consonants.

Baseline system:

31.22 %

Mapping system:

68.47 %
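A minimal sketch of the ACIS computation from a confusion matrix of counts. The toy counts and the function name are invented for illustration; they are not the course data.

```python
def acis(confusions):
    """Average Correct Identification Score.

    confusions[produced][identified] holds counts. Each row is turned into
    a percentage correct, and the percentages are averaged so that every
    consonant has equal weight regardless of how often it occurs.
    """
    scores = []
    for phone, row in confusions.items():
        total = sum(row.values())
        scores.append(100.0 * row.get(phone, 0) / total)
    return sum(scores) / len(scores)


# Toy matrix: "p" is frequent and well identified, "t" is rare and poorly identified.
toy = {
    "p": {"p": 90, "t": 10},
    "t": {"p": 1, "t": 1},
}
print(acis(toy))  # (90 % + 50 %) / 2 = 70.0
```

The plain proportion of correct tokens in the toy matrix is 91 out of 102 (about 89 %), which shows how ACIS keeps a frequent consonant from dominating the score.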

BASELINE SYSTEM

• good identification of language-specific phones

• reason: acoustic homogeneity

• poor identification of other phones

% correct

cons.   baseline   mapping   language
[]        100.0      75.0    German
[]        100.0     100.0    Italian
[]        100.0     100.0    Italian
[]         97.8      91.3    English
w          94.1     100.0    Engl., It.
[]         91.2      96.5    English
x          88.2      93.4    G, NL

MAPPING SYSTEM

• good identification, also of acoustically variable phones

• reason: variable acoustic parameters are mapped onto

homogenous, distinctive phonetic features

% correct

cons.   baseline   mapping   language
h           6.7      86.7    E, G, NL
[]          0.0      58.2    all
b           0.0      44.0    all
d           0.4      36.9    all
[]          5.9      38.3

AFFRICATES (1)

% correct

cons.   baseline   mapping   language
pf          0.0     100.0    German
f           1.2      64.4    all
ts          0.0      72.2    German, It.
s           3.1      64.7    all
tS          0.0      40.2    E., G., It.
S          78.1      90.6    all
dz          0.0      70.3    Italian
z          10.4      50.5    all
dZ         28.0      96.0    English, It.
Z       no intervocalic realisations

AFFRICATES (2)

• affricates, although restricted to fewer languages, are

recognised poorly in the baseline system

• reason: they are broken up into closure and frication segments,

which are trained separately in the Kohonen networks; these

segments occur in all languages and are acoustically variable,

leading to poor identification

• this is corroborated by the poor identification rates for

fricatives in the baseline system (exception: //, which only

occurs rarely)

• after mapping, both fricatives and affricates are identified well

APMS = (phonetic misidentification coefficient) / (sum of the misidentification percentages)

The Average Phonetic Misidentification Score gives a measure of the severity of the consonant confusions in terms of phonetic features.

The phonetic misidentification coefficient (the numerator) is the sum of all products of the misidentification percentages (in the non-diagonal cells) times the number of misidentified phonetic categories (manner, place and voicing). It is divided by the total of all the percentage numbers in the non-diagonal cells.

Baseline system:

1.79

Mapping system:

1.57
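A minimal sketch of the APMS computation. The category table, the toy percentages and the function names are invented for illustration; only the formula follows the definition above.

```python
# (manner, place, voicing) per consonant; illustrative entries only.
CATEGORIES = {
    "p": ("plosive", "labial", "voiceless"),
    "b": ("plosive", "labial", "voiced"),
    "t": ("plosive", "alveolar", "voiceless"),
    "z": ("fricative", "alveolar", "voiced"),
}


def categories_wrong(produced, identified):
    """Number of phonetic categories (0-3) on which two consonants differ."""
    return sum(x != y for x, y in zip(CATEGORIES[produced],
                                      CATEGORIES[identified]))


def apms(percentages):
    """Average Phonetic Misidentification Score.

    percentages[produced][identified] holds row percentages. Each
    non-diagonal cell is weighted by the number of categories that are
    wrong; the sum is divided by the total of the non-diagonal cells.
    """
    weighted = 0.0
    total = 0.0
    for produced, row in percentages.items():
        for identified, pct in row.items():
            if identified != produced:
                weighted += pct * categories_wrong(produced, identified)
                total += pct
    return weighted / total


toy = {
    "p": {"p": 70.0, "b": 20.0, "z": 10.0},
    "t": {"t": 80.0, "p": 20.0},
}
print(apms(toy))  # (20*1 + 10*3 + 20*1) / (20 + 10 + 20) = 1.4
```

The score lies between 1 (every confusion is wrong in one category only) and 3 (every confusion is wrong in manner, place and voicing), so a lower value means phonetically less severe confusions.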

APMS = (phonetic misidentification coefficient) / (sum of the misidentification percentages)

• after mapping, incorrectly identified consonant is on average

closer to the phonetic identity of the consonant which was

produced

• reason: the Kohonen network is able to extract linguistically

distinctive phonetic features which allow for a better

separation of the consonants in hidden Markov modelling.

CONSONANT CONFUSIONS

cons. identified as

r

(61%), (16%), w

(13%)

BASELINE

j

(53%), j (18%),

(12%),

(6%), r (6%), (6%)

m

w (23%), (18%), m

(16%), (13%), (10%)

cons. identified as

w (28%), (18%),

r

r (84%), (5%), l (4%)

(16%),

j

j (94%), z (6%)

(12%), m (8%), (8%)

m

m (63%), (11%),

w (42%), (15%),

(10%),

r (6%)

(15%), m (8%), (8%), (8%)

(26%), m (21%), (20%),

MAPPING

r (6%)

(46%), (23%), m (15%),

w (8%)

CONCLUSIONS

Acoustic-phonetic mapping helps to address linguistically

relevant information in the speech signal, ignoring extralinguistic sources of variation.

The advantages of mapping are reflected in the two measures

which we have presented:

• ACIS shows that mapping leads to better consonant identification rates for all except a few of the language-specific consonants. The improvement can be put down to the system’s ability to map acoustically variable consonant realisations to more homogeneous phonetic feature vectors.

• APMS shows that the confusions which occur in the mapping

experiment are less severe than in the baseline experiment

from a phonetic point of view. There are fewer confusions on

the phonetic dimensions manner, place and voicing when

mapping is applied, because the system focuses on distinctive

information in the acoustic signals.

REFERENCES (1)

Bitar, N. & Espy-Wilson, C. (1995a). Speech parameterization based

on phonetic features: application to speech recognition. Proc. 4th

European Conference on Speech Communication and Technology,

1411-1414.

Bitar, N. & Espy-Wilson, C. (1995b). A signal representation of

speech based on phonetic features. Proc. 5th Annual Dual-Use Techn.

and Applications Conf., 310-315.

Kirchhoff, K. (1996). Syllable-level desynchronisation of phonetic

features for speech recognition. Proc. ICSLP., 2274-2276.

Dalsgaard, P. (1992). Phoneme label alignment using acoustic-phonetic features and Gaussian probability density functions.

Computer Speech and Language 6, 303-329.

REFERENCES (2)

Koreman, J., Barry, W.J. & Andreeva, B. (1997). Relational phonetic

features for consonant identification in a hybrid ASR system.

PHONUS 3, 83-109. Saarbrücken (Germany): Institute of Phonetics,

University of the Saarland.

Koreman, J., Barry, W.J. & Andreeva, B. (1998). Exploiting transitions

and focussing on linguistic properties for ASR. Proc. ICSLP (these

proceedings).

SUMMARY

Acoustic-phonetic mapping leads to fewer and

phonetically less severe consonant confusions.

Practical:

THE END