Proposal

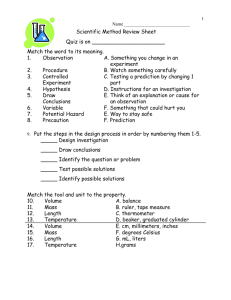

Scalable video coding extension of HEVC (S-HEVC)

Submitted By: Aanal Desai 1001103728

List of Acronyms and Abbreviations:

AVC – Advanced Video Coding
BL – Base Layer
CABAC – Context Adaptive Binary Arithmetic Coding
CTB – Coding Tree Block
CTU – Coding Tree Unit
CU – Coding Unit
DASH – Dynamic Adaptive Streaming over HTTP
EL – Enhancement Layer
FPS – Frames per second
HD – High Definition
HEVC – High Efficiency Video Coding
HLS – High Level Syntax
HTTP – Hypertext Transfer Protocol
ILR – Inter Layer Reference
JCT-VC – Joint Collaborative Team on Video Coding
Mbps – Megabits per second
MPD – Media Presentation Description
MPEG – Moving Picture Experts Group
JPEG – Joint Photographic Experts Group
MV – Motion Vector
PSNR – Peak Signal to Noise Ratio
PU – Prediction Unit
SAO – Sample Adaptive Offset
SHVC – Scalable High Efficiency Video Coding
SNR – Signal to Noise Ratio
SPIE – Society of Photo-Optical Instrumentation Engineers
TU – Transform Unit
UHD – Ultra High Definition
URL – Uniform Resource Locator

Overview:

Due to the increased efficiency of video coding technology and developments in network infrastructure, storage capacity, and computing power, digital video is used in more and more application areas, ranging from multimedia messaging, video telephony, and video conferencing over mobile TV, wireless and Internet video streaming to standard- and high-definition TV broadcasting. On the one hand, there is an increasing demand for video streaming to mobile devices such as smartphones, tablet computers, and notebooks, and their broad variety of screen sizes and computing capabilities stimulates the need for a scalable extension. On the other hand, modern video transmission systems using the Internet and mobile networks are typically characterized by a wide range of connection qualities, which result from the adaptive resource-sharing mechanisms in use.
In such diverse environments with varying connection qualities and different receiving devices, a flexible adaptation of once-encoded content is necessary [2]. Scalable video coding is a key to the challenges posed by the characteristics of modern video applications. The objective of a scalable extension for a video coding standard is to allow the creation of a video bitstream that contains one or more sub-bitstreams that can be decoded by themselves with a complexity and reconstruction quality comparable to that achieved using single-layer coding with the same quantity of data as that in the sub-bitstream [2].

SHVC provides a bandwidth reduction of approximately 50% for the same video quality when compared to the current H.264/AVC standard. SHVC further offers a scalable format that can be readily adapted to meet network conditions or terminal capabilities. Both bandwidth saving and scalability are highly desirable characteristics of adaptive video streaming applications in bandwidth-constrained wireless networks [3].

The scalable extension to the current H.264/AVC [4] video coding standard (H.264/SVC) [8] provided a means of readily adapting an encoded video stream to meet the receiving terminal's resource constraints or prevailing network conditions. Several H.264/SVC solutions have been proposed for video stream adaptation to meet bandwidth and power consumption constraints in a diverse range of network scenarios, including wireless networks [10]. However, while these solutions address network reliability and bandwidth resource allocation, they do not address the important issue of the ever-increasing volume of video traffic. HEVC reduces the bandwidth requirement of a video stream by approximately 50% without degrading the video quality. HEVC can therefore significantly lessen network congestion by reducing the bandwidth required by the growing volume of video traffic.
The JCT-VC is now developing the scalable extension (SHVC) [5] to HEVC in order to bring similar benefits in terms of terminal constraint and network resource matching as H.264/SVC does, but with a significantly reduced bandwidth requirement [3].

Introduction:

Three types of scalability are commonly distinguished: temporal, spatial, and SNR scalability. Spatial scalability and temporal scalability describe cases in which a sub-bitstream represents the source content with a reduced picture size (or spatial resolution) and frame rate (or temporal resolution), respectively [1]. In quality scalability, which is also referred to as signal-to-noise ratio (SNR) scalability or fidelity scalability, the sub-bitstream delivers the same spatial and temporal resolution as the complete bitstream, but with a lower reproduction quality and, thus, a lower bit rate [2].

In this context, scalability refers to the property of a video bitstream that allows parts of the bitstream to be removed in order to adjust it to the needs of end users as well as to the capabilities of the receiving device or the network conditions, while the resulting bitstream still conforms to the video coding standard in use. It should, however, be noted that two or more single-layer bitstreams can also be transmitted using simulcast, which delivers similar functionality to a scalable bitstream. Additionally, a single-layer bitstream can be adapted by transcoding. Scalable video coding has to compete against these alternatives. In particular, scalable coding is only useful if it offers a higher coding efficiency than simulcast [2].

Initial benchmarks for the transmission of HEVC streams over loss-prone wireless and wired networks were established in a testbed environment in [7], which presented the effects of packet loss and bandwidth reduction on the quality of HEVC video streams.
A comparable study [6] provided a smaller set of largely similar benchmarks that were obtained by simulation rather than the testbed approach used in [7]. The authors of [7] have also proposed a scheme [9] to alleviate packet loss in HEVC by prioritizing and selectively dropping packets in response to a network resource constraint [3].

BLOCK DIAGRAM OF ENCODER:

The design of HEVC inherently enables temporal scalability when a hierarchical temporal prediction structure is used. The proposed scheme therefore concentrates on the spatial and SNR scalability cases. A multi-loop decoding structure is employed to support these functionalities. Within the framework of multi-loop decoding, all the information in the base layer (BL), including reconstructed pixel samples and syntax elements, is available for coding the enhancement layer (EL) in order to attain high coding efficiency [1].

Fig 1. High-level block diagram of the proposed encoder. [1]

Figure 1 shows the block diagram of the proposed scalable video encoder for spatial scalability. For SNR (quality) scalability, the up-sampling step is not needed.

- Inter-layer intra prediction: A block of the enhancement layer is predicted using the reconstructed (and upsampled) base layer signal.
- Inter-layer motion prediction: The motion data of a block are completely inferred using the (scaled) motion data of the co-located base layer blocks, or the (scaled) motion data of the base layer are used as an additional predictor for coding the enhancement layer motion.
- Inter-layer residual prediction: The reconstructed (and upsampled) residual signal of the co-located base layer area is used for predicting the residual signal of an inter-picture coded block in the enhancement layer, while the motion compensation is applied using enhancement layer reference pictures [2].

At first glance, the scalable encoder consists of two encoders, one for each layer.
In spatial scalable coding, the input video is downsampled and fed into the base layer encoder, whereas the input video at its original size is the input of the enhancement layer encoder. In quality scalable coding, both encoders use the same input signal. The base layer encoder conforms to a single-layer video coding standard, so that backwards compatibility with single-layer coding is achieved; the enhancement layer encoder generally contains additional coding features. The outputs of both encoders are multiplexed to form the scalable bitstream [2].

The inter and intra prediction modules of the enhancement layer encoder are altered to accommodate the base layer pixel samples in the prediction process. The base layer syntax elements containing motion parameters and intra modes are used to predict the corresponding enhancement layer syntax elements and to decrease the overhead for coding syntax elements. The transform/quantization and inverse transform/inverse quantization modules (denoted as T/Q and IT/IQ, respectively, in Figure 1) are designed such that additional DCT and DST transforms may be applied to inter-layer prediction residues for better energy compaction. The proposed codec is designed to deliver a good balance between coding efficiency and implementation complexity [1].

In order to improve the coding efficiency, the data of the base layer must be employed for efficient enhancement layer coding by so-called inter-layer prediction methods [2]. The lower-level processing modules from the single-layer codec, such as loop filtering, transforms, quantization, and entropy coding, are virtually unchanged in the enhancement layer. The changes are mainly concentrated in the prediction process [1].

The proposed codec was submitted as a response [11] to the joint call for proposals issued by MPEG and ITU-T on the HEVC scalable extension [12]. It achieved the highest coding efficiency in terms of RD performance among all responses [13].
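The two-encoder structure described above can be illustrated for the quality (SNR) scalability case with a toy sketch. This is not the proposed codec: a uniform scalar quantizer stands in for each layer's encoder, and the function names and step sizes (`bl_step`, `el_step`) are hypothetical choices made for illustration. It shows only that the base layer can be decoded on its own and that the enhancement layer codes what the base layer reconstruction missed.

```python
def quantize(samples, step):
    """Uniform scalar quantizer: map each sample to an integer level."""
    return [round(s / step) for s in samples]


def dequantize(levels, step):
    """Inverse of quantize(): map integer levels back to sample values."""
    return [level * step for level in levels]


def encode_two_layers(samples, bl_step=16, el_step=4):
    """Toy SNR-scalable encoder (assumed structure, not the proposed codec).

    Both layers see the same input. The base layer uses a coarse quantizer;
    the enhancement layer quantizes the inter-layer prediction error more finely.
    """
    bl_levels = quantize(samples, bl_step)
    bl_recon = dequantize(bl_levels, bl_step)
    # Inter-layer prediction: the BL reconstruction predicts the EL input.
    residual = [s - p for s, p in zip(samples, bl_recon)]
    el_levels = quantize(residual, el_step)
    # "Multiplexing" is modeled as a dict holding both sub-bitstreams.
    return {"BL": bl_levels, "EL": el_levels}


def decode(bitstream, bl_step=16, el_step=4, use_el=True):
    """Decode the base layer alone, or base plus enhancement layer."""
    recon = dequantize(bitstream["BL"], bl_step)
    if use_el and "EL" in bitstream:
        refinement = dequantize(bitstream["EL"], el_step)
        recon = [b + r for b, r in zip(recon, refinement)]
    return recon


if __name__ == "__main__":
    source = [100, 37, 250, 9]
    stream = encode_two_layers(source)
    print("base only :", decode(stream, use_el=False))
    print("base + EL :", decode(stream))
```

Dropping the `"EL"` entry from the dictionary models the removal of a sub-bitstream: the remaining base layer still decodes, only at lower fidelity.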
2 Inter-layer texture prediction:

H.264/AVC-SVC [14] introduced inter-layer prediction for spatial and SNR scalability by using intra-BL and residual prediction under the constraint of a single-loop decoding structure. Hong et al. [15] proposed a scalable video coding scheme for HEVC, where the residual prediction process is extended to both intra and inter prediction modes within a multi-loop decoding framework. In this paper, the multi-loop residual prediction is further improved by using generalized weighted residual prediction. In addition to the intra-BL and residual prediction, two further modes are presented: a combined prediction mode, which uses the average of the EL prediction and the intra-BL prediction as the final prediction, and multihypothesis inter prediction, which produces additional predictions for an EL block using BL block motion information.

2.1 Intra-BL prediction

To utilize reconstructed base layer information, two Coding Unit (CU) level modes, namely intra-BL and intra-BL skip, are introduced [1]. For an enhancement layer CU, when ilpred_type indicates the IntraBL mode, the prediction signal is formed by copying or, for spatial scalable coding, upsampling the co-located base layer reconstructed samples. Since the final reconstructed samples from the base layer are used, a multi-loop decoding architecture is essential [2].

When a CU in the EL picture is coded using the intra-BL mode, the pixels in the collocated block of the up-sampled BL are used as the prediction for the current CU. For CUs using the intra-BL skip mode, no residual information is signaled [1]. The procedure for up-sampling is described later in the paper. The operation is similar to the inter-layer intra prediction in the scalable extension of H.264 | MPEG-4 AVC, except that it is possible to use the samples of both intra and inter predicted blocks from the base layer [2].
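The intra-BL and intra-BL skip modes described above can be sketched as follows. This is a minimal illustration under stated assumptions: pictures are plain lists of rows, the function names are hypothetical, and nearest-neighbor replication stands in for the codec's actual up-sampling filter, which is not specified in this section.

```python
def upsample_nearest(picture, factor):
    """Up-sample a 2-D picture by sample replication.

    Assumption: nearest-neighbor replication is a placeholder; the real
    codec uses a dedicated up-sampling filter not described here.
    """
    height, width = len(picture), len(picture[0])
    return [[picture[y // factor][x // factor] for x in range(width * factor)]
            for y in range(height * factor)]


def intra_bl_prediction(bl_recon, x0, y0, size, factor=2):
    """Prediction for the EL CU at (x0, y0): the collocated block of the
    (up-sampled) reconstructed base layer. factor=1 models SNR scalability,
    where the samples are simply copied."""
    reference = upsample_nearest(bl_recon, factor) if factor > 1 else bl_recon
    return [row[x0:x0 + size] for row in reference[y0:y0 + size]]


def reconstruct_cu(prediction, residual=None):
    """Intra-BL: add the decoded residual. Intra-BL skip: no residual is
    signaled, so the prediction itself is the reconstruction."""
    if residual is None:
        return [row[:] for row in prediction]
    return [[p + r for p, r in zip(p_row, r_row)]
            for p_row, r_row in zip(prediction, residual)]


if __name__ == "__main__":
    base_layer = [[10, 20],
                  [30, 40]]
    pred = intra_bl_prediction(base_layer, x0=2, y0=0, size=2)
    print("prediction      :", pred)
    print("intra-BL skip   :", reconstruct_cu(pred))
    print("intra-BL + resid:", reconstruct_cu(pred, [[1, -1], [0, 2]]))
```

Because the prediction reads final reconstructed base layer samples, the base layer must be fully decoded first, which is why the text calls the multi-loop architecture essential.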
2.2 Intra residual prediction:

In the intra residual prediction mode, as shown in Figure 2, the difference between the intra prediction reference samples in the EL and the collocated pixels in the up-sampled BL is used to produce a prediction, denoted as the difference prediction, based on the intra prediction mode. The generated difference prediction is then added to the collocated block in the up-sampled BL to form the final prediction.

Fig 2. Intra residual prediction. [1]

In the proposed codec, the intra prediction method for the difference signal remains unchanged with respect to HEVC, except for the planar mode. For the planar mode, after intra prediction is performed, the bottom-right portion of the difference prediction is set to zero. Here, the bottom-right portion refers to each position (x, y) satisfying the condition x + y >= N - 1, where N is the width of the current block. Because of the high-frequency nature of the difference signals, the HEVC mode-dependent reference sample smoothing process is disabled in the EL intra residual prediction mode [1].

2.3 Weighted Intra prediction:

Fig 3. Weighted intra prediction mode. The (upsampled) base layer reconstructed samples are combined with the spatially predicted enhancement layer samples to predict an enhancement layer CU to be coded. [2]

In this mode, the (upsampled) base layer reconstructed signal constitutes one component of the prediction. Another component is obtained by regular spatial intra prediction as in HEVC, using the samples from the causal neighborhood of the current enhancement layer block. The base layer component is low-pass filtered, the enhancement layer component is high-pass filtered, and the results are added to form the prediction. In our implementation, both low-pass and high-pass filtering are performed in the DCT domain, as illustrated in Figure 3.
First, the DCTs of the base and enhancement layer prediction signals are computed, and the resulting coefficients are weighted according to spatial frequency. The weights for the base layer signal are set such that the low-frequency components are retained and the high-frequency components are suppressed; the weights for the enhancement layer signal are set the opposite way. The weighted base and enhancement layer coefficients are added, and an inverse DCT is computed to obtain the final prediction [2].

2.4 Difference prediction modes:

The principle of the difference prediction modes is to reduce the systematic error that arises when the (upsampled) base layer reconstructed signal is used for prediction. This is accomplished by reusing the previously corrected prediction errors available to both encoder and decoder. To this end, a new signal, denoted as the difference signal, is derived as the difference between already reconstructed enhancement layer samples and (upsampled) base layer samples. The final prediction is formed by adding a component from the (upsampled) base layer reconstructed signal and a component from the difference signal [17]. This mode can be used for inter as well as intra prediction [2].

Fig 4. Inter difference prediction mode. The (upsampled) base layer reconstructed signal is combined with the motion-compensated difference signal from a reference picture to predict the enhancement layer CU to be coded. [2]

In inter difference prediction, shown in Fig 4, the (upsampled) base layer reconstructed signal is added to a motion-compensated enhancement layer difference signal corresponding to a reference picture to obtain the final prediction for the current enhancement layer block. For the enhancement layer motion compensation, the same inter prediction technique as in single-layer HEVC is used, but with a bilinear interpolation filter [2].

Intra Prediction:

Fig 5. Intra difference prediction mode. The (upsampled) base layer reconstructed signal is combined with the intra predicted difference signal to predict the enhancement layer block to be coded. [2]

In intra difference prediction, the (upsampled) base layer reconstructed signal constitutes one component of the prediction. Another component is derived by spatial intra prediction using the difference signal from the causal neighborhood of the current enhancement layer block. The intra prediction modes used for spatial intra prediction of the difference signal are coded using the regular HEVC syntax. As shown in Fig 5, the final prediction signal is formed by adding the (upsampled) base layer reconstructed signal and the spatially predicted difference signal [2].

2.5 Motion vector prediction:

Our scalable video extension of HEVC employs several methods to improve the coding of enhancement layer motion information by exploiting the availability of base layer motion information [2]. In HEVC, two modes can be used for MV coding, namely “merge” and “advanced motion vector prediction (AMVP)”. In both modes, some of the most probable candidates are derived from the motion data of spatially adjacent blocks and the collocated block in the temporal reference picture. The “merge” mode allows MVs to be inherited from the neighboring blocks without coding a motion vector difference [16].

In the proposed scheme, collocated base layer MVs are used in both the merge mode and the AMVP mode for enhancement layer coding. The base layer MV is inserted as the first candidate in the merge candidate list and added after the temporal candidate in the AMVP candidate list. The MV at the center position of the collocated block in the base layer picture is used in both merge and AMVP modes [1]. In HEVC, the motion vectors are compressed after being coded, and the compressed motion vectors are used in the TMVP derivation for pictures that are coded later.
In the proposed codec, the motion vector compression is delayed so that the uncompressed base layer MVs are used in inter-layer motion prediction for enhancement layer coding [1].

2.6 Inferred prediction mode:

For a CU in the EL coded in the inferred base layer mode, the motion information (including the inter prediction direction, reference index, and motion vectors) is not signaled. Instead, for each 4×4 block in the CU, the motion information is derived from its collocated base layer block. When the motion information of a collocated base layer block is unavailable (e.g., the collocated base layer block is intra predicted), the 4×4 block is predicted in the same way as in the intra-BL mode [1].

Proposed Scheme for Wireless Networks:

Here, we suggest a scheme for in-network adaptation of SHVC-encoded bitstreams to meet the resource constraints of the network or the terminal. The software agents that make up our streaming framework are distributed within the network as shown in Fig. 6.

Fig. 6. Main components and software agents of the SHVC streaming framework. [3]

At the streaming server (streamer), the Network Abstraction Layer (NAL) units of an SHVC-encoded bitstream file are extracted by the NAL Unit Extractor. Each extracted NAL unit is then passed to a group of pictures (GOP)-based Scheduler, which determines the optimal number of SHVC layers to transmit based on the current state of the network. A Network Monitor, located in the network, provides regular updates on network path conditions (available bandwidth and delay) to the GOP-based Scheduler. The Dependency Checker then examines the SHVC/HEVC Reference Picture Set (RPS) and the SHVC layer dependency, and the NAL unit is encapsulated in a Real-time Transport Protocol (RTP) packet by the RTP Packetiser for transmission.
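The layer-selection step of the GOP-based Scheduler can be sketched as below. The decision rule in [3] is not given in this section, so the greedy rule here is an assumption: per-layer bitrates are taken as known for each GOP, layers are added from the base layer upwards while they fit in the bandwidth reported by the Network Monitor, and the base layer is always sent. All names (`select_layers`, `filter_gop`, the `(layer_id, payload)` tuples) are hypothetical.

```python
def select_layers(layer_bitrates_kbps, available_kbps):
    """Return how many layers (base layer first) to transmit for one GOP.

    Assumed rule: add layers in dependency order while the cumulative
    bitrate fits the available bandwidth. The base layer is always kept,
    since every enhancement layer depends on it.
    """
    total = 0.0
    count = 0
    for rate in layer_bitrates_kbps:
        if total + rate > available_kbps:
            break
        total += rate
        count += 1
    return max(count, 1)


def filter_gop(nal_units, num_layers):
    """Keep only NAL units whose layer id is below num_layers.

    Each NAL unit is modeled as a (layer_id, payload) tuple; a real
    implementation would read the layer id from the NAL unit header.
    """
    return [unit for unit in nal_units if unit[0] < num_layers]


if __name__ == "__main__":
    bitrates = [500, 700, 1500]          # kbps for BL, EL1, EL2 (made-up values)
    gop = [(0, "bl-0"), (1, "el1-0"), (2, "el2-0"), (0, "bl-1")]
    layers = select_layers(bitrates, available_kbps=1300)
    print("layers to send:", layers)
    print("scheduled NALs:", filter_gop(gop, layers))
```

Making the decision once per GOP, rather than per packet, keeps the set of transmitted layers stable across pictures that reference one another.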
At the client side, the received packets are de-packetised by a De-Packetiser and presented to the RPS Repair agent, which seeks to identify and reconstruct any missing reference pictures of the currently received picture. This is accomplished using the same method of reference picture detection as the streamer-side RPS Dependency Checker. Where a missing reference picture is identified, a new reference picture is created, wherever possible, from the nearest available reference picture (to the missing picture) in the RPS. The work of this agent is significant in overcoming robustness issues associated with packet loss in the current reference software implementation.

The received bitstream is then passed to the Decoder, which has been modified to include an Error Concealment agent at the picture reconstruction stage. Depending on the content of any NAL unit(s) lost in transmission, either the whole frame is copied from the picture nearest in output order to the missing picture, or the missing blocks are copied from the co-located area.

References:

[1] Jianle Chen, Krishna Rapaka, Xiang Li, Vadim Seregin, Liwei Guo, Marta Karczewicz, Geert Van der Auwera, Joel Sole, Xianglin Wang, Chengjie Tu, Ying Chen, Rajan Joshi, “Scalable Video Coding Extension for HEVC”, Qualcomm Technology Inc., IEEE Data Compression Conference (DCC) 2013, 20-22 March 2013.
[2] Philipp Helle, Haricharan Lakshman, Mischa Siekmann, Jan Stegemann, Tobias Hinz, Heiko Schwarz, Detlev Marpe, Thomas Wiegand, “Scalable Video Coding Extension of HEVC”, Fraunhofer Institute for Telecommunications – Heinrich Hertz Institute, Berlin, Germany, IEEE Data Compression Conference (DCC) 2013, 20-22 March 2013.
[3] James Nightingale, Qi Wang, Christos Grecos, “Scalable HEVC (SHVC)-Based Video Stream Adaptation in Wireless Networks”, Centre for Audio Visual Communications & Networks (AVCN), 2013 IEEE 24th International Symposium on Personal, Indoor and Mobile Radio Communications: Services, Applications and Business Track.
[4] T. Wiegand et al., “Overview of the H.264/AVC video coding standard”, IEEE Trans. Circuits Syst. Video Technol., vol. 13, no. 7, pp. 560-576, July 2003.
[5] T. Hinz et al., “An HEVC extension for spatial and quality scalable video coding”, Proc. SPIE Visual Information Processing and Communication IV, Feb. 2013.
[6] B. Oztas et al., “A study on the HEVC performance over lossy networks”, Proc. 19th IEEE International Conference on Electronics, Circuits and Systems (ICECS), pp. 785-788, Dec. 2012.
[7] J. Nightingale et al., “HEVStream: a framework for streaming and evaluation of high efficiency video coding (HEVC) content in loss-prone networks”, IEEE Trans. Consum. Electron., vol. 58, no. 2, pp. 404-412, May 2012.
[8] H. Schwarz et al., “Overview of the scalable extension of the H.264/AVC standard”, IEEE Trans. Circuits Syst. Video Technol., vol. 17, pp. 1103-1120, Sept. 2007.
[9] J. Nightingale et al., “Priority-based methods for reducing the impact of packet loss on HEVC encoded video streams”, Proc. SPIE Real-Time Image and Video Processing 2013, Feb. 2013.
[10] T. Schierl et al., “Mobile Video Transmission Using Scalable Video Coding”, IEEE Trans. Circuits Syst. Video Technol., vol. 17, no. 9, pp. 1204-1217, Sept. 2007.
[11] J. Chen, K. Rapaka, X. Li, V. Seregin, L. Guo, M. Karczewicz, G. Van der Auwera, J. Sole, X. Wang, C. J. Tu, Y. Chen, “Description of scalable video coding technology proposal by Qualcomm (configuration 2)”, Joint Collaborative Team on Video Coding, doc. JCTVC-K0036, Shanghai, China, Oct. 2012.
[12] ISO/IEC JTC1/SC29/WG11 and ITU-T SG 16, “Joint Call for Proposals on Scalable Video Coding Extensions of High Efficiency Video Coding (HEVC)”, ISO/IEC JTC 1/SC 29/WG 11 (MPEG) Doc. N12957 or ITU-T SG 16 Doc. VCEG-AS90, Stockholm, Sweden, Jul. 2012.
[13] A. Segall, “BoG report on HEVC scalable extensions”, Joint Collaborative Team on Video Coding, doc. JCTVC-K0354, Shanghai, China, Oct. 2012.
[14] H. Schwarz, D. Marpe, T. Wiegand, “Overview of the Scalable Video Coding Extension of the H.264/AVC Standard”, IEEE Trans. Circuits and Syst. Video Technol., vol. 17, no. 9, pp. 1103-1120, 2007.
[15] D. Hong, W. Jang, J. Boyce, A. Abbas, “Scalability Support in HEVC”, Joint Collaborative Team on Video Coding (JCT-VC) of ITU-T SG16 WP3 and ISO/IEC JTC1/SC29/WG11, JCTVC-F290, Torino, Italy, Jul. 2011.
[16] G. J. Sullivan, J.-R. Ohm, W.-J. Han, T. Wiegand, “Overview of the High Efficiency Video Coding (HEVC) Standard”, IEEE Trans. Circuits and Syst. Video Technol., to be published.
[17] J. Boyce, D. Hong, W. Jang, A. Abbas, “Information for HEVC scalability extension”, Joint Collaborative Team on Video Coding, doc. JCTVC-G078, Nov. 2011.