The Portal Assessment Design System

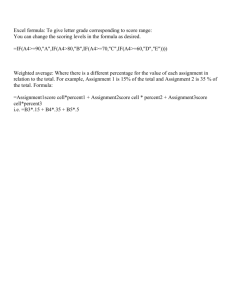

Evidence-Centered Design and Cisco’s Packet Tracer Simulation-Based Assessment

Robert J. Mislevy
Professor, Measurement & Statistics, University of Maryland
with John T. Behrens & Dennis Frezzo, Cisco Systems, Inc.

December 15, 2009, ADL, Alexandria, VA

Simulation-based & Game-based Assessment
• Motivation: Cog psych & technology
  » Complex combinations of knowledge & skills
  » Complex situations
  » Interactive, evolving in time, constructive
  » Challenge of technology-based environments

Outline
• ECD
• Packet Tracer
• Packet Tracer & ECD

ECD

Evidence-Centered Assessment Design
Messick’s (1994) guiding questions:
• What complex of knowledge, skills, or other attributes should be assessed?
• What behaviors or performances should reveal those constructs?
• What tasks or situations should elicit those behaviors?

Evidence-Centered Assessment Design
• Principled framework for designing, producing, and delivering assessments
• Process model, object model, design tools
• Explicates the connections among assessment designs, inferences regarding students, and the processes needed to create and deliver these assessments.
• Particularly useful for new / complex assessments.

• Domain Analysis: What is important about this domain? What work and situations are central in this domain? What KRs (knowledge representations) are central to this domain?
• Domain Modeling: How do we represent key aspects of the domain in terms of assessment argument? Conceptualization.
• Conceptual Assessment Framework: Design structures: student, evidence, and task models. Generativity.
• Assessment Implementation: Manufacturing “nuts & bolts”: authoring tasks, automated scoring details, statistical models. Reusability.
• Assessment Delivery: Students interact with tasks, performances evaluated, feedback created. Four-process delivery architecture.
Layers in the assessment enterprise (from Mislevy & Riconscente, in press)

Packet Tracer

Cisco’s Packet Tracer
• Online tool used in Cisco Networking Academies
• Create, edit, configure, run, troubleshoot networks
• Multiple representations in the logical layer
• Inspection tool links to a deeper physical world
• Simulation mode
  » Detailed visualization and data presentation
• Standard support for world authoring
  » Library of elements
  » Simulation of relevant deep structure
  » Copy, paste, save, edit, annotate, lock

Instructors and students can author their own activities
• Explanation
• Experimentation

Packet Tracer & ECD

Domain Modeling
• Assessment argument structures
• Design Patterns

Application to familiar assessments
• Fixed competency variables
• Upfront design of features of response classes
• Upfront design of features of task situation: static, implicit in task, not usually tagged

Application to complex assessments
• Multiple, perhaps configured-on-the-fly, competency variables
• More complex evaluations of features of task situation
• Some up-front design of features of task situation; others recognized (e.g., agents)
• Some up-front design of features of performance or effects; others recognized (e.g., agents)
• Over time:
  » Evolving, interactive situation: macro features of situation, micro features of situation
  » Unfolding situated performance: macro features of performance, micro features of performance
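The distinction between micro and macro features of an unfolding performance can be illustrated with a small sketch. This is only an assumption-laden illustration, not how Packet Tracer logs or scores activity: the `Event` record, the action labels ("ping", "config"), and the feature names are all hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Event:
    """One timestamped student action (hypothetical log record)."""
    t: float       # seconds since the activity started
    device: str    # hypothetical device name
    action: str    # hypothetical action label, e.g. "ping" or "config"
    ok: bool       # whether the simulated action succeeded


def micro_features(log):
    """Micro features: one fine-grained observation per event."""
    return [(e.t, e.device, e.action, e.ok) for e in log]


def macro_features(log):
    """Macro features: summaries over the whole performance.

    Assumes the log is already sorted by time.
    """
    pings = [e for e in log if e.action == "ping"]
    configs = [e for e in log if e.action == "config"]
    return {
        "n_actions": len(log),
        "n_config_changes": len(configs),
        # Did the student test connectivity before changing anything?
        "tested_before_configuring": bool(pings)
        and (not configs or pings[0].t < configs[0].t),
        "time_to_first_successful_ping": next(
            (e.t for e in pings if e.ok), None
        ),
    }


log = [
    Event(5.0, "PC0", "ping", False),
    Event(20.0, "Router0", "config", True),
    Event(41.5, "PC0", "ping", True),
]
features = macro_features(log)
```

Features such as `tested_before_configuring` are not fixed in advance as response classes; they are recognized from the record of what the student did, which is the contrast the slide draws with familiar assessments.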
Conceptual Assessment Framework
• Student Model
• Evidence Model(s): Scoring Model, Measurement Model
• Task Model(s)

Object models for representing …
• Psychometric models
  » Includes “competences/proficiencies”
• Simulation environments
• Task templates
• Automated scoring

PADI object model for task/evidence models

Graphical representation of network & configuration is expressible as a text representation in XML format, for presentation & work product, to support automated scoring.

Assessment Implementation
• Authoring interfaces
• Simulation environments
• Re-usable platforms & elements
• Standard data structures
• IMS/QTI, SCORM

In Packet Tracer, the Answer Network serves as base pattern for work product evaluation.

Dynamic task models
• Variable Assignment: Initial Network. Similar to the Answer Network Tree. When the activity starts, instead of using the initial network as the starting values, the activity will configure the network with the contents of the variables.
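The ideas above (an XML text representation of the network, an answer network as the base pattern for evaluation, and variable assignment) can be sketched together as follows. This is a minimal illustration under stated assumptions: the XML layout, the variable names, and the `build_network`, `settings`, and `score` helpers are hypothetical and are not Packet Tracer's actual file format or scoring engine.

```python
import xml.etree.ElementTree as ET
from string import Template

# Hypothetical XML layout; NOT Packet Tracer's actual file format.
NETWORK_TEMPLATE = Template("""
<network>
  <device name="Router0"><ip>$router_ip</ip><mask>$mask</mask></device>
  <device name="PC0"><ip>$pc_ip</ip><mask>$mask</mask></device>
</network>
""")


def build_network(variables):
    """Variable assignment: fill the template with values, then parse it."""
    return ET.fromstring(NETWORK_TEMPLATE.substitute(variables))


def settings(network):
    """Flatten a network into {(device name, setting): value}."""
    return {
        (device.get("name"), field.tag): (field.text or "").strip()
        for device in network.iter("device")
        for field in device
    }


def score(work_product, answer_network):
    """Check each setting in the answer network against the work product."""
    expected = settings(answer_network)
    observed = settings(work_product)
    return {key: observed.get(key) == value for key, value in expected.items()}


variables = {
    "router_ip": "192.168.1.1",
    "pc_ip": "192.168.1.10",
    "mask": "255.255.255.0",
}
answer = build_network(variables)
# A simulated student work product with a mistyped PC address.
student = build_network({**variables, "pc_ip": "192.168.2.10"})
result = score(student, answer)
```

Here `result` flags the PC's IP address as incorrect and every other setting as correct. Because the answer network is generated from the same variables as the task, a new set of variable values yields a new task instance and its scoring key together, which is one way to read the "dynamic task models" idea.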
Assessment Delivery
• Interoperable elements
• IMS/QTI, SCORM
• Feedback / instruction / reporting

Conclusion
• Behavior in learning environments builds connections for performance environments.
• Assessment tasks & features strongly related to instruction/learning objects & features.
• Re-use concepts and code in assessment,
  » via arguments, schemas, data structures that are consonant with instructional objects.
• Use data structures that are
  » share-able, extensible,
  » consistent with delivery processes and design models.

Further information
• Bob Mislevy home page
  » http://www.education.umd.edu/EDMS/mislevy/
  » Links to papers on ECD
  » Cisco NetPASS
  » Cisco Packet Tracer
• PADI: Principled Assessment Design for Inquiry
  » NSF project, collaboration with SRI et al.
  » http://padi.sri.com
