Question 2012 Review3


Questions and Topics Review Dec. 6, 2012

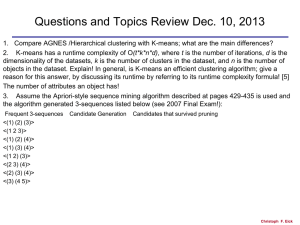

1. Compare AGNES/hierarchical clustering with K-means; what are the main differences?

2. Compute the Silhouette of the following clustering that consists of 2 clusters: {(0,0), (0,1), (2,2)} and {(3,2), (3,3)}. Assume Manhattan distance is used.

• Silhouette: for an individual point i

– Calculate a = average distance of i to the points in its cluster

– Calculate b = min (average distance of i to points in another cluster)

– The silhouette coefficient for a point is then given by:

s = (b-a)/max(a,b)
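The definition above can be turned into a short calculation. The following is a minimal sketch, not an official solution: it assumes that a excludes the point itself, that the silhouette of a cluster or of the whole clustering is the plain average of the point coefficients, and the helper names (`manhattan`, `silhouette`) are made up for illustration.

```python
def manhattan(p, q):
    """Manhattan (city-block) distance between two points."""
    return sum(abs(a - b) for a, b in zip(p, q))


def silhouette(point, own, others, dist=manhattan):
    """Silhouette coefficient s = (b - a) / max(a, b) of one point.

    Assumption: a is averaged over the other points of the point's own
    cluster (the point itself is excluded), so `own` needs >= 2 points.
    """
    rest = [q for q in own if q != point]
    a = sum(dist(point, q) for q in rest) / len(rest)
    # b: smallest average distance to the points of any other cluster
    b = min(sum(dist(point, q) for q in c) / len(c) for c in others)
    return (b - a) / max(a, b)


# The clustering from question 2
clusters = [[(0, 0), (0, 1), (2, 2)], [(3, 2), (3, 3)]]

scores = {}
for i, cluster in enumerate(clusters):
    others = clusters[:i] + clusters[i + 1:]
    for p in cluster:
        scores[p] = silhouette(p, cluster, others)

# Assumed aggregation: plain averages of the point coefficients
cluster_avg = [sum(scores[p] for p in c) / len(c) for c in clusters]
overall = sum(scores.values()) / len(scores)

for p, s in scores.items():
    print(p, round(s, 3))
print("per cluster:", [round(v, 3) for v in cluster_avg])
print("overall:", round(overall, 3))
```

Under these assumptions the point coefficients come out at roughly 0.55, 0.56, -0.57, 0.70 and 0.77, and the overall average at about 0.40; the negative value for (2,2) reflects that it is closer to the second cluster than to its own.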

3. APRIORI has been generalized for mining sequential patterns. How is the APRIORI property

defined and used in the context of sequence mining?

4. Assume the Apriori-style sequence mining algorithm described at pages 429-435 is used and

the algorithm generated 3-sequences listed below (see 2007 Final Exam!):

Frequent 3-sequences

<(1) (2) (3)>

<(1 2 3)>

<(1) (2) (4)>

<(1) (3) (4)>

<(1 2) (3)>

<(2 3) (4)>

<(2) (3) (4)>

<(3) (4 5)>

Candidate Generation

Candidates that survived pruning

Christoph F. Eick

Answers

1.

a. AGNES creates a set of clusterings (a dendrogram); K-means creates a single clustering.

b. K-means forms clusters by using an iterative procedure that minimizes an objective function; AGNES forms the dendrogram by repeatedly merging the two closest clusters until a single cluster is obtained.

c. …

3. If a k-sequence s is frequent, then all its (k-1)-subsequences are frequent.

How is it used by the algorithm?

a. Candidates for frequent k-sequences are generated by combining frequent (k-1)-sequences.

b. For subset pruning: if a k-sequence s has a (k-1)-subsequence which is not frequent, then s is not frequent.

[Figure: plot with vertical axis ticks from 0 to 0.2 and horizontal axis labels 1, 3, 2, 5, 4, 6]

Questions and Topics Review Dec. 6, 2012

5. The Top 10 Data Mining Algorithms article says about k-means: “The greedy-descent nature of k-means on a non-convex cost also implies that the convergence is only to a local optimum, and indeed the algorithm is typically quite sensitive to the initial centroid locations…The local minima problem can be countered to some extent by **running the algorithm multiple times with different initial centroids**.” Explain why the suggestion in boldface is a potential solution to the local minimum problem. Propose a modification of the k-means algorithm that uses the suggestion!

6. What is the role of slack variables in the Linear/SVM/Non-separable approach (textbook pages

266-270)—what do they measure? What properties of hyperplanes are maximized by the objective

function f(w) (on page 268) in the approach?

7. Give the equation system that PAGERANK would use for the webpage structure given below. Give a sketch of an approach that determines the page rank of the 4 pages from the equation system!

8. What is a data warehouse; how is it different from a traditional database?

9. Example Essay-style Question: Assume you own an online book store which sells books over the

internet. How can your business benefit from data mining? Limit your answer to 7-10 sentences!

$$L(w) = \frac{\|w\|^2}{2} + C\left(\sum_{i=1}^{N} \xi_i\right)^k$$
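To make the role of the slack variables in question 6 concrete, here is a small sketch. It assumes the soft-margin objective has the form ||w||²/2 + C(Σ ξ_i)^k, and that for a fixed hyperplane (w, b) each slack takes its smallest feasible value, ξ_i = max(0, 1 - y_i(w·x_i + b)). The data, the hyperplane and the function names are hypothetical and chosen only for illustration.

```python
import math


def slacks(w, b, X, y):
    """Smallest slack per example for a fixed hyperplane (assumption):
    xi_i = max(0, 1 - y_i * (w . x_i + b)).
    xi_i = 0      -> on or outside the margin, correct side
    0 < xi_i <= 1 -> inside the margin, still correctly classified
    xi_i > 1      -> misclassified
    """
    return [
        max(0.0, 1.0 - yi * (sum(wj * xj for wj, xj in zip(w, xi)) + b))
        for xi, yi in zip(X, y)
    ]


def objective(w, b, X, y, C=1.0, k=1):
    """Assumed soft-margin objective: ||w||^2 / 2 + C * (sum of slacks)^k."""
    norm_sq = sum(wj * wj for wj in w)
    return norm_sq / 2.0 + C * sum(slacks(w, b, X, y)) ** k


# Hypothetical, non-separable toy data and a hand-picked hyperplane
X = [(2.0, 0.0), (0.0, 0.0), (1.25, 0.0), (1.5, 0.0)]
y = [1, -1, 1, -1]
w, b = (2.0, 0.0), -2.0

margin = 2.0 / math.sqrt(sum(wj * wj for wj in w))  # margin width 2 / ||w||

print(slacks(w, b, X, y))     # slack of each training example
print(objective(w, b, X, y))  # value that the optimizer would minimize
print(margin)
```

In this toy setting the first two examples need no slack, the third sits inside the margin, and the fourth is on the wrong side of the hyperplane. Minimizing the first term widens the margin 2/||w||, while the second term penalizes the total margin violation, with C setting the trade-off.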


Sample Network Structure N [Figure: link graph over the pages P1, P2, P3, P4]

PR(A) = (1-d) + d (PR(T1)/C(T1) + ... + PR(Tn)/C(Tn))

PR(P4)= (1-d) + d*PR(P3)/3
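One possible approach for the second part of question 7 is to solve the equation system by fixed-point iteration: start with some initial ranks and re-apply the PR equations until the values stop changing. The sketch below is only an illustration. The link structure in `links` is hypothetical, since the figure is not reproduced here; it was merely chosen so that P3 has three outgoing links and P4 is linked only from P3, which matches the PR(P4) equation above. The damping factor d = 0.85 is also an assumption.

```python
def pagerank(links, d=0.85, tol=1e-10, max_iter=1000):
    """Iterate PR(A) = (1-d) + d * sum(PR(T)/C(T)) over all pages T linking to A.

    links maps each page to the list of pages it links to; C(T) is the
    number of outgoing links of T. Assumes every page has at least one
    outgoing link.
    """
    pages = list(links)
    pr = {p: 1.0 for p in pages}  # arbitrary starting values
    for _ in range(max_iter):
        new = {}
        for p in pages:
            incoming = sum(pr[t] / len(links[t]) for t in pages if p in links[t])
            new[p] = (1 - d) + d * incoming
        if max(abs(new[p] - pr[p]) for p in pages) < tol:
            return new
        pr = new
    return pr


# HYPOTHETICAL link structure (not the one from the slide's figure)
links = {
    "P1": ["P2"],
    "P2": ["P3"],
    "P3": ["P1", "P2", "P4"],
    "P4": ["P1"],
}

d = 0.85
ranks = pagerank(links, d=d)
for page, rank in ranks.items():
    print(page, round(rank, 4))
```

Because the system is linear in the four unknowns, it could equally be solved directly, for example by Gaussian elimination; the iteration is simply the easier approach to sketch.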


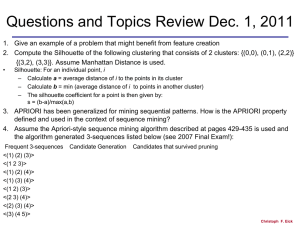

Questions and Topics Review Dec. 1, 2011

1. Give an example of a problem that might benefit from feature creation.

2. Compute the Silhouette of the following clustering that consists of 2 clusters: {(0,0), (0,1), (2,2)}

{(3,2), (3,3)}.

• Silhouette: for an individual point i

– Calculate a = average distance of i to the points in its cluster

– Calculate b = min (average distance of i to points in another cluster)

– The silhouette coefficient for a point is then given by:

s = (b-a)/max(a,b)

3. APRIORI has been generalized for mining sequential patterns. How is the APRIORI property

defined and used in the context of sequence mining?

• Property: see textbook [2]

• Use: Combine frequent sequences that agree in all elements except the first element of the first sequence and the last element of the second sequence.

• Prune sequences if not all subsequences that can be obtained by removing a single element are frequent. [3]

4. Assume the Apriori-style sequence mining algorithm described at pages 429-435 is used and

the algorithm generated 3-sequences listed below:

Frequent 3-sequences

<(1) (2) (3)>

<(1 2 3)>

<(1) (2) (4)>

Candidate Generation

Candidates that survived pruning



3) Association Rule and Sequence Mining [15]

a) Assume the Apriori-style sequence mining algorithm described at pages 429-435 is used and the algorithm generated the eight frequent 3-sequences listed in question 4 of the Dec. 6, 2012 review, from <(1) (2) (3)> to <(3) (4 5)>.

Candidate Generation:

• <(1) (2) (3) (4)> survived
• <(1 2 3) (4)> pruned, <(1 3) (4)> is infrequent
• <(1) (3) (4 5)> pruned, <(1) (4 5)> is infrequent
• <(1 2) (3) (4)> pruned, <(1 2) (4)> is infrequent
• <(2 3) (4 5)> pruned, <(2) (4 5)> is infrequent
• <(2) (3) (4 5)> pruned, <(2) (4 5)> is infrequent

Candidates that survived pruning:

• <(1) (2) (3) (4)>
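The candidate generation and pruning steps above can be checked mechanically. The sketch below is a simplified illustration and not the textbook's implementation. It assumes no timing constraints, so a candidate survives only if every subsequence obtained by removing a single item is frequent. It also assumes items inside an element are kept in sorted order, and it skips the special case of generating 2-sequences from 1-sequences. All function names are made up for this example.

```python
def drop_item(seq, e, i):
    """Remove item i of element e; drop the element if it becomes empty."""
    elem = seq[e][:i] + seq[e][i + 1:]
    return seq[:e] + ((elem,) if elem else ()) + seq[e + 1:]


def drop_first(seq):
    return drop_item(seq, 0, 0)


def drop_last(seq):
    return drop_item(seq, len(seq) - 1, len(seq[-1]) - 1)


def merge(s1, s2):
    """Extend s1 with the last item of s2."""
    last = s2[-1][-1]
    if len(s2[-1]) == 1:                   # last item is an element of its own
        return s1 + ((last,),)
    return s1[:-1] + (s1[-1] + (last,),)   # last item joins s1's last element


def subsequences(seq):
    """All subsequences obtained by removing exactly one item."""
    return {drop_item(seq, e, i)
            for e in range(len(seq)) for i in range(len(seq[e]))}


def generate(frequent):
    """Return (all candidates, candidates that survive pruning)."""
    freq = set(frequent)
    # Join step: s1 without its first item must equal s2 without its last item
    candidates = [merge(s1, s2) for s1 in frequent for s2 in frequent
                  if drop_first(s1) == drop_last(s2)]
    # Prune step: every one-item-shorter subsequence must be frequent
    survivors = [c for c in candidates if subsequences(c) <= freq]
    return candidates, survivors


# Frequent 3-sequences from the question; each element is a tuple of items
frequent3 = [
    ((1,), (2,), (3,)), ((1, 2, 3),), ((1,), (2,), (4,)), ((1,), (3,), (4,)),
    ((1, 2), (3,)), ((2, 3), (4,)), ((2,), (3,), (4,)), ((3,), (4, 5)),
]

candidates, survivors = generate(frequent3)
print(len(candidates), "candidates")
print("survived:", survivors)
```

Run on the eight frequent 3-sequences, this produces the six candidates listed above, and only <(1) (2) (3) (4)> passes the pruning step.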


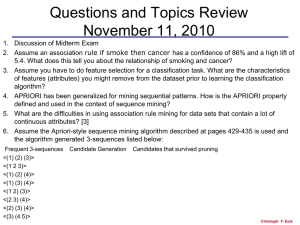

5. The Top 10 Data Mining Algorithms article says about k-means: “The greedy-descent nature of k-means on a non-convex cost also implies that the convergence is only to a local optimum, and indeed the algorithm is typically quite sensitive to the initial centroid locations…The local minima problem can be countered to some extent by **running the algorithm multiple times with different initial centroids**.” Explain why the suggestion in boldface is a potential solution to the local minimum problem. Propose a modification of the k-means algorithm that uses the suggestion!

Running k-means with different seeds can lead to different local minima of the k-means objective function (SSE); initial seeds that lie in the proximity of different local minima produce alternative results, some of which may have a lower SSE. [2]

Run k-means multiple times with different seeds (e.g. 20 times), compute the SSE of each clustering, and return the clustering with the lowest SSE as the result. [3]
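The proposed modification could be sketched as follows. This is a minimal illustration under several assumptions: squared Euclidean distance, initial centroids drawn at random from the data points, and an empty cluster simply keeping its previous centroid. The function names and the toy data are hypothetical.

```python
import random


def sq_dist(p, q):
    return sum((a - b) ** 2 for a, b in zip(p, q))


def kmeans(points, k, seed, max_iter=100):
    """One run of basic k-means; returns (centroids, labels, sse)."""
    rng = random.Random(seed)
    centroids = rng.sample(points, k)  # assumed initialization: k random points
    labels = None
    for _ in range(max_iter):
        # Assignment step: each point goes to its nearest centroid
        new_labels = [min(range(k), key=lambda j: sq_dist(p, centroids[j]))
                      for p in points]
        if new_labels == labels:       # converged (to a local optimum)
            break
        labels = new_labels
        # Update step: move each centroid to the mean of its members
        for j in range(k):
            members = [p for p, l in zip(points, labels) if l == j]
            if members:                # an empty cluster keeps its old centroid
                centroids[j] = tuple(sum(c) / len(members) for c in zip(*members))
    sse = sum(sq_dist(p, centroids[l]) for p, l in zip(points, labels))
    return centroids, labels, sse


def best_of_restarts(points, k, restarts=20):
    """Run k-means once per seed and keep the clustering with the lowest SSE."""
    runs = [kmeans(points, k, seed) for seed in range(restarts)]
    return min(runs, key=lambda run: run[2])


# Hypothetical toy data with three visible groups
points = [(0, 0), (0, 1), (1, 0), (5, 5), (5, 6), (6, 5),
          (10, 0), (10, 1), (11, 0)]

centroids, labels, sse = best_of_restarts(points, k=3, restarts=20)
print("lowest SSE over 20 runs:", round(sse, 3))
```

The restarts do not guarantee the global optimum; they only raise the chance that at least one run starts near a good minimum, at the cost of running the algorithm `restarts` times.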
