Location Management for Next-Generation Personal


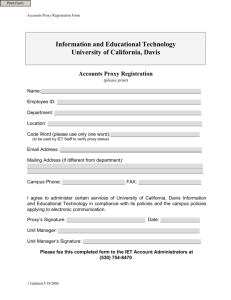

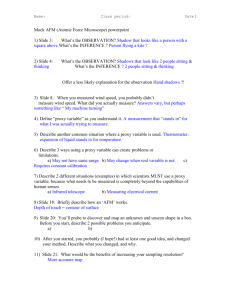

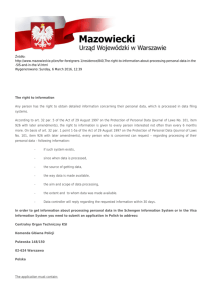

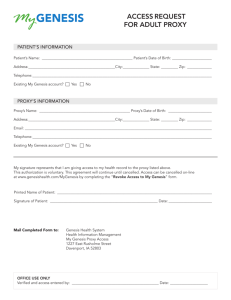

Lecture 2: Service and Data Management
Ing-Ray Chen
CS 6204 Mobile Computing, Virginia Tech, Fall 2005
Courtesy of G.G. Richard III for providing some of the slides for this chapter

Service Management in PCN Systems
• Managing services in mobile environments
• How does the server find the new VLR?
• Too much overhead in contacting the HLR
[Figure: a server and two VLRs]

PCN Systems: Proxy-Based Solution
• Per-user service proxy
[Figure: Server, Proxy, Client]

Per-User Service Proxy
• Service proxy
  – Maintains service context information
  – Forwards client requests to servers
  – Forwards server replies to clients
  – Tracks the location of the MU, thereby reducing communication costs

Static Service Proxy
• Proxy is located at a fixed location
• Inefficient data delivery route
[Figure: Server, Proxy, Client]

Mobile Service Proxy
• Proxy could move with the client if necessary
• Proxy informs servers of location changes
[Figure: Server and a Proxy/Client pair shown at three locations]

Location/Service Management Decoupled Model
• Traditionally, service and location management are decoupled
[Figure: HLR/VLR, Proxy, Client]

Integrated Location and Service Management
• "Per-user" based proxy services
• Service proxy co-locates (and moves) with the MU's location database
• Four possible schemes:
  – Centralized, fully distributed, dynamic anchor, and static anchor schemes

Integrated: Centralized Scheme
• The proxy is centralized and co-located with the HLR to minimize the cost of communicating with the HLR to track the MU
• When the MU moves to a different VLR, a location update operation is sent to the HLR/proxy
• Data delivery/call incurs a search operation at the HLR/proxy to locate the MU
• Data service route: Server -> proxy/HLR -> MU

Integrated: Fully Distributed Scheme
• Location and service handoffs occur when the MU moves to a new VLR
• Service proxy co-locates (moves) with the location database at the current VLR
• The proxy's move to the new VLR causes a location update to the HLR/server, and a context transfer
• A call requires a search operation at
the HLR to locate the MU
• Data service route: Server -> proxy/MU

Integrated: Static Anchor Scheme
• VLRs are grouped into anchor areas
• HLR points to the current anchor
• Proxy is co-located with the anchor at a fixed location until the MU moves to a different anchor area
• Intra-anchor movement
  – Anchor/proxy is not moved, and the location update is sent to the anchor without updating the HLR
• Inter-anchor movement
  – Anchor/proxy is moved with a context transfer cost, and a location update is sent to the HLR/servers
• Data/call delivery performs a search at the HLR to locate the current anchor and then the MU
• Service route: Server -> proxy/anchor -> MU

Integrated: Dynamic Anchor Scheme
• Same as static anchor, except that the anchor/proxy moves to the current VLR when there is a call delivery
• On a call delivery
  – A search at the HLR is performed
  – If the anchor is not the current serving VLR
    • Move the anchor/proxy; inform the HLR/server of the address change; and perform a context transfer
• Service route: Server -> proxy/anchor -> MU
• More advantageous than static anchor when CMR and SMR are high

Model Parameters
λ: the average rate at which the MU is being called.
σ: the average rate at which the MU moves across VLR boundaries.
γ: the average rate at which the MU requests services.
CMR: call-to-mobility ratio, i.e., λ / σ.
SMR: service-request-to-mobility ratio, i.e., γ / σ.
T: the average round-trip communication cost between a VLR and the HLR (or between a VLR and the server) per message.
t1: the average round-trip communication cost between the anchor and a VLR in an anchor area per message.
t2: the average round-trip communication cost between two neighboring VLRs in an anchor area per message.
t3: the average round-trip communication cost between two neighboring VLRs per message.
Mcs: the number of packets required to transfer the service context.
Ns: the number of server applications concurrently engaged by the MU.
PInA: the probability that an MU moves within the same anchor area when a VLR boundary crossing movement occurs.
POutA: the probability that an MU moves out of the current anchor area when a VLR boundary crossing movement occurs.

Cost Model
• Performance metric: total communication cost per time unit
  $C_{total} = C_{update}\,\sigma + C_{search}\,\lambda + C_{service}\,\gamma$
• Three basic operations
  – Location update ($C_{update}$): cost of updating the location of the MU and the service proxy (sometimes including a service context transfer)
  – Call delivery ($C_{search}$): cost of locating an MU to deliver a call
  – Data service request ($C_{service}$): cost for the MU to communicate with the server through the proxy

Costs for Centralized and Fully Distributed Schemes

| Scheme | Location update | Call delivery | Service request |
|---|---|---|---|
| Centralized | $C_{update} = T$: cost to inform the location database at the HLR of the new VLR | $C_{search} = T$: cost to locate the MU and deliver the call from the HLR to the VLR | $C_{service} = T + T$: round-trip cost from the MU to the proxy and from the proxy to the server |
| Fully distributed | $C_{update} = T + M_{cs} t_3 + N_s T$, where $T$ is the cost to inform the HLR of the new VLR, $M_{cs} t_3$ is the cost to transfer the service context between two neighboring VLRs for the proxy move, and $N_s T$ is the cost to update the $N_s$ application servers with the new location of the MU | $C_{search} = T$: cost to locate the MU and deliver the call | $C_{service} = T$: cost from the service proxy co-located at the current VLR to the server |

Performance Evaluation: Results
[Figure: Cost rate under different CMR and SMR values]

Performance Evaluation: Results
• Mobility rate fixed at 10 changes/hour; SMR = 1 to study the effect of varying CMR
• Low CMR
  – Static and dynamic anchor schemes perform better than the centralized and fully distributed schemes
• High CMR
  – Centralized is the best. Dynamic anchor is better than static anchor.
The reason is that dynamic anchor updates the HLR and moves the anchor to the current VLR, thereby reducing service request costs and location update costs
[Figure: Cost rate under different CMR values]

Performance Evaluation: Results
[Figure: Cost rate under different SMR values]
• Mobility rate is fixed at 10 changes/hour
• CMR = 1 to study the effect of SMR on cost rate
• Low SMR
  – Fully distributed scheme is the worst due to frequent movement of service proxy with mobility
• High SMR
  – Fully distributed scheme performs the best since the service proxy is co-located with the current VLR to lower the triangular cost

Performance Evaluation: Results
• Depending on the user's SMR, the "best" integrated scheme and decoupled scheme were compared
• Integrated scheme converges with the decoupled scheme at high SMR where the "influence" of mobility is less
• Integrated scheme is better than the basic scheme at high SMR due to triangular cost between the server and MU via HLR
[Figure: Comparison of integrated with decoupled scheme]

Integrated Location and Service Management in PCS: Conclusions
• Design Concept: Position the service proxy along with the location database of the MU
• Centralized scheme: Suited for low SMR and high CMR
• Distributed scheme: Best at high SMR and high CMR
• Dynamic anchor scheme: Works best for a wide range of CMR and SMR values except when service context transfer costs are high
• Static anchor scheme: Works reasonably well for a wide range of CMR and SMR values
• The best location/service integrated scheme always outperforms the best decoupled scheme, and the basic scheme

Communications Asymmetry in Mobile Wireless Environments
• Network asymmetry
  – In many cases, downlink bandwidth far exceeds uplink bandwidth
• Client-to-server ratio
  – Large client population, but few servers
• Data volume
  – Small requests, large responses
  – Downlink bandwidth more important
• Update-oriented communication
  – Updates likely affect a number of clients

Disseminating Data to
Wireless Hosts
• Broadcast-oriented dissemination makes sense for many applications
• Can be one-way or with feedback
  – Sports
  – Stock prices
  – New software releases (e.g., Netscape)
  – Chess matches
  – Music
  – Election coverage
  – Weather/traffic …

Dissemination: Pull
• Pull-oriented dissemination can run into trouble when demand is extremely high
  – Web servers crash
  – Bandwidth is exhausted
[Figure: one server surrounded by many clients]

Dissemination: Push
• Server pushes data to clients
• No need to ask for data
• Ideal for broadcast-based media (wireless)
[Figure: one server surrounded by many clients]

Broadcast Disks
[Figure: a server broadcasting data blocks 1 to 6; schedule of data blocks to be transmitted]

Broadcast Disks: Scheduling
[Figure: a round-robin schedule over blocks 1 to 6, and a priority schedule in which block 1 appears more often than blocks 2 and 3]

Priority Scheduling (2)
• Random
  – Randomize broadcast schedule
  – Broadcast "hotter" items more frequently
• Periodic (allows mobile hosts to sleep…)
  – Create a schedule that broadcasts hotter items more frequently…
  – …but schedule is fixed
  – "Broadcast Disks: Data Management…" paper uses this approach
  – Simplifying assumptions
    • Data is read-only
    • Schedule is computed and doesn't change…
    • Means access patterns are assumed the same

"Broadcast Disks: Data Management…"
• Order pages from "hottest" to coldest
• Partition into ranges ("disks"); pages in a range have similar access probabilities
• Choose broadcast frequency for each "disk"
• Split each disk into "chunks"
  – maxchunks = LCM(relative frequencies)
  – numchunks(J) = maxchunks / relativefreq(J)
• Broadcast program is then:
    for I = 0 to maxchunks - 1
      for J = 1 to numdisks
        Broadcast( C(J, I mod numchunks(J)) )

Sample Schedule, From Paper
[Figure: sample schedule with relative frequencies 4, 2, 1]

Hot For You Ain't Hot for Me
• Hottest data items are not necessarily the ones most frequently accessed by a particular client
• Access patterns may have changed
• Higher priority may be given to other clients
• Might be the only client that
considers this data important…
• Thus: need to consider not only probability of access (standard caching), but also broadcast frequency
• Observation: Hot items are more likely to be cached!

Broadcast Disks Paper: Caching
• Under traditional caching schemes, usually want to cache "hottest" data
• What to cache with broadcast disks?
• Hottest?
  – Probably not; that data will come around soon!
• Coldest?
  – Ummmm…not necessarily…
• Cache data with access probability significantly higher than broadcast frequency

Caching
• PIX algorithm (Acharya)
• Eject the page from local cache with the smallest value of:
  (probability of access) / (broadcast frequency)
• Means that pages that are more frequently accessed may be ejected if they are expected to be broadcast frequently…

Hybrid Push/Pull
• Balancing Push and Pull for Data Broadcast
• B = B0 + Bb
  – B0 is bandwidth dedicated to on-demand pull-oriented requests from clients
  – Bb is bandwidth allocated to broadcast
• B0 = 0%: "pure" push; clients needing a page simply wait
• B0 = 100%: schedule is totally request-based

Optimal Bandwidth Allocation between On Demand and Broadcast
• Assume there are n data items, each of size S
• Each packet is of size R
• The average time for the server to service an on-demand request is D = (S+R)/B0; let μ = 1/D be the service rate
• Each client generates requests at an average rate of r
• There are m clients, so the cumulative request rate is λ = m*r
• For on-demand requests, the average response time per request is T0 = (1 + queue length)*D, where "queue length" is given by utilization/(1 − utilization), with "utilization" defined as λ/μ (ref: queueing theory for M/M/1; take CS 5214, to be offered in Spring 2006)

Optimal Bandwidth Allocation between On Demand and Broadcast
• What are the best frequencies for broadcasting data items?
• Imielinski and Viswanathan showed that if there are n data items with popularity ratios p1, p2, …, pn, they should be broadcast with frequencies f1, f2, …, fn, where
  $f_i = \sqrt{p_i} \,/\, (\sqrt{p_1} + \sqrt{p_2} + \dots + \sqrt{p_n})$
  in order to minimize the average latency Tb for accessing a broadcast data item.

Optimal Bandwidth Allocation between On Demand and Broadcast
• T = Tb + T0 is the average time to access a data item
• Imielinski and Viswanathan's algorithm:
    Assign D1, D2, …, Di to the broadcast channel
    Assign Di+1, Di+2, …, Dn to the on-demand channel
    Determine optimal Bb, B0 to minimize T = Tb + T0:
      Compute T0 by modeling the on-demand channel as M/M/1 (or M/D/1)
      Compute Tb by using the optimal frequencies f1, f2, …, fn
      Compute the optimal Bb which minimizes T to within an acceptable threshold L

Mobile Caching: General Issues
• Mobile user/application issues:
  – Data access pattern (reads vs. writes?)
  – Data update rate
  – Communication/access cost
  – Mobility pattern of the client
  – Connectivity characteristics
    • disconnection frequency
    • available bandwidth
  – Data freshness requirements of the user
  – Context dependence of the information

Mobile Caching (2)
• Research questions pertaining to mobile computing:
  – How can client-side latency be reduced?
  – How can consistency be maintained among all caches and the server(s)?
  – How can we ensure high data availability in the presence of frequent disconnections?
  – How can we achieve high energy/bandwidth efficiency?
  – How do we determine the cost of a cache miss, and how do we incorporate this cost in the cache management scheme?
  – How do we manage location-dependent data in the cache?
  – How do we enable cooperation between multiple peer caches?

Mobile Caching (3)
• Cache organization issues:
  – Where do we cache? (client? proxy? service?)
  – How many levels of caching do we use (in the case of hierarchical caching architectures)?
  – What do we cache (i.e., when do we cache a data item and for how long)?
  – How do we invalidate cached items?
  – Who is responsible for invalidations?
  – What is the granularity at which the invalidation is done?
  – What data currency guarantees can the system provide to users?
  – What are the (real $$$) costs involved? How do we charge users?
  – What is the effect on query delay (response time) and system throughput (query completion rate)?

Weak vs. Strong Consistency
• Strong consistency
  – Value read is the most current value in the system
  – Invalidation on each write
  – Disconnections may cause loss of invalidation messages
  – Can also poll on every access
  – Impossible to poll if disconnected!
• Weak consistency
  – Value read may be "somewhat" out of date
  – TTL (time to live) associated with each value
• Can combine TTL with polling
  – e.g., polling to update the TTL, or retrieval of a new copy of the data item if it is out of date

Invalidation Report for Strong Cache Consistency
• Stateless: server does not maintain information about the cache content of the clients
  – Synchronous: an invalidation report is broadcast periodically, e.g., Broadcast Timestamp Scheme
  – Asynchronous: reports are sent on data modification
  – Property: a client cannot miss an update (say, because of sleep or disconnection); otherwise, it will need to discard the entire cache content
• Stateful: server keeps track of the cache contents of its clients
  – Synchronous: none
  – Asynchronous: a proxy is used for each client to maintain state information for items cached by the client and their last modification times; invalidation messages are sent to the proxy asynchronously
  – Clients can miss updates and resynchronize with the proxy (agent) upon reconnection

Asynchronous Stateful (AS) Scheme
• Whenever the server updates any data item, an invalidation report message is sent to the MH's HA via the wired line.
• A home location cache (HLC) is maintained in the HA to keep track of the data cached by the MH.
• The HLC is a list of records (x, T, invalid_flag), one for each data item x locally cached at the MH, where x is the data item ID and T is the timestamp of the last invalidation of x
• Invalidation reports are transmitted asynchronously and are buffered at the HA until an explicit acknowledgment is received from the specific MH. The invalid_flag is set to true for data items for which an invalidation message has been sent to the MH but no acknowledgment has been received
• Before answering queries from an application, the MH verifies whether the requested data item is in a consistent state. If it is valid, the MH satisfies the query; otherwise, an uplink request to the HA is issued.

Asynchronous Stateful (AS) Scheme
• When the MH receives an invalidation message from the HA, it discards that data item from the cache
• Each client maintains a cache timestamp indicating the timestamp of the last message received by the MH from the HA.
• The HA discards any invalidation messages from the HLC with a timestamp less than or equal to the cache timestamp t received from the MH, and only sends invalidation messages with a timestamp greater than t
• In sleep mode, the MH is unable to receive any invalidation message
• When an MH reconnects, it sends a probe message to its HA with its cache timestamp upon receiving a query. In response to this probe message, the HA sends an invalidation report.
• The AS scheme can handle arbitrary sleep patterns of the MH.

[Figure: Asynchronous Stateful Scheme and Broadcasting Timestamp Scheme (Synchronous Stateless)]
A. Kahol, S. Khurana, S. K. S. Gupta, P. K. Srimani, An Efficient Cache Maintenance Scheme for Mobile Environment

Analysis of AS Scheme
• Goal:
  – Cache miss probability
  – Mean query delay
• Model constraints: a single Mobile Switching Station (MSS) with N mobile hosts

Assumptions
• M data items, each of size ba bits
• No queuing of queries when the MH is disconnected
• Single wireless channel of bandwidth C
• All messages are queued and serviced FCFS
• A query is of size bq bits
• An invalidation is of size bi bits
• Processing overhead is ignored

MH Queries
• Queries follow a Poisson distribution with mean rate λ:
  $P(N(t) = n) = \frac{(\lambda t)^n}{n!}\, e^{-\lambda t}$
• Queries are uniformly distributed over all M items in the database

Data Item Updates
• Time between two consecutive updates to a data item is exponentially distributed with rate μ

MH States
• Mobile hosts alternate between sleep and awake modes
• s is the fraction of time in sleep mode; 0 ≤ s ≤ 1
• ω is the rate at which the state changes (sleeping or awake), i.e., the time t between two consecutive wake-ups is exponentially distributed with rate ω

Hit Ratio Estimation
• λe = (1 – s) λ = effective rate of query generation
• Queries are uniformly distributed over M items in the database
• Per-data-item (say x) query rate: λx = λe / M = (1 – s) λ / M

Hit Rate Estimation
• A query for a specific data item x by an MH is a miss in the local cache (which requires an uplink request) under either of two conditions:
  – [Event 1] During time t, item x has been invalidated at least once
  – [Event 2] Data item x has not been invalidated during time t, but the MH has slept at least once during t
[Figure: timeline marking t − t1 and t]

Calculating P[Event 1]
$$P[\text{Event 1}] = \int_0^\infty \lambda_x e^{-\lambda_x t} \left( \int_0^t \mu e^{-\mu u}\, du \right) dt = \frac{\mu}{\lambda_x + \mu} = \frac{M\mu}{(1-s)\lambda + M\mu}$$
• $\lambda_x e^{-\lambda_x t}$: probability density function of a query for x at time t
• $\int_0^t \mu e^{-\mu u}\, du$: probability of an invalidation in time [0, t]

Calculating P[Event 2]
$$P[\text{Event 2}] = \int_0^\infty \lambda_x e^{-\lambda_x t}\, e^{-\mu t} \int_0^t e^{-\lambda_x t_1}\, \omega e^{-\omega t_1} \left( 1 - e^{-\omega (t - t_1)} \right) dt_1\, dt$$
• $\lambda_x e^{-\lambda_x t}$: PDF of a query for x at time t
• $e^{-\mu t}$: probability of no invalidation in time [0, t]
• $\omega e^{-\omega t_1}$: PDF of a state change occurring at t1
• $1 - e^{-\omega (t - t_1)}$: probability that at least one sleep occurred in [0, t − t1]
• $e^{-\lambda_x t_1}$: probability of no query in time [t − t1, t]
[The closed-form result of this integral is not recoverable from the source.]

Pmiss and Phit
• Pmiss = P[Event 1] + P[Event 2]
• Phit = 1 − Pmiss

Mean Query Delay
• Let Tdelay be the mean query delay; then Tdelay = Phit * 0 + Pmiss * Tq = Pmiss * Tq, where Tq is the uplink query delay
• Model uplink queries as an M/D/1 queue (assuming there is a dedicated uplink channel of bandwidth C)
• Model invalidations on the downlink channel as an M/D/1 queue (assuming there is a dedicated downlink channel of bandwidth C)

[Figure: N MHs in cell; uplink query generation rate; query service rate]
[Figure: mean invalidation message arrival rate; invalidation service rate]
[Figure: effective arrival rate of invalidation messages; query service rate]

Mean Query Delay Estimation
• Combine M/D/1 queues
• Average delay of an uplink query:
  $T_q = \frac{2\mu_q - \lambda_q - \tilde{\lambda}_i}{2\mu_q \left( \mu_q - \lambda_q - \tilde{\lambda}_i \right)}$
• Resultant mean query delay: Tdelay = Pmiss * Tq

Disconnected Operation
• Disconnected operation is very desirable for mobile units
• Idea: attempt to cache/hoard data so that when disconnections occur, work (or play) can continue
• Major issues:
  – What data items (files) do we hoard?
  – When and how often do we perform hoarding?
  – How do we deal with cache misses?
  – How do we reconcile the cached version of the data item with the version at the server?

States of Operation
[Figure: three states: Data Hoarding, Disconnected, Reintegration]

Case Study: Coda
• Coda: file system developed at CMU that supports disconnected operation
• Cache/hoard files and resolve needed updates upon reconnection
• Replicate servers to improve availability
• What data items (files) do we hoard?
  – User selects and prioritizes
  – Hoard walking ensures that the cache contains the "most important" stuff
• When and how often do we perform hoarding?
  – Often, when connected

Coda (2)
• How do we deal with cache misses?
  – If disconnected, cannot
• How do we reconcile the cached version of the data item with the version at the server?
  – When connection is possible, can check before updating
  – When disconnected, use local copies
  – Upon reconnection, resolve updates
  – If there are hard conflicts, the user must intervene (i.e., resolution is manual and requires a human brain)
• Coda reduces the cost of checking items for consistency by grouping them into volumes
  – If a file within one of these groups is modified, then the volume is marked modified and the individual files within it can be checked

To Cache or Not?
• Compare static allocation "always cache" (SA-always), "never cache" (SA-never), vs.
dynamic allocation (DA)
• Cost model:
  – Each rm (read at mobile) costs 1 unit if the mobile does not have a copy; otherwise the cost is 0
  – Each ws (write at server) costs 1 unit if the mobile has a copy; otherwise the cost is 0
• A schedule of (ws, rm, rm, ws)
  – SA-always: Cost is 1+0+0+1 = 2
  – SA-never: Cost is 0+1+1+0 = 2
  – DA which allocates the item to the mobile after the first ws operation and deallocates after the second rm operation: Cost is 0 + allocation/deallocation cost = 0 + 1 (for allocation) + 1 (for deallocation) = 2
• A schedule of m ws operations followed by n rm operations? Cost = m (always) vs. n (never) vs. 1 (DA)

To Cache or Not? Sliding Window Dynamic Allocation Algorithm
• A dynamic sliding-window allocation scheme SW[k] maintains the last k relevant operations and makes the allocation or deallocation decision after each relevant operation
• Requires the mobile client to be always connected to maintain the history information
• Case "Data item is not cached at the mobile node"
  – If the window has more rm operations than ws operations, then allocate the data item at the mobile node
• Case "Data item is cached at the mobile node"
  – If the window has more ws operations than rm operations, then deallocate the data item from the mobile node
• Competitive w.r.t. the optimal offline algorithm

Web Caching: Case Study WebExpress
• Housel, B. C., Samaras, G., and Lindquist, D. B., "WebExpress: A Client/Intercept Based System for Optimizing Web Browsing in a Wireless Environment," Mobile Networks and Applications 3:419–431, 1998.
• System intercepts web browsing, providing sophisticated caching and bandwidth-saving optimizations for web activity in mobile environments
• Major features:
  – Low bandwidth in wireless networks: caching
    • Based on TTL-based cache consistency
    • When the TTL expires, check whether an object has been updated by using the CRC of the object
  – Verbosity of the HTTP protocol: perform protocol reduction
  – TCP connection setup time: try to re-use a single TCP connection
  – Many responses from web servers are very similar to those seen previously: use differencing rather than returning complete responses, particularly for CGI-based interactions

WebExpress (2)
[Figure: two intercepts (proxies), one on the client side and one on the server side, with the following annotations]
• A single TCP connection
• Reduce redundant HTTP header info
• Reinsert removed HTTP header info on the server side
• Caching on both the client and the wired network, plus differencing

References
1. I.R. Chen, B. Gu and S.T. Cheng, "On integrated location and service handoff schemes for reducing network cost in personal communication systems," IEEE Transactions on Mobile Computing, 2005.
2. A. Kahol, S. Khurana, S.K.S. Gupta and P.K. Srimani, "A strategy to manage cache consistency in a disconnected distributed environment," IEEE Trans. on Parallel and Distributed Systems, Vol. 12, No. 7, July 2001, pp. 686-700.
3. Chapter 3, F. Adelstein, S.K.S. Gupta, G.G. Richard III and L. Schwiebert, Fundamentals of Mobile and Pervasive Computing, McGraw Hill, 2005, ISBN: 0-07-141237-9.
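
Sketch: broadcast program generation. The chunking rule from the "Broadcast Disks: Data Management…" slide (maxchunks = LCM of the relative frequencies, numchunks(J) = maxchunks / relativefreq(J)) can be tried out with a few lines of Python. This is only an illustrative sketch: the function name `broadcast_program`, the list-of-lists representation of disks, and the 11-page example with relative frequencies 4, 2, 1 are assumptions made here, and the sketch assumes each disk's page count divides evenly into its chunks.

```python
from functools import reduce
from math import gcd


def lcm(a, b):
    """Least common multiple of two positive integers."""
    return a * b // gcd(a, b)


def broadcast_program(disks, rel_freqs):
    """Build one broadcast cycle.

    disks     -- list of page lists, hottest disk first (hypothetical layout)
    rel_freqs -- relative broadcast frequency of each disk
    """
    max_chunks = reduce(lcm, rel_freqs)
    chunked = []
    for pages, freq in zip(disks, rel_freqs):
        num_chunks = max_chunks // freq        # numchunks(J)
        size = -(-len(pages) // num_chunks)    # ceiling division
        chunked.append([pages[i * size:(i + 1) * size]
                        for i in range(num_chunks)])
    program = []
    for i in range(max_chunks):                # for I = 0 to maxchunks - 1
        for chunks in chunked:                 # for J = 1 to numdisks
            program.extend(chunks[i % len(chunks)])
    return program


# Three disks with relative frequencies 4 : 2 : 1 (illustrative data).
disks = [[1], [2, 3], [4, 5, 6, 7, 8, 9, 10, 11]]
program = broadcast_program(disks, [4, 2, 1])
```

In one cycle of this example, page 1 is broadcast four times, pages 2 and 3 twice each, and every page of the coldest disk once, which is the intended effect of the relative frequencies.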
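
Sketch: square-root broadcast frequencies and PIX replacement. The two rules quoted in the broadcast slides, the Imielinski and Viswanathan frequency assignment (fi proportional to sqrt(pi)) and the PIX eviction rule (eject the page with the smallest ratio of access probability to broadcast frequency), are both one-liners. The function names, the dictionary layout of the cache, and all numeric values below are hypothetical and chosen only for illustration; broadcast frequencies are assumed to be positive.

```python
from math import sqrt


def sqrt_rule_frequencies(popularity):
    """Return f_i = sqrt(p_i) / sum_j sqrt(p_j) for each popularity p_i."""
    roots = [sqrt(p) for p in popularity]
    total = sum(roots)
    return [r / total for r in roots]


def pix_victim(cache):
    """Pick the page to eject under PIX.

    cache maps page -> (access_probability, broadcast_frequency);
    the victim is the page with the smallest probability/frequency ratio.
    """
    return min(cache, key=lambda page: cache[page][0] / cache[page][1])


freqs = sqrt_rule_frequencies([0.64, 0.36])

# "hot" is accessed most often but is also broadcast very frequently,
# so its ratio (0.5 / 0.8) is the smallest and it is the one ejected.
cache = {"hot": (0.5, 0.8), "warm": (0.3, 0.1), "cold": (0.2, 0.1)}
victim = pix_victim(cache)
```

The example illustrates the point made on the caching slide: a frequently accessed page can still be the eviction victim when it is expected to come around on the broadcast soon.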
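
Sketch: static allocation versus SW[k]. The "To Cache or Not?" cost model and the sliding-window rule can be simulated directly. The sketch below is an assumption-laden illustration rather than the published algorithm: it charges one unit for every allocation and deallocation (as in the DA example on the slide), starts with an empty window, and uses the hypothetical names `static_cost` and `sw_k_cost`.

```python
from collections import deque


def static_cost(schedule, cached):
    """Cost of SA-always (cached=True) or SA-never (cached=False)."""
    cost = 0
    for op in schedule:
        if op == "rm" and not cached:   # read at mobile without a copy
            cost += 1
        elif op == "ws" and cached:     # write at server while mobile has a copy
            cost += 1
    return cost


def sw_k_cost(schedule, k, cached=False, switch_cost=1):
    """Run SW[k]; return (total cost, final cached state).

    switch_cost is the assumed price of one allocation or deallocation.
    """
    window = deque(maxlen=k)            # last k relevant operations
    cost = 0
    for op in schedule:
        if op == "rm" and not cached:
            cost += 1
        elif op == "ws" and cached:
            cost += 1
        window.append(op)
        reads = window.count("rm")
        writes = len(window) - reads
        if not cached and reads > writes:
            cached = True               # allocate a copy at the mobile
            cost += switch_cost
        elif cached and writes > reads:
            cached = False              # deallocate the copy
            cost += switch_cost
    return cost, cached


slide_schedule = ["ws", "rm", "rm", "ws"]
burst_schedule = ["ws"] * 5 + ["rm"] * 5
```

On the schedule of five writes followed by five reads, both static policies pay 5 units, while this SW[3] variant pays for two read misses plus one allocation. On the short four-operation schedule from the slide, the window reacts too late and the dynamic scheme costs more than either static policy.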
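
Sketch: cost rate of the centralized and fully distributed schemes. Assuming the total cost per time unit is the rate-weighted sum of the three per-operation costs (update cost weighted by σ, search cost by λ, service cost by γ), the per-operation costs listed on the "Costs for Centralized and Fully Distributed Schemes" slide can be compared numerically. The function names and every parameter value below are hypothetical; they are not the values used in the lecture's performance plots.

```python
def total_cost(c_update, c_search, c_service, sigma, lam, gamma):
    """Rate-weighted communication cost per time unit (assumed form)."""
    return c_update * sigma + c_search * lam + c_service * gamma


def centralized_cost(T, sigma, lam, gamma):
    # Update: T, search: T, service: T + T (MU -> proxy/HLR -> server).
    return total_cost(T, T, 2 * T, sigma, lam, gamma)


def fully_distributed_cost(T, t3, m_cs, n_s, sigma, lam, gamma):
    # Update: inform HLR (T) + context transfer (m_cs * t3) + inform n_s servers.
    c_update = T + m_cs * t3 + n_s * T
    return total_cost(c_update, T, T, sigma, lam, gamma)


# Illustrative parameters: sigma = lam = 10 per hour, so CMR = 1.
params = dict(T=1.0, sigma=10.0, lam=10.0)

low_smr_central = centralized_cost(gamma=10.0, **params)
low_smr_distrib = fully_distributed_cost(t3=0.2, m_cs=5, n_s=2,
                                         gamma=10.0, **params)
high_smr_central = centralized_cost(gamma=100.0, **params)
high_smr_distrib = fully_distributed_cost(t3=0.2, m_cs=5, n_s=2,
                                          gamma=100.0, **params)
```

With these made-up numbers the centralized scheme is cheaper at SMR = 1 and the fully distributed scheme is cheaper at SMR = 10, in line with the qualitative conclusions stated on the results slides.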