Tagging

Categorizing and Tagging Words

Chapter 5 of the NLTK book

Plan for tonight

• Quiz

• Part of speech tagging

– Use of the Python dictionary data type

– Application of regular expressions

• Planning for the rest of the semester

Understanding text

• “Understanding” written and spoken text is

a very complicated process

– Distinguishing characteristic of humans

– Machines do not understand

• Trying to make machines behave as though they

understand has led to interesting insights into the

nature of understanding in humans

• Humans acquire an ability to characterize aspects of

language as used in an instance, which aids in

seeing meaning in the text or speech.

• If we want machines to process autonomously, we

have to provide explicitly the extra information that

humans learn to append to what is written or

spoken.

Categorizing and Tagging Words

• Chapter goals: address these questions:

– What are lexical categories and how are they

used in natural language processing?

– What is a good Python data structure for

storing words and their categories?

– How can we automatically tag each word of a

text with its word class?

• This necessarily introduces some of the

fundamental concepts of natural language

processing.

– Potential use in many application areas

Word classes

• aka Lexical categories.

• The particular collection of tags used is the

tagset

– How nice it would be if there were only one

tagset, general enough for all use!

• Tags for parts of speech identify nouns,

verbs, adverbs, adjectives, articles, etc.

– Verbs are further tagged with tense, passive or

active voice, etc.

– There are lots of possibilities for the kinds of

tagging desired.

First tagging example

>>> text = nltk.word_tokenize("And now for something completely different")

>>> nltk.pos_tag(text)

[('And', 'CC'), ('now', 'RB'), ('for', 'IN'), ('something', 'NN'),

('completely', 'RB'), ('different', 'JJ')]

• First, tokenize

• The tags are cryptic. In this case,

– CC = coordinating conjunction

– RB = adverb

– IN = preposition

– NN = noun

– JJ = adjective

Example

import nltk

# from nltk.book import *

raw = raw_input("Enter a sentence: ")

text = nltk.word_tokenize(raw)

print text

result = nltk.pos_tag(text)

print result

Enter a sentence: And now for something

completely different

['And', 'now', 'for', 'something', 'completely',

'different']

[('And', 'CC'), ('now', 'RB'), ('for', 'IN'),

('something', 'NN'), ('completely', 'RB'),

('different', 'JJ')]

Homonyms

• Different words that are spelled the

same, but have different meanings

– They may be pronounced differently, or not

– Tags cannot be assigned to the word

independently. Word in context required.

More difficult example

import nltk

# from nltk.book import *

raw = raw_input("Enter a sentence: ")

text = nltk.word_tokenize(raw)

print text

result = nltk.pos_tag(text)

print result

Enter a sentence: They refuse to permit us to

obtain the refuse permit.

['They', 'refuse', 'to', 'permit', 'us', 'to',

'obtain', 'the', 'refuse', 'permit', '.']

[('They', 'PRP'), ('refuse', 'VBP'), ('to',

'TO'), ('permit', 'VB'), ('us', 'PRP'), ('to',

'TO'), ('obtain', 'VB'), ('the', 'DT'),

('refuse', 'NN'), ('permit', 'NN'), ('.', '.')]

What use is tagging?

• The goal is autonomous processing that

correctly communicates in

understandable language.

– Results:

• Automated telephone trees

• Spoken directions in GPS

• Directions provided by mapping programs

• Any system that uses free text input and

provides appropriate information responses

– shopping assistants, perhaps

– Medical diagnosis systems

– Many more

Spot check

• Describe a context in which program

“understanding” of free text is needed

and/or in which text or spoken response

– that was not preprogrammed – is

useful

Tagged Corpora

• In NLTK, a tagged token is a tuple

– (token, tag)

– This allows us to isolate the two components

and use each easily

• Tags in some corpora are written differently;

a conversion function is available

>>> tagged_token = nltk.tag.str2tuple('fly/NN')

>>> tagged_token

('fly', 'NN')

>>> tagged_token[0]

'fly'

>>> tagged_token[1]

'NN'

Steps to token, tag tuples

• Original text has each word followed by / and

the tag.

– Change to tokens.

• Each word and / and tag is a token

– Separate each token into a word, tag tuple

>>> sent = '''

... The/AT grand/JJ jury/NN commented/VBD on/IN a/AT number/NN of/IN

... other/AP topics/NNS ,/, among/IN them/PPO the/AT Atlanta/NP and/CC

... Fulton/NP-tl County/NN-tl purchasing/VBG departments/NNS which/WDT it/PPS

... said/VBD ``/`` ARE/BER well/QL operated/VBN and/CC follow/VB generally/RB

... accepted/VBN practices/NNS which/WDT inure/VB to/IN the/AT best/JJT

... interest/NN of/IN both/ABX governments/NNS ''/'' ./.

... '''

>>> [nltk.tag.str2tuple(t) for t in sent.split()]

[('The', 'AT'), ('grand', 'JJ'), ('jury', 'NN'), ('commented', 'VBD'),

('on', 'IN'), ('a', 'AT'), ('number', 'NN'), ... ('.', '.')]
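As a rough illustration of what the conversion does, the split can be sketched in plain Python 3 without NLTK. The helper name split_tagged is hypothetical (it is not part of NLTK), and the sketch assumes the tag is whatever follows the last "/" in the token.

```python
def split_tagged(token, sep="/"):
    """Split 'word/TAG' into a (word, tag) tuple at the last separator."""
    word, found, tag = token.rpartition(sep)
    if not found:
        # No separator present: keep the word and report no tag.
        return (token, None)
    return (word, tag.upper())

sent = "The/AT grand/JJ jury/NN commented/VBD on/IN a/AT number/NN"
tagged = [split_tagged(t) for t in sent.split()]
print(tagged[:3])
```

Splitting at the last separator rather than the first keeps tokens such as ,/, intact.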

Handling differing tagging styles

• Different corpora have different

conventions for tagging.

• NLTK harmonizes those and presents

them all as tuples

– tagged_words() method available for all

tagged text in the corpus.

• Note this will probably not work for arbitrarily

selected files. This is done for the files in the

corpus.

• NLTK simplified tagset

Simplified Tagset of NLTK

Other languages

• NLTK is used for languages other than

English, and for alphabets and writing

forms other than the Western

characters.

• See the example showing Four Indian

Languages (and tell me if they look

meaningful!)

Looking at text for part of speech

>>> from nltk.corpus import brown

>>> brown_news_tagged = brown.tagged_words(categories='news',

simplify_tags=True)

>>> tag_fd = nltk.FreqDist(tag for (word, tag) in brown_news_tagged)

>>> tag_fd.keys()

['N', 'P', 'DET', 'NP', 'V', 'ADJ', ',', '.', 'CNJ', 'PRO', 'ADV', 'VD', ...]

Patterns for nouns

• noun tags – N for common nouns, NP for

proper nouns

• What parts of speech occur before a noun?

– Construct list of bigrams

• word-tag pairs

– Frequency Distribution

>>> word_tag_pairs = nltk.bigrams(brown_news_tagged)

>>> list(nltk.FreqDist(a[1] for (a, b) in word_tag_pairs if b[1] == 'N'))

['DET', 'ADJ', 'N', 'P', 'NP', 'NUM', 'V', 'PRO', 'CNJ', '.', ',', 'VG', 'VN', ...]

A closer look

Let’s parse that carefully. What is in word_tag_pairs?

>>> word_tag_pairs = nltk.bigrams(brown_news_tagged)

>>> list(nltk.FreqDist(a[1] for (a, b) in word_tag_pairs if b[1] == 'N'))

['DET', 'ADJ', 'N', 'P', 'NP', 'NUM', 'V', 'PRO', 'CNJ', '.', ',', 'VG', 'VN', ...]

>>> word_tag_pairs[10]
(("Atlanta's", 'NP'), ('recent', 'ADJ'))

One bigram (Atlanta's recent) showing each word with its tag

>>> word_tag_pairs[10:15]
[(("Atlanta's", 'NP'), ('recent', 'ADJ')), (('recent', 'ADJ'), ('primary', 'N')), (('primary', 'N'),
('election', 'N')), (('election', 'N'), ('produced', 'VD')), (('produced', 'VD'), ('``', '``'))]

A slice of word_tag_pairs; each element is a pair of (word, tag) tuples

>>> word_tag_pairs[10][1]
('recent', 'ADJ')
>>> word_tag_pairs[10][1][0]
'recent'
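The same nesting can be explored on a small scale. The sketch below is plain Python 3 with a hand-made tagged list standing in for brown_news_tagged (the data is invented for illustration), and it uses zip in place of nltk.bigrams.

```python
from collections import Counter

# A tiny hand-made tagged text standing in for brown_news_tagged.
tagged = [("the", "DET"), ("recent", "ADJ"), ("primary", "N"),
          ("election", "N"), ("produced", "VD"), ("a", "DET"),
          ("result", "N")]

# zip pairs each item with its successor, which is what a bigram list holds.
word_tag_pairs = list(zip(tagged, tagged[1:]))

# a and b are (word, tag) tuples, so a[1] is the first tag and b[1] the second.
before_noun = Counter(a[1] for (a, b) in word_tag_pairs if b[1] == "N")

print(word_tag_pairs[1])        # one bigram: two (word, tag) tuples
print(word_tag_pairs[1][1][0])  # second tuple of that bigram, then its word
print(before_noun.most_common())
```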

Patterns for verbs

• Looking for verbs in the news text and

sorting by frequency:

>>> wsj = nltk.corpus.treebank.tagged_words(simplify_tags=True)

>>> word_tag_fd = nltk.FreqDist(wsj)

>>> [word + "/" + tag for (word, tag) in word_tag_fd if tag.startswith('V')]

['is/V', 'said/VD', 'was/VD', 'are/V', 'be/V', 'has/V', 'have/V', 'says/V',

'were/VD', 'had/VD', 'been/VN', "'s/V", 'do/V', 'say/V', 'make/V', 'did/VD',

'rose/VD', 'does/V', 'expected/VN', 'buy/V', 'take/V', 'get/V', 'sell/V',

'help/V', 'added/VD', 'including/VG', 'according/VG', 'made/VN', 'pay/V', ...]

for <…> in <frequency distribution> if <condition> -- format for

conditional distribution

import nltk

# from nltk.book import *

wsj=nltk.corpus.treebank.tagged_words(simplify_tags=True)

word_tag_fd = nltk.FreqDist(wsj)

print "Frequency distribution:"

print word_tag_fd

verbs= [word+"/"+tag for (word,tag) in word_tag_fd if

tag.startswith('V')]

print "Verbs: "

print verbs[:25]

Frequency distribution:

<FreqDist: (',', ','): 4885, ('the', 'DET'): 4038, ('.', '.'): 3828, ('of', 'P'): 2319, ('to',

'TO'): 2161, ('a', 'DET'): 1874, ('in', 'P'): 1554, ('and', 'CNJ'): 1505, ('*-1', ''): 1123,

('0', ''): 1099, ...>

Verbs:

['is/V', 'said/VD', 'are/V', 'was/VD', 'be/V', 'has/V', 'have/V', 'says/V', 'were/VD',

'had/VD', 'been/VN', "'s/V", 'do/V', 'say/V', 'make/V', 'did/VD', 'rose/VD',

'does/V', 'expected/VN', 'buy/V', 'take/V', 'get/V', 'sell/V', 'help/V', 'added/VD']

Conditional distribution

• Recall that conditional distribution requires an

event and a condition.

– We can treat the word as the condition and the tag as

the event.

For yield and cut, show the parts of speech.

>>> cfd1 = nltk.ConditionalFreqDist(wsj)
>>> cfd1['yield'].keys()
['V', 'N']
>>> cfd1['cut'].keys()
['V', 'VD', 'N', 'VN']

import nltk
wsj=nltk.corpus.treebank.tagged_words(simplify_tags=True)
cfd1=nltk.ConditionalFreqDist(wsj)
word = raw_input("Enter the word to explore: ")
if word in cfd1:
    wordkeys = cfd1[word].keys()
    print wordkeys
else:
    print "Word not found"

Distributions

• Or reverse the pairs, so the tags are the conditions and

the words are the events, and we see the words commonly

associated with a given tag:

>>> cfd2 = nltk.ConditionalFreqDist((tag, word) for\

(word, tag) in wsj)

>>> cfd2['VN'].keys()

['been', 'expected', 'made', 'compared', 'based',

'priced', 'used', 'sold',

'named', 'designed', 'held', 'fined', 'taken',

'paid', 'traded', 'said', ...]
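To make the condition/event idea concrete, here is a minimal sketch in plain Python 3 that builds both directions with a defaultdict of Counter objects. The names tags_by_word and words_by_tag and the tiny data set are made up for illustration; this is not how ConditionalFreqDist is implemented.

```python
from collections import Counter, defaultdict

tagged = [("cut", "V"), ("cut", "N"), ("cut", "VD"), ("yield", "N"),
          ("yield", "V"), ("cut", "V"), ("made", "VN"), ("been", "VN")]

# Condition = word, event = tag (plays the role of cfd1).
tags_by_word = defaultdict(Counter)
# Condition = tag, event = word (plays the role of cfd2).
words_by_tag = defaultdict(Counter)
for word, tag in tagged:
    tags_by_word[word][tag] += 1
    words_by_tag[tag][word] += 1

print(sorted(tags_by_word["cut"]))         # tags seen for one word
print(tags_by_word["cut"].most_common(1))  # most frequent tag for that word
print(sorted(words_by_tag["VN"]))          # words seen with one tag
```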

Verb tense

• Clarifying past tense and past participle,

look at some words that are the same for

both and the words that are surrounding

them:

>>> [w for w in cfd1.conditions() if 'VD' in cfd1[w] and 'VN' in cfd1[w]]

['Asked', 'accelerated', 'accepted', 'accused', 'acquired', 'added', 'adopted', ...]

>>> idx1 = wsj.index(('kicked', 'VD'))

>>> wsj[idx1-4:idx1+1]

[('While', 'P'), ('program', 'N'), ('trades', 'N'), ('swiftly', 'ADV'),

('kicked', 'VD')]

>>> idx2 = wsj.index(('kicked', 'VN'))

>>> wsj[idx2-4:idx2+1]

[('head', 'N'), ('of', 'P'), ('state', 'N'), ('has', 'V'), ('kicked', 'VN')]

Spot check

• Get the list of past participles found with

cfd2['VN'].keys()

• Collect all the context for each by

showing word-tag pair immediately

before and immediately after each.

>>> cfd2 = nltk.ConditionalFreqDist((tag, word) for\

(word, tag) in wsj)

Adjectives and Adverbs

• … and other parts of speech. We can

find them all, examine their context, etc.

Example, looking at a word

• “often” how is it used in common

English usage?

>>> brown_learned_text = brown.words(categories='learned')

>>> sorted(set(b for (a, b) in nltk.ibigrams(brown_learned_text) if a == 'often'))

[',', '.', 'accomplished', 'analytically', 'appear', 'apt', 'associated', 'assuming',

'became', 'become', 'been', 'began', 'call', 'called', 'carefully', 'chose', ...]

This gives us a set of sorted words, from the bigrams in the indicated

text, where the first word is “often”

>>> brown_lrnd_tagged = brown.tagged_words(categories='learned', simplify_tags=True)

>>> tags = [b[1] for (a, b) in nltk.ibigrams(brown_lrnd_tagged) if a[0] == 'often']

>>> fd = nltk.FreqDist(tags)

>>> fd.tabulate()

 VN    V   VD  DET  ADJ  ADV    P  CNJ    ,   TO   VG   WH  VBZ    .
 15   12    8    5    5    4    4    3    3    1    1    1    1    1

This gives us the distribution of the parts of speech of the words following “often”,

sorted from most to least frequent

Finding patterns

• We saw how to use regular expressions to extract

patterns on words, now let’s extract patterns of

parts of speech.

– We look at each three-word phrase in the text, and

find <verb> to <verb>:

import nltk
from nltk.corpus import brown

def process(sentence):
    # Slide a three-word window over the tagged sentence
    for (w1,t1), (w2,t2), (w3,t3) in nltk.trigrams(sentence):
        if (t1.startswith('V') and t2 == 'TO' and t3.startswith('V')):
            print w1, w2, w3

>>> for tagged_sent in brown.tagged_sents():
...     process(tagged_sent)
...

combined to achieve

continue to place

serve to protect

wanted to wait

allowed to place

expected to become

...
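The same pattern search can be tried without a corpus. The following is a sketch in plain Python 3: verb_to_verb is a hypothetical helper name, the sentence is invented, and zip over three offsets stands in for nltk.trigrams.

```python
def verb_to_verb(tagged_sentence):
    """Return the <verb> to <verb> phrases found in one tagged sentence."""
    found = []
    # zip over three offsets of the sentence yields consecutive triples.
    triples = zip(tagged_sentence, tagged_sentence[1:], tagged_sentence[2:])
    for (w1, t1), (w2, t2), (w3, t3) in triples:
        if t1.startswith("V") and t2 == "TO" and t3.startswith("V"):
            found.append(" ".join((w1, w2, w3)))
    return found

sent = [("They", "PRP"), ("wanted", "VBD"), ("to", "TO"), ("wait", "VB"),
        ("and", "CC"), ("went", "VBD"), ("to", "IN"), ("town", "NN")]
print(verb_to_verb(sent))
```

Note that "went to town" is not reported: there "to" is tagged as a preposition, so the test on the middle tag fails. The pattern is matched on tags, not on the spelling of the words.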

POS ambiguities

• Looking at how the words are tagged

may help us understand the tagging

>>> brown_news_tagged = brown.tagged_words(categories='news',
...                                        simplify_tags=True)
>>> data = nltk.ConditionalFreqDist((word.lower(), tag)
...                                 for (word, tag) in brown_news_tagged)
>>> for word in data.conditions():
...     if len(data[word]) > 3:
...         tags = data[word].keys()
...         print word, ' '.join(tags)
...
best ADJ ADV NP V
better ADJ ADV V DET
close ADV ADJ V N
cut V N VN VD
even ADV DET ADJ V

Recall that a Conditional Frequency Distribution (ConditionalFreqDist())

has an event and a condition.

data has conditions, data.conditions(), and each condition has keys,

data[…].keys()

Python Dictionary

• Allows mapping between arbitrary types

(not necessary to have a numeric index)

• Note the addition of defaultdict

– returns a value for a non-existing entry

• uses the default value for the type

• 0 for number, [] for empty list, etc.

– We can specify a default value to use

Tagging rare words

with default value

A lambda function is an unnamed function that can be defined and

used wherever a function object is required.

>>> alice = nltk.corpus.gutenberg.words('carroll-alice.txt')

>>> vocab = nltk.FreqDist(alice)

>>> v1000 = list(vocab)[:1000]

>>> mapping = nltk.defaultdict(lambda: 'UNK')

>>> for v in v1000:
...     mapping[v] = v
...

>>> alice2 = [mapping[v] for v in alice]

>>> alice2[:100]

['UNK', 'Alice', "'", 's', 'Adventures', 'in',

'Wonderland', 'by', 'UNK', 'UNK',

'UNK', 'UNK', 'CHAPTER', 'I', '.', 'UNK', 'the',

'Rabbit', '-', 'UNK', 'Alice',

'was', 'beginning', 'to', 'get', 'very', 'tired', 'of',

'sitting', 'by', 'her', ...]
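A scaled-down version of the same idea, as a sketch in plain Python 3: a short invented sentence replaces the Alice text, collections.Counter replaces nltk.FreqDist, and only the three most frequent words are kept.

```python
from collections import Counter, defaultdict

words = "the cat sat on the mat and the dog sat on the log".split()

# Keep only the n most frequent word types.
n = 3
frequent = [w for w, count in Counter(words).most_common(n)]

# Any key that was never assigned falls back to 'UNK'.
mapping = defaultdict(lambda: "UNK")
for w in frequent:
    mapping[w] = w

replaced = [mapping[w] for w in words]
print(replaced)
```

The lambda takes no arguments because defaultdict calls it with none whenever a missing key is looked up.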

Spot check

• Look at the previous example

• For each line of the code, determine

exactly what it does. Think first, then

insert print commands to show each

result.

Incrementally updating a

dictionary

• Initialize an empty defaultdict

• Process each part of speech tag in the text

• If it has not been seen before, it will have zero count

• Each time the tag is seen, increment its counter

• itemgetter(n) returns a function that can be called

on some other sequence object to obtain the nth

element

>>> counts = nltk.defaultdict(int)

>>> from nltk.corpus import brown

>>> for (word, tag) in brown.tagged_words(categories='news', simplify_tags=True):
...     counts[tag] += 1

...

>>> counts['N']

22226

>>> list(counts)

['FW', 'DET', 'WH', "''", 'VBZ', 'VB+PPO', "'", ')', 'ADJ', 'PRO', '*', '-', ...]

>>> from operator import itemgetter

>>> sorted(counts.items(), key=itemgetter(1), reverse=True)

[('N', 22226), ('P', 10845), ('DET', 10648), ('NP', 8336), ('V', 7313), ...]

>>> [t for t, c in sorted(counts.items(), key=itemgetter(1), reverse=True)]

['N', 'P', 'DET', 'NP', 'V', 'ADJ', ',', '.', 'CNJ', 'PRO', 'ADV', 'VD', ...]

Look at the parameters of sorted. What does each represent?
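To experiment with the parameters of sorted without loading a corpus, a small stand-in can help. This sketch is plain Python 3, and the tag list is invented.

```python
from collections import defaultdict
from operator import itemgetter

tags = ["N", "DET", "N", "V", "DET", "N", "ADJ"]

counts = defaultdict(int)      # unseen tags start at int() == 0
for tag in tags:
    counts[tag] += 1

# key=itemgetter(1) sorts the (tag, count) pairs by their count;
# reverse=True puts the largest count first.
by_count = sorted(counts.items(), key=itemgetter(1), reverse=True)
print(by_count)
print([t for t, c in by_count])
```

Changing itemgetter(1) to itemgetter(0) sorts by tag name instead, which is a quick way to see what the key parameter controls.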

Another pattern for updating

import nltk

last_letters = nltk.defaultdict(list)

words = nltk.corpus.words.words('en')

for word in words:

key = word[-2:]

last_letters[key].append(word)

print last_letters['ly']

['abactinally', 'abandonedly', 'abasedly',

'abashedly', 'abashlessly', 'abbreviately',

'abdominally', 'abhorrently', 'abidingly',

'abiogenetically', 'abiologically', ...]

Note that each entry in the dictionary has a unique key

The value part of the entry is a list

Index

• Because accumulating a list of words is so

common, NLTK defines a defaultdict(list)

– nltk.Index

>>> anagrams = nltk.Index((''.join(sorted(w)), w) for w in words)

>>> anagrams['aeilnrt']

['entrail', 'latrine', 'ratline', 'reliant', 'retinal',

'trenail']

What does this do?

Exactly the same as this:

>>> anagrams = nltk.defaultdict(list)

>>> for word in words:
...     key = ''.join(sorted(word))
...     anagrams[key].append(word)
...

>>> anagrams['aeilnrt']

Inverted dictionary

>>> pos = {'colorless': 'ADJ', 'ideas': 'N', 'sleep': 'V', 'furiously': 'ADV'}

>>> pos2 = dict((value, key) for (key, value) in pos.items())

>>> pos2['N']
'ideas'

pos is a dictionary created with { … }

pos2 is a dictionary created with dict( … )

>>> pos

{'furiously': 'ADV', 'sleep': 'V', 'ideas': 'N',

'colorless': 'ADJ'}

>>> pos2

{'ADV': 'furiously', 'N': 'ideas', 'ADJ': 'colorless',

'V': 'sleep'}

>>> pos.update({'cats': 'N', 'scratch': 'V', 'peacefully': 'ADV', 'old': 'ADJ'})

>>> pos2 = nltk.defaultdict(list)

>>> for key, value in pos.items():

...     pos2[value].append(key)

...

>>> pos2['ADV']

['peacefully', 'furiously']

Automatic tagging

• Default

– Tag each token with the most common tag.

>>> tags = [tag for (word, tag) in brown.tagged_words(categories='news')]

>>> nltk.FreqDist(tags).max()

'NN'

NN (noun) is the most common tag in the given text.

>>> raw = 'I do not like green eggs and ham, I do not like them Sam I am!'

>>> tokens = nltk.word_tokenize(raw)

>>> default_tagger = nltk.DefaultTagger('NN')

>>> default_tagger.tag(tokens)

[('I', 'NN'), ('do', 'NN'), ('not', 'NN'), ('like', 'NN'), ('green', 'NN'),

('eggs', 'NN'), ('and', 'NN'), ('ham', 'NN'), (',', 'NN'), ('I', 'NN'),

('do', 'NN'), ('not', 'NN'), ('like', 'NN'), ('them', 'NN'), ('Sam', 'NN'),

('I', 'NN'), ('am', 'NN'), ('!', 'NN')]

NLTK includes an evaluate function for each tagger

>>> default_tagger.evaluate(brown_tagged_sents)
0.13089484257215028

Not very good!

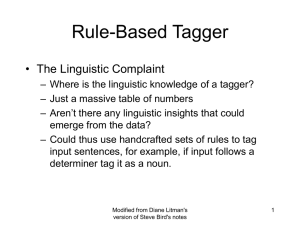

Regular expression tagger

• Use expected patterns to assign tags

>>> patterns = [
...     (r'.*ing$', 'VBG'),                # gerunds
...     (r'.*ed$', 'VBD'),                 # simple past
...     (r'.*es$', 'VBZ'),                 # 3rd singular present
...     (r'.*ould$', 'MD'),                # modals
...     (r'.*\'s$', 'NN$'),                # possessive nouns
...     (r'.*s$', 'NNS'),                  # plural nouns
...     (r'^-?[0-9]+(\.[0-9]+)?$', 'CD'),  # cardinal numbers
...     (r'.*', 'NN')                      # nouns (default)
... ]

Tag applied as soon as a match is found. If no match, defaults to NN

>>> regexp_tagger = nltk.RegexpTagger(patterns)

>>> regexp_tagger.tag(brown_sents[3])

[('``', 'NN'), ('Only', 'NN'), ('a', 'NN'), ('relative', 'NN'), ('handful', 'NN'),

('of', 'NN'), ('such', 'NN'), ('reports', 'NNS'), ('was', 'NNS'), ('received', 'VBD'),

("''", 'NN'), (',', 'NN'), ('the', 'NN'), ('jury', 'NN'), ('said', 'NN'), (',', 'NN'),

('``', 'NN'), ('considering', 'VBG'), ('the', 'NN'), ('widespread', 'NN'), ...]

>>> regexp_tagger.evaluate(brown_tagged_sents)

0.20326391789486245

better
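The first-match behaviour can be sketched with the standard re module alone. This is an illustration in plain Python 3, not the code inside nltk.RegexpTagger; regexp_tag is a hypothetical helper name, and the decimal point in the number pattern is escaped here.

```python
import re

# (pattern, tag) pairs, tried in order; the last pattern matches anything.
PATTERNS = [
    (r".*ing$", "VBG"),
    (r".*ed$", "VBD"),
    (r".*es$", "VBZ"),
    (r".*ould$", "MD"),
    (r".*'s$", "NN$"),
    (r".*s$", "NNS"),
    (r"^-?[0-9]+(\.[0-9]+)?$", "CD"),
    (r".*", "NN"),
]
COMPILED = [(re.compile(p), tag) for p, tag in PATTERNS]

def regexp_tag(tokens):
    """Tag each token with the tag of the first pattern that matches it."""
    tagged = []
    for token in tokens:
        for regex, tag in COMPILED:
            if regex.match(token):
                tagged.append((token, tag))
                break   # stop at the first match, so pattern order matters
    return tagged

print(regexp_tag(["reports", "was", "received", "considering", "the", "13.5"]))
```

Because matching stops at the first hit, "was" picks up the plural-noun tag from the .*s$ pattern. This is the same kind of error visible in the tagged output above, and one reason the accuracy stays low.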

Lookup tagger

• Find the most common words and store

their usual tag.

>>> fd = nltk.FreqDist(brown.words(categories='news'))

>>> cfd = nltk.ConditionalFreqDist(brown.tagged_words(categories='news'))

>>> most_freq_words = fd.keys()[:100]

>>> likely_tags = dict((word, cfd[word].max()) for word in most_freq_words)

>>> baseline_tagger = nltk.UnigramTagger(model=likely_tags)

>>> baseline_tagger.evaluate(brown_tagged_sents)

0.45578495136941344

Look at this carefully

Still better

Refined lookup

• Assign tags to words that are not nouns,

and default others to noun.

>>> baseline_tagger = nltk.UnigramTagger(model=likely_tags,
...                                      backoff=nltk.DefaultTagger('NN'))
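The lookup-plus-default idea can be sketched in a few lines of plain Python 3. The training pairs are invented, lookup_tag is a hypothetical helper name, and a dictionary get with a default value stands in for the backoff tagger.

```python
from collections import Counter, defaultdict

train = [("the", "AT"), ("jury", "NN"), ("said", "VBD"), ("the", "AT"),
         ("report", "NN"), ("said", "VBD"), ("the", "AT"), ("can", "MD"),
         ("can", "NN"), ("can", "MD")]

word_counts = Counter(word for word, tag in train)
tag_counts = defaultdict(Counter)
for word, tag in train:
    tag_counts[word][tag] += 1

# Store the most likely tag for the 3 most frequent words only.
likely_tags = {word: tag_counts[word].most_common(1)[0][0]
               for word, count in word_counts.most_common(3)}

def lookup_tag(tokens, model, default="NN"):
    """Use the stored tag when the word is known, else the default tag."""
    return [(tok, model.get(tok, default)) for tok in tokens]

print(lookup_tag(["the", "can", "said", "verdict"], likely_tags))
```

Raising the number of stored words trades memory for coverage, which is the relationship the next slide's title refers to.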

Model size and performance

Evaluation

• Gold standard test data

– Corpus that has been manually annotated

and carefully evaluated.

– Test the tagging technique against the test

case, where the right answers are known. If

it does well there, assume it does well in

general.

N-gram tagging

• Unigram

– Use the most frequent tag for a word

– Must have a “gold standard” for reference

>>> from nltk.corpus import brown

>>> brown_tagged_sents = brown.tagged_sents(categories='news')

>>> brown_sents = brown.sents(categories='news')

>>> unigram_tagger = nltk.UnigramTagger(brown_tagged_sents)

>>> unigram_tagger.tag(brown_sents[2007])

[('Various', 'JJ'), ('of', 'IN'), ('the', 'AT'), ('apartments', 'NNS'),

('are', 'BER'), ('of', 'IN'), ('the', 'AT'), ('terrace', 'NN'), ('type', 'NN'),

(',', ','), ('being', 'BEG'), ('on', 'IN'), ('the', 'AT'), ('ground', 'NN'),

('floor', 'NN'), ('so', 'QL'), ('that', 'CS'), ('entrance', 'NN'), ('is', 'BEZ'),

('direct', 'JJ'), ('.', '.')]

>>> unigram_tagger.evaluate(brown_tagged_sents)

0.9349006503968017

Testing on the same data as training.

Separate training and testing

If training and evaluation are on the same data, we certainly expect a very

good performance!

More realistically, train on part of the data and test on the rest.

>>> size = int(len(brown_tagged_sents) * 0.9)

>>> size

4160

>>> train_sents = brown_tagged_sents[:size]   # train on the first 4160 sentences
>>> test_sents = brown_tagged_sents[size:]    # test on the rest of the sentences

>>> unigram_tagger = nltk.UnigramTagger(train_sents)

>>> unigram_tagger.evaluate(test_sents)

0.81202033290142528

The testing data is now different from the

training data. So, this is a better test of the

process.
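The effect of separating the data can be seen even on a toy example. The sketch below is plain Python 3 with invented sentences; train_unigram and accuracy are hypothetical helpers, not NLTK functions, and the split is 75/25 only because the data set is so small.

```python
from collections import Counter, defaultdict

def train_unigram(tagged_sents):
    """Map each word to the tag it most often carries in the training data."""
    counts = defaultdict(Counter)
    for sent in tagged_sents:
        for word, tag in sent:
            counts[word][tag] += 1
    return {word: c.most_common(1)[0][0] for word, c in counts.items()}

def accuracy(model, tagged_sents):
    """Fraction of tokens whose predicted tag equals the gold tag."""
    correct = total = 0
    for sent in tagged_sents:
        for word, gold in sent:
            correct += (model.get(word) == gold)
            total += 1
    return correct / total

sents = [[("the", "AT"), ("dog", "NN"), ("barks", "VBZ")],
         [("the", "AT"), ("cat", "NN"), ("sleeps", "VBZ")],
         [("a", "AT"), ("dog", "NN"), ("sleeps", "VBZ")],
         [("the", "AT"), ("bird", "NN"), ("sings", "VBZ")]]

size = int(len(sents) * 0.75)
train_sents, test_sents = sents[:size], sents[size:]
model = train_unigram(train_sents)
print(accuracy(model, train_sents), accuracy(model, test_sents))
```

The score on the training sentences is perfect, while the held-out sentence contains words the model never saw, so its score is much lower.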

Your turn

• Experiment with this tagger.

• Does it matter if you train on the first part or

the last part?

• What is the effect of training on 80% of the

data and testing on the other 20%?

• Notice that the training and testing, though on

different sentences, are all from the same

category of the Brown corpus. How well

would you expect the training to do in a

different corpus? If the corpus was also from

a news category? If it was from a novel?

General N-Gram Tagging

• Combine current word and the part of

speech tags of the previous n-1 words to

give the current word some context.

Bigram tagger

>>> bigram_tagger = nltk.BigramTagger(train_sents)

>>> bigram_tagger.tag(brown_sents[2007])

[('Various', 'JJ'), ('of', 'IN'), ('the', 'AT'), ('apartments', 'NNS'),

('are', 'BER'), ('of', 'IN'), ('the', 'AT'), ('terrace', 'NN'),

('type', 'NN'), (',', ','), ('being', 'BEG'), ('on', 'IN'), ('the', 'AT'),

('ground', 'NN'), ('floor', 'NN'), ('so', 'CS'), ('that', 'CS'),

('entrance', 'NN'), ('is', 'BEZ'), ('direct', 'JJ'), ('.', '.')]

This is the precision/recall tradeoff of information retrieval

>>> unseen_sent = brown_sents[4203]

>>> bigram_tagger.tag(unseen_sent)

[('The', 'AT'), ('population', 'NN'), ('of', 'IN'), ('the', 'AT'), ('Congo', 'NP'),

('is', 'BEZ'), ('13.5', None), ('million', None), (',', None), ('divided', None),

('into', None), ('at', None), ('least', None), ('seven', None), ('major', None),

('``', None), ('culture', None), ('clusters', None), ("''", None), ('and', None),

('innumerable', None), ('tribes', None), ('speaking', None), ('400', None),

('separate', None), ('dialects', None), ('.', None)]

>>> bigram_tagger.evaluate(test_sents)
0.10276088906608193

Reliance on context not seen in training reduces accuracy.

So, what’s the problem?

• Now, we are matching pairs of words and a

pair is less likely to have occurred before.

• Using context provides greater accuracy,

when it is able to find a match. However,

frequently, it will not find a match.

• Compromise – use the most accurate

tagger for what it can do, then back it up

with another tagger for the parts that do

not get tagged.

Combining taggers

• Use the benefits of several types of

taggers

– Try the bigram tagger

– When it is unable to find a tag, use the

unigram tagger

– If that fails, then use the default tagger

>>> t0 = nltk.DefaultTagger('NN')

>>> t1 = nltk.UnigramTagger(train_sents, backoff=t0)

>>> t2 = nltk.BigramTagger(train_sents, backoff=t1)

>>> t2.evaluate(test_sents)

0.84491179108940495
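The backoff chain can be imitated in plain Python 3 to see the order of decisions. This is a simplified sketch with invented training sentences; the functions train and tag are hypothetical, and the bigram context here is just the previous tag plus the current word.

```python
from collections import Counter, defaultdict

def train(tagged_sents):
    """Collect unigram and bigram tag counts from tagged sentences."""
    uni, bi = defaultdict(Counter), defaultdict(Counter)
    for sent in tagged_sents:
        prev = None                      # tag of the previous word
        for word, tag in sent:
            uni[word][tag] += 1
            bi[(prev, word)][tag] += 1
            prev = tag
    return uni, bi

def tag(tokens, uni, bi, default="NN"):
    """Try the bigram context first, then the word alone, then the default."""
    out, prev = [], None
    for word in tokens:
        if (prev, word) in bi:
            t = bi[(prev, word)].most_common(1)[0][0]
        elif word in uni:
            t = uni[word].most_common(1)[0][0]
        else:
            t = default
        out.append((word, t))
        prev = t
    return out

train_sents = [[("they", "PPSS"), ("permit", "VB"), ("it", "PPO")],
               [("the", "AT"), ("permit", "NN"), ("expired", "VBD")],
               [("a", "AT"), ("permit", "NN"), ("helps", "VBZ")]]
uni, bi = train(train_sents)
print(tag(["they", "permit", "the", "permit", "today"], uni, bi))
```

In this toy run "permit" is tagged as a verb after a pronoun and as a noun after an article, "the" falls back to the unigram counts because it was never seen after a verb, and the unknown word "today" receives the default tag.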

Spot Check

• Note the order of appearance of the

taggers. Why is that?

• Extend to a trigram tagger, t3, which

backs off to t2.

Assignment for Chapter 5

• Assignment for two weeks:

• NLTK chapter 5: # 3, 12

• Program: (be sure to work alone!)

– Choose one of these: 5.25, 5.30, 5.33