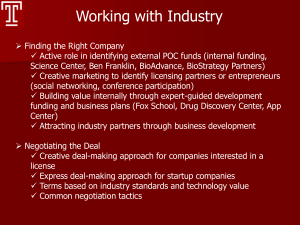

Clustering and Partitioning for

Spatial and Temporal Data Mining

Vasilis Megalooikonomou

Data Engineering Laboratory (DEnLab)

Dept. of Computer and Information Sciences

Temple University

Philadelphia, PA

www.cis.temple.edu/~vasilis

V. Megalooikonomou, Temple University

Outline

• Introduction

– Motivation – Problems:

• Spatial domain

• Time domain

– Challenges

• Spatial data

– Partitioning and Clustering

– Detection of discriminative patterns

– Results

• Temporal data

– Partitioning

– Vector Quantization

– Results

• Conclusions - Discussion

Introduction

• Large spatial and temporal databases

• Meta-analysis of data pooled from multiple studies

• Goal: To understand patterns and discover associations, regularities and anomalies in spatial and temporal data

Problem

Spatial Data Mining:

Given a large collection of spatial data, e.g., 2D or 3D images, and other data, find interesting things, i.e.:

• associations among image data or among image and non-image data

• discriminative areas among groups of images

• rules/patterns

• similar images to a query image (queries by content)

Challenges

• How to apply data mining techniques to images?

• Learning from images directly

• Heterogeneity and variability of image data

• Preprocessing (segmentation, spatial normalization, etc.)

• Exploration of high correlation between neighboring objects

• Large dimensionality

• Complexity of associations

• Efficient management of topological/distance information

• Spatial knowledge representation / Spatial Access Methods (SAMs)

Example: Association Mining – Spatial Data

• Discover associations among spatial and non-spatial data:

• Images {i1, i2,…, iL}

• Spatial regions {s1, s2,…, sK}

• Non-spatial variables {c1, c2,…, cM}

[Figure: example images i1 to i7, each annotated with the non-spatial variables associated with it (among c1, c2, c3, c6, c7, c9)]

Example: fMRI contrast maps

[Figure: fMRI contrast maps, panels: Control, Patient]

Applications

Medical Imaging, Bioinformatics, Geography, Meteorology, etc.

Voxel-based Analysis

• No model on the image data

• Each voxel’s changes analyzed independently - a map of statistical significance is built

• Discriminatory significance measured by statistical tests (t-test, ranksum test, F-test, etc.)

• Statistical Parametric Mapping (SPM)

• Significance of associations measured by chi-squared test, Fisher’s exact test (a contingency table for each pair of vars)

• Cluster voxels by findings

[V. Megalooikonomou, C. Davatzikos, E. Herskovits, SIGKDD 1999]

Analysis by grouping of voxels

• Grouping of voxels (atlas-based)

• Prior knowledge increases sensitivity

• Data reduction: 10^7 voxels → R regions (structures)

• Map a ROI onto at least one region

• As good as the atlas being used

• M non-spatial variables, R regions

• Analysis

• Categorical structural variables

• M x R contingency tables, Chi-square/Fisher exact test

• multiple comparison problem

• log-linear analysis, multivariate Bayesian

• Continuous structural variables

• Logistic regression, Mann-Whitney

Dynamic Recursive Partitioning

• Adaptive partitioning of a 3D volume

• Partitioning criterion:

discriminative power of feature(s) of hyper-rectangle and

size of hyper-rectangle

• Extract features from discriminative regions

• Reduce multiple comparison problem

(# tests = # partitions < # voxels)

• tests downward closed

[V. Megalooikonomou, D. Pokrajac, A. Lazarevic, and Z. Obradovic,

SPIE Conference on Visualization and Data Analysis, 2002]
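The partitioning idea can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not the authors' procedure: the feature of each hyper-rectangle is taken to be its mean intensity, the test is an absolute Welch t statistic compared against a fixed cutoff (`t_thresh`) rather than a p-value, and a block is reported when the test is significant and split into octants otherwise. The names `welch_t`, `block_mean`, `split`, and `drp` are hypothetical.

```python
from math import sqrt
from statistics import mean, variance

def welch_t(a, b):
    """Absolute Welch t statistic for two samples (each needs >= 2 values)."""
    diff = abs(mean(a) - mean(b))
    se = sqrt(variance(a) / len(a) + variance(b) / len(b))
    if se == 0:
        return float("inf") if diff > 0 else 0.0
    return diff / se

def block_mean(vol, box):
    """Mean intensity of volume vol[x][y][z] inside a half-open box."""
    (x0, x1), (y0, y1), (z0, z1) = box
    return mean(vol[x][y][z] for x in range(x0, x1)
                for y in range(y0, y1) for z in range(z0, z1))

def split(box):
    """Split a box into up to 8 octants; an axis of width 1 is not split."""
    halves = []
    for lo, hi in box:
        mid = (lo + hi) // 2
        halves.append([(lo, mid), (mid, hi)] if mid > lo else [(lo, hi)])
    return [(a, b, c) for a in halves[0] for b in halves[1] for c in halves[2]]

def drp(controls, patients, box, t_thresh=3.0, max_depth=3, depth=0):
    """Return the boxes whose mean intensity separates the two groups."""
    a = [block_mean(v, box) for v in controls]
    b = [block_mean(v, box) for v in patients]
    if welch_t(a, b) >= t_thresh:
        return [box]                      # discriminative: stop splitting
    children = split(box)
    if depth >= max_depth or len(children) == 1:
        return []                         # depth limit or single voxel
    found = []
    for child in children:
        found += drp(controls, patients, child, t_thresh, max_depth, depth + 1)
    return found
```

Each call performs one test per visited box, which is why the number of tests stays well below one per voxel when large uninformative blocks are discarded early or large discriminative blocks are accepted whole.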

Other Methods for Spatial Data Classification

Distinguishing among distributions:

• Distributional Distances:

- Mahalanobis distance

- Kullback-Leibler divergence (parametric, nonparametric)

• Maximum Likelihood:

- Estimate probability densities and compute likelihood

• EM (Expectation-Maximization) method to model spatial regions using some base function (Gaussian)

• Static partitioning:

- Reduction of the # of attributes as compared to voxel-wise analysis

- Space partitioned into 3D hyper-rectangles (variables: properties of voxels inside hyper-rectangles) - incrementally increase discretization

[D. Pokrajac, V. Megalooikonomou, A. Lazarevic, D. Kontos, Z. Obradovic, Artificial Intelligence in Medicine, Vol. 33, No. 3, pp. 261-280, Mar. 2005.]

Experimental Results

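Classification accuracy (%) by method:

| Method | Criterion | Threshold | Tree depth | Controls | Patients | Total |
|---|---|---|---|---|---|---|
| DRP | correlation | 0.4 | 3 | 82 | 93 | 88 |
| DRP | t-test | 0.05 | 3 | 89 | 100 | 94 |
| DRP | t-test | 0.05 | 4 | 84 | 100 | 92 |
| DRP | t-test | 0.01 | 4 | 87 | 100 | 93 |
| DRP | ranksum | 0.05 | 3 | 87 | 100 | 93 |
| DRP | ranksum | 0.05 | 4 | 80 | 100 | 90 |
| DRP | ranksum | 0.01 | 4 | 87 | 96 | 91 |
| Maximum Likelihood / EM | | | | 77 | 67 | 72 |
| Maximum Likelihood / k-means | | | | 77 | 83 | 80 |
| Kullback-Leibler / EM | | | | 79 | 57 | 68 |
| Kullback-Leibler / k-means | | | | 77 | 66 | 71 |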

[Figure: Areas discovered by DRP with t-test: significance threshold=0.05, maximum tree depth=3. Colorbar shows significance]

Comparison of number of tests performed

Number of tests:

| Threshold | Depth | DRP | Voxel-wise |
|---|---|---|---|
| 0.05 | 3 | 569 | 201774 |
| 0.05 | 4 | 4425 | 201774 |
| 0.01 | 4 | 4665 | 201774 |

[D. Kontos, V. Megalooikonomou, D. Pokrajac, A. Lazarevic, Z. Obradovic,

O. B. Boyko, J. Ford, F. Makedon, A. J. Saykin, MICCAI 2004]

Experimental Results

[Figure: Discriminative sub-regions detected when applying (a) DRP and (b) voxel-wise analysis with ranksum test and significance threshold 0.05 to the real fMRI volume data]

Impact:

• Assist in interpretation of images (e.g., facilitating diagnosis)

• Enable researchers to integrate, manipulate and analyze large volumes of image data

Time Sequence Analysis

Time Sequence: A sequence (ordered collection) of real values: X = x1, x2,…, xn

• Time series data abound in many applications …

• Challenges:

– High dimensionality

– Large number of sequences

– Similarity metric definition

• Similarity analysis (e.g., find stocks similar to that of IBM)

• Goals: high accuracy, (high speed) in similarity searches among time series and in discovering interesting patterns

• Applications: clustering, classification, similarity searches, summarization

Dimensionality Reduction Techniques

• DFT: Discrete Fourier Transform

• DWT: Discrete Wavelet Transform

• SVD: Singular Value Decomposition

• APCA: Adaptive Piecewise Constant Approximation

• PAA: Piecewise Aggregate Approximation

• SAX: Symbolic Aggregate approXimation

• …

Similarity distances for time series

• Euclidean Distance: most common, sensitive to shifts

• Dynamic Time Warping: improves accuracy but slow: O(n^2)

• Envelope-based DTW: faster: O(n)

A more intuitive idea: two series should be considered similar if they have enough non-overlapping time-ordered pairs of subsequences that are similar (Agrawal et al. VLDB, 1995)
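The sensitivity of Euclidean distance to shifts, and the quadratic cost of Dynamic Time Warping, can be seen in a small sketch. It assumes squared pointwise cost and no warping-window constraint; the function names are illustrative.

```python
from math import inf, sqrt

def euclidean(a, b):
    """Point-by-point distance; both series must have the same length."""
    return sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))

def dtw(a, b):
    """Classic dynamic-programming DTW; fills an (n+1) x (m+1) table."""
    n, m = len(a), len(b)
    cost = [[inf] * (m + 1) for _ in range(n + 1)]
    cost[0][0] = 0.0
    for i in range(1, n + 1):
        for j in range(1, m + 1):
            d = (a[i - 1] - b[j - 1]) ** 2
            # extend the cheapest of: stretch a, stretch b, or advance both
            cost[i][j] = d + min(cost[i - 1][j], cost[i][j - 1], cost[i - 1][j - 1])
    return sqrt(cost[n][m])

a = [0, 0, 1, 2, 1, 0, 0]
b = [0, 1, 2, 1, 0, 0, 0]   # same bump, shifted one step earlier
print(euclidean(a, b))      # 2.0
print(dtw(a, b))            # 0.0
```

The two series contain the same pattern at different offsets, so DTW aligns them at zero cost while the Euclidean distance does not.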

Partitioning – Piecewise Constant Approximations

Original time series (n points)

Piecewise constant approximation (PCA) or Piecewise Aggregate Approximation (PAA), [Yi and Faloutsos ’00, Keogh et al., ’00] (n' segments)

Adaptive Piecewise Constant Approximation (APCA), [Keogh et al., ’01] (n" segments)
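As a small illustration of the equal-length case, PAA replaces each segment with its mean. The sketch below assumes the number of segments does not exceed the series length; `paa` is an illustrative name.

```python
def paa(series, n_segments):
    """Piecewise Aggregate Approximation: the mean of each of n_segments
    near-equal-length segments (requires n_segments <= len(series))."""
    n = len(series)
    out = []
    for k in range(n_segments):
        start = k * n // n_segments
        end = (k + 1) * n // n_segments
        seg = series[start:end]
        out.append(sum(seg) / len(seg))
    return out

print(paa([1, 3, 5, 7, 2, 4, 6, 8], 4))   # [2.0, 6.0, 3.0, 7.0]
```

APCA differs in that segment boundaries are chosen adaptively, so segments may have different lengths.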

Multiresolution Vector Quantized approximation (MVQ)

Partitions a sequence into equal-length segments and uses VQ to represent each sequence by appearance frequencies of key subsequences

1) Uses a ‘vocabulary’ of subsequences (codebook) – training is involved

2) Takes multiple resolutions into account – keeps both local and global information

3) Unlike wavelets, partially ignores the ordering of ‘codewords’

4) Can exploit prior knowledge about the data

5) Employs a new distance metric

[V. Megalooikonomou, Q. Wang, G. Li, C. Faloutsos, ICDE 2005]

Methodology

[Figure: MVQ pipeline in three stages: Codebook Generation (s = 16), Series Transformation (each series becomes a string of codeword symbols), and Series Encoding (each series becomes a vector of 16 codeword counts, e.g., 1121000000001000)]

Methodology

Creating a ‘vocabulary’

Q: How to create?

A: Use Vector Quantization, in particular, the Generalized Lloyd Algorithm (GLA)

[Figure: frequently appearing patterns in subsequences]

Output: A codebook with s codewords

Representing time series

X = x1, x2,…, xn is encoded with a new representation f = (f1, f2,…, fs), where fi is the frequency of the i-th codeword in X
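The two steps on this slide, building a codebook and encoding a series as codeword frequencies, can be sketched as follows. This is a simplified illustration: it uses plain Lloyd iterations seeded with the first s training segments (an assumption; GLA implementations typically use a more careful initialization), and all function names are hypothetical.

```python
def sqdist(u, v):
    """Squared Euclidean distance between two equal-length vectors."""
    return sum((a - b) ** 2 for a, b in zip(u, v))

def nearest(codebook, v):
    """Index of the codeword closest to v."""
    return min(range(len(codebook)), key=lambda i: sqdist(codebook[i], v))

def segments(series, length):
    """Non-overlapping equal-length segments; a trailing remainder is dropped."""
    return [series[i:i + length]
            for i in range(0, len(series) - length + 1, length)]

def lloyd(vectors, s, iters=20):
    """Lloyd iterations: assign vectors to codewords, then recompute centroids."""
    codebook = [list(v) for v in vectors[:s]]   # assumed initialization
    for _ in range(iters):
        groups = [[] for _ in range(s)]
        for v in vectors:
            groups[nearest(codebook, v)].append(v)
        for i, g in enumerate(groups):
            if g:                               # keep old codeword if cell is empty
                codebook[i] = [sum(col) / len(g) for col in zip(*g)]
    return codebook

def encode(series, codebook, length):
    """Frequency vector f = (f1, ..., fs): how often each codeword is the
    nearest match for a segment of the series."""
    f = [0] * len(codebook)
    for seg in segments(series, length):
        f[nearest(codebook, seg)] += 1
    return f

training = segments([0, 0, 5, 5, 0, 0, 5, 5], 2)
codebook = lloyd(training, s=2)
print(encode([0, 0, 5, 5, 5, 5], codebook, 2))   # [1, 2]
```

Because only counts are kept, the encoding ignores where in the series each codeword occurred.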

Methodology

New distance metric:

The histogram model is used to calculate similarity at each resolution level:

$$S_{HM}(q, t) = \frac{1}{1 + dis(q, t)}$$

with

$$dis(q, t) = \sum_{i=1}^{s} \frac{|f_{i,t} - f_{i,q}|}{1 + f_{i,t} + f_{i,q}}$$

[Figure: histograms of the codeword frequencies f_{i,t} and f_{i,q} over codewords 1, 2, ..., s]
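A direct transcription of the histogram model into Python, assuming dis(q, t) is the sum over codewords of |f_{i,t} − f_{i,q}| / (1 + f_{i,t} + f_{i,q}) as read from the slide. The function names are illustrative.

```python
def dis(fq, ft):
    """Histogram distance between two codeword-frequency vectors."""
    return sum(abs(a - b) / (1 + a + b) for a, b in zip(fq, ft))

def s_hm(fq, ft):
    """Histogram-model similarity in (0, 1]; equals 1 for identical histograms."""
    return 1.0 / (1.0 + dis(fq, ft))

fq = [1, 1, 2, 1]
ft = [1, 2, 0, 0]
print(dis(fq, ft))    # 0 + 1/4 + 2/3 + 1/2
print(s_hm(fq, fq))   # 1.0
```

The denominator normalizes each term, so a difference of one occurrence counts for less when a codeword is frequent in both series.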

Methodology

Time series summarization:

• High level information (frequently appearing patterns) is more useful

• The new representation can provide this kind of information

[Figure: example series in which codewords (patterns) 3 and 5 each show up 2 times]

Methodology

Problems of frequency based encoding:

• It is hard to define an appropriate resolution (codeword length)

• It may lose global information

Solution: Use multiple resolutions

Methodology

Proposed distance metric:

Weighted sum of similarities at all resolution levels:

$$S_{HHM}(q, d_j) = \sum_{i=1}^{c} w_i \, S_{HM_i}(q, d_j)$$

where c is the number of resolution levels and S_{HM_i} is the similarity at level i

• Lacking any prior knowledge, equal weights for all resolution levels work well most of the time
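A minimal sketch of the weighted combination, assuming each series has already been encoded as one frequency vector per resolution level and that the per-level similarity is 1 / (1 + dis) with the normalized histogram distance. `s_hm` and `s_hhm` are illustrative names; equal weights are the default, following the slide.

```python
def s_hm(fq, ft):
    """Per-level histogram similarity (assumed form: 1 / (1 + dis))."""
    d = sum(abs(a - b) / (1 + a + b) for a, b in zip(fq, ft))
    return 1.0 / (1.0 + d)

def s_hhm(q_levels, t_levels, weights=None):
    """Weighted sum of per-level similarities over c resolution levels."""
    c = len(q_levels)
    if weights is None:
        weights = [1.0] * c               # equal weights when nothing is known
    if not (len(t_levels) == len(weights) == c):
        raise ValueError("need one histogram pair and one weight per level")
    return sum(w * s_hm(fq, ft)
               for w, fq, ft in zip(weights, q_levels, t_levels))

q = [[2], [1, 1]]          # level 1: one codeword; level 2: two codewords
t = [[2], [2, 0]]
print(s_hhm(q, t))             # both levels contribute
print(s_hhm(q, t, [1, 0]))     # 1.0: level 1 alone cannot tell q and t apart
```

A weight vector with a single 1 reduces the measure to single-level VQ, which is how the weight vectors in the experiment tables can be read.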

MVQ: Example of Codebooks

• Codebook for the first level

• Codebook for the second level (more codewords since there are more details)

Experiments

Datasets

SYNDATA (control chart data): synthetic

CAMMOUSE: 3 * 5 sequences obtained using the Camera Mouse Program

RTT: RTT measurements from UCR to CMU with sending rate of 50 msec for a day

Experiments

Best Match Searching:

Matching accuracy: % of knn’s (found by different approaches) that are in same class

$$\text{Accuracy} = \frac{|knn(q) \cap std\_set(q)|}{k} \times 100\%$$
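The accuracy measure is a set overlap, which a short sketch makes concrete. It assumes knn(q) is the list of the k items returned for query q and std_set(q) is the set of items in the same class as q; the function name is illustrative.

```python
def matching_accuracy(knn, std_set, k):
    """Percentage of the k returned neighbours that belong to the query's class."""
    return 100.0 * len(set(knn[:k]) & set(std_set)) / k

print(matching_accuracy(["a", "b", "c", "d"], {"a", "c", "x"}, 4))   # 50.0
```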

Experiments

Best Match Searching

| Method | Weight Vector | Accuracy (SYNDATA) | Accuracy (CAMMOUSE) |
|---|---|---|---|
| Single level VQ | [1 0 0 0 0] | 0.55 | 0.56 |
| Single level VQ | [0 1 0 0 0] | 0.70 | 0.60 |
| Single level VQ | [0 0 1 0 0] | 0.65 | 0.44 |
| Single level VQ | [0 0 0 1 0] | 0.48 | 0.56 |
| Single level VQ | [0 0 0 0 1] | 0.46 | 0.60 |
| MVQ | [1 1 1 1 1] | 0.83 | 0.83 |
| Euclidean | | 0.51 | 0.58 |

Experiments

Best Match Searching

[Figure: Precision-recall for different methods (a) on SYNDATA dataset (b) on CAMMOUSE dataset]

Experiments

Clustering experiments

Given two clusterings, G = G1, G2, …, Gk (the true clusters), and A = A1, A2, …, Ak (clustering result by a certain method), the clustering accuracy is evaluated with the cluster similarity defined as:

$$Sim(G, A) = \frac{\sum_{i} \max_{j} Sim(G_i, A_j)}{k}$$

with

$$Sim(G_i, A_j) = \frac{2\,|G_i \cap A_j|}{|G_i| + |A_j|}$$

[Gavrilov, M., Anguelov, D., Indyk, P. and Motwani, R., KDD 2000]
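The cluster similarity can be computed directly from sets of item identifiers. The sketch assumes clusters are given as Python sets and divides by the number of true clusters; function names are illustrative.

```python
def pair_sim(g, a):
    """Overlap between one true cluster g and one produced cluster a."""
    return 2 * len(g & a) / (len(g) + len(a))

def cluster_sim(G, A):
    """Average, over true clusters, of the best-matching produced cluster."""
    return sum(max(pair_sim(g, a) for a in A) for g in G) / len(G)

G = [{1, 2, 3}, {4, 5, 6}]          # true clusters
A = [{1, 2}, {3, 4, 5, 6}]          # item 3 was placed in the wrong cluster
print(cluster_sim(G, A))            # (4/5 + 6/7) / 2
print(cluster_sim(G, G))            # 1.0
```

The measure is not symmetric: it asks how well each true cluster is recovered, not how pure each produced cluster is.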

Experiments

Clustering experiments

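SYNDATA

| Method | Weight Vector | Accuracy |
|---|---|---|
| Single level VQ | [1 0 0 0 0] | 0.55 |
| Single level VQ | [0 1 0 0 0] | 0.52 |
| Single level VQ | [0 0 1 0 0] | 0.57 |
| Single level VQ | [0 0 0 1 0] | 0.51 |
| Single level VQ | [0 0 0 0 1] | 0.49 |
| MVQ | [1 1 1 1 1] | 0.82 |
| DFT | | 0.67 |
| SAX | | 0.65 |
| DTW | | 0.80 |
| Euclidean | | 0.55 |

RTT

| Method | Weight Vector | Accuracy |
|---|---|---|
| Single level VQ | [1 0 0 0 0] | 0.69 |
| Single level VQ | [0 1 0 0 0] | 0.71 |
| Single level VQ | [0 0 1 0 0] | 0.63 |
| Single level VQ | [0 0 0 1 0] | 0.80 |
| Single level VQ | [0 0 0 0 1] | 0.79 |
| MVQ | [0 0 0 1 1] | 0.81 |
| DFT | | 0.54 |
| SAX | | 0.54 |
| DTW | | 0.62 |
| Euclidean | | 0.50 |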

MVQ: Example: Two Time Series

• Given two time series t1 and t2 as follows:

• In the first level, they are encoded with the same codeword (3), so they are not distinguishable

• In the second level, more details are recorded. These two series have different encoded forms: the first series is encoded with codewords 1 and 4, the second one is encoded with codewords 9 and 12.

Analysis of images by projection to 1D

[Figure, panels (a)-(c): (a) linear mapping of a 3D fMRI scan, (b) effect of binning by representing each bin with its Vmean measurement, (c) the discriminative voxels after applying the t-test with θ=0.05]

• Hilbert Space Filling Curve

• Binning

• Statistical tests of significance on groups of points

• Identification of discriminative areas by back-projection

[D. Kontos, V. Megalooikonomou, N. Ghubade, and C. Faloutsos.

IEEE Engineering in Medicine and Biology Society (EMBS), 2003]
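The first two steps, linearization along a Hilbert curve and binning, can be illustrated in 2D. This is a simplification of the 3D case on the slide: it uses the standard iterative 2D Hilbert index on an n x n grid with n a power of two, and represents each bin by its mean. All function names are illustrative.

```python
def xy2d(n, x, y):
    """Hilbert-curve index of cell (x, y) on an n x n grid (n a power of 2)."""
    d = 0
    s = n // 2
    while s > 0:
        rx = 1 if (x & s) else 0
        ry = 1 if (y & s) else 0
        d += s * s * ((3 * rx) ^ ry)
        if ry == 0:                 # rotate/flip the quadrant
            if rx == 1:
                x, y = n - 1 - x, n - 1 - y
            x, y = y, x
        s //= 2
    return d

def linearize(grid):
    """Read a square grid in Hilbert order, giving a 1D sequence."""
    n = len(grid)
    cells = sorted((xy2d(n, x, y), grid[x][y])
                   for x in range(n) for y in range(n))
    return [v for _, v in cells]

def bin_means(seq, bin_size):
    """Represent each run of bin_size consecutive points by its mean."""
    return [sum(seq[i:i + bin_size]) / len(seq[i:i + bin_size])
            for i in range(0, len(seq), bin_size)]

grid = [[x * 4 + y for y in range(4)] for x in range(4)]
print(bin_means(linearize(grid), 4))
```

Consecutive positions on the curve are neighbouring cells in the grid, so each bin covers a compact spatial area and a significant bin can be projected back to a localized region.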

Applying time series techniques

[Figure: Areas discovered: (a) θ=0.05, (b) θ=0.01. The colorbar shows significance.]

Results: 87%-98% classification accuracy (t-test, CATX)

Variation: Concatenate the values of statistically significant areas into spatial sequences

• Pattern analysis using the similarity between spatial sequences and time sequences

• SVD, DFT, DWT, PCA (clustering accuracy: 89-100%)

[Q. Wang, D. Kontos, G. Li and V. Megalooikonomou, ICASSP 2004]

Conclusions

• ‘Find patterns/interesting things’ efficiently and robustly in spatial and temporal data

• Use of partitioning and clustering

• Analysis at multiple resolutions

• Reduction of the number of tests performed

• Intelligent exploration of the space to find discriminative areas

• Reduction of dimensionality

• Symbolic representation

• Nice summarization

Collaborators

Faculty:

• Zoran Obradovic

• Orest Boyko

• James Gee

• Andrew Saykin

• Christos Faloutsos

• Christos Davatzikos

• Edward Herskovits

• Fillia Makedon

• Dragoljub Pokrajac

Students:

• Despina Kontos

• Qiang Wang

• Guo Li

Others:

• James Ford

• Alexandar Lazarevic

Thank you!

Acknowledgements

This research has been funded by:

– National Science Foundation CAREER award 0237921

– National Science Foundation Grant 0083423

– National Institutes of Health Grant R01 MH68066 funded by NIMH, NINDS, and NIA
