MSc Time Series Econometrics

Module 2: multivariate time series topics

Lecture 1: VARs, introduction, motivation, estimation, preliminaries

Tony Yates

Spring 2014, Bristol

Topics we will cover

• Vector autoregressions:

– motivation

– Estimation: MLE, OLS, Bayesian using analytical and Gibbs sampling MCMC methods

– Identification [short-run restrictions, long-run restrictions, sign restrictions, max share criteria]

– interpretation, use, contribution to macroeconomics

• Factor models in vector autoregressions

• TVP VAR estimation using kernels.

• Bootstrapping

VARs useful sources

• Chris Sims, ‘Macroeconomics and reality’

• Lutz Kilian, ‘Structural Vector Autoregressions’

• Fabio Canova, ‘Methods for Applied Business Cycle research’

• James Hamilton, ‘Time Series Analysis’

• Helmut Lütkepohl, ‘New introduction to multiple time series analysis’

Useful sources, ctd

• Stock and Watson: implications of dynamic

factor models for VAR analysis

• Stock and Watson: 'Dynamic factor models'

Matrix/linear algebra pre-requisites

• Scalar, vector, matrix.

• Transpose.

• Inverse (matrix equivalent of dividing).

• Diagonal matrix.

• Eigenvalues and eigenvectors.

• Powers of a matrix.

• Matrix series sums: the matrix equivalent of geometric scalar sums.

• Variance-covariance matrix.

• Cholesky factor of a variance-covariance matrix.

• Givens matrix.

Some applications

• Christiano, Eichenbaum, Evans: ‘Monetary policy shocks: what have we learned and to what end?’

• Christiano, Eichenbaum and Evans (2005): ‘Nominal rigidities and the dynamic effects of a shock to monetary policy’

• Mountford, Uhlig (2008): ‘What are the effects of fiscal policy shocks?’

• Gali (1999): ‘Technology, employment and the business cycle….’

• I’ll remind you of these as we go through.

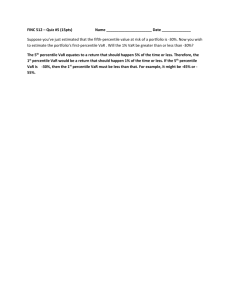

VAR motivation: Cowles Commission models

• Dominant paradigm was large scale

macroeconometric models, in policy institutions

especially.

• Many estimated equations.

• Academic origins in foundational work to create

national accounts; Keynesian formulation of

macroeconomics; Haavelmo’s notion of

‘probability model’ applied to this.

• Nice discussion in Sims’ Nobel acceptance

lecture.

Illustrative but silly example of a CC model

$$
\begin{aligned}
C_t &= c_0 + c_1 Y_t + c_2 Y_{t-1} + u_{Ct} \\
Y_t &= y_0 + y_1 N_t + y_2 N_{t-1} + y_3 W_t + u_{Yt} \\
W_t &= w_0 + w_1 U_t + w_2 TU_t + u_{Wt} \\
U_t &= u_0 + u_1 Y_t + u_2 Y_{t-1} + u_{Ut} \\
TU_t &= tu_0 + tu_1 Y_t + tu_2 U_t + u_{TUt}
\end{aligned}
$$

Sims: the equation for C excludes U and TU, the equation for Y excludes C and U, and so on.

Lucas: the equation for C sounds like common sense, but is it the C that reflects the solution to a consumer’s problem in a general equilibrium model? Maybe, or maybe not.

Those u’s look exogenous and are meant to be, but are they really primitive shocks? Both Sims’ and Lucas’ critiques would suggest not.

[Nobel prize yielding] Critiques of

Cowles Commission approach

• Lucas (1976):

– Laws of motion have to come from solving problems

of agents in the model

– If not, correlations and therefore coefficients will

change if policy changes. [Lucas ‘Critique’]

• Sims (1980):

– “Macroeconomics and reality”

– ‘Incredible’ identification restrictions

– Spurious: categorisation of some variables as

exogenous & restriction of some dynamic coefficients

to be zero

Response to Sims and Lucas critiques

• Estimate and identify a vector autoregression.

• No incredible restrictions. Everything left in.

• Reduced form shocks span the structural

shocks.

• Structural shocks and their effects sought

through identification, reference to classes of

Lucas-Critique proof models

• ‘Modest policy interventions’ [Sims and Zha].

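Cowles Commission variables set out as a VAR model

$$
\mathbf{Y}_t =
\begin{bmatrix} C_t \\ Y_t \\ U_t \\ TU_t \\ N_t \\ W_t \end{bmatrix}
=
\begin{bmatrix}
A_{0,11} & A_{0,12} & \cdots & A_{0,16} \\
A_{0,21} & \cdot & \cdots & \cdot \\
\vdots & & \ddots & \vdots \\
A_{0,61} & \cdot & \cdots & A_{0,66}
\end{bmatrix}
\mathbf{Y}_{t-1} + A_1 \mathbf{Y}_{t-2} + \dots + Z_t
$$

Potentially, everything is a function of everything else lagged.

Simultaneity is encoded in the reduced form errors $Z_t$, to be disentangled into structural shocks through identification.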

VAR topics and contributions to macro

(1)

• Estimating parameters in a DSGE model:

– Rotemberg and Woodford (1998)

– Christiano, Eichenbaum and Evans (2005)

• Identify monetary policy ‘shock’

• Choose parameters of the model so that the impulse response to this shock in the DSGE model is as close as possible to the corresponding IRF in the VAR.

VAR topics and contributions to macro

(2)

• Evaluating the RBC claim that technology

shocks cause business cycles

– Gali (1999)

• Identified technology shocks as the only shocks that could change labour productivity (Y/N) in the long run

• Showed that these caused hours worked to fall, not rise

• Inconsistent with RBC model (‘make hay while the sun shines’)

VAR topics and contributions to macro

(3)

• Cogley and Sargent (2005)

– VAR with time-varying parameters

– Multivariate counterpart to persistence,

‘predictability’

– Bayesian estimation

• By how much did inflation predictability change

in the post-war period?

• If it changed a lot, what does that suggest about

its causes?

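Misc technical preliminaries to help you read the papers

• The lag polynomial operator ‘L’

$$
\begin{aligned}
y_t &= \phi_1 y_{t-1} + \phi_2 y_{t-2} + \dots + \phi_p y_{t-p} \\
    &= \phi_1 L^0 y_{t-1} + \phi_2 L^1 y_{t-1} + \dots + \phi_p L^{p-1} y_{t-1} \\
    &= \phi(L)\, y_{t-1}, \qquad \phi(L) = \phi_1 + \phi_2 L + \dots + \phi_p L^{p-1}
\end{aligned}
$$

$$
L y_t = y_{t-1}, \qquad L^{-1} y_t = y_{t+1}
$$

Lag and lead operators are denoted by positive and negative powers of L respectively.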

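Misc technical preliminaries...

• Cholesky decomposition

$$
\Sigma =
\begin{bmatrix} \sigma_{11} & \cdot & \cdot \\ \sigma_{21} & \sigma_{22} & \cdot \\ \sigma_{31} & \sigma_{32} & \sigma_{33} \end{bmatrix}
=
\begin{bmatrix} a & 0 & 0 \\ \cdot & b & 0 \\ \cdot & \cdot & c \end{bmatrix}
\begin{bmatrix} a & \cdot & \cdot \\ 0 & b & \cdot \\ 0 & 0 & c \end{bmatrix},
\qquad a, b, c > 0
$$

Sigma is a v-cov matrix, with elements symmetric about the diagonal (entries shown as dots are left unspecified). The Cholesky factor and its transpose are on the RHS.

$$
\Sigma =
\begin{bmatrix} a & 0 & 0 \\ \cdot & b & 0 \\ \cdot & \cdot & c \end{bmatrix}
P P'
\begin{bmatrix} a & \cdot & \cdot \\ 0 & b & \cdot \\ 0 & 0 & c \end{bmatrix},
\qquad
P P' = I = \begin{bmatrix} 1 & 0 & 0 \\ 0 & 1 & 0 \\ 0 & 0 & 1 \end{bmatrix}
$$

Here we decompose further using an orthonormal matrix P.

Diagonals of the v-cov matrix are positive because these correspond to variances…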
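As a minimal numerical sketch of the decomposition above, the Matlab lines below factor an illustrative covariance matrix and check that inserting an orthonormal matrix `P` between the factor and its transpose leaves `Sigma` unchanged. The numbers in `Sigma` and the angle `theta` are made up for the example.

```matlab
% Illustrative 3x3 variance-covariance matrix (values are assumptions).
Sigma = [4.0 1.2 0.8;
         1.2 2.0 0.5;
         0.8 0.5 1.0];

% Lower-triangular Cholesky factor C, so that Sigma = C*C'.
C = chol(Sigma, 'lower');

% An orthonormal matrix: rotate the first two coordinates by theta.
theta = pi/6;
P = [cos(theta) -sin(theta) 0;
     sin(theta)  cos(theta) 0;
     0           0          1];

% B = C*P is another (non-triangular) factor of Sigma, because P*P' = I.
B = C*P;

err_chol = max(max(abs(C*C' - Sigma)));   % close to zero
err_rot  = max(max(abs(B*B' - Sigma)));   % close to zero
fprintf('max error, Cholesky: %g; rotated factor: %g\n', err_chol, err_rot);
```

The point of the second check is that the Cholesky factor is only one of many matrices B with B*B' = Sigma; every choice of P gives another.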

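Misc technical preliminaries

• Givens matrix is an example of an orthonormal matrix

$$
P = \begin{bmatrix} 1 & 0 & 0 & 0 \\ 0 & c & -s & 0 \\ 0 & s & c & 0 \\ 0 & 0 & 0 & 1 \end{bmatrix},
\qquad c = \cos\theta, \quad s = \sin\theta
$$

Also known as a Givens ‘rotation’.

Useful theorem: any orthonormal matrix with determinant +1 can be shown to be a product of Givens matrices with different thetas, the number depending on the dimension of the orthonormal matrix concerned. (An orthonormal matrix with determinant -1 additionally needs the sign of one column flipped.)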

Products of orthonormal matrices

$$
P_a P_a' = I, \qquad P_b P_b' = I
$$

$$
(P_a P_b)(P_a P_b)' = P_a P_b P_b' P_a' = P_a P_a' = I
$$

If two matrices are orthonormal, then so is the product of those matrices.
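A quick numerical check of the two statements above, as a sketch: build two 4 by 4 Givens rotations acting on different pairs of coordinates and confirm that each one, and their product, is orthonormal. The angles `ta` and `tb` and the choice of coordinate pairs are arbitrary assumptions.

```matlab
n = 4;

% Givens rotation of coordinates 2 and 3 by angle ta.
ta = 0.3;
Pa = eye(n);
Pa([2 3], [2 3]) = [cos(ta) -sin(ta); sin(ta) cos(ta)];

% Givens rotation of coordinates 1 and 4 by angle tb.
tb = 1.1;
Pb = eye(n);
Pb([1 4], [1 4]) = [cos(tb) -sin(tb); sin(tb) cos(tb)];

% Product of the two rotations.
Pab = Pa*Pb;

% Each of these should be zero up to rounding error.
errA  = norm(Pa*Pa'   - eye(n));
errB  = norm(Pb*Pb'   - eye(n));
errAB = norm(Pab*Pab' - eye(n));
fprintf('errors: %g %g %g\n', errA, errB, errAB);
```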

The VAR impulse response function

$$
y_t = \rho y_{t-1} + e_t
$$

$$
y^{irf} = (y_1, y_2, y_3, \dots, y_n) = (e, \rho e, \rho^2 e, \dots, \rho^{n-1} e)
$$

Impulse response function in a univariate time series model.

Take some unit value for e1, then substitute into the equation for y repeatedly.

AR(1) impulse response function

[Figure: AR(1) impulse response; vertical axis from 0 to 1, horizontal axis periods 0 to 100.]

y_t = 0.85*y_t-1 + e_t; e(1) = 1

The IRF shrinks back to zero as we multiply 1 by successively higher powers of 0.85.

Matlab code to plot an AR(1) impulse response

% Compute the impulse response in an AR(1).
% Illustration for MSc Time Series Econometrics module.
% y_t = rho*y_t-1 + e_t.

% calibrate parameters
rho = 0.85;          % persistence parameter in the AR(1)
samp = 100;          % length of time we will compute y for
y = zeros(samp,1);   % create a vector to store our ys
                     % (semicolons suppress output of every command to screen)
e = 1;               % value of the shock in period 1
y(1) = e;            % first period, y = shock

% loop to project forwards the effect of the unit shock
for t = 2:samp
    y(t) = rho*y(t-1);
end

% now plot the impulse response
time = 1:samp;       % vector of numbers to record the progression of time
plot(time,y)

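VAR impulse response

$$
Y_t = A Y_{t-1} + e_t
$$

$$
\begin{bmatrix} y_1 \\ y_2 \end{bmatrix}_t
=
\begin{bmatrix} a_{11} & a_{12} \\ a_{21} & a_{22} \end{bmatrix}
\begin{bmatrix} y_1 \\ y_2 \end{bmatrix}_{t-1}
+
\begin{bmatrix} e_1 \\ e_2 \end{bmatrix}_t
$$

$$
Y^{irf,1} = (e, Ae, A^2 e, \dots), \qquad e = \begin{bmatrix} e_1 \\ e_2 \end{bmatrix}
$$

A multivariate example.

For the IRF to e1, choose e = [1, 0]' for the first period, then project forwards....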

Matlab code to plot VAR(1) impulse response function

% Compute the impulse response in a VAR(1).
% Illustration for MSc Time Series Econometrics module.
% Y_t = A*Y_t-1 + e_t.

clear all;   % housekeeping: clear all variables so that each time you run the
             % program while debugging you are not adding onto previous values

% calibrate parameters
A = [0.6 0.2;
     0.2 0.6];
samp = 100;          % length of time we will compute y for
y = zeros(samp,2);   % samp by 2 matrix to store our bivariate series y={y1,y2}
e = [1;0];           % shock to the first equation; now a 2 by 1 vector
y(1,:) = e';         % first period, y = shock; the colon ':' means
                     % 'all values in this dimension'; ' is transpose

% loop to project forwards the effect of the unit shock
for t = 2:samp
    y(t,:) = (A*y(t-1,:)')';
end

% now plot the impulse response
time = 1:samp;       % vector of numbers to record the progression of time
subplot(2,1,1)
plot(time,y(:,1))
subplot(2,1,2)
plot(time,y(:,2))

VAR(1) impulse response

[Figure: two panels over periods 0 to 100; top panel is the response of y1 (vertical axis 0 to 1), bottom panel is the response of y2 (vertical axis 0 to 0.25).]

Y_t = A*Y_t-1 + e_t, e_1 = [1 0]', A = [0.6 0.2; 0.2 0.6]

Note that the eigenvalues of A are 0.4 and 0.8.

Using a VAR to forecast

$$
Y^f_{t+h} \mid (e_s = 0,\ s < t) = A^h e_t
$$

Forecast at future horizon h, conditional on starting from steady state = IRF to the latest estimated shock.

$$
Y^f_{t+h} = A^h e_t + A^{h+1} e_{t-1} + A^{h+2} e_{t-2} + \dots + A^{h+n} e_{t-n}
$$

Forecast conditional on all the shocks estimated to have occurred: the sum of the IRFs to each shock, at increasing horizons.

Terms further to the right get smaller because they involve higher powers of A [= smaller for a stable VAR]. This reflects the response to shocks that hit further and further back in time.

Forecasts further and further out shrink back to steady state for the same reason: they involve higher powers of A, and A has eigenvalues < 1 in absolute value.
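The forecast formula can be sketched in a few lines of Matlab. The matrix `A` is the one from the VAR(1) impulse response example; the shock history `E` and the maximum horizon `H` are hypothetical values chosen only to make the example run.

```matlab
A = [0.6 0.2; 0.2 0.6];     % VAR(1) coefficients from the IRF example
H = 20;                     % maximum forecast horizon (assumption)

% Hypothetical estimated shocks, most recent first:
% column 1 is e_t, column 2 is e_{t-1}, column 3 is e_{t-2}.
E = [1.0  0.5 -0.3;
     0.0 -0.2  0.4];
n = size(E, 2) - 1;

% Forecast at each horizon h: sum over shocks of A^(h+i) * e_{t-i}.
Yf = zeros(2, H);
for h = 1:H
    for i = 0:n
        Yf(:, h) = Yf(:, h) + A^(h+i) * E(:, i+1);
    end
end

% Cross-check: with only these shocks, Y_t = sum_i A^i e_{t-i},
% so the h-step forecast must equal A^h * Y_t.
Yt = zeros(2, 1);
for i = 0:n
    Yt = Yt + A^i * E(:, i+1);
end
gap = max(abs(Yf(:, H) - A^H * Yt));
fprintf('forecast at horizon %d: [%g %g], gap %g\n', H, Yf(1,H), Yf(2,H), gap);
```

Because the eigenvalues of `A` are below one in absolute value, the columns of `Yf` shrink towards zero (the steady state) as the horizon grows.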

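VAR(1) representation of a VAR(p)

$$
Y_t = A_1 Y_{t-1} + A_2 Y_{t-2} + \dots + A_p Y_{t-p} + e_t
$$

Recall that you learned how to write the VAR(1) representation of an AR(p). This is analogous.

$$
\mathbf{Y}_t = \mathbf{A}\,\mathbf{Y}_{t-1} + \mathbf{e}_t,
\qquad
\mathbf{Y}_t = \begin{bmatrix} Y_t \\ Y_{t-1} \\ \vdots \\ Y_{t-p+1} \end{bmatrix},
\quad
\mathbf{A} = \begin{bmatrix}
A_1 & A_2 & \cdots & A_{p-1} & A_p \\
I_k & 0 & \cdots & 0 & 0 \\
0 & I_k & \cdots & 0 & 0 \\
\vdots & & \ddots & & \vdots \\
0 & 0 & \cdots & I_k & 0
\end{bmatrix},
\quad
\mathbf{e}_t = \begin{bmatrix} e_t \\ 0 \\ \vdots \\ 0 \end{bmatrix}
$$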
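A minimal sketch of building the stacked (companion) matrix for a VAR(2) in two variables and checking that one step of the stacked system reproduces the original recursion. The coefficient matrices `A1`, `A2` and the test vectors are illustrative assumptions, not estimates.

```matlab
k = 2;                      % number of variables
p = 2;                      % number of lags
A1 = [0.5 0.1; 0.0 0.4];    % illustrative lag-1 coefficients
A2 = [0.2 0.0; 0.1 0.1];    % illustrative lag-2 coefficients

% Stacked VAR(1) matrix: coefficient blocks on top, identity blocks below.
Acomp = [A1, A2;
         eye(k*(p-1)), zeros(k*(p-1), k)];

% Arbitrary lagged values and a shock, to compare the two forms.
Ytm1 = [1.0; -0.5];
Ytm2 = [0.3;  0.2];
et   = [0.1; -0.1];

direct  = A1*Ytm1 + A2*Ytm2 + et;                         % VAR(2) form
stacked = Acomp*[Ytm1; Ytm2] + [et; zeros(k*(p-1), 1)];   % VAR(1) form

gap   = max(abs(stacked(1:k) - direct));      % top block equals Y_t
carry = max(abs(stacked(k+1:end) - Ytm1));    % bottom block carries Y_{t-1}

% Largest eigenvalue modulus of the stacked matrix.
rho_max = max(abs(eig(Acomp)));
fprintf('gap %g, carry %g, largest |eigenvalue| %g\n', gap, carry, rho_max);
```

The same construction works for any p: the top block row is `[A1, ..., Ap]` and the rest shifts the lags down by one position.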

Moving average representation of a VAR(p)

$$
\mathbf{Y}_t = \sum_{i=0}^{\infty} \mathbf{A}^i \mathbf{e}_{t-i}
$$

We have used the VAR(1) form of the VAR(p).

In words, it means Y is the sum of shocks, where each shock is premultiplied by higher and higher powers of A as we go back in time.

Notice how this is related to the formula for the VAR impulse response we computed before.
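The step from the VAR(1) form to the moving average form is repeated substitution. Written out as a sketch, using the stacked notation $\mathbf{Y}_t = \mathbf{A}\mathbf{Y}_{t-1} + \mathbf{e}_t$:

$$
\begin{aligned}
\mathbf{Y}_t &= \mathbf{A}\mathbf{Y}_{t-1} + \mathbf{e}_t \\
&= \mathbf{A}\left(\mathbf{A}\mathbf{Y}_{t-2} + \mathbf{e}_{t-1}\right) + \mathbf{e}_t
 = \mathbf{A}^2 \mathbf{Y}_{t-2} + \mathbf{A}\mathbf{e}_{t-1} + \mathbf{e}_t \\
&\;\;\vdots \\
&= \mathbf{A}^{n+1} \mathbf{Y}_{t-n-1} + \sum_{i=0}^{n} \mathbf{A}^i \mathbf{e}_{t-i}
\end{aligned}
$$

If $\mathbf{A}^{n+1} \to 0$ as $n \to \infty$, the first term vanishes and only the infinite sum of shocks remains. The coefficient on a shock that hit $i$ periods ago, $\mathbf{A}^i$, is exactly the impulse response at horizon $i$.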

Persistence, memory, predictability,

stability

• When a shock hits, how long does it take for its effects to die out?

• Applications:

– Business cycle theory concerned with mechanisms for

propagation. Consumption and output not white noise: why?

– Bad monetary and fiscal policy could be part of the story about

why shocks take time to die out

• Time series notions of persistence etc are one way to

characterise propagation and bad policy.

• Why ‘bad’? Because more persistence means larger variance, and larger variance is bad for most utility functions.

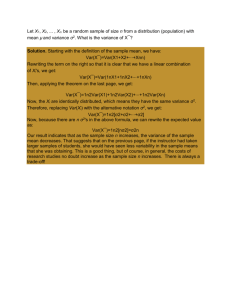

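Persistence and variance

$$
\begin{aligned}
y_t &= \rho y_{t-1} + e_t \\
\operatorname{var}(y_t) &= \rho^2 \operatorname{var}(y_{t-1}) + \operatorname{var}(e_t) + 2\rho \operatorname{cov}(y_{t-1}, e_t) \\
&= \rho^2 \operatorname{var}(y_{t-1}) + \operatorname{var}(e_t)
\end{aligned}
$$

$$
\text{NB: } \operatorname{var}(y_t) = \operatorname{var}(y_{t-1})
\;\Rightarrow\;
\operatorname{var}(y_t) = \frac{\operatorname{var}(e_t)}{1 - \rho^2}
$$

Persistence, higher rho, means lower denominator, means higher unconditional variance of y.

Most economic models assume agents don’t like variance.

So persistence is interesting economically, since it usually indicates something ‘bad’ is happening.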
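A short worked example of the variance formula, with the shock variance normalised to one (the three values of rho are picked only for illustration):

$$
\rho = 0.5:\ \frac{1}{1 - 0.25} \approx 1.33,
\qquad
\rho = 0.85:\ \frac{1}{1 - 0.7225} \approx 3.60,
\qquad
\rho = 0.95:\ \frac{1}{1 - 0.9025} \approx 10.26
$$

So moving rho from 0.85 to 0.95 roughly triples the unconditional variance of y even though the shocks themselves are unchanged.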

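Persistence and predictability

$$
P_{t,j} = 1 - \frac{e_i \left[ \sum_{h=0}^{j-1} A_t^{h}\, \Sigma_t\, A_t^{h\prime} \right] e_i'}
                   {e_i \left[ \sum_{h=0}^{\infty} A_t^{h}\, \Sigma_t\, A_t^{h\prime} \right] e_i'}
$$

Multivariate predictability at horizon j, where $e_i$ is a selection vector that picks out variable i. If we set the horizon to j = 1 and the dimension of Y_t to 1, this formula delivers rho^2, i.e. persistence squared (for a general horizon j it delivers rho^(2j)).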
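To see where the scalar result comes from, here is the AR(1) case worked through as a sketch. It assumes the predictability measure is one minus the ratio of the j-step-ahead forecast error variance (a sum over h = 0, ..., j-1) to the unconditional variance; with $A_t = \rho$ and $\Sigma_t = \sigma^2$ both are geometric sums:

$$
\sum_{h=0}^{j-1} \rho^{2h} \sigma^2 = \sigma^2\, \frac{1 - \rho^{2j}}{1 - \rho^2},
\qquad
\sum_{h=0}^{\infty} \rho^{2h} \sigma^2 = \frac{\sigma^2}{1 - \rho^2}
$$

$$
P_j = 1 - \left(1 - \rho^{2j}\right) = \rho^{2j}
$$

Under that indexing, the one-step measure is $\rho^2$ and the two-step measure is $\rho^4$: more persistent series are more predictable, and predictability decays with the horizon.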

AR ‘stability’

$$
y_t = \rho y_{t-1} + e_t, \qquad |\rho| < 1
$$

Univariate model.

$$
e,\ \rho e,\ \rho^2 e,\ \dots,\ \rho^n e, \qquad \lim_{t \to \infty} \rho^t e = 0
$$

Effects of a shock eventually die out. So the series converges to something.

Stability = stationarity = series have convergent sums = first and second moments independent of t, and computable.

$$
y_t = e_t + \rho e_{t-1} + \rho^2 e_{t-2} + \dots + \rho^n e_{t-n} + \dots = \sum_{s=0}^{\infty} \rho^s e_{t-s},
\qquad
\lim_{s \to \infty} \rho^s e_{t-s} = 0
$$

The contribution of a shock very far back goes to zero.

Here we take the perspective that today’s data is the sum of the effects of shocks going back into the infinite past.

Since today’s data is finite and well-defined, it must be that shocks infinitely far back have no effect. Otherwise today’s data would be infinitely large.

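VAR(1) stability

$$
Y_t = A Y_{t-1} + e_t
$$

$$
x = \operatorname{eig}(A); \quad |x| < 1 \ \ \forall x
\qquad\Longleftrightarrow\qquad
\det(I_K - A z) = 0 \ \Rightarrow\ |z| > 1
$$

The stability condition echoes the AR case, but where does the dependence on the eigenvalues come from?

$$
\begin{aligned}
Y_t &= A^0 e_t + A^1 e_{t-1} + A^2 e_{t-2} + \dots + A^n e_{t-n} \\
    &= A^0 L^0 e_t + A L e_t + A^2 L^2 e_t + \dots + A^n L^n e_t
\end{aligned}
$$

Here we write out a vector Y as the sum of contributions from shocks going back further and further into time.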

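Explaining VAR(1) eigenvalue stability condition

$$
A^n = P D^n P^{-1},
\qquad
D^n = \begin{bmatrix}
\lambda_1^n & 0 & \cdots & 0 \\
0 & \lambda_2^n & \cdots & 0 \\
\vdots & & \ddots & \vdots \\
0 & 0 & \cdots & \lambda_d^n
\end{bmatrix},
\qquad
P = \begin{bmatrix} v_1 & v_2 & \cdots & v_d \end{bmatrix}
$$

The crucial thing is to make this A^n go to zero as n goes to infinity.

The importance of the eigenvalues in this happening comes from the fact that we can write a (diagonalisable) square matrix using the eigenvalue-eigenvector decomposition, where the $\lambda_i$ are the eigenvalues of A and the $v_i$ the corresponding eigenvectors.

And then compute the power of A using the powers of the eigenvalues of A.

So to make A^n go to zero, we have to make all the diagonal elements of D^n go to zero, and by analogy with the AR(1) case, this means the eigenvalues have to be < 1 in absolute value.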
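The argument can be checked numerically with the matrix from the VAR(1) impulse response example. This is a sketch: the power `n = 50` is an arbitrary choice.

```matlab
A = [0.6 0.2; 0.2 0.6];      % matrix from the VAR(1) IRF example

% Eigenvalue-eigenvector decomposition: A = P*D*inv(P).
[P, D] = eig(A);
lambda = diag(D);            % eigenvalues of A

% Compute A^n two ways: directly, and from powers of the eigenvalues.
n = 50;
An_direct = A^n;
An_eig    = P * diag(lambda.^n) / P;

gap = norm(An_direct - An_eig);
fprintf('eigenvalues: %g %g\n', lambda(1), lambda(2));
fprintf('norm(A^%d) = %g, gap between the two methods = %g\n', ...
        n, norm(An_direct), gap);
```

Both eigenvalues (0.4 and 0.8) are inside the unit circle, so `lambda.^n` and hence `A^n` shrink towards zero as `n` grows.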

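VAR(p) stability

$$
\mathbf{Y}_t = \mathbf{A}\,\mathbf{Y}_{t-1} + \mathbf{e}_t,
\qquad
\mathbf{Y}_t = \begin{bmatrix} Y_t \\ Y_{t-1} \\ \vdots \\ Y_{t-p+1} \end{bmatrix},
\quad
\mathbf{A} = \begin{bmatrix}
A_1 & A_2 & \cdots & A_{p-1} & A_p \\
I_k & 0 & \cdots & 0 & 0 \\
0 & I_k & \cdots & 0 & 0 \\
\vdots & & \ddots & & \vdots \\
0 & 0 & \cdots & I_k & 0
\end{bmatrix},
\quad
\mathbf{e}_t = \begin{bmatrix} e_t \\ 0 \\ \vdots \\ 0 \end{bmatrix}
$$

$$
x = \operatorname{eig}(\mathbf{A}); \quad |x| < 1 \ \ \forall x
$$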

VAR estimation

• Linear, multivariate model.

• Suggests estimation by...

• OLS!

• Or MLE, which in these circumstances [linear; Gaussian errors] is equivalent.

• MLE is cumbersome because you may have many parameters over which to optimise.

• Why is this cumbersome? Well, you tell me.

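OLS estimation of VAR parameters

Univariate case:

$$
y_t = \rho y_{t-1} + e_t,
\qquad
y = (y_2, \dots, y_T)', \quad x = (y_1, \dots, y_{T-1})',
\qquad
\hat{\rho} = (x'x)^{-1} x'y
$$

Multivariate case for the VAR(1) representation of a VAR(p), with the rows of Y holding $Y_2', \dots, Y_T'$ and the rows of X holding $Y_1', \dots, Y_{T-1}'$:

$$
\hat{A}' = (X'X)^{-1} X'Y
$$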

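OLS estimate of reduced form residual variance covariance matrix

Univariate case. Take the data, subtract the prediction, multiply the residual vector by its transpose....

$$
y_t = \rho y_{t-1} + e_t,
\qquad
y = (y_2, \dots, y_T)', \quad x = (y_1, \dots, y_{T-1})'
$$

$$
\hat{\sigma}^2_e = \frac{1}{T}\, \hat{e}'\hat{e}, \qquad \hat{e} = y - \hat{\rho}\, x
$$

Analogously for the multivariate case, with observations stacked in the rows of Y and X:

$$
\hat{\Sigma} = \frac{1}{T}\, \hat{E}'\hat{E}, \qquad \hat{E} = Y - X\hat{A}'
$$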
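A minimal sketch of both OLS formulas, run on data simulated from a known VAR(1) so the answer can be checked. It assumes observations are stacked in rows (so the regression delivers the transpose of A); the sample length `T`, the seed and the identity shock covariance are illustrative assumptions.

```matlab
rng(1);                          % fix the seed so the run is reproducible
A = [0.6 0.2; 0.2 0.6];          % "true" coefficients (from the IRF example)
T = 5000;                        % sample length (assumption)
k = size(A, 1);

% Simulate Y_t = A*Y_{t-1} + e_t with e_t ~ N(0, I).
Ysim = zeros(T, k);
for t = 2:T
    Ysim(t, :) = (A * Ysim(t-1, :)' + randn(k, 1))';
end

Y = Ysim(2:T, :);                % left-hand side: Y_2 ... Y_T in rows
X = Ysim(1:T-1, :);              % regressors:     Y_1 ... Y_{T-1} in rows

% OLS: (X'X)^{-1} X'Y estimates A', so transpose to recover A.
Ahat = ((X' * X) \ (X' * Y))';

% Residuals and their variance-covariance matrix.
Ehat = Y - X * Ahat';
Sigmahat = (Ehat' * Ehat) / size(Y, 1);

disp(Ahat);
disp(Sigmahat);
```

The backslash operator solves the normal equations without forming the inverse explicitly, which is the usual Matlab idiom for `(X'X)^{-1} X'Y`.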

The likelihood function for a VAR

• Why bother if we can use OLS? Given the

drawbacks of MLE?

• Log posterior is sum of log likelihood and log

prior: so we need it for Bayesian estimation

• Key to understanding many concepts:

– Origin and derivation of standard errors from slope of

LF

– Estimation when we can’t evaluate the LF but have to

approximate it by simulation

– Pseudo-ML when the data are non-Gaussian

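Likelihood fn for an AR(1)

A superscript 0 denotes the observed value of a variable.

$$
y_t = \rho y_{t-1} + e_t, \qquad e_t \sim N(0, \sigma_e^2)
$$

$$
E(y_1) = 0, \qquad \operatorname{var}(y_1) = E(y_1 - 0)^2 = \frac{\sigma_e^2}{1 - \rho^2}
$$

$$
f_{y_1}(y_1^0; \rho, \sigma_e^2)
= \frac{1}{\sqrt{2\pi}\,\sqrt{\sigma_e^2 / (1 - \rho^2)}}
\exp\left[ -\frac{(y_1^0)^2}{2\sigma_e^2 / (1 - \rho^2)} \right]
$$

$$
y_2 = \rho y_1 + e_2, \qquad y_2 \mid y_1 = y_1^0 \ \sim\ N(\rho y_1^0, \sigma_e^2)
$$

$$
f_{y_2 \mid y_1}(y_2^0 \mid y_1^0; \rho, \sigma_e^2)
= \frac{1}{\sqrt{2\pi \sigma_e^2}}
\exp\left[ -\frac{(y_2^0 - \rho y_1^0)^2}{2\sigma_e^2} \right]
$$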

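LF for an AR(1)/ctd...

$$
f(y_1, y_2, \dots, y_T) = f(y_1)\, f(y_2 \mid y_1)\, f(y_3 \mid y_2) \cdots f(y_T \mid y_{T-1})
$$

$$
\begin{aligned}
\log f(y_1, y_2, \dots, y_T) ={}& -0.5 \log(2\pi) - 0.5 \log\left[\frac{\sigma^2}{1 - \rho^2}\right] - \frac{(y_1^0)^2}{2\sigma^2 / (1 - \rho^2)} \\
& - \frac{T-1}{2} \log(2\pi) - \frac{T-1}{2} \log(\sigma^2) - \sum_{t=2}^{T} \frac{(y_t^0 - \rho y_{t-1}^0)^2}{2\sigma^2}
\end{aligned}
$$

Logging turns a complicated product into a long sum.

We can maximise this and ignore constants.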
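As a sketch of how the exact AR(1) log-likelihood can be evaluated and maximised, the Matlab lines below simulate a series, code the log-likelihood as an anonymous function, and do a crude grid search over rho. The true parameter values, the sample length, the seed and the grid are all assumptions; the shock variance is held at its true value to keep the search one-dimensional.

```matlab
rng(2);                              % reproducible draws
rho_true = 0.85;
sig2_true = 1;
T = 2000;

% Simulate the AR(1), drawing y_1 from its unconditional distribution.
y = zeros(T, 1);
y(1) = sqrt(sig2_true / (1 - rho_true^2)) * randn;
for t = 2:T
    y(t) = rho_true * y(t-1) + sqrt(sig2_true) * randn;
end

% Exact log-likelihood: density of y_1 plus the T-1 conditional densities.
loglik = @(rho, sig2) ...
    -0.5*log(2*pi) - 0.5*log(sig2/(1 - rho^2)) ...
    - y(1)^2 / (2*sig2/(1 - rho^2)) ...
    - (T-1)/2*log(2*pi) - (T-1)/2*log(sig2) ...
    - sum((y(2:T) - rho*y(1:T-1)).^2) / (2*sig2);

% Crude grid search over rho.
rho_grid = 0.50:0.01:0.99;
ll = arrayfun(@(r) loglik(r, sig2_true), rho_grid);
[~, imax] = max(ll);
rho_mle = rho_grid(imax);
fprintf('grid-search estimate of rho: %g\n', rho_mle);
```

In practice a numerical optimiser over both parameters would replace the grid; the grid is used here only because it makes the shape of the likelihood easy to inspect with `plot(rho_grid, ll)`.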

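Likelihood for a VAR(p)

Here n is the number of variables in $Y_t$ and a superscript 0 denotes observed values.

$$
Y_t = A_1 Y_{t-1} + A_2 Y_{t-2} + \dots + A_p Y_{t-p} + e_t, \qquad e_t \sim N(0, \Sigma)
$$

$$
\tilde{Y}_t = \begin{bmatrix} Y_{t-1} \\ Y_{t-2} \\ \vdots \\ Y_{t-p} \end{bmatrix},
\qquad
A = \begin{bmatrix} A_1 & A_2 & \cdots & A_p \end{bmatrix},
\qquad
Y_t \mid Y_{t-1}, Y_{t-2}, \dots, Y_{t-p} \ \sim\ N(A\tilde{Y}_t, \Sigma)
$$

$$
f(Y_t^0 \mid Y_{t-1}^0, Y_{t-2}^0, \dots; A, \Sigma)
= (2\pi)^{-n/2}\, |\Sigma^{-1}|^{0.5}
\exp\left[ -0.5\, (Y_t^0 - A\tilde{Y}_t^0)'\, \Sigma^{-1}\, (Y_t^0 - A\tilde{Y}_t^0) \right]
$$

$$
\begin{aligned}
\log f(Y_1, Y_2, \dots, Y_T) &= \sum_{t=1}^{T} \log f(Y_t \mid Y_{t-1}, \dots) \\
&= -\frac{Tn}{2} \log(2\pi) + \frac{T}{2} \log|\Sigma^{-1}|
 - 0.5 \sum_{t=1}^{T} (Y_t^0 - A\tilde{Y}_t^0)'\, \Sigma^{-1}\, (Y_t^0 - A\tilde{Y}_t^0)
\end{aligned}
$$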

Recap

• Remember likelihood assumes Gaussian errors

• In some circumstances you can get consistent

estimates of parameters (but not standard

errors) even if this is violated.

Distributions for a VAR’s impulse response functions

$$
y_t = \rho y_{t-1} + e_t, \qquad y^{irf} = (e, \rho e, \rho^2 e, \dots, \rho^n e)
$$

The IRF is easy in an AR(1). It involves powers of only 1 coefficient. So the distribution of rho can be used to compute the distributions of the elements of the IRF vector. Work it out.

$$
Y^{irf}_{t+h} = A^h e, \qquad h = 0, 1, 2, \dots
$$

Things are harder with a VAR, as the IRF involves many, jointly distributed coefficients.

Bootstrapping algorithm for VAR IRFs

$$
Y_t = A Y_{t-1} + e_t
$$

Suppose we have estimated a VAR(p).

1. Draw, with replacement, a time series of shocks, $e^i = (e^i_1, e^i_2, \dots)$.

2. Create a new time series of observables using $Y^i_t = \hat{A} Y^i_{t-1} + e^i_t$.

3. Re-estimate the VAR to produce $A^i$.

4. Compute IRFs using a unit shock $e_0 = (1, 0, 0, \dots)'$ and powers of $A^i$.

5. Set $i = i + 1$; return to step 1 if $i < iter$.

Set iter=200 or so.

The algorithm will generate iter vectors h long, h = max chosen horizon of the IRF.

Q: how to do step 1 with a computer random number generator?
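One possible answer to the question, as a sketch: draw random integers between 1 and the number of residuals and use them as row indices into the residual matrix. The Matlab lines below do that inside the full loop for a bivariate VAR(1). The "data" are simulated from a known `A` purely so that the example is self-contained; `T`, `H`, the seed and the helper `ols` are assumptions made for the illustration.

```matlab
rng(3);                                   % reproducible draws

% Stand-in data: simulate a VAR(1) with known A (illustrative assumption).
A = [0.6 0.2; 0.2 0.6];
T = 300;
k = 2;
Y = zeros(T, k);
for t = 2:T
    Y(t, :) = (A * Y(t-1, :)' + randn(k, 1))';
end

% Hypothetical helper: OLS estimate of A from a T-by-k data matrix.
ols = @(Z) ((Z(1:end-1, :)' * Z(1:end-1, :)) \ (Z(1:end-1, :)' * Z(2:end, :)))';
Ahat = ols(Y);
Ehat = Y(2:end, :) - Y(1:end-1, :) * Ahat';   % (T-1)-by-k residuals

iter = 200;                    % number of bootstrap replications
H = 20;                        % maximum IRF horizon
irf = zeros(iter, H, k);       % replication x horizon x variable
e0 = [1; 0];                   % unit shock to the first equation

for i = 1:iter
    % Step 1: resample residual rows with replacement via random integers.
    idx = randi(T-1, T-1, 1);
    Estar = Ehat(idx, :);

    % Step 2: build an artificial data set from the estimated VAR.
    Ystar = zeros(T, k);
    Ystar(1, :) = Y(1, :);
    for t = 2:T
        Ystar(t, :) = (Ahat * Ystar(t-1, :)' + Estar(t-1, :)')';
    end

    % Step 3: re-estimate the VAR on the artificial data.
    Ai = ols(Ystar);

    % Step 4: IRF to the unit shock using powers of Ai.
    resp = e0;
    for h = 1:H
        irf(i, h, :) = reshape(resp, 1, 1, k);
        resp = Ai * resp;
    end
end

% Pointwise 5th and 95th percentiles for the response of variable 1.
sorted = sort(irf(:, :, 1), 1);
lo = sorted(round(0.05*iter), :);
hi = sorted(round(0.95*iter), :);
```

Resampling whole rows of `Ehat` keeps the contemporaneous correlation between the two residuals intact, which matters once the reduced form errors are correlated.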

Why estimate VARs

• Estimate RBC/DSGE model by choosing the parameters to match the VAR’s IRFs

• Estimate using indirect inference.

• Test implications of model by identifying a

shock.

• Accounting for the business cycle by

identifying a shock.

• Forecasting.

Indirect inference with VARs

1. Estimate a VAR on the data.

2. Generate data from the DSGE model for candidate parameter values $\theta_i$.

3. Estimate a VAR on the generated data.

4. Compute score $S_i$ = distance of 1 from 3.

5. Let $i = i + 1$; go back to 2 if $i < iterm$.

6. $\hat{\theta} = \theta_i$ such that $S_i = \min(S)$.

Key reference is Gourieroux et al.

Measure of distance: e.g. Euclidean norm, perhaps weighted in some way.

VAR impulse response matching [Rotemberg and Woodford] is akin to indirect inference.

In some cases, DSGE models don’t have VAR representations, though they have VAR approximations.

When they do, it is more properly called a partial information method, rather than indirect inference.

IRFs are one of the infinitely many moments summarised by the likelihood.
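The loop in steps 1 to 6 can be sketched with a deliberately tiny toy. Here the "DSGE model" is replaced by a hypothetical one-parameter simulator (an AR(1) with parameter theta) and the "VAR" by an AR(1) regression, so this shows only the mechanics of the algorithm, not a real application. The names `simulate` and `aux`, the candidate grid and the sample length are assumptions.

```matlab
rng(4);                                   % reproducible draws
T = 2000;

% Hypothetical structural simulator: y_t = theta*y_{t-1} + shock_t.
simulate = @(theta, shocks) filter(1, [1 -theta], shocks);

% Auxiliary model: OLS slope from regressing y_t on y_{t-1}.
aux = @(y) (y(1:end-1)' * y(1:end-1)) \ (y(1:end-1)' * y(2:end));

% Stand-in for the observed data (in practice, the actual data set).
theta_true = 0.7;
data = simulate(theta_true, randn(T, 1));

% Step 1: estimate the auxiliary model on the data.
b_data = aux(data);

% Use the same simulated shocks for every candidate, so that differences
% in the score come from theta and not from simulation noise.
shocks = randn(T, 1);

candidates = 0.05:0.05:0.95;              % candidate parameter values
S = zeros(size(candidates));
for i = 1:numel(candidates)
    ysim  = simulate(candidates(i), shocks);   % step 2: generate data
    b_sim = aux(ysim);                         % step 3: estimate on generated data
    S(i)  = (b_data - b_sim)^2;                % step 4: squared distance
end

% Step 6: pick the candidate with the smallest score.
[~, ibest] = min(S);
theta_hat = candidates(ibest);
fprintf('indirect inference estimate of theta: %g\n', theta_hat);
```

With a vector of auxiliary parameters the score would become a (possibly weighted) quadratic form in the difference between the two sets of estimates, and the grid would be replaced by a numerical minimiser.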

Next topic, in fact most of rest of

course, will be on identification in

VARs

• Apologies – we are going to do some

economics.

• These are going to be ‘credible’ identification

restrictions, contrast with Cowles Commission.

• But still contestable.

• They will be based on classes of business cycle

models.

• Enables a test of a theory without making too

many auxiliary assumptions that could fail.

From VARs to SVARs

• Short-run, ‘zero’ restrictions [Sims, CEE]

• Long run restrictions. [Blanchard-Quah, Gali]

• Sign restrictions. [Faust, Uhlig, Mountford and

Uhlig, Canova and de Nicolo]

• Heteroskedasticity-based identification

[Rigobon]