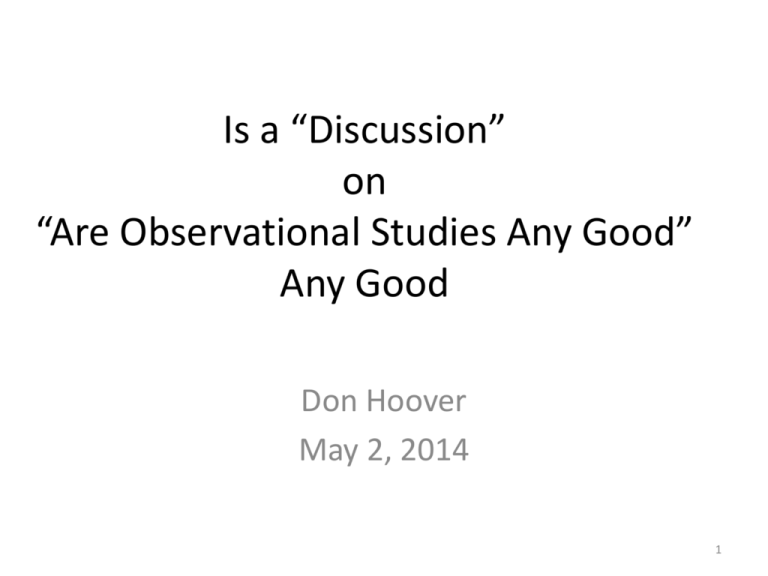

Is a *Discussion* on *Are Observational Studies Any Good* Any Good?


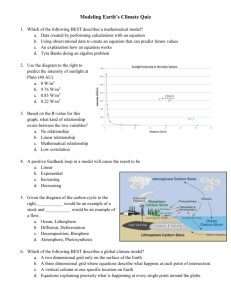

Is a “Discussion” on “Are Observational Studies Any Good” Any Good?
Don Hoover
May 2, 2014

Everyone Already Knows Observational Studies Are Not Perfect … Right?
• But who thinks
– The real type 1 error is 0.55 when the nominal is 0.05?
– The real coverage of a 95% confidence interval is 25%?
– That’s what David Madigan and the OMOP team find
• This obviously makes such results meaningless
• But how many papers with these properties are being (and will continue to be) published???

But Does David’s Talk Really Apply to ALL Observational Studies?
• They only look at observational studies of drug use and adverse consequences
• There are other kinds of observational studies … on HIV, Epi, Health Behaviors, Nutrition, etc.
– No one has looked at these types of studies
• These other studies must have similar problems
• Maybe at a smaller magnitude
– But there are no “negative controls” for these settings … so no one can check this

The Approach Here is Creative and Innovative
• Finding negative control exposures or outcomes to derive the empirical distribution of the test statistic somewhat equalizes assumptions and unmeasured confounding
• With a given drug use as the exposure and a given disease as the outcome, such negative controls are readily available in many data sets
• So maybe something like it should be used when possible
• But now some questions ……

Q1 - Why Were Negative Control Drugs More Associated With Outcomes than by Chance?
• People put on any drug are sicker?
• Those receiving a negative (control) drug are more likely to receive some other positive drug?
• Those a priori more likely to have a given disease outcome are steered to the negative drugs?
• Incorrect statistical models used?

Q2 - Is this Approach Practical?
• A lot more work to fit many models than the standard approach, which only fits one
– More money as well: a grant application using it would be less likely to get funded
– More work also means more chance for error in implementation

Q3 - How Does One Interpret a Positive Drug with Empirical P < 0.05?
[Figure: Calibrated Normal Scores of Negative Controls; Positive Drug with empirical P < 0.05]
• The use of an “empirical” approach acknowledges we do not know what is going on, so maybe the P < 0.05 comes from a model artifact and is not causal

Q4 - What is Done with “Negative Drugs” More Extreme than the Positive One?
[Figure: Calibrated Normal Scores of Negative Controls; Positive Drug with P < 0.05]
• Should these negative controls all be examined for causal association, as their signal is larger than that of the positive drug?

Q5 - How to Handle Heterogeneity in the Denominator of the Calibration Statistic?
From Schuemie … Madigan, Stat Med 2014; 33: 209-18
[Formula: calibration statistic, with labels “Variance” and “Log(RR)”]
• May introduce apples-to-oranges comparisons, especially if $\tau_{n+1}^2 < \hat{\sigma}^2$, and other $\tau^2$'s, although such does not appear to be the case in the examples David used
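The negative-control calibration discussed in these slides can be made concrete with a small sketch. It assumes a simple normal model: each negative-control estimate y_i (a log relative risk with standard error tau_i) is treated as drawn from N(mu, sigma^2 + tau_i^2), and a new drug's estimate is scored against N(mu_hat, sigma_hat^2 + tau_new^2). This is one plausible reading of the empirical-null idea, not a reproduction of the cited paper's code. The function names, the grid-search fitting, and all numbers are hypothetical and chosen only for illustration.

```python
import math
from statistics import NormalDist


def profile_mu(y, tau, sigma):
    """Inverse-variance weighted mean: the MLE of mu for a fixed sigma."""
    w = [1.0 / (sigma**2 + t**2) for t in tau]
    return sum(wi * yi for wi, yi in zip(w, y)) / sum(w)


def log_likelihood(y, tau, mu, sigma):
    """Log-likelihood of y_i ~ N(mu, sigma^2 + tau_i^2), constants dropped."""
    total = 0.0
    for yi, ti in zip(y, tau):
        var = sigma**2 + ti**2
        total += -0.5 * (math.log(var) + (yi - mu) ** 2 / var)
    return total


def fit_empirical_null(y, tau, sigma_max=2.0, steps=2000):
    """Grid search over sigma with mu profiled out; returns (mu_hat, sigma_hat).

    Assumes every tau_i is strictly positive.
    """
    best = None
    for k in range(steps + 1):
        sigma = sigma_max * k / steps
        mu = profile_mu(y, tau, sigma)
        ll = log_likelihood(y, tau, mu, sigma)
        if best is None or ll > best[0]:
            best = (ll, mu, sigma)
    return best[1], best[2]


def calibrated_p(y_new, tau_new, mu, sigma):
    """Two-sided p-value of a new estimate against the fitted empirical null."""
    z = (y_new - mu) / math.sqrt(sigma**2 + tau_new**2)
    return 2.0 * (1.0 - NormalDist().cdf(abs(z)))


def traditional_p(y_new, tau_new):
    """Usual two-sided p-value: the null is centred at 0 with no extra spread."""
    return calibrated_p(y_new, tau_new, 0.0, 0.0)


# Made-up negative-control estimates (log RR and standard error), illustration only.
neg_log_rr = [0.35, 0.10, 0.60, -0.05, 0.45, 0.25, 0.70, 0.15]
neg_se = [0.15, 0.20, 0.25, 0.10, 0.30, 0.20, 0.35, 0.15]

mu_hat, sigma_hat = fit_empirical_null(neg_log_rr, neg_se)

# Hypothetical drug of interest: log RR = 0.5 with standard error 0.2.
print("traditional p:", traditional_p(0.5, 0.2))
print("calibrated p: ", calibrated_p(0.5, 0.2, mu_hat, sigma_hat))
```

The denominator `sqrt(sigma**2 + tau_new**2)` is where the heterogeneity question in Q5 arises: when `tau_new` is small relative to `sigma`, the calibrated score is driven mostly by the spread of the negative controls rather than by the precision of the drug's own estimate.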