Document

advertisement

IE241 Introduction to

Mathematical Statistics

Topic

Slide

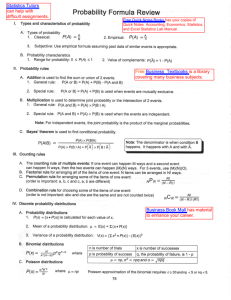

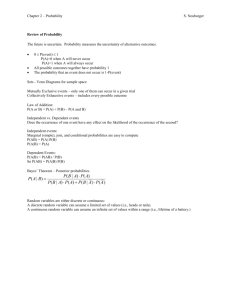

Probability …………………………………………………….….3

a priori …………………………………………………..4

set theory ……………………………………………..10

axiomatic definition ………………………………….14

marginal probability ………………………………………. 17

conditional probability ……………………………….19

independent events …………………………………20

Bayes’ formula ……………………………………….28

Discrete sample spaces ……………………………….33

permutations ………………………………………….34

combinations ………………………………………… 35

Statistical distributions …………………………………37

random variable ……………………………………...38

binomial distribution ………………………………….42

Moments ………………………………………………….47

moment generating function …………………….…50

Other discrete distributions ……………………………59

Poisson …………………………………………………59

Hypergeometric ………………………………………62

Negative binomial ……………………………………66

Continuous distributions ……………………………….69

Normal ………………………………………………….70

Normal approximation to binomial ……………… ..79

Uniform (rectangular) ………………………………..84

Gamma………………………………………………… 85

Beta …………………………………………………… 86

Log normal …………………………………………….87

Cumulative distributions…..……………………………89

Normal cdf……………………………………………..90

Binomial cdf ………………………………………….94

Empirical distributions …………………………………99

Random sampling ……………………………………99

Topic

Slide

Estimate of mean ………………………………….112

Estimate of variance ……………………………….113

degrees of freedom ………………………………..116

KAIST sample ………………………………………..119

Percentiles and quartiles……………………………………122

Sampling distributions ……………………………..…124

of the mean …………………………………..……..126

Central Limit Theorem………………………………127

Confidence intervals ………………………………….130

for the mean …………………………………………130

Student’s t ……………………………………………137

for the variance ……………………………………..143

Chi-square distribution …………………………….143

Coefficient of variation ……………………………….146

Properties of estimators………………………………149

unbiased……………………………………………… 150

consistent……………………………………………..152

minimum variances unbiased …………………….152

maximum likelihood…………………………………154

Statistical Process Control……………………………157

Linear functions of random variables ………………170

Multivariate distributions ……………………..………177

bivariate normal ………….………………………….177

correlation coefficient ………………………………182

covariance ……………………………………………183

Regression functions ………………………………….198

method of least squares……………………………199

multiple regression ………………………………….206

General multivariate normal…………………………211

Multinomial……………………………………………… 215

Marginal distributions………………………………….228

Conditional distributions………………………………236

2

Statistics is the discipline that permits

you to make decisions in the face of

uncertainty. Probability, a division of

mathematics, is the theory of uncertainty.

Statistics is based on probability theory,

but is not strictly a division of

mathematics.

However, in order to understand statistical

theory and procedures, you must have an

understanding of the basics of probability.

3

Probability arose in the 17th

century because of games of

chance. Its definition at the time

was an a priori one:

If there are n mutually exclusive,

equally likely outcomes and

if nA of these outcomes have

attribute A, then the probability of

A is nA/n.

4

This definition of probability seems reasonable for

certain situations. For example, if one wants the

probability of a diamond in a selection from a card

deck, then A = ♦, nA = 13, n = 52 and the probability

of a diamond = 13/52 =1/4.

As another example, consider the probability of an

even number on one roll of a die. In this case, A =

even number on roll, n = 6, nA = 3, and the

probability of an even number = 3/6 = 1/2.

As a third example, you are interested in the

probability of J♦ on one draw from a card deck.

Then A = J♦, n = 52, and nA = 1, so the probability of

J♦ = 1/52.

5

The conditions of equally likely and mutually exclusive

are critical to this a priori approach.

For example, suppose you want the probability of the

event A, where A is either a king or a spade drawn at

random from a new deck. Now when you figure the

number of ways you can achieve the event A, you

count 13 spades and 4 kings, which seems to give

nA = 17, for a probability of 17/52.

But one of the kings is a spade, so kings and spades

are not mutually exclusive. This means that you are

double counting. The correct answer is nA = 16, for a

probability of 16/52.

6

As another example, suppose the event A is

2 heads in two tosses of a fair coin. Now the

outcomes are 2H, 2T, or 1 of each. This

would seem to give a probability of 1/3.

But the last outcome really has twice the

probability of each of the others because the

right way to list the outcomes is: HH, TT, HT,

TH. Now we see that 1 head and 1 tail can

occur in either of two ways and the correct

probability of 2H is 1/4.

7

But there are some problems with the a priori

approach.

Suppose you want the probability that a

positive integer drawn at random is even.

You might assume that it would be 1/2, but

since there are infinitely many integers and

they need not be ordered in any given way,

there is no way to prove that the probability

of an even integer = 1/2.

The integers can even be ordered so that the

ratio of evens to odds oscillates and never

approaches any definite value as n increases.

8

Besides the difficulty of an infinite

number of possible outcomes, there is

also another problem with the a priori

definition. Suppose the outcomes are

not equally likely.

As an example, suppose that a coin is

biased in favor of heads. Now it is

clearly not correct to say that the

probability of a head = the probability of

a tail = 1/2 in a given toss of a coin.

9

Because of these difficulties, another

definition of probability arose which is based

on set theory.

Imagine a conceptual experiment that can be

repeated under similar conditions. Each

outcome of the experiment is called a sample

point s. The totality of all sample points

resulting from this experiment is called a

sample space S.

An example is two tosses of a coin. In this

case, there are four sample points in S:

(H,H), (H,T), (T,H), (T,T).

10

Some definitions

• If s is an element of S, then s∈S.

• Two sets are equal if every element of one is

also an element of the other.

• If every element of S1 is an element of S, but

not conversely, then S1 is a subset of S,

denoted S1⊂S.

• The universal set is S where all other sets are

subsets of S.

11

More definitions

• The complement of a set A with respect to

the sample space S is the set of points in S

but not in A. It is usually denoted A .

• If a set contains no sample points, it is called

the null set, φ.

• If S1 and S2 are two sets ⊂S, then all sample

points in S1 or S2 or both are called the

union of S1 and S2 which is denoted S1∪ S2.

12

More definitions

•

If S1 and S2 are two sets ⊂S, then the

event consisting of points in both S1 and

S2 is called the intersection of S1 and S2

which is denoted S1 ∩ S2.

•

S is called a continuous sample space if S

contains a continuum of points.

•

S is called a discrete sample space if S

contains a discrete number of points or a

countable infinity of points which can be put

in one-to-one correspondence with the

positive integers.

13

Now we can proceed with the axiomatic

definition of probability. Let S be a sample

space where A is an event in S. Then P is a

probability function on S if the following three

axioms are satisfied:

• Axiom 1. P(A) is a real nonnegative number

for every event A in S.

• Axiom 2. P(S) = 1.

• Axiom 3. If S1, S2, … Sn is a sequence of

mutually exclusive events in S, that is, if

Si ∩ Sj= φ for all i,j where i≠j, then

P(S1∪S2∪…∪Sn) = P(S1)+P(S2)+…+P(Sn)

14

Some theorems that follow from this definition

• If A is an event in S, then the probability that

A does not happen = 1- P(A).

• If A is an event in S, then 0 ≤ P(A) ≤ 1.

• P(φ) = 0.

• If A and B are any two events in S, then

P(A∪B) = P(A)+ P(B) – P(A ∩ B) where

A ∩ B represents the joint occurrence of both

A and B. P(A ∩ B) is also called P(A,B).

15

As an illustration of this last theorem -in S, there are many points, but the event

A and the event B are overlapping. If we

didn’t subtract the P(A∩B) portion, we

would be counting it twice for P(AUB).

A

B

16

Marginal probability is the term used

when one or more criteria of

classification is ignored.

Let’s say we have a sample of 60

people who are either male or female

and also who are either rich, middleclass, or poor.

17

In this case, we have the cross-tabulation of

gender and financial status shown in the table

below.

Status

Rich

Middle

-class

Poor

Gender

marginal

Male

3

28

3

34

Female

1

20

5

26

Status

marginal

4

48

8

60

Gender

The marginal probability of male is 34/60 and

the marginal probability of middle-class is

48/60.

18

More theorems

• If A and B are two events in S such that

P(B)>0, the conditional probability of A

given that B has happened is

P(A| B) = P(A ∩ B) / P(B).

• Then it follows that the joint probability

P(A ∩ B) = P(A| B) P(B).

19

More theorems

•

If A and B are two events in S, A and

B are independent of one another if

any of the following is satisfied:

P(A| B)= P(A)

P(B| A)= P(B)

P(A ∩ B) = P(A) P(B)

20

• P(A ∪ B) is the probability that either the

event A or the event B happens. When we

talk about either/or situations, we always are

adding probabilities.

P(A ∪ B) = P(A) + P(B) – P(A,B)

• P(A ∩ B) or P(A,B) is the probability that both

the event A and the event B happen. When

we talk about both/and situations, we are

always multiplying probabilities.

P(A ∩ B) = P(A) P(B) if A and B are

independent and

P(A ∩ B) = P(A|B) P(B) if A and B are not

independent.

21

As an example of conditional probability, consider an

urn with 6 red balls and 4 black balls. If two balls are

drawn without replacement, what is the probability

that the second ball is red if we know that the first

was red?

Let B be the event that the first ball is red and A be

the event the second ball is red. P(A ∩ B) is the

probability that both balls are red.

There are 10C2 = 45 ways of drawing two balls and

6C2 = 15 ways of getting two red balls.

So P(A ∩ B) = 15 / 45 = 1/3. P(B), the probability

that the first ball is red is 6/10 = 3/5.

Therefore, P(A| B) = 1/3 = 5/9.

3/5

22

This probability could be computed from the

sample space directly because once the first

red ball has been drawn, there remain only 5

red balls and 4 black balls. So the probability

of drawing red the second time is 5/9.

The idea of conditional probability is to

reduce the total sample space to that portion

of the sample space after the given event has

happened. All possible probabilities

computed in this reduced sample space must

sum to 1. So the probability of drawing black

the second time = 4/9.

23

Another example involves a test for detecting

cancer which has been developed and is

being tested in a large hospital.

It was found that 98% of cancer patients

reacted positively to the test, while only 4% of

non-cancer patients reacted positively.

If 3% of the patients in the hospital have

cancer, what is the probability that a patient

selected at random from the hospital who

reacts positively will have cancer?

24

Given:

P(reaction | cancer) = .98

P(reaction | no cancer) = .04

P(cancer) = .03

P(no cancer) = .97

Needed:

P (reaction & cancer )

P (cancer | reaction)

P (reaction)

25

P(r & c ) = P(r|c) P(c)

= (.98)(.03)

= .0294

P(r & nc) = P(r|nc) P(nc)

= (.04)(.97)

= .0388

P(r) = P(r & c)+ P(r & nc)

= .0294 + .0388

= .0682

26

Now we have the information we need

to solve the problem.

P (reaction & cancer )

P (cancer | reaction)

P (reaction)

.0294

P (cancer | reaction)

.4312

.0682

27

Conditional probability led to the development of

Bayes’ formula, which is used to determine the

likelihood of a hypothesis, given an outcome.

P ( H i | D)

P(Hi )P(D | Hi )

k

P(H )P(D | H )

i 1

i

i

This formula gives the likelihood of Hi given the data

D you actually got versus the total likelihood of every

hypothesis given the data you got. So Bayes’

strategy is a likelihood ratio test.

Bayes’ formula is one way of dealing with questions

like the last one. If we find a reaction, what is the

probability that it was caused by cancer?

28

Now let’s cast Bayes’ formula in the context

of our cancer situation, where there are two

possible hypotheses that might cause the

reaction, cancer and other.

P (C | R)

P (C ) P ( R | C )

P (C ) P ( R | C ) P (O ) P ( R | O )

(.03)(.98)

(.03)(. 98) (.97)(.04)

.0294

.0294 .0388

0.4312

P (C | R)

which confirms what we got with the classic

conditional probability approach.

29

Consider another simple example where there are two identical

boxes. Box 1 contains 2 red balls and box 2 contains 1 red

ball and 1 white ball. Now a box is selected by chance and 1

ball is drawn from it, which turns out to be red. What is the

probability that Box 1 was the one that was selected?

Using conditional probability, we would find

P ( Box1 | R)

P ( Box1, R)

P ( R)

and get the numerator by

P(Box1,R) = P(Box1)P(R|Box1)

= (½ )(1)

= 1/2

Then we get the denominator by

P(R) =P(Box1,R) + P(Box2,R)

= ½

+

¼

= 3/4

30

Putting these in the formula,

P ( Box1 | R)

P ( Box1, R)

P ( R)

1/ 2

3/ 4

2

3

If we use the sample space method, we have

four equally likely outcomes:

B1R1

B1R2

B2R

B2W

The condition R restricts the sample space to the

first three of these, each with probability 1/3.

Then

P(Box1|R) = 2/3

31

Now let’s try it with Bayes’ formula. There are only two

hypotheses here, so H1= Box1 and H2 = Box2. The data, of

course, = R. So we can find

P ( B1 | R)

P ( B1 ) P ( R | B1 )

P ( B1 ) P ( R | B1 ) P ( B2 ) P ( R | B2 )

(1 / 2)(1)

2

(1 / 2)(1) (1 / 2)(1 / 2) 3

And we can find

P ( B2 | R)

P ( B2 ) P ( R | B2 )

P ( B1 ) P ( R | B1 ) P ( B2 ) P ( R | B2 )

(1 / 2)(1 / 2)

1

(1 / 2)(1) (1 / 2)(1 / 2) 3

So we can see that the odds of the data favoring Box1 to Box2

are 2:1.

32

Discrete sample spaces with a finite number of

points

• Let s1, s2, s3, … sn be n sample points in S

which are equally likely. Then

P(s1) = P(s2) = P(s3) … P(sn) = 1/n.

If nA of these sample points are in the event A,

then P(A) = nA /n, which is the same as the

a priori definition.

• Clearly this definition satisfies the axiomatic

conditions because the sample points are

mutually exclusive and equally likely.

33

Now we need to know how many arrangements of a

set of objects there are. Take as an example the

number of arrangements of the three letters a, b, c.

In this case, the answer is easy:

abc, acb, bac, bca, cab, cba.

But if the number of arrangements were much larger,

it would be nice to have a formula that figures out

how many for us. This formula is the number of

arrangements or permutations of N things = N!.

Now we can find the number of permutations of N

things if we take only x of them at a time. This

formula is NPx = N! / (N-x)!

34

Next we want to know how many

combinations of a set of N objects there are if

we take x of them at a time. This is different

from the number of permutations because we

don’t care about the ordering of the objects,

so abc and cab count as one combination

though they represent two permutations.

The formula for the number of combinations

of N things taking x at a time is

N

N!

N Cx

x x!( N x )!

35

How many pairs of cards can be drawn from a deck,

where we don’t care about the order in which they

are drawn? The solution is

52 C 2

52!

1326

2!(52 2)!

ways that two cards can be drawn.

Now suppose we want to know the probability that

both cards will be spades. Since there are 13

spades in the deck and we are drawing 2 cards, the

number of ways that 2 spades can be drawn from the

13 available is

13!

78

13 C 2

2!(13 2)!

So the probability that two spades will be drawn is

78 /1326.

36

Statistical Distributions

Now we begin the study of statistical

distributions. If there is a distribution, then

something must be being distributed. This

something is a random variable.

You are familiar with variables in functions

like a linear form: y = a x + b. In this case,

a and b are constants for any given linear

function and x and y are variables.

In the equation for the circumference of a

circle, we have C = πd where C and d are

variables and π is a constant.

37

A random variable is different from a

mathematical variable because it has a

probability function associated with it.

More precisely, a random variable is a

real-valued function defined on a

probability space, where the function

transforms points of S into values on

the real axis.

38

For example, the number of heads

in two tosses of a fair coin can be

transformed as:

Points

in S

X(s)

s1 s2 s3

HH HT TH

2

1

1

s4

TT

0

Now X(s) is real-valued and can be

used in a distribution function.

39

Because a probability is associated with each

element in S, this probability is now

associated with each corresponding value of

the random variable.

There are two kinds of random variables:

discrete and continuous.

• A random variable is discrete if it assumes

only a finite (or denumerable) number of

values.

• A random variable is continuous if it assumes

a continuum of values.

40

We begin with discrete random variables.

Consider a random experiment where four fair

coins are tossed and the number of heads is

recorded.

In this case, the random variable X takes on

the five values: 0, 1, 2, 3, 4. The probability

associated with each value of the random

variable X is called its probability function p(X)

or probability mass function, because the

probability is massed at each of a discrete

number of points.

41

One of the most frequently used

discrete distributions in applications of

statistics is the binomial. The binomial

distribution is used for n repeated trials

of a given experiment, such as tossing

a coin. In this case, the random

variable X has the probability function:

P(x) = nCx pxqn-x where p+q =1

x =0,1,2,3,…,n

42

In one toss of a coin, this reduces to pxq0 and is

called the point binomial or Bernoulli distribution.

p = the probability that an event will occur and, of

course, q = the probability that it will not occur.

p and n are called parameters of this family of

distributions. Each time either p or n changes, we

have a new member of the binomial family of

distributions, just as each time a or b changed in the

linear function we had a new member of the family of

linear functions.

The binomial distribution for 10 tosses of a fair coin

is shown below. The actual values are shown in the

accompanying table. Note the symmetry of the

distribution. This always happens when p = .5.

43

B ino mial distributio n fo r 1 0 to sses o f a fair co in

0 .2 5

P(x)

0 .2

0 .1 5

0 .1

0 .0 5

0

0

1

2

3

4

5

6

7

8

9

10

Number o f heads

44

X

P(x)

0 0.000977

1 0.009766

2 0.043945

3 0.117188

4 0.205078

5 0.246094

6 0.205078

7 0.117188

8 0.043945

9 0.009766

10 0.000977

45

The probability of 5 heads is highest so

5 is called the mode of x. The mode of

any distribution is its most frequently

occurring value. The mode is a

measure of central tendency.

5 is also the mean of X, which in

general for the binomial = np. The

mean of any distribution is the most

important measure of central tendency.

It is the measure of location on the xaxis.

46

Every distribution has a set of moments.

Moments for theoretical distributions are

expected values of powers of the random

variable. The rth moment is E(X-θ)r where E

is the expectation operator and θ is an origin.

The expected value of a random variable is

defined as

E(X) ≡ μ

where μ is Greek because it is the theoretical

mean or average of the random variable.

μ is the first moment about 0.

47

The second moment is about μ itself

E(X- μ)2

and is called the variance σ2 of the

random variable.

The third moment E(X- μ)3 is also

about μ and is a measure of skewness

or non-symmetry of the distribution.

48

The mean of the distribution is a measure of

its location on the x axis. The mean is the

only point such that the sum of the deviations

from it = 0. The mean is the most important

measure of centrality of the distribution.

The variance is a measure of the spread of the

distribution or the extent of its variability.

The mean and variance are the two most

important moments.

49

Every distribution has a moment

generating function (mgf), which for a

discrete distribution is

M x ( ) e x p( x )

x 0

50

The way this works is

M x ( ) e x p( x )

x 0

Assume that p(x) is a function such that the

series above converges. Then

2 x 2 3 x3

M x ( ) 1 x

... p( x )

2!

3!

x 0

2 2

p( x) xp( x) x p( x) ...

2! x 0

x 0

x 0

1

'

1

2

2!

'

2

3

3!

...

'

3

51

In this expression, the coefficient of θk/k! is

the kth moment about the origin.

'

To evaluate a particular moment, k

it may be convenient to compute the proper

derivative of Mx(θ) at θ = 0, since repeated

differentiation of this moment generating

function will show that

k

d

M

'

k

k

d θ 0

52

From the mgf, we can find the first

moment around θ =0, which is the

mean. The mean of the binomial = np.

We can also find the second moment

around θ = μ, the variance. The

variance of the binomial = npq.

The mgf enables us to find all the

moments of a distribution.

53

Now suppose in our binomial we

change p to .7. Then a different

binomial distribution function results, as

shown in the next graph and the table

of data accompanying it.

This makes sense because with a

probability of .7 that you will get heads,

you should see more heads.

54

B ino mial distributio n fo r 1 0 to sses o f a co in with p = .7

0.3

0.25

P(x)

0.2

0.15

0.1

0.05

0

0

1

2

3

4

5

6

7

Number of heads

8

9

10

55

X

0

1

2

3

4

5

6

7

8

9

10

P(x)

5.9E-06

0.000138

0.001447

0.009002

0.036757

0.102919

0.200121

0.266828

0.233474

0.121061

0.028248

56

This distribution is called a skewed

distribution because it is not symmetric.

Skewing can be in either the positive or

the negative direction. The skew is

named by the direction of the long tail

of the distribution. The measure of

skew is the third moment around θ = μ.

So the binomial with p = .7 is negatively

skewed.

57

The mean of this binomial = np = 10(.7)

= 7. So you will expect more heads

when the probability of heads is greater

than that of tails.

The variance of this binomial is

npq =10(.7)(.3) = 2.1.

58

Another discrete distribution that comes in

handy when p is very small is the Poisson

distribution. Its distribution function is

(e μ x )

P ( x)

x!

where μ >0

In the Poisson distribution, the parameter is μ,

which is the mean value of x in this

distribution.

59

The Poisson distribution is an approximation to the

binomial distribution when np is large relative to p

and n is large relative to np. Because it does not

involve n, it is particularly useful when n is unknown.

As an example of the Poisson, assume that a volume

V of some fluid contains a large number n of some

very small organisms. These organisms have no

social instincts and therefore are just as likely to

appear in one part of the liquid as in any other part.

Now take a drop D of the liquid to examine under a

microscope. Then the probability that any one of the

organisms appears in D is D/V.

60

The probability that x of them are in D is

D

nCx

V

x

V D

V

n x

The Poisson is an approximation to this

expression, which is simply a binomial

in which p = D/V is very small.

The above binomial can be transformed

to the Poisson:

e

Dd

Dd

x

x!

where Dd = μ and n/V = d.

61

Another discrete distribution is the

hypergeometric distribution, which is

used when there is no replacement after

each experiment.

Because there is no replacement, the

value of p changes from one trial to the

next. In the binomial, p is always

constant from trial to trial.

62

Suppose that 20 applicants appear for a job

interview and only 5 will be selected. The

value of p for the first selection is 1/20.

After the first applicant is selected, p

changes from 1/20 to 1/19 because the one

selected is not thrown back in to be selected

again.

For the 5th selection, p has moved to 1/16,

which is quite different from the original 1/20.

63

Now if there had been 1000 applicants

and only 2 were going to be selected,

p would change from 1/1000 to 1/999,

which is not enough of a change to be

important.

So the binomial could be used here

with little error arising from the

assumptions that the trials are

independent and p is constant.

64

The hypergeometric distribution is

p( x )

( Np C x )( N Np C n x )

N Cn

65

Another discrete distribution is the

negative binomial. The negative

binomial distribution is used for the

question “On which trial(s) will the first

(and later) success(es) come?”

Let p be the probability of success and

let p(X) be the probability that exactly

x+r trials will be needed to produce r

successes.

66

The negative binomial is:

p(x) = pr ( x+r-1Cr-1 ) qx

where x = 0,1,2, …

and p +q =1

Notice that this turns the binomial on its

head because instead of the number of

successes in n trials, it gives the

number of trials to r successes. This is

why it is called the negative binomial.

67

The binomial is the most important of

the discrete distributions in applications,

but you should have a passing

familiarity with the others.

Now we move on to distributions of

continuous random variables.

68

Because a continuous random variable has a

nondenumerable number of values, its

probability function is a density function. A

probability density function is abbreviated pdf.

There is a logical problem associated with

assigning probabilities to the infinity of points

on the x-axis and still having the density sum

to 1. So what we do is deal with intervals

instead of with points. Hence P(x=a) = 0

for any number a.

69

By far, the most important distribution

in statistics is the normal or Gaussian

distribution. Its formula is

1

f ( x)

e

2

( x )

2

2

2

70

The normal distribution is characterized

by only two parameters, its mean μ and

its standard deviation σ.

The mgf for a continuous distribution is

M x ( )

x

e

f ( x )dx

71

This mgf is of the same form as that for

discrete distributions shown earlier, and

it generates moments in the same

manner.

A normal distribution with μ = 1.5 and

σ = .9 is shown next.

72

No rmal distributio n ( 1 .5 , 0 .9 )

0.4

0.35

0.3

0.25

0.2

0.15

0.1

0

0.5

1

1.5

2

2.5

3

rando m v ariable

73

This is the familiar bell curve. If the standard

deviation σ were smaller, the curve would be

tighter. And if σ were larger, the curve would

be flatter and more spread out.

Any normal distribution may be transformed

into the standard normal distribution with

μ = 0 and σ = 1. The transformation is

z = (x-μ) / σ

In this case, z is called the standard normal

variate or random variable.

74

If we use the transformed variable z, the

normal density becomes

1

f ( x)

e

2

1 2

z

2

75

The area under the curve for any normal

distribution from μ to +1σ = .34 and the area

from μ to -1σ = .34. So from -1σ to +1σ is

68% of the area, which means that the values

of the random variable X falling between

those two limits use up .68 of the total

probability.

The area from μ to +1.96σ = .475 and

because the normal curve is symmetric, it is

the same from μ to -1.96σ. So from -1.96σ

to +1.96σ = 95% of the area under the curve,

and the values of the random variable in that

range use up .95 of the total probability.

76

Standard normal distribution

0.45

0.40

0.35

0.30

0.25

0.20

0.15

0.10

0.05

.34

.34

.135

.135

0.00

-3.0 -2.5 -2.0 -1.5 -1.0 -0.5

0.0

0.5

1.0

1.5

2.0

2.5

3.0

random variable

77

The normal distribution is very important for

statisticians because it is a mathematically

manageable distribution with wide ranging

applicability, but it is also important on its own merits.

For one thing, many populations in various scientific

or natural fields have a normal distribution to a good

degree of approximation. To make inferences about

these populations, it is necessary to know the

distributions for various functions of the sample

observations.

The normal distribution may be used as an

approximation to the binomial for large n.

78

Theorem:

If X represents the number of

successes in n independent trials of an

event for which p is the probability of

success on a single trial, then the

variable (X-np)/√npq has a distribution

that approaches the normal distribution

with mean = 0 and variance = 1 as n

becomes increasingly large.

79

Corrollary:

The proportion of successes X/n will be

approximately normally distributed with

mean p and standard deviation √pq/n

if n is sufficiently large.

Consider the following illustration of the

normal approximation to the binomial.

80

In Mendelian genetics, certain crosses

of peas should give yellow and green

peas in a ratio of 3:1. In an experiment

that produced 224 peas, 176 turned out

to be yellow and only 48 were green.

The 224 peas may be considered 224

trials of a binomial experiment where

the probability of a yellow pea = ¾.

Given this, the average number of

yellow peas should be 224(3/4) =168

and σ =√224(3/4)(1/4) = 6.5.

81

Is the theory wrong? Or is the finding of 176

yellow peas just normal variation?

To save the laborious computation required

by the binomial, we can use the normal

approximation to get a region around the

mean of 168 which encompasses 95% of the

values that would be found in the normal

distribution.

168 1.96(6.5)

168 12.7

155 181

Since the 176 yellow peas found in this

experiment is within this interval, there is no

reason to reject Mendelian inheritance.

82

The normal distribution will be revisited later, but for now we’ll move on

to some other interesting continuous

distributions.

83

The first of these is the uniform or

rectangular distribution.

f(x) = 1/(β-α)

=0

α ≤X≤ β

elsewhere

This is an important distribution for

selecting random samples and

computers use it for this purpose.

84

Another important continuous distribution is

the gamma distribution, which is used for the

length of time it takes to do something or for

the time between events.

The gamma is a two-parameter family of

distributions, with α and β as the parameters.

Given β > 0 and α > -1, the gamma density

is:

1

α x/β

f ( x)

α 1 x e

!

85

Another important continuous distribution is

the beta distribution, which is used to model

proportions, such as the proportion of lead in

paint or the proportion of time that the FAX

machine is under repair.

This is a two-parameter family of distributions

with parameters α and β, which both must be

greater than -1. The beta density is:

β

( 1)! α

f ( x)

(

1

x

)

x

! !

86

The log normal distribution is another

interesting continuous distribution.

Let x be a random variable. If loge(x)

is normally distributed, then x has a log

normal distribution. The log normal has

two parameters, α and β, both of which

are greater than 0. For x > 0,

f ( x)

1

x

e

2

(1 / 2 β 2) (log x logα ) 2

87

As with the discrete distributions, most

of the continuous distributions are of

passing interest. Only the normal

distribution at this point is critically

important. You will come back to it

again and again in statistical study.

88

Now one kind of distribution we haven’t

covered so far is the cumulative distribution.

Whereas the distribution of the random

variable is denoted p(x) if it is discrete and

f(x) if it is continuous, the cumulative

distribution is denoted P(x) and F(x) for

discrete and continuous distributions,

respectively.

The cumulative distribution or cdf is the

probability that X ≤ Xc and thus it is the area

under the p(x) or f(x) function up to and

including the point Xc.

89

The most interesting cumulative distribution

function or cdf is the normal one, often

called the normal ogive.

Cumulative normal (1.5, .9)

1

0 .9

0 .8

0 .7

0 .6

0 .5

0 .4

0 .3

0 .2

0 .1

0

0

2

1

3

rando m v ariable

90

The points in a continuous cdf like the

normal F(x) are obtained by integrating

over the f(x) to the point in question.

F ( xc )

xc

f ( x)dx

91

The cdf can be used to find the

probability that a random variable

X is ≤ some value of interest because

the cdf gives probabilities directly.

In the normal distribution shown earlier

with μ = 1.5 and σ =0.9, the probability

that X ≤ 2 is given by the cdf as .71.

Also the probability that 1 ≤ x ≤ 2 is

given by F(2) – F(1) = .71 - .29 = .42.

92

Now you know from this normal cdf that

the probability that X ≤ 2 is .71.

Suppose you want the probability that

X ≥ 2. Well if P(X ≤ 2) = .71, then

P(X ≥ 2) = 1-.71= .29.

Note that you are ignoring the fact that

P(X = 2) is included is included in the

cdf probability because P(X = 2) = 0 in

a continuous pdf.

93

For the binomial distribution, a point on

the cumulative distribution function P(x)

is obtained by summing probabilities of

the p(x) up to the point in question.

Then P(xi)= p(x ≤ xi). In general,

P ( x j ) p( x i )

i j

94

Bino mial CDF with p =.5 and n=1 0

1 .1

1

pr obability X < Xc

0 .9

0 .8

0 .7

0 .6

0 .5

0 .4

0 .3

0 .2

0 .1

0

0

1

2

3

4

5

6

7

8

9

10

Number o f heads

95

From this cdf, we can see that the probability that the

number of heads will be ≤ 2 = .05.

And the probability that the number of heads will be

≤ 6 = .82.

But the probability that the number of heads will be

between two numbers is tricky here because the cdf

includes the probability of x, not just the values < x.

So if you want the probability that 2 ≤ x ≤ 6, you

need to use P(6)- P(1) because if you subtracted

P(2) from P(6), you would exclude the value 2 heads.

So P(2 ≤ x ≤ 6) = P(6) – P(1) = .82 -.01 = .81.

96

So if you are given a point on the binomial

cdf, say, (4, .38), then the probability that

X ≤ 4 = .38.

But suppose you want the probability that

X > 4. Then 1- P(X ≤ 4)

= 1-.38

= .62 is the answer.

But if you want the probability that X ≥ 4, you

can’t get it from the information given

because P(X = 4) is included in the binomial

cdf.

97

Now we have covered the major

distributions of interest. But so far,

we have been dealing with theoretical

distributions, where the unknown

parameters are given in Greek.

Since we don’t know the parameters,

we have to estimate them. This means

we have to develop empirical

distributions and estimate the

parameters.

98

To think about empirical distributions,

we must first consider the topic of

sampling.

We need a sample to develop the

empirical distribution, but the sample

must be selected randomly. Only

random samples are valid for statistical

use. If any other sample is used, say,

because it is conveniently available, the

information gained from it is useless

except to describe the sample itself.

99

Now how can you tell if a sample is

random? Can you tell by looking at the

data you got from your sample?

Does a random sample have to be

representative of the group from which

it was obtained?

The answer to these questions is a

resounding NO.

100

Now let’s develop what a random sample

really is.

First, there is a population with a variable of

interest. The population is all elements of

concern, for example, all males from age 18

to age 30 in Korea. Maybe the variable of

interest is height.

The population is always very large and often

infinite. Otherwise, we would just measure

the entire population on the variable of

interest and not bother with sampling.

101

Since we can never measure every

element (person, object, manufactured

part, etc.) in the population, we draw a

sample of these elements to measure

some variable of interest. This variable

is the random variable.

102

The sample may be taken from some portion

of the population, and not from the entire

population. The portion of the population

from which the sample is drawn is called the

sampling frame.

Maybe the sample was taken from males

between 18 and 30 in Seoul, not in all of

Korea. Then although Korea is the population

of interest, Seoul is the sampling frame. Any

conclusions reached from the Seoul sample

apply only to the set of 18 to 30 year-old

males in Seoul, not in all of Korea.

103

To show how far astray you can go when you

don’t pay attention to the sampling frame,

consider the US presidential election of 1948.

Harry Truman was running against Tom

Dewey. All the polling agencies were sure

Dewey would win and the morning paper after

the election carried the headline

DEWEY WINS

There is a famous picture of the victorious

Truman holding up the morning paper for all

to see.

104

How did the pollsters go so wrong? It was in

their sampling frame.

It turns out that they had used the phone

directories all over the US to select their

sample. But the phone directories all over

the US do not contain all the US voters. At

that time, many people didn’t have phones

and many others were unlisted.

This is a glaring and very famous example of

just how wrong you can be when you don’t

follow the sampling rules.

105

Now assuming you’ve got the right sampling frame,

the next requirement is a random sample. The

sample must be taken randomly for any conclusions

to be valid. All conclusions apply only to the

sampling frame, not to the entire population.

A random sample is one in which each and every

element in the sampling frame has an equal chance

of being selected for the sample.

This means that you can get some random samples

that are quite unrepresentative of the sampling frame.

But the larger the random sample is, the more

representative it tends to be.

106

Suppose you want to estimate the

height of males in Chicago between the

ages of 18 and 30.

If you were looking for a random

sample of size 12 in order to estimate

the height, you might end up with the

Chicago Bulls basketball team. This

sample of 12 is just as likely as any

other sample of 12 particular males.

But it certainly isn’t representative of the

height of Chicago young males.

107

But you must take a random sample to have

any justification for your conclusions.

Now the ONLY way you can know that a

sample is random is if it was selected by a

legitimate random sampling procedure.

Today, most random selections are done by

computer. But there are other methods, such

as drawing names out of a container if the

container was appropriately shaken.

108

The lottery in the US is done by putting a set

of numbered balls in a machine. The

machine stirs them up and selects 5

numbered balls, one at a time. These

numbers are the lottery winners.

Anyone who bought a lottery ticket which has

the same 5 numbers as were drawn will win

the lottery.

Because this equipment was designed as

lottery equipment, it is fair to say that the

sample of 5 balls drawn is a random sample.

109

Formally, in statistics, a random sample is

thought of as n independent and identically

distributed (iid) random variables, that is, x1,

x2, x3, …xn.

In this case, xi is the random variable from

which the ith value in the sample was obtained.

When we want to speak of a random sample,

we say: Let {xi} be a set of n iid random

variables.

110

Once you get the random sample, you

can get the distribution of the variable

of interest for the sample.

Then you can use the empirical sample

distribution to estimate the parameters

in the sampling frame, but not in the

entire population.

Most of what we estimate are the two

most important moments, μ and σ2.

111

Since we don’t know the theoretical mean μ

and variance σ2, we can estimate them from

our sample.

The mean estimate is X

n

X

x

i 1

i

n

where n is the sample size.

112

The estimate of the second moment, the

variance, is

n

s2

2

(

X

X

)

i 1

n 1

Although the variance is a measure of the

spread or variability of the distribution around

the mean, usually we take the square root of

the variance, the standard deviation, to get

the measure in the same scale as the mean.

The standard deviation is also a measure of

variability.

113

Now two questions arise. First, if we are

going to take the square root anyway, why

do we bother to square the estimate in the

first place?

The answer is simple if you look at the

formula carefully.

X

n

s2

i 1

X

2

i

n 1

114

Clearly, if you didn’t square the

deviations in the numerator, they would

always sum to 0, because the mean is

the value such that the deviations

around it always sum to 0.

X

i

X 0

i

115

Now for the second question. Why is it that

when we estimate the mean, we divide by n,

but when we estimate the variance, we divide

by n -1?

The answer is in the concept of degrees of

freedom.

When we estimate the mean, each value of x

is free to be whatever it is. Thus, there are

no constraints on any value of X so there are

n degrees of freedom because there are n

observations in the sample.

116

But when we estimate the variance, we use

the mean estimate in the formula. Once we

know the mean, which we must to compute

the variance, we lose one degree of freedom.

Suppose we have 5 observations and their

mean = 6. If the values 4, 5, 6, 7 are 4 of

these 5 observations, the 5th observation is

not free to be anything but 8.

So when we use the estimated mean in a

formula we always lose a degree of freedom.

117

In the formula for the variance, only n -1 of

the (Xi – X )2 points is free to vary. The nth

one is not free to vary. That’s why we divide

by n – 1.

One last point –

The sample mean and the sample variance

for normal distributions are independent of

one another.

118

Now let’s take a random sample of size 18 of

the height of Korean male students at KAIST.

Let’s say the height measurements are:

165,166,168,168,172,172,172,175,175,175,

175,178,178,178,182,182,184,185, all in cm.

Now the mean of these is 175 cm. The

standard deviation is 6 cm. And the

distribution is symmetric, as shown next.

119

Height of sample of 1 8 KAIS T male students

5

4

3

2

1

0

160

165

170

175

180

185

190

Height ( cm)

120

The distribution would be much closer

to normal if the sample were larger, but

with 18 observations, it still is

symmetric.

The median of the distribution is 175,

the same as the mean. The median is a

measure of central tendency such that

half of the observations fall below and

half above.

The mode of this distribution is also

175.

121

For normal distributions, the mean, median, and

mode are all equal. In fact for all unimodal

symmetric distributions, the mean, median, and

mode are all equal.

The mth percentile is the point below which is m% of

the observations. The 10th percentile is the point

below which are 10% of the observations. The 60th

percentile is the point below which are 60% of the

observations.

The 1st quartile is the point below which are 25% of

the observations. The 3rd quartile is the point below

which are 75% of the observations.

The median is the 50th percentile and the 2nd quartile.

122

This is our first empirical distribution.

We know its mean, its standard

deviation, and its general shape. The

estimates of the mean and standard

deviation are called statistics and are

shown in roman type.

Now assume that the sample that we

used was indeed a random sample of

male students at KAIST. Now we can

ask how good is our estimate of the

true mean of all KAIST male students.

123

In order to answer this question, assume that

you did this study -- selecting 18 male

students at KAIST and measuring their height

-- infinitely often. After each study, you

record the sample mean and variance.

Now you have infinitely many sample means

from samples of n = 18, and they must have

a distribution, with a mean and variance.

Note that now we are getting the distribution

of a statistic, not a fundamental measurement.

Distributions of statistics are called sampling

distributions.

124

So far, we have had theoretical

population distributions of the random

variable X and empirical sample

distributions of the random variable X.

Now we move into sampling

distributions, where the random variable

is not X but a function of X called a

statistic.

125

The first sampling distribution we will

consider is that of the sample mean so

we can see how good our estimate of

the population mean is.

Because we don’t really do the

experiment infinitely often, we just

imagine that it is possible to do so, we

need to know the distribution of the

sample mean.

126

This is where an amazing theorem comes to our rescue – the

Central Limit Theorem.

Let X be the mean and s2 the variance of a random sample of

size n from f(x). Now define

y

X

n

Then y is distributed normally with mean = 0 and variance =1

as n increases without bound.

Note that y here is just the standardized version of the

statistic X .

127

This theorem holds for means of

samples of any size n where f(x) is

normal.

But the really amazing thing is that it

also holds for means of any

distributional form of f(x) for large n. Of

course, the more the distribution differs

from normality, the larger n must be.

128

Now we’re back to our original question: How good

is our sample estimate of the mean of the population?

We know that

is distributed normally with mean μ

thanks to the CLT.

The standard deviation of

is

X

X

n

The standard deviation of is often called the

standard error because Xis an estimate of μ and any

variation of around μ isX error of estimate. By

contrast, theX standard deviation of X is just the

natural variation of X and is not error.

129

So now we can define a confidence interval

for our estimate of the mean.

X z

n

where zα is the standard normal deviate

which leaves .5α in each tail of the normal

distribution.

If zα = 1.96, then the confidence interval will

contain the parameter μ 95% of the time.

Hence, this is called a 95% confidence

interval and its two end points are called

confidence limits.

130

If σ is small, the interval will be very

tight, so the estimate is a precise one.

On the other hand, if σ is large, the

interval will be wide, so the estimate is

not so precise.

Now it is important to get the

interpretation of a confidence interval

clear. It does NOT mean that the

population mean μ has a 95%

probability of falling within the interval.

131

That would be tantamount to saying

that μ is a random variable that has a

probability function associated with it.

But μ is a parameter, not a random

variable, so its value is fixed. It is

unknown but fixed.

132

So the proper interpretation for a 95%

confidence interval is this. Imagine that you

have taken zillions (zillions means infinitely

often) of random samples of n =18 KAIST

male students and obtained the mean and

standard deviation of their height for each

sample.

Now imagine that you can form the 95%

confidence interval for each sample estimate

as we have done above. Then 95% of these

zillions of confidence intervals will contain the

parameter μ.

133

It may seem counter-intuitive to say that we

have 95% confidence that our 95%

confidence interval contains μ, but that there

is not 95% probability that μ falls in the

interval.

But if you understand the proper

interpretation, you can see the difference.

The idea is that 95% of the intervals formed

in this way will capture μ. This is why they

are called confidence intervals, not

probability intervals.

134

Now we can also form 99% confidence

intervals simply by changing the 1.96 in

the formula to 2.58. Of course, this will

widen the interval, but you will have

greater confidence.

90% confidence intervals can be

formed by using 1.65 in the formula.

This will narrow the interval, but you will

have less confidence.

135

But when we try to find a confidence interval,

we run into a problem. How can we find the

confidence interval when we don’t know the

parameter σ?

Of course, we could substitute the estimate s

for σ, but then our confidence statement

would be inexact, and especially so for small

samples.

The way out was shown by W.S. Gossett,

who wrote under the pseudonym “Student”.

His classic paper introducing the t distribution

has made him the founder of the modern

theory of exact statistical inference.

136

Student’s t is

t

X

s

n

t involves only one parameter μ and has

the t distribution with n -1 degrees of

freedom, which involves no unknown

parameters.

137

The t distribution is

f (t )

[( k 1) / 2]!

1

2

( k 1) / 2

k [( k 2) / 2]! [1 (t / k )]

where k is the only parameter and k = the

number of degrees of freedom.

Student’s t distribution is symmetric like the

normal but with higher and longer tails for

small k. The t distribution approaches the

normal as k → ∞, as can be seen in the t

table on the following page.

138

Table of t values for selected df and F(t)

F(t)

.75

.90

.95

.975

.99

.995

.9995

df

17

.689 1.333 1.740 2.110

2.567 2.898 9.965

30

.683 1.310 1.697 2.042

2.457 2.750 3.646

40

.681 1.303 1.684 2.021

2.423 2.704 3.551

60

.679 1.296 1.671 2.000

2.390 2.660 3.460

120

.677 1.289 1.658 1.980

2.358 2.617 3.373

∞

.674 1.282 1.645 1.960

2.326 2.576 3.291

139

Now we can solve the problem of computing

confidence intervals for the mean. This formula is

correct only if s is computed with n -1 in the

denominator.

X t

s

n

t is tabled so that its extreme points (to get 95%, 99%

confidence intervals, etc.) are given by t.975 and t.995,

respectively. There is also a tdist function in Excel

which gives the tail probability for any value.

140

In our sample of 18 KAIST males, the

estimated mean =175 cm and the

estimated standard deviation = 6 cm.

So our 95% confidence interval is

175 2.110 (6 / 18) or

(172 ≤ μ ≤ 178)

where 2.110 is the tabled value of t.975

for 17 df. This interval isn’t very tight

but then we had only 18 observations.

141

Technically, we always have to use the t

distribution for confidence intervals for the

mean, even for large samples, because the

value σ is always unknown.

But it turns out that when the sample size is

over 30, the t distribution and the normal

distribution give the same values within at

least two decimal points, that is, z.975 ≈ t.975

because the t distribution approaches the

normal distribution as df →∞.

142

What about the distribution of s2

the estimate of σ2?

The statistic s2 has a chi-square

distribution with n-1 df. Chi-square is

a new distribution for us, but it is the

distribution of the quantity

i 1

n

2

x

2

i

143

or if we convert to a standard normal

deviate, where

xi

y

then

n

2

i 1

i

y

has a chi-square distribution with n df.

So the sample variance has a chisquare distribution.

144

What about a confidence interval for s2? In our KAIST

sample, n = 18, s = 6, and s2 = 36. The formula for

the confidence interval is

ns 2

22

2

ns 2

12

(18)(36)

(18)(36)

2

30.2

7.56

21.5 2 85.7

This is a 95% confidence interval for σ2 and it is very

wide because we had only 18 observations. The two

χ2 values are those for .975 and .025 with n-1 =17 df.

Confidence intervals for variances are rarely of

interest.

145

Much more common is the problem of

comparing two variances where the two

random variables are of different orders of

magnitude.

For example, which is more variable, the

weight of elephants or the weight of mice?

Now we know that elephants have a very

large mean weight and mice have a very

small mean weight. But is their variability

around their mean very different?

146

The only way we can answer this is to

take their variability relative to their

average weight. To do so, we use the

standard deviation as the measure of

variability.

The quantity

s

X

is a measure of relative variability called

the coefficient of variation.

147

Now if you had a random sample of

elephant weights and a random sample

of mouse weights, you could compare

the coefficient of variation of elephant

weight with the coefficient of variation

of mouse weight and answer the

question.

148

What are the properties of an estimator

that make it good?

1. Unbiased

2. Consistent

3. Best unbiased

149

Let’s look at each of these in turn.

1. An unbiased estimator ˆ is one where

E( ˆ ) = θ

The sample mean is an unbiased estimator of μ

because

n

xi

E ( X ) E i 1

n

n

1

E ( xi )

n

i 1

and since E(X)≡μ and there are n E(X) in this sum,

we have

1

n

n

150

Is s2 an unbiased estimator of σ2?

2

1 n

E

xi X

n 1 i 1

2

1 n

E ( xi ) ( X )

n 1 i 1

n

1 n

2

2

E ( xi ) n( X ) 2 ( xi )( X )

n 1 i 1

i 1

1 n

2

2

2

E ( x i ) n( X ) 2 n( X )

n 1 i 1

1 n

2

2

E ( xi ) nE ( X )

n 1 i 1

1 n

2

2

E ( x i ) n X

n 1 i 1

2

1

2

n n

n

n 1

1

2

2

n

n 1

1

2

(n 1)

n 1

2

151

2. A consistent estimator is one for

which the estimator gets closer and

closer to the parameter value as n

increases without limit.

3. A best unbiased estimator, also

called a minimum variance unbiased

estimator, is one which is first of all

unbiased and has the minimum variance

among all unbiased estimators.

152

How can we get estimates of

parameters?

One way is the method of moments,

which comes from the moment

generating function.

Another very important way is the

method of maximum likelihood.

153

A maximum likelihood estimator (MLE)

of the parameter θ in the density

function f(X; θ) is an estimator that

maximizes the likelihood function

L(x1, x2, …,xn; Θ), where the xi are the

n sample values of X and θ is the

parameter to be estimated.

If the xi are treated as fixed, the

likelihood function becomes a function

of only θ.

154

In the discrete case, the likelihood function is

L({xi}; Θ) = p(x1;Θ)p(x2;Θ)…p(xn;Θ)

where p(x;Θ) is the frequency function for a

sample of n observations and the parameter

Θ.

L({xi}; Θ) gives the probability of obtaining

the particular sample values that were

obtained with the parameter Θ. The value of

Θ which maximizes this likelihood function is

called the maximum likelihood estimate (MLE)

of Θ.

155

In the continuous case, the likelihood

function

L({xi}; Θ) = f(x1;Θ)f(x2;Θ)…f(xn;Θ)

gives the probability density at the

sample point (x1, x2, …, xn) where the

sample space is thought of as being

n -dimensional.

Again, the value of Θ which maximizes

this likelihood function is called the

maximum likelihood estimate (MLE) of Θ.

156

Let’s look at an application of mean and

standard deviation estimates in manufacturing.

The approach is called Statistical Process

Control (SPC) and it was developed in the

1920’s by Walter Shewhart.

It became very popular after another

statistician, W. Edwards Deming, showed the

Japanese how to use it after WWII. Now it is

used everywhere in the developed world.

157

At that time (1950’s), everything that came to

America from Japan was cheap, but junk.

It sold for a while because it was so cheap,

but eventually, people caught on that it was

just junk so they stopped buying.

It was at this point that Deming went to

Japan.

158

The general practice in manufacturing during

Shewhart’s time was to run an assembly line

all day. Then at the end of the day, an

inspector inspected all the parts produced by

the process that day.

If a part was good, it was passed on to the

next step. If it was bad, it was either

discarded or reworked, at significant cost to

the business. Sometimes inspection did not

occur until the product was finished. Then if

a product did not meet specifications, the

entire product was discarded. Imagine the

cost in this case.

159

The idea of SPC is to get rid of all that waste

in materials and manpower by eliminating bad

parts as soon as the process starts to

produce them.

The problem was to find the point where the

parts started going bad, so you could stop

the process and fix the problem. Shewhart

was the one who solved this problem by SPC.

160

The idea is to examine periodically a few

(usually 3 or 5) parts produced by an

assembly line and determine if the

process is still running properly.

In any process, there is variation. If the

process is very good, the variation is

small. The natural variation of the

process is called system variation or

common variation.

161

After some preliminary running of the process

to determine its location and variation, a chart

is made with control limits on it.

M ean Chart

55

54

Mean

53

52

51

50

49

2

4

6

8

10

12

Time o f day

162

The control chart was developed for a process that

would select 5 parts every two hours.

The green line is the expected mean line that was

found in preliminary work.

The upper red line is called the upper control limit

(UCL) and the lower red line is called the lower

control limit (LCL). The control limits reflect the

system variation around the overall mean line. They

are usually 95% confidence limits.

Then the process is run.

163

M ean Chart

59

58

57

56

Mean

55

54

53

52

51

50

49

2

4

6

8

10

12

Time o f day

164

Each point on the SPC chart is the

mean of the measurement X on 5 parts.

As you can see, the points are staying

within the control limits (red) and

generally staying slightly above or below

the overall mean line (green) from 2 pm

to 10 pm.

At midnight, the point jumps out of

control to a value of 58. Variation like

this is called special cause variation.

165

This alerts the operator to a problem

with his process. His job is to stop the

process and find and fix the problem.

Now he knows the problem happened

between 10pm and 12 midnight

because everything was OK at 10 pm.

So he holds back the parts produced

between 10 and 12 for inspection to

make sure no bad part goes to the next

step.

166

Once he fixes the problem, the process

starts up again and the chart continues.

Now SPC has cut all the losses that

would have occurred between midnight

and 8 am, when the parts go to the next

step.

There is also a range chart or a

standard deviation chart to accompany

the mean chart, but that is another story.

167

When Deming told the story of his

experiences in Japan, he said,

“I told them that they could go from

being the junk manufacturer of the

world to producing the best quality

products in the world in five years if

they used the SPC system. But I made

a mistake. They did it in two years.”

168

This is an example of how useful

statistics can be in a manufacturing

setting.

Actually there are a number of

variations of control charts, and an

entire field of technology has developed

surrounding this idea.

169

Let’s look at linear functions of random

variables.

We know that E(X) = μ. But suppose

we are interested in a function of X, like,

say, aX, where a is a constant. Now

what is E(aX)?

Because E is a linear operator,

E(aX) = aE(X) = aμ.

170

This means that when we estimate the

mean of aX, we get aX .

How about the E(X + Y - Z) where X, Y, Z

are all random variables?

Again, because E is a linear operator,

E(X + Y - Z) = E(X) + E(Y) - E(Z)

So we can estimate the mean of the sum or

difference of random variables by the sum

or difference of their means.

171

What about the variance of functions of random

variables?

For aX, how is the variance affected? Let’s go to

the definition of variance.

X2 E ( X ) 2

Then

2

aX

E (aX a ) a E ( X )

2

2

2

172

So if we want to estimate the variance of aX,

we can simply multiply the estimated

variance of X by a2 to get a2s2 .

Now what about the variance of X + Y or of

X - Y, where X and Y are independent?

The variance of the sum or difference of

independent random variables is the sum of

the separate variances.

s

2

X Y

s

2

X Y

s s

2

X

2

Y

173

In general, the variance of X+Y where X

and Y are random variables, whether

independent or not, is

s

2

X Y

s s 2sX ,Y

2

X

2

Y

If X and Y are independent, the covariance

term sX,Y drops out.

174

Now what about the variance of the sum

or difference of two independent means?

The variances of X and Y are

X2

X2

2

Y

nX

Y2

nY

175

So the estimated variance of the

difference between the means of two

independent random variables is

s X2 Y

s X2 sY2

nX nY

The square root of this is the standard

deviation or standard error of the

difference between two independent

means.

176

So far we have been talking about

distributions of a single random variable.

But we now turn to distributions of

multiple random variables, which may or

may not be related to one another.

Let’s begin with the bivariate case.

Now we have two random variables,

X and Y, which have a joint normal

density.

177

For 1-dimensional random variables,

the distribution can be drawn on a

piece of paper, where the x-axis is the

variate and the y-axis is the ordinate of

the distribution.

For two random variables, one variate X

is on the x-axis, the other variate Y is

on the y-axis, and the ordinate is the

third dimension.

178

So now we imagine a bell sitting on a

table. One edge of the table is the

x-axis and the other edge is the y-axis.

The distribution is the bell itself, which

represents the ordinates for a set of (x,y)

points on the table.

179

This density is shown below, where the

only new parameter is ρ.

F ( x, y )

1

2 x y 1

2

e

2

2

x x y y y y

1 x x

2

2

x y y

2 (1 ) x

180

If both X and Y are in standard normal form,

their bivariate density simplifies as

F (z x , z y )

1

2 1

2

e

1

2

2

z

2

(

z

z

)

z

x

x y

y

2 (1 2 )

181

What is ρ?

ρ is measure of the relationship between

the two random variables X and Y. It is

called the correlation coefficient, where

-1 ≤ ρ ≤ +1

When ρ = 0, there is no relationship

between X and Y and thus

f(x,y) =f(x) f(y)

182

ρ is defined through the covariance of

X and Y. The covariance is a measure

of how the two variables X and Y vary

together. It is defined as

Cov(X,Y) ≡ σx,y ≡ E[(x-μx)(y-μy)]

and is estimated by

( xi X )( yi Y )

n

i 1

n

183

The correlation coefficient ρ is estimated

by r

n

r

(x

i 1

i

X )( yi Y )

ns x s y

and is thus a standardized version of

the covariance.

184

The correlation ρ is a measure of

the linear relationship of two

variables. There is no cause-effect

implication. The two variables

simply vary together.

Consider the following example

of the scores of 30 students on

a language test X and a science

test Y.

185

35

34

37

36

32

32

36

35

34

29

35

37

37

34

34

33

40

39

37

36

28

30

32

41

38

36

37

33

32

33

30

34

30

37

40

42

40

36

31

31

39

33

30

33

43

31

38

34

36

34

36

29

29

40

42

29

40

31

38

32

186

Scattergram of language and science scores

45

science score

40

35

30

25

25

30

35

language score

40

45

187

As the scattergram shows, there is a

tendency for the language and science

scores to vary together. The degree of

linear relationship is not perfect and

r = .66 for this situation.

Note that the relationship is a linear one

and the best fitting line can be drawn

through the points. If the relationship

had been perfect, r = 1 and all of the

points would fall on the line.

188

If the relationship had been negative,

then the line would have a negative

slope and r would be negative.

In general, r = 0 if the points show no

linear relationship at all. If the

relationship is perfect, then r = 1 or -1,

depending on whether the best-fitting

line through the points would have a

positive or negative slope.

189

For weak relationships, r is usually in

the .3 to .4 range. For moderate

relationships, r is usually in the .5 to .7

range. And for strong relationships, r is

usually about .8 to .95.

Of course, if the direction of the

relationship were negative, each r

above would be negative.

190

As another example, consider the following

data on the heights and weights of 12 college

students.

ht

63

72

70

68

66

69

74

70

63

72

wt

124 184 161 164 140 154 210 164 126 172 133 150

Are these two variables correlated?

look at the scattergram.

65

71

Let’s first

191

R elatio nship o f height and weight

220

200

W eight

180

160

140

120

100

60

65

70

75

Height

192

It certainly does appear that height and

weight are correlated. In fact, the

correlation coefficient r = .91.

But what if you found out that four of

the points were for college women and

the other eight for college men. Now

what would you conclude? Well, let’s

look at the scattergrams for men and

women separately.

193

R elatio nship o f height and weight fo r co llege men

R elatio nship o f height and weight fo r co llege wo men

220

220

200

200

180

W eight

W eight

180

160

140

160

140

120

120

100

60

65

70

Height

75

100

60

65

70

75

Height

194

Now it doesn’t seem that height and

weight are only moderately correlated.

The important thing to note here is that

degree of correlation can be strongly

enhanced by including extreme values.

In this case, the women were extremely