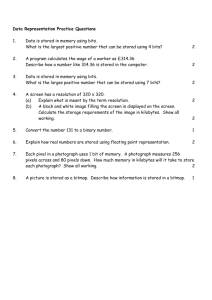

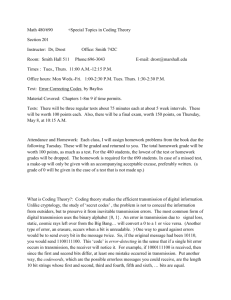

EE-317: Information Theory - Texas A&M University


EE-317: Information Theory

Fall 2008

1

Organizational Details

Class Meeting:

7:00-9:45pm, Tuesday, Room SCIT213

Instructor: Dr. Igor Aizenberg

Office: Science and Technology Building, 115

Phone: (903) 334-6654

e-mail: igor.aizenberg@tamut.edu

Office hours:

Wednesday, Thursday, Friday 12:30 – 2:00 pm

Monday: by appointment

Class Web Page: http://www.eagle.tamut.edu/faculty/igor/EE-317.htm

2

Dr. Igor Aizenberg: self-introduction

• MS in Mathematics from Uzhgorod National University (Ukraine),

1982

• PhD in Computer Science from the Russian Academy of Sciences,

Moscow (Russia), 1986

• Areas of research: Artificial Neural Networks, Image Processing and

Pattern Recognition

• About 100 journal and conference proceedings publications and one monograph

• Job experience: Russian Academy of Sciences (1982-1990);

Uzhgorod National University (Ukraine, 1990-1996 and 1998-1999);

Catholic University of Leuven (Belgium, 1996-1998); Company

“Neural Networks Technologies” (Israel, 1999-2002); University of

Dortmund (Germany, 2003-2005); National Center of Advanced

Industrial Science and Technologies (Japan, 2004); Tampere

University of Technology (Finland, 2005-2006); Texas A&M

University-Texarkana, since March 2006

3

Text Books

• [1] "An Introduction to Information

Theory" by Fazlollah M. Reza, Dover

Publications, 1994, ISBN 0-486-68210-2

(main book).

• [2] "An Introduction to Information

Theory" by John R. Pierce, Dover

Publications, 1980, ISBN 0-486-24061-4

(supportive book).

4

Control

Exams (open book, open notes):

Midterm 1: October 7, 2007

Midterm 2: November 4, 2007

Final Exam: December 9, 2007

Homework

5

Grading

Grading Method

Homework and preparation: 10%

Midterm Exam 1: 25%

Midterm Exam 2: 30%

Final Exam: 35%

Grading Scale:

90%+ A

80%+ B

70%+ C

60%+ D

less than 60% F

6

What will we study?

• Basic concepts of information theory

• Elements of set theory and probability

theory

• Measure of information and uncertainty.

Entropy

• Basic concepts of the organization of

communication channels

• Basic principles of encoding

• Error-detecting and error-correcting codes

7

Information

• What does the word “information” mean?

• There is no exact definition; however:

• Information carries new specific knowledge,

which is definitely new for its recipient;

• Information is always carried by some specific

carrier in different forms (letters, digits, different

specific symbols, sequences of digits, letters, and

symbols, etc.);

• Information is meaningful only if the recipient is

able to interpret it.

8

Information

• According to the Oxford English

Dictionary, the earliest historical meaning

of the word information in English was the

act of informing, or giving form or shape to

the mind.

• The English word was apparently derived by adding the common "noun of action" ending "-ation" to the earlier verb "to inform".

9

Information

• Information, once materialized, is a message.

• Information is always about something (the size of a parameter, the occurrence of an event, etc.).

• Viewed in this manner, information does not have

to be accurate; it may be a truth or a lie.

• Even a disruptive noise used to inhibit the flow of

communication and create misunderstanding

would in this view be a form of information.

• However, generally speaking, if the amount of

information in the received message increases,

the message is more accurate.

10

Information Theory

• How can we measure the amount of information?

• How can we ensure the correctness of information?

• What should we do if information gets corrupted by errors?

• How much memory is required to store information?

11

Information Theory

• Basic answers to these questions that formed

a solid background of the modern information

theory were given by the great American

mathematician, electrical engineer, and

computer scientist Claude E. Shannon in his

paper “A Mathematical Theory of

Communication” published in “The Bell

System Technical Journal” in July and October 1948.

12

Claude Elwood Shannon (1916-2001)

The father of information theory

The father of practical digital circuit design theory

Bell Laboratories (1941-1972), MIT (1956-2001)

13

Information Content

• What is the information content of any

message?

• Shannon’s answer is: The information content

of a message consists simply of the number of

1s and 0s it takes to transmit it.

14

Information Content

• Hence, the elementary unit of information is a

binary unit: a bit, which can be either 1 or 0;

“true” or “false”; “yes” or “no”; “black” or

“white”, etc.

• One of the basic postulates of information

theory is that information can be treated like a

measurable physical quantity, such as density

or mass.

15

Information Content

• Suppose you flip a coin one million times and

write down the sequence of results. If you want

to communicate this sequence to another

person, how many bits will it take?

• If it's a fair coin, the two possible outcomes,

heads and tails, occur with equal probability.

Therefore each flip requires 1 bit of information

to transmit. To send the entire sequence will

require one million bits.

16

Information Content

• Suppose the coin is biased so that heads occur only 1/4 of the time,

and tails occur 3/4. Then the entire sequence can be sent in

811,300 bits, on average. This would seem to imply that each flip of

the coin requires just 0.8113 bits to transmit.

• How can you transmit a coin flip in less than one bit, when the only

language available is that of zeros and ones?

• Obviously, you can't. But if the goal is to transmit an entire

sequence of flips, and the distribution is biased in some way, then

you can use your knowledge of the distribution to select a more

efficient code.

• Another way to look at it is: a sequence of biased coin flips contains

less "information" than a sequence of unbiased flips, so it should

take fewer bits to transmit.
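The figures quoted above can be checked with a short sketch. It assumes the binary entropy formula H(p) = –p log2(p) – (1–p) log2(1–p) that appears later in these slides; the function name `binary_entropy` is illustrative only.

```python
import math

def binary_entropy(p: float) -> float:
    """Entropy in bits of a coin that lands heads with probability p."""
    if p in (0.0, 1.0):
        return 0.0  # a certain outcome carries no information
    return -p * math.log2(p) - (1 - p) * math.log2(1 - p)

flips = 1_000_000
for p_heads in (0.5, 0.25):
    bits_per_flip = binary_entropy(p_heads)
    print(f"P(heads)={p_heads}: {bits_per_flip:.4f} bits/flip, "
          f"about {bits_per_flip * flips:,.0f} bits for {flips:,} flips")
```

For the fair coin this gives 1 bit per flip; for the biased coin it gives about 0.8113 bits per flip, which is the average cost an efficient code can approach over a long sequence.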

17

Information Content

• Information Theory regards information as only

those symbols that are uncertain to the receiver.

• For years, people have sent telegraph messages,

leaving out non-essential words such as "a" and

"the."

• In the same vein, predictable symbols can be left

out, as in the sentence “only infrmatn esentil to

understandn mst b tranmitd”. Shannon made

clear that uncertainty is the very commodity of

communication.

18

Information Content

• Suppose we transmit a long sequence of one

million bits corresponding to the first example.

What should we do if some errors occur

during this transmission?

• If the sequence to be transmitted or stored is even longer than 1 million bits, say 1 billion bits, what should we do?

19

Two main questions of

Information Theory

• What should we do if information gets corrupted by errors?

• How much memory is required to store data?

• Both questions were asked and to a large

degree answered by Claude Shannon in his

1948 seminal article:

use error correction and data compression

20

Shannon’s basic principles of

Information Theory

• Shannon’s theory told engineers how much

information could be transmitted over the

channels of an ideal system.

• He also spelled out mathematically the principles

of data compression, which recognize what the

end of this sentence demonstrates, that “only

infrmatn esentil to understadn mst b tranmitd”.

• He also showed how we could transmit

information over noisy channels at error rates we

could control.

21

Why is Information Theory Important?

• Thanks in large measure to Shannon's insights,

digital systems have come to dominate the world

of communications and information processing.

– Modems

– Satellite communications

– Data storage

– Deep space communications

– Wireless technology

22

Channels

• A channel is used to get information

across:

Source → binary channel (0,1,1,0,0,1,1) → Receiver

Many systems act like channels.

Some obvious ones: phone lines, Ethernet cables.

Less obvious ones: the air when speaking, TV screen

when watching, paper when writing an article, etc.

All these are physical devices and hence prone to errors.

23

Noisy Channels

A noiseless binary channel transmits bits without error: 0 → 0 and 1 → 1.

A noisy, symmetric binary channel applies a bit-flip 0 ↔ 1 with probability p: 0 → 0 and 1 → 1 with probability 1–p; 0 → 1 and 1 → 0 with probability p.

What should we do if we have a noisy channel and want to send information across reliably?
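A minimal simulation of the noisy symmetric binary channel, offered as a sketch: each bit is flipped independently with probability p. The function name, the seed, and the sample size are assumptions made for illustration.

```python
import random

def binary_symmetric_channel(bits, p, rng):
    """Flip each bit independently with probability p."""
    return [b ^ 1 if rng.random() < p else b for b in bits]

rng = random.Random(0)  # fixed seed so the run is repeatable
sent = [rng.randint(0, 1) for _ in range(100_000)]
received = binary_symmetric_channel(sent, p=0.1, rng=rng)

# Fraction of positions where the received bit differs from the sent bit.
error_rate = sum(s != r for s, r in zip(sent, received)) / len(sent)
print(f"observed bit-error rate: {error_rate:.4f}")
```

With enough bits, the observed error rate should settle close to the chosen p.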

24

Error Correction pre-Shannon

• Primitive error correction (assume p<1/2):

Instead of sending “0” and “1”, send “0…0” and “1…1”.

• The receiver takes the majority of the bit values

as the ‘intended’ value of the sender.

• Example: If we repeat the bit value three times, decoding fails only when at least two of the three bits are flipped, so the error goes down from $p$ to $3p^2(1-p) + p^3 = p^2(3-2p)$. Hence for $p = 0.1$ we reduce the error to 0.028.

• However, now we have to send 3 bits to get one

bit of information across and this will get worse if

we want to reduce the error rate further…
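The trade-off between rate and error can be tabulated with a short sketch. It assumes majority-vote decoding over an odd number r of copies sent through a channel that flips each bit independently with probability p; the function name `repetition_error` is illustrative.

```python
from math import comb

def repetition_error(p: float, r: int) -> float:
    """Probability that majority voting over r copies (r odd) decodes wrongly."""
    if r % 2 == 0:
        raise ValueError("r must be odd so the vote cannot tie")
    # The decoder fails when more than half of the r copies are flipped.
    return sum(comb(r, k) * p**k * (1 - p)**(r - k)
               for k in range(r // 2 + 1, r + 1))

p = 0.1
for r in (1, 3, 5, 7):
    print(f"r={r}: rate 1/{r}, error {repetition_error(p, r):.6f}")
```

For r = 3 the sum reduces to p²(3–2p), which is 0.028 at p = 0.1. Larger r lowers the error further, but only by lowering the rate 1/r.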

25

Channel Rate

• When correcting errors, we have to be mindful of the rate: the number of information bits conveyed per transmitted bit (in the previous example we had rate 1/3).

• For the primitive encoding in the previous example, with $0 \to 0^r$ and $1 \to 1^r$ (each bit repeated $r$ times) and rate $1/r$, the error goes down approximately as $p \to r\,p^{r-1}$.

• If we want to send data with arbitrarily small errors, then this requires arbitrarily low rates $1/r$, which is costly.

26

Error Correction by Shannon

• Shannon’s basic observations:

• Correcting single bits is very wasteful and inefficient;

• Instead we should correct blocks of bits.

• We will see later that by doing so we can get arbitrarily small errors for the constant channel rate $1 - H(p)$, where $H(p)$ is the Shannon entropy, defined by

$H(p) = -p \log_2 p - (1-p) \log_2 (1-p)$
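As a rough numerical illustration, the rate 1–H(p) can be evaluated for a few bit-flip probabilities and compared with the rate 1/3 of the three-fold repetition code. The function names and the chosen values of p are assumptions made for this sketch.

```python
import math

def binary_entropy(p: float) -> float:
    """Shannon entropy H(p) in bits for a binary event of probability p."""
    if p in (0.0, 1.0):
        return 0.0
    return -p * math.log2(p) - (1 - p) * math.log2(1 - p)

def bsc_rate_limit(p: float) -> float:
    """Rate 1 - H(p) for a symmetric binary channel with flip probability p."""
    return 1.0 - binary_entropy(p)

for p in (0.0, 0.01, 0.1, 0.5):
    print(f"p={p}: 1 - H(p) = {bsc_rate_limit(p):.4f}")
```

At p = 0.1 the value is about 0.531, well above the rate 1/3 that the repetition code spends to reach an error of 0.028. At p = 0.5 the value is 0: the output is independent of the input.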

27

A model for a Communication System

• Communication systems are statistical in

nature.

• That is, the performance of the system can never

be described in a deterministic sense; it is always

given in statistical terms.

• A source is a device that selects and transmits

sequences of symbols from a given alphabet.

• Each selection is made at random, although this

selection may be based on some statistical rule.

28

A model for a Communication System

• The channel transmits the incoming symbols

to the receiver. The performance of the

channel is also based on laws of chance.

• If the source transmits a symbol A, with a

probability of P{A} and the channel lets

through the letter A with a probability

denoted by P{A|A}, then the probability of

transmitting A and receiving A is P{A}∙P{A|A}
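A worked instance of this product rule, with assumed values chosen only for illustration: the source emits A with probability 0.6 and the channel passes A through unchanged with probability 0.9.

```latex
P\{A \text{ sent and } A \text{ received}\}
  = P\{A\} \cdot P\{A \mid A\}
  = 0.6 \times 0.9
  = 0.54
```

Under the same assumptions, the probability that A is sent but something else is received is $0.6 \times (1 - 0.9) = 0.06$.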

29

A model for a Communication System

• The channel is generally lossy: a part of the

transmitted content does not reach its

destination or it reaches the destination in a

distorted form.

30

A model for a Communication System

• A very important task is the minimization of

the loss and the optimum recovery of the

original content when it is corrupted by the

effect of noise.

• A method that is used to improve the

efficiency of the channel is called encoding.

• An encoded message is less sensitive to noise.

31

A model for a Communication System

• Decoding is employed to transform the

encoded messages into the original form,

which is acceptable to the receiver.

Encoding: $F: I \to F(I)$

Decoding: $F^{-1}: F(I) \to I$
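The pair F and F⁻¹ can be sketched with a toy code. The four-symbol alphabet and the fixed-length codebook below are hypothetical and add no redundancy, so they protect nothing against noise; the sketch only shows that decoding inverts encoding.

```python
# Hypothetical 4-symbol alphabet with a fixed-length binary code (an assumption).
CODEBOOK = {"A": "00", "B": "01", "C": "10", "D": "11"}
INVERSE = {code: symbol for symbol, code in CODEBOOK.items()}

def encode(message: str) -> str:
    """F: map each source symbol to its codeword."""
    return "".join(CODEBOOK[symbol] for symbol in message)

def decode(bits: str) -> str:
    """F^-1: split the bit string into 2-bit codewords and map each back."""
    return "".join(INVERSE[bits[i:i + 2]] for i in range(0, len(bits), 2))

message = "BADCAB"
encoded = encode(message)
assert decode(encoded) == message  # F^-1(F(I)) = I
print(message, "->", encoded, "->", decode(encoded))
```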

32

A Quantitative Measure of Information

• Suppose we have to select some equipment

from a catalog which indicates n distinct

models: $x_1, x_2, \ldots, x_n$

• The desired amount of information $I(x_k)$

associated with the selection of a particular

model $x_k$ must be a function of the

probability of choosing $x_k$:

$I(x_k) = f(P\{x_k\})$

33

A Quantitative Measure of Information

• If, for simplicity, we assume that each one of

these models is selected with an equal

probability, then the desired amount of

information is only a function of n:

$I_1(x_k) = f(1/n)$

34

A Quantitative Measure of Information

• If each piece of equipment can be ordered in

one of m different colors and the selection of

colors is also equiprobable, then the amount

of information associated with the selection of

a color $c_j$ is:

$I_2(c_j) = f(P\{c_j\}) = f(1/m)$

35

A Quantitative Measure of Information

• The selection may be done in two ways:

• Select the equipment and then the color

independently of each other

$I(x_k \,\&\, c_j) = I_1(x_k) + I_2(c_j) = f(1/n) + f(1/m)$

• Select the equipment and its color at the same

time as one selection from mn possible

choices:

$I(x_k \,\&\, c_j) = f(1/(mn))$

36

A Quantitative Measure of Information

• Since these amounts of information are identical,

we obtain:

$f(1/n) + f(1/m) = f(1/(mn))$

• Among several solutions of this functional

equation, the most important for us is:

$f(x) = -\log x$

• Thus, when a statistical experiment has $n$ equiprobable outcomes, the average amount of information associated with an outcome is $\log n$.

37

A Quantitative Measure of Information

• The logarithmic information measure has the

desirable property of additivity for

independent statistical experiments.

• The simplest case to consider is a selection between two equiprobable events. The amount of information associated with the selection of one out of two equiprobable events is $-\log_2(1/2) = \log_2 2 = 1$ and provides a unit of information known as a bit.
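The additivity property and the definition of the bit can be checked numerically. This sketch assumes the measure f(P) = –log2(P) and a hypothetical catalog of 8 models in 4 colors; the function name `information` is illustrative.

```python
import math

def information(probability: float) -> float:
    """Amount of information, in bits, of an outcome with the given probability."""
    return -math.log2(probability)

n, m = 8, 4  # assumed catalog: 8 equiprobable models, 4 equiprobable colors

separate = information(1 / n) + information(1 / m)  # model, then color
joint = information(1 / (m * n))                     # one choice out of mn

print(f"model: {information(1 / n)} bits, color: {information(1 / m)} bits")
print(f"separate selections: {separate} bits, joint selection: {joint} bits")
print(f"one of two equiprobable events: {information(1 / 2)} bit")
```

Both ways of making the selection carry the same amount of information (3 + 2 = 5 bits here), and a choice between two equiprobable events carries exactly 1 bit.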

38