Decision Analysis


IndE 311

Stochastic Models and Decision Analysis

UW Industrial Engineering

Instructor: Prof. Zelda Zabinsky

Decision Analysis-1

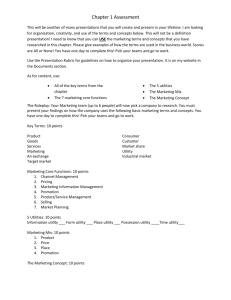

Operations Research

“The Science of Better”

Decision Analysis-2

Operations Research

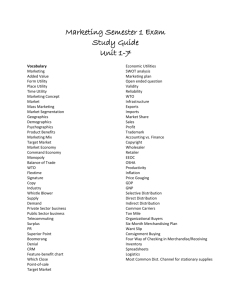

Modeling Toolset

[Figure: operations research topics, with course labels 310, 311, and 312. Topics shown: Queueing Theory, Simulation, Inventory Theory, Forecasting, Markov Chains, PERT/CPM, Decision Analysis, Stochastic Programming, Markov Decision Processes, Dynamic Programming, Network Programming, Linear Programming, Integer Programming, Nonlinear Programming, Game Theory]

Decision Analysis-3

IndE 311

• Decision analysis

– Decision making without experimentation

– Decision making with experimentation

– Decision trees

– Utility theory

• Markov chains

– Modeling

– Chapman-Kolmogorov equations

– Classification of states

– Long-run properties

– First passage times

– Absorbing states

• Queueing theory

– Basic structure and modeling

– Exponential distribution

– Birth-and-death processes

– Models based on birth-and-death

– Models with non-exponential distributions

• Applications of queueing theory

– Waiting cost functions

– Decision models

Decision Analysis-4

Decision Analysis

Chapter 15

Decision Analysis-5

Decision Analysis

• Decision making without experimentation

– Decision making criteria

• Decision making with experimentation

– Expected value of experimentation

– Decision trees

• Utility theory

Decision Analysis-6

Decision Making without Experimentation

Decision Analysis-7

Goferbroke Example

• Goferbroke Company owns a tract of land that may contain oil

• Consulting geologist: “1 chance in 4 of oil”

• Offer for purchase from another company: $90k

• Can also hold the land and drill for oil at a cost of $100k

• If oil is found, expected revenue is $800k; if not, nothing

Payoff table (entries left blank on the slide):

| Alternative | Oil | Dry |
|---|---|---|
| Drill for oil | | |
| Sell the land | | |
| Chance | 1 in 4 | 3 in 4 |

Decision Analysis-8

Notation and Terminology

• Actions: {a1, a2, …}

– The set of actions the decision maker must choose from

– Example:

• States of nature: {θ1, θ2, …}

– Possible outcomes of the uncertain event.

– Example:

Decision Analysis-9

Notation and Terminology

• Payoff/Loss Function: L(ai, θk)

– The payoff/loss incurred by taking action ai when state θk occurs.

– Example:

• Prior distribution:

– Distribution representing the relative likelihood of the possible states of nature.

• Prior probabilities: P(Θ = θk)

– Probabilities (provided by the prior distribution) for the various states of nature.

– Example:

Decision Analysis-10

Decision Making Criteria

Can “optimize” the decision with respect to several criteria

• Maximin payoff

• Minimax regret

• Maximum likelihood

• Bayes’ decision rule (expected value)

Decision Analysis-11

Maximin Payoff Criterion

• For each action, find the minimum payoff over all states of nature

• Then choose the action with the maximum of these minimum payoffs

Payoffs by state of nature (in $1000s):

| Action | Oil | Dry | Min payoff |
|---|---|---|---|
| Drill for oil | 700 | -100 | |
| Sell the land | 90 | 90 | |
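The two steps of the maximin criterion can be sketched in a few lines of Python. This is a minimal illustration, not part of the original slides; the dictionary layout and the name `maximin` are assumptions made for the example, and the payoffs (in $1000s) are the ones in the table above.

```python
# Payoffs in $1000s, copied from the Goferbroke payoff table.
payoffs = {
    "Drill for oil": {"Oil": 700, "Dry": -100},
    "Sell the land": {"Oil": 90, "Dry": 90},
}

def maximin(payoffs):
    """Return (best action, minimum payoff of each action)."""
    # Step 1: worst-case payoff of each action over all states of nature.
    min_payoff = {action: min(row.values()) for action, row in payoffs.items()}
    # Step 2: action whose worst case is largest.
    best = max(min_payoff, key=min_payoff.get)
    return best, min_payoff

best_action, min_payoff = maximin(payoffs)
print(min_payoff)
print(best_action)
```

Under these numbers the worst cases are -100 for drilling and 90 for selling, so the criterion selects selling the land.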

Decision Analysis-12

Minimax Regret Criterion

• For each action, find maximum regret over all states of nature

• Then choose the action with the minimum of these maximum regrets

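Payoffs by state of nature (in $1000s):

| Action | Oil | Dry |
|---|---|---|
| Drill for oil | 700 | -100 |
| Sell the land | 90 | 90 |

Regrets by state of nature (entries left blank on the slide):

| Action | Oil | Dry | Max regret |
|---|---|---|---|
| Drill for oil | | | |
| Sell the land | | | |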
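As a hedged sketch of how the regret table can be filled in: the regret of an action in a state is the best payoff attainable in that state minus the payoff the action actually earns. The helper name `regret_table` and the dictionary layout are illustrative assumptions, not from the slides.

```python
# Payoffs in $1000s, copied from the Goferbroke payoff table.
payoffs = {
    "Drill for oil": {"Oil": 700, "Dry": -100},
    "Sell the land": {"Oil": 90, "Dry": 90},
}

def regret_table(payoffs):
    """Regret = best payoff attainable in a state minus the payoff received."""
    states = list(next(iter(payoffs.values())))
    best_in_state = {s: max(row[s] for row in payoffs.values()) for s in states}
    return {
        action: {s: best_in_state[s] - row[s] for s in states}
        for action, row in payoffs.items()
    }

regrets = regret_table(payoffs)
# Largest regret each action can incur, then the action minimizing it.
max_regret = {action: max(row.values()) for action, row in regrets.items()}
best_action = min(max_regret, key=max_regret.get)
print(regrets)
print(max_regret, best_action)
```

With these payoffs the maximum regrets are 190 for drilling and 610 for selling, so minimax regret selects drilling, the opposite of the maximin choice.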

Decision Analysis-13

Maximum Likelihood Criterion

• Identify the most likely state of nature

• Then choose the action with the maximum payoff under that state of nature

Payoffs by state of nature (in $1000s):

| Action | Oil | Dry |
|---|---|---|
| Drill for oil | 700 | -100 |
| Sell the land | 90 | 90 |
| Prior probability | 0.25 | 0.75 |
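A minimal sketch of the maximum likelihood criterion on the same data; the variable names are illustrative assumptions. Note that the criterion looks only at the single most likely state and ignores every other column of the table.

```python
# Payoffs in $1000s and prior probabilities from the table.
payoffs = {
    "Drill for oil": {"Oil": 700, "Dry": -100},
    "Sell the land": {"Oil": 90, "Dry": 90},
}
prior = {"Oil": 0.25, "Dry": 0.75}

# Step 1: most likely state of nature.
most_likely_state = max(prior, key=prior.get)
# Step 2: action with the largest payoff in that state.
best_action = max(payoffs, key=lambda a: payoffs[a][most_likely_state])
print(most_likely_state, best_action)
```

Here the most likely state is Dry, under which selling the land pays more than drilling.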

Decision Analysis-14

Bayes’ Decision Rule (Expected Value Criterion)

• For each action, find the expectation of the payoff over all states of nature

• Then choose the action with the maximum of these expected payoffs

Payoffs by state of nature (in $1000s):

| Action | Oil | Dry | Expected payoff |
|---|---|---|---|
| Drill for oil | 700 | -100 | |
| Sell the land | 90 | 90 | |
| Prior probability | 0.25 | 0.75 | |
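The expected payoff column can be computed as a probability-weighted sum over states. The following is a small sketch under the table's numbers; the names `expected_payoff` and `best_action` are assumptions for illustration.

```python
# Payoffs in $1000s and prior probabilities from the table.
payoffs = {
    "Drill for oil": {"Oil": 700, "Dry": -100},
    "Sell the land": {"Oil": 90, "Dry": 90},
}
prior = {"Oil": 0.25, "Dry": 0.75}

# Expected payoff of each action: sum over states of P(state) * payoff.
expected_payoff = {
    action: sum(prior[s] * row[s] for s in prior)
    for action, row in payoffs.items()
}
best_action = max(expected_payoff, key=expected_payoff.get)
print(expected_payoff, best_action)
```

Drilling has expected payoff 0.25(700) + 0.75(-100) = 100 and selling has 90, so Bayes' decision rule selects drilling.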

Decision Analysis-15

Sensitivity Analysis with Bayes’ Decision Rule

• What is the minimum probability of oil such that we choose to drill the land under Bayes’ decision rule?

Payoffs by state of nature (in $1000s):

| Action | Oil | Dry | Expected payoff |
|---|---|---|---|
| Drill for oil | 700 | -100 | |
| Sell the land | 90 | 90 | |
| Prior probability | p | 1-p | |
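One way to answer the question is to set the two expected payoffs equal and solve for p: 700p - 100(1 - p) = 90 gives 800p = 190. The sketch below wraps that algebra in a hypothetical helper, `breakeven_probability`, which is not part of the slides.

```python
def breakeven_probability(drill_if_oil, drill_if_dry, sell):
    """Probability of oil at which drilling and selling have equal expected payoff.

    Solves p * drill_if_oil + (1 - p) * drill_if_dry = sell for p.
    """
    return (sell - drill_if_dry) / (drill_if_oil - drill_if_dry)

# Payoffs in $1000s from the table.
p_star = breakeven_probability(700, -100, 90)
print(p_star)
```

For these payoffs the breakeven value is 190/800 = 0.2375, so drilling is preferred whenever the probability of oil is at least that large. The geologist's prior of 0.25 sits only slightly above it.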

Decision Analysis-16

Decision Making with Experimentation

Decision Analysis-17

Goferbroke Example (cont’d)

Payoffs by state of nature (in $1000s):

| Action | Oil | Dry |
|---|---|---|
| Drill for oil | 700 | -100 |
| Sell the land | 90 | 90 |
| Prior probability | 0.25 | 0.75 |

• Option available to conduct a detailed seismic survey to obtain a better estimate of the probability of oil

• Costs $30k

• Possible findings:

– Unfavorable seismic soundings (USS), oil is fairly unlikely

– Favorable seismic soundings (FSS), oil is fairly likely

Decision Analysis-18

Posterior Probabilities

• Do experiments to get better information and improve estimates for the probabilities of states of nature. These improved estimates are called posterior probabilities.

• Experimental Outcomes: {x1, x2, …}

Example:

• Cost of experiment:

Example:

• Posterior Distribution: P(Θ = θk | X = xj)

Decision Analysis-19

Goferbroke Example (cont’d)

• Based on past experience:

If there is oil, then

– the probability that the seismic survey finding is USS is P(USS | oil) = 0.4

– the probability that the seismic survey finding is FSS is P(FSS | oil) = 0.6

If there is no oil, then

– the probability that the seismic survey finding is USS is P(USS | dry) = 0.8

– the probability that the seismic survey finding is FSS is P(FSS | dry) = 0.2

Decision Analysis-20

Bayes’ Theorem

• Calculate posterior probabilities using Bayes’ theorem:

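Given P(X = xj | Θ = θk), find P(Θ = θk | X = xj)

$$
P(\Theta = \theta_k \mid X = x_j) = \frac{P(X = x_j \mid \Theta = \theta_k)\, P(\Theta = \theta_k)}{\sum_i P(X = x_j \mid \Theta = \theta_i)\, P(\Theta = \theta_i)}
$$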

Decision Analysis-21

Goferbroke Example (cont’d)

• We have

P(USS | oil) = 0.4

P(USS | dry) = 0.8

P(FSS | oil) = 0.6

P(FSS | dry) = 0.2

P(oil) = 0.25

P(dry) = 0.75

• P(oil | USS) =

• P(oil | FSS) =

• P(dry | USS) =

• P(dry | FSS) =
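The four posterior probabilities can be obtained by applying Bayes' theorem to the numbers listed above. The following sketch is illustrative; the function name `posterior` and the nested-dictionary layout are assumptions, not from the slides.

```python
# Prior probabilities and survey likelihoods P(finding | state) from the slide.
prior = {"oil": 0.25, "dry": 0.75}
likelihood = {
    "oil": {"USS": 0.4, "FSS": 0.6},
    "dry": {"USS": 0.8, "FSS": 0.2},
}

def posterior(prior, likelihood, finding):
    """Return (posterior over states given the finding, P(finding))."""
    # Numerator of Bayes' theorem for each state.
    joint = {s: likelihood[s][finding] * prior[s] for s in prior}
    # Denominator: total probability of the finding.
    p_finding = sum(joint.values())
    return {s: joint[s] / p_finding for s in prior}, p_finding

post_uss, p_uss = posterior(prior, likelihood, "USS")
post_fss, p_fss = posterior(prior, likelihood, "FSS")
print(p_uss, post_uss)
print(p_fss, post_fss)
```

With these inputs P(USS) = 0.7 and P(FSS) = 0.3, giving P(oil | USS) = 1/7, P(dry | USS) = 6/7, and P(oil | FSS) = P(dry | FSS) = 1/2.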

Decision Analysis-22

Goferbroke Example (cont’d)

Optimal policies

• If finding is USS:

| Action | Oil | Dry | Expected payoff |
|---|---|---|---|
| Drill for oil | 700 | -100 | |
| Sell the land | 90 | 90 | |
| Posterior probability | | | |

• If finding is FSS:

| Action | Oil | Dry | Expected payoff |
|---|---|---|---|
| Drill for oil | 700 | -100 | |
| Sell the land | 90 | 90 | |
| Posterior probability | | | |
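A sketch of how the optimal policy for each finding can be derived: compute the posterior for the finding, then apply Bayes' decision rule with the posterior in place of the prior. The payoffs here follow the slide's tables and do not subtract the $30k survey cost; all names are illustrative assumptions.

```python
# Payoffs in $1000s (survey cost not subtracted, as in the slide's tables).
payoffs = {
    "Drill for oil": {"oil": 700, "dry": -100},
    "Sell the land": {"oil": 90, "dry": 90},
}
prior = {"oil": 0.25, "dry": 0.75}
likelihood = {
    "oil": {"USS": 0.4, "FSS": 0.6},
    "dry": {"USS": 0.8, "FSS": 0.2},
}

policy = {}
expected = {}
for finding in ("USS", "FSS"):
    # Posterior probabilities via Bayes' theorem.
    joint = {s: likelihood[s][finding] * prior[s] for s in prior}
    total = sum(joint.values())
    post = {s: joint[s] / total for s in prior}
    # Expected payoff of each action under the posterior.
    expected[finding] = {
        a: sum(post[s] * row[s] for s in post) for a, row in payoffs.items()
    }
    policy[finding] = max(expected[finding], key=expected[finding].get)

print(expected)
print(policy)
```

Under these numbers drilling is worth about 14.3 after USS (versus 90 for selling) and 300 after FSS, so the policy is to sell after an unfavorable finding and drill after a favorable one.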

Decision Analysis-23

The Value of Experimentation

• Do we need to perform the experiment?

As the experimental data show, the experimental outcome is not always “correct”. We sometimes have imperfect information.

• 2 ways to assess the value of information

– Expected value of perfect information (EVPI)

What is the value of having a crystal ball that can identify the true state of nature?

– Expected value of experimentation (EVE)

Is the experiment worth the cost?

Decision Analysis-24

Expected Value of Perfect Information

• Suppose we know the true state of nature. Then we will pick the optimal action given this true state of nature.

Payoffs by state of nature (in $1000s):

| Action | Oil | Dry |
|---|---|---|
| Drill for oil | 700 | -100 |
| Sell the land | 90 | 90 |
| Prior probability | 0.25 | 0.75 |

• E[PI] = expected payoff with perfect information =

Decision Analysis-25

Expected Value of Perfect Information

• Expected Value of Perfect Information:

EVPI = E[PI] – E[OI]

where E[OI] is the expected value with original information (i.e. without experimentation)

• EVPI for the Goferbroke problem =
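As an illustrative sketch, E[PI] weights the best payoff in each state by its prior probability, and E[OI] is the best expected payoff under Bayes' decision rule with the prior alone. The variable names are assumptions made for this example.

```python
# Payoffs in $1000s and prior probabilities from the Goferbroke table.
payoffs = {
    "Drill for oil": {"Oil": 700, "Dry": -100},
    "Sell the land": {"Oil": 90, "Dry": 90},
}
prior = {"Oil": 0.25, "Dry": 0.75}

# E[PI]: in each state, take the best action's payoff, then average over states.
e_pi = sum(prior[s] * max(row[s] for row in payoffs.values()) for s in prior)
# E[OI]: best expected payoff using only the prior (no experimentation).
e_oi = max(sum(prior[s] * row[s] for s in prior) for row in payoffs.values())
evpi = e_pi - e_oi
print(e_pi, e_oi, evpi)
```

Here E[PI] = 0.25(700) + 0.75(90) = 242.5 and E[OI] = 100, so EVPI = 142.5. This is an upper bound on what any experiment, perfect or not, could be worth.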

Decision Analysis-26

Expected Value of Experimentation

• We are interested in the value of the experiment. If the value is greater than the cost, then it is worthwhile to do the experiment.

• Expected Value of Experimentation:

EVE = E[EI] – E[OI]

where E[EI] is the expected value with experimental information.

$$
E[EI] = \sum_j E[\text{value} \mid \text{experimental result } j]\; P(\text{result } j)
$$

Decision Analysis-27

Goferbroke Example (cont’d)

• Expected Value of Experimentation:

EVE = E[EI] – E[OI]

$$
E[EI] = \sum_j E[\text{value} \mid \text{experimental result } j]\; P(\text{result } j)
$$

EVE =
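A hedged sketch of the EVE calculation for this example: for each survey finding, compute the posterior, take the best expected payoff under it, and weight by the probability of the finding. The structure and names below are assumptions for illustration; the numbers are the ones given in the slides (payoffs in $1000s, survey cost 30).

```python
payoffs = {
    "Drill for oil": {"oil": 700, "dry": -100},
    "Sell the land": {"oil": 90, "dry": 90},
}
prior = {"oil": 0.25, "dry": 0.75}
likelihood = {
    "oil": {"USS": 0.4, "FSS": 0.6},
    "dry": {"USS": 0.8, "FSS": 0.2},
}
survey_cost = 30

def best_expected_payoff(probs):
    """Best expected payoff over actions for a given distribution on states."""
    return max(sum(probs[s] * row[s] for s in probs) for row in payoffs.values())

e_ei = 0.0
for finding in ("USS", "FSS"):
    joint = {s: likelihood[s][finding] * prior[s] for s in prior}
    p_finding = sum(joint.values())
    post = {s: joint[s] / p_finding for s in prior}
    # E[value | finding] * P(finding)
    e_ei += best_expected_payoff(post) * p_finding

e_oi = best_expected_payoff(prior)
eve = e_ei - e_oi
worth_doing = eve > survey_cost
print(e_ei, e_oi, eve, worth_doing)
```

With these inputs E[EI] = 0.7(90) + 0.3(300) = 153 and E[OI] = 100, so EVE = 53, which exceeds the survey cost of 30.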

Decision Analysis-28

Decision Trees

Decision Analysis-29

Decision Tree

• Tool to display decision problem and relevant computations

• Nodes on a decision tree called __________.

• Arcs on a decision tree called ___________.

• Decision forks represented by a __________.

• Chance forks represented by a ___________.

• Outcome is determined by both ___________ and ____________.

Outcomes noted at the end of a path.

• Can also include payoff information on a decision tree branch

Decision Analysis-30

Goferbroke Example (cont’d)

Decision Tree

Decision Analysis-31

Analysis Using Decision Trees

1. Start at the right side of the tree and move left one column at a time. For each column, if it is a chance fork, go to (2). If it is a decision fork, go to (3).

2. At each chance fork, calculate its expected value. Record this value in bold next to the fork. This value is also the expected value for the branch leading into that fork.

3. At each decision fork, compare the expected values and choose the alternative whose branch has the best value. Record the choice by putting slash marks through each rejected branch.

• Comments:

– This is a backward induction procedure.

– For any decision tree, such a procedure always leads to an optimal solution.
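The procedure above can be sketched as a short recursive rollback. The tree encoding (dictionaries with a `type` field), the helper names, and the decision to net the $30k survey cost into the leaf payoffs are all assumptions made for this illustration; the posterior probabilities 1/7 and 1/2 and the finding probabilities 0.7 and 0.3 follow from Bayes' theorem applied to the survey data given earlier.

```python
def payoff(value):
    return {"type": "payoff", "value": value}

def chance(*branches):
    # branches: (probability, child) pairs
    return {"type": "chance", "branches": list(branches)}

def decision(branches):
    # branches: {label: child}
    return {"type": "decision", "branches": branches}

def rollback(node):
    """Backward induction: return the node's value, recording each decision's choice."""
    if node["type"] == "payoff":
        return node["value"]
    if node["type"] == "chance":
        return sum(p * rollback(child) for p, child in node["branches"])
    values = {label: rollback(child) for label, child in node["branches"].items()}
    node["choice"] = max(values, key=values.get)
    return values[node["choice"]]

def drill_or_sell(p_oil, cost):
    """Drill/sell decision with payoffs in $1000s net of any survey cost."""
    return decision({
        "drill": chance((p_oil, payoff(700 - cost)), (1 - p_oil, payoff(-100 - cost))),
        "sell": payoff(90 - cost),
    })

tree = decision({
    "no survey": drill_or_sell(0.25, 0),
    "survey": chance(
        (0.7, drill_or_sell(1 / 7, 30)),  # unfavorable finding (USS)
        (0.3, drill_or_sell(0.5, 30)),    # favorable finding (FSS)
    ),
})

value = rollback(tree)
print(tree["choice"], value)
```

Under these assumptions the rollback selects the survey branch with an expected payoff of about 123, compared with 100 for deciding without the survey.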

Decision Analysis-32

Goferbroke Example (cont’d)

Decision Tree Analysis

Decision Analysis-33

Painting problem

• A painting at an art gallery is, in your view, worth $12,000

• Dealer asks $10,000 if you buy today (Wednesday)

• You can buy today or wait until tomorrow; if it has not sold by then, it can be yours for $8,000

• Tomorrow you can buy or wait until the next day; if it has not sold by then, it can be yours for $7,000

• On any day, the probability that the painting will be sold to someone else is 50%

• What is the optimal policy?
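One possible reading of this problem can be worked by backward induction, as sketched below. The assumptions are mine, not the slide's: the gain from buying is the $12,000 worth minus the price paid, each wait carries an independent 50% chance that the painting is sold to someone else, and there is no further opportunity after the third day.

```python
WORTH = 12_000
PRICES = [10_000, 8_000, 7_000]  # asking price today, tomorrow, the day after
P_SOLD = 0.5                     # chance of losing the painting during each wait

def analyze():
    """Return {day: (gain if buying, expected gain if waiting)} by backward induction."""
    results = {}
    continuation = 0.0  # value once no purchase opportunity remains
    for day in reversed(range(len(PRICES))):
        buy = WORTH - PRICES[day]
        wait = (1 - P_SOLD) * continuation
        results[day] = (buy, wait)
        continuation = max(buy, wait)
    return results

results = analyze()
for day in sorted(results):
    print(day, results[day])
```

Under this reading, buying on the last day gains 5,000, buying tomorrow (4,000) beats waiting again (2,500), and today the two options tie at 2,000 each, so the decision maker is indifferent between buying now and waiting one day.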

Decision Analysis-34

Drawer problem

• Two drawers

– One drawer contains three gold coins,

– The other contains one gold and two silver.

• Choose one drawer

• You will be paid $500 for each gold coin and $100 for each silver coin in that drawer

• Before choosing, you may pay me $200 and I will draw a randomly selected coin and tell you whether it’s gold or silver and which drawer it comes from (e.g. “gold coin from drawer 1”)

• What is the optimal decision policy? EVPI? EVE? Should you pay me $200?

Decision Analysis-35

Utility Theory

Decision Analysis-36

Validity of Monetary Value Assumption

• Thus far, when applying Bayes’ decision rule, we assumed that expected monetary value is the appropriate measure

• In many situations and many applications, this assumption may be inappropriate

Decision Analysis-37

Choosing between ‘Lotteries’

• Assume you were given the option to choose from two ‘lotteries’

– Lottery 1: 50:50 chance of winning $1,000 or $0

– Lottery 2: receive $50 for certain

• Which one would you pick?

Decision Analysis-38

Choosing between ‘lotteries’

• How about between these two?

– Lottery 1: 50:50 chance of winning $1,000 or $0

– Lottery 2: receive $400 for certain

• Or these two?

– Lottery 1: 50:50 chance of winning $1,000 or $0

– Lottery 2: receive $700 for certain

Decision Analysis-39

Utility

• Think of a capital investment firm deciding whether or not to invest in a firm developing a technology that is unproven but has high potential impact

• How many people buy insurance? Is this monetarily sound according to Bayes’ decision rule?

• So... is Bayes’ decision rule invalidated? No, because we can use it with the utility for money when choosing between decisions

– We’ll focus on utility for money, but in general it could be utility for anything (e.g. consequences of a doctor’s actions)

Decision Analysis-40

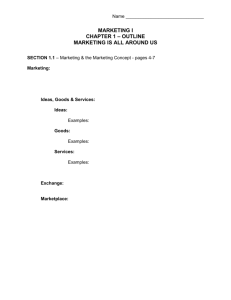

A Typical Utility Function for Money

[Figure: u(M) plotted against M; u(M) axis ticks 0, 1, 2, 3, 4; M axis ticks $100, $250, $500, $1,000]

What does this mean?

Decision Analysis-41

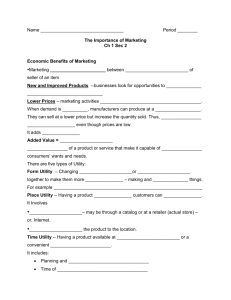

Decision Maker’s Preferences

• Risk-averse

– Avoid risk

– Decreasing marginal utility for money

• Risk-neutral

– Monetary value = Utility

– Linear utility for money

• Risk-seeking (or risk-prone)

– Seek risk

– Increasing marginal utility for money

• Combination of these

[Each case is illustrated on the slide by a plot of u(M) against M]

Decision Analysis-42

Constructing Utility Functions

• When utility theory is incorporated into a real decision analysis problem, a utility function must be constructed to fit the preferences and the values of the decision maker(s) involved

• Fundamental property: the decision maker is indifferent between two alternative courses of action that have the same utility

Decision Analysis-43

Indifference in Utility

• Consider two lotteries

– Lottery 1: win $1,000 with probability p, or $0 with probability 1-p

– Lottery 2: receive $X for certain

• The example decision maker we discussed earlier would be indifferent between the two lotteries if

– p is 0.25 and X is …

– p is 0.50 and X is …

– p is 0.75 and X is …

Decision Analysis-44

Goferbroke Example (with Utility)

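• We need the utility values for the following possible monetary payoffs:

| Monetary payoff | Utility |
|---|---|
| -130 | |
| -100 | |
| 60 | |
| 90 | |
| 670 | |
| 700 | |

[Figure: u(M) plotted against M, with a 45° reference line]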

Decision Analysis-45

Constructing Utility Functions

Goferbroke Example

• u(0) is usually set to 0. So u(0)=0

• We ask the decision maker what value of p makes him/her indifferent between the following lotteries:

– Lottery 1: 700 with probability p, or -130 with probability 1-p

– Lottery 2: 0 for certain

• The decision maker’s response is p=0.2

• So…

Decision Analysis-46

Constructing Utility Functions

Goferbroke Example

• We now ask the decision maker what value of p makes him/her indifferent between the following lotteries:

– Lottery 1: 700 with probability p, or 0 with probability 1-p

– Lottery 2: 90 for certain

• The decision maker’s response is p=0.15

• So…

Decision Analysis-47

Constructing Utility Functions

Goferbroke Example

• We now ask the decision maker what value of p makes him/her indifferent between the following lotteries:

– Lottery 1: 700 with probability p, or 0 with probability 1-p

– Lottery 2: 60 for certain

• The decision maker’s response is p=0.1

• So…
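Each of the three responses (p = 0.2, 0.15, 0.1) turns into one equation through the fundamental property that indifferent alternatives have equal utility. The derivation below is a sketch that reads each question as a lottery paying 700 with probability p (and -130 or 0 otherwise) against a certain amount of 0, 90, or 60 respectively. It takes u(0) = 0 and leaves the scale u(700) free, since the slides do not fix it.

$$
\begin{aligned}
u(0) &= 0.2\,u(700) + 0.8\,u(-130) &&\Rightarrow\quad u(-130) = -\tfrac{1}{4}\,u(700),\\
u(90) &= 0.15\,u(700) + 0.85\,u(0) &&\Rightarrow\quad u(90) = 0.15\,u(700),\\
u(60) &= 0.1\,u(700) + 0.9\,u(0) &&\Rightarrow\quad u(60) = 0.1\,u(700).
\end{aligned}
$$

As an illustration only, choosing the scale u(700) = 600 (an assumed value, not stated on these slides) would give u(-130) = -150, u(90) = 90, and u(60) = 60.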

Decision Analysis-48

Goferbroke Example (with Utility)

Decision Tree

Decision Analysis-49

Exponential Utility Functions

• One of the many mathematically prescribed “closed-form” utility functions:

$$
u(M) = R\left(1 - e^{-M/R}\right)
$$

• It is used for risk-averse decision makers only

• Can be used in cases where it is not feasible or desirable for the decision maker to answer lottery questions for all possible outcomes

• The single parameter R is the value such that the decision maker is (approximately) indifferent between a lottery paying R with probability 0.5 or -R/2 with probability 0.5, and receiving 0 for certain
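The approximate indifference that defines R can be checked numerically. This sketch assumes the standard exponential form u(M) = R(1 - e^(-M/R)); the value R = 500 is a hypothetical choice for illustration and does not come from the slides.

```python
import math

def exponential_utility(m, r):
    """Exponential utility u(M) = R * (1 - exp(-M / R)) with risk tolerance R."""
    return r * (1.0 - math.exp(-m / r))

R = 500.0  # hypothetical risk tolerance, for illustration only

# Expected utility of the 50:50 lottery paying R or -R/2.
lottery = 0.5 * exponential_utility(R, R) + 0.5 * exponential_utility(-R / 2, R)
# Utility of receiving 0 for certain.
certain = exponential_utility(0.0, R)

relative_gap = (lottery - certain) / R
print(lottery, certain, relative_gap)
```

The gap between the two utilities is under 1% of R regardless of the R chosen, because it equals R times 0.5(1 - e^(-1)) + 0.5(1 - e^(0.5)), which is why the slide describes the indifference as approximate.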

Decision Analysis-50