שנה: 2012

advertisement

הטכניון -מכון טכנולוגי לישראל

TECHNION - ISRAEL INSTITUTE OF TECHNOLOGY

הפקולטה להנדסת חשמל

המעבדה לבקרה רובוטיקה ולמידה חישובית

דוח פרויקט :פרויקט א

הנושא:

התנהגות משתמשים

ברשת אלחוטית

מגישה:

אנה רוזנברג

מנחה:

אורלי אבנר

סמסטר :אביב

שנה2012 :

1

User Behavior

Analysis in Wi-Fi

network

By:

Anna Rosenberg

Supervisor:

Orly Avner

Date:

Spring Semester 2012

2

Contents

Abstract ......................................................................................................................................................... 6

Literature Review .......................................................................................................................................... 6

Data ............................................................................................................................................................. 13

Access Points Analysis ............................................................................................................................... 14

IEEE 802.11 Architecture ........................................................................................................................ 14

Arrival Rate.............................................................................................................................................. 15

Users ........................................................................................................................................................... 29

Visit duration........................................................................................................................................... 33

Features .................................................................................................................................................. 39

Clustering ................................................................................................................................................ 45

Possible Applications: ......................................................................................................................... 45

Distance Measure ............................................................................................................................... 46

K-Means Clustering ................................................................................................................................. 47

K-Means Results ...................................................................................................................................... 47

G-Means Algorithm ................................................................................................................................. 53

Evaluation of clustering .......................................................................................................................... 58

Conclusions ................................................................................................................................................. 62

Bibliography ................................................................................................................................................ 62

3

Figure 1 - Arrival rate of AP 1 ...................................................................................................................... 16

Figure 2 - Arrival rate of AP 2 ...................................................................................................................... 16

Figure 3 - Arrival rate of AP 3 ...................................................................................................................... 17

Figure 4 - Arrival rate of AP 4 ...................................................................................................................... 17

Figure 5 - Arrival rate of AP 5 ...................................................................................................................... 18

Figure 6 - Arrival rate of AP 6 ...................................................................................................................... 18

Figure 7 - Arrival rate of AP 7 ...................................................................................................................... 19

Figure 8 - Arrival rate of AP 8 ...................................................................................................................... 19

Figure 9 - Arrival rate of AP 9 ...................................................................................................................... 20

Figure 10 - Arrival rate of AP 10 .................................................................................................................. 20

Figure 11 - Arrival rate of AP 11 .................................................................................................................. 21

Figure 12 - Arrival rate of AP 12 .................................................................................................................. 21

Figure 13 - Arrival rate of AP 13 .................................................................................................................. 22

Figure 14 - Arrival rate of AP 14 .................................................................................................................. 22

Figure 15 - Arrival rate of AP 15 .................................................................................................................. 23

Figure 16 - Arrival rate of AP 16 .................................................................................................................. 23

Figure 17 - Arrival rate of AP 1 with averaging window of 0.1 hour........................................................... 24

Figure 18 - Arrival rate of AP 1 with averaging window of 0.2 hour........................................................... 25

Figure 19 - Arrival rate of AP 1 with averaging window of 0.25 hour......................................................... 25

Figure 20 - Arrival rate of AP 1 with averaging window of 0.3 hour........................................................... 26

Figure 21 - Arrival rate of AP 1 with averaging window of 0.35 hour......................................................... 26

Figure 22 - Arrival rate of AP 1 with averaging window of 0.4 hour........................................................... 27

Figure 23 - Arrival rate of AP 1 with averaging window of 0.5 hour........................................................... 27

Figure 24 - Arrival rate of AP 1 with different averaging windows............................................................. 28

Figure 25 -Transmission rate of user 1 during the day with averaging window 0f 1 hour ......................... 29

Figure 26 - Transmission rate of user 2 during the day with averaging window 0f 1 hour ........................ 30

Figure 27 - Transmission rate of user 3 during the day with averaging window 0f 1 hour ........................ 31

Figure 28 - Transmission rate of user 2 during the week with averaging window 0f 1 hour ..................... 32

Figure 29 - Transmission rate of user 3 during the week with averaging window 0f 1 hour ..................... 33

Figure 30 - Inter-arrival times of packets from 9:30 am till 11:18 am ........................................................ 34

Figure 31 - Inter-arrival times of packets from 12:30 pm till 16:53 pm...................................................... 35

Figure 32 - Inter-arrival times of packets during the day ........................................................................... 35

Figure 33 - Histogram of user's visits widespread ...................................................................................... 36

Figure 34 - Histogram of user's visits widespread ...................................................................................... 36

Figure 35 - Histogram of user's visits widespread ...................................................................................... 37

Figure 36 - Histogram of user's visits widespread ...................................................................................... 37

Figure 37 - Histogram of user's visits widespread ...................................................................................... 38

Figure 38 - Average inter-visits times vs. Average visit duration................................................................ 39

4

Figure 39 - Average inter-visits times vs. Number of visits ......................................................................... 40

Figure 40 - Average traffic vs. Average visit duration ................................................................................. 40

Figure 41 - Average traffic vs. Number of visits .......................................................................................... 41

Figure 42 - Average visit duration vs. Number of visits .............................................................................. 41

Figure 43 - Average Inter visits times vs. Total days in system ................................................................... 42

Figure 44 - Standard deviation of inter-arrival times of visits .................................................................... 43

Figure 45 - Standard deviation of traffic per packets ................................................................................. 43

Figure 46 - Standard deviation of visit duration times ............................................................................... 44

Figure 47 - k-means results when k=2: Average visit duration vs. Average inter-visits times.................... 48

Figure 48 - k-means results when k=2: Average visit duration vs. Average inter-visits times vs. Average

traffic per packets ....................................................................................................................................... 48

Figure 49 - k-means results when k=2: Average inter-visits times vs. Average traffic per packets............ 49

Figure 50 - k-means results when k=2: Maximal distance between visits vs. Minimal distance between

visits ............................................................................................................................................................ 49

Figure 51 - k-means results when k=3: Average visit duration vs. Average inter-visits times.................... 50

Figure 52 - k-means results when k=3: Average inter-visits times vs. Average traffic per packets............ 50

Figure 53 - k-means results when k=3: Maximal distance between visits vs. Minimal distance between

visits ............................................................................................................................................................ 51

Figure 54 - k-means results when k=4: Average visit duration vs. Average inter-visits times.................... 51

Figure 55 - k-means results when k=4: Average inter-visits times vs. Average traffic per packets............ 52

Figure 56 - k-means results when k=4: Maximal distance between visits vs. Minimal distance between

visits ............................................................................................................................................................ 52

Figure 57 - Number of clusters produced by the g-means algorithm vs. Significance level α ................... 55

Figure 58 - User's Clusters produced by the g-means algorithm................................................................ 56

Figure 59 - User's Clusters produced by the g-means algorithm ................................................................ 57

Figure 60 - User's Clusters produced by the g-means algorithm ................................................................ 58

Figure 61 - Evaluation measure Purity vs. significance level α ................................................................... 60

Figure 62 - Evaluation measure E vs. significance level α ........................................................................... 61

5

Abstract

Wireless networks are increasingly being deployed and expanded in airports, universities,

corporations, hospitals, residential, and other public areas to provide wireless Internet access.

Modeling how wireless clients arrive at different APs, how long they stay at them, and the

amount of data they access can be beneficial in capacity planning, administration and

deployment of wireless infrastructures, protocol design for wireless applications and services,

and their performance analysis.

A better understanding of the arrival rate of clients at APs can also assist in forecasting the traffic

demand at APs. Short-term (e.g., a few minutes) forecasting can be employed in the design of

more energy-efficient clients and resource reservation and load balancing (among APs)

mechanisms. Long-term forecasting is essential for capacity planning and understanding the

evolution of the wireless traffic and networks.

Understanding and forecasting the access patterns at APs can have a dominant impact on the

operation of wireless APs.

The goal of this project is to analyze a Wi-Fi network’s APs and to model the wireless clients

using it.

The contributions of this project are the following: the analysis of Access Points of wireless

network, the use of k-means and g-means algorithms for clustering the network’s users.

Literature Review

At the first step of the project we analyzed previous related research in the field of network

behavior studies.

1. "Modeling client arrivals at access points in wireless campus-wide networks (Maria

Papadopouli, Haipeng Shen, Manolis Spanakis)"

The goal of this study is to model the arrival of wireless clients at the access points (APs)

in a production 802.11 infrastructure.

Time-varying Poisson processes can model the arrival processes of clients at APs well

and they validate these results by modeling the visit arrivals at different time intervals

6

and APs. They investigate the traffic load characteristics (e.g., bytes, number of packets,

associations, distinct clients, type of clients), their dependencies and interplay in various

time-scales, from both the perspective of a client and an access point (AP).

The main contributions of this work are the following: a novel methodology for modeling

the arrival processes of clients at wireless APs, the use of a very powerful visualization

tool (the SiZer map) for finding detailed interior features and quantile plots with

simulation envelope for goodness-of-fit test, models of the arrival processes of clients at

APs as a time-varying Poisson process with different arrival-rate function to model their

arrival at an AP. Furthermore, they investigate the impact of the type of building (i.e., its

functionality) in which the AP is located at the arrival rate and cluster these visit arrival

models based on the building type.

The conclusions that were made in this work: time-varying Poisson processes can model

the arrival processes of clients at APs well; it is possible to cluster the APs based on their

visit arrival and functionality of the area in which these APs are located.

2. Characterizing user behavior and network performance in a public wireless LAN. In

Proceedings of the ACM Sigmetrics Conference on Measurement and Modeling of

Computer Systems, 2002. (Anand Balachandran, Geoffrey Voelker, Paramvir Bahl, and

VenkatRangan)

In this paper previous studies were extended by presenting and analyzing user behavior

and network performance in a public-area wireless network using a trace recorded over

three days at the ACM SIGCOMM’01 conference held at U.C. San Diego in August

2001. The trace consisted of two parts. The first part is a record of performance

monitoring data sampled from wireless access points (APs) serving the conference and

the second consists of anonymized packet headers of all wireless traffic. Both parts of the

trace span the three days of the conference, capturing the workload of 300,000 flows from

195 users consuming 4.6 GB of bandwidth.

In this paper the arrival process was modeled as governed by an underlying Markov

chain, which is in one of two states, ON or OFF. The OFF state is when there are no

7

arrivals into the system, which would typically be midway into the conference session.

During the ON state, arrivals vary randomly over time with a more or less constant

arrival rate. The mean inter-arrival time during the ON state is 38 seconds. The mean

duration of the OFF state is 6 minutes, with longer OFF periods during the session breaks

and the lunch break.

Also in this paper they model the error rates and analyzed MAC-level retransmission

Their overall analysis of user behavior shows that:

Users are evenly distributed across all APs and user arrivals are correlated in time and

space and user arrivals can be correlated into the network according to a two-state

Markov-Modulated Poisson Process (MMPP).

Most of the users have short session times: 60% of the user sessions last less than 10

minutes. Users with longer session times are idle for most of the session. The session

time distribution can be approximated by a General Pareto distribution with a shape

parameter of 0.78 and a scale parameter of 30.76. The R2 value is 0.9. Short session

times imply that network administrators using DHCP for IP address leasing can configure

DHCP to provide short-term leases, after which IP addresses can be reclaimed or

renewed.

Sessions can be broadly categorized based on their bandwidth consumption into light,

medium, and heavy sessions: light sessions on average generate traffic at 15 Kbps,

medium sessions between 15 and 80 Kbps, and heavy sessions above 80 Kbps. The

highest instantaneous bandwidth demand is 590 Kbps.

Web traffic accounts for 46% of the total bandwidth of all application traffic, and 57% of

all flows. Web and SSH together account for 64% of the total bandwidth and 58% of

flows.

There is an implicit correlation between session duration and average data rates. Longer

sessions typically have very low data requirements. Most of the sessions with high

average data rate are very short (< 15 minutes).

8

Their analysis of user mobility shows that users are mobile when expected, i.e., at the

beginning and end of the conference sessions. About 75% of the users are seen at more

than one AP during the day.

Their analysis of network performance shows that the load distribution across APs is

highly uneven and does not directly correlate to the number of users at an AP. Stated

another way, the peak offered load at an AP is not reached when the number of

associated users is a maximum. Rather, the load at an AP is determined more by

individual user workload behavior. One implication of this result is that load balancing

solely by the number of associated users may perform poorly.

Their observations indicate that the traditional method of modeling user arrivals

according to a Poisson arrival process may not adequately characterize scenarios where

arrivals are correlated with time and space. However, although the MMPP model is well

suited to their conference setting where most users follow a common schedule, they do

not expect it to generalize to every public-area wireless network. For example, it may be

appropriate in an airport network where users cluster at gates at specific times in

anticipation of departures, but not for a shopping mall network where we would expect

user arrivals, departures, and mobility to be more random.

3. Modeling users’ mobility among Wi-Fi access points.( Minkyong Kim, David Kotz)

In this paper, they present a model of user movements between APs. From the syslog

messages collected on the Dartmouth campus, they count the number of visits to each

AP. Based on the observation that most APs have strong daily repetition, they aggregate

the multiple days of the hourly visits into a single day. They then cluster APs based on

their peak hour. They derive four clusters with different peak times and one cluster

consisting of stable APs whose number of visits does not change much over 24 hours. To

model a cluster, they compute hourly arrival and departure rates, and the distribution of

daily arrivals. They leave the evaluation of this model as future work.

Their experience with the Dartmouth traces has shown that different APs have their peak

number of users at different times of the day. They then clustered the rest of APs based

on their peak hour.

9

They used a filter to convert the syslog traces into the sequence of APs that each client

associates with. This filter also defines the OFF state, which represents a state of being

not connected to the network. A device enters the OFF state when it is turned off or when

it loses network connectivity.

They made a conclusion that all of the clusters, except Cluster 1, have more transitions

from/to another AP than from/to the OFF state. The high number of transitions from/to

another AP is partly due to the ping-pong effect: associating repeatedly with multiple

APs. When a device is within the range of multiple APs, it often changes its associated

AP. Thus, changes in association do not necessarily mean that the user moved physically.

The ping-pong effect is especially common where the density of APs is high.

They found that in the process of developing the model, the number of visits to APs

exhibits a strong daily pattern. They also found that clustering APs based on their peak

time is effective;

4. Characterizing Flows in Large Wireless Data Networks(Xiaoqiao (George) Meng,

Starsky H.Y. Wong, Yuan Yuanz, Songwu Lu)

In this paper, they statistically characterize both static flows and roaming flows in a large

campus wireless network using a recently collected trace.

If only one AP is found to be used by the flow, they categorize the flow as a static flow;

otherwise, it is a roaming flow.

They explain the modeling results from the perspective of user behaviors and application

demands. For example, the Weibull regression model attributes to both observations of

strong 24-hour periodicity and diurnal cycle in user activity, and coexistence of

applications with short and long inter-arrival times.

They use two examples of scheduling and wireless TCP to showcase how to apply their

results to evaluate the dynamic behavior of network protocols.

Most studies in the wireless literature are based on unrealistic settings of static flow

configuration or simplistic Poisson model. They show that such simulation and analysis

models can produce misleading results compared with using the models derived from real

traces.

10

They found that that the inter-arrival times can be well modeled by a Weibull distribution

at fine-time scales, e.g., hourly basis. This result holds for all 24 hourly intervals.

In the study, they also tested other five continuous distribution models: Exponential,

Lognormal, Gamma, Pareto and Extreme-value. None of them produces a consistently

good match.

Their further analysis shows that, if further reduce the granularity into fine scales, say,

half an hour, the Weibull model still matches well.

However, if they increase the granularity to coarser scales, say, two hours, simple

distributions such as Weibull model are generally not sufficient because the

nonstationarity makes the traffic much more variable. Therefore, they select the hourly

scale in modeling.

Weibull regression model accurately approximates the flow arrival process in all time

scales. For different APs, they further discover that, the parameters of the Weibull

regression model are location dependent, and vary from one AP to another.

However, APs in the same subnet observe spatial similarity in the sense that, the flow

inter-arrival times across APs are highly likely to follow identical statistical distribution.

As for the flow duration, they characterize it via the data size each flow transfers, and

find that it follows the Lognormal distribution. Such a distribution holds for all APs.

5. Measurement and Analysis of the Error Characteristics of an In-Building Wireless

Network. In Proceedings of ACM SIGCOMM’96, pages 243–254, August 1996.( D.

Eckardt and P. Steenkiste.)

They examined their campus wide WaveLAN installation and focused more on network

performance and less on user behavior. The focus of their study was on the error model

and signal characteristics of the RF environment in the presence of obstacles.

6. Experience building a high speed, Campus-Wide Wireless Data Network. In Proceedings

of ACM MobiCom’97, pages 55–65, August 1997. (B. J. Bennington and C. R. Bartel.

Wireless Andrew)

11

The focus of their study was on the installation and maintenance issues of a campus

wireless network and comparing its performance to a wired LAN.

7. Analysis of a Local-Area Wireless Network. In Proceedings of ACM MobiCom’00,

pages 1–10, August 2000. (D. Tang and M. Baker.)

The focus of their study was on the user behavior and traffic characteristics in a

university department network.

They analyzed a 12-week trace collected from the wireless network used by the Stanford

Computer Science department; this study built on earlier work involving fewer users and

a shorter duration. Their study provides a good qualitative description of how mobile

users take advantage of a wireless network, although it does not give a characterization of

user workloads in the network.

The Stanford study looked at a network of large geographic size where the users are

unevenly distributed across the APs in the building.

8. Analysis of a Metropolitan-Area Wireless Network. In Proceedings of ACM

MobiCom’99, pages 13–23, August 1999.( D. Tang and M. Baker)

The focus of their study was on the user mobility in a low-bandwidth metropolitan area

network.

Earlier, Tang and Baker also characterized user behavior in a metropolitan area network,

focusing mainly on user mobility. Furthermore, the network was spread over a larger

geographical area and had very different performance characteristics.

9. Trace-based Mobile Network Emulation. In Proceedings of ACM SIGCOMM’97, pages

51–61, September 1997. (B. Noble, M. Satyanarayanan, G. Nguyen, and R. Katz.)

This work is a joint research effort between CMU and Berkeley that proposed a novel

method for network measurement and evaluation applicable to wireless networks. The

12

technique, called trace modulation, involves recording known workloads at a mobile host

and using it as input to develop a model for network behavior. Although this work helps

in developing a good model of network behavior, it does not provide a realistic

characterization of user activity in a mobile setting.

10. Characterizing Usage of a Campus-wide Wireless Network. Technical Report TR2002423, Dartmouth College, March 2002. (D. Kotz and K. Essien)

The focus of their study was on the user behavior and traffic characteristics in a college

campus.

Kotz and Essien traced and characterized the Dartmouth College campus-wide wireless

network during their fall 2001 term. Their workload is quite extensive, both in scope

(1706 users across 476 access points) and duration (12 weeks). Kotz and Essien focus on

large-scale characteristics of the campus, such as overall application mix, overall traffic

per building and AP, mobility patterns, etc. In terms of application mix, their network

carries a rich set of applications that reflects the nature of campus-wide applications.

With the size of their network, they were able to study mobility patterns as well.

Interestingly, they found that most users were stationary within a session, and overall

associated with just a few APs during the term.

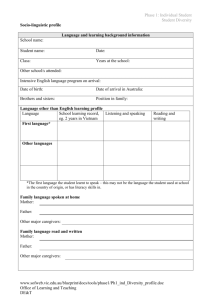

Data

We used a standard Linksys router as a sniffer that recorded packets sent by users in the network

during 6 weeks and 4 days. Every packet contains MAC address of the Access Points, Mac

Address of the user, Source/Destination IP Addresses, size of the packet, the time it was

received.

13

Photo of the router:

Access Points Analysis

IEEE 802.11 Architecture

A cellular architecture where the system is subdivided into cells, where each cell (called Basic

Service Set or BSS, in the 802.11 nomenclature) is controlled by a Base Station (called Access

Point or in short AP).

Even though a wireless LAN may be formed by a single cell, with a single Access Point, most

installations will be formed by several cells, where the Access Points are connected through

some kind of backbone (called Distribution System or DS), typically Ethernet, and in some cases

wireless itself. The whole interconnected Wireless LAN including the different cells, their

respective Access Points and the Distribution System is seen to the upper layers of the OSI

model, as a single 802 network, and is called in the standard as Extended Service Set (ESS).

The following picture shows a typical 802.11 LAN, with the components described previously:

14

(Brenner)

We examine the Electrical Engineering faculty Wi-Fi network with 16 Access Points.

Arrival Rate

To investigate how the arrival rate of APs changes during the day time we chose an averaging

window of 30 minutes.

The following graphs show the average arrival rate in units of Bytes

window of 30 minutes:

15

min

with an averaging

Figure 1 - Arrival rate of AP 1

Figure 2 - Arrival rate of AP 2

16

Figure 3 - Arrival rate of AP 3

Figure 4 - Arrival rate of AP 4

17

Figure 5 - Arrival rate of AP 5

Figure 6 - Arrival rate of AP 6

18

Figure 7 - Arrival rate of AP 7

Figure 8 - Arrival rate of AP 8

19

Figure 9 - Arrival rate of AP 9

Figure 10 - Arrival rate of AP 10

20

Figure 11 - Arrival rate of AP 11

Figure 12 - Arrival rate of AP 12

21

Figure 13 - Arrival rate of AP 13

Figure 14 - Arrival rate of AP 14

22

Figure 15 - Arrival rate of AP 15

Figure 16 - Arrival rate of AP 16

23

We see that some APs are active from midday till the evening, and some APs are active only in

the evening or during the specific hour. Access Point 1achieves maximal average arrival rate that

and Access Points 9, 10, 16 achieve maximal average

approximately equals to 7 105 B

min

.

arrival rate that approximately equals to 0.14 B

min

We chose AP 1 for analyzing arrival rate with different averaging windows and we produced

graphs of AP 1 for different averaging windows:

Averaging window of 0.1 hour:

Figure 17 - Arrival rate of AP 1 with averaging window of 0.1 hour

24

Averaging window of 0.2 hour:

Figure 18 - Arrival rate of AP 1 with averaging window of 0.2 hour

Averaging window of 0.25 hour:

Figure 19 - Arrival rate of AP 1 with averaging window of 0.25 hour

25

Averaging window of 0.3 hour:

Figure 20 - Arrival rate of AP 1 with averaging window of 0.3 hour

Averaging window of 0.35 hour:

Figure 21 - Arrival rate of AP 1 with averaging window of 0.35 hour

26

Averaging window of 0.4 hour:

Figure 22 - Arrival rate of AP 1 with averaging window of 0.4 hour

Averaging window of 0.5 hour:

Figure 23 - Arrival rate of AP 1 with averaging window of 0.5 hour

27

The following graph shows the difference between the average arrival rates for different

averaging windows:

Figure 24 - Arrival rate of AP 1 with different averaging windows

We see that there is a tradeoff in the averaging window selection. Small averaging windows

produce graphs with sharp peaks and it will be hard to fit a function for such graphs. Big

averaging windows produce smooth graphs and it is easy to fit a function for such graphs but

also big averaging windows cause data loss.

28

Users

We gathered statistics of 3273 users.

We chose a few users to show their transmission rate during the day time with an averaging

window of 1 hour.

user1:

Figure 25 -Transmission rate of user 1 during the day with averaging window 0f 1 hour

29

user 2:

Figure 26 - Transmission rate of user 2 during the day with averaging window 0f 1 hour

user 3:

30

Figure 27 - Transmission rate of user 3 during the day with averaging window 0f 1 hour

We see that these users are active from 9 am. User 1 and 2 are active till 9pm and user 3 is active

till 7 pm.

We also show the average arrival rate of users during the week with averaging window of 1

hour:

user 2:

31

Figure 28 - Transmission rate of user 2 during the week with averaging window 0f 1 hour

user 3:

32

Figure 29 - Transmission rate of user 3 during the week with averaging window 0f 1 hour

We see that these users are active only during the first part of the week.

Visit duration

33

We are interested in defining the users’ visit duration. When can we say that the visit has ended

and the next received packets will represent the start of a new visit?

The following figures show the inter-arrival times of packets of some user during different days

and different times of the day:

Figure 30 - Inter-arrival times of packets from 9:30 am till 11:18 am

The following figure shows that the typical intervals between packets bursts are 24 min, 55 min,

and 2 hours.

We see that user is active during the breaks and not active during the lectures that last 50-55

minutes.

34

Figure 31 - Inter-arrival times of packets from 12:30 pm till 16:53 pm

The following figure shows that the typical intervals between packets bursts are 10 min, 25 min,

50 min, 66 min, 4 hours:

Figure 32 - Inter-arrival times of packets during the day

35

We want to establish a maximal interval between two packets that can be considered as part of

one visit. To do so we use the histograms of packet’s inter-arrival times:

Figure 33 - Histogram of user's packets inter-arrival times

Figure 34 - Histogram of user's packets inter-arrival times

36

Figure 35 - Histogram of user's packets inter-arrival times

We chose some users with significant number of packets and got histograms of such users:

Figure 36 - Histogram of user's packets inter-arrival times

37

Figure 37 - Histogram of user's packets inter-arrival times

We chose 30 minutes as a maximal inter-arrival time between two packets that can be considered

as packets of one visit. If the inter-arrival time is more than 30 minutes, then we recognize it as

the start of new visit.

38

Features

We chose to base a clustering of the users on the following features: average visit duration,

average inter-arrival times between the visits, average traffic, number of visits and total number

of days in the system.

The following graphs show the correlation between those features:

Figure 38 - Average inter-visits times vs. Average visit duration

39

Figure 39 - Average inter-visits times vs. Number of visits

Figure 40 - Average traffic vs. Average visit duration

40

Figure 41 - Average traffic vs. Number of visits

Figure 42 - Average visit duration vs. Number of visits

41

Figure 43 - Average Inter visits times vs. Total days in system

According to the above figures we don’t see any typical clusters that can be found among the

networks users.

We based the clustering on the average characteristics that is why we want to examine a standard

deviation of the characteristics that were used in the clustering. We expect the characteristics to

have small standard deviation in order to achieve proper results of clustering algorithm using

average characteristics.

42

The standard deviation of inter-arrival times of visits:

Figure 44 - Standard deviation of inter-arrival times of visits

The standard deviation of traffic per packets:

Figure 45 - Standard deviation of traffic per packets

43

The standard deviation of visit duration times:

Figure 46 - Standard deviation of visit duration times

As we see none of the characteristics have a significant standard deviation.

44

Clustering

Clustering can be considered the most important unsupervised learning problem; so, as every

other problem of this kind, it deals with finding a structure in a collection of unlabeled data.

A loose definition of clustering could be “the process of organizing objects into groups whose

members are similar in some way”.

A cluster is therefore a collection of objects which are “similar” between them and are

“dissimilar” to the objects belonging to other clusters.

We can show this with a simple graphical example:

Possible Applications:

Marketing: finding groups of customers with similar behavior given a large database of

customer data containing their properties and past buying records;

Biology: classification of plants and animals given their features;

Libraries: book ordering;

Insurance: identifying groups of motor insurance policy holders with a high average claim

cost; identifying frauds;

45

City-planning: identifying groups of houses according to their house type, value and

geographical location;

Earthquake studies: clustering observed earthquake epicenters to identify dangerous zones;

WWW: document classification; clustering weblog data to discover groups of similar access

patterns.

(A Tutorial on Clustering Algorithms)

Distance Measure

An important component of a clustering algorithm is the distance measure between data points.

If the components of the data instance vectors are all in the same physical units then it is possible

that the simple Euclidean distance metric is sufficient to successfully group similar data

instances. However, even in this case the Euclidean distance can sometimes be misleading. The

figure shown below illustrates this with an example of the width and height measurements of an

object. Despite both measurements being taken in the same physical units, an informed decision

has to be made as to the relative scaling. As the figure shows, different scalings can lead to

different clusterings.

Notice however that this is not only a graphic issue: the problem arises from the mathematical

formula used to combine the distances between the single components of the data feature vectors

into a unique distance measure that can be used for clustering purposes: different formulas leads

46

to different clusterings.

Again, domain knowledge must be used to guide the formulation of a suitable distance measure

for each particular application.

(A Tutorial on Clustering Algorithms)

K-Means Clustering

In the first step of the clustering we use k-means clustering algorithm and analyze its results.

In data mining, k-means clustering is a method of cluster analysis which aims

to partition n observations into k clusters in which each observation belongs to the cluster with

the nearest mean. This results in a partitioning of the data space into Voronoi cells.

The problem is computationally difficult (NP-hard); however, there are efficient heuristic

algorithms that are commonly employed and converge quickly to a local optimum.

Given a set of observations ( x1 , x2 ,..., xn ) , where each observation is a d-dimensional real

vector, k-means clustering aims to partition the n observations into k sets

(k ≤ n) S {S1 , S2 ,..., Sk } so as to minimize the within-cluster sum of squares (WCSS):

k

arg min

i 1 x j Si

x j i

2

where i is the mean of points in Si.

We used a matlab function kmeans(X,k) which partitions the points in the n-by-p data matrix X

into k clusters. kmeans returns an n-by-1 vector containing the cluster indices of each point. By

default, kmeans uses squared Euclidean distances.

K-Means Results

47

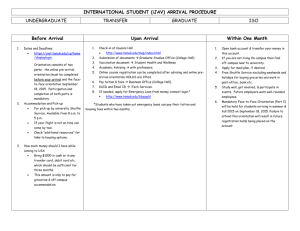

For clustering we use the following features: average visit duration, average inter-visit times,

average traffic per packets, maximal distance between visits, and minimal distance between visits.

The following figures show the results of k-means algorithm when k 2 :

Figure 47 - k-means results when k=2: Average visit duration vs. Average inter-visits times

Figure 48 - k-means results when k=2: Average visit duration vs. Average inter-visits times vs. Average traffic per packets

48

Figure 49 - k-means results when k=2: Average inter-visits times vs. Average traffic per packets

Figure 50 - k-means results when k=2: Maximal distance between visits vs. Minimal distance between visits

49

The following figures show the results of k-means algorithm when k 3 :

Figure 51 - k-means results when k=3: Average visit duration vs. Average inter-visits times

Figure 52 - k-means results when k=3: Average inter-visits times vs. Average traffic per packets

50

Figure 53 - k-means results when k=3: Maximal distance between visits vs. Minimal distance between visits

The following figures show the results of k-means algorithm when k 4 :

Figure 54 - k-means results when k=4: Average visit duration vs. Average inter-visits times

51

Figure 55 - k-means results when k=4: Average inter-visits times vs. Average traffic per packets

Figure 56 - k-means results when k=4: Maximal distance between visits vs. Minimal distance between visits

52

We can’t easily identify typical clusters as for example we can in the following figure:

As we see we did not find any isolated clusters. One of the problems of the users clustering is the

decision making upon Euclidian distances criteria because the distance measure between data

points is an important component of a clustering algorithm. Our components of the data instance

vectors are not in the same physical units that is why we did not succeed at successfully grouping

similar data instances.

G-Means Algorithm

We decided to use each visit as a point and not to use clustering based on the average

characteristics. Each point consists of the following components: the visit duration, the inter time

between the visits and the previous visit, number of packets that were sent during the visit and

the average amount of data that was accessed during the visit. We normalize the data

components to get proper results even with simple Euclidean distance metric. We used g-means

clustering algorithm to find an optimal number of clusters to use.

When clustering a dataset, the right number k of clusters to use is often not obvious, and

choosing k automatically is a hard algorithmic problem. There is an improved algorithm for

learning k while clustering which is called G-means. The G-means algorithm is based on a

statistical test for the hypothesis that a subset of data follows a Gaussian distribution. G-means

runs k-means with increasing k in a hierarchical fashion until the test accepts the hypothesis that

the data assigned to each k-means center are Gaussian. Two key advantages are that the

hypothesis test does not limit the covariance of the data and does not compute a full covariance

53

matrix. Additionally, G-means only requires one intuitive parameter, the standard statistical

significance level . (Hamerly & Elkan)

The statistical test detects whether the data assigned to a center are sampled from a Gaussian by

accepting one of two following hypotheses:

• H 0 : The data around the center are sampled from a Gaussian.

• H1 : The data around the center are not sampled from a Gaussian.

If the null hypothesis H 0 is accepted, then the one center is sufficient to model its data, and the

cluster should not be split into two sub-clusters.

If H 0 is rejected, then the cluster will be split.

The test that is used in g-means algorithm is based on the Anderson-Darling statistic. This onedimensional test has been shown empirically to be the most powerful normality test that is based

on the empirical cumulative distribution function (ECDF).

We must choose the significance level of the test, α, which is the desired probability of

incorrectly rejecting H 0 . It is appropriate to use a Bonferroni adjustment to reduce the chance of

incorrectly rejecting H 0 over multiple tests. For example, if we want a 0.01 chance of

incorrectly rejecting H 0 in 100 tests, we should apply a Bonferroni adjustment to make each test

use α = 0.01/100 = 0.0001. To find k final centers the G-means algorithm makes k statistical

tests, so the Bonferroni correction does not need to be extreme.

We used a code for g-means algorithm that finds the optimal number of clusters and runs kmeans algorithm with optimal number of clusters.

54

The following figure shows the dependence of number of clusters on :

Figure 57 - Number of clusters produced by the g-means algorithm vs. Significance level α

As was sad before α is the desired probability of incorrectly rejecting H 0 . So the bigger the

significance level α, the bigger the probability of incorrectly rejecting H 0 and the bigger the

probability of splitting a cluster into two sub-clusters. That is why the bigger the α we use, the

more clusters are produced by the g-means algorithm.

For g-means algorithm we used 0.0001 . We present the results of the algorithm that

produced 70 clusters.

55

Histograms of clusters for some users:

user 136 labels

4

3.5

3

2.5

2

1.5

1

0.5

0

0

10

20

30

40

50

60

70

Figure 58 - User's Clusters produced by the g-means algorithm

The user 136 had 58 visits and sent 8788 packets. He was associated with 30 clusters; the most

common clusters for this user are clusters 11, 20, 29 and 35. Each of the most common clusters

contains 4 samples.

56

user 202 labels

6

5

4

3

2

1

0

0

10

20

30

40

50

60

70

Figure 59 - User's Clusters produced by the g-means algorithm

The user 202 had 59 visits and sent 28777 packets. He was associated with 31 clusters; the most

common cluster for this user is cluster 30 that contains 6 samples.

57

user 240 labels

3

2.5

2

1.5

1

0.5

0

0

10

20

30

40

50

60

70

Figure 60 - User's Clusters produced by the g-means algorithm

The user 240 had 55 visits and sent 23801 packets. He was associated with 36 clusters; the most

common clusters for this user are clusters 20, 22, 30, 59 and 60. Each of the most common

clusters contains 3 samples.

According to the histograms it is hard to represent a user by one typical cluster.

Evaluation of clustering

Typical objective functions in clustering formalize the goal of attaining high intra-cluster

similarity and low inter-cluster similarity. This is an internal criterion for the quality of a

clustering. But good scores on an internal criterion do not necessarily translate into good

effectiveness in an application. An alternative to internal criteria is direct evaluation in the

application of interest. For search result clustering, we may want to measure the time it takes

users to find an answer with different clustering algorithms. This is the most direct evaluation,

but it is expensive, especially if large user studies are necessary. (Manning, Raghavan, &

Schütze)

58

We will use external criteria of clustering quality which is called purity. Purity is a simple and

transparent evaluation measure.

To compute purity, each cluster is assigned to the class which is most frequent in the cluster.

Formally:

purity (, C )

1

max k c j

N k j

where {1 , 2 ,..., k } is the set of clusters and C {c1 , c2 ,..., cJ } is the set of classes.

For example, if the following figure represents the result of the clustering algorithm:

(Manning, Raghavan, & Schütze)

then the majority class and number of members of the majority class for the three clusters are:

x, 5 (cluster 1); , 4 (cluster 2); and ,3 (cluster 3). Purity is (1 17) (5 4 3) 0.71 .

59

The following figure shows the dependence of the purity on :

alpha vs purity

0.18

0.16

0.14

purity

0.12

0.1

0.08

0.06

0.04

0.02

0

0

0.01

0.02

0.03

0.04

0.05

alpha

0.06

0.07

0.08

0.09

0.1

Figure 61 - Evaluation measure Purity vs. significance level α

The bigger the , the more clusters are produced by the algorithm. It is logical that the purity

increases with the number of clusters.

Also we present a new evaluation measure E that is calculated in the following fashion:

For each user we determine the most common cluster and the number of samples contained in

this cluster than we divide the number of samples contained in the most common cluster by the

users’ total number of samples. We find the average of these received values:

E

1 N xi

,

N i 1 M i

where N - total number of users, xi - number of samples contained in the most common cluster

of user i , M i - total number of samples of user i .

60

We would like to examine if most of the users’ visits are clustered into one cluster, so the user is

represented by one typical cluster.

The measure E represents the level of possibility of representing each user by one typical

cluster.

The following figure shows the dependence of the evaluation measure E on :

0.14

0.13

0.12

0.11

0.1

0.09

0.08

0.07

0.06

0.05

0.04

0

0.01

0.02

0.03

0.04

0.05

alpha

0.06

0.07

0.08

0.09

0.1

Figure 62 - Evaluation measure E vs. significance level α

The bigger the , the more clusters are produced by the algorithm. It is logical that the E

decreases with the number of clusters. In the case of big number of clusters, a user is associated

with the big number of clusters and it is more difficult to represent a user by one typical cluster.

We see that the upper bound of E is 0.13 which means that g-means algorithm doesn’t achieve

good performance when clustering networks’ users.

61

Conclusions

The conclusions that were made in this work:

1. The Access Points’ arrival rate is coherent with the time of lectures and breaks. The Aps

show low activity during the lectures and high activity during the breaks.

2. k-means clustering algorithm based on average characteristics of networks’ users can’t

produce any isolated clusters. That is why we conclude that this algorithm can’t cluster

well the networks’ users.

3. g-means clustering algorithm based on the points that consist of the 4 characteristics (that

were described earlier) can’t represent each user by one typical cluster. That is why we

conclude that this algorithm can’t cluster well the networks’ users.

Bibliography

A Tutorial on Clustering Algorithms. (n.d.). Retrieved from

http://home.deib.polimi.it/matteucc/Clustering/tutorial_html/.

Brenner, P. (n.d.). A technical tutorial on the IEEE 802.11 protocol.

Hamerly, G., & Elkan, C. (n.d.). Learning the k in k-means.

Manning, C. D., Raghavan, P., & Schütze, H. (n.d.). Introduction to Information Retrieval.

62