LN 15: Virtual Memory Management

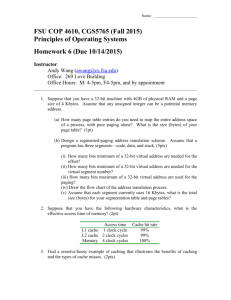

CS 201 Computer Systems Programming
Chapter 15: Paged Virtual Memory Management
Herbert G. Mayer, PSU
Status 6/28/2015

Syllabus

- Introduction
- High-Level Steps of Paged VMM
- VMM Mapping Steps Two Levels
- Definitions
- History of Paging
- Goals and Methods of Paging
- An Unrealistic Paging Scheme
- A Realistic Paging Scheme for 32-bit Architecture
- Typical PD and PT Entries
- Page Replacement Using FIFO
- Bibliography

Introduction

With the advent of 64-bit architectures, paged Virtual Memory Management (VMM) experienced a revival in the 1990s.

Originally, the force behind VMM systems (paged or segmented, or both on Multics [4]) was the need for more addressable memory than was typically available in physical memory.

Physical memory was scarce then because memory was expensive. The early technology of core memories carried a high price tag due to the tedious manual labor involved.

The high cost per byte of memory is gone, but the days of insufficient physical memory have returned, with larger data sets due to 64-bit addresses.

Paged VMM is based on the idea that some memory areas can be relocated out to disk, while other, reused areas can be moved back from disk into memory on demand.

Disk space abounds while main memory is more constrained. This relocation in and out, called swapping, can be handled transparently, thus imposing no additional burden on the application programmer.

The system must detect situations in which an address references an object that is on disk, and must then perform the hidden swap-in automatically and transparently, except for the additional time.

Paged VMM trades speed for address range. The loss in speed is caused by the logical-to-physical mapping and by disk accesses, which are slow compared with memory accesses.

A typical disk access can be thousands to millions of times more expensive, in number of cycles, than a memory access.

However, if virtual memory mapping allows large programs to run, albeit slowly, that previously could not execute due to their high memory demands, then the trade-off is worth the loss in speed.

The real trade-off is enabling memory-hungry programs to run slowly vs. not executing at all.

[Figure: Two-Level VMM Mapping on 32-Bit Architecture]

[Figure: Paged VMM, from Wikipedia [6]]

High-Level Steps of Paged VMM

- Some instruction references a logical address la.
- The VMM determines whether la maps onto a resident page.
- If yes, the memory access completes. In a system with a data cache, such an access is fast; see also the special-purpose TLB cache for VMM.
- If the memory access is a store operation (AKA write), that fact is recorded.
- If the access does not address a resident page, then the missing page is found on disk and made available to memory via swap-in, or else it is created for the first time ever, generally with initialization for system pages and no initialization for user pages; yet even for user pages a frame must be found.
- Making a new page available requires finding memory space of the size of a page frame, aligned on a page-size boundary.
- If such space can be allocated from unused memory (usually during initial program execution), a page frame is now reserved from available memory.
- If no page frame is available, a currently resident page must be swapped out and the freed space reused for the new page.
- Note the preference for locating unmodified pages, i.e. pages with no writes: no swap-out needed!
- Should the page to be replaced be dirty, it must first be written to disk.
- Otherwise a copy already exists on disk and the swap-out operation can be skipped.

VMM Mapping Steps Two Levels

- An instruction references the logical address (la).
- If the processor finds the datum in the L1 data cache, the operation is done.
- Otherwise the work for the VMM starts.
- The processor finds the start address of the Page Directory (PD).
- The logical address is partitioned into three bit fields: Page Directory Index, Page Table (PT) Index, and user-page Page Offset.
- The PD finds the PT, and the PT finds the user Page Frame (actual page address).
- Typical entries are 32 bits long, i.e. 4 bytes on a byte-addressable architecture.
- The entry in the PD is found by left-shifting the PD Index by 2 and adding the result to the start address of the PD; the entry found there yields a PT address.
- The PT Index, left-shifted by 2, is then added to the previously found PT address; the entry found there yields a Page Address.
- The Page Offset is added to the previously found Page Address; this yields the byte address.
- Along the way there may have been 3 page faults, with swap-outs and swap-ins.
- During book-keeping (e.g. finding clean pages, locating the LRU page, etc.) many more memory accesses can result.

Definitions

Alignment: Attribute of some memory address A, stating that A must be evenly divisible by some power of two. For example, word-aligned on a 4-byte, 32-bit architecture means that an address is divisible by 4, i.e. its rightmost 2 bits are both 0.

Demand Paging: Policy that allocates a page in physical memory only if an address on that page is actually referenced (demanded) in the executing program.

Dirty Bit: Single-bit data structure that tells whether the associated page was written after its last swap-in (or creation).

Global Page: A page that is used in more than one program; typically found in a multi-programming environment with shared pages.

Logical Address: Address as defined by the architecture; the address as seen by the programmer or compiler.
Synonyms: virtual address and, on Intel architecture, linear address. Antonym: physical address.

Overlay: Before the advent of VMM, the programmer had to manually relocate information out to free memory for the next data. This reuse of the same data area was called overlaying memory.

Page: A portion of logical address space of a particular size and alignment. The start address of a page is an integer multiple of the page size, thus it is also page-size aligned. A logical page is placed into a physical page frame. Antonym: Segment.

Page Directory: A list of addresses of Page Tables. Typically this directory consumes an integral number of pages as well, e.g. 1 page. In addition to page table addresses, each entry also contains information about presence, access rights, written to or not, global, etc., similar to Page Table entries.

Page Directory Base Register (pdbr): HW resource (typically a machine register) that holds the address of the Page Directory page. Due to alignment constraints, it may be shorter than 32 bits on a 32-bit architecture.

Page Fault: A logical address references a page that is not resident. Consequently, space must be found for the referenced page, and that page must be either created or, if it has existed before, swapped in. The page is then placed into a page frame.

Page Frame: A portion of physical memory that is aligned and fixed in size, able to hold one page. It starts at a boundary evenly divisible by the page size. Total physical memory should be an integral multiple of the page size. Logical memory is an integral multiple of the page size by definition.

Page Table Base Register (ptbr): Rare! Resource (typically a machine register) that holds the address of the Page Table. Used in a single-level paging scheme; a dual-level scheme uses the pdbr.

Page Table: A list of addresses of user pages. Typically each page table consumes an integral number of pages, e.g. 1. In addition to page addresses, each entry also contains information about presence, access rights, dirty, global, etc., similar to Page Directory entries.

Physical Memory: Main memory actually available physically on a processor. Antonym or related: logical memory.

Present Bit: Single-bit data structure that tells whether the associated page is resident or swapped out onto disk.

Resident (adj.): Attribute of a page (or of any memory object) referenced by executing code. If the object is physically in memory, it is resident; otherwise it is non-resident.

Swap-In: Transfer of a page of information from secondary storage to primary storage, fitting into a page frame in memory; a physical move from disk to physical memory. Antonym: Swap-Out.

Swap-Out: Transfer of a page of information from primary to secondary storage, i.e. from physical memory to disk. Antonym: Swap-In.

Thrashing: Excessive amount of swapping. When this happens, performance is severely degraded. This is an indicator that the working set is too small.

Translation Look-Aside Buffer: Special-purpose cache for storing recently used Page Directory and Page Table entries. Physically very small, yet effective. Lovingly referred to as tlb.

Virtual Contiguity: Memory management policy that separates physical from logical memory. In particular, virtual contiguity creates the impression that two addresses n and n+1 are adjacent in main memory, while in reality they are an arbitrary number of physical locations apart from one another. Usually, they are an integral number of page-size bytes (plus 1) apart.

Virtual Memory: Memory management policy that separates physical from logical memory. In particular, virtual memory can create the impression that a larger amount of memory is addressable than is really available on the target.

Working Set: The number of allocated physical page frames that guarantees that the program executes without thrashing. The working set is unique for any piece of software; moreover, it varies with the input data for a particular execution of such a piece of SW.

History of Paging

- Developed ~1960 at the University of Manchester for the Atlas Computer.
- Used commercially in the KDF-9 computer [5] of English Electric Co.
- In fact, the KDF-9 was one of the major architectural milestones in computer history, aside from von Neumann’s and Atanasoff’s machines.
- The KDF-9 incorporated the first cache and VMM, had a hardware display for stack frame addresses, etc.
- In the late 1960s to early 1980s, memory was expensive, processors were expensive and getting fast, and programs grew large.
- Insufficient memories were common, and became one aspect of the growing Software Crisis of the late 1970s.
- 16-bit minicomputers and 32-bit mainframes became common; 18-bit address architectures (CDC and Cyber) with 60-bit words were also used.
- Paging grew increasingly popular: fast execution was gladly traded for a large address range.
- By the mid 1980s, memories became cheaper and faster.
- By the late 1980s, memories had become cheaper yet, the address range remained 32-bit, and large physical memories became possible and available.
- Several supercomputers were designed with operating systems that provided no virtual memory at all. For example, Cray systems were built without VMM.
The Intel Hypercube NX® operating system had no virtual memory management.
- Just when VMM was falling into disfavor, the addressing limitation of 32 bits started to constrain real programs.
- In the early 1990s, 64-bit architectures became commonplace, rather than an exception.
- Intermediate steps between evolving architectural generations:
  - The Harris 3-byte system with 24-bit addresses, not quite making the jump to 32 bits.
  - The Pentium Pro® with 36 bits in extended addressing mode, not quite yet making the jump to 64-bit addresses.
  - Even the earliest Itanium® family processors had only 44 physical address bits, not quite making the jump to the physical 64-bit address space.
- By the end of the 1990s, 64-bit addresses were common, and 64-bit integer arithmetic was generally performed in hardware, no longer via slow library extensions.
- The Pentium Pro has 4 kB pages by default, and 4 MB pages if the page size extension (pse) bit is set in normal addressing mode.
- However, if the physical address extension (pae) bit and the pse bit are set, the default page size changes from 4 kB to 2 MB.

Goals, Methods of Paging

- Goal: make the full logical (virtual) address space available, even if a smaller physical memory is installed.
- Perform the mapping transparently.
Thus, if at a later time the target receives a larger physical memory, the same program will run unchanged, except faster.
- Map logical onto physical addresses, even if this costs some performance.
- Implement the mapping in a way that the overhead is small in relation to the total program execution.
- A necessary requirement is a sufficiently large working set; else thrashing happens.
- Moreover, a small overhead is accomplished by caching page directory and page table entries, or by placing the complete page directory into a special cache.

Unrealistic Paging Scheme

- Assume a 32-bit architecture, byte-addressable, with 4-byte words and 4 kB page size.
- Use a single-level paging mechanism; later we’ll cover multi-level mapping.
- On a 4 kB page, the rightmost 12 bits of each page address are all 0, or implied.
- The Page Table thus has 2^20 entries (32 - 12 = 20 address bits), each entry typically 4 bytes.
- A page offset of 12 bits identifies each byte on a 4 kB page.

[Figure: Logical Address, consisting of Page Address (20 bits) and Page Offset (12 bits)]

- With each Page Table entry consuming 4 bytes, this results in a page table of 4 MB.
- This is a miserable paging scheme, since the scarce resource, physical memory, already carries a 4 MB overhead.
- Note that Page Tables should be resident as long as some entries point to resident user pages.
- Thus the overhead may exceed the total available resource, which is memory!
- Moreover, almost all entries are empty; their associated pages do not exist yet; the pointers are null!

[Figure: Logical Address with Page Address (20 bits) and Page Offset (12 bits), Page Table (4 MB), and User Pages (4 kB)]

Problem: The one data structure that should be resident is too large, consuming much or most of the physical memory that is so scarce, or was so scarce in the 1970s, when VMM was productized.

So: break the table overhead into smaller units!
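The arithmetic behind the single-level scheme above can be checked with a minimal sketch. It assumes the parameters stated in the text (32-bit addresses, 4 kB pages, 4-byte entries); the names `split_single_level` and `translate` and the dict-based page table are hypothetical, chosen only for illustration.

```python
PAGE_SIZE = 4 * 1024      # 4 kB pages, as in the text
ADDRESS_BITS = 32         # 32-bit logical addresses
ENTRY_BYTES = 4           # one 4-byte page table entry per page

OFFSET_BITS = PAGE_SIZE.bit_length() - 1        # 12 bits of page offset
PAGE_NUMBER_BITS = ADDRESS_BITS - OFFSET_BITS   # 20 bits of page address
NUM_ENTRIES = 1 << PAGE_NUMBER_BITS             # 2**20 page table entries
TABLE_BYTES = NUM_ENTRIES * ENTRY_BYTES         # 4 MB of page table


def split_single_level(la):
    """Split a logical address into (page number, page offset)."""
    return la >> OFFSET_BITS, la & (PAGE_SIZE - 1)


def translate(la, page_table):
    """Map a logical address to a physical address.

    page_table is a hypothetical dict: page number -> frame start address.
    A missing key (KeyError) stands in for a page fault in this sketch.
    """
    page_number, offset = split_single_level(la)
    frame_address = page_table[page_number]
    return frame_address + offset
```

The dict holds only the pages that exist, which is exactly what the flat 4 MB table cannot do: it reserves all 2^20 entries even though almost all of them are empty.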
- Disadvantage of the additional mapping: more memory accesses.
- Advantage: avoiding the large 4 MB Page Table.
- We’ll forget this paging scheme.

Realistic Paging Scheme 32-Bit Arch.

- Next try: assume a 32-bit architecture, byte-addressable, with 4-byte words and 4 kB page size.
- This time use a two-level paging mechanism, consisting of a Page Directory and Page Tables in addition to user pages.
- With 4 kB page size, again the rightmost 12 bits of any page address are 0, implied.
- All user pages, page tables, and the page directory can fit into identically sized page frames!

Mechanism to determine a physical address:

- Start with a HW register that points to the Page Directory.
- Or else implement the Page Directory as a special-purpose cache.
- Or else place the PD into an a-priori known memory location.
- But there must be a way to locate the PD, best without a memory access.

Design principle: have user pages, Page Tables, and the Page Directory look similar; for example, all should consume one page frame each.

Every logical address is broken into three parts:

1. two indices, generally 10 bits each on a 32-bit architecture with 4 kB pages
2. one offset, generally 12 bits, to locate any offset on a 4 kB page
3. the total adds up to 32 bits

- The Page Directory Index is indeed an index; to find the actual entry in the PD, first left-shift it by 2 (<< 2, i.e. multiply by 4), and then add this number to the start address of the PD; thus a PD entry can be found.
- Similarly, the Page Table Index is an index; to find the entry in a page table, left-shift it by 2 (<< 2) and add the result to the start address of the PT found in the previous step; thus an entry in the PT is found.
- The PT entry holds the address of the user page, yet the rightmost 12 bits are all implied, are all 0, hence don’t need to be stored in the page table.
- The rightmost 12 bits of a logical address define the page offset within the found user page.
- Since pages are 4 kB in size, 12 bits suffice to identify any byte in a page.
- Given that the address of a user page is found, add the offset to its address, and the final byte address (physical address) is identified.

[Figure: Logical Address with PD Index, PT Index, and Page Offset; pdbr, Page Directory, Page Tables, and User Pages]

- Disadvantage: multiple memory accesses, up to 3 in total; this can be worse if the page-replacement algorithm also has to search through memory.
- In fact, it can become far worse, since any of these 3 accesses could cause a page fault, resulting in up to 3 swap-ins, with disk accesses and many memory references; it is a good design principle never to swap out the page directory, and rarely, if ever, the page tables!
- Some of these 3 accesses may cause a swap-out, if a page frame has to be located, making matters even worse.
- The performance loss could be tremendous, i.e. several decimal orders of magnitude slower than a single memory access.
- Thus some of these data structures should be cached.
- In all higher-performance architectures, e.g. Intel Pentium Pro®, a Translation Look-Aside Buffer (TLB) is a special-purpose cache of PD or PT entries.
- It is also possible to cache the complete PD, since it is limited in size (4 kB).
- Conclusion: VMM via paging works only if locality is good; put another way, paged VMM works well only if the working set is large enough; else there will be thrashing.

Typical PD and PT Entries

- Entries in the PD and PT need 10 + 10 = 20 bits for the address; the lower 12 bits are implied.
- The other 12 bits (beyond the 20) in any page directory or page table entry can be used for additional, crucial information; for example:
  - P-Bit, AKA Present bit: is the referenced page present (aka resident)?
  - R/W/E bits: can the referenced page be read, written, executed, or all of these?
  - User/Supervisor bit: OS dependent; is the page reserved for privileged code?
- Typically the P-Bit is positioned for quick, easy access: the rightmost bit in the 4-byte word.
- Some information is unique to the PD or the PT.
- For example, the P-Bit is not needed in PD entries if Page Tables must be permanently present.
- On systems with varying page sizes, page size information must be recorded.

[Figure: Page Directory Entry with fields Page Table Address, unused bits, Accessed Bit, User/Supervisor, Read/Write, Present Bit]

- If the Operating System exercises a policy of never swapping out Page Tables, then the respective PT entries in the PD do not need to track whether a PT was modified (i.e. the Dirty bit).
- Hence there may be reasons that PT and PD entries exhibit minor differences.
- But in general, the format and structure of PTs and PDs are very similar, and the sizes of user pages, PTs, and PDs are identical.

[Figure: Page Table Entry with fields User Page Address, Dirty Bit, Accessed Bit, User/Supervisor, Read/Write, Present Bit]

Page Replacement Using FIFO

- When a page needs to be swapped in or created for the first time, it is placed into an empty page frame.
- How does the VMM find an empty page frame?
- If no frame is available, another existing page needs to be removed from its page frame. This page is referred to as the victim page.
- The victim page may even be pirated from another process’s set of page frames; but this is an OS decision, the user generally has no such control!
- If the replaced page, i.e. the victim page, was modified, it must be swapped out before its frame can be overwritten, to preserve the recent changes.
- Otherwise, if the page is unmodified, it can be overwritten without swap-out, as an exact copy already exists on mass storage; yet it must be marked as “not present” in its PT entry.
- The policy used to identify a victim page is called the replacement policy; the algorithm is named the replacement algorithm.
- Typical replacement policies are the FIFO method, the random method, or the LRU policy, similar to replacing cache lines in a data cache.
- When storing the age, it is sufficient to record relative ages, not necessarily the exact time when the page was created or referenced! Again, this is like a data cache line.
- For example, LRU information may be recorded implicitly by linking pages in a list, removing the victim at the head, and adding a new page at the tail.
- This MAY cause many memory references, making such a method of repeated lookups in memory impractical!
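The list-based LRU bookkeeping described above, together with the rule that only a modified victim needs a swap-out, can be sketched as follows. This is a minimal illustration, not an OS implementation: the class name `LruFrames` and its counters are hypothetical, and Python's `collections.OrderedDict` stands in for the linked list of resident pages.

```python
from collections import OrderedDict


class LruFrames:
    """Resident pages in LRU order: head = next victim, tail = most recent."""

    def __init__(self, num_frames):
        self.num_frames = num_frames
        self.pages = OrderedDict()   # page number -> dirty flag
        self.faults = 0
        self.swap_outs = 0

    def access(self, page, write=False):
        """Reference a page; write=True marks it as modified (dirty)."""
        if page in self.pages:
            # Hit: re-link the page at the tail and remember any write.
            self.pages.move_to_end(page)
            self.pages[page] = self.pages[page] or write
            return
        # Miss: page fault; evict the head of the list if no frame is free.
        self.faults += 1
        if len(self.pages) >= self.num_frames:
            _victim, dirty = self.pages.popitem(last=False)
            if dirty:
                self.swap_outs += 1  # modified victim must be written back
        self.pages[page] = write     # new page enters at the tail
```

With 2 frames and the accesses 1 (write), 2, 1, 3, 4, this sketch records 4 faults but only 1 swap-out: page 2 is evicted clean, while page 1 was written and must go back to disk. Every hit touches the list, which is the per-reference cost the text warns about.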
FIFO Sample, Track Page Faults

- In the two examples below, the numbers refer to 5 distinct user pages that shall be accessed.
- We use the following reference string of these 5 user pages, stolen with pride from [7]: 1, 2, 3, 4, 1, 2, 5, 1, 2, 3, 4, 5
- We’ll track the number of page faults and hits caused by an assumed FIFO replacement algorithm.
- But strict FIFO age tracking requires an access to page table entries for each page reference! See later some significant simplifications in Unix!

Sample 1: In the first sample, we use 3 physical page frames for placing 5 logical pages. Let us track the number of page faults, given 3 frames! Clearly, when a page is referenced for the first time, it does not exist yet, so the memory access will cause a page fault automatically. Page accesses causing a fault are marked F in the last row; each column shows the user pages placed in the page frames after that reference.

| Reference | 1 | 2 | 3 | 4 | 1 | 2 | 5 | 1 | 2 | 3 | 4 | 5 |
|---|---|---|---|---|---|---|---|---|---|---|---|---|
| Page frame 0 | 1 | 1 | 1 | 4 | 4 | 4 | 5 | 5 | 5 | 5 | 5 | 5 |
| Page frame 1 |  | 2 | 2 | 2 | 1 | 1 | 1 | 1 | 1 | 3 | 3 | 3 |
| Page frame 2 |  |  | 3 | 3 | 3 | 2 | 2 | 2 | 2 | 2 | 4 | 4 |
| Fault | F | F | F | F | F | F | F |  |  | F | F |  |

- We observe a total of 9 page faults for the 12 page references in Sample 1.
- Would it not be desirable to have a smaller number of page faults?
- With fewer faults, performance will improve, since less swap-in and swap-out activity would be required.
- Generally, adding computing resources improves SW performance.

Sample 2: Run this same page-reference example with 4 page frames now; again faults are marked F in the last row.

| Reference | 1 | 2 | 3 | 4 | 1 | 2 | 5 | 1 | 2 | 3 | 4 | 5 |
|---|---|---|---|---|---|---|---|---|---|---|---|---|
| Page frame 0 | 1 | 1 | 1 | 1 | 1 | 1 | 5 | 5 | 5 | 5 | 4 | 4 |
| Page frame 1 |  | 2 | 2 | 2 | 2 | 2 | 2 | 1 | 1 | 1 | 1 | 5 |
| Page frame 2 |  |  | 3 | 3 | 3 | 3 | 3 | 3 | 2 | 2 | 2 | 2 |
| Page frame 3 |  |  |  | 4 | 4 | 4 | 4 | 4 | 4 | 3 | 3 | 3 |
| Fault | F | F | F | F |  |  | F | F | F | F | F | F |

- A strange thing happened: now the total number of page faults has increased to 10, despite having had more resources: one additional page frame!
- This phenomenon is known as the infamous Belady Anomaly.

Bibliography

1. Denning, Peter: 1968, “The Working Set Model for Program Behavior”, ACM Symposium on Operating Systems Principles, Vol 11, Number 5, May 1968, pp 323-333
2. Organick, Elliott I. (1972). The Multics System: An Examination of Its Structure. MIT Press
3. Alex Nichol, Virtual Memory in Windows XP: http://www.aumha.org/win5/a/xpvm.php
4. Multics history: http://www.multicians.org/history.html
5. KDF-9 development history: http://archive.computerhistory.org/resources/text/English_Electric/EnglishElectric.KDF9.1961.102641284.pdf
6. Wiki paged VMM: http://www.cs.nott.ac.uk/~gxk/courses/g53ops/Memory%20Management/MM10-paging.html
7. Silberschatz, Abraham and Peter Baer Galvin: 1998, “Operating System Concepts”, Addison Wesley, 5th edition
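The fault counts of the two FIFO samples in this chapter (9 faults with 3 page frames, 10 faults with 4) can be checked with a short simulation. This is a sketch under the chapter's assumptions: strict FIFO replacement and initially empty frames. The function name `fifo_faults` is hypothetical.

```python
from collections import deque


def fifo_faults(references, num_frames):
    """Count page faults for a reference string under strict FIFO."""
    resident = set()    # pages currently held in page frames
    arrival = deque()   # resident pages by arrival; leftmost is the oldest
    faults = 0
    for page in references:
        if page in resident:
            continue    # hit: FIFO keeps no per-reference age information
        faults += 1
        if len(resident) == num_frames:
            victim = arrival.popleft()   # evict the longest-resident page
            resident.remove(victim)
        resident.add(page)
        arrival.append(page)
    return faults


# Reference string used in Sample 1 and Sample 2.
REFERENCES = [1, 2, 3, 4, 1, 2, 5, 1, 2, 3, 4, 5]
```

Calling `fifo_faults(REFERENCES, 3)` and `fifo_faults(REFERENCES, 4)` reproduces the Belady Anomaly: the run with the additional frame incurs one more fault.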