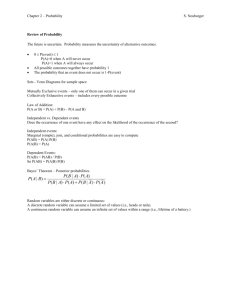

Discrete Random Variate Generation

advertisement

Generating Random Variates

• Use Trace Driven Simulations

• Use Empirical Distributions

• Use Parametric Distributions

Ref: L. Devroye Non-Uniform Random Variate Generation

Trace-driven Simulations

+

+

-

Strong validity argument

Easy to model systems

Expensive

Validity (data representative?)

Sensitivity analysis difficult

Results cannot be generalized to other systems

(restrictive)

- Slow

- No rare data information

Empirical Distributions (histograms)

+

+

+

-

Strong validity argument

Easy to model systems

Replicate

Data may not be representative

Sensitivity analysis is difficult

Results cannot be generalized to other systems

Difficult to model dependent data

Need a lot of data (must do conditional sampling)

Outliers and rare data problems

Parametric Probability Model

+ Results can be generalized

+ Can do sensitivity analysis

+ Replicate

- Data may not be representative

- Probability distribution selection error

- Parametric fitting error

Random Variate Generation:

General Methods

•

•

•

•

Inverse Transform

Composition

Acceptance/Rejection

Special Properties

Criteria for Comparing Algorithms

• Mathematical validity – does it give what it is

supposed to?

• Numerical stability – do some “seeds” cause

problems?

• Speed

• Memory requirements – are they excessively

large?

• Implementation (portability)

• Parametric stability – is it uniformly fast for all

input parameters (e.g. will it take longer to

generate PP as rate increases?)

Inverse Transform

•

Consider a random variable X with a

(cumulative) distribution function FX

•

Algorithm to generate X ~ FX :

1. Generate U ~ U(0,1)

2. Return X = F-1(U)

•

F-1(U) will always be defined because

0U1 and range of F is [0,1] (if monotonic)

•

What if F discrete or has “atoms”? (rt. cont.)

Consider discrete random variate, X

P{X=k} = Pk

-4

1 2

Pk = 1 so lay out Pks along Unit Interval

P-4

P1

0

P2

1

U (0,1)

Random Number, U, falls in interval k with Prob = Pk

Return k corresponding to interval (use an array or table)

Equivalent to “inverting” the CDF

P{X=k}

-4

1

2

Fx(k) = Prob{X≤k}

1

U1

U2

X2=-4

X1=2

x

Generating Continuous Random Variates

FX(x)

1

U1

U2

X2

X1

x

Proof Algorithm Works

• Must show the X generated by the algorithm

is, in fact, from FX : P{X x} = FX(x)

• P{X x} = P{FX

-1(U)

x} =

defn

P{U FX(x)} = FX(x) = P{X x}

defn

• First equality is conjecture, X = FX-1 (U),

second is mult. by Fx (monotone non-dec)

• Note: The = in the conjecture should really

be , meaning RVs have same distribution.

e.g. Exponential R.V.

• X is an exponential random variable with

mean

1 e

F x

0

x/

if x 0

otherwise

• To generate values of X set U = Fx(X) and

solve for (rv) X.

X = - ln(1-U) (or X = - ln(U))

Weibull Random Variable (with parameters and )

-(x/ )

PDF: f(x) = - xe -1

e

, x0, 0 otherwise

-(x/ )

CDF:F(x) = 1-

, x0

Setting F(x) = u~U(0,1)

leads to x = (-LN(1-u))1/.

Triangular Distribution

— Used when a crude, single mode distribution is needed.

f(x)

a

b

x

c

2(x-a)/(b-a)(c-a) for a x b

— The PDF is f(x) =

2(c-x)/(c-a)(c-b) for b x c

0

otherwise

where a and c are the boundaries, and b is the mode.

—

The CDF is F(v) =

(v-a)2/(b-a)(c-a) for a v b

1 - (c-v)2/(c-a)(c-b) for b v c

—

By the inversion method,

v = a + (u)1/2[(b-a)(c-a)]1/2 for 0 u (b-a)/(c-a)

c - (1-u)1/2[(c-a)(c-b)]1/2 for (b-a)/(c-a) u 1

where F(b) = (b-a)/(c-a)

Geometric Random Variable (with parameter p)

- Number of trials until the first success (with probability p)

- Probability mass function (PMF) P{X=j} = p(1-p) j-1, j=1,2,…

- Cumulative distribution function (CDF)

F(k) = j<k p(1-p) j-1 = 1- (1-p)k

If u = F(k) ~U(0,1), then k = LN(1-u)/(1-p) +1 = LN(1-u)/(1-p).

u = 1- (1-p)k

1- u = (1-p)k

k = LN(1-u)/LN(1-p)

(use floor function to discretize)

Inverse Transform: Advantages

• Intuitive

• Can be very fast

• Accurate

• One random variate generated per U(0,1) random

number

• Allows variance-reduction techniques to be

applied (later)

• Truncation easy

• Order statistics are easy

Inverse Transform:

Disadvantages

• May be hard to calculate F-1(U) in terms of

computer operations

• F-1(U) may not even exist

• If use power-series expansions, stopping

rule?

• For discrete distributions, must perform

search

Conditional Distributions - want to generate X

conditioned that it is between a and b (truncated)

Fx(x)

Fx(b)

U’

Fx(a)

a

b

Generate U’ between Fx(a) and Fx(b) (how?)

U’ = Fx(a) + RND*(Fx(b) - Fx(a))

X = Fx-1(U’)

Inverse Transform for Discrete

Random Variates

• Inverse transform can be used for discrete

random variables, also

• E.g., can use empirical distribution function

(see next slide)

• If using known probabilities, replace 1/n

step size with p(xi) step size

Variate Generation Techniques:

Empirical Distribution

1

1/n

0

x1

x2 x3

xn

How to sample from a discrete empirical distribution:

• Determine {(x1, F(x1)), (x2, F(x2)),…, (xn, F(xn))}

• Generate u

• Search for interval (F(xi) u F(xi+1))

• Report xi

Order Statistics

• ith order statistics is the ith smallest of n

observations

• Generate n iid observations x1, x2,…,xn

• Order the n observations

• x[1], x[2],…,x[n]

• x[1] describes the failure time in a serial system

• x[n] describes the failure time in a parallel system

• How can we generate x[1] and x[n] using one

U(0,1) variate?

Order Statistics

• Serial system

1

2

• Parallel system

…

1

2

…

n

n

Order Statistics

• F[n](a) = P{x[n]a} = P{Max{xi}a}

= P{x1a, x2a, …, xna}

= P{x1a} P{x2a} … P{xna}(indep)

= Fn(a)

(identically distributed)

Represents CDF of failure time of parallel

system using CDF of failure time of individual

component

• Inversion: Fn(a) = U implies a = F-1((U)1/n)

Order Statistics

• F[1](a) = P{x[1]a} = 1 - P{x[1]>a}

=1-P{Min{xi}>a}

= 1 - P{x1>a, x2>a, …, xn>a}

= 1 - P{x1>a} P{x2>a} … P{xn>a}

= 1 – (1 - F(a))n

Represents CDF of failure time of serial

system using CDF of failure time of

individual component

Order Statistics

• Inversion:

1 – (1 – F(a))n = u implies

a = F-1(1 – (1 – u)1/n)

• Find ith order statistic:

e.g. 2nd order statistic:

find X[1],

now sample n-1 from U[F(X[1]), 1]

need 2 uniforms to generate X[2]

NOTE

—

—

As n+, u1/n 1 and 1 - (1 - u)1/n 0 for u ~ U(0, 1)

Once the CDF of the order statistics are known, the

densities (PDFs) can be obtained by differentiating.

u

F(X)

1

1/n

u

U(0,1)

1- (1-u)1/n

0

F-1(1-(1-u)1/n)

F-1(u) F-1(u1/n)

X

Example

Let X be exponential with mean = 1/5, and u U(0, 1).

Let n = 100

Then x[100] = (-1/5)1n(1-u1/100) and

x[1] = (-1/5)1n(1 - (1 - (1 - u)1/100)) = (-1/5) 1n((1 - u)1/100))

For u=.2,

For u=.5,

For u=.8,

x[100] = .827 and x[1] = .0004.

x[100] = .995 and x[1] = .0014.

x[100] = 1.22 and x[1] = .0032.

Composition

• Can be used when F can be expressed as a

convex combination of other distributions

Fi, where we hope to be able to sample from

Fi more easily than from F directly.

i 1

i 1

F x pi Fi x and f x pi f i x

• pi is the probability of generating from Fi

p

i 1

i

1

Composition: Graphical Example

f(x)

0.5

Area = 0.5

Area = 0.5

0

x

Composition: Algorithm

1. Generate positive random integer I such

that

P{I = i} = pi

for i = 1, 2, …

2. Return X with distribution function FI

Think of Step 1 as generating I with mass

function pI. (Can use inverse transform.)

Composition: Another Example

f(x)

1. Generate U1

2. If U1 a, generate

and return U2

2-a

Area = 1 - a

3. Else generate and

return X from

right-triangular

distribution

a

Area = a

x

0

1

Acceptance/Rejection

• X has density f(x) with bounded support

• If F is hard (or impossible) to invert, too

messy for composition... what to do?

Generate Y from a more manageable

distribution and accept as coming from f

with a certain probability

Acceptance/Rejection Intuition:

Density f(x) is really ugly ... Say, Orange!

M’(x)

f(x)

x

M’ is a “Nice” Majorizing function..., Say Uniform

Intuition:

Throw darts at rectangle under M’ until hit f

M’(x)

Missed again!

Reject!

f(x)

X

Accept X! - done

Proof: Prob{Accept X} is Proportional to height of f(X)

x

Acceptance/Rejection

•

•

Create majorizing function M(x) f(x) for all

x; normalize M( ) to be a density (area=1)

r(x) = M(x)/c where c is the area under M(x)

Algorithm:

1. Generate X from r(x)

2. Generate Y from U(0,M(X)) (independent of X)

3. If Y f(X), Return X; else go to 1 and repeat

Generalized Acceptance Rejection: f(x)<g(x) for all x

1) Generate x with pdf g(x)/Ag.

2) Generate y U[0, g(x)]

3) If y<f(x), accept x, otherwise, go to 1)

THEOREM: P{X t |Y f(X)} = F(t) = -t f(z)dz.

Proof:

P{X t |Y f(X)} = P{X t,Y f(X)} / P{Y f(X)}

P{X t, Y f(X)}

f ( x)

0

(1/g(x)(g(x)/Ag) dy dx

=

=

t

t

f ( x)

0

(1/Ag) dy dx

t

=

(f(x)/Ag) dx

Y uniform 0-g(x)

X distn g(x)/Ag

and indep.

Second,

P{Y f(X)}

=

=

f ( x)

g(x)/(g(x)/Ag) dy dx

0

f ( x)

(1/Ag) dy dx

0

= (f(x)/Ag) dx = 1/Ag

t

Therefore, P{X t | Y f(X)} = f(x) dx

Performance of Algorithm

Will Accept X with a probability equal to

(area under f(X))/ (area under M’(X))

If c = area under M’( ), Probability of

acceptance is 1/c – so want c to be small

What is the expected number of U’s needed to

generate one X?

2c Why?

Each iteration is coin flip (Bernoulli Trial).

Number of trials until first success is Geometric, G(p)

E[G] = 1/p and here p=1/c (2 Us per iteration)

Increase Prob(accept) and stop with

tighter Majorizing Function, M(X)

M’(x)

M(x)

f(X)/M(X)

f(X)

f(x)

x

X

What if f(x) is hard to evaluate?

Use minorizing function m(x) f(x)

M(x)

m(x)

f(x)

Acceptance/Rejection

Final algorithm:

1. Generate X from r(x)

2. Generate U from U(0,1) (indep. of X)

3. If U m(X)/M(X), return X and stop

4. Else if U f(X)/M(X), return X and stop

5. Else go to 1 and try again

Biggest Problems

— Choosing majorizing and minorizing functions such

that it is easy to generate points under the majorizing

function and the minorizing function is easy to compute

(f(x) may be difficult to compute)

— We want it to be easy to sample under g(x)

AND close to f(x) (contradictory constraints)

Result

— As (dimension of x) +,

(Area under f(x))/(Area in “cube”) 0

Special Properties

• Methods that make use of “special

properties” of distributions to generate them

n

• E.g.

= X where Xi is N(0,1) random

variable i 1

• Z1 and Z2 are 2 with k1 and k2 degrees of

freedom, then

is Fk1,k2

Z /k

n2

2

i

X

1

1

Z 2 / k2

Special Properties

• An Erlang is a sum of exponentials

ERL (r,) =i=1,2,…,r (- 1n(ui)) = - 1n(i=1,2,…,r ui))

(Gamma allows r to be non-negative real)

• If X is Gamma (,1), and Y = X, then Y is Gamma (, ).

If = 1, then X is Exp (1).

• Beta is a ratio of Gammas

X1 is distributed Gamma (1, )

X2 is distributed Gamma (2, )

X1 and X2 are independent

Then X1/(X1+X2) is distributed Beta (1, 2)

Binomial Random Variable Binomial(n,p)

(Bernoulli Approach)

0)

X = 0, i = 1

1)

Generate U distributed U(0, 1)

2)

If U p, X X+1

If U > p, X X

If in, set i i+1 and go to 1)

If i = n, stop with X distributed Binomial (n,p)

Geometric Random Variable Geometric (p)

(Bernoulli Approach) (already saw inversion approach)

0)

X=1

1)

Generate U U(0, 1)

2)

If U p, X geometric (p)

If U> p, X X+1 and go to 1)

• Negative Binomial (r, p) is the sum of r IID Geometric (p)

Example of Special Properties:

Poisson RV, P, is sum of exponentials in unit interval

E[1/]

E[1/]

E[1/]

E[1/]

1

0

Has same probability as a Poisson() = 3...

Generate E[1/] - ln{Ui} (–L*LN{RND} in sigma)

k

k+1

i=1

i=1

- ln{Ui} ≤ 1 ≤ - ln{Ui} <=> P = k

k

k+1

i=1

i=1

- ln{Ui} ≤ 1 ≤ - ln{Ui}

k

k+1

- ln{Ui} ≤ 1 ≤ - ln{ Ui}

k

k+1

ln{Ui} ≥ -1/ ≥ ln{ Ui}

i=1

(multiple by -1/ )

i=1

i=1

Ui ≥

(log of sum is product of logs)

i=1

i=1

k

<=> P = k

e-1/ ≥

k+1

Ui

(take exp of everything-monotonic)

i=1

Algorithm: to generate Poisson (mean = ), multiply

iid Uniforms (RND) until product is less than e-1/ .

Special Properties:

Assume have Census Data only for Poisson Arrivals

eg: Hospitals, Deli Queues, etc.

Use fact that, given K Poisson events occur in T,

The Event times are distributed as K Uniforms on (0,T)

First arrival time:

Generate T1 = T*U[1:K] = smallest in K Uniforms on (0,T) (min order statistic)

= T*(1 – U1/K) (beware of rounding errors on computer)

Next interarrival time = smallest of K-1 Uniforms on (0,T-T1):

Generate T2 = (T-T1)*(1 – U1/(K-1))

and so on until get K events in T

Special Properties

• Given X ~ N(0,1), can generate

X’ ~ N(, 2) as X’ = + X

• So sufficient to be able to generate X

• Inversion not possible

• Acceptance/rejection an option

• But better methods have been developed

Normal Random Variables

• Need only generate N(0, 1)

— If X is IID N(0, 1), then Y = X+ is N(,)

1) Crude (Central Limit Theorem) Method

• U U(0, 1), hence E(U) = .5 and V(U) = 1/12.

• Set Y =

n

Ui, hence E(Y) = n/2 and V(Y) = n/12

i1

• By CLT, (Y-n/2)/(n/12)1/2 D N(0, 1) as n +

• Consider

Ui-6 (i.e., n = 12).

12

i1

However n may be too small!

Box-Muller Method

• Generate U1, U2 U(0,1) random numbers

• Generate X1 2ln U1 cos 2 U 2

and

X 2 2ln U1 sin 2 U 2

• X1 and X2 will be iid N(0,1) random variables

Box-Muller Explanation

• If X1 and X2 iid N(0,1)

• D2 = X12 + X22 is 22 which is the SAME as

an Exponential(2)

D 2ln U

• X1 = D cos

X2 = D sin

D

X2

where = 2U2

X1

Polar Method

1.

2.

3.

4.

5.

Let U1, U2 be U(0,1),

define Vi = 2Ui – 1: Vi is U(-1,1)

Define S = V12 + V22

If S>1, go to 1.

2ln S

If S1, then Y

S

1. X1=V1Y and X2=V2Y are iid N(0,1)

Polar Method Graphically

(-1,1)

(1,1)

S

V1

(-1,-1)

V2

(1,-1)

Let’s Look at some Normal Random Variates...

Notes

— X12 + X 22 is distributed 22 or exponential (2)

or ( V12 V22 )Y2 = -2ln(S)

is true if

Theorem: S ~ U(0, 1) given S 1.

Proof:

P{S z | S 1} = P{Sz , S1}/ P{S1} = P{Sz }/ P{S1}

= P{ V12 V22 z | S 1}

(since V12 V22 =S)

2

2

and ( V1 V2 )Y2 = -21n(S))

= P{-(z- V22)1/2 V1 z- V22 )1/2} / P{S1}

=

=

z1/ 2

z1/ 2

/2

/2

1/2 (2/4) (z- V22 )1/2 dV2 / ( /4)

(zcos2()/2) d/ ( /4) = z

(later)

If S>1, go to (*).

Rejection occurs with probability P{S>1}=1- /4

Proof:

P{S 1} = P{ V12 + V22 1}

= P{-(1- V22 )1/2 V1 (1- V22 )1/2 }

=

=

1

π/2

-1

-π/2

(1- V22 )1/2 dV2 /2

(cos2 ()/2)d = /4

Scatter Plot of X1 and X2 from NOR{} in Sigma

Two successive Normals from Sigma

530

372

214

X2

56

-102

-260

-418

-382

-234

-86

62

X1

210

358

506

Histograms of X1 and X2 from NOR{} in Sigma

Two successive Normals from Sigma

648

540

515

432

412

Count 324

Count 309

216

206

108

103

0

-418

Two successive Normals from Sigma

618

-387

-356

-325

-294

-263

-232

-201

-170

-139

-108

-77

-46

-15

X2

16

47

78

109

140

171

202

233

264

295

326

357

0

-382

-353

-324

-295

-266

-237

-208

-179

-150

-121

-92

-63

-34

-5

X1

24

53

82

111

140

169

198

227

256

285

314

343

Autocorrelations of X1 and X2 from NOR{} in Sigma

Two successive Normals from Sigma

1

Two succ essive Normals from Sigma

1

X2 0

X1 0

-1

-1

0

1

2

3

4

5

Lag

6

7

8

9

10

0

1

2

3

4

5

Lag

6

7

8

9

10

Histograms of X1 and X2 from Exact Box Muller Algorithm

C:\TEACHING\SIMULA~1\__IEOR~1\BOXMULLR.OUT (X2 Histogram)

924

770

835

616

668

Count 462

Count

308

501

334

154

0

-247

C:\TEACHING\SIMULA~1\__IEOR~1\BOXMULLR.OUT (X1 Histogram)

1002

167

-227

-207

-187

-167

-147

-127

-107

-87

-67

-47

-27

-7

13

X2

33

53

73

93

113

133

153

173

193

213

233

253

0

-296

-274

-252

-230

-208

-186

-164

-142

-120

-98

-76

-54

-32

-10

X1

12

34

56

78

100

122

144

166

188

210

232

254

Autocorrelations of X1 and X2 - Exact Box Muller Algorithm

C:\TEACHING\SIMULA~1\__IEOR~1\BOXMULLR.OUT (Autocorrelations of X1)

1

C:\TEACHING\SIMULA~1\__IEOR~1\BOXMULLR.OUT (Autocorrelations of X2)

1

X1 0

X2 0

-1

-1

0

1

2

3

4

5

Lag

6

7

8

9

10

0

1

2

3

4

5

Lag

6

7

8

9

10

Scatter plot of X1 and X2 - Exact Box Muller Algorithm

BOXMULLR.OUT (X2 vs. X1)

371

268

165

X2

62

-41

-144

-247

-296

-183

-70

43

156

269

382

X1

This is weird! WHY? – Marsaglia’s Theorem in polar coordinates

Discrete Random Variate Generation

Discrete random variables can be tough to generate.

eg. Binomial (N,p), with N large ( say...yield from a

machine)... always check web for updates. (approx.)

If a large number of discrete observations are

needed, how can they be generated efficiently?

Discrete Random Variate

Generation

• Crude method: Inversion

Requires searching (sort mass function first)

• Continuous approximation

e.g. “Geometric is the greatest integer <

Exponential”

• Alias method, ref: Kromal & Peterson,

Statistical Computing 33.4, pp.214-218

Alias Method

• Use when

— there are a large number of discrete values.

— you want to generate many variates from this distribution.

• Requires only one U(0, 1) variate.

• Transforms a discrete random variable into a discrete

uniform random variable with aliases at each value

(using conditional probability).

Example:

p1 = .2

p2 = .4

p3 = .35

p4 = .05

If uniform, pi = .25 for i = 1, 2, 3, 4

.25

.25

1

2

3

4

Define Qi = probability that i is actually chosen given

that i is first selected

=

P{i chosen | i selected}

Ai is where to move (alias) to if i is not chosen

.25

2

1

3

2

1

2

A1 = 2

Q1 = .8

A2 = 3

Q2 = .6

3

2

4

3

A3 = 3

Q3 = 1.

4

A4 = 2

Q4 = .2

Qi = probability that i is actually chosen given that i is first selected

= P{i chosen | i selected}

Ai is where to move (alias) to if i is not chosen

1

0.

2

.2

2

.25

3

.4

3

.50

4

.75 .80

Other Possible Alias Combinations

A1 = 3

Q1 = .8

A2 = 2

Q2 = 1.

A3 = 2

Q1 = .4

A4 = 3

Q2 = .2

A1 = 3

Q1 = .8

A2 = 3

Q2 = .8

A3 = 3

Q1 = 1.

A4 = 2

Q2 = .2

2

1.0

Alias Table Generation Algorithm

For i = 1, 2, . . .,n, Do: Qi = npi

G = {i: Qi>1}

(needs extra probability above 1/n}

H = {i: Qi<1 and Ai has not been assigned}

(shift probability away from)

While H is nonempty Do:

j: any member of H

k: any member of G

Aj = k

Qk = Qk - (1-Qj)

If Qk <1 then:

G = G\{k}

H = H{k}

end

H = H\{j}

end

Example

i:

p i:

Q i:

G

H

j = 3, k = 1

i:

p i:

Q i:

G

H

Ai

1

.210

1.050

X

1

.210

.495

2

.278

1.390

X

2

.278

1.390

X

3

.089

.445

4

.189

.945

X

X

3

.089

.445

4

.189

.945

X

X

1

5

.234

1.170

X

5

.234

1.170

X

j = 1, k =2

i:

p i:

Q i:

G

H

Ai

j = 2, k = 5

i:

p i:

Q i:

G

H

Ai

1

.210

.495

2

.278

.885

3

.089

.445

X

2

1

.210

.495

4

.189

.945

X

1

2

.278

.885

3

.089

.445

4

.189

.945

X

2

5

.234

1.170

X

5

1

5

.234

1.055

X

j = 4, k =5

i:

p i:

Q i:

G

H

Ai

1

.210

.495

2

.278

.885

3

.089

.445

4

.189

.945

2

5

1

5

5

.234

1.000

X

To verify that the table is correct, use

P{i chosen} =

=

P{i chosen | j selected} P{j selected}

(Qi/n) + (1-Qj)(1/n)

j i

p1 = (1/5) (.495 +.555)

= .210

p2 = (1/5) (.885 +.505)

= .278

p3 = (1/5) (.445)

= .089

p4 = (1/5) (.945)

= .189

p5 = (1/5) (1.000 +.115 + .055) = .234

Using the Alias Table

• Suppose u = .67. Therefore, 5u=3.35, which gives i = 4.

Since .67 is .35 of the way between .6 and .8, and .35<.945,

we get i = 4.

• Suppose u = .39. Therefore, 5u=1.95, which gives i = 2.

Since .39 is .95 of the way between .2 and .4, and .885<.95,

we get (the alias) i = 5.

Marsaglia Tables

• For discrete random variables

• Must have probabilities with denominator as a power of 2

• Use when

— there are a large number of discrete values

(shifts work from n values to log2 (n) values)

— you want to generate many values from this distribution

• Requires 2 U(0, 1) variates (actually only need one)

Example

Prob.

p0=7/32

p1=10/32

p2=10/32

p3=3/32

p4=2/32

Binary

.00111

.01010

.01010

.00011

.00010

.5

.25 .125

x

x

x

qi

.5

.125

.0625 .03125

x

x

x

x

x

x

x

.3125 .0625

Algorithm:

1) pick an urn with probability qi

2) pick a value from the urn with discrete uniform

NOTE: At most log2 (n) values of qi needed (= #columns).

Check with Law of Total Probability:

p0 =

0

+(.125)(1) + (.3125)(1/5) + (.0625)(1/2) = 7/32

p1 = (.5)(1/2)

0

+ (.3125)(1/5)

+0

= 10/32

p2 = (.5)(1/2)

0

+ (.3125)(1/5)

+0

= 10/32

p3 =

0

+0

+ (.3125)(1/5) + (.0625)(1/2) = 3/32

p4 =

0

+0

+ (.3125)(1/5)

+0

= 2/32

Poisson Process

N(t)

4

3

2

1

t

0

E(1/) E(1/) E(1/) E(1/)

E(1/)

Key: times between arrivals are exponentially

distributed

Nonhomogeneous PP

• Rate no longer constant: (t), with

t

distribution

t y dy

0

• Tempting: time between ti+1 and ti

exponentially distributed with rate (ti)

• BAD IDEA

Nonhomogeneous PP

Generating at rate (ti) might cause

arrival during closed period!

(t)

ti

t

NHPP

Use thinning – same idea as acceptance/rejection:

1. Generate from PP with rate max

2. Accept with probability (t)/max

max

(t)

t

NHPP: Thinning

Reject

Reject

Accept

(t)

max

Inhomogeneous Poisson Process (Thinning)

m = max (t)

m

Reject

Accept

exp(m)

0

(t)

t

t

* Generate a homogeneous Poisson process with rate m.

* Accept arrivals with probability (t)/m

see Sigma model NONPOIS.MOD

NHPP: Inversion

(t)

Exp(1)

t

What about fact we don’t really know (t)?

Generating Dependent Data

• Many ways to generate dependent data

• AR(p) process: autoregressive process:

Yt = a1Yt-1 + a2Yt-2 + … + apYt-p + t

• MA(q) process: moving average process:

Yt = t + b1t-1 + … + bqt-q

• EAR process: exponential autoregressive:

Yt = R*Yt-1 + M*ERL{1}*(RND>R)

Autocorrelation

AR(1) autocorrelation lag k = k

Satisfies 1st order difference equn

k = α k-1 k>0

with boundary condition 0=1

Soln: k = αk

EAR Process

• EAR_Q.MOD

• Histograms of the interarrival and service

times will appear exponentially distributed.

• Plots of the values over time will look very

different

• Output from the model will be very

different as R is varied

demo earQ.MOD

Driving Processes

Problem: Real World Stochastic Processes are not

independent nor identically distributed...not even

stationary.

•

Serial Dependencies: yield drift

•

Cross Dependencies: maintenance

•

Spikes: hard and soft failures

•

Nonstationarity – trends, cycles (rush hours)

Driving Processes

Example: Machine subject to unplanned downtime...TTR distribution held constant but not

independent... EAR(1)

IID TTR (T Histogram)

Count

90% Soft/Hard TTR (T Histogram)

150

144

125

120

100

96

75

Count

72

50

48

25

24

0

0

0 29 58 87 116145174203232261290319348377406435464493522551580

1 31 61 91 121151181211241271301331361391421451481511541571601

T

T

Driving Processes

Example: WIP Charts

90% SOFT/HARD TTR (WIP vs. Time)

iid TTR (WIP vs. Time)

250

250

aveW=4.9

aveW=44.3

200

200

150

150

WIP

WIP

100

100

50

50

0

0

0

10000

20000

30000

Time

40000

50000

0

20000

40000

60000

Time

80000

100000

Models for Dependent Discrete-Valued Processes

Ref: Edward McKenzie "Time Series Analysis in Water Resources" in

Water Resources Bulletin; 21.4 pp. 645-650.

Motivation: wish to model realistic situation where random process has

serial correlation. This is different from time-dependency (say, for

modeling rush-hour traffic) in that value of process depends on its own

history, maybe in addition to being dependent on its index (time).

Criteria: (P.A.W.Lewis: Multivariate Analysis - V. (pp: 151-166) North Holland)

1. Models specified by marginal distributions and correlation structure.

2. Few parameters that can be easily interpreted.

3. Structure is linear in parameters, making them easy to fit and generate

AR(order 1) (Markov Models)

Continuous Autoregressive order-1 sequence, {Xn} satisfies the

difference equation

(Xn-) = α(Xn-1- ) + n

or remove means

Xn = αXn-1 + n

with {n} being a sequence of iid random variables and α is a

positive fraction...process retains fraction, α, of previous value.

Note: If Xn is discrete then n must be dependent on Xn-1 ...want

to reduce Xn-1 by the "right" amount to have same distn.

Models for Dependent Discrete-Valued Processes

McKenzie's idea: Generate each “unit” in integer Xn-1

separately, and "keep" it with probability α .

Replace αXn-1 with α*Xn-1 defined as

X n 1

X n 1

B ( )

i

i 1

where {Bi()} is an iid sequence of Bernoulli trials with

Prob{B=1} = α. This "reduces" Xn-1 by the same amount

(expected) as in the continuous autoregression.

Applications

Poisson random variables (eg. number arriving in a

interval at bus stop).

Xn = α*Xn-1+ n

With n Poisson with mean (1-α), if Xo is Poisson with

mean , then so are the Xn.

The correlation at lag k is αk.

This process is time-reversible.

Negative Binomial

(Note: check reference on this?)

(Number of trials until successes where prob of

success on each trial is ) .

Xn = α*Xn-1+ n

n is NB(,) and αi (Binomial probability in the

term α*X ) is Beta with parameters α and (1-α).

This has the same AR(1) correlation structure as

the other models. k= αk Process is also timereversible.

Geometric

(Special case of Neg. Binomial)

Xn = αXn-1+ BnGn

with Bn Bernoulli with Prob(B=1) = 1- α and

Gn is Geometric with parameter .

This is discrete analog with the EAR process.

McKenzie also discusses Binomial and Bernoulli as well as adding time

dependencies (seasonally, trends, etc.)

Summary: Generating Random Variates

• Could Use Trace Driven Simulations

• Could Use Empirical Distributions

• Could Use Parametric Distributions

Know the advantages and disadvantages of

each approach...

Summary: General Methods

•

•

•

•

Inverse Transform

Composition

Acceptance/Rejection

Special Properties

Look at the data! Scatter plots, histograms,

autocorrelations, etc.

Data Gathering

• Needing more Data is a common, but

usually invalid, excuse. Why? (sensitivity?)

• Timing Devices – RFID chips

• Benefits vs. hassle of tracking customers

Collect queue lengths Why? L/=W

• Coordinating between observers

• Hawthorn effect

Distributions and BestFit or

ExpertFit or Stat::fit or...

•

•

•

•

•

•

Gamma

Exponential (ie. Gamma w/ param 1)

Erlang (ie. Gamma w/ integer param)

Beta (-> Gamma in the limit)

Log-Logistic

Lognormal *** ln not log10 ***

5 Dastardly D’s of Data

(updated from Reader)

Data may be

• Distorted

– Material move times include (un)load

underestimate value of AGVs (loaders?)

– Want demand but get backorders (those willing

to wait)

overestimate service levels (resources?)

5 Dastardly D’s of Data, cont.

• Dependent

– Get means or histograms but not correlations,

cycles, or trends

underestimate congestion (capacity?)

– Fail to get cross dependencies

skill levels of operators, shift change effects

5 Dastardly D’s of Data, cont.

• Deleted

Data is censored (accounting) model not

valid with new data

• Damaged

Data entry errors, collection errors

(observer effect)

• Dated

last month’s data, different product mix

valid models later fail validation

6th and

th

7

Dastardly D of Data...

• Doctored – well intentioned people tried to

clean it up...

• Deceptive - any of the other problems might

be intentional!

Data often used for performance evaluations,

or thought to be...

Data Collection: General

Concepts

• Relevance:

Degree of control

Sensitivity

• When:

Need to observe early in study

Need sensitivities late in study

• Cost:

Setup (include training and P.R.)

Sample size (sensitivity)

• Accuracy: Technique, skill, motivation, training,

timing (Monday a.m.??),…

Data Collection, cont.

• Precision:

• Analysis:

Technique, etc.

Sample size

Sample interval (dependencies)

Error control

Verification

Dependencies within data set

Dependencies between data sets

What to do? - recommendations

• Don’t wait for data before you build model

• Run sensitivity experiments on current

system design to see what matters.

• Collect data for validation, if required.

• Remember: you are ultimately making a

forecast not predicting the past!... so

• Do sensitivity analysis on new systems

• Present output using Interval Estimators!!

Simulation study

Start

Anticipate system and human behavior

Formulate questions

Characterize answers

Enough time

and money?

Identify

Prejudices

Design experiments

(Re)plan study and (re)secure resources

Adjust Expectations

Develop, verify, refine model - as necessary

Code, re-code until have ‘no known errors’

Analyze, Advise

- and Caution

Do sensitivity analysis

If necessary, collect data

Redesign and Run Experiments