Homework 2


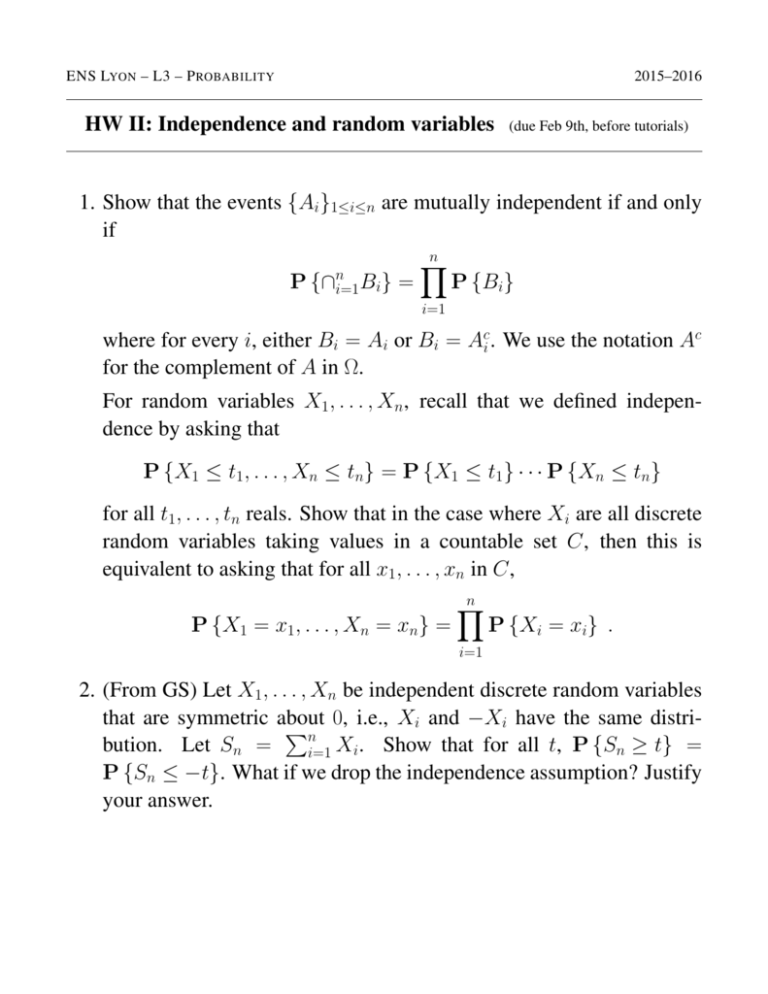

ENS LYON – L3 – PROBABILITY

2015–2016

HW II: Independence and random variables

(due Feb 9th, before tutorials)

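1. Show that the events $\{A_i\}_{1 \le i \le n}$ are mutually independent if and only if

$$P\Big\{\bigcap_{i=1}^{n} B_i\Big\} = \prod_{i=1}^{n} P\{B_i\}$$

for every choice of $B_1, \dots, B_n$ where, for every $i$, either $B_i = A_i$ or $B_i = A_i^c$. We use the notation $A^c$ for the complement of $A$ in $\Omega$.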
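As a sanity check before attempting the proof, the criterion above can be tested by brute force on a small finite probability space. The sketch below is only an illustration, not a proof: it assumes the usual definition of mutual independence (the product rule for every sub-family of at least two events), and the sample space (two fair coin tosses), the events `A1`, `A2`, `A3`, and all function names are hypothetical choices made for this example.

```python
from fractions import Fraction
from itertools import combinations, product

# Hypothetical sample space: two fair coin tosses, uniform measure.
omega = list(product("HT", repeat=2))
prob = {w: Fraction(1, len(omega)) for w in omega}


def P(event):
    """Probability of an event, given as a set of outcomes."""
    return sum(prob[w] for w in event)


def product_of_probs(events):
    result = Fraction(1)
    for e in events:
        result *= P(e)
    return result


def mutually_independent(events):
    """Assumed definition: the product rule holds for every sub-family of size >= 2."""
    for k in range(2, len(events) + 1):
        for sub in combinations(events, k):
            if P(set(omega).intersection(*sub)) != product_of_probs(sub):
                return False
    return True


def complement_criterion(events):
    """Product rule for all 2^n choices B_i in {A_i, complement of A_i}."""
    full = set(omega)
    for flips in product([False, True], repeat=len(events)):
        bs = [full - e if flip else e for e, flip in zip(events, flips)]
        if P(full.intersection(*bs)) != product_of_probs(bs):
            return False
    return True


# Hypothetical events: first toss is H, second toss is H, both tosses agree.
A1 = {w for w in omega if w[0] == "H"}
A2 = {w for w in omega if w[1] == "H"}
A3 = {w for w in omega if w[0] == w[1]}

for family in ([A1, A2], [A1, A2, A3]):
    print(mutually_independent(family), complement_criterion(family))
```

The family `[A1, A2, A3]` is pairwise independent but not mutually independent, so it is a useful test case: both checks should agree on it, as they do on `[A1, A2]`.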

For random variables $X_1, \dots, X_n$, recall that we defined independence by asking that

$$P\{X_1 \le t_1, \dots, X_n \le t_n\} = P\{X_1 \le t_1\} \cdots P\{X_n \le t_n\}$$

for all real numbers $t_1, \dots, t_n$. Show that when the $X_i$ are all discrete random variables taking values in a countable set $C$, this is equivalent to asking that for all $x_1, \dots, x_n$ in $C$,

$$P\{X_1 = x_1, \dots, X_n = x_n\} = \prod_{i=1}^{n} P\{X_i = x_i\}.$$
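To get a feel for the equivalence, one can compare the two factorization conditions on small joint distributions with finite support. The sketch below is a numerical illustration under that finiteness assumption, not a proof. The joint laws `independent` and `dependent` and the function names are hypothetical. For finite support it is enough to test the distribution functions at support points, since they are step functions that only jump there.

```python
from fractions import Fraction
from itertools import product


def marginals(joint, n):
    """Marginal pmfs of a joint pmf given as {(x_1, ..., x_n): probability}."""
    margs = [dict() for _ in range(n)]
    for xs, p in joint.items():
        for i, x in enumerate(xs):
            margs[i][x] = margs[i].get(x, Fraction(0)) + p
    return margs


def pmf_factorizes(joint, n):
    """Check P{X_1 = x_1, ..., X_n = x_n} = prod_i P{X_i = x_i} on the support grid."""
    margs = marginals(joint, n)
    for xs in product(*(m.keys() for m in margs)):
        expected = Fraction(1)
        for i, x in enumerate(xs):
            expected *= margs[i][x]
        if joint.get(xs, Fraction(0)) != expected:
            return False
    return True


def cdf_factorizes(joint, n):
    """Check P{X_1 <= t_1, ..., X_n <= t_n} = prod_i P{X_i <= t_i} at support points."""
    margs = marginals(joint, n)
    for ts in product(*(sorted(m) for m in margs)):
        lhs = sum(p for xs, p in joint.items()
                  if all(x <= t for x, t in zip(xs, ts)))
        rhs = Fraction(1)
        for i, t in enumerate(ts):
            rhs *= sum(p for x, p in margs[i].items() if x <= t)
        if lhs != rhs:
            return False
    return True


# Hypothetical example 1: a product law, so X_1 and X_2 are independent.
px = {0: Fraction(1, 3), 1: Fraction(2, 3)}
py = {0: Fraction(1, 2), 2: Fraction(1, 2)}
independent = {(x, y): px[x] * py[y] for x in px for y in py}

# Hypothetical example 2: X_2 = X_1, so the variables are dependent.
dependent = {(0, 0): Fraction(1, 2), (1, 1): Fraction(1, 2)}

for joint in (independent, dependent):
    print(pmf_factorizes(joint, 2), cdf_factorizes(joint, 2))
```

On both examples the two checks return the same answer, which is what the exercise asks you to establish in general.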

2. (From GS) Let $X_1, \dots, X_n$ be independent discrete random variables that are symmetric about 0, i.e., $X_i$ and $-X_i$ have the same distribution. Let $S_n = \sum_{i=1}^{n} X_i$. Show that for all $t$, $P\{S_n \ge t\} = P\{S_n \le -t\}$. What if we drop the independence assumption? Justify your answer.
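The identity in the independent case can be checked exactly on a small example by convolving the individual distributions. The sketch below is an illustration only and deliberately leaves the dependent case to the reader. The three symmetric laws `x1`, `x2`, `x3` and the function names are hypothetical. Because the supports are integers, testing integer values of $t$ is enough here.

```python
from fractions import Fraction


def convolve(p, q):
    """pmf of X + Y for independent X ~ p and Y ~ q (pmfs as {value: probability})."""
    out = {}
    for x, px in p.items():
        for y, qy in q.items():
            out[x + y] = out.get(x + y, Fraction(0)) + px * qy
    return out


def tails_match(pmf):
    """Check P{S >= t} == P{S <= -t} for every integer t covering the support."""
    bound = max(abs(s) for s in pmf) + 1
    for t in range(-bound, bound + 1):
        upper = sum(p for s, p in pmf.items() if s >= t)
        lower = sum(p for s, p in pmf.items() if s <= -t)
        if upper != lower:
            return False
    return True


# Hypothetical symmetric laws: each assigns equal mass to x and -x.
F = Fraction
x1 = {-1: F(1, 2), 1: F(1, 2)}
x2 = {-2: F(1, 4), 0: F(1, 2), 2: F(1, 4)}
x3 = {-3: F(1, 10), -1: F(2, 5), 1: F(2, 5), 3: F(1, 10)}

# Law of S_3 = X_1 + X_2 + X_3 under independence.
s3 = convolve(convolve(x1, x2), x3)
print(tails_match(s3))
```

Using `Fraction` keeps the comparison exact, so the check does not depend on floating-point tolerance.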