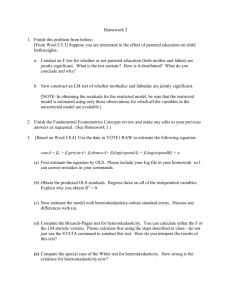

Econ 399 Chapter11a

11 Further Time Series OLS Issues

Chapter 10 covered OLS properties for finite

(small) sample time series data

-If our Chapter 10 assumptions fail, we need to derive the large sample properties of OLS for time series data

-for example, if strict exogeneity fails (TS.3)

-Unfortunately large sample analysis is more

complicated since observations may be

correlated over time

-but some cases still exist where OLS is valid

11. Further Issues in Using OLS

with Time Series Data

11.1 Stationary and Weakly Dependent

Time Series

11.2 Asymptotic Properties of OLS

11.3 Using Highly Persistent Time Series in

Regression Analysis

11.4 Dynamically Complete Models and the

Absence of Serial Correlation

11.5 The Homoskedasticity Assumption for

Time Series Models

11.1 Key Time Series Concepts

To derive OLS properties in large time series samples, we need to understand two properties:

1)Stationary Process

-x distributions are constant over time

-a weaker form is Covariance Stationary

Process

-the covariance between x variables depends on the distance between them in time, but not on the time period itself

2) Weakly Dependent Time Series

-variables lose connection when separated by

time

11.1 Stationary Stochastic

Process

The stochastic process {x_t: t=1,2,…} is stationary if for every collection of time indices 1 ≤ t_1 < t_2 < … < t_m, the joint distribution of (x_{t_1}, x_{t_2}, …, x_{t_m}) is the same as the joint distribution of (x_{t_1+h}, x_{t_2+h}, …, x_{t_m+h}) for all integers h ≥ 1

11.1 Stationary Stochastic Process

The above definition has two implications:

1) The sequence {xt: t=1,2…} is identically

distributed

-x1 has the same distribution as any xt

2) Any joint distribution (ie: the joint distribution

of [x1,x2]) remains the same over time (ie:

same joint distribution of [xt,xt+1])

Basically, any collection of random variables

has the same joint distribution now as in

the future

11.1 Stationary Stochastic Process

It is hard to determine whether data were generated by a stationary process

-Although certain sequences are obviously not

stationary

-Often a weaker form of stationarity suffices

-Some texts even call this weaker form

stationarity:

11.1 Covariance Stationary

Process

The stochastic process {xt: t=1,2…} with a

finite second moment [E(xt²) < ∞] is

covariance stationary if:

i) E(xt) is constant

ii) Var(xt) is constant

iii) For any t, h≥1, Cov(xt,xt+h) depends on h

and not on t

11.1 Covariance Stationary Process

Essentially, covariance between variables can

only depend on the distance between them

Likewise, correlation between two variables can

only depend on the distance between them

-This does however allow for different correlation

between variables of different distances

-Note that Stationarity, often called “strict

stationarity”, is stronger than and implies

covariance stationarity

11.1 Stationarity Importance

Stationarity is important for two reasons:

1) It simplifies the law of large numbers and the

central limit theorem

-it makes it easier for statisticians to prove theorems

for economists

2) If the relationship between variables (y and x)

is allowed to vary each period, we cannot

accurately estimate their relationship

-Note that we already assume some form of

stationarity by assuming that βj does not

differ over time

11.1 Weakly Dependent Time

Series

The stochastic process {xt: t=1,2…} is

said to be WEAKLY DEPENDENT if

xt and xt+h are “almost independent”

as h increases without bound

If in the covariance stationary sequence

Corr(xt, xt+h) → 0 as h → ∞, the

sequence is ASYMPTOTICALLY

UNCORRELATED

11.1 Weakly Dependent Time Series

-weak dependence has no single formal definition, since its exact form varies across applications

-essentially, the correlation between xt and xt+h

must go to zero “sufficiently quickly” as the

distance between them approaches infinity

-Essentially, weak dependence replaces the

random sampling assumption in the law of

large numbers and the central limit theorem

11.1 MA(1)

-an independent sequence is trivially weakly

dependent

-a more interesting example is:

$$x_t = e_t + \alpha_1 e_{t-1}, \quad t = 1, 2, \ldots \tag{11.1}$$

-where et is an iid (independent and identically distributed) sequence with mean zero and variance σe²

-the process {xt} is called a MOVING AVERAGE

PROCESS OF ORDER ONE [MA(1)]

-xt is a weighted average of et and et-1

11.1 MA(1)

-an MA(1) process is weakly dependent because:

1) Adjacent terms are correlated

-ie: both xt and xt+1 depend on et

-note that:

$$\mathrm{Corr}(x_t, x_{t+1}) = \frac{\alpha_1}{1 + \alpha_1^2}$$

2) Variables two or more periods apart are uncorrelated
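The two MA(1) properties above can be checked by simulation. The following is a minimal sketch, assuming standard normal shocks and an illustrative value α1 = 0.5 (neither comes from the slides); the helper names `simulate_ma1` and `sample_corr` are hypothetical.

```python
import random

def simulate_ma1(alpha1, n, seed=0):
    """Simulate x_t = e_t + alpha1 * e_{t-1} with iid N(0, 1) shocks (assumed)."""
    rng = random.Random(seed)
    e = [rng.gauss(0.0, 1.0) for _ in range(n + 1)]  # e[0] plays the role of e_0
    return [e[t] + alpha1 * e[t - 1] for t in range(1, n + 1)]

def sample_corr(x, h):
    """Sample correlation between x_t and x_{t+h}."""
    n = len(x)
    mean = sum(x) / n
    var = sum((v - mean) ** 2 for v in x) / n
    cov = sum((x[t] - mean) * (x[t + h] - mean) for t in range(n - h)) / n
    return cov / var

alpha1 = 0.5                                  # illustrative value
x = simulate_ma1(alpha1, n=50_000)
theory_lag1 = alpha1 / (1 + alpha1 ** 2)      # formula from the slide
print("lag 1 sample:", round(sample_corr(x, 1), 3), "theory:", theory_lag1)
print("lag 2 sample:", round(sample_corr(x, 2), 3), "theory: 0")
```

With a long simulated series, the lag-1 sample correlation should sit close to α1/(1+α1²) and the lag-2 sample correlation close to zero.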

11.1 AR(1)

-another key example is:

$$y_t = \rho_1 y_{t-1} + e_t, \quad t = 1, 2, \ldots \tag{11.2}$$

-where et is an iid (independent and identically distributed) sequence with mean zero and variance σe²

-we also assume et is independent of y0 and

E(y0)=0

-the process {yt} is called an AUTOREGRESSIVE

PROCESS OF ORDER ONE [AR(1)]

-yt depends on its own previous value yt-1 and the current shock et

11.1 AR(1)

-The critical assumption for weak dependence of

AR(1) is the stability condition:

$$|\rho_1| < 1$$

-if this condition holds, we have a STABLE AR(1)

PROCESS

-we can show that this process is stationary and

weakly dependent
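A short derivation shows why the stability condition delivers weak dependence. This is a sketch for the AR(1) model y_t = ρ1 y_{t-1} + e_t, assuming the process is covariance stationary with |ρ1| < 1; the symbol σ_y² for Var(y_t) is introduced here for convenience.

```latex
% Variance: e_t is independent of y_{t-1}, and Var(y_t) = Var(y_{t-1}) = \sigma_y^2
\sigma_y^2 = \rho_1^2 \sigma_y^2 + \sigma_e^2
\quad\Longrightarrow\quad
\sigma_y^2 = \frac{\sigma_e^2}{1 - \rho_1^2}

% Repeated substitution h periods ahead
y_{t+h} = \rho_1^h y_t + \sum_{j=0}^{h-1} \rho_1^{j} e_{t+h-j}

% The future shocks are uncorrelated with y_t, so
\mathrm{Cov}(y_t, y_{t+h}) = \rho_1^h \sigma_y^2
\quad\Longrightarrow\quad
\mathrm{Corr}(y_t, y_{t+h}) = \rho_1^h \to 0 \text{ as } h \to \infty
```

Because |ρ1| < 1, the correlation shrinks geometrically with the distance h, so the stable AR(1) is asymptotically uncorrelated.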

11.1 Stationary Notes

-Although a trending series is nonstationary, it

CAN be weakly dependent

-If a series is stationary ABOUT ITS TIME TREND,

and is also weakly dependent, it is often called a

TREND-STATIONARY PROCESS

-if time trends are included in these models, OLS

can be performed as in chapter 10

11.2 Asymptotic OLS Properties

-We’ve already seen cases where our CLM

assumptions are not satisfied for time series

-In these cases, stationarity and weak

dependence lead to modified assumptions that

allow us to use OLS

-in general, these modified assumptions deal with

single periods instead of across time

Assumption TS.1’

(Linear and Weak Dependence)

Same as TS.1, only add the assumption

that {(Xt, yt): t=1,2,…} is stationary

and weakly dependent. In particular,

the law of large numbers and the

central limit theorem can be applied

to sample averages.

Assumption TS.2’

(No Perfect Collinearity)

Same as TS.2

Don’t mess with a good

thing!

Assumption TS.3’

(Zero Conditional Mean)

For each t, the expected value of the error

ut, given the explanatory variables for

ITS OWN time periods, is zero.

Mathematically,

$$E(u_t \mid X_t) = 0, \quad t = 1, 2, \ldots, n. \tag{10.10}$$

Xt is CONTEMPORANEOUSLY EXOGENOUS

(Note: Due to stationarity, if contemporaneous

exogeneity holds for one time period, it holds

for them all.)

Assumption TS.3’ Notes

Note that technically the only requirement

for Theorem 11.1 is zero UNconditional

mean on ut and zero covariance between

u and x:

$$E(u_t) = 0, \quad \mathrm{Cov}(x_{tj}, u_t) = 0, \quad j = 1, 2, \ldots, k. \tag{11.6}$$

However assumption TS.3’ leads to a more

straightforward analysis

Theorem 11.1

(Consistency of OLS)

Under assumptions TS.1’ through TS.3’, the OLS estimators are consistent:

$$\operatorname{plim}\, \hat{\beta}_j = \beta_j, \quad j = 0, 1, \ldots, k.$$
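Consistency can be illustrated with a regression of y_t on y_{t-1} in a stable AR(1) model. In that regression the regressor is a lagged dependent variable, so strict exogeneity fails while contemporaneous exogeneity holds. The sketch below assumes standard normal shocks, y_0 = 0 and an illustrative ρ1 = 0.5; the helper names are hypothetical.

```python
import random

def simulate_ar1(rho1, n, seed=0):
    """Simulate y_t = rho1 * y_{t-1} + e_t with y_0 = 0 and iid N(0, 1) shocks (assumed)."""
    rng = random.Random(seed)
    y = [0.0]
    for _ in range(n):
        y.append(rho1 * y[-1] + rng.gauss(0.0, 1.0))
    return y

def ols_slope(x, y):
    """OLS slope from regressing y on x with an intercept."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    return sxy / sxx

rho1 = 0.5                      # illustrative true value
estimates = {}
for n in (50, 500, 50_000):
    y = simulate_ar1(rho1, n, seed=1)
    # Regress y_t on y_{t-1}: drop the last value for x and the first for y
    estimates[n] = ols_slope(y[:-1], y[1:])
    print(f"n = {n:>6}: estimated slope = {estimates[n]:.3f}")
```

The estimate from the largest sample should be very close to the true ρ1, which is what consistency predicts; small samples can still show noticeable error.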

11.2 Asymptotic OLS Properties

-We’ve effectively:

1) weakened exogeneity to allow for

contemporaneous exogeneity

2) strengthened the variable assumption to be

weakly dependent

These changes let us conclude that OLS is consistent even when unbiasedness cannot be established.

-To allow for tests, we will now impose less strict

homoskedasticity and no serial correlation

assumptions:

Assumption TS.4’

(Homoskedasticity)

The errors are CONTEMPORANEOUSLY

HOMOSKEDASTIC, that is,

$$\mathrm{Var}(u_t \mid X_t) = \sigma^2$$

Assumption TS.5’

(No Serial Correlation)

For all t≠s,

$$E(u_t u_s \mid X_t, X_s) = 0$$

11.2 Asymptotic OLS Properties

Note the modifications in these assumptions:

-TS.4’ now only conditions on explanatory

variables in time period t

-TS.5’ now only conditions on explanatory

variables in the involved time periods t and s.

-Note that TS.5’ does hold in AR(1) models

-if only one lag exists, errors are serially uncorrelated

-these assumptions allow us to carry out tests on OLS estimates without assuming normally distributed errors:

Theorem 11.2

(Asymptotic Normality of OLS)

Under assumptions TS.1’ through

TS.5’, the OLS estimators are

asymptotically normally distributed.

Further, the usual OLS standard

errors, t statistics and F statistics are

asymptotically valid.
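One way to see what "asymptotically valid" means is a small Monte Carlo exercise: simulate many AR(1) samples, compute the usual OLS t statistic for the slope against its true value, and check how often it falls inside ±1.96. This is only a sketch, assuming standard normal shocks, ρ1 = 0.5, n = 200 and 1000 replications (all illustrative choices); `ar1_t_stat` is a hypothetical helper.

```python
import math
import random

def ar1_t_stat(rho1, n, rng):
    """Simulate one AR(1) sample and return the usual OLS t statistic for H0: slope = rho1."""
    y = [0.0]                                  # y_0 = 0 (assumed)
    for _ in range(n):
        y.append(rho1 * y[-1] + rng.gauss(0.0, 1.0))
    x, z = y[:-1], y[1:]                       # regress y_t (z) on y_{t-1} (x)
    mx, mz = sum(x) / n, sum(z) / n
    sxx = sum((a - mx) ** 2 for a in x)
    slope = sum((a - mx) * (b - mz) for a, b in zip(x, z)) / sxx
    intercept = mz - slope * mx
    ssr = sum((b - intercept - slope * a) ** 2 for a, b in zip(x, z))
    se = math.sqrt(ssr / (n - 2) / sxx)        # usual OLS standard error of the slope
    return (slope - rho1) / se

rng = random.Random(42)
reps = 1000
t_stats = [ar1_t_stat(0.5, 200, rng) for _ in range(reps)]
coverage = sum(abs(t) < 1.96 for t in t_stats) / reps
print("share of |t| < 1.96:", coverage)
```

If the usual t statistic is approximately standard normal in large samples, the printed share should be in the neighbourhood of 0.95.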

11.3 Random Walks

-We’ve already seen a stable AR(1) with |ρ1|<1; however, some series are better explained with ρ1=1, producing a RANDOM WALK process:

$$y_t = y_{t-1} + e_t$$

-where et is iid with mean zero and constant

variance σe², and the initial value y0 is

independent of all et

-essentially, this period’s y is the sum of last

period’s y and a zero mean random variable e

11.3 Random Walks

-We can also calculate expected value and

variance for a random walk:

$$y_t = e_t + e_{t-1} + \cdots + e_1 + y_0$$

$$E(y_t) = E(e_t) + E(e_{t-1}) + \cdots + E(e_1) + E(y_0)$$

$$E(y_t) = E(y_0)$$

-therefore the expected value of a random walk

does not depend on t, although the variance

does:

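$$\mathrm{Var}(y_t) = \mathrm{Var}(e_t) + \mathrm{Var}(e_{t-1}) + \cdots + \mathrm{Var}(e_1) + \mathrm{Var}(y_0)$$

-treating y0 as nonrandom, so that Var(y0) = 0:

$$\mathrm{Var}(y_t) = \sigma_e^2 t \tag{11.21}$$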
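The growth of the variance with t can be seen by simulating many independent random walks and comparing the spread of their values at different dates. A minimal sketch, assuming y_0 = 0 and standard normal shocks (so σe² = 1); the function name `random_walk_endpoints` is hypothetical.

```python
import random
import statistics

def random_walk_endpoints(t, paths, sigma_e=1.0, seed=0):
    """Return y_t for many independent random walks started at y_0 = 0 (assumed)."""
    rng = random.Random(seed)
    ends = []
    for _ in range(paths):
        y = 0.0
        for _ in range(t):
            y += rng.gauss(0.0, sigma_e)   # y_t = y_{t-1} + e_t
        ends.append(y)
    return ends

variances = {}
for t in (10, 40, 160):
    ends = random_walk_endpoints(t, paths=5000, seed=t)
    variances[t] = statistics.pvariance(ends)
    print(f"t = {t:>3}: mean = {statistics.fmean(ends):6.2f}, "
          f"variance = {variances[t]:7.1f}, sigma_e^2 * t = {t}")
```

Across paths, the mean should stay near E(y0) = 0 while the variance grows roughly in proportion to t.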

11.3 Random Walks

-A random walk is an example of a HIGHLY

PERSISTENT or STRONGLY DEPENDENT time

series without trending

-an example of a highly persistent time series

with trending is a RANDOM WALK WITH DRIFT:

$$y_t = \alpha_0 + y_{t-1} + e_t \tag{11.23}$$

-Note that if y0=0,

$$E(y_t) = \alpha_0 t$$

$$\mathrm{Var}(y_t) = \sigma_e^2 t$$
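The drift results follow from the same repeated substitution used for the pure random walk. A brief sketch, writing the drift term as α0 and assuming y_0 = 0 is nonrandom:

```latex
% Substitute y_{t-1}, y_{t-2}, ... back to y_0
y_t = \alpha_0 t + e_t + e_{t-1} + \cdots + e_1 + y_0

% Each e_s has mean zero and y_0 = 0
E(y_t) = \alpha_0 t

% The e_s are iid with variance \sigma_e^2; \alpha_0 t is a constant
\mathrm{Var}(y_t) = \sigma_e^2 t
```

The drift term adds a linear trend to the mean but leaves the variance identical to that of the random walk without drift.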

11.5 Time Series Homoskedasticity

-Due to lagged terms, time series

homoskedasticity from TS.4’ can differ from cross-sectional homoskedasticity

-a simple static model and its homoskedasticity assumption are of the form:

$$y_t = \beta_0 + \beta_1 z_t + u_t$$

$$\mathrm{Var}(u_t \mid z_t) = \sigma^2$$

-The addition of lagged terms can change this

homoskedasticity statement:

11.5 Time Series Homoskedasticity

-For the AR(1) model:

$$y_t = \beta_0 + \beta_1 y_{t-1} + u_t$$

$$\mathrm{Var}(u_t \mid y_{t-1}) = \mathrm{Var}(y_t \mid y_{t-1}) = \sigma^2$$

-Likewise for a more complicated model:

$$y_t = \beta_0 + \beta_1 y_{t-1} + \beta_2 z_t + \beta_3 z_{t-1} + u_t$$

$$\mathrm{Var}(u_t \mid z_t, y_{t-1}, z_{t-1}) = \mathrm{Var}(y_t \mid z_t, y_{t-1}, z_{t-1}) = \sigma^2$$

-Essentially, y’s variance is constant given ALL

explanatory variables, lagged or not

-This may lead to dynamic homoskedasticity

statements