DAA: Algorithms, Growth Functions, Asymptotic Notations


DAA


(ECS-502)

Unit- I

1.1 Algorithms

1.2 Growth of Functions

1.3 Asymptotic Notations

EXERCISE

1.4 Solving Recurrences

1.4.1 Master Method

1.4.2 Substitution Method

1.4.3 Change of Variable Method

1.4.4 Iteration Method

1.4.5 Recursion Tree Method

EXERCISE


Prepared By: NIKUNJ KUMAR

RKGIT


1.1 Algorithms:

An algorithm is any well-defined computational procedure that takes some value, or set of values, as input and produces some value, or set of values, as output. An algorithm is thus a sequence of computational steps that transform the input into the output.

We can also view an algorithm as a tool for solving a well-specified computational problem.

An algorithm must satisfy the following criteria:

i. It takes zero or more inputs.

ii. It produces at least one output.

iii. Each instruction is clear and unambiguous.

iv. It terminates after a finite number of steps.

How to design an algorithm: There are five fundamental techniques used to design algorithms:

i. Divide and Conquer Approach
   a) Binary Search
   b) Multiplication of two n-bit numbers
   c) Computing $x^n$
   d) Strassen’s matrix multiplication
   e) Quick Sort
   f) Merge Sort
   g) Heap Sort

ii. Greedy Method
   a) Knapsack Problem
   b) Minimum Cost Spanning Tree (Kruskal’s and Prim’s algorithms)
   c) Single Source Shortest Path

iii. Dynamic Programming
   a) All Pair Shortest Path
   b) Chain Matrix Multiplication
   c) Longest Common Subsequence (LCS)
   d) Optimal Binary Search Tree (OBST)
   e) Travelling Salesman Problem (TSP)

iv. Backtracking
   a) N-Queen’s Problem
   b) Sum of Subsets

v. Branch and Bound
   a) Assignment Problem

1.2 Growth of Functions:

Given an array with n elements, we want to rearrange them in ascending order. Sorting algorithms such as Bubble Sort, Insertion Sort and Selection Sort all have quadratic worst-case time complexity $O(n^2)$, which limits their use when the array is very large. We therefore want an algorithm that is more efficient than insertion sort. Merge Sort, a divide-and-conquer algorithm, sorts an n-element array in $O(n \log n)$ time. That is, we are concerned with how the running time of an algorithm increases with the size of its input.

The table below shows that Merge Sort is significantly faster than Insertion Sort when the array is large: in these measurements Merge Sort is roughly 34 to 224 times faster than Insertion Sort, and the gap in running time keeps growing as the input size n increases.

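Total testing time in seconds:

| S.No | Number of elements to be sorted | Merge Sort | Insertion Sort |
|------|---------------------------------|------------|----------------|
| 1.   | 3000                            | 0.031      | 1.046          |
| 2.   | 4000                            | 0.046      | 1.906          |
| 3.   | 5000                            | 0.062      | 2.984          |
| 4.   | 6000                            | 0.078      | 4.281          |
| 5.   | 7000                            | 0.094      | 5.75           |
| 6.   | 10000                           | 0.125      | 12.062         |
| 7.   | 15000                           | 0.203      | 28.093         |
| 8.   | 20000                           | 0.281      | 49.312         |
| 9.   | 25000                           | 0.343      | 76.781         |
| 10.  | 35000                           | 0.781      | 146.4          |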

The above tests were run in Java on a PC running Windows XP with the following specifications: Intel Core 2 Duo CPU E8400 at 3.00 GHz with 2 GB of RAM.


As can be seen from the table, the gap between the running times of insertion sort and merge sort grows larger and larger as the array size n increases. To find the complexity or running time of an algorithm we are not interested in its exact running time, because the same algorithm with the same input can be run on a slower or a faster machine, and the measured time will differ.

There are often many algorithms or programs that compute the same result, and we would like to use the fastest one. How do we decide how fast an algorithm is? Knowing how fast an algorithm runs on one particular input reveals nothing about how fast it runs on other inputs. Instead we need a formula that relates the input size n to the running time of the algorithm, i.e., we specify only how the running time scales as the size of the input grows. For example, if the running time for input size n is $4n^2$, we say that its running time scales as $n^2$: ignoring the constant factor, when n = 10 the running time is proportional to 100, when n = 100 it is proportional to 10000, and so on. We use asymptotic notations to express the running time of an algorithm.
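One machine-independent way to see this scaling is to count basic operations instead of measuring seconds. The Python sketch below is an illustration, not the code used for the timings above, and the function names are our own. It counts the key comparisons made by a straightforward insertion sort and a top-down merge sort. On a reverse-sorted input of size n, insertion sort makes exactly $n(n - 1)/2$ comparisons, while merge sort makes at most $n \lceil \log_2 n \rceil$, and these counts are the same on any machine.

```python
import math


def insertion_sort_comparisons(data):
    """Sort a copy of data with insertion sort; return (sorted list, comparisons)."""
    a = list(data)
    comparisons = 0
    for j in range(1, len(a)):
        key = a[j]
        i = j - 1
        # Shift larger elements one place right until key's slot is found.
        while i >= 0:
            comparisons += 1
            if a[i] <= key:
                break
            a[i + 1] = a[i]
            i -= 1
        a[i + 1] = key
    return a, comparisons


def merge_sort_comparisons(data):
    """Sort a copy of data with top-down merge sort; return (sorted list, comparisons)."""
    if len(data) <= 1:
        return list(data), 0
    mid = len(data) // 2
    left, c_left = merge_sort_comparisons(data[:mid])
    right, c_right = merge_sort_comparisons(data[mid:])
    merged, comparisons = [], c_left + c_right
    i = j = 0
    # Merge step: one comparison per element placed while both halves are non-empty.
    while i < len(left) and j < len(right):
        comparisons += 1
        if left[i] <= right[j]:
            merged.append(left[i])
            i += 1
        else:
            merged.append(right[j])
            j += 1
    merged.extend(left[i:])
    merged.extend(right[j:])
    return merged, comparisons


if __name__ == "__main__":
    for n in (500, 1000, 2000):
        data = list(range(n, 0, -1))  # reverse-sorted: insertion sort's worst case
        _, ins = insertion_sort_comparisons(data)
        _, mer = merge_sort_comparisons(data)
        bound = n * math.ceil(math.log2(n))  # upper bound on merge sort's count
        print(n, ins, mer, bound)
```

Doubling n roughly quadruples the insertion-sort count but only slightly more than doubles the merge-sort count, which is the $n^2$ versus $n \log n$ behaviour seen in the timing table.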

1.3 Asymptotic Notations (Analysis of efficiency):

1. They describe the behavior of functions.

2. They describe the growth of functions.

3. They describe how the running time scales with the size of the input.

We use five asymptotic notations, defined one by one below.


O-Notation:

Big-O notation provides an upper bound on the growth of a function: for large enough n, f(n) grows no faster than a constant multiple of g(n). It is most often used to state the maximum (worst-case) running time of an algorithm. We define big-O notation as

$O(g(n)) = \{ f(n) : \text{there exist positive constants } c \text{ and } n_0 \text{ such that } 0 \le f(n) \le c\,g(n) \text{ for all } n \ge n_0 \}$.

Ω-Notation:

Big-Ω notation provides a lower bound on the growth of a function: for large enough n, f(n) grows at least as fast as a constant multiple of g(n). It is most often used to state the minimum (best-case) running time of an algorithm. We define big-Ω notation as

$\Omega(g(n)) = \{ f(n) : \text{there exist positive constants } c \text{ and } n_0 \text{ such that } 0 \le c\,g(n) \le f(n) \text{ for all } n \ge n_0 \}$.

Θ-Notation:

This notation provides both a lower and an upper bound on the function f(n), i.e., an asymptotically tight bound (this is not the same thing as the average case). There exist two constants $c_1$ and $c_2$ such that $c_1 g(n)$ is a lower bound on f(n) and $c_2 g(n)$ is an upper bound on f(n). We define big-Θ notation as

$\Theta(g(n)) = \{ f(n) : \text{there exist positive constants } c_1, c_2 \text{ and } n_0 \text{ such that } 0 \le c_1 g(n) \le f(n) \le c_2 g(n) \text{ for all } n \ge n_0 \}$.

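ο-Notation: This is used to provide an upper bound that is not asymptotically tight.

$o(g(n)) = \{ f(n) : \text{for every constant } c > 0 \text{ there exists a constant } n_0 > 0 \text{ such that } 0 \le f(n) < c\,g(n) \text{ for all } n \ge n_0 \}$.

ω-Notation: This is used to provide a lower bound that is not asymptotically tight.

$\omega(g(n)) = \{ f(n) : \text{for every constant } c > 0 \text{ there exists a constant } n_0 > 0 \text{ such that } 0 \le c\,g(n) < f(n) \text{ for all } n \ge n_0 \}$.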
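The defining inequalities can be sanity-checked mechanically for a proposed choice of constants. The Python sketch below uses hypothetical helper names and tests the inequalities only over a finite range of n, so a False result refutes a choice of constants, while True is merely evidence, not a proof, because the definitions quantify over all $n \ge n_0$. The example uses the functions from Tutorial Question 2.

```python
def holds_big_o(f, g, c, n0, n_max=10_000):
    """Check 0 <= f(n) <= c*g(n) for every integer n in [n0, n_max]."""
    return all(0 <= f(n) <= c * g(n) for n in range(n0, n_max + 1))


def holds_big_omega(f, g, c, n0, n_max=10_000):
    """Check 0 <= c*g(n) <= f(n) for every integer n in [n0, n_max]."""
    return all(0 <= c * g(n) <= f(n) for n in range(n0, n_max + 1))


def holds_theta(f, g, c1, c2, n0, n_max=10_000):
    """Check 0 <= c1*g(n) <= f(n) <= c2*g(n) for every integer n in [n0, n_max]."""
    return all(0 <= c1 * g(n) <= f(n) <= c2 * g(n) for n in range(n0, n_max + 1))


def f(n):
    return 3 * n * n + 5  # f(n) = 3n^2 + 5


def g(n):
    return 2 * n * n  # g(n) = 2n^2


if __name__ == "__main__":
    print(holds_theta(f, g, c1=1, c2=2, n0=3))  # these constants work on the range
    print(holds_theta(f, g, c1=1, c2=2, n0=2))  # fails at n = 2, since 17 > 16
    print(holds_big_o(lambda n: 7 * n + 8, lambda n: n, c=8, n0=8))
```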

Some Properties of Asymptotic Notations:

Transitivity:

f(n) = Θ(g(n)) and g(n) = Θ(h(n)) imply f(n) = Θ(h(n)),

f(n) = O(g(n)) and g(n) = O(h(n)) imply f(n) = O(h(n)),

f(n) = Ω(g(n)) and g(n) = Ω(h(n)) imply f(n) = Ω(h(n)),

f(n) = o(g(n)) and g(n) = o(h(n)) imply f(n) = o(h(n)),

f(n) = ω(g(n)) and g(n) = ω(h(n)) imply f(n) = ω(h(n)).


Reflexivity:

f(n) = Θ(f(n)),

f(n) = O(f(n)),

f(n) = Ω(f(n)).

Symmetry:

f(n) = Θ(g(n)) if and only if g(n) = Θ(f(n)).

Transpose symmetry:

f(n) = O(g(n)) if and only if g(n) = Ω(f(n)),

f(n) = o(g(n)) if and only if g(n) = ω(f(n)).

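Limit Rule for calculating Asymptotic Notation: Let $L = \lim_{n \to \infty} f(n)/g(n)$, assuming this limit exists (possibly $\infty$). Then:

ο-Notation: if $L = 0$, then $f(n) = o(g(n))$.

O-Notation: if $L = c$ where $c \ge 0$ (nonnegative but not infinite), then $f(n) = O(g(n))$.

ω-Notation: if $L = \infty$, then $f(n) = \omega(g(n))$.

Ω-Notation: if $L > 0$ (either a strictly positive constant or infinity), then $f(n) = \Omega(g(n))$.

Θ-Notation: if $L = c$ where $c > 0$ (strictly positive but not infinity), then $f(n) = \Theta(g(n))$.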
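The limit rule also suggests a quick numerical experiment: evaluate $f(n)/g(n)$ for a few increasing values of n and see where the ratios seem to settle. The Python sketch below (the helper name `ratio_trend` is hypothetical) does exactly that. It is only a heuristic: a few sample points cannot establish a limit, and slowly growing factors such as $\log n$ can be hard to tell apart from constants.

```python
import math


def ratio_trend(f, g, ns=(10**2, 10**4, 10**6, 10**8)):
    """Return f(n)/g(n) at a few increasing n (suggests a limit, proves nothing)."""
    return [f(n) / g(n) for n in ns]


if __name__ == "__main__":
    # 3n^2 + 5 vs 2n^2: the ratios approach 3/2 > 0, suggesting Theta.
    print(ratio_trend(lambda n: 3 * n * n + 5, lambda n: 2 * n * n))
    # n log n vs n^2: the ratios shrink towards 0, suggesting little-o.
    print(ratio_trend(lambda n: n * math.log2(n), lambda n: n * n))
```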


Tutorial Sheet (Asymptotic Notations)

1. Find O notation for the following:

a) $f(n) = 7n + 8$

b) $f(n) = 27n^2 + 16n$

c) $f(n) = 2n^3 + n^2 + 2n$

2. If $f(n) = 3n^2 + 5$ and $g(n) = 2n^2$, prove that $f(n) = \Theta(g(n))$.

3. For $f(x) = 3x^2 + 2x + 1$, state true or false for each of the following:

a) $f(x) = O(x^3)$
b) $x^3 = O(f(x))$
c) $x^4 = O(f(x))$
d) $f(x) = O(f(x))$
e) $f(x) = O(x^4)$
f) $f(x) = \Omega(x^3)$
g) $f(x) = \Omega(x^2)$
h) $x^3 = \Omega(f(x))$
i) $f(x) = \Omega(x^4)$
j) $x^2 = \Omega(f(x))$

4. Show that $\max\{f(n), g(n)\} = \Theta(f(n) + g(n))$, where f(n) and g(n) are asymptotically nonnegative functions.

5. State True or False for the following:

a) $\log n \cdot \log n = O((n \log n)/100)$
b) $\sqrt{\log n} = O(\log(\log n))$
c) $n^2 = O(n^3)$
d) $n^2 = \Omega(n^3)$
e) $f(n) = O(g(n))$ implies $g(n) = O(f(n))$
f) $f(n) + g(n) = \Theta(\min(f(n), g(n)))$
g) $f(n) + O(f(n)) = \Theta(f(n))$
h) $f(n) = O(g(n))$ implies $\log(f(n)) = O(\log(g(n)))$, where $\log(g(n)) \ge 1$
i) $f(n) = O(g(n))$ implies $2^{f(n)} = O(2^{g(n)})$
j) $f(n) = O(f(n^2))$
k) $f(n) = O(g(n))$ implies $g(n) = \Omega(f(n))$
l) $f(n) = O(f(n/2))$


Solutions

1. a) $f(n) = 7n + 8$

To show $7n + 8 = O(n)$, we have to find constants c and $n_0$ such that $7n + 8 \le cn$ for all $n \ge n_0$. Since $8 \le n$ whenever $n \ge 8$,

$7n + 8 \le 7n + n = 8n$ for all $n \ge 8$.

Treating $7n + 8 = 8n$ as an equality gives the crossover point $n = 8$. Therefore $7n + 8 \le cn$ with $c = 8$ and $n_0 = 8$, so we can say that $7n + 8 = O(n)$, i.e., $f(n) = O(n)$.

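b) $f(n) = 27n^2 + 16n$

Since $16n \le 16n^2$ for $n \ge 1$, we have $27n^2 + 16n \le 43n^2$ for all $n \ge 1$, so $f(n) = O(n^2)$ with $c = 43$ and $n_0 = 1$.

c) $f(n) = 2n^3 + n^2 + 2n$

Since $n^2 \le n^3$ and $2n \le 2n^3$ for $n \ge 1$, we have $2n^3 + n^2 + 2n \le 5n^3$ for all $n \ge 1$, so $f(n) = O(n^3)$ with $c = 5$ and $n_0 = 1$.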

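2. If $f(n) = 3n^2 + 5$ and $g(n) = 2n^2$, prove that $f(n) = \Theta(g(n))$.

We need positive constants $c_1$, $c_2$ and $n_0$ such that $c_1 g(n) \le f(n) \le c_2 g(n)$ for all $n \ge n_0$.

L.H.S.: $c_1 g(n) \le f(n)$, i.e., $2c_1 n^2 \le 3n^2 + 5$. This is satisfied for $c_1 = 1$ and all $n \ge 1$.

R.H.S.: $f(n) \le c_2 g(n)$, i.e., $3n^2 + 5 \le 2c_2 n^2$. With $c_2 = 2$ this becomes $3n^2 + 5 \le 4n^2$, i.e., $n^2 \ge 5$, which is satisfied for all $n \ge 3$. (With $c_2 = 1$ it would read $3n^2 + 5 \le 2n^2$, which never holds.)

Now the solution is $c_1 = 1$, $c_2 = 2$ and $n_0 = 3$.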

3. a) T
b) F
c) F
d) T
e) T
f) F
g) T
h) T
i) F
j) T

4. Show that $\max\{f(n), g(n)\} = \Theta(f(n) + g(n))$ (for asymptotically nonnegative f(n) and g(n)).

By the definition of Θ-notation we need positive constants $c_1$ and $c_2$ such that

$c_1 (f(n) + g(n)) \le \max\{f(n), g(n)\} \le c_2 (f(n) + g(n))$.

Let $h(n) = \max\{f(n), g(n)\}$, i.e., $h(n) = f(n)$ if $f(n) \ge g(n)$ and $h(n) = g(n)$ if $g(n) > f(n)$.

L.H.S.: Since $h(n) \ge f(n)$ and $h(n) \ge g(n)$, adding the two gives $2h(n) \ge f(n) + g(n)$, i.e., $\tfrac{1}{2}(f(n) + g(n)) \le h(n)$. So the inequality is satisfied for $c_1 = 1/2$.

R.H.S.: Since both functions are nonnegative, $h(n) \le f(n) + g(n)$. So the inequality is satisfied for $c_2 = 1$.

So the solution is $c_1 = 1/2$, $c_2 = 1$, and $n_0$ is any point beyond which both functions are nonnegative ($n_0 = 1$ if they are nonnegative for all $n \ge 1$).

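5. a) T
b) F
c) T
d) F
e) F
f) F
g) T
h) T (if $f(n) \le c\,g(n)$ with $c \ge 1$, then $\log f(n) \le \log c + \log g(n) \le (\log c + 1)\log g(n)$ because $\log g(n) \ge 1$; this also needs $f(n) \ge 1$ so that $\log f(n) \ge 0$)
i) F (for example, $f(n) = 2n$ and $g(n) = n$: $2^{2n} = 4^n$ is not $O(2^n)$)
j) T
k) T
l) F (for example, $f(n) = 2^n$: $2^n$ is not $O(2^{n/2})$)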


1.4 Solving Recurrences:

1.4.1 Master Method

The master method is applicable only if the recurrence is given in the form

$T(n) = aT(n/b) + f(n)$

where

a: the number of subproblems into which the problem is divided,

n/b: the size of each subproblem,

f(n): the cost of dividing the problem, D(n), plus the cost of combining the solutions, C(n),

and $a \ge 1$ and $b > 1$ are constants. As a first step we calculate the value of $n^{\log_b a}$. The solution of the recurrence is obtained by comparing the two functions $n^{\log_b a}$ and $f(n)$ polynomially.

Case 1: If $n^{\log_b a}$ is polynomially larger than $f(n)$, then Case 1 of the master theorem holds, which can be stated as:

If $f(n) = O(n^{\log_b a - \epsilon})$ for some constant $\epsilon > 0$, then the solution of the recurrence is $T(n) = \Theta(n^{\log_b a})$.

Case 2: If $n^{\log_b a}$ and $f(n)$ are asymptotically equal, then Case 2 of the master theorem holds, which can be stated as:

If $f(n) = \Theta(n^{\log_b a})$, then the solution of the recurrence is $T(n) = \Theta(n^{\log_b a} \log n)$.

Case 3: If $n^{\log_b a}$ is polynomially smaller than $f(n)$, then Case 3 of the master theorem holds, which can be stated as:

If $f(n) = \Omega(n^{\log_b a + \epsilon})$ for some constant $\epsilon > 0$, and $a f(n/b) \le c f(n)$ for some constant $c < 1$ and all sufficiently large n (the regularity condition), then the solution of the recurrence is $T(n) = \Theta(f(n))$.
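When $f(n)$ has the form $\Theta(n^k \log^p n)$, the case analysis above can be mechanised. The Python sketch below is our own illustration; the function `master` and its parameters are hypothetical. It compares $a$ with $b^k$ using exact rational arithmetic instead of computing $\log_b a$ in floating point, and for $p > 0$ it applies the extension of Case 2 used in the solutions to parts o), p) and r): if $f(n) = \Theta(n^{\log_b a} \log^p n)$ then $T(n) = \Theta(n^{\log_b a} \log^{p+1} n)$. It covers only this family of driving functions and assumes integers $k, p \ge 0$.

```python
from fractions import Fraction


def master(a, b, k, p=0):
    """Classify T(n) = a*T(n/b) + Theta(n^k * log^p n) by the master method.

    a >= 1 and b > 1 may be ints or Fractions; k and p must be non-negative
    ints. Because x -> b**x is increasing for b > 1, comparing a with b**k
    compares log_b(a) with k exactly, with no floating-point rounding.
    Returns (case, solution) where solution is a human-readable string.
    """
    a, b = Fraction(a), Fraction(b)
    if a < 1 or b <= 1 or k < 0 or p < 0:
        raise ValueError("need a >= 1, b > 1, k >= 0, p >= 0")
    b_to_k = b ** k
    if a > b_to_k:
        # log_b(a) > k: f(n) is polynomially smaller than n^(log_b a), Case 1.
        return 1, f"Theta(n^(log_{b} {a}))"
    if a == b_to_k:
        # log_b(a) == k: Case 2 (with the log^p extension) adds one log factor.
        return 2, f"Theta(n^{k} log^{p + 1} n)"
    # log_b(a) < k: Case 3. Regularity holds since a*f(n/b) <= (a / b**k) * f(n)
    # for large n, and a / b**k < 1.
    return 3, f"Theta(n^{k} log^{p} n)"


if __name__ == "__main__":
    print(master(4, 2, 1))               # part a): Case 1
    print(master(2, 2, 1))               # part d): Case 2
    print(master(1, Fraction(3, 2), 0))  # part f): Case 2
    print(master(3, 4, 1, p=1))          # part i): Case 3
    print(master(4, 2, 2, p=1))          # part o): extended Case 2
```

Working with $b^k$ rather than $\log_b a$ avoids the floating-point rounding that can make two equal exponents look different.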

1.4.2 Substitution Method

1.4.3 Change of Variable Method

1.4.4 Iteration Method

1.4.5 Recursion Tree Method

Tutorial Sheet (Solving Recurrences)

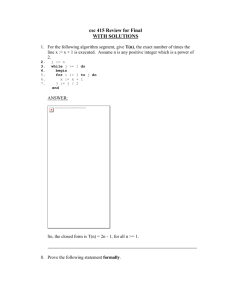

1. Solve the following Recurrences using Master Method:


a) $T(n) = 4T(n/2) + n$
b) $T(n) = 9T(n/3) + n$
c) $T(n) = 8T(n/2) + n$
d) $T(n) = 2T(n/2) + n$
e) $T(n) = T(n/2) + 1$
f) $T(n) = T(2n/3) + 1$
g) $T(n) = 2T(n/2) + n^2$
h) $T(n) = 3T(n/4) + \Theta(n^2)$
i) $T(n) = 3T(n/4) + \Theta(n \log n)$
j) $T(n) = 4T(n/2) + n$
k) $T(n) = 4T(n/2) + n^2$
l) $T(n) = 4T(n/2) + n^3$

m) The running time of an algorithm A is described by the recurrence $T(n) = 7T(n/2) + n^2$. A competing algorithm A' has a running time of $T'(n) = aT'(n/4) + n^2$. What is the largest integer value for a such that A' is asymptotically faster than A?

n) Use the master method to show that the solution to the binary-search recurrence $T(n) = T(n/2) + \Theta(1)$ is $T(n) = \Theta(\log n)$.

o) Can the master method be applied to the recurrence $T(n) = 4T(n/2) + n^2 \log n$? Why or why not? Give an asymptotic upper bound for this recurrence.

p) Can the master method be applied to the recurrence $T(n) = 2T(n/2) + n \log n$? Why or why not? Give an asymptotic upper bound for this recurrence.

q) $T(n) = 5T(n/2) + \Theta(n^2)$

r) $T(n) = 27T(n/3) + \Theta(n^3 \log n)$

s) $T(n) = 5T(n/2) + \Theta(n^3)$

2. Solve the following recurrences using the Substitution Method:

a) Show that the upper bound of $T(n) = 2T(n/2) + n$ is $O(n \log n)$.

b) Show that $T(n) = 2T(n/2 + 17) + n$ is $O(n \log n)$.

c) Show that $T(n) = T(n - 1) + n$ is $O(n^2)$.

3. Solve the following recurrences using the Change of Variable Method:

a) $T(n) = 2T(\sqrt{n}) + \log n$

b) $T(n) = 3T(\sqrt{n}) + \log n$. Your solution should be asymptotically tight. Do not worry about whether values are integral.

c) $T(n) = 2T(\sqrt{n}) + 1$

4. Solve the following recurrences using the Iteration Method:

a) $T(n) = T(n - 1) + 1$ and $T(1) = \Theta(1)$

b) $T(n) = T(n - 1) + n$ and $T(1) = \Theta(1)$


c) $T(n) = 2T(n - 1) + 1$

5. Solve the following recurrences using the Recursion Tree Method:

a) $T(n) = 2T(n/2) + cn$
b) $T(n) = 4T(n/2) + cn$
c) $T(n) = 3T(\lfloor n/2 \rfloor) + n$
d) $T(n) = T(n/3) + T(2n/3) + cn$
e) $T(n) = 3T(\lfloor n/4 \rfloor) + \Theta(n^2)$
f) $T(n) = T(n - 1) + n$
g) $T(n) = 2T(n - 1) + 1$
h) $T(n) = T(n - 1) + 1$
i) $T(n) = T(n/2) + T(n/4) + T(n/8) + n$

Solutions

1. a) Here $a = 4$, $b = 2$, $f(n) = n$, and

$n^{\log_b a} = n^{\log_2 4} = n^2$.

Therefore a constant $\epsilon > 0$ exists such that $f(n) = O(n^{\log_b a - \epsilon})$; for $\epsilon = 1$,

$O(n^{\log_2 4 - \epsilon}) = O(n^{2 - 1}) = O(n)$, and $f(n) = n = O(n)$.

Therefore Case 1 of the master theorem holds and we conclude that the solution of the given recurrence is

$T(n) = \Theta(n^{\log_b a}) = \Theta(n^{\log_2 4}) = \Theta(n^2)$.

b) Here $a = 9$, $b = 3$, $f(n) = n$, and

$n^{\log_b a} = n^{\log_3 9} = n^2$.

Therefore a constant $\epsilon > 0$ exists such that $f(n) = O(n^{\log_b a - \epsilon})$; for $\epsilon = 1$,

$O(n^{\log_3 9 - \epsilon}) = O(n^{2 - 1}) = O(n)$, and $f(n) = n = O(n)$.

Therefore Case 1 of the master theorem holds and we conclude that the solution of the given recurrence is

$T(n) = \Theta(n^{\log_b a}) = \Theta(n^{\log_3 9}) = \Theta(n^2)$.

c) Here $a = 8$, $b = 2$, $f(n) = n$, and

$n^{\log_b a} = n^{\log_2 8} = n^3$.

Therefore a constant $\epsilon > 0$ exists such that $f(n) = O(n^{\log_b a - \epsilon})$; for $\epsilon = 2$,

$O(n^{\log_2 8 - \epsilon}) = O(n^{3 - 2}) = O(n)$, and $f(n) = n = O(n)$.

Therefore Case 1 of the master theorem holds and we conclude that the solution of the given recurrence is

$T(n) = \Theta(n^{\log_b a}) = \Theta(n^{\log_2 8}) = \Theta(n^3)$.

d) Here $a = 2$, $b = 2$, $f(n) = n$, and

$n^{\log_b a} = n^{\log_2 2} = n$.

Here $f(n) = n = \Theta(n^{\log_2 2})$, so Case 2 of the master theorem holds and we conclude that the solution of the given recurrence is

$T(n) = \Theta(n^{\log_b a} \log n) = \Theta(n^{\log_2 2} \log n) = \Theta(n \log n)$.

e) Here $a = 1$, $b = 2$, $f(n) = 1$, and

$n^{\log_b a} = n^{\log_2 1} = n^0 = 1$.

Here $f(n) = 1 = \Theta(n^{\log_2 1}) = \Theta(n^0)$, so Case 2 of the master theorem holds and we conclude that the solution of the given recurrence is

$T(n) = \Theta(n^{\log_b a} \log n) = \Theta(n^{\log_2 1} \log n) = \Theta(n^0 \log n) = \Theta(\log n)$.

f) Here $a = 1$, $b = 3/2$ (since $T(2n/3) = T(n/(3/2))$), $f(n) = 1$, and

$n^{\log_b a} = n^{\log_{3/2} 1} = n^0 = 1$.

Here $f(n) = 1 = \Theta(n^{\log_{3/2} 1}) = \Theta(n^0)$, so Case 2 of the master theorem holds and we conclude that the solution of the given recurrence is

$T(n) = \Theta(n^{\log_b a} \log n) = \Theta(n^{\log_{3/2} 1} \log n) = \Theta(n^0 \log n) = \Theta(\log n)$.

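g) Here $a = 2$, $b = 2$, $f(n) = n^2$, and

$n^{\log_b a} = n^{\log_2 2} = n$.

Therefore a constant $\epsilon > 0$ exists such that $f(n) = \Omega(n^{\log_b a + \epsilon})$; for $\epsilon = 1$,

$\Omega(n^{\log_2 2 + \epsilon}) = \Omega(n^{1 + 1}) = \Omega(n^2)$, and $f(n) = n^2 = \Omega(n^2)$.

The regularity condition also holds: $a f(n/b) = 2(n/2)^2 = \tfrac{1}{2} n^2$, so $c = 1/2 < 1$.

Therefore Case 3 of the master theorem holds and we conclude that the solution of the given recurrence is

$T(n) = \Theta(f(n)) = \Theta(n^2)$.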

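h) Here $a = 3$, $b = 4$, $f(n) = n^2$, and

$n^{\log_b a} = n^{\log_4 3} \approx n^{0.793}$.

Therefore a constant $\epsilon > 0$ exists such that $f(n) = \Omega(n^{\log_b a + \epsilon})$; for $\epsilon = 1$,

$\Omega(n^{\log_4 3 + 1}) \approx \Omega(n^{1.793})$, and $f(n) = n^2 = \Omega(n^{1.793})$.

The regularity condition also holds: $a f(n/b) = 3(n/4)^2 = \tfrac{3}{16} n^2$, so $c = 3/16 < 1$.

Therefore Case 3 of the master theorem holds and we conclude that the solution of the given recurrence is

$T(n) = \Theta(f(n)) = \Theta(n^2)$.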

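i) Here $a = 3$, $b = 4$, $f(n) = n \log n$, and

$n^{\log_b a} = n^{\log_4 3} \approx n^{0.793}$.

Therefore a constant $\epsilon > 0$ exists such that $f(n) = \Omega(n^{\log_b a + \epsilon})$; for $\epsilon = 0.2$,

$\Omega(n^{\log_4 3 + 0.2}) \approx \Omega(n^{0.993})$, and $f(n) = n \log n = \Omega(n^{0.993})$.

The regularity condition also holds: $a f(n/b) = 3(n/4)\log(n/4) \le \tfrac{3}{4} n \log n$, so $c = 3/4 < 1$.

Therefore Case 3 of the master theorem holds and we conclude that the solution of the given recurrence is

$T(n) = \Theta(f(n)) = \Theta(n \log n)$.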

j) $T(n) = 4T(n/2) + n$

Here $a = 4$, $b = 2$, $f(n) = n$. Therefore $n^{\log_b a} = n^{\log_2 4} = n^2$.

Since $f(n) = O(n^{\log_b a - \epsilon})$ with $\epsilon = 1$, Case 1 of the master theorem holds and $T(n) = \Theta(n^{\log_b a})$:

$T(n) = \Theta(n^2)$.

k) $T(n) = 4T(n/2) + n^2$

Here $a = 4$, $b = 2$, $f(n) = n^2$. Therefore $n^{\log_b a} = n^{\log_2 4} = n^2$.

Since $f(n) = \Theta(n^{\log_2 4}) = \Theta(n^2)$, Case 2 of the master theorem holds and the solution is

$T(n) = \Theta(n^{\log_b a} \log n)$

$T(n) = \Theta(n^2 \log n)$.

l) $T(n) = 4T(n/2) + n^3$

Here $a = 4$, $b = 2$, $f(n) = n^3$. Therefore $n^{\log_b a} = n^{\log_2 4} = n^2$.

Since $f(n) = \Omega(n^{\log_b a + \epsilon})$ with $\epsilon = 1$, and the regularity condition holds ($4(n/2)^3 = \tfrac{1}{2} n^3$, so $c = 1/2 < 1$), Case 3 of the master theorem holds and the solution is $T(n) = \Theta(f(n))$:

$T(n) = \Theta(n^3)$.

m) Algorithm A is given by $T(n) = 7T(n/2) + n^2$.

Here $a = 7$, $b = 2$, $f(n) = n^2$, so $n^{\log_b a} = n^{\log_2 7}$, where $\log_2 7 \approx 2.807$. Since $f(n) = O(n^{\log_2 7 - \epsilon})$ for any constant $0 < \epsilon \le \log_2 7 - 2 \approx 0.807$, Case 1 of the master theorem holds and

$T(n) = \Theta(n^{\log_2 7})$  --------(1)

For algorithm A', $T'(n) = aT'(n/4) + n^2$, so we compare $n^{\log_4 a}$ with $f(n) = n^2$:

If $a < 16$, then $\log_4 a < 2$ and Case 3 holds (regularity: $a(n/4)^2 = \tfrac{a}{16} n^2$ with $a/16 < 1$), giving $T'(n) = \Theta(n^2)$.

If $a = 16$, then $\log_4 16 = 2$ and Case 2 holds, giving $T'(n) = \Theta(n^2 \log n)$.

If $a > 16$, then $\log_4 a > 2$ and Case 1 holds, giving $T'(n) = \Theta(n^{\log_4 a})$.  --------(2)

Comparing (1) and (2), A' is asymptotically faster than A exactly when $\log_4 a < \log_2 7$ (for $a \le 16$ this is automatic, since $n^2 \log n$ grows more slowly than $n^{2.807}$). Since $\log_4 a = \tfrac{1}{2}\log_2 a$, the condition is $\log_2 a < 2\log_2 7 = \log_2 49$, i.e., $a < 49$. For $a = 49$ both algorithms run in $\Theta(n^{\log_2 7})$, so A' is not asymptotically faster.

Therefore the largest integer value of a for which A' is asymptotically faster than A is $a = 48$.

n) Here $a = 1$, $b = 2$, $f(n) = \Theta(1)$. Therefore $n^{\log_b a} = n^{\log_2 1} = n^0 = 1$.

Here $f(n) = \Theta(n^{\log_b a}) = \Theta(1)$, so Case 2 of the master theorem holds, and the solution of the recurrence can be given as

$T(n) = \Theta(n^{\log_b a} \log n) = \Theta(1 \cdot \log n) = \Theta(\log n)$.

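o) For $T(n) = 4T(n/2) + n^2 \log n$: $a = 4$, $b = 2$, $f(n) = n^2 \log n$, and $n^{\log_b a} = n^{\log_2 4} = n^2$.

No, the master method as stated above cannot be applied. Here f(n) is asymptotically larger than $n^{\log_b a}$, but not polynomially larger, because $f(n)/n^{\log_b a} = \log n$ is smaller than $n^{\epsilon}$ for every constant $\epsilon > 0$. This case falls into the gap between Case 2 and Case 3. The solution of this recurrence can be given by the following extension of Case 2:

If $f(n) = \Theta(n^{\log_b a} \log^k n)$, where $k \ge 0$, then the master recurrence has solution $T(n) = \Theta(n^{\log_b a} \log^{k+1} n)$.

Here $k = 1$, so the solution of the given recurrence is

$T(n) = \Theta(n^2 \log^2 n)$.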

p) For $T(n) = 2T(n/2) + n \log n$: $a = 2$, $b = 2$, $f(n) = n \log n$, and $n^{\log_b a} = n^{\log_2 2} = n$.

No, the master method as stated above cannot be applied. Here f(n) is asymptotically larger than $n^{\log_b a}$, but not polynomially larger, because $f(n)/n^{\log_b a} = \log n$. This case falls into the gap between Case 2 and Case 3. The solution of this recurrence can be given by the following extension of Case 2:

If $f(n) = \Theta(n^{\log_b a} \log^k n)$, where $k \ge 0$, then the master recurrence has solution $T(n) = \Theta(n^{\log_b a} \log^{k+1} n)$.

Here $k = 1$, so the solution of the given recurrence is

$T(n) = \Theta(n \log^2 n)$.

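q) Here $a = 5$, $b = 2$, $f(n) = n^2$, and $n^{\log_b a} = n^{\log_2 5}$, where $2 < \log_2 5 \approx 2.32 < 3$. Therefore Case 1 of the master theorem holds, since a constant $\epsilon > 0$ exists such that $f(n) = O(n^{\log_b a - \epsilon})$; for example, with $\epsilon = \log_2 5 - 2 \approx 0.32$,

$O(n^{\log_2 5 - \epsilon}) = O(n^2)$, and $f(n) = n^2 = O(n^2)$.

Therefore $T(n) = \Theta(n^{\log_b a}) = \Theta(n^{\log_2 5})$.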

r) Here $a = 27$, $b = 3$, $f(n) = n^3 \log n$, and $n^{\log_b a} = n^{\log_3 27} = n^3$. Here f(n) is asymptotically larger than $n^{\log_b a}$, but not polynomially larger. This case falls into the gap between Case 2 and Case 3. The solution of this recurrence can be given by the following extension of Case 2:

If $f(n) = \Theta(n^{\log_b a} \log^k n)$, where $k \ge 0$, then the master recurrence has solution $T(n) = \Theta(n^{\log_b a} \log^{k+1} n)$.

Here $k = 1$, so the solution of the given recurrence is

$T(n) = \Theta(n^3 \log^2 n)$.

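s) Here $a = 5$, $b = 2$, $f(n) = n^3$, and

$n^{\log_b a} = n^{\log_2 5} \approx n^{2.32}$, since $2 < \log_2 5 < 3$.

Therefore a constant $\epsilon > 0$ exists such that $f(n) = \Omega(n^{\log_b a + \epsilon})$ (for example, $\epsilon = 3 - \log_2 5 \approx 0.68$). The regularity condition also holds: $5(n/2)^3 = \tfrac{5}{8} n^3$, so $c = 5/8 < 1$.

Therefore Case 3 of the master theorem holds and we conclude that the solution of the given recurrence is

$T(n) = \Theta(f(n)) = \Theta(n^3)$.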

2. a) Here we guess that the solution is $T(n) = O(n \log n)$. Our method is to prove that $T(n) \le cn \log n$ for an appropriate choice of the constant $c > 0$ (logarithms are to base 2).

The given recurrence is

$T(n) = 2T(n/2) + n$  -------- (1)

and the solution we guess is

$T(n) \le cn \log n$  -------- (2)

Since we need $T(n/2)$ to substitute in equation (1), we calculate it from equation (2) by replacing n with n/2:

$T(n/2) \le c(n/2) \log(n/2)$  --------- (3)

Now substituting the value of $T(n/2)$ from equation (3) into recurrence relation (1) we get

$T(n) \le 2\left(c(n/2) \log(n/2)\right) + n$
$= cn(\log n - \log 2) + n$
$= cn(\log n - 1) + n$
$= cn \log n - cn + n$
$\le cn \log n$,

where the last step holds as long as $c \ge 1$. For the boundary condition, note that $cn \log n = 0$ at $n = 1$, so the bound is checked from $n_0 = 2$ instead: with $T(1) = 1$ and n/2 rounded down, $T(2) = 4 \le c \cdot 2 \log 2$ and $T(3) = 5 \le c \cdot 3 \log 3$ for $c \ge 2$.

Therefore the upper bound for $T(n) = 2T(n/2) + n$ is $T(n) = O(n \log n)$ (for example, with $c = 2$ and $n_0 = 2$).
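The substitution argument can be spot-checked numerically. The Python sketch below assumes $T(1) = 1$ and rounds $n/2$ down (both are assumptions for illustration), tabulates the recurrence, and checks the guessed bound $T(n) \le cn \log_2 n$ for $2 \le n \le 4096$. This is not a proof, but it shows why the boundary condition matters: the bound holds with $c = 2$, while $c = 1$ already fails at $n = 2$ even though the inductive step works for any $c \ge 1$.

```python
import math


def t_table(n_max):
    """Tabulate T(n) = 2*T(n // 2) + n for 1 <= n <= n_max, assuming T(1) = 1."""
    t = [0] * (n_max + 1)
    t[1] = 1
    for n in range(2, n_max + 1):
        t[n] = 2 * t[n // 2] + n  # n/2 rounded down, as with integer sizes
    return t


def bound_holds(t, c, n0=2):
    """Check T(n) <= c * n * log2(n) for every n0 <= n < len(t)."""
    return all(t[n] <= c * n * math.log2(n) for n in range(n0, len(t)))


if __name__ == "__main__":
    t = t_table(1 << 12)
    print(bound_holds(t, c=2))  # the guess with c = 2, n0 = 2
    print(bound_holds(t, c=1))  # fails at the boundary: T(2) = 4 > 2
```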

b) Here we again guess the solution $T(n) = O(n \log n)$. Our method is to prove that $T(n) \le cn \log n$ for an appropriate choice of the constant $c > 0$.

The given recurrence is

$T(n) = 2T(n/2 + 17) + n$  -------- (1)

and the solution we guess is

$T(n) \le cn \log n$  -------- (2)

Since we need $T(n/2 + 17)$ to substitute in equation (1), we calculate it from equation (2) by replacing n with n/2 + 17:

$T(n/2 + 17) \le c(n/2 + 17) \log(n/2 + 17)$  --------- (3)

Now substituting the value of $T(n/2 + 17)$ from equation (3) into recurrence relation (1) we get

$T(n) \le 2c(n/2 + 17) \log(n/2 + 17) + n = (cn + 34c) \log(n/2 + 17) + n$.

Informally, when n is very large, $n/2 + 17$ behaves like $n/2$ and $cn + 34c$ behaves like $cn$, so, approximately,

$T(n) \le cn \log(n/2) + n = cn(\log n - 1) + n = cn \log n - cn + n \le cn \log n$ for $c \ge 1$.

Dropping the constants 17 and 34c is only a heuristic. A rigorous version strengthens the guess to $T(n) \le c(n - 34)\log(n - 34)$: substituting gives $T(n) \le 2c(n/2 - 17)\log(n/2 - 17) + n = c(n - 34)\log(n - 34) - c(n - 34) + n$, which is at most $c(n - 34)\log(n - 34)$ whenever $c(n - 34) \ge n$, for example for $c = 2$ and $n \ge 68$. Since $c(n - 34)\log(n - 34) \le cn \log n$, this gives the same bound.

Therefore the upper bound for $T(n) = 2T(n/2 + 17) + n$ is $T(n) = O(n \log n)$.

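c) Here we must show that $T(n) = O(n^2)$, i.e., that $T(n) \le cn^2$ for a suitable constant $c > 0$. Assuming the bound holds for $n - 1$,

$T(n - 1) \le c(n - 1)^2 = c(n^2 - 2n + 1)$.

Placing this value of $T(n - 1)$ in the given recurrence $T(n) = T(n - 1) + n$ we get

$T(n) \le c(n^2 - 2n + 1) + n$
$= cn^2 - 2cn + c + n$
$= cn^2 - \left((2c - 1)n - c\right)$
$\le cn^2$,

where the last step holds whenever $(2c - 1)n \ge c$, for example for $c \ge 1$ and $n \ge 1$. Therefore

$T(n) = O(n^2)$.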

3. a) At first sight the recurrence $T(n) = 2T(\sqrt{n}) + \log n$ looks difficult, but we can simplify it by changing (renaming) variables. Here we assume that $\sqrt{n}$ is an integer.

Introduce a new variable $m = \log n$, which implies $n = 2^m$. This yields

$T(2^m) = 2T(2^{m/2}) + m$.

We now rename $S(m) = T(2^m)$ to produce the new recurrence

$S(m) = 2S(m/2) + m$  --------(1)

This recurrence has the same form as $T(n) = 2T(n/2) + n$, which has the solution $O(n \log n)$. Therefore recurrence (1) has the same solution

$S(m) = O(m \log m)$.

Now changing back from S(m) to T(n), we obtain

$T(n) = T(2^m) = S(m) = O(m \log m) = O(\log n \log \log n)$.

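b) With $m = \log n$ and $S(m) = T(2^m)$, the recurrence becomes $S(m) = 3S(m/2) + m$. By Case 1 of the master method ($m^{\log_2 3}$ is polynomially larger than m), $S(m) = \Theta(m^{\log_2 3})$, so $T(n) = \Theta((\log n)^{\log_2 3})$.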

c) At first sight the recurrence $T(n) = 2T(\sqrt{n}) + 1$ looks difficult, but we can simplify it by changing (renaming) variables. Here we assume that $\sqrt{n}$ is an integer.

Introduce a new variable $m = \log n$, which implies $n = 2^m$. This yields

$T(2^m) = 2T(2^{m/2}) + 1$.

We now rename $S(m) = T(2^m)$ to produce the new recurrence

$S(m) = 2S(m/2) + 1$  --------(1)

This recurrence has the same form as $T(n) = 2T(n/2) + 1$, which has the solution $O(n)$. Therefore recurrence (1) has the same solution

$S(m) = O(m)$.

Now changing back from S(m) to T(n), we obtain

$T(n) = T(2^m) = S(m) = O(m) = O(\log n)$.
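The change of variable can be checked by evaluating the recurrence directly on inputs where every square root is exact. The Python sketch below assumes the base case $T(2) = 1$ (our assumption) and restricts n to values of the form $2^{2^k}$. Under that assumption $S(m) = 2S(m/2) + 1$ with $S(1) = 1$ gives $S(m) = 2m - 1$, so the code should return $2\log_2 n - 1$, which is $\Theta(\log n)$.

```python
import math


def t_sqrt(n):
    """T(n) = 2*T(sqrt(n)) + 1 with T(2) = 1, for n of the form 2**(2**k)."""
    if n == 2:
        return 1
    r = math.isqrt(n)  # exact integer square root
    if r * r != n:
        raise ValueError("n must be of the form 2**(2**k)")
    return 2 * t_sqrt(r) + 1


if __name__ == "__main__":
    for k in range(6):
        m = 2 ** k  # m = log2(n)
        print(f"log2 n = {m}: T(n) = {t_sqrt(2 ** m)}, 2*log2(n) - 1 = {2 * m - 1}")
```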

4. a) $T(n) = T(n - 1) + 1$

Since $T(n - 1) = T(n - 2) + 1$,
$T(n) = T(n - 2) + 1 + 1 = T(n - 2) + 2$.

Since $T(n - 2) = T(n - 3) + 1$,
$T(n) = T(n - 3) + 1 + 2 = T(n - 3) + 3$.

Since $T(n - 3) = T(n - 4) + 1$,
$T(n) = T(n - 4) + 1 + 3 = T(n - 4) + 4$.

………………………..

Continuing in this way, after k steps

$T(n) = T(n - k) + k$.

When $k = n - 1$,

$T(n) = T(n - (n - 1)) + n - 1 = T(1) + n - 1 = \Theta(1) + n - 1$,

so $T(n) = \Theta(n)$.

b) $T(n) = T(n - 1) + n$

Since $T(n - 1) = T(n - 2) + (n - 1)$,
$T(n) = T(n - 2) + n + (n - 1)$.

Since $T(n - 2) = T(n - 3) + (n - 2)$,
$T(n) = T(n - 3) + n + (n - 1) + (n - 2)$.

Since $T(n - 3) = T(n - 4) + (n - 3)$,
$T(n) = T(n - 4) + n + (n - 1) + (n - 2) + (n - 3)$.

……………………………………………..

Continuing in this way, after k steps

$T(n) = T(n - k) + n + (n - 1) + (n - 2) + \dots + (n - (k - 1))$.

For $k = n - 1$,

$T(n) = T(1) + n + (n - 1) + (n - 2) + \dots + 2$.

Here $T(1) = \Theta(1)$ and $n + (n - 1) + \dots + 2 = \frac{n(n + 1)}{2} - 1$, so

$T(n) = \Theta(1) + \frac{n(n + 1)}{2} - 1$

$T(n) = \Theta(n^2)$.

c) $T(n) = 2T(n - 1) + 1$

Since $T(n - 1) = 2T(n - 2) + 1$,
$T(n) = 2[2T(n - 2) + 1] + 1 = 2^2 T(n - 2) + 1 + 2$.

Since $T(n - 2) = 2T(n - 3) + 1$,
$T(n) = 2^2[2T(n - 3) + 1] + 1 + 2 = 2^3 T(n - 3) + 1 + 2 + 2^2$.

Since $T(n - 3) = 2T(n - 4) + 1$,
$T(n) = 2^3[2T(n - 4) + 1] + 1 + 2 + 2^2 = 2^4 T(n - 4) + 1 + 2 + 2^2 + 2^3$.

…………………………………….

Continuing in this way, after k steps

$T(n) = 2^k T(n - k) + 1 + 2 + 2^2 + \dots + 2^{k-1} = 2^k T(n - k) + 2^k - 1$.

For $k = n - 1$,

$T(n) = 2^{n-1} T(1) + 2^{n-1} - 1$,

and since $T(1) = \Theta(1)$,

$T(n) = \Theta(2^n)$.
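The closed forms obtained by iteration can be checked by evaluating the recurrences bottom-up. The Python sketch below (the helper `unroll` is hypothetical) assumes a concrete base value $T(1) = 1$ for the $\Theta(1)$ base case and compares each recurrence of Question 4 with its closed form: $T(1) + n - 1$, $T(1) + n(n + 1)/2 - 1$ and $2^{n-1}T(1) + 2^{n-1} - 1$.

```python
def unroll(step, t1, n):
    """Compute T(n) bottom-up from T(1) = t1 and T(k) = step(T(k - 1), k)."""
    t = t1
    for k in range(2, n + 1):
        t = step(t, k)
    return t


if __name__ == "__main__":
    t1 = 1  # assumed value of the Theta(1) base case
    for n in (1, 2, 5, 10, 20):
        a = unroll(lambda t, k: t + 1, t1, n)      # 4 a): T(n) = T(n-1) + 1
        b = unroll(lambda t, k: t + k, t1, n)      # 4 b): T(n) = T(n-1) + n
        c = unroll(lambda t, k: 2 * t + 1, t1, n)  # 4 c): T(n) = 2T(n-1) + 1
        # Closed forms from the iteration method, specialised to T(1) = t1:
        assert a == t1 + n - 1
        assert b == t1 + n * (n + 1) // 2 - 1
        assert c == 2 ** (n - 1) * t1 + 2 ** (n - 1) - 1
    print("closed forms agree")
```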

5. a) Step 1: Draw the recursion tree for $T(n) = 2T(n/2) + cn$.

[Figure: recursion tree for $T(n) = 2T(n/2) + cn$]

Step 2: Calculate the height of the tree (the lowest level of the tree). The subproblem size at level i is $n/2^i$, and it reaches 1 when

$n/2^i = 1$, which implies $n = 2^i$, therefore $i = \log_2 n$.

Thus the height of the tree is $\log_2 n$. We know that

Total levels of the tree = Height of the tree + 1,

therefore the total number of levels of the tree is $\log_2 n + 1$.

Step 3: Cost at each level of the tree. Level i has $2^i$ nodes, each costing $cn/2^i$, so from the recursion tree of $T(n) = 2T(n/2) + cn$ we conclude that the cost of each level is cn.

Step 4: Total cost of the recursion tree.

Total cost = Total no. of levels × cost of each level
$= (\log_2 n + 1) \times cn$
$= cn \log_2 n + cn$.

By ignoring the lower-order term we get

Total cost $= cn \log_2 n$

$T(n) = \Theta(n \log_2 n)$.
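The level-by-level bookkeeping of the recursion tree method can be written as a short loop. The Python sketch below (the helper `tree_level_costs` is hypothetical) assumes n is an exact power of b, $T(1) = 1$ and $c = 1$; it lists the cost of each level, so summing the list gives the total cost of the tree. For part a) every level costs n; for part b) the level costs double from one level to the next and the leaves alone cost $n^2$.

```python
def tree_level_costs(n, a, b, f, leaf_cost=1):
    """Level-by-level cost of the recursion tree for T(n) = a*T(n/b) + f(n).

    Assumes (for this sketch) that n is an exact power of b and T(1) = leaf_cost.
    Level i (the root is level 0) has a**i nodes of size n // b**i; internal
    levels cost (number of nodes) x f(size), the last level costs leaves x T(1).
    """
    costs = []
    size, nodes = n, 1
    while size > 1:
        costs.append(nodes * f(size))  # internal level
        size //= b
        nodes *= a
    costs.append(nodes * leaf_cost)  # last level: the leaves
    return costs


if __name__ == "__main__":
    n = 1024
    # 5 a) with c = 1: each of the log2(n) + 1 levels costs n.
    print(tree_level_costs(n, 2, 2, lambda m: m))
    # 5 b) with c = 1: level costs double, and the leaves cost n^2.
    print(tree_level_costs(n, 4, 2, lambda m: m))
```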

b) Step 1: The recursion tree for the recursive relation $T(n) = 4T(n/2) + cn$ can be easily drawn (try it yourself): every node has 4 children, and a node of size m costs cm.

Step 2: Calculate the height of the tree (the lowest level of the tree).

$n/2^i = 1$, which implies $n = 2^i$, therefore $i = \log_2 n$ (the lowest level i is the height of the tree).

Thus the height of the tree is $\log_2 n$. We know the total levels of the tree = height of the tree + 1, therefore the total number of levels of the tree is $\log_2 n + 1$.

Steps 3 & 4: The last level has $4^{\log_2 n}$ leaves, so

Total cost of last level = Total number of leaves × Cost of each leaf
$= 4^{\log_2 n} \times T(1)$
$= n^{\log_2 4} \times T(1)$
$= c'n^2$, where $c' = T(1)$,
$= O(n^2)$.

Cost of each level = Cost of each node at that level × Total number of nodes at that level. Level i has $4^i$ nodes, each costing $cn/2^i$, so level i costs $2^i cn$. Summing over levels $0, 1, \dots, \log_2 n - 1$:

$cn + 2cn + 4cn + 8cn + \dots + 2^{\log_2 n - 1} cn = cn(2^0 + 2^1 + 2^2 + 2^3 + \dots + 2^{\log_2 n - 1})$.

This is a geometric series, so it equals

$cn \cdot \frac{2^{\log_2 n} - 1}{2 - 1} = cn(n^{\log_2 2} - 1) = cn(n - 1)$.

Total cost of tree = Cost of levels $0, 1, 2, \dots, \log_2 n - 1$ + Cost of last level
$= cn(n - 1) + c'n^2$
$= (c + c')n^2 - cn$
$= O(n^2)$.

Since the last level alone already costs $\Theta(n^2)$, a tight asymptotic bound for the given recurrence $T(n) = 4T(n/2) + cn$ can be given as

$T(n) = \Theta(n^2)$.


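c) Step 1: Draw the recursion tree for $T(n) = 3T(\lfloor n/2 \rfloor) + n$.

[Figure: recursion tree for $T(n) = 3T(\lfloor n/2 \rfloor) + n$]

Step 2: Calculate the height of the tree (the lowest level of the tree).

$n/2^i = 1$, which implies $n = 2^i$, therefore $i = \log_2 n$ (the lowest level i is the height of the tree).

Thus the height of the tree is $\log_2 n$, and the total number of levels of the tree is $\log_2 n + 1$.

Step 3: Total cost of last level = Total number of leaves × Cost of each leaf
$= 3^{\log_2 n} \times T(1)$
$= n^{\log_2 3} \times T(1) = O(n^{\log_2 3})$.

Cost of each level = Cost of each node at that level × Total number of nodes at that level. Level i has $3^i$ nodes, each costing about $n/2^i$, so level i costs $(3/2)^i n$.

Total cost of recursion tree = cost of levels $0, 1, 2, \dots, \log_2 n - 1$ + cost of last level
$= \sum_{i=0}^{\log_2 n - 1} (3/2)^i n + O(n^{\log_2 3})$
$= \frac{(3/2)^{\log_2 n} - 1}{3/2 - 1} \, n + O(n^{\log_2 3})$
$= 2\left(n^{\log_2 3 - 1} - 1\right) n + O(n^{\log_2 3})$
$= 2n^{\log_2 3} - 2n + O(n^{\log_2 3})$.

Therefore, the solution of the recurrence $T(n) = 3T(\lfloor n/2 \rfloor) + n$ is $T(n) = O(n^{\log_2 3})$.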


d) Step 1: Draw the recursion tree for $T(n) = T(n/3) + T(2n/3) + cn$.

[Figure: recursion tree for $T(n) = T(n/3) + T(2n/3) + cn$]

Step 2: Calculate the height of the tree (the lowest level of the tree). Here there are two orders of reduction, 1/3 and 2/3. Among these two we have to take the order of reduction that goes to the deeper level, since that decides the height of the tree. Therefore, among 1/3 and 2/3, we select 2/3, since it reaches deeper than 1/3. The longest path ends when

$n(2/3)^i = 1$, which implies $n = (3/2)^i$, therefore $i = \log_{3/2} n$.

Total levels of the tree = Height of the tree + 1, therefore the total number of levels of the tree is $\log_{3/2} n + 1$.

Step 3: Cost at each level of the tree. From the recursion tree of $T(n) = T(n/3) + T(2n/3) + cn$ we can conclude that each complete level costs cn (for example, level 1 costs $c(n/3) + c(2n/3) = cn$); the lower levels, where some branches have already reached the leaves, cost at most cn.

Step 4: Total cost of the recursion tree.

Total cost ≤ Total no. of levels × cost of each level
$= (\log_{3/2} n + 1) \times cn$
$= cn \log_{3/2} n + cn$.

By ignoring the lower-order term and changing the base of the logarithm (which changes only a constant factor), we get $T(n) = O(n \log n)$. Moreover, the shortest path (following the 1/3 branch) has $\log_3 n$ levels, and every level above it is complete and costs cn, so $T(n) \ge cn \log_3 n$, i.e., $T(n) = \Omega(n \log n)$. Hence

$T(n) = \Theta(n \log n)$.

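e) Step 1: Draw the recursion tree for $T(n) = 3T(\lfloor n/4 \rfloor) + \Theta(n^2)$, writing the $\Theta(n^2)$ term as $cn^2$.

[Figure: recursion tree for $T(n) = 3T(\lfloor n/4 \rfloor) + cn^2$]

Step 2: Calculate the height of the tree (the lowest level of the tree).

$n/4^i = 1$, which implies $n = 4^i$, therefore $i = \log_4 n$ (the lowest level i is equal to the height of the tree).

Thus the height of the tree is $\log_4 n$. Total levels of the tree = Height of the tree + 1, therefore the total number of levels of the tree is $\log_4 n + 1$.

Step 3: Total cost of last level = Total number of leaves × Cost of each leaf
$= 3^{\log_4 n} \times T(1)$
$= n^{\log_4 3} \times T(1) = O(n^{\log_4 3})$.

Cost of each level = Cost of each node at that level × Total number of nodes at that level. Level i has $3^i$ nodes, each costing $c(n/4^i)^2$, so level i costs $(3/16)^i cn^2$.

Total cost of recursion tree = cost of levels $0, 1, 2, \dots, \log_4 n - 1$ + cost of last level
$= \sum_{i=0}^{\log_4 n - 1} (3/16)^i cn^2 + O(n^{\log_4 3})$
$< \frac{1}{1 - 3/16} cn^2 + O(n^{\log_4 3}) = \frac{16}{13} cn^2 + O(n^{\log_4 3})$.

The highest-degree term here is $n^2$ (since $\log_4 3 < 1 < 2$), i.e., $T(n) = O(n^2)$. Since the root alone costs $cn^2$, this bound is tight: $T(n) = \Theta(n^2)$.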

f) Step 1: Draw the recursion tree for $T(n) = T(n - 1) + n$.

[Figure: recursion tree for $T(n) = T(n - 1) + n$]

Step 2: Calculate the height of the tree (the lowest level of the tree). From the recursion tree it can be easily noted that the tree has n levels, since at each step the problem size is reduced by only one.

Step 3: Cost at each level of the tree. From the recursion tree of $T(n) = T(n - 1) + n$ the level costs are $n, n - 1, n - 2, \dots, 2$ and, at the last level, $T(1)$, which we take as 1.

Step 4: Total cost of the recursion tree. The total cost of the recursion tree is

$n + (n - 1) + (n - 2) + (n - 3) + \dots + 2 + 1$.

We know that the sum of this series is $\frac{n(n + 1)}{2}$. Therefore, we finally get the solution $T(n) = \Theta(n^2)$.

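h) Hint: The recursion tree for $T(n) = T(n - 1) + 1$ is a single path. The cost of the last level is 1, and the cost of the remaining levels is $n - 1$ (1 per level).

Total cost $= (n - 1) + 1 = n$

$T(n) = \Theta(n)$.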

g) Step 1: Draw the recursion tree for $T(n) = 2T(n - 1) + 1$.

[Figure: recursion tree for $T(n) = 2T(n - 1) + 1$]

Step 2: Calculate the height of the tree (the lowest level of the tree). From the recursion tree it can be easily noted that the tree has n levels (levels $0, 1, \dots, n - 1$), since at each step the problem size is reduced by only one.

Step 3: Cost at each level of the tree. Level i has $2^i$ nodes, each costing 1, so the last level (level $n - 1$) has $2^{n-1}$ nodes.

Total cost of last level = Total number of leaves × Cost of each leaf
$= 2^{n-1} \times T(1)$
$= O(2^n)$.

Cost of each level = Cost of each node at that level × Total number of nodes at that level $= 2^i$ for level i.

Step 4: Total cost of the recursion tree.

Total cost of recursion tree = cost of levels $0, 1, 2, \dots, n - 2$ + cost of last level
$= (2^0 + 2^1 + \dots + 2^{n-2}) + O(2^n)$
$= 2^{n-1} - 1 + O(2^n)$.

Therefore, the solution of the recurrence $T(n) = 2T(n - 1) + 1$ is

$T(n) = \Theta(2^n)$.

i) Step 1: Draw the recursion tree for $T(n) = T(n/2) + T(n/4) + T(n/8) + n$.

[Figure: recursion tree for $T(n) = T(n/2) + T(n/4) + T(n/8) + n$]

Step 2: Calculate the height of the tree (the lowest level of the tree). There are three orders of reduction, 1/2, 1/4 and 1/8. Among these three we have to take the order of reduction that goes to the deepest level, since that decides the height of the tree. Therefore, among 1/2, 1/4 and 1/8, we select 1/2, since it reaches deeper than 1/4 and 1/8.

$n/2^i = 1$, therefore $i = \log_2 n$.

Thus the height of the tree is $\log_2 n$. We know that total levels of the tree = height of the tree + 1, therefore the total number of levels of the tree is $\log_2 n + 1$.

Step 3: Cost at each level of the tree. The root costs n. Its three children cost $n/2 + n/4 + n/8 = \tfrac{7}{8} n$, and in general the nodes at level i cost at most $(7/8)^i n$ in total (levels near the bottom cost even less, because the 1/4 and 1/8 branches reach the leaves first).

Note that the tree is not complete: the 1/4 and 1/8 branches stop early, so there are far fewer than $3^{\log_2 n}$ leaves, and counting $3^{\log_2 n} = n^{\log_2 3}$ leaves would overestimate the cost.

Step 4: Total cost of the recursion tree. Summing the geometric series,

$T(n) \le \sum_{i=0}^{\infty} (7/8)^i n = \frac{1}{1 - 7/8} n = 8n$,

and $T(n) \ge n$ because of the root alone.

Therefore, the solution of the recurrence $T(n) = T(n/2) + T(n/4) + T(n/8) + n$ is

$T(n) = \Theta(n)$.
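The bound for part i) can be spot-checked by tabulating the recurrence. The Python sketch below rounds the subproblem sizes down and assumes $T(0) = T(1) = 1$ (both are assumptions for illustration). With these choices one can show by induction that $n \le T(n) \le 8n$ for all $n \ge 1$, so the recurrence grows linearly; the sketch checks this over a finite range and prints the ratio $T(n)/n$ for a few sizes.

```python
def t_three_way(n_max):
    """Tabulate T(n) = T(n//2) + T(n//4) + T(n//8) + n for 0 <= n <= n_max.

    Base case assumed for this sketch: T(0) = T(1) = 1.
    """
    t = [1] * (n_max + 1)
    for n in range(2, n_max + 1):
        t[n] = t[n // 2] + t[n // 4] + t[n // 8] + n
    return t


if __name__ == "__main__":
    t = t_three_way(1 << 20)
    for n in (1 << 10, 1 << 15, 1 << 20):
        print(n, t[n] / n)  # stays at most 8, consistent with T(n) = Theta(n)
```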
