Lecture 17-18: The Transport Layer


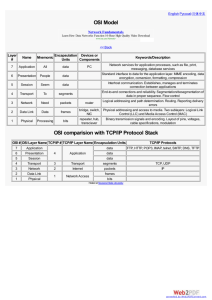

Computer Communications
Required Reading: Tanenbaum (chapter 6 and section 5.3)

Transport Service
• Two types of service: connectionless and connection-oriented
  – Implemented by a transport protocol between the two transport entities
• Interface to upper layers (user processes in the application layer) via transport service primitives (e.g., the socket API in the Internet)
Figure: The network, transport, and application layers.
Figure: The nesting of TPDUs, packets, and frames.

Elements of Transport Protocols
• Addressing
• Multiplexing
• Connection Establishment
• Connection Release
• Flow Control and Buffering
• Congestion Control
• Sequencing
• Error Control
• Crash Recovery

Transport vs. Data Link Protocols
• Transport layer similarities with the data link layer
  – Both need to deal with error control, sequencing, flow control, etc.
• Transport layer differences from the data link layer
  – Needs explicit addressing
  – More complicated initial connection establishment
  – Must deal with the existence of storage capacity in the subnet
  – Greater flow control and buffering requirements due to a large and dynamically varying number of connections
Figure: (a) Environment of the data link layer. (b) Environment of the transport layer.

Addressing
• Transport layer address: Transport Service Access Point (TSAP)
  – “port” (16-bit) in the Internet
• Network layer address: Network Service Access Point (NSAP)
  – “IP address” (32-bit for IPv4) in the Internet
• Mechanisms for knowing TSAPs
  – Well-known TSAPs
  – Initial connection protocol and a special process server (e.g., the Internet daemon, inetd)
  – Name (directory) server
Figure: TSAPs, NSAPs and transport connections.

Multiplexing
• Upward multiplexing: de-multiplex arriving packets (TPDUs) to the corresponding processes using transport addresses
• Downward multiplexing: distribute traffic from a process among multiple network links, e.g., several ISDN lines
Figure: (a) Upward multiplexing. (b) Downward multiplexing.
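Upward multiplexing can be pictured as a table lookup keyed on the transport address. The sketch below is only an illustration: the class name `Demultiplexer`, its methods, and the use of an (IP address, port) tuple as the key are assumptions made for this example, not part of any real protocol stack.

```python
from typing import Callable, Dict, Tuple

Handler = Callable[[bytes], None]


class Demultiplexer:
    """Toy upward multiplexer (hypothetical): maps (NSAP, TSAP) to a process handler."""

    def __init__(self) -> None:
        self._table: Dict[Tuple[str, int], Handler] = {}

    def bind(self, ip: str, port: int, handler: Handler) -> None:
        """Attach a process (represented by a callback) to a transport address."""
        key = (ip, port)
        if key in self._table:
            raise ValueError(f"TSAP {key} already in use")
        self._table[key] = handler

    def deliver(self, dst_ip: str, dst_port: int, payload: bytes) -> bool:
        """Hand an arriving TPDU payload to the bound process; False if nobody listens."""
        handler = self._table.get((dst_ip, dst_port))
        if handler is None:
            return False
        handler(payload)
        return True
```

A process would call `bind` once, and the transport entity would call `deliver` for every arriving TPDU.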
The Internet Transport Protocols: UDP
• User Datagram Protocol (UDP)
  – RFC 768
  – Connectionless protocol for sending and receiving encapsulated datagrams (application data wrapped with a short header and carried in IP packets)
    » In the Internet, transport layer messages are called “segments”
  – Supports (de-)multiplexing of multiple processes over the same IP address
  – No flow control, error control, or sequencing
• UDP applications
  – Client-server (request-reply) interactions
    » E.g., Domain Name System (DNS), Remote Procedure Call (RPC)
  – Real-time multimedia applications
    » E.g., Real-time Transport Protocol (RTP)
Figure: The UDP header.

The Internet Transport Protocols: TCP
• Transmission Control Protocol (TCP)
  – RFC 793, RFC 1122, RFC 1323
  – Connection-oriented
  – Provides an end-to-end reliable “byte” stream
    » Every byte has a (32-bit) sequence number
    » Uses a sliding window protocol
    » Separate 32-bit sequence numbers are used for acknowledgements and for the window mechanism
  – Full-duplex and point-to-point
  – Communicates in segments
    » Segment size is bounded by the maximum IP payload and by the network's maximum transfer unit (MTU)
• TCP applications (with their well-known ports)
  – FTP (21), Telnet (23), SMTP (25), HTTP (80), POP-3 (110), NNTP (119)

The TCP Segment Header
Figure: The TCP header.

TCP Checksum
• Checksums the header, the data, and a conceptual pseudoheader
• Checksum computation:
  – Set the checksum field of the header to zero and pad the data field with an additional zero byte if its length is odd
  – Add up all the 16-bit words in 1’s complement arithmetic, then take the 1’s complement of the sum as the checksum
• The same procedure is also used for UDP
Figure: The pseudoheader included in the TCP checksum.
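The checksum procedure above can be sketched in a few lines. This is a minimal illustration, not a production implementation: the function names are chosen for this example, the pseudoheader layout assumed is the IPv4 one (source address, destination address, a zero byte, protocol number 6 for TCP, and the segment length), and the caller is assumed to pass a segment whose checksum field is already zero.

```python
import struct


def ones_complement_sum16(data: bytes) -> int:
    """Sum the data as 16-bit big-endian words using 1's complement addition."""
    if len(data) % 2:
        data += b"\x00"  # pad odd-length data with one zero byte
    total = 0
    for (word,) in struct.iter_unpack("!H", data):
        total += word
    while total >> 16:  # fold carries back into the low 16 bits
        total = (total & 0xFFFF) + (total >> 16)
    return total


def internet_checksum(data: bytes) -> int:
    """1's complement of the 1's complement sum, as a 16-bit value."""
    return ~ones_complement_sum16(data) & 0xFFFF


def tcp_checksum(src_ip: bytes, dst_ip: bytes, segment: bytes) -> int:
    """Checksum over the assumed IPv4 pseudoheader plus the TCP segment."""
    pseudo = src_ip + dst_ip + struct.pack("!BBH", 0, 6, len(segment))
    return internet_checksum(pseudo + segment)
```

A receiver can verify a segment by running the same computation over the segment with the checksum field filled in; an undamaged segment yields zero.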
Connection Establishment
• Problems arise when the network can lose, store, and duplicate packets
• Main problem: the existence of delayed duplicates
• Common solution due to Tomlinson (1975):
  – Connection identifiers (sequence numbers), with initial sequence numbers set from a clock
  – Bounded packet lifetimes
  – Three-way handshake
• A similar solution is used for TCP connection establishment

TCP Connection Establishment
• Three-way handshake
Figure: (a) TCP connection establishment in the normal case. (b) Call collision.

Connection Release
• Asymmetric release: the connection is broken as soon as one side releases
  – May result in data loss
• Symmetric release: each side releases separately
Figure: Abrupt disconnection with loss of data.

Connection Release (2)
• The two-army problem illustrates why no connection release protocol can be guaranteed correct when messages may be lost
Figure: The two-army problem.

Connection Release (3)
• Practical approach: a three-way handshake with timeouts
  – Can lead to half-open connections
Figure: Four protocol scenarios for releasing a connection. (a) Normal case of a three-way handshake. (b) Final ACK lost. (c) Response lost. (d) Response lost and subsequent DRs lost.
TCP Connection Release
• View a connection as a pair of simplex connections and release each simplex connection independently
• Timers are used to avoid the two-army problem
  – If there is no response to a FIN within two packet lifetimes, the sender of the FIN releases the connection
  – The other side eventually notices that no one is listening to it any more and times out as well
Figure from Kurose & Ross

Flow Control and Buffering
• The flow control problem in the transport layer is similar to that in the data link layer, except that the transport layer has to deal with a large number of connections
  – Allocating dedicated buffers per connection at sender and receiver may not be practical
  – Different approaches for buffer organization
    » Chained fixed-size buffers
    » Chained variable-sized buffers
    » One large circular buffer per connection
  – The type of traffic carried by a connection also influences the buffering strategy
  – As connections come and go and traffic types change, buffer allocations need to be adjusted dynamically
    » Use variable-sized sliding windows
    » Decouple window management from acknowledgements

Dynamic Buffer Allocation
Figure: Dynamic buffer allocation. The arrows show the direction of transmission. An ellipsis (…) indicates a lost TPDU.
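Decoupling window management from acknowledgements means the receiver reports two separate things: what has arrived, and how many buffers it is currently willing to offer. The sketch below shows only the sender's bookkeeping under simplifying assumptions: the class `CreditSender` and its fields are hypothetical, `ack` is taken to mean "all TPDUs numbered below this value have arrived", and control TPDUs are assumed to arrive in order and without loss.

```python
class CreditSender:
    """Sender side of a credit scheme (hypothetical): ACKs and buffer grants are separate."""

    def __init__(self) -> None:
        self.next_seq = 0      # sequence number of the next TPDU to send
        self.acked = 0         # all TPDUs with seq < acked are acknowledged
        self.credit_limit = 0  # TPDUs with seq < credit_limit may be sent

    def on_control(self, ack: int, buffers: int) -> None:
        """Process a control TPDU carrying an acknowledgement and a buffer grant."""
        self.acked = max(self.acked, ack)
        self.credit_limit = ack + buffers

    def can_send(self) -> bool:
        return self.next_seq < self.credit_limit

    def send(self) -> int:
        """Consume one credit and return the sequence number used."""
        if not self.can_send():
            raise RuntimeError("blocked: no buffer credit")
        seq = self.next_seq
        self.next_seq += 1
        return seq
```

Note that an acknowledgement with a grant of zero buffers leaves the sender blocked even though all of its data has been acknowledged, which is exactly the separation the slide describes.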
TCP Window Management
• Additional optimizations
  – Cumulative ACKs
  – Delayed acknowledgements to reduce acknowledgement and window update traffic
  – Nagle’s algorithm to avoid sending many short packets carrying only a few bytes of data (interactive traffic) and to make efficient use of network bandwidth
    » Send the first byte and buffer the rest until the outstanding byte is acknowledged
    » After receiving the acknowledgement, send all buffered characters in one TCP segment, then buffer again until all sent data is acknowledged or enough data is waiting to be sent (half the window or a maximum segment)
  – Clark’s solution to address the “silly window syndrome”

TCP Window Management (2)
• Silly window syndrome: occurs when the receiver consumes data one byte at a time
• Clark’s solution: the receiver delays sending a window update segment until it has enough buffer space to hold a maximum-sized segment or its buffer is half empty
• Additionally need to deal with out-of-order segments
Figure: Silly window syndrome.

TCP Timer Management
• Setting the retransmission timer is harder in TCP than in the data link layer because of high RTT variance and network dynamics
• Retransmission timeout calculation algorithm due to Jacobson (1988):

$$\text{Timeout} = \text{RTT}_{\text{est}} + 4 \times \text{RTT\_Dev}_{\text{est}}$$
$$\text{RTT}_{\text{est}} = \alpha \, \text{RTT}_{\text{est}} + (1 - \alpha) \, \text{RTT\_Sample}$$
$$\text{RTT\_Dev}_{\text{est}} = \alpha \, \text{RTT\_Dev}_{\text{est}} + (1 - \alpha) \, |\text{RTT}_{\text{est}} - \text{RTT\_Sample}|$$

  where $\alpha = 7/8$ (typically)
  – Karn’s algorithm deals with retransmitted segments in the timeout calculation
    » Do not update the RTT estimate on any retransmitted segment
    » Double the timeout for as long as retransmission attempts fail
• Persistence timer prevents deadlocks by periodically triggering probes from sender to receiver
• Keepalive timer is used when a connection has been idle for a long time
• The timer used during connection release is set to twice the maximum packet lifetime

Congestion
• Happens when the offered load is close to network capacity
• Several factors can cause congestion
  – Sudden traffic bursts
    » Role of memory (buffering capacity)
  – Slow processors
  – Low bandwidth links
• Congestion control vs.
flow control
  – Congestion control involves the whole network
  – Flow control involves only a pair of nodes (the sender and receiver of a flow)
  – But both may require the same response from senders, i.e., reducing the sending rate

General Principles of Congestion Control
• Open loop solutions: focus on congestion prevention, oblivious of current network state
  – Source-oriented vs. destination-oriented
• Closed loop solutions: based on a feedback loop
  1. Monitor the system to detect when and where congestion occurs
     – Metrics: packet drop percentage, mean queue lengths, mean and standard deviation of packet delays, number of retransmitted packets
  2. Pass this information to places where action can be taken
     – Using special packets or marking existing packets to inform traffic sources (explicit feedback)
     – Implicit feedback (e.g., packet loss in the wired Internet)
     – Proactive probing from hosts
  3. Adjust system operation to correct the problem
     – Choosing an appropriate timescale for adaptation is critical
     – Increase resources or decrease load

Congestion Prevention Policies (Jain, 1990)
Figure: Policies that affect congestion.

Congestion Control
• Virtual circuit subnets
  – Admission control
  – Rerouting new virtual circuits around congested areas
  – Resource reservation during virtual circuit setup
• Jitter (delay variation) control for audio/video applications
  – Can be bounded by computing the expected transit time
  – Prioritizing the packets that are furthest behind their schedule can help control jitter
  – Buffering at the receiver can help for video on demand or stored audio/video streaming apps, but not for live apps like Internet telephony or video conferencing
• Datagram subnets
  – Monitor resource (e.g., output line) utilization and enter a “warning” state when it exceeds a threshold
  – On packet arrival for a resource in the warning state, inform the traffic source using:
    » Warning bit
    » Choke packets
    » Hop-by-hop choke packets
    » Load shedding (random vs.
application-aware)
      - E.g., Random Early Detection (RED) with TCP

TCP Congestion Control
• Congestion can be dealt with by employing a principle borrowed from physics, the law of conservation of packets: refrain from injecting a new packet until an old one leaves the network
• TCP achieves this goal by adjusting its transmission rate via dynamic manipulation of the sender window = min (receiver window, congestion window)
  a) A fast network feeding a low-capacity receiver: flow control problem (receiver window)
  b) A slow network feeding a high-capacity receiver: congestion control problem (congestion window)
• Congestion is detected by monitoring retransmission timeouts, because in the wired Internet losses are mainly due to congestion and losses lead to timeouts

TCP Congestion Control (2)
• When a connection is established, congestion window = max segment size and threshold = 64 KB
• The congestion window is increased exponentially in response to acknowledgements until a timeout occurs, the threshold is reached, or the receiver window is reached (slow start phase)
• On a timeout, the threshold is set to half the current congestion window and the congestion window is reset to the max segment size
• After crossing the threshold, the congestion window increases linearly until a timeout occurs or the receiver window is reached (congestion avoidance phase)
Figure: Illustration of TCP congestion control algorithm.
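The slow start and congestion avoidance rules above can be traced with a small round-by-round simulation. This is a simplified sketch under stated assumptions: the function `simulate_cwnd` and its parameters are invented for this example, window sizes are in arbitrary units (for instance KB, with a max segment size of 1), the window is updated once per round trip rather than per acknowledgement, and timeouts are supplied by the caller instead of being detected.

```python
from typing import Iterable, List


def simulate_cwnd(rounds: int, mss: int = 1, threshold: int = 64,
                  receiver_window: int = 64,
                  timeout_rounds: Iterable[int] = ()) -> List[int]:
    """Return the sender window used in each round (hypothetical helper)."""
    timeouts = set(timeout_rounds)
    cwnd = mss
    history = []
    for r in range(rounds):
        # Sender window = min(receiver window, congestion window)
        history.append(min(cwnd, receiver_window))
        if r in timeouts:
            # Timeout: halve the threshold, restart from one segment
            threshold = max(cwnd // 2, mss)
            cwnd = mss
        elif cwnd < threshold:
            # Slow start: double each round, but do not overshoot the threshold
            cwnd = min(cwnd * 2, threshold)
        else:
            # Congestion avoidance: grow by one segment per round
            cwnd = cwnd + mss
        cwnd = min(cwnd, receiver_window)
    return history


print(simulate_cwnd(12, threshold=32, timeout_rounds=[6]))
# prints [1, 2, 4, 8, 16, 32, 33, 1, 2, 4, 8, 16]
```

In this run the window doubles up to the threshold of 32, grows linearly to 33, and after the timeout in round 6 restarts from 1 with the threshold lowered to 16.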