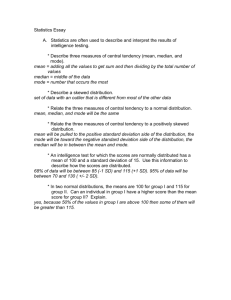

Statistical Thinking
