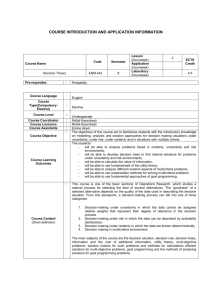

Decision Theory: A Brief Introduction
