4. PERFORMANCE APPRAISAL

What does it mean to do a good job, and how can you determine if someone is doing one?

CRITERION

Standard of judging; a rule or test by which anything is tried in forming a correct judgment respecting it. A standard.

In I/O psychology: the definition (operationalization) of good performance.

COMPOSITE CRITERION

Brogden & Taylor (1950) Dollar Criterion

1. Job analysis to define subcriteria


Damage to equipment

Time of other personnel consumed

Accidents

Quality of finished product

Errors in finished product

Square feet (ft²) laid

2. Determine which to use

3. Affix dollar amounts

4. Calculate value of employee
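
The four steps above can be sketched as a small calculation. This is only an illustration of the idea, not Brogden and Taylor's actual procedure: every dollar amount and every employee figure below is hypothetical, and "quality of finished product" is left out simply to show step 2 (choosing which subcriteria to use).

```python
# Step 3: hypothetical dollar value per unit of each chosen subcriterion.
# Positive values add to the employee's worth; negative values subtract.
DOLLARS_PER_UNIT = {
    "sq_ft_laid": 2.00,
    "damage_to_equipment": -1.00,        # damage is already measured in dollars
    "hours_of_others_consumed": -25.00,
    "accidents": -500.00,
    "errors_in_finished_product": -40.00,
}


def dollar_value(record):
    """Step 4: sum each measured subcriterion times its dollar weight."""
    return sum(DOLLARS_PER_UNIT[name] * amount for name, amount in record.items())


# Hypothetical performance record for one employee over one period.
employee = {
    "sq_ft_laid": 12000,
    "damage_to_equipment": 300,
    "hours_of_others_consumed": 10,
    "accidents": 1,
    "errors_in_finished_product": 15,
}

value = dollar_value(employee)
print(f"Dollar criterion for this employee: ${value:,.2f}")
```

Putting every subcriterion on a single dollar scale is what makes the criterion composite: very different kinds of performance collapse into one number that can be compared across employees.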

MULTIDIMENSIONAL

CHARACTERISTICS OF CRITERIA: Contamination, Deficiency, Relevance

Theoretical Criterion

Deficiency

Relevance

Contamination

Actual Criterion

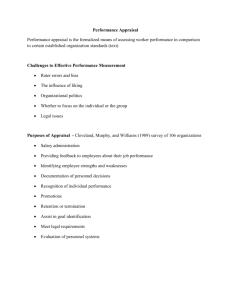
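
One way to read the diagram is as two overlapping sets. The sketch below assumes a made-up job (a professor) and made-up performance facets, so it shows only how the three terms relate, not a real criterion.

```python
# Hypothetical facets; neither list comes from an actual job analysis.
theoretical_criterion = {"teaching quality", "research output", "service", "mentoring"}
actual_criterion = {"research output", "service", "student popularity"}

# Relevance: what the actual criterion measures that it should measure.
relevance = theoretical_criterion & actual_criterion

# Deficiency: what should be measured but is missed.
deficiency = theoretical_criterion - actual_criterion

# Contamination: what is measured but should not be.
contamination = actual_criterion - theoretical_criterion

print("Relevance:    ", sorted(relevance))
print("Deficiency:   ", sorted(deficiency))
print("Contamination:", sorted(contamination))
```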

PERFORMANCE APPRAISAL

Determination and Documentation of Individual's Performance

Should be tied directly to criteria

USES

Administrative decisions (promotion, firing, transfer)

Employee development and feedback

Criteria for research (e.g., validation of tests)

Documentation for legal action

Training

METHODS

Objective Methods

Advantages

Consistent standards within jobs

Not biased by judgment

Easily quantified

Face validity: bottom-line oriented

Disadvantages

Not always applicable (teacher)

Performance not always under individual's control

Too simplistic

Performance unreliable: dynamic criterion

Subjective Methods: Rating Scales

Trait-based: graphic rating scale

Behavior-based: critical incidents

Mixed Standard Scale

Behaviorally Anchored Rating Scale

Behavior Observation Scales

Problems:

Rating errors: Leniency, Severity, Halo

Supervisor subversion of system--leniency as a strategy

Mixed purposes (feedback vs. administrative)

Negative impact of criticism
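
The rating errors listed above are often screened for with simple descriptive indices. The sketch below is one such illustration under stated assumptions: a 1-5 scale, hypothetical ratings, leniency/severity taken as the distance of a rater's mean from the scale midpoint, and halo taken as a low spread across dimensions for the same ratee. A high mean or a low spread can also reflect true performance, so these numbers flag patterns to examine and do not prove error.

```python
from statistics import mean, pstdev

SCALE_MIDPOINT = 3  # assumed 1-5 rating scale

# Hypothetical data: ratings[rater][ratee] = ratings on three dimensions.
ratings = {
    "rater_a": {"emp1": [5, 5, 5], "emp2": [4, 4, 4]},
    "rater_b": {"emp1": [3, 1, 4], "emp2": [2, 4, 1]},
}


def leniency_index(by_ratee):
    """Mean rating minus midpoint: positive suggests leniency, negative severity."""
    all_ratings = [r for dims in by_ratee.values() for r in dims]
    return mean(all_ratings) - SCALE_MIDPOINT


def halo_index(by_ratee):
    """Average within-ratee SD across dimensions: values near 0 suggest halo."""
    return mean(pstdev(dims) for dims in by_ratee.values())


for rater, by_ratee in ratings.items():
    print(rater, round(leniency_index(by_ratee), 2), round(halo_index(by_ratee), 2))
```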

SOLUTIONS TO PROBLEM OF RATER ERRORS

ERROR RESISTANT FORMS

Behaviorally Anchored Rating Scale, BARS

Behavior Observation Scale, BOS

Mixed Standard Scale, MSS

Research does not show these forms to be successful in eliminating errors

RATER TRAINING

Rater error training: instructs raters in how to avoid errors

Reduces halo and leniency error

But ratings were less accurate in some studies

Frame of reference training: Give raters examples of performance and correct ratings

Initial research promising in reducing errors (Day & Sulsky, 1995, Journal of Applied Psychology), but too new to tell for certain

SOUND PERFORMANCE APPRAISAL PRACTICES TO REDUCE PROBLEMS

Separate purposes

Raises dealt with separately from feedback

Consistent feedback, everyday

Limit criticism to one item at a time

Praise should be contingent

Supervisors should be coaches

Appraisal should be criterion related, not personal

TECHNOLOGY

Technology helpful for performance appraisal

Employee performance management systems

Web-based

Automated—reminds raters when to rate

Reduces paperwork

Provides feedback

360-degree feedback systems

Ratings provided by different people

Peers

Subordinates

Supervisors

Self

Big clerical task in large organizations to track/process ratings

Web makes 360s easy and feasible

Consulting firms available to conduct 360s

Performaworks

LEGALLY DEFENSIBLE PERFORMANCE APPRAISAL

Barrett and Kernan defensible performance appraisal system

Job analysis to define dimensions of performance

Develop rating form to assess the dimensions defined by the job analysis

Train raters in how to assess performance

Management review of ratings and an employee appeal process

Document performance and maintain detailed records

Provide assistance and counseling

Werner and Bolino (1997, Personnel Psychology) analysis of 295 court cases

Organizations lost 41% of discrimination cases overall

Organizations using multiple raters lost only 11%

Safe system

Job analysis

Written instructions

Employee input

Multiple raters

Employee input leads to better attitudes, even when ratings are lower

Copyright Paul E. Spector, All rights reserved, July 22, 2002.