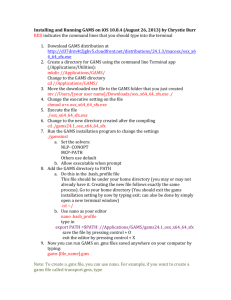

GTAP in GAMS with GUI, 76 pages, PDF
