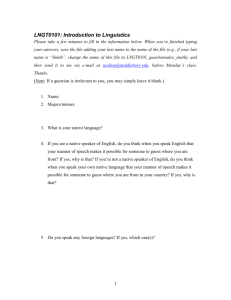

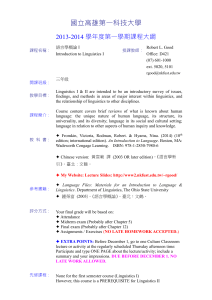

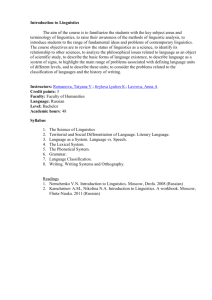

Lecture Notes: Linguistics

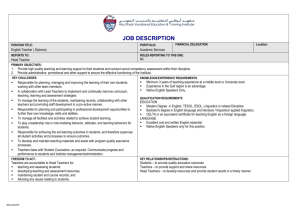
