NCI 8-14-03 Proceedings manuscript-peh

Applications of Machine Learning and High Dimensional

Visualization in Cancer Diagnosis and Detection

John McCarthy*, Kenneth A. Marx, Alex Gee,

Philip O’Neil, M.L. Ujwal, Patrick Hoffman, John Hotchkiss

AnVil, Inc.

25 Corporate Drive

Burlington, MA 01803

*corresponding author

jmccarthy@verizon.net;

(781) 828-4230

Abstract

Introduction to Data Analysis by Machine Learning

Overview of Machine Learning and Visualization

Three of the major techniques in machine learning are clustering, classification and feature

reduction. Classification and clustering are also broadly known as supervised and unsupervised

learning, respectively. In supervised learning, the object is to learn predetermined class assignments from

other data attributes. For example, given a set of gene expression data for samples with known

diseases, a supervised learning algorithm might learn to classify disease states based on patterns

of gene expression. In unsupervised learning, there either are no predetermined classes or class

assignments are ignored. Cluster analysis is the process by which data objects are grouped

together based on some relationship defined between objects.

In both classification and

clustering an explicit or implicit model is created from the data which can help to predict future

data instances or understand the physical process behind the data. Creating these models can be

a very compute-intensive task, such as training a neural network. Feature reduction or selection

reduces the data attributes used in creating a data model. This process can reduce analysis time

and create simpler and (sometimes) more accurate models.

In the three cancer examples presented, all three machine learning techniques are used and

will be described. However, one of the primary analysis techniques used is high-dimensional

visualization. One particular visualization, RadViz™, incorporates all three machine learning

techniques in an intuitive, interactive display. Two other high-dimensional visualizations,

Parallel Coordinates and PatchGrid (similar to HeatMap), are also used to analyze and display

results.

Classification techniques used:

RadViz™ – rearranging dimensions based on T-statistic – a visual classifier

Naïve Bayes (Weka)

Support Vector Machines (Weka)

Instance Based or K – nearest neighbor (Weka)

Logistic Regression (Weka)

Neural Net (Weka)

Neural Net (Clementine)

Validation technique

10-fold

Hold 1 out

Training and Test datasets

Clustering techniques:

RadViz™ – arranging dimensions not based on class label – ex. Principal Components

Hierarchical with Pearson correlation

Feature Reduction techniques used:

Pairwise t-statistic – equal variance used in RadViz™ (other statistics can also be used)

F-statistic – select top dimensions based on the highest F-statistic computed from class labels

PURS™ (patent pending) - Principal Uncorrelated Record Selection

Initially select some “seed” dimensions, say based on a high t or F statistic. Repeatedly

delete dimensions that correlate highly with the seed dimensions; if a dimension is not correlated, add it

to the “seed” dimension set. Repeat, slowly reducing the correlation

threshold, until the “seed” dimensions are reduced to the desired number.

Random – randomly selected dimensions and build/test classifier

The Importance of High-dimensional Data Visualization and its Integration with

Analytic Data Mining Techniques.

Visualization, data mining, statistics, as well as

mathematical modeling and simulation are all methodologies that can be used to enhance the

discovery process [15]. There are numerous visualizations and a good number of valuable

taxonomies (See [16] for an overview of taxonomies). Most information visualization systems

focus on tables of numerical data (rows and columns), such as 2D and 3D scatterplots [17],

although many of the techniques apply to categorical data. Looking at the taxonomies, the

following stand out as high-dimensional visualizations: Matrix of scatterplots [17]; Heat maps

[17]; Height maps [17]; Table lens [18]; Survey plots [19]; Iconographic displays [20];

Dimensional stacking (general logic diagrams) [21]; parallel coordinates [22]; Pixel techniques,

circle segments [23]; Multidimensional scaling [23]; Sammon plots [24]; Polar charts [17];

RadViz™ [25]; Principal component analysis [26]; Principal curve analysis [27]; Grand Tours

[28]; Projection pursuit [29]; Kohonen self-organizing maps [30]. Grinstein et al. [31] have

compared the capabilities of most of these visualizations. Historically, static displays include

histograms, scatterplots, and large numbers of their extensions. These can be seen in most

commercial graphics and statistical packages (Spotfire, S-PLUS, SPSS, SAS, MATLAB,

Clementine, Partek, Visual Insight’s Advisor, and SGI’s Mineset, to name a few). Most software

packages provide limited features that allow interactive and dynamic querying of data.

High-dimensional visualizations (HDVs) have been limited to research applications and have not been incorporated into many

commercial products. However, HDVs are extremely useful because they provide insight during

the analysis process and guide the user to more targeted queries. Visualizations fall into two

main categories: (1) low-dimensional, which includes scatterplots, with from 2-9 variables

(fields, columns, parameters) and (2) high-dimensional, with 100-1000+ variables. Parallel

coordinates, or a spider chart or radar display in Microsoft Excel, can display up to 100

dimensions but limit the number of records that can be interpreted: when more than 1000

records are displayed, the lines overlap and cannot be distinguished. There are a few

visualizations that deal with a large number (>100) of dimensions quite well: Heatmaps,

Heightmaps, Iconographic Displays, Pixel Displays, Parallel Coordinates, Survey Plots, and

RadViz™. Of these, only RadViz™ is capable of dealing with ultra-high-dimensional

(>10,000 dimensions) datasets, and we discuss it in detail below.

RadViz™ is a visualization and classification/clustering tool that uses a spring analogy for

placement of data points and incorporates machine learning feature reduction techniques as

selectable algorithms [13-15]. The “force” that any feature exerts on a sample point is determined by

Hooke’s law: f = kd. The spring constant, k, ranging from 0.0 to 1.0, is the (scaled) value of the

feature for that sample, and d is the distance between the sample point and the perimeter

point on the RadViz™ circle assigned to that feature (see Figure A). The placement of a sample

point, as described in Figure A, is determined by the point where the total force, summed

vectorially over all features, is 0. The RadViz display combines the n data dimensions into a

single point for the purpose of clustering, but it also integrates analytic embedded algorithms in

order to intelligently select and radially arrange the dimensional axes. This arrangement is

performed through Autolayout, a unique, proprietary set of algorithmic features based upon the

dimensions’ significance statistics that optimizes clustering by optimizing the distance separating

clusters of points. The default arrangement is to have all features equally spaced around the

perimeter of the circle, but the feature reduction and class discrimination algorithms arrange the

features unevenly in order to increase the separation of different classes of sample points. The

feature reduction technique used in all figures in the present work is based on the t statistic with

Bonferroni correction for multiple tests. The circle is divided into n equal sectors or “pie slices,”

one for each class. Features assigned to each class are spaced evenly within the sector for that

class, counterclockwise in order of significance (as determined by the t statistic, comparing

samples in the class with all other samples). As an example, for a 3 class problem, features are

assigned to class 1 based on the sample’s t-statistic, comparing class 1 samples with class 2 and 3

samples combined. Class 2 features are assigned based on the t-statistic comparing class 2 values

with class 1 and 3 combined values, and Class 3 features are assigned based on the t-statistic

comparing class 3 values with class 1 and class 2 combined. Occasionally, when large portions

of the perimeter of the circle have no features assigned to them, the data points would all cluster

on one side of the circle, pulled by the unbalanced force of the features present in other sectors.

In this case, a variation of the spring force calculation is used, where the features present are

effectively divided into qualitatively different forces comprised of high and low k value classes.

This is done via requiring k to range from –1.0 to 1.0. The net effect is to make some of the

features pull (high or +k values) and others ‘push’ (low or –k values) the points to spread them

absolutely into the display space, but maintaining the relative point separations. It should be

stated that one can simply do feature reduction by choosing the top features by t-statistic

significance and then apply those features to a standard classification algorithm. The t-statistic

significance is a standard method for feature reduction in machine learning approaches,

independently of RadViz. The top significance chemicals selected with the t-statistic are the

same as those selected by RadViz. RadViz has this machine learning feature embedded in it and

is responsible for the selections carried out here.

The advantage of RadViz is that one

immediately sees a “visual” clustering of the results of the t-statistic selection. Generally, the

amount of visual class separation correlates to the accuracy of any classifier built from the

reduced features. The additional advantage to this visualization is that sub clusters, outliers and

misclassified points can quickly be seen in the graphical layout. One of the standard techniques

to visualize clusters or class labels is to perform a Principal Component Analysis and show the

points in a 2D or 3D scatter plot using the first few Principal Components as axes. Often this

display shows clear class separation, but the most important features contributing to the PCA are

not easily seen. RadViz is a “visual” classifier that can help one understand important features

and how many features are related.
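The one-versus-rest t-statistic ranking described above can be sketched in a few lines of Python. This is an illustration under stated assumptions, not the RadViz™ implementation: the function names are hypothetical, the equal-variance (pooled) form of the t statistic is used, and each feature is assigned to the class for which its one-versus-rest t value is largest, which is our reading of the text rather than a documented rule. Converting t to a p-value and applying the Bonferroni cut (the significance level divided by the number of features tested) is omitted because it needs the t distribution.

```python
import math

def pooled_t(a, b):
    """Two-sample t statistic assuming equal variances (pooled estimate)."""
    na, nb = len(a), len(b)
    ma, mb = sum(a) / na, sum(b) / nb
    ssa = sum((x - ma) ** 2 for x in a)
    ssb = sum((x - mb) ** 2 for x in b)
    sp2 = (ssa + ssb) / (na + nb - 2)  # pooled variance, assumed non-zero
    return (ma - mb) / math.sqrt(sp2 * (1 / na + 1 / nb))

def assign_features(rows, labels):
    """Rank features per class by one-vs-rest t statistic.

    rows: list of samples, each a list of feature values.
    Returns {class: [(t, feature_index), ...]} sorted by decreasing t.
    Assumption: a feature goes to the class with its largest t value.
    """
    classes = sorted(set(labels))
    by_class = {c: [] for c in classes}
    for j in range(len(rows[0])):
        scores = []
        for c in classes:
            inside = [r[j] for r, l in zip(rows, labels) if l == c]
            outside = [r[j] for r, l in zip(rows, labels) if l != c]
            scores.append((pooled_t(inside, outside), c))
        t, best = max(scores)
        by_class[best].append((t, j))
    for c in classes:
        by_class[c].sort(reverse=True)
    return by_class
```

In a layout of the kind described, the features listed for each class would then be spaced within that class's sector in order of decreasing t.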

The RadViz Layout:

An example of the RadViz layout is illustrated in Figure A. There are 16 variables or dimensions

associated with the 1 point plotted. Sixteen imaginary springs are anchored to the points on the

circumference and attached to one data point. The data point is plotted where the sum of the

forces is zero according to Hooke’s law (F = Kx), where the force is proportional to the

distance x to the anchor point. The value K for each spring is the value of the variable for the

data point. In this example the spring constants (or dimensional values) are higher for the lighter

springs and lower for the darker springs. Normally, many points are plotted without showing the

spring lines. Generally, the dimensions (variables) are normalized to have values between 0 and

1 so that all dimensions have “equal” weights. This spring paradigm layout has some interesting

features.

For example, if all dimensions have the same normalized value the data point will lie exactly in

the center of the circle. If the point is a unit vector then that point will lie exactly at the fixed

point on the edge of the circle (where the spring for that dimension is fixed). Many points can

map to the same position. This represents a non-linear transformation of the data which preserves

certain symmetries and which produces an intuitive display. Some features of this visualization

include:

it is intuitive, higher dimension values “pull” the data points closer to the dimension on the

circumference

points with approximately equal dimension values will lie close to the center

points with similar values whose dimensions are opposite each other on the circle will lie

near the center

points which have one or two dimension values greater than the others lie closer to those

dimensions

the relative locations of the dimension anchor points can drastically affect the layout

(the idea behind the “Class discrimination layout” algorithm)

an n-dimensional line gets mapped to a line (or a single point) in RadViz

Convex sets in n-space map into convex sets in RadViz

Computation time is very fast

1000’s of dimensions can be displayed in one visualization
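The spring model behind these properties can be made concrete. With anchor positions a_i and spring constants k_i, setting the net force, the sum of k_i (a_i - p), to zero gives p = (sum of k_i a_i) / (sum of k_i), a weighted average of the anchor positions. The Python below is a minimal sketch of that calculation, not AnVil's implementation: the function names are hypothetical, anchors are assumed to be equally spaced on a unit circle (the default arrangement), values are assumed to be already normalized to the range 0 to 1, and a record whose values are all zero is arbitrarily drawn at the center.

```python
import math

def radviz_anchors(n):
    """Place n dimension anchors evenly on the unit circle."""
    return [(math.cos(2 * math.pi * i / n), math.sin(2 * math.pi * i / n))
            for i in range(n)]

def radviz_point(values, anchors):
    """Equilibrium position of one record under the spring model.

    Each normalized value k_i is the spring constant pulling the point
    toward anchor a_i; the net force is zero at the weighted average
    of the anchors, p = sum(k_i * a_i) / sum(k_i).
    """
    total = sum(values)
    if total == 0:
        return (0.0, 0.0)  # assumption: all-zero records sit at the center
    x = sum(k * ax for k, (ax, _) in zip(values, anchors)) / total
    y = sum(k * ay for k, (_, ay) in zip(values, anchors)) / total
    return (x, y)
```

Two of the properties listed above follow directly: a record with equal values in every dimension lands at the center, and a unit vector lands on the anchor of its single non-zero dimension.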

We have studied the following systems related to cancer detection:

1. GI50 compound 60 cancer cell lines

2. Microarray lung cancer data

3. proteomics MS dataset

1. Data Mining the Public Domain NCI-60 Cancer Cell Line Compound GI50

Data Set

Introduction to the Cheminformatics Problem.

Important objectives in the overall process of molecular design for drug discovery are: 1)

the ability to represent and identify important structural features of any small molecule, and 2) to

select useful molecular structures for further study, usually using linear QSAR models and based

upon simple partitioning of the structures in n-dimensional space. To date, partitioning using

non-linear QSAR models has not been widespread, but the complexity and high-dimensionality

of the typical data set requires them. The machine learning and visualization techniques that we

describe and utilize here represent an ideal set of methodologies with which to approach

representing structural features of small molecules, followed by selecting molecules via

constructing and applying non-linear QSAR models. QSAR models might typically use

calculated chemical descriptors of compounds along with computed or experimentally

determined compound physical properties and interaction parameters (ΔG, Ka, kf, kr, LD50,

GI50, etc.) with other large molecules or whole cells. Thermodynamic and kinetic parameters

are usually generated in silico (ΔG) or via high throughput screening of compound libraries

against appropriate receptors or important signaling pathway macromolecules (Ka, kf, kr),

whereas the LD50 or GI50 values are typically generated using whole cells that are suitable for

the disease model being investigated. When the data has been generated, then the application of

machine learning can take place. We provide a sample illustration of this process below.

The National Cancer Institute’s Developmental Therapeutics Program maintains a compound

data set (>700,000 compounds) that is currently being systematically tested for cytotoxicity

(generating 50% growth inhibition, GI50, values) against a panel of 60 cancer cell lines

representing 9 tissue types. Therefore, this dataset contains a wealth of valuable information

concerning potential cancer drug pharmacophores. In a data mining study of the 8 largest public

domain chemical structure databases, it was observed that the NCI compound data set contained

by far the largest number of unique compounds of all the databases (32). The application of

sophisticated machine learning techniques to this unique NCI compound dataset represents an

important open problem that motivated the investigation we present in this report. Previously,

this data set has been mined by supervised learning techniques such as cluster correlation,

principal component analysis and various neural networks, as well as statistical techniques

(33,34). These approaches have identified distinct subsets within a variety of different classes

of chemical compounds (35,36,37,38). More recently, gene expression analysis has been added

to the data mining activity of the NCI compound data set (39) to predict chemosensitivity, using

the GI50 test data for each compound, for a few hundred compound subset of the NCI data set

(40). After we completed our initial data mining analysis using the GI50 values (41), gene

expression data on the 60 cancer cell lines was combined with NCI compound GI50 data and also

with a 27,000 chemical feature database computed for the NCI compounds.

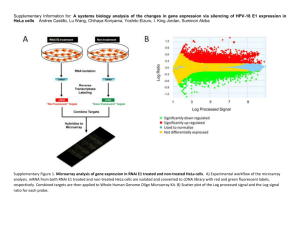

In this study, we use microarray based gene expression data to first establish a number of

‘functional’ classes of the 60 cancer cell lines via a hierarchical clustering technique. These

functional classes are then used to supervise a 3-Class learning problem, using a small but

complete subset of 1400 of the NCI compounds’ GI50 values as the input to a clustering

algorithm in the RadViz™ program (43).

Specific Methods Used.

For the ~ 4% missing values found in the 1400 compound data set, we tried and

compared two approaches to missing value replacement: 1) record average replacement; 2)

multiple imputation using Schafer’s NORM software (44). Since applying either missing value

replacement method to our data had little impact on the final results of our analysis, we chose

the record average replacement method for all subsequent analysis.
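Record average replacement is simple enough to sketch directly. The Python below is an illustration only: the function name is hypothetical, missing values are assumed to be encoded as None, and a "record" is assumed to be one row of the data table. The multiple imputation approach of the NORM software is not reproduced here.

```python
def fill_missing_with_record_mean(records):
    """Replace None entries in each record (row) with the mean of that
    record's observed values."""
    filled = []
    for rec in records:
        observed = [v for v in rec if v is not None]
        if not observed:
            raise ValueError("record has no observed values")
        mean = sum(observed) / len(observed)
        filled.append([mean if v is None else v for v in rec])
    return filled
```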

Clustering of cell lines was done with R-Project software using the hierarchical clustering

algorithm with “average” linkage method specified and a dissimilarity matrix computed as [1 –

the Pearson correlations] of the gene expression data. AnVil Corporation’s RadViz™ software

was used for feature reduction and initial classification of the cell lines

based on the compound GI50 data. The selected features were validated using several classifiers

as implemented in the Weka (Waikato Environment for Knowledge Analysis, University of

Waikato, New Zealand) software application program . The classifiers used were IB1 (nearest

12

neighbor), IB3 (3 nearest neighbor), logistic regression, Naïve Bayes Classifier, support vector

machine, and neural network with back propagation. Both ChemOffice 6.0 (CambridgeSoft

Corp.) and the NCI website were used to identify compound structures via their NSC numbers.

Substructure searching to identify quinone compounds in the larger data set was carried out using

ChemFinder (CambridgeSoft).
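The clustering step (average linkage on a dissimilarity of 1 minus the Pearson correlation) can be illustrated with a small, naive Python sketch. It is not the R code used in the study: the function names are hypothetical, each profile is assumed to be a list of expression values with non-zero variance, and the tree is simply cut when a requested number of clusters remains.

```python
import math

def pearson(x, y):
    """Pearson correlation of two equal-length numeric sequences."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy / math.sqrt(sxx * syy)

def average_linkage(profiles, n_clusters):
    """Naive agglomerative clustering with average linkage on the
    dissimilarity d = 1 - Pearson correlation.

    Returns a list of clusters, each a list of profile indices.
    """
    n = len(profiles)
    d = [[1.0 - pearson(profiles[i], profiles[j]) for j in range(n)]
         for i in range(n)]
    clusters = [[i] for i in range(n)]
    while len(clusters) > n_clusters:
        best = None
        for a in range(len(clusters)):
            for b in range(a + 1, len(clusters)):
                # average of all pairwise dissimilarities between clusters
                dist = (sum(d[i][j] for i in clusters[a] for j in clusters[b])
                        / (len(clusters[a]) * len(clusters[b])))
                if best is None or dist < best[0]:
                    best = (dist, a, b)
        _, a, b = best
        clusters[a] = clusters[a] + clusters[b]
        del clusters[b]
    return clusters
```

Because the dissimilarity depends only on correlation, two cell lines with proportional expression profiles are treated as close even when their absolute expression levels differ.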

Results and Discussion

Identifying functional cancer cell line classes using gene expression data. Based upon

gene expression data, we identified cancer cell line classes that we could use in a subsequent

supervised learning approach. In Figure 1.1, we present a hierarchical clustering dendrogram

using the [1-Pearson] distances calculated from the T-Matrix, comprised of 1376 gene expression values

determined for the 60 NCI cancer cell lines (43). There are five well-defined clusters observed in

this figure. Clusters 2-5, respectively, represent pure renal, leukemia, ovarian and colorectal

cancer cell lines. Only in Cluster 1, the melanoma class instance, does the class contain two

members of another clinical tumor type; the two breast cancer cell lines - MDA-MB-435 and

MDA-N. The two breast cancer cell lines behave functionally as melanoma cells and seem to be

related to melanoma cell lines via a shared neuroendocrine origin (43). The remaining cell lines

in this dendrogram, those not found in any of the five functional classes, are defined as being in a

sixth class; the non- melanoma, leukemia, renal, ovarian, colorectal class. In the supervised

learning analyses that follow, we treat these six computationally derived functional clusters as

ground truth.

3-Class Cancer Cell Classifications and Validation of Selected Compounds. High

class number classification problems are difficult to implement in cases where the data are not

clearly separable into distinct classes. Thus, we could not successfully carry out a 6-class

classification of cancer cell lines based upon the starting GI50 compound data. Alternatively, we

implemented a 3-Class supervised learning classification using RadViz™ (25, 45-47). Starting

with the small 1400 compounds’ GI50 data set that contained no missing values for all 60 cell

lines, we selected those compounds that were effective in carrying out a 3-way class

discrimination at the p < .01 (Bonferroni corrected t statistic) significance level. A RadViz

visual classifier for the melanoma, leukemia, and non-melanoma/non-leukemia classes is shown

in Figure 2.1. Clear and accurate class separation of the 60 cancer cell lines can be seen.

There were 14 compounds selected as being most effective against melanoma cells and 30

compounds selected as being most effective against leukemia cells. Similar classification results

were obtained for the two separate 2-Class problems: melanoma vs. non-melanoma and

leukemia vs. non-leukemia. For all other possible 2-Class problems, we found that few to no

compounds could be selected at the significance level we had previously set.

In order to validate our list of computationally selected compounds, we applied six

additional analytical classification techniques, as previously described, to the original GI50 data

set using the same set of chemical predictors and a hold-one-out cross-validation strategy. Using

these selected compounds resulted in a greater than 6-fold lowered level of error compared to

using the equivalent numbers of randomly selected compounds, thus validating our selection

methodology.
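The comparison against randomly selected compounds can be illustrated with a hold-one-out nearest neighbor classifier. The Python below is a sketch under stated assumptions, not the Weka IB1 implementation used in the study: the function names are hypothetical, squared Euclidean distance is assumed, and the random baseline simply averages the error over a few randomly drawn feature subsets of the same size.

```python
import random

def loo_nearest_neighbor_error(rows, labels, feature_idx):
    """Hold-one-out error rate of a 1-nearest-neighbor classifier
    restricted to the chosen feature columns."""
    errors = 0
    for i, row in enumerate(rows):
        best_dist, best_label = None, None
        for j, other in enumerate(rows):
            if i == j:
                continue  # the held-out sample cannot vote for itself
            dist = sum((row[f] - other[f]) ** 2 for f in feature_idx)
            if best_dist is None or dist < best_dist:
                best_dist, best_label = dist, labels[j]
        errors += best_label != labels[i]
    return errors / len(rows)

def random_baseline_error(rows, labels, n_features, n_trials=10, seed=0):
    """Mean hold-one-out error over randomly chosen feature subsets."""
    rng = random.Random(seed)
    all_idx = range(len(rows[0]))
    errs = [loo_nearest_neighbor_error(rows, labels,
                                       rng.sample(all_idx, n_features))
            for _ in range(n_trials)]
    return sum(errs) / len(errs)
```

A selection method is supported when the error obtained with its features is well below the random baseline for the same number of features.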

Quinone Compound Subtypes. Upon examining the chemical identity of the compounds

selected as most effective against melanoma and leukemia, an interesting observation was made.

Of the 14 compounds selected as most effective against melanoma, 11 were p-quinones, and

all of these have an internal ring quinone structure.

Alternatively, there were 30

compounds selected as most effective against leukemia, of which 8

contain p-quinones. In contrast to the internal ring quinones in the

melanoma class however, 6 out of the 8 leukemia p-quinones were

external ring quinones. In order to ascertain the uniqueness of the two quinone subsets,

we first determined the extent of occurrence of p-quinones of all types in our starting data set, via

substructure searching using the ChemFinder 6.0 software. The internal and external quinone

subtypes represent a significant fraction, 25 % (10/41) of all the internal quinones and 40 %

(6/15) of all the external quinones in the entire data set (41).

Conclusion.

With this cheminformatics example we have demonstrated that the machine learning

approach described above utilizing RadViz™ has produced two novel discoveries. First, a small

group of chemical compounds, enriched in quinones, was found to effectively discriminate

among melanoma, leukemia, and non-melanoma/non-leukemia cell lines on the basis of

experimentally measured GI50 values. Secondly, two quinone subtypes were identified that

possess clearly different and specific toxicity to the leukemia and melanoma cancer cell types.

We believe that this example illustrates the potential of sophisticated machine learning

approaches to uncovering new and valuable relationships in complex high dimensional chemical

compound data sets.

2. Distinguishing lung tumor types using microarray gene expression data

Introduction to the high-throughput gene expression problem

Completion of the Human Genome Project has made possible the study of the gene

expression levels of over 30,000 genes [14, 15]. Major technological advances have made possible the use of DNA

microarrays to speed up this analysis. Even though the first microarray experiment was only

published in 1995, by October 2002 a PubMed query of microarray literature

yielded more than 2300 hits, indicating explosive growth in the use of this

powerful technique. DNA microarrays take advantage of the convergence of a number of

technologies and developments including: robotics and miniaturization of features to the micron

scale (currently 20-200 µm surface feature sizes for spotting/printing and immobilizing

sequences for hybridization experiments), DNA amplification by PCR, automated and efficient

oligonucleotide synthesis and labeling chemistries, and sophisticated bioinformatics approaches.

An important application of microarray technology is the identification and

differentiation of tissue types using differential gene expressions, either between normal and

cancerous cells or among tumor subclasses. The specific aim of the project described below was

to explore the potential for using machine learning and high dimensional visualization in

building a classifier which could differentiate normal lung tissue from the various subclasses of

non-small cell lung cancer using microarray based differential expression patterns. We have

previously reported on using such techniques to successfully construct classifiers which can

solve the more general two-class problem of differentiating non-small cell lung cancer from

normal tissue with accuracies greater than 95%. However, the analysis of the three-class problem

of distinguishing normal lung tissue from the two subclasses of non-small cell lung carcinoma

(adenocarcinomas and squamous cell carcinoma) was not directly addressed. Our ultimate aim

was the creation of gene sets with a small number of genes that might serve as the basis for

developing a clinically useful diagnostic tool.

In collaboration with the NCI, we examined two data sets of patients with and without

various lung cancers. The first data set was provided directly by the NCI and included 75 patient

samples [1]. This set contained 17 normal samples, 30 adenocarcinomas (6 doubles), and 28

squamous cell carcinomas (2 doubles). Doubles represent replicate samples prepared at different

times, using different equipment, but derived from the same tissue sample. A second patient set

of 157 samples was obtained from a publicly available data repository [2]. This set included

17 normal samples, 139 adenocarcinomas (127 of these with supporting information) and 21

squamous cell carcinomas. Both data sets included gene expression data from tissue samples

using Affymetrix’s Human Genome U95 Set [3]; only the first of five oligonucleotide based

GeneChip® arrays (Chip A) was used in this experiment. Chip A of the HG U95 array set

contains roughly 12,000 full-length genes and a number of controls. Because we were dealing

with two data sets from different sources, with microarray measurements taken at multiple

times, we needed to consider a normalization procedure. For this particular analysis we simply

normalized each sample to a mean of 200. This resulted in a set of 9918 expressed genes, of which

approximately 2000 were found to be statistically significant (p<0.05) in differentiating normal

lung tissue from non-small cell lung cancer. This differentially expressed set of genes was then

used as the starting point for further analysis as described below.
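The per-sample normalization can be sketched as follows. This is a minimal Python illustration with a hypothetical function name; it assumes the normalization is a simple multiplicative rescaling of each sample so that its mean expression value equals 200, which is our reading of the procedure rather than a documented detail.

```python
def scale_to_mean(sample, target_mean=200.0):
    """Rescale one sample's expression values so their mean equals
    target_mean (assumed multiplicative scaling)."""
    current = sum(sample) / len(sample)
    if current == 0:
        raise ValueError("cannot rescale a sample whose mean is zero")
    factor = target_mean / current
    return [v * factor for v in sample]
```

Scaling of this kind puts arrays hybridized at different times or sites on a common overall intensity level, but it does not correct gene-specific or non-linear differences between the two data sources.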

Specific Methods Used

Because the combinatorial scale of trying all possible gene sets requires a significant

amount of time and computational power, we undertook an approach using sample gene sets

defined by three different gene selection methods.

First we defined and analyzed the results from ten

independent random gene sets drawn from the set of approximately 2000 differentially expressed

genes as previously described. These random selections provided a lower predictive bound for

each gene set size.

Second, we selected only genes that demonstrated high statistical

significance by a standard F-test.

Finally, we applied the proprietary RadViz™ technique

developed at AnVil, Inc. (Burlington, MA) to identify sets of genes that best distinguished

differences among the subclasses of samples under analysis [4]. Applying these three approaches to

the available expression data we were able to generate gene sets that ranged in size from 1 to 100

genes. The construction of gene sets was accomplished using a collection of custom scripts

written in Python.
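The first two selection methods (random gene sets and ranking by F-test) can be sketched in Python as below. These are not the original scripts: the function names and the data layout (a dictionary mapping gene name to a list of expression values, one per sample) are assumptions made for illustration, genes are ranked by the one-way ANOVA F statistic without converting it to a p-value, and the proprietary RadViz™ selection is not reproduced.

```python
import random

def f_statistic(values, labels):
    """One-way ANOVA F statistic for one gene across labeled samples.
    Assumes at least two classes and non-zero within-class variance."""
    groups = {}
    for v, l in zip(values, labels):
        groups.setdefault(l, []).append(v)
    n, k = len(values), len(groups)
    grand = sum(values) / n
    ss_between = sum(len(g) * (sum(g) / len(g) - grand) ** 2
                     for g in groups.values())
    ss_within = sum((v - sum(g) / len(g)) ** 2
                    for g in groups.values() for v in g)
    return (ss_between / (k - 1)) / (ss_within / (n - k))

def top_genes_by_f(expr, labels, size):
    """Return the `size` genes with the highest F statistic."""
    ranked = sorted(expr, key=lambda g: f_statistic(expr[g], labels),
                    reverse=True)
    return ranked[:size]

def random_gene_sets(genes, size, n_sets=10, seed=0):
    """Draw n_sets independent random gene sets of the given size."""
    rng = random.Random(seed)
    pool = sorted(genes)
    return [rng.sample(pool, size) for _ in range(n_sets)]
```

Calling both functions for each gene set size from 1 to 100 yields the random lower bound and the F-test sets against which another selection method can be compared.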

To evaluate the resulting sets of genes we applied a collection of predictive algorithms to

each gene set using a ten-fold cross-validation testing methodology, since an initial comparison

of both ten-fold and hold-one-out cross-validation showed that they produced essentially the

same predictive accuracy. The predictive algorithms used in this analysis included but were not

limited to variations on neural networks, support vector machines, Naïve Bayes, and K-nearest

neighbors, all implemented using the publicly available Weka application program [5].

Throughout our process of evaluating the various gene sets we kept the two data sets separate in

order to perform two distinct testing scenarios. First we used the NCI data set for cross-validation as described above; second, we used the Meyerson data set as an independent

validation set.
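The relationship between ten-fold and hold-one-out cross-validation is easy to see in code. The Python generator below is a sketch with a hypothetical name, not Weka's procedure: it shuffles the sample indices with a fixed seed and does not stratify the folds by class, both of which are assumptions.

```python
import random

def k_fold_indices(n_samples, k=10, seed=0):
    """Yield (train_idx, test_idx) pairs that partition range(n_samples)
    into k nearly equal test folds. Setting k == n_samples gives
    hold-one-out cross-validation."""
    idx = list(range(n_samples))
    random.Random(seed).shuffle(idx)
    folds = [idx[i::k] for i in range(k)]
    for i, test in enumerate(folds):
        train = [j for m, fold in enumerate(folds) if m != i for j in fold]
        yield train, test
```

For the 75-sample NCI set, `k_fold_indices(75, 10)` gives test folds of 7 or 8 samples, while `k_fold_indices(75, 75)` holds out one sample at a time.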

As a final validation of the biological significance of the genes in our final 3-way

classifier, we mined the scientific literature for references that associated the selected genes with

specific key words found in association with lung cancer. {ML needs to provide the specific

tools used and brief description of methods}[Mesh – Informax, Go-onotlogy]

Results and Discussion

Distinguishing normal and two tumor types

Our analysis of the general two-class problem for distinguishing between normal lung

tissue and non-small cell lung cancer samples has been reported elsewhere [6]. Unlike the two-class problem, however, the three-class problem proved more challenging.

This problem

involved distinguishing normal lung tissue from two subclasses of non-small cell lung cancer:

adenocarcinoma and squamous cell carcinoma. Our best gene sets averaged around

88% accuracy for the NCI data set and 96% for the Meyerson data set, both resulting in 8 to 10

misclassifications. As shown in Figure 2.1, sets

constructed from genes that are highly significant for the three-class problem using the F-statistic

performed better overall than gene sets constructed from randomly selected genes. Also shown in

this figure is the fact that the RadViz™ selection method generally outperforms randomly

selected genes and genes selected on the basis of high statistical significance using the F-test.

The RadViz™ display for the three-class problem, shown in Figure 2.2, clearly demonstrates

near perfect discrimination between normal lung tissue and the two non-small cell lung cancer

subclasses using as few as 15 genes.

Identification of problematic samples

Besides examining the classification results for each gene set independently we looked at

the consistency of classification of samples across gene sets using different machine learning

algorithms as previously described. Surprisingly, we identified a few samples in both data sets that

were consistently misclassified. Figure 2.3 {Add patchgrid figure back in and generate separate

tiff image file} shows an example visualization of the results of the various classification

algorithms (displayed horizontally) for each sample (displayed vertically) within the NCI data

set. The two continuous vertical lines, which are readily visible, represent two samples that have

been consistently misclassified by all the classification algorithms. Although it appears likely

that these samples were improperly labeled, we had no supporting information for these patients

and thus could not clinically validate these findings. In contrast, upon analysis of the Meyerson

data set we were able to identify six misclassified patients. After reviewing these patients’

supporting information we found that two of these samples consisted of mixed tissue types and

the classification algorithms caught this clinical anomaly.
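
The consistency check described above can be sketched as follows: given the predictions of several classifiers, flag the samples that every classifier gets wrong. The sample identifiers, classifier names, and data layout are assumptions made for illustration and do not reflect the authors' actual tooling.

```python
def consistently_misclassified(true_labels, predictions_by_classifier):
    """Return the sample ids that every classifier labels incorrectly."""
    n_classifiers = len(predictions_by_classifier)
    flagged = []
    for sample_id, truth in true_labels.items():
        errors = sum(
            1
            for predictions in predictions_by_classifier.values()
            if predictions[sample_id] != truth
        )
        if errors == n_classifiers:
            flagged.append(sample_id)
    return flagged

# Hypothetical labels and predictions (illustration only)
true_labels = {"s1": "normal", "s2": "adeno", "s3": "squamous"}
predictions_by_classifier = {
    "classifier_a": {"s1": "normal", "s2": "squamous", "s3": "squamous"},
    "classifier_b": {"s1": "normal", "s2": "squamous", "s3": "adeno"},
    "classifier_c": {"s1": "normal", "s2": "normal", "s3": "squamous"},
}
suspect_samples = consistently_misclassified(true_labels, predictions_by_classifier)
```

In this toy example only "s2" is flagged; "s3" is missed by one classifier but not by all, so it would not appear as a continuous line in a display of the kind described for Figure 2.3.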

Validation using biological relevance {ML needs to write this section}

Our validation of the various gene sets we constructed and tested included the use of

domain knowledge in an attempt to support the biological relevance of the selected gene set on

the basis of literature references that associated the selected genes with key words found to be

associated with lung cancer.

(ML needs to provide supporting data for the 15 gene model and a discussion of the

biological relevance of the genes selected. A table identifying the gene #, GenBank ID, and

whether or not there is literature support for its role in lung cancer might also prove

interesting).

Conclusion

This microarray high-throughput gene expression example demonstrates the usefulness of

the machine learning and high dimensional visualization approach to the identification of genes

that may play a significant role in the pathogenesis of non-small cell lung cancer. We have

shown that the RadViz™ technique is extremely useful in identifying genes with significant

differential gene expression which can be used as the basis for a clinically useful and accurate

diagnostic model incorporating measurements from as few as 15 genes. Finally, we have

provided the basis for a comprehensive pipeline based microarray analysis system incorporating

the selection, evaluation, and relevance of genes for multi-class problems in cancer detection.

References

1.

Jin Jen, M.D., Ph.D., Laboratory of Population Genetics, Center for Cancer Research,

National Cancer Institute.

2.

Matthew Meyerson Lab, Dana-Farber Cancer Institute,

http://research.dfci.harvard.edu/meyersonlab/lungca/data.html.

3.

Affymetrix, www.affymetrix.com.

4.

Reference to RadViz and PURS?? Methodology

5.

Weka (Waikato Environment for Knowledge Analysis), The University of Waikato,

http://www.cs.waikato.ac.nz/~ml.

6.

Dracheva, T., Shih, J., Jen, J., Gee, A., McCarthy, J., and Metrogenix; “Distinguishing

lung tumors based on small number of genes using flow-through-chips” (In preparation)

3. Building a Diagnostic Classifier for Ovarian Cancer Using

Proteomic Data

Introduction to the proteomics problem

ML: {One or two paragraph introduction to biological applications of mass spec and

SELDI-TOF. Focus on the value of using machine learning and high dimensional

visualization to differentiate the unique signatures of diseased vs normal protein

distributions in relatively unfractionated serum rather than on the more conventional use of

mass spec fingerprinting in identifying unknown proteins after some form of separation. 1

or 2 general references would also be useful}

The specific goal of this project was to classify patients with ovarian cancer on the basis

of their SELDI-TOF mass spectroscopy signature derived from patient whole sera after

processing on the Ciphergen (Fremont, CA) WCX2 protein array. The methods for data

collection and the general approach are described in Petricoin et al., which documents the first

attempt at applying machine learning techniques to the analysis of clinical proteomic data [1].

The data set used here is not the same as in the original paper, but a similar one labeled 8-07-02,

provided by the authors at http://clinicalproteomics.steem.com/download-ovar.php. The

authors indicate that this data set is less variable than the original data as a result of using an

improved protein chip coupled with totally automated processing by a robotic instrument.

The data consist of over 15,000 mass-to-charge ratio (M/Z) intensity measures, below the

20,000 M/Z range, on 253 patient samples. 162 of these samples were from patients with

ovarian cancer and 91 were from controls. The major objective was to select a set of M/Z values

which best distinguishes cancer cases from controls. Since the number of features is much larger

than the number of samples, it is important to do this in a principled manner to avoid classifying

on the basis of noise.

Two aspects of this data set pose interesting technical challenges in its analysis. The first

is the low S/N level associated with many of the features as shown in Figure 3.1, and the second

is the high degree of correlation between different features. There are at least two sources of

correlation. One, illustrated in Figure 3.2 for M/Z ratios near 417, is the high correlation

between neighboring features in the vicinity of a peak. Such correlation may be due to the

inherent resolution limitations of this instrument in resolving two peaks when separated by less

than 600 M/Z units. The other, illustrated in Figure 3.3, is correlation between data at peaks

where one M/Z ratio is almost exactly half the other M/Z ratio. The graph at the top of Figure

3.3 shows the spectrum in the M/Z range from 5300 to 10600, while the bottom graph shows the

range from 2650 to 5300, exactly half the range of the top graph. All of the peaks of the top

graph are repeated in the lower graph, consistent with molecules with the same mass and twice

the charge, suggesting production of doubly ionized forms of the original protein fragments.

These figures illustrate the power of visualization for data exploration. Clearly there is a high

degree of noise and redundancy in the data. Such data attributes can be problematic for feature

reduction and consequently reduce the accuracy of the predictive model under development.
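
The "almost exactly half" relationship between the paired peaks can be checked with simple arithmetic. The sketch below assumes that ions are formed by adding one proton per unit charge, which is a common model for this kind of ionization but is not stated in the text; the 10,000 Da fragment mass is hypothetical.

```python
PROTON_MASS = 1.00728  # approximate proton mass in Da (assumed ionization model)

def mz_ratio(neutral_mass, charge):
    """M/Z of a molecule carrying `charge` added protons."""
    return (neutral_mass + charge * PROTON_MASS) / charge

# Hypothetical 10,000 Da fragment (illustration only)
singly_charged = mz_ratio(10000.0, 1)
doubly_charged = mz_ratio(10000.0, 2)
ratio = doubly_charged / singly_charged
```

Under this assumption the doubly charged form appears at slightly more than half the M/Z of the singly charged form, which is consistent with every peak between 5300 and 10600 having a counterpart between 2650 and 5300.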

Specific methods Used.

Initially each sample was randomly assigned (with 50% probability) to either a “train”

group or a “test” group. This resulted in a training group of 88 ovarian cancer samples and 49

controls, and a test group of 74 ovarian cancer samples and 42 controls. In order to avoid any

influence of test group data on the classification results, all feature reduction and modeling was

done on the basis of training group data only.
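
A minimal sketch of the random assignment described above, assuming each sample is assigned independently with probability 0.5. The sample identifiers and the seed are hypothetical, so the resulting group sizes will generally differ from the 137/116 split reported here.

```python
import random

def random_split(sample_ids, p_train=0.5, seed=0):
    """Assign each sample independently to the train or test group."""
    rng = random.Random(seed)  # fixed seed so the split is reproducible
    train, test = [], []
    for sample_id in sample_ids:
        if rng.random() < p_train:
            train.append(sample_id)
        else:
            test.append(sample_id)
    return train, test

# 162 cancer samples and 91 controls, as in the data set; ids are made up
samples = [f"cancer_{i}" for i in range(162)] + [f"control_{i}" for i in range(91)]
train_ids, test_ids = random_split(samples)
```

Because the test group is set aside before any feature reduction, every later step can be restricted to `train_ids` only.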

The first steps in feature reduction were to eliminate all M/Z ratios less than 350, and to

eliminate those for which the maximum intensity value (in the train group) was less than 17.5.

This was done in order to minimize the possibility of choosing a feature based on noise alone,

and resulted in a reduction from over 15,000 to fewer than 4,000 features. The next step was to

perform a t test on the remaining features to determine which features show a significant

difference between cancer patients and controls. We kept features with significance of p < .001

after Bonferroni correction for multiple tests. This left over 400 features, many of which were

redundant in the sense discussed above.
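
The first filtering steps and the Bonferroni cutoff can be sketched as below. The function names and toy spectra are hypothetical; the thresholds of 350 and 17.5 come from the text. The sketch reads "p < .001 after Bonferroni correction" as requiring an unadjusted p-value below .001 divided by the number of tests, which is the usual form of the correction but is an interpretation of the wording.

```python
def prefilter(mz_values, train_spectra, min_mz=350.0, min_peak=17.5):
    """Indices of features kept after the M/Z and maximum-intensity filters.

    mz_values: one M/Z value per feature.
    train_spectra: one list of intensities per training sample.
    """
    kept = []
    for j, mz in enumerate(mz_values):
        if mz < min_mz:
            continue  # drop the low-M/Z region
        if max(spectrum[j] for spectrum in train_spectra) < min_peak:
            continue  # drop features that never rise above the intensity floor
        kept.append(j)
    return kept

def bonferroni_cutoff(alpha, n_tests):
    """Unadjusted p-value a feature must beat to be significant at alpha overall."""
    return alpha / n_tests

# Toy data: four features, two training samples (illustration only)
mz_values = [200.0, 400.0, 500.0, 600.0]
train_spectra = [
    [50.0, 5.0, 20.0, 1.0],
    [60.0, 10.0, 3.0, 30.0],
]
kept = prefilter(mz_values, train_spectra)
cutoff = bonferroni_cutoff(0.001, len(kept))
```

With roughly 4,000 features surviving the filters, the same calculation gives an unadjusted cutoff of about 2.5e-7 per feature.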

Simply choosing the most significant 5 or 10 features would incorporate this redundancy

into the classifier and could lead to poor performance. Consequently we used the PURS

technique with a correlation parameter of .90 and initialized with two features, the most

significant feature for each of the two classes. The result was a set of twelve features. We

trained two neural network models using SPSS Clementine. One used all twelve features, the

other used the top six features.
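
The details of the PURS technique are not given in this section, so the following is not an implementation of it. It is a generic greedy sketch of the underlying idea of removing redundancy: walk through features in order of significance and keep a feature only if its absolute Pearson correlation with every feature already kept is at most 0.90. All names and data are hypothetical.

```python
from math import sqrt

def pearson(x, y):
    """Pearson correlation coefficient of two equal-length sequences."""
    mean_x, mean_y = sum(x) / len(x), sum(y) / len(y)
    sxy = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    sxx = sum((a - mean_x) ** 2 for a in x)
    syy = sum((b - mean_y) ** 2 for b in y)
    return sxy / sqrt(sxx * syy)

def select_uncorrelated(ranked_features, data, max_corr=0.90, seeds=()):
    """Greedy filter: keep features not highly correlated with any kept feature."""
    selected = list(seeds)
    for name in ranked_features:
        if name in selected:
            continue
        if all(abs(pearson(data[name], data[kept])) <= max_corr for kept in selected):
            selected.append(name)
    return selected

# Toy intensities: mz_b nearly duplicates mz_a, mz_c is unrelated (illustration only)
data = {
    "mz_a": [1.0, 2.0, 3.0, 4.0, 5.0, 6.0],
    "mz_b": [2.0, 4.0, 6.0, 8.0, 10.0, 13.0],
    "mz_c": [5.0, 1.0, 4.0, 2.0, 6.0, 3.0],
}
selected = select_uncorrelated(["mz_a", "mz_b", "mz_c"], data)
```

The optional `seeds` argument loosely mirrors the idea of initializing the selection with the most significant feature for each class.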

Results and Discussion.

Both neural network models classified cancer patients and controls perfectly in both the

training and test groups. On the website with the data

http://clinicalproteomics.steem.com/download-ovar.php, Petricoin et al present a set of seven

M/Z values which also results in perfect classification. These were chosen by means of a genetic

algorithm. Our past experience with genetic algorithms and microarray data has shown us that

genetic algorithms are susceptible to classification by noise. Microarray data are similar to the

proteomics data in that the number of features (genes) is far greater than the number of samples.

With this level of imbalance it is possible to find perfect classifiers in randomly generated data.

Having an independent test set helps to weed out the really noisy models. However, when you

consider the number of ways of choosing seven features out of 15,000 (> 10^25), you begin to see

that the chance of finding a set of seven “good” features is small. At a minimum, features should

show a statistically significant difference between the two classes. Of the seven features given

on the website, two are not even marginally significant before correcting for multiple tests.

These contribute mostly noise to the classifier. Two or three more features would fail our strict p

< .001 standard after a Bonferroni correction. This is an arbitrary standard, but since it still

leaves more than 400 “good” features there is no reason to relax it.
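
The size of the search space mentioned above can be verified directly. This short check uses only the Python standard library (`math.comb`, available from Python 3.8) and the feature count of 15,000 quoted in the text.

```python
from math import comb

# Number of distinct ways to choose 7 features out of 15,000
n_subsets = comb(15000, 7)
exceeds_bound = n_subsets > 10**25
```

The count is on the order of 10^25, which is why a search over subsets can find sets that classify a few hundred samples perfectly by chance.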

Figure 3.4 shows parallel coordinates displays of the two feature sets. The display on the

left is the data for the seven features given on the website. The display on the right is the data for

the six features we selected. Five of the seven features on the left in Figure 3.4 have very low

intensities. We eliminated these in the first step of feature reduction because they fail to reach

the 17.5 threshold.

Conclusions.

It is clear that there are significant differences in proteins in serum between ovarian

cancer patients and controls, and that mass spectroscopy is potentially a useful diagnostic tool.

Because of differences in machines and instrumentation, the applicability of our models to a new

data set is an open question. However, by applying intelligent feature reduction to mass

spectroscopy data using high dimensional visualization prior to classification, the development

of clinically accurate and useful diagnostic models using proteomic data should be possible.

Petricoin, E. F., A. M. Ardekani, B. A. Hitt, P. J. Levine, V. A. Fusaro, S. M. Steinberg, G. B.

Mills, C. Simone, D. A. Fishman, E. C. Kohn, L. A. Liotta, Use of proteomic patterns in serum

to identify ovarian cancer, Lancet, 2002, 359:572-77.

Conclusions

Acknowledgements

AnVil and the authors gratefully acknowledge support from two SBIR Phase I grants R43

CA94429-01 and R43 CA096179-01 from the National Cancer Institute. Also, support is

acknowledged from ………..X Y Z

References

1.

A. Strehl. Relationship-based Clustering and Cluster Ensembles for High-dimensional

Data Mining. Dissertation, The University of Texas at Austin, May, 2002.

2.

I. H. Witten and E. Frank.

Data Mining: Practical Machine Learning Tools and

Techniques with Java Implementations. San Francisco: Morgan Kaufmann, 2000.

3.

J. A. Hartigan. Clustering Algorithms. New York: John Wiley & Sons, 1975.

4.

D. Fasulo. “An Analysis of Recent Work on Clustering Algorithms.”

http://www.cs.washington.edu/homes/dfasulo/clustering.ps, April 26, 1999.

5.

C. Fraley and A. E. Raftery “Model-Based Clustering, Discrimination Analysis, and

Density Estimation.” Technical Report no. 380, Department of Statistics, University of

Washington, Seattle, October, 2000.

6.

F. Höppner, F. Klawonn, R. Kruse, and T. Runkler. Fuzzy Cluster Analysis: Methods for

Classification, Data Analysis and Image Recognition. Chichester: John Wiley & Sons,

1999.

7.

Everitt, B., Cluster Analysis, Halsted Press, New York (1980).

8.

Schaffer, C., Selecting a classification method by cross-validation, Machine Learning,

13:135-143 (1993).

9.

Feelders A., Verkooijen W.: Which method learns most from the data? Proc. of 5th

International Workshop on Artificial Intelligence and Statistics, January 1995, Fort

Lauderdale, Florida, pp. 219-225, (1995).

10.

Dietterich, T.G., Approximate statistical tests for comparing supervised classification

learning algorithms. Neural Computation, 10(7), 1895-1924.

11.

Cheng, J., Greiner, R., Comparing Bayesian network classifiers. In Proceedings of the

15th Conference on Uncertainty in Artificial Intelligence (UAI ’99), 101-107, Morgan

Kaufmann Publishers (1999).

12.

Salzberg, S. L., On Comparing Classifiers: A Critique of Current Research and Methods,

Data Mining and Knowledge Discovery, 1999, 1:1-12, Kluwer Academic Publishers, Boston.

13.

Ramaswamy, S., Ross, K.N., Lander, E.S. and Golub, T.R. A molecular signature of

metastasis in primary solid tumors. Science, 22, 1-5.

14.

Chaussabel., D. and Sher, A. Mining microarray expression data by literature profiling.

Genomebiology, 3, 1-16

15. Fayyad, U.M., Piatetsky-Shapiro, G., Smyth, P., Uthurusamy, R. (Eds.) Advances in knowledge

discovery and data mining, AAAI/MIT Press, 1996.

16. B. Shneiderman, “The Eyes Have It: A Task by Data Type Taxonomy of Information

Visualization,” presented at IEEE Symposium on Visual Languages '96, Boulder, CO, 1996.

17. J. W. Tukey, Exploratory Data Analysis. Reading, MA: Addison-Wesley, MA, 1977.

18. R. Rao and S. K. Card, “The Table Lens: Merging Graphical and Symbolic Representations in an

Interactive Focus+Context Visualization for Tabular Information,” presented at ACM CHI '94,

Boston, MA, 1994.

19. D. F. Andrews, “Plots of High-Dimensional Data,” Biometrics, vol. 29, pp. 125-136, 1972.

20. H. Chernoff, “The Use of Faces to Represent Points in k-Dimensional Space Graphically,”

Journal of the American Statistical Association, vol. 68, pp. 361-368, 1973.

21. J. Beddow, “Shape Coding of Multidimensional Data on a Microcomputer Display,” presented at

IEEE Visualization '90, San Francisco, CA, 1990.

22. A. Inselberg, “The Plane with Parallel Coordinates,” Special Issue on Computational Geometry:

The Visual Computer, vol. 1, pp. 69-91, 1985.

23. D. A. Keim and H.-P. Kriegel, “VisDB: Database Exploration Using Multidimensional

Visualization,” IEEE Computer Graphics and Applications, vol. 14, pp. 40-49, 1994.

24. J. W. J. Sammon, “A Nonlinear Mapping for Data Structure Analysis,” IEEE Transactions on

Computers, vol. 18, pp. 401-409, 1969.

25. P. Hoffman and G. Grinstein, “Dimensional Anchors: A Graphic Primitive for Multidimensional

Multivariate Information Visualizations,” presented at NPIV '99 (Workshop on New Paradigms

in Information Visualization and Manipulation), 1999.

26. H. Hotelling, “Analysis of a Complex of Statistical Variables into Principal Components,”

Journal of Educational Psychology, vol. 24, pp. 417-441, 498-520, 1933.

27. T. Hastie and W. Stuetzle, “Principal Curves,” Journal of the American Statistical Association,

vol. 84, pp. 502-516, 1989.

28. D. Asimov, “The Grand Tour: A Tool for Viewing Multidimensional Data,” SIAM Journal on

Scientific and Statistical Computing, vol. 6, no. 1, pp. 128-143, 1985.

29. J. H. Friedman, “Exploratory Projection Pursuit,” Journal of the American Statistical Association,

vol. 82, pp. 249-266, 1987.

30. T. Kohonen, E. Oja, O. Simula, A. Visa, and J. Kangas, “Engineering Applications of the Self-Organizing Map,” presented at IEEE, 1996.

31. G. Grinstein, P. E. Hoffman, S. Laskowski, and R. Pickett, “Benchmark Development for

the Evaluation of Visualization for Data Mining,” in Information Visualization in Data

Mining and Knowledge Discovery, The Morgan Kaufmann Series in Data Management

Systems, U. Fayyad, G. Grinstein, and A. Wierse, Eds., 1st ed: Morgan-Kaufmann

Publishers, 2001.

32. Voigt, K. and Bruggeman, R. (1995)

Toxicology Databases in the Metadatabank of Online Databases

Toxicology, 100, 225-240

33. Weinstein, J.N.,et.al., (1997,) An information-intensive approach to the molecular

pharmacology of cancer, Science, 275, 343-349.

34. Shi, L.M., Fan, Y.,Lee, J.K., Waltham, M., Andrews, D.T., Scherf,U., Paul, K.D., and

Weinstein, J.N. (2000)

J. Chem. Inf. Comput. Sci., 40, 367-379.

35. Bai, R.L., Paul, K.D., Herald, C.L., Malspeis, L., Pettit, G.R., and Hamel, E. (1991)

Halichondrin B and homohalichondrin B, marine natural products binding in the vinca domain of

tubulin-based mechanism of action by analysis of differential cytotoxicity data

J. Biol. Chem., 266, 15882 – 15889.

36. Cleveland, E.S., Monks, A., Vaigro-Wolff, A., Zaharevitz, D.W., Paul, K., Ardalan,

K.,Cooney, D.A., and Ford, H. Jr. (1995)

Site of action of two novel pyrimidine biosynthesis inhibitors accurately predicted by

COMPARE program

Biochem. Pharmacol., 49, 947-954.

37. Gupta, M., Abdel-Megeed M., Hoki, Y, Kohlhagen, G., Paul, K., and Pommier, Y.

(1995) Eukaryotic DNA topoisomerases mediated DNA cleavage induced by new inhibitor:

NSC 665517 Mol. Pharmacol., 48, 658-665

38. Shi, L.M., Myers, T.G., Fan, Y., O’Connors, P.M., Paul, K.D., Friend, S.H., and

Weinstein, J.N. (1998)

Mining the National Cancer Institute Anticancer Drug Discovery Database: cluster

analysis of ellipticine analogs with p53-inverse and central nervous system-selective

patterns of activity

Mol. Pharmacology, 53, 241-251.

39. Ross, D.T. et. al., (2000)

Systematic variation of gene expression patterns in human cancer cell lines

Nat. Genet., 24, 227-235

40. Staunton, J.E.; Slonim, D.K.; Coller, H.A.; Tamayo, P.; Angelo, M.P.; Park, J.; Sherf, U.;

Lee, J.K.; Reinhold, W.O.; Weinstein, J.N.; Mesirov, J.P.; Landers, E.S.; Golub, T.R.

Chemosensitivity prediction by transcriptional profiling, Proc. Natl. Acad. Sci., 2001, 98,

10787-10792.

41. Marx, K.A., O’Neil, P., Hoffman, P.; Ujwal, M.L. Data Mining the NCI Cancer Cell Line

Compound GI50 Values: Identifying Quinone Subtypes Effective Against Melanoma and

Leukemia Cell Classes, J. Chem. Inf. Comput. Sci., 2003, in press.

42. Blower, P.E.; Yang, C.; Fligner, M.A.; Verducci, J.S.; Yu, L.; Richman, S.; Weinstein, J.N.

Pharmacogenomic analysis: correlating molecular substructure classes with microarray gene

expression data, The Pharmacogenomics Journal, 2002, 2, 259-271.

43. Scherf, W.; Ross, D.T.; Waltham, M.; Smith, L.H.; Lee, J.K.; Tanabe, L.; Kohn, K.W.;

Reinhold, W.C.; Myers, T.G.; Andrews, D.T.; Scudiero, D.A.; Eisen, M.B.; Sausville, E.A.;

Pommier, Y.; Botstein, D.; Brown, P.O.; Weinstein, J.N. A gene expression database for the

molecular pharmacology of cancer, Nature, 2000, 24, 236-247.

44. Schafer, J.L. Analysis of Incomplete Multivariate Data, Monographs on Statistics and

Applied Probability 72, Chapman & Hall/CRC, 1997.

45. RadViz, URL: www.anvilinfo.com

46. Hoffman, P.; Grinstein, G.; Marx, K.; Grosse, I.; Stanley, E. DNA visual and analytical data

mining, IEEE Visualization 1997 Proceedings, pp. 437-441, Phoenix

47. Hoffman, P.; Grinstein, G. Multidimensional information visualization for data mining with

application for machine learning classifiers, Information Visualization in Data Mining and

Knowledge Discovery, Morgan-Kaufmann, San Francisco, 2000.

48. Bucci, C.; Thompsen, P.; Nicoziani, P.; McCarthy, J.; van Deurs, B. Rab7: a key to lysosome

biogenesis, Mol. Biol. Cell, 2000, 11, 467-480.

49. Ross, D. NAD(P)H: quinone oxidoreductases, Encyclopedia of Molecular Medicine, 2001,

2208-2212.

50. Faig, M.; Bianchet, M.A.; Talalay, P.; Chen, S.; Winski, S.; Ross, D.; Amzel, L.M. Structure

of recombinant human and mouse NAD(P)H:quinone oxidoreductase: Species comparison and

structural changes with substrate binding and release, Proc. Natl. Acad. Sci., 2000, 97, 3177-3182

51. Faig, M.; Bianchet, M.A.; Winsky, S.; Moody, C.J.; Hudnott, A.H.; Ross, D.; Amzel, L.M.

Structure-based development of anticancer drugs: complexes of NAD(P)H:quinone

oxidoreductase 1 with chemotherapeutic quinones, Structure (Cambridge), 2001, 9, 659-667

52. Smith, M.T.; Wang, Y.; Kane, E.; Rollinson, S.; Wiemels, J.L.; Roman, E.; Roddam, P.;

Cartwright, R.; Morgan, G., Low NAD(P)H: quinone oxidoreductase I activity is associated with

increased risk of acute leukemia in adults, Blood, 2001, 97, 1422-1426

53. Wiemels, J.L.; Pagnamenta, A.; Taylor, G.M.; Eden, O.B.; Alexander, F.E.; Greaves, M.F. A

lack of a functional NAD(P)H:quinone oxidoreductase allele is selectively associated with

pediatric leukemias that have MLL fusions. United Kingdom Childhood Cancer Study

Investigators, Cancer Res., 1999, 59, 4095-4099

54. Naoe T.; Takeyama, K.;, Yokozawa, T.; Kiyoi, H.; Seto, M.; Uike, N.; Ino, T.;

Utsunomiya, A.; Maruta, A.; Jin-nai, I.; Kamada, N.; Kubota, Y.; Nakamura, H.; Shimazaki,

C.; Horiike, S.; Kodera, Y.; Saito, H.; Ueda, R.; Wiemels, J.; Ohno, R. Analysis of the genetic

polymorphism in NQO1, GST-M1, GST-T1 and CYP3A4 in 469 Japanese patients with therapy

related leukemia/myelodysplastic syndrome and de novo acute myeloid leukemia, Clin. Cancer

Res., 2000, 6, 4091-4095

Other References (14-25 in CC Grant)

35. Venter, J.C., et.al., The Sequence of the Human Genome. Science, 291, 1303-1351 (2001).

36. Lander, E.S., et.al., Initial Sequencing and Analysis of the Human Genome. Nature, 409, 860-921 (2001).

37. Stoeckert, C.J., et.al., Microarray databases: standards and ontologies. Nat. Genet. 32 (Suppl)

469-473.

38. No author, Microarray standards at last. Nature, 419, 323.

39. Ball, C., et.al., Standards for microarray data., Science, 298, 539.

40. Quackenbush, J. (2001) Computational analysis of cDNA microarray data. Nature Reviews 2(6):

418-428.

41. Dudoit, S., Yang, Y.H., Speed, T.P., and Callow, M.J. (2002) Statistical methods for identifying

differentially expressed genes in replicated cDNA microarray experiments. Statistica Sinica Vol.

12, No. 1, p. 111-139.

42. Li, C. and Wong, W.H. (2001) Model-based analysis of oligonucleotide arrays: model validation,

design issues and standard error applications. Genome Biology 2(8),

43. Irizarry, R.A., Hobbs, B., Collin, F., Beazer-Barclay, Y.D., Antonellis, K., Scherf, U., and Speed,

T.P. (2003) Exploration, normalization and summaries of high density oligonucleotide array

probe level data. Biostatistics (in press).

44. Durbin, B.P., Hardin, J.S., Hawkins, D.M., and Rocke, D.M. (2002) A variance-stabilizing

transformation for gene expression microarray data. Bioinformatics 18, 105S-110S.

45. Bolstad, B.M., Irizarry, R.A., Astrand, M., and Speed, T.P. (2002) A comparison of

normalization methods for high density oligonucleotide array data based on variance and bias.

Bioinformatics 19(2): 185-193.

Schadt, E.C., Li, C., Eliss, B., and Wong, W.H. (2002) Feature extraction and normalization algorithms

for high-density oligonucleotide gene expression array data. J. Cell. Biochem. 84(S37), 120-125.

Figure Legends

Figure A. One Point with 16 dimensions in RadViz. Spring lines (not usually shown) are

colored by value (K in Hooke’s law) for that variable (light is higher, dark is lower). The

point is plotted where the sum of the forces is zero.

Figure 1.1. Cancer cell line functional class definition using a hierarchical clustering (1-Pearson

coefficient) dendrogram for 60 cancer cell lines based upon gene expression data. Five well

defined clusters are shown highlighted. We treat the highlighted cell line clusters as the truth for

the purpose of carrying out studies to identify which chemical compounds are highly significant

in their classifying ability

Figure 1.2. RadViz™ result for the 3-Class problem classification of melanoma, leukemia and

non-melanoma, non-leukemia cancer cell types at the p < .01 criterion. Cell lines are symbol

coded as described in the figure. A total of 14 compounds (bottom of layout) were most effective

against melanoma and they are laid out on the melanoma sector (counterclockwise from most

to least effective). For leukemia, 30 compounds were identified as most effective and are laid

out in that sector. Some 8 compounds were found to be most effective against non-melanoma,

non-leukemia cell lines and are laid out in that sector.

Figure 1.2

Figure 1.1

Cluster Dendrogram (vertical axis: Height)

Section 2 Figure Captions

Figure 2.1

Figure 2.2

Figure 2.1. Classification results for the NCI data set showing the size of the gene sets compared

to their associated best percent correct. Notice how the RadViz algorithm selected genes (black)

generally perform better than either the top F-statistic genes (gray) or the randomly selected

genes (white). As the gene set sizes increased from one to about twenty genes there was a sharp

increase in classification accuracy. In addition, as more random genes are selected their

associated performance increases.

Figure 2.2. A RadViz display showing an example of a selected set of 15 genes from the

Meyerson data set defined by a balanced layout for the three classes: normal (gray squares),

adenocarcinoma (black circles) and squamous cell carcinoma (white triangles). Ideally, the

patient samples displayed by their associated representative glyph should fall within their

respective regions, however some samples clearly fall into other regions thus being visually

misclassified. This particular gene set performs very well with about 6 misclassifications

visually, and after applying our collection of classification algorithms this gene set performed

with 8 misclassifications. {We should either identify the genes on the diagram or cross reference

them in ML’s table as previously discussed.}

Figure 2.3. Misclassification Patchgrid {Needs to be converted to B/W and reformatted as a TIFF

file along with appropriate caption}.

Figure 2.3

Table 1: Gene ID crossreference with indication of literature support {ML}

Figure 3.1. This segment of the spectrum around the M/Z ratio of 203 illustrates the low

signal-to-noise ratio of some features.

Figure 3.2. There is a peak at 417.732 but the intensities at nearby M/Z values are very similar.

The correlation between this peak and its two nearest neighbors is about .97, and the correlation

with the two next neighbors is about .91.

Figure 3.3. The top graph shows the portion of the spectrum from M/Z of 5300 to 10600, while

the bottom graph shows the portion from 2650 to 5300. Thus the range at the bottom is exactly

half the range at the top. Notice that all peaks in the top graph are repeated in the bottom graph.

Figure 3.4. On the left are the seven M/Z ratios selected by Petricoin et al. On the right are the

six features selected by the present authors.
