Interactive Machine-Language Programming Research Paper

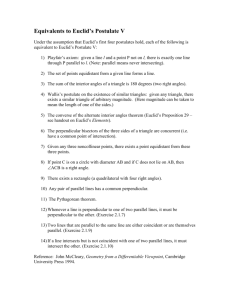

Interactive Machine-Language Programming
Butler W. Lampson
University of California, Berkeley
Proc. AFIPS Conf. 27 (1965), pp 473-482.

Introduction

The problems of machine language programming, in the broad sense of coding in which it is possible to write each instruction out explicitly, have been curiously neglected in the literature. There are still many problems which must be coded in the hardware language of the computer on which they are to run, either because of stringent time and space requirements or because no suitable higher level language is available. It is a sad fact, however, that a large number of these problems never run at all because of the inordinate amount of effort required to write and debug machine language programs. On those that are undertaken in spite of this obstacle, a great deal of time is wasted in struggles between programmer and computer which might be avoided if the proper systems were available.

Some of the necessary components of these systems, both hardware and software, have been developed and intensively used at a few installations. To most programmers, however, they remain as unfamiliar as other tools which are presented for the first time below. In the former category fall the most important features of a good assembler: macroinstructions implemented by character substitution, conditional assembly instructions, and reasonably free linking of independently assembled programs. The basic components of a debugging system are also known but relatively unfamiliar. For these the essential prerequisite is an interactive environment, in which the power of the computer is available at a console for long periods of time. The batch processing mode in which large systems are operated today of course precludes interaction, but programs for small machines are normally debugged in this way, and as time-sharing becomes more widespread the interactive environment will become common.
It is clear that interactive debugging systems must have abilities very different from those of off-line systems. Large volumes of output are intolerable, so that dumps and traces are to be avoided at all costs. To take the place of dumps, selective examination and alteration of memory locations are provided. Traces give way to breakpoints, which cause control to return to the system at selected instructions. It is also essential to escape from the switches-and-lights console debugging common on small machines without adequate software. To this end, type-in and type-out of information must be symbolic rather than octal where this is convenient. The goal, which can be very nearly achieved, is to make the symbolic representation of an instruction produced by the system identical to the original symbolic written by the user. The emphasis is on convenience to the user and rapidity of communication. The combination of an assembler and a debugger of this kind is a powerful one which can reduce by a factor of perhaps five the time required to write and debug a machine language program. A full system for interactive machine language programming (IMP), however, can do much more and, if properly designed, need not be more difficult to implement.

A Critique of “An Exploratory Investigation of Programmer Performance Under On-Line and Off-Line Conditions”
Butler W. Lampson
IEEE Trans. Human Factors in Electronics HFE-8, 1 (Mar. 1967), pp 48-51

Abstract

The preceding paper by Grant and Sackman, “An Exploratory Investigation of Programmer Performance Under On-Line and Off-Line Conditions” is discussed critically. Primary emphasis is on this paper’s failure to consider the meaning of the numbers obtained. An understanding of the nature of an on-line system is necessary for proper interpretation of the observed results for debugging time, and the results for computer time are critically dependent on the idiosyncrasies of the system on which the work was done.
Lack of attention to these matters cannot be compensated for by any amount of statistical analysis. Furthermore, many of the conclusions drawn and suggestions made are too vague to be useful.

Dynamic protection structures
B. W. Lampson
Berkeley Computer Corporation
Berkeley, California
Proc. AFIPS Conf. 35 (1969), pp 27-38.

Introduction

A very general problem which pervades the entire field of operating system design is the construction of protection mechanisms. These come in many different forms, ranging from hardware which prevents the execution of input/output instructions by user programs, to password schemes for identifying customers when they log onto a timesharing system. This paper deals with one aspect of the subject, which might be called the meta-theory of protection systems: how can the information which specifies protection and authorizes access, itself be protected and manipulated. Thus, for example, a memory protection system decides whether a program P is allowed to store into location T. We are concerned with how P obtains this permission and how he passes it on to other programs.

In order to lend immediacy to the discussion, it will be helpful to have some examples. To provide some background for the examples, we imagine a computation C running on a general multi-access system M. The computation responds to inputs from a terminal or a card reader. Some of these look like commands: to compile file A, load B and print the output double-spaced. Others may be program statements or data. As C goes about its business, it executes a large number of different programs and requires at various times a large number of different kinds of access to the resources of the system and to the various objects which exist in it. It is necessary to have some way of knowing at each instant what privileges the computation has, and of establishing and changing these privileges in a flexible way.
We will establish a fairly general conceptual framework for this situation, and consider the details of implementation in a specific system. Part of this framework is common to most modern operating systems; we will summarize it briefly.

A program running on the system M exists in an environment created by M, just as does a program running in supervisor state on a machine unequipped with software. In the latter case the environment is simply the available memory and the available complement of machine instructions and input/output commands; since these appear in just the form provided by the hardware designers, we call this environment the bare machine. By contrast, the environment created by M for a program is called a virtual or user machine. It normally has less memory, differently organized, and an instruction set in which the input/output at least has been greatly changed. Besides the machine registers and memory, a user machine provides a set of objects which can be manipulated by the program. The instructions for manipulating objects are probably implemented in software, but this is of no concern to the user machine program, which is generally not able to tell how a given feature is implemented.

The basic object which executes programs is called a task or process; it corresponds to one copy of the user machine. What we are primarily concerned with in this paper is the management of the objects which a process has access to: how are they identified, passed around, created, destroyed, used and shared.

Beyond this point, three ideas are fundamental to the framework being developed:

1. Objects are named by capabilities, which are names that are protected by the system in the sense that programs can move them around but not change them or create them in an arbitrary way. As a consequence, possession of a capability can be taken as prima facie proof of the right to access the object it names.
2. A new kind of object called a domain is used to group capabilities. At any time a process is executing in some domain and hence can exercise the capabilities which belong to the domain. When control passes from one domain to another (in a suitably restricted fashion) the capabilities of the process will change.
3. Capabilities are usually obtained by presenting domains which possess them with suitable authorization, in the form of a special kind of capability called an access key. Since a domain can possess capabilities, including access keys, it can carry its own identification.

A key property of this framework is that it does not distinguish any particular part of the computation. In other words, a program running in one domain can execute, expand the computation, access files and in general exercise its capabilities without regard to who created it or how far down in any hierarchy it is. Thus, for example, a user program running under a debugging system is quite free to create another incarnation of the debugging system underneath him, which may in turn create another user program which is not aware in any way of its position in the scheme of things. In particular, it is possible to reset things to a standard state in one domain without disrupting higher ones.

The reason for placing so much weight on this property is two-fold. First of all, it provides a guarantee that programs can be glued together to make larger programs without elaborate pre-arrangements about the nature of the common environment. Large systems with active user communities quickly build up sizable collections of valuable routines. The large ones in the collections, such as compilers, often prove useful as subroutines of other programs. Thus, to implement language X it may be convenient to translate it into language Y, for which a compiler already exists. The X implementer is probably unaware that Y’s implementation involves a further call on an assembler.
If the basic system organization does not allow an arbitrarily complex structure to be built up from any point, this kind of operation will not be feasible. The second reason for concern about extendibility is that it allows deficiencies in the design of the system to be made up without changes in the basic system itself, simply by interposing another layer between the basic system and the user. This is especially important when we realize that different people may have different ideas about the nature of a deficiency.

We now have outlined the main ideas of the paper. The remainder of the discussion is devoted to filling them out with examples and explanations. The entire scheme has been developed as part of the operating system for the Berkeley Computer Corporation Model I. Since many details and specific mechanisms are dependent on the characteristics of the surrounding system and underlying hardware, we digress briefly at this point to describe them.

On Reliable and Extendible Operating Systems
Butler W. Lampson
Proc. 2nd NATO Conf. on Techniques in Software Engineering, Rome, 1969. Reprinted in The Fourth Generation, Infotech State of the Art Report 1, 1971, pp 421-444.

Introduction

A considerable amount of bitter experience in the design of operating systems has been accumulated in the last few years, both by the designers of the systems which are currently in use and by those who have been forced to use them. As a result, many people have been led to the conclusion that some radical changes must be made, both in the way we think about the functions of operating systems and in the way they are implemented. Of course, these are not unrelated topics, but it is often convenient to organize ideas around the two axes of function and implementation. This paper is concerned with an effort to create more flexible and more reliable operating systems built around a very powerful and general protection mechanism.
The mechanism is introduced at a low level and is then used to construct the rest of the system, which thus derives the same advantages from its existence as do user programs operating with the system. The entire design is based on two central ideas. The first of these is that an operating system should be constructed in layers, each one of which creates a different and hopefully more convenient environment in which the next higher layer can function. In the lower layers a bare machine provided by the hardware manufacturer is converted into a large number of user machines which are given access to common resources such as processor time and storage space in a controlled manner. In the higher layers these user machines are made easy for programs and users at terminals to operate. Thus, as we rise through the layers, we observe two trends.

1. The consequences of an error become less severe
2. The facilities provided become more elaborate

At the lower levels we wish to press the analogy with the hardware machine very strongly; where the integrity of the entire system is concerned the operations provided should be as primitive as possible. This is not to say that the operations should not be complete, but that they need not be convenient. They are to be regarded in the same light as the instructions of a central processor. Each operation may in itself do very little; we require only that the entire collection should be powerful enough to permit more convenient operations to be programmed.

The main reason for this dogma is clear enough; simple operations are more likely to work than complex ones and, if failures are to occur, it is very much preferable that they should hurt only one user rather than the entire community. We therefore admit increasing complexity in higher layers, until the user at his terminal may find himself invoking extremely elaborate procedures.
The price to be paid for low level simplicity is also clear: additional time to interpret many simple operations and storage to maintain multiple representations of essentially the same information. We shall return to these points below. It is important to note that users of the system other than the designers need not suffer any added inconvenience from its adherence to the dogma, since the designers can very well supply, at a higher level, programs that simulate the action of the powerful low level operations to which users may be accustomed. Users do, in fact, profit from the fact that a different set of operations can be programmed if the ones provided by the designer prove unsatisfactory. This point also will receive further attention.

The increased reliability that we hope to obtain from an application of the above ideas has two sources. In the first place, careful specification of an orderly hierarchy of operations will clarify what is going on in the system and make it easier to understand. This is the familiar idea of modularity. Equally important, however, is a second and less familiar point, the other pillar of our system design, which might loosely be called enforced modularity. It is this: if interactions between layers or modules can be forced to take place through defined paths only, then the integrity of one layer can be assured regardless of the deviations of a higher one. The requirement that no possible action of a higher layer, whether accidental or malicious, can affect the functioning of a lower one is a strong one. In general, hardware assistance will be required to achieve this goal, although in some cases the discipline imposed by a language such as ALGOL, together with suitable checks on the validity of subscripts, may suffice. The reward is that errors can be localized very precisely.

Protection And Access Control In Operating Systems
Butler W. Lampson
In Operating Systems, Infotech State of the Art Report 14, 1972, pp 311-326

Introduction

I should like to explain what protection and access control is all about in a form that is general enough to make it possible to understand all the existing forms of protection and access control that we see in existing systems, and perhaps to see more clearly than we can now the relationships between all the different ways that now exist for providing protection in a computer system. Just in case you are not aware of how many different ways there are, let me suggest a few that you might find in a typical system:

1. a key switch on a console
2. a monitor and user mode mechanism in the hardware of the machine that decides whether a given program can execute input/output instructions
3. a memory protection scheme that attaches 4-bit tags to each block of memory and decides whether or not particular programs can address that block
4. facilities in the file system for controlling access; such a system allows a number of entities, normally referred to as users, to exist in the system and controls the way in which data belonging to one user can be accessed by other users.

All these mechanisms are mostly independent, and superficially they look almost completely different. However, it turns out that there is a fair amount of unity at the basic conceptual level in the way in which protection is done, although there is enormous efflorescence in implementations.

I should like to make it clear precisely what I am not talking about in discussing protection of computer systems. I am concerned only about what goes on inside the system; the question of how the system identifies someone who proposes himself as a user I do not intend to consider, although it is of course an important problem.
There is a basic idea underlying the whole apparatus of protection and access control; it is the idea of a program being executed in a certain context that determines what the program is authorized to do. For example, if you have a program running on System/360 hardware, there is a bit in the physical machine that determines whether the machine is in supervisor state or in problem state.

On the Transfer of Control between Contexts
B. W. Lampson, J. G. Mitchell and E. H. Satterthwaite
Xerox Research Center
3180 Porter Drive
Palo Alto, CA 94304, USA
Lecture Notes in Computer Science 19, Springer, 1974, pp 181-203

Abstract

We describe a single primitive mechanism for transferring control from one module to another, and show how this mechanism, together with suitable facilities for record handling and storage allocation, can be used to construct a variety of higher-level transfer disciplines. Procedure and function calls, coroutine linkages, non-local gotos, and signals can all be specified and implemented in a compatible way. The conventions for storage allocation and name binding associated with control transfers are also under the programmer’s control. Two new control disciplines are defined: a generalization of coroutines, and a facility for handling errors and unusual conditions which arise during program execution. Examples are drawn from the Modular Programming Language, in which all of the facilities described are being implemented.

Report on the Programming Language Euclid
J. Horning, B. Lampson, R. London, J. Mitchell, and G. Popek
SIGPLAN Notices, February 1977. Revised as Technical Report CSL-81-12, Xerox Palo Alto Research Center.

Introduction

“There is no royal road to geometry.”
—Proclus, Comment on Euclid, Prol. G. 20.

The programming language Euclid has been designed to facilitate the construction of verifiable system programs.
By a verifiable program we mean one written in such a way that existing formal techniques for proving certain properties of programs can be readily applied; the proofs might be either manual or automatic, and we believe that similar considerations apply in both cases. By system we mean that the programs of interest are part of the basic software of the machine on which they run; such a program might be an operating system kernel, the core of a data base management system, or a compiler.

An important consequence of this goal is that Euclid is not intended to be a general-purpose programming language. Furthermore, its design does not specifically address the problems of constructing very large programs; we believe most of the programs written in Euclid will be modest in size. While there is some experience suggesting that verifiability supports other desired goals, we assume the user is willing, if necessary, to obtain verifiability by giving up some run-time efficiency, and by tolerating some inconvenience in the writing of his programs.

We see Euclid as a (perhaps somewhat eccentric) advance along one of the main lines of current programming language development: transferring more and more of the work of producing a correct program, and verifying its correctness, from the programmer and the verifier (human or mechanical) to the language and its compiler. The main changes relative to Pascal take the form of restrictions, which allow stronger statements about the properties of the program to be made from the rather superficial, but quite reliable, analysis which the compiler can perform. In some cases new constructions have been introduced, whose meaning can be explained by expanding them in terms of existing Pascal constructions. The reason for this is that the expansion would be forbidden by the newly introduced restrictions, whereas the new construction is itself sufficiently restrictive in a different way.
The main differences between Euclid and Pascal are summarized in the following list:

Visibility: Euclid provides explicit control over the visibility of identifiers, by requiring the program to list all the identifiers imported into a routine or module, or exported from a module.

Variables: The language guarantees that two identifiers in the same scope can never refer to the same or overlapping variables. There is a uniform mechanism for binding an identifier to a variable in a procedure call, on block entry (replacing the Pascal with statement), or in a variant record discrimination. The variables referenced or modified by a routine (i.e., procedure or function) must be accessible in every scope from which the routine is called.

Pointers: This idea is extended to pointers, by allowing dynamic variables to be assigned to collections, and guaranteeing that two pointers into different collections can never refer to the same variable.

Storage allocation: The program can control the allocation of storage for dynamic variables explicitly, in a way which confines the opportunity for making a type error very narrowly. It is also possible to declare that some dynamic variables should be reference-counted, and automatically deallocated when no pointers to them remain.

Types: Types have been generalized to allow formal parameters, so that arrays can have bounds which are fixed only when they are created, and variant records can be handled in a type-safe manner. Records are generalized to include constant components.

Modules: A new kind of record, called a module, can contain routine and type components, and thus provides a facility for modularization. The module can include initialization and finalization statements which are executed whenever a module variable is created or destroyed.

Constants: Euclid defines a constant to be a literal, or an identifier whose value is fixed throughout the scope in which it is declared.
For statement: A generator can be declared as a module type, and used in a for statement to enumerate a sequence of values.

Loopholes: features of the underlying machine can be accessed, and the typechecking can be overridden, in a controlled way. Except for the explicit loopholes, Euclid is designed to be type-safe.

Assertions: the syntax allows assertions to be supplied at convenient points.

Deletions: A number of Pascal features have been omitted from Euclid: input-output, reals, multi-dimensional arrays, labels and gotos, and functions and procedures as parameters.

The only new features in the list which can make it hard to convert a Euclid program into a legal Pascal program by straightforward rewriting are parameterized types, storage allocation, finalization, and some of the loopholes.

There are a number of other considerations which influenced the design of Euclid:

It is based on current knowledge of programming languages and compilers; concepts which are not fairly well understood, and features whose implementation is unclear, have been omitted.

Although program portability is not a major goal of the language design, it is necessary to have compilers which generate code for a number of different machines, including mini-computers.

The object code must be reasonably efficient, and the language must not require a highly optimizing compiler to achieve an acceptable level of efficiency in the object program.

Since the total size of a program is modest, separate compilation is not required (although it is certainly not ruled out).

The required run time support must be minimal, since it presents a serious problem for verification.

Notes On The Design Of Euclid
G. J. Popek, UCLA Computer Science Department, Los Angeles, California 90024
J. J. Horning, Computer Systems Research Group, University of Toronto, Toronto, Canada
B. W. Lampson, J. G. Mitchell, Xerox Palo Alto Research, Palo Alto, California 94304
R. L. London, USC Information Sciences Institute, Marina del Rey, California 90291
ACM Sigplan Notices 12, 3 (Mar. 1977), pp 11-18

Abstract

Euclid is a language for writing system programs that are to be verified. We believe that verification and reliability are closely related, because if it is hard to reason about programs using a language feature, it will be difficult to write programs that use it properly. This paper discusses a number of issues in the design of Euclid, including such topics as the scope of names, aliasing, modules, type-checking, and the confinement of machine dependencies; it gives some of the reasons for our expectation that programming in Euclid will be more reliable (and will produce more reliable programs) than programming in Pascal, on which Euclid is based.

Key Words and Phrases: Euclid, verification, systems programming language, reliability, Pascal, aliasing, data encapsulation, parameterized types, visibility of names, machine dependencies, legality assertions, storage allocation.

CR Categories: 4.12, 4.2, 4.34, 5.24

Introduction

Euclid is a programming language evolved from Pascal by a series of changes intended to make it more suitable for verification and for system programming. We expect many of these changes to improve the reliability of the programming process, firstly by enlarging the class of errors that can be detected by the compiler, and secondly by making explicit in the program text more of the information needed for understanding and maintenance. In addition, we expect that effort expended in program verification will directly improve program reliability. Although Euclid is intended for a rather restricted class of applications, much of what we have done could surely be extended to languages designed for more general purposes. Like all designs, Euclid represents a compromise among conflicting goals, reflecting the skills, knowledge, and tastes (i.e., prejudices) of its designers.
Euclid was conceived as an attempt to integrate into a practical language the results of several recent developments in programming methodology and program verification. As Hoare has pointed out, it is considerably more difficult to design a good language than it is to select one’s favorite set of good language features or to propose new ones. A language is more than the sum of its parts, and the interactions among its features are often more important than any feature considered separately. Thus this paper does not present many new language features. Rather, it discusses several aspects of our design that, taken together, should improve the reliability of programming in Euclid.

We believe that the goals of reliability, understandability, and verifiability are mutually reinforcing. We never consciously sacrificed one of these in Euclid to achieve another. We had a tangible measure only for the third (namely, our ability to write reasonable proof rules), so we frequently used it as the touchstone for all three. Much of this paper is devoted to decisions motivated by the problems of verification.

Another important goal of Euclid, the construction of acceptably efficient system programs, did not seem attainable without some sacrifices in the preceding three goals. Much of the language design effort was expended in finding ways to allow the precise control of machine resources that seemed to be necessary, while narrowly confining the attendant losses of reliability, understandability, and verifiability. The focus here is on features that contribute to reliability.

Goals, History, and Relation To Pascal

The chairman originally charged the committee as follows: “Let me outline our charter as I understand it.
We are being asked to make minimal changes and extensions to Pascal in order to make the resulting language one that would be suitable for systems programming while retaining those characteristics of the language that are attractive for good programming style and verification. Because it is highly desirable that the language and appropriate compilers be available in a short time, the language definition effort is to be quite limited: only a month or two in duration. Therefore, we should not attempt to design a significantly different language, for that, while highly desirable, is a research project in itself. Instead, we should aim at a ‘good’ result, rather than the superb.” We defer to the Conclusions a discussion of our current feelings about these goals and how well we have met them.

The design of Euclid took place at four two-day meetings of the authors in 1976, supplemented by a great deal of individual effort and uncounted Arpanet messages. Almost all of the basic changes to Pascal were agreed upon during the first meeting; most of the effort since then has been devoted to smoothing out unanticipated interactions among the changes and to developing a suitable exposition of the language. Three versions of the Euclid Report have been widely circulated for comment and criticism; the most recent appeared in the February 1977 Sigplan Notices. Proof rules are currently being prepared for publication.

A Terminal-Oriented Communication System
Paul G. Heckel
Interactive Systems Consultants
Butler W. Lampson
Xerox Palo Alto Research Center
Communications of the ACM, July 1977 Volume 20, Number 7

Abstract

This paper describes a system for full-duplex communication between a time-shared computer and its terminals. The system consists of a communications computer directly connected to the time-shared system, a number of small remote computers to which the terminals are attached, and connecting medium speed telephone lines.
It can service a large number of terminals of various types. The overall system design is presented along with the algorithms used to solve three specific problems: local echoing, error detection and correction on the telephone lines, and multiplexing of character output.

Key Words and Phrases: terminal system, error correction, multiplexing, local echoing, communication system, network

CR Categories: 3.81, 4.31

Introduction

A number of computer communication systems have been developed in the last few years. The best known such system is the Arpanet, which provides a 50-kilobit network interconnecting more than 40 computers. By contrast, the system described in this paper connects computers with terminals, rather than with each other. Such terminal networks are of interest because there are many requirements for connecting a number of geographically distributed terminals with a centrally located computer, and because terminal networks can use medium speed (2400-9600 baud) telephone lines which have reasonable cost and wide availability. Another system tackling essentially the same problem is Tymnet, which was developed at about the same time as the system described here. Of course, a general-purpose network like the Arpanet can (and does) carry terminal traffic.

Our system was designed to connect (presumably remote) low and medium speed devices, such as teletypes and line printers, to the Berkeley Computer Corporation’s BCC-500 computer system. The basic service provided is a full-duplex channel between a user’s terminal and his program running on the BCC-500 CPU. The design objectives were to make the system efficient in the use of bandwidth and resistant to telephone line errors, while keeping it flexible so that a wide variety of devices could be handled. The paper provides an overall description of what the BCC terminal system does and how it does it.
In addition, it presents in detail the solutions to three specific problems: local echoing (see Section 2); error detection and correction on the multiplexed telephone line (see Section 4.1); and output multiplexing (see Section 4.2). The structure of the system is shown in Figure 1. The hardware components, named along the heavy black line, are:

- A CPU on which user programs execute;
- A central dedicated processor called the CHIO, which handles all character-oriented input-output to the CPU;
- A number of small remote computers called concentrators to which terminals are connected, either directly or via standard low-speed modems and telephone lines; and
- Leased voice-grade telephone lines with medium-speed (e.g. 4800 baud) modems which connect the concentrators to the CHIO.

The system is organized as a collection of parallel processes which communicate by sending messages to each other. In some cases the processes run in the same processor and the parallelism is provided by a scheduler or coroutine linkage, but it is convenient to ignore such details in describing the logical structure.

Proof Rules for the Programming Language Euclid R.L. London, J.V. Guttag, J.J. Horning, B.W. Lampson, J.G. Mitchell, and G.J. Popek Acta Informatica 10, 1-26 (1978)

Summary In the spirit of the previous axiomatization of the programming language Pascal, this paper describes Hoare-style proof rules for Euclid, a programming language intended for the expression of system programs which are to be verified. All constructs of Euclid are covered except for storage allocation and machine dependencies.

“The symbolic form of the work has been forced upon us by necessity: without its help we should have been unable to perform the requisite reasoning.” (A.N. Whitehead and B. Russell, Principia Mathematica, p. vii)

“Rules are rules.” (Anonymous)

Introduction The programming language Euclid has been designed to facilitate the construction of verifiable system programs.
Its defining report closely follows the defining report of the Pascal language (see also). The present document, giving Hoare-style proof rules applicable only to legal Euclid programs, owes a great deal to (and is in part identical to) the axiomatic definition of Pascal. Major differences include the treatment of procedures and functions, declarations, modules, collections, escape statements, binding, parameterized types, and the examples and detailed explanations in Appendices 1-3. Other semantic definition methods are certainly applicable to Euclid. We have used proof rules for two reasons: familiarity and the existence of the Pascal definition. One may regard the proof rules as a definition of Euclid in the same sense as the Pascal axiomatization defines Pascal. By stating what can be proved about Euclid programs, the rules define the meaning of most syntactically and semantically legal Euclid programs, but they do not give the information required to determine whether or not a program is legal. This information may be found in the language report. Neither do the proof rules define the meaning of illegal Euclid programs containing, for example, division by zero or an invalid array index. Finally, explicit proof rules are not provided for those portions of Euclid defined in the report by translation into other legal Euclid constructs. This includes pervasive, implicit imports through thus, and some uses of return and exit. All such transformations must be applied before the proof rules are applicable. As is the case with Pascal, the Euclid axiomatization should be read in conjunction with the language report, and is an almost total axiomatization of a realistic and useful system programming language. While the primary goal of the Euclid effort was to design a practical programming language (not to provide a vehicle for demonstrating proof rules), proof rule considerations did have significant influence on Euclid. 
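For readers unfamiliar with the notation, the following shows the general shape of a Hoare-style proof rule. These are the textbook assignment axiom and while rule of Hoare logic, given only as an illustration; they are not quoted from the Euclid proof rules, whose exact formulation should be taken from the paper itself. Here $P$ is an assertion, $B$ a Boolean expression, $S$ a statement, and $P[e/x]$ denotes $P$ with $e$ substituted for $x$.

```latex
% Assignment axiom: to establish P after x := e, P must hold with e in place of x beforehand.
\{\, P[e/x] \,\}\;\; x := e \;\;\{\, P \,\}

% While rule: if the body S preserves the invariant P whenever B holds,
% then the loop preserves P and terminates (if at all) with B false.
\frac{\{\, P \land B \,\}\;\; S \;\;\{\, P \,\}}
     {\{\, P \,\}\;\; \mathbf{while}\ B\ \mathbf{do}\ S \;\;\{\, P \land \lnot B \,\}}
```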
All constructs of the language are covered except for storage allocation (zones and collections that are not reference-counted) and machine dependencies. In a few instances rules are applicable only to a subset of Euclid; the restrictions are noted with those rules. Crash recovery in a distributed data storage system Butler W. Lampson and Howard E. Sturgis Xerox Palo Alto Research Center 3333 Coyote Hill Road / Palo Alto / California 94 June 1, 1979 DRAFT - Not for distribution - DRAFT Abstract An algorithm is described which guarantees reliable storage of data in a distributed system, even when different portions of the data base, stored on separate machines, are updated as part of a single transaction. The algorithm is implemented by a hierarchy of rather simple abstractions, and it works properly regardless of crashes of the client or servers. Some care is taken to state precisely the assumptions about the physical components of the system (storage, processors and communication). Key Words And Phrases atomic, communication, consistency, data base, distributed computing, fault-tolerance, operating system, recovery, reliability, transaction, update. Introduction We consider the problem of crash recovery in a data storage system which is constructed from a number of independent computers. The portion of the system which is running on some individual computer may crash, and then be restarted by some crash recovery procedure. This may result in the loss of some information which was present just before the crash. The loss of this information may, in turn, lead to an inconsistent state for the information permanently stored in the system. For example, a client program may use this data storage system to store balances in an accounting system. Suppose that there are two accounts, called A and B, which contain $10 and $15 respectively. Further, suppose the client wishes to move $5 from A to B. 
The client might proceed as follows: read account A (obtaining $10) read account B (obtaining $15) write $5 to account A write $20 to account B Now consider a possible effect of a crash of the system program running on the machine to which these commands are addressed. The crash could occur after one of the write commands has been carried out, but before the other has been initiated. Moreover, recovery from the crash could result in never executing the other write command. In this case, account A is left containing $5 and account B with $15, an unintended result. The contents of the two accounts are inconsistent. There are other ways in which this problem can arise: accounts A and B are stored on two different machines and one of these machines crashes; or, the client itself crashes after issuing one write command and before issuing the other. In this paper we present an algorithm for maintaining the consistency of a file system in the presence of these possible errors. We begin, in section 2, by describing the kind of system to which the algorithm is intended to apply. In section 3 we introduce the concept of an atomic transaction. We argue that if a system provides atomic transactions, and the client program uses them correctly, then the stored data will remain consistent. The remainder of the paper is devoted to describing an algorithm for obtaining atomic transactions. Any correctness argument for this (or any other) algorithm necessarily depends on a formal model of the physical components of the system. Such models are quite simple for correctly functioning devices. Since we are interested in recovering from malfunctions, however, our models must be more complex. Section 4 gives models for storage, processors and communication, and discusses the meaning of a formal model for a physical device. Starting from this base, we build up the lattice of abstractions shown in figure 1. 
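The two-account example above can be made concrete with a minimal sketch. It assumes an in-memory dictionary in place of the storage system, and the names `Crash` and `transfer` and the `crash_after_first_write` flag are hypothetical, introduced only for illustration.

```python
class Crash(Exception):
    """Hypothetical stand-in for a crash of the storage server."""


def transfer(accounts, src, dst, amount, crash_after_first_write=False):
    # Read both balances, then issue two independent writes, as in the text.
    a = accounts[src]
    b = accounts[dst]
    accounts[src] = a - amount          # first write is carried out
    if crash_after_first_write:
        raise Crash("crashed before the second write was initiated")
    accounts[dst] = b + amount          # second write


accounts = {"A": 10, "B": 15}
try:
    transfer(accounts, "A", "B", 5, crash_after_first_write=True)
except Crash:
    pass  # recovery never executes the lost write

print(accounts)  # {'A': 5, 'B': 15}
```

The total has dropped from $25 to $20, which is the inconsistency an atomic transaction is meant to rule out.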
The second level of this lattice constructs better behaved devices from the physical ones, by eliminating storage failures and eliminating communication entirely (section 5). The third level consists of a more powerful primitive which works properly in spite of crashes (section 6). Finally, the highest level constructs atomic transactions (section 7). Parallel to the lattice of abstractions is a sequence of methods for constructing compound actions with various desirable properties. A final section discusses some efficiency and implementation considerations. Throughout we give informal arguments for the correctness of the various algorithms. Atomic transactions Butler W. Lampson and Howard Sturgis Distributed Systems—Architecture and Implementation, Lecture Notes in Computer Science 105, Springer, 1981, Chapter 11 Introduction This chapter deals with methods for performing atomic actions on a collection of computers, even in the face of such adverse circumstances as concurrent access to the data involved in the actions, and crashes of some of the computers involved. For the sake of definiteness, and because it captures the essence of the more general problem, we consider the problem of crash recovery in a data storage system which is constructed from a number of independent computers. The portion of the system which is running on some individual computer may crash, and then be restarted by some crash recovery procedure. This may result in the loss of some information which was present just before the crash. The loss of this information may, in turn, lead to an inconsistent state for the information permanently stored in the system. For example, a client program may use this data storage system to store balances in an accounting system. Suppose that there are two accounts, called A and B, which contain $10 and $15 respectively. Further, suppose the client wishes to move $5 from A to B. 
The client might proceed as follows: read account A (obtaining $10); read account B (obtaining $15); write $5 to account A; write $20 to account B. Now consider a possible effect of a crash of the system program running on the machine to which these commands are addressed. The crash could occur after one of the write commands has been carried out, but before the other has been initiated. Moreover, recovery from the crash could result in never executing the other write command. In this case, account A is left containing $5 and account B with $15, an unintended result. The contents of the two accounts are inconsistent. There are other ways in which this problem can arise: accounts A and B are stored on two different machines and one of these machines crashes; or, the client itself crashes after issuing one write command and before issuing the other. In this chapter we present an algorithm for maintaining the consistency of a file system in the presence of these possible errors. We begin, in section 11.2, by describing the kind of system to which the algorithm is intended to apply. In section 11.3 we introduce the concept of an atomic transaction. We argue that if a system provides atomic transactions, and the client program uses them correctly, then the stored data will remain consistent. The remainder of the chapter is devoted to describing an algorithm for obtaining atomic transactions. Any correctness argument for this (or any other) algorithm necessarily depends on a formal model of the physical components of the system. Such models are quite simple for correctly functioning devices. Since we are interested in recovering from malfunctions, however, our models must be more complex. Section 11.4 gives models for storage, processors and communication, and discusses the meaning of a formal model for a physical device. Starting from this base, we build up the lattice of abstractions shown in figure 11-1.
The second level of this lattice constructs better behaved devices from the physical ones, by eliminating storage failures and eliminating communication entirely (section 11.5). The third level consists of a more powerful primitive which works properly in spite of crashes (section 11.6). Finally, the highest level constructs atomic transactions (section 11.7). Throughout we give informal arguments for the correctness of the various algorithms.

Figure 11-1: The lattice of abstractions for transactions

Remote Procedure Calls Butler W. Lampson Distributed Systems-Architecture and Implementation, Lecture Notes in Computer Science 105, Springer, 1981, 357-370

Parameter and data representation In this section we consider in a broader context the problems of parameter and data representation in distributed systems. Our treatment will be somewhat abstract and, unfortunately, rather superficial. This is really a programming language design problem, although in practice it is not usually addressed in that context. The reason is that a single bit has the same representation everywhere (except at low levels of abstraction which do not concern us here). An integer or a floating point number, to say nothing of a relational data base, may be represented by very different sets of bits on different machines, but it only makes sense to talk about the representation of data when its type is known. Thus our problem is to define a common notion of data types and suitable ways of transforming from one representation of a type to another. This kind of problem is customarily addressed in the context of language design, and we shall find it convenient to do so here. In fact, we shall confine ourselves here to the problem of a single procedure call, possibly directed to a remote site. The type of the procedure is expressed by a declaration: P: procedure(a1: T1, a2: T2, ...) returns (r1: U1, r2: U2, ...). We shall sometimes write (a1: T1, a2: T2, ...) → (r1: U1, r2: U2, ...) for short.
Of course, sending a message and receiving a reply can be described in the same way, as far as the representation of the data is concerned. The control flow aspects of orderly communication with a remote site are discussed in Chapter 7 and Section 14.8; here we are interested only in data representation. The function of the declaration is to make explicit what the argument and result types must be, so that the caller and callee can agree on this point, and so that there is enough information for an automatic mechanism to have a chance of making any necessary conversions. For this reason we insist that remote procedures must have complete declarations. We assert without proof that any data representation problem can be cast in this form without doing violence to its essence. Remote procedure calls One of the major problems in constructing distributed programs is to abstract out the complications which arise from the transmission errors, concurrency and partial failures inherent in a distributed system. If these are allowed to appear in their full glory at the applications level, life becomes so complicated that there is little chance of getting anything right. A powerful tool for this purpose is the idea of a remote procedure call. If it is possible to call procedures on remote machines with the same semantics as ordinary local calls, the application can be written without concern for most of the complications. This goal cannot be fully achieved without the transaction mechanism of Chapter 11, but we show in this section how to obtain the same semantics, except that the action of the remote call may occur more than once. Our treatment uses the definitions of Sections 11.3 and 11.4 for processor and communication failures and stable storage.
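The caveat that the action of a remote call may occur more than once can be illustrated with a small simulation. This is only a sketch under stated assumptions: the classes `Server` and `LossyChannel`, the function `remote_call`, and the convention that a lost reply is seen by the caller as `None` are all hypothetical and are not taken from the chapter.

```python
class Server:
    """Hypothetical callee; counts how many times the work is performed."""

    def __init__(self):
        self.executions = 0

    def handle(self, x):
        self.executions += 1
        return x + 1


class LossyChannel:
    """Delivers every call message but drops the first `drop_replies` replies."""

    def __init__(self, server, drop_replies):
        self.server = server
        self.drop_replies = drop_replies

    def call_once(self, x):
        result = self.server.handle(x)   # call message arrives; work is done
        if self.drop_replies > 0:
            self.drop_replies -= 1
            return None                  # reply lost; the caller will time out
        return result


def remote_call(channel, x, max_tries=5):
    # The caller retries after a (simulated) timeout until a reply arrives.
    for _ in range(max_tries):
        reply = channel.call_once(x)
        if reply is not None:
            return reply
    raise TimeoutError("no reply after retries")


server = Server()
result = remote_call(LossyChannel(server, drop_replies=1), 41)
print(result, server.executions)  # 42 2
```

The caller sees a single successful call, yet the server did the work twice. A retry of this kind is harmless only when the remote action can safely be repeated.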
Following Lamport, we write a → b to indicate that the event a precedes the event b; this can happen because they are both in the same process, or because they are both references to the same datum, or as the transitive closure of these immediate relations. Recall that physical communication is modeled by a datum called the message; transmitting a message from one process to another involves two transitions in the medium, the Send and the Receive. A remote procedure call consists of several events. There is the main call, which consists of the following events: the call c; the start s, the work w; the end e; the return r. The ones on the left occur in the calling machine, the ones on the right in the machine being called. The events which detail the message transmission have been absorbed into the ones listed. These events occur in the order indicated (i.e., each precedes the next). In addition, there may be orphan events o in the receiver, consisting of any prefix of the transitions indicated. These occur because of duplicated call messages, which can arise from failures in the communication medium, or from timeouts or crashes in the caller which are followed by a retry. The orphans all follow the call and precede the rest of the main call. Difficulties arise, however, in guaranteeing the order of the orphans relative to the rest of the main call.

Practical Use of a Polymorphic Applicative Language Butler W. Lampson and Eric E. Schmidt Computer Science Laboratory Xerox Palo Alto Research Center 3333 Coyote Hill Road Palo Alto, CA 94304

Abstract Assembling a large system from its component elements is not a simple task. An adequate notation for specifying this task must reflect the system structure, accommodate many configurations of the system and many versions as it develops, and be a suitable input to the many tools that support software development.
The language described here applies the ideas of λ-abstraction, hierarchical naming and type-checking to this problem. Some preliminary experience with its use is also given. Introduction Assembling a large system from its component elements is not a simple task. The subject of this paper is a language for describing how to do this assembly. We begin with a necessarily terse summary of the issues, in order to establish a context for the work described later. A large system usually exhibits a complex structure. It has many configurations, different but related. Each configuration is composed of many elements. The elements have complex interconnections. They form a hierarchy: each may itself be a system. The system develops during a long period of design and implementation, and an even longer period of maintenance. Each element is changed many times. Hence there are many versions of each element. Certain sets of compatible versions form releases. Many tools can be useful in this development. Translators build an executable program. Accelerators speed up rebuilding after a change. Debuggers display the state of the program. Databases collect and present useful information. The System Modeling language (SML for short) is a notation for describing how to compose a set of related system configurations from their elements. A description in SML is called a model of the system. The development of a system can be described by a collection of models, one for each stage in the development; certain models define releases. A model contains all the information needed by a development tool; indeed, a tool can be regarded as a useful operator on models, e.g., build system, display state or structure, print source files, cross-reference, etc. In this paper we present the ideas which underlie SML, define its syntax and semantics, discuss a number of pragmatic issues, and give several examples of its use.
We neglect development (changes, versions, releases) and tools; these are the subjects of a companion paper. More information about system modeling can be found in the second author’s PhD thesis, along with a discussion of related work.

An Instruction Fetch Unit for a High-Performance Personal Computer Butler W. Lampson, Gene McDaniel, and Severo M. Ornstein IEEE Transactions on Computers, Vol. C-33, No. 8, August 1984

Abstract The instruction fetch unit (IFU) of the Dorado personal computer speeds up the emulation of instructions by prefetching, decoding, and preparing later instructions in parallel with the execution of earlier ones. It dispatches the machine’s microcoded processor to the proper starting address for each instruction, and passes the instruction’s fields to the processor on demand. A writeable decoding memory allows the IFU to be specialized to a particular instruction set, as long as the instructions are an integral number of bytes long. There are implementations of specialized instruction sets for the Mesa, Lisp, and Smalltalk languages. The IFU is implemented with a six-stage pipeline, and can decode an instruction every 60 ns. Under favorable conditions the Dorado can execute instructions at this peak rate (16 MIPS). Index Terms: Cache, emulation, instruction fetch, microcode, pipeline.

Introduction This paper describes the instruction fetch unit (IFU) for the Dorado, a powerful personal computer designed to meet the needs of computing researchers at the Xerox Palo Alto Research Center. These people work in many areas of computer science: programming environments, automated office systems, electronic filing and communication, page composition and computer graphics, VLSI design aids, distributed computing, etc. There is heavy emphasis on building working prototypes. The Dorado preserves the important properties of an earlier personal computer, the Alto [13], while removing the space and speed bottlenecks imposed by that machine’s 1973 design.
The history, design goals, and general characteristics of the Dorado are discussed in a companion paper, which also describes its microprogrammed processor. A second paper describes the memory system. The Dorado is built out of ECL 10K circuits. It has 16 bit data paths, 28 bit virtual addresses, 4K-16K words of high-speed cache memory, writeable microcode, and an I/O bandwidth of 530 Mbits/s. Fig. 1 shows a block diagram of the machine. The microcoded processor can execute a microinstruction every 60 ns. An instruction of some high-level language is performed by executing a suitable succession of these microinstructions; this process is called emulation. The purpose of the IFU is to speed up emulation by prefetching, decoding, and preparing later instructions in parallel with the execution of earlier ones. It dispatches the machine’s microcoded processor to the proper starting address for each instruction, supplies the processor with an assortment of other useful information derived from the instruction, and passes its various fields to the processor on demand. A writeable decoding memory allows the IFU to be specialized to a particular instruction set; there is room for four of these, each with 256 instructions. There are implementations of specialized instruction sets for the Mesa, Lisp, and Smalltalk languages, as well as an Alto emulator. The IFU can decode an instruction every 60 ns, and under favorable conditions the Dorado can execute instructions at this peak rate (16 MIPS). Following this introduction, we discuss the problem of instruction execution in general terms and outline the space of possible solutions (Section II). We then describe the architecture of the Dorado’s IFU (Section III) and its interactions with the processor which actually executes the instructions (Section IV); the reader who likes to see concrete details might wish to read these sections in parallel with Section II.
The next section deals with the internals of the IFU, describing how to program it and the details of its pipelined implementation (Section V). A final section tells how big and how fast it is, and gives some information about the effectiveness of its various mechanisms for improving performance (Section VI). A Kernel Language for Modules and Abstract Data Types R. Burstall and B. Lampson University of Edinburgh and Xerox Palo Alto Research Center Abstract: A small set of constructs can simulate a wide variety of apparently distinct features in modern programming languages. Using a kernel language called Pebble based on the typed lambda calculus with bindings, declarations, and types as first-class values, we show how to build modules, interfaces and implementations, abstract data types, generic types, recursive types, and unions. Pebble has a concise operational semantics given by inference rules. Specifying Distributed Systems Butler W. Lampson Cambridge Research Laboratory Digital Equipment Corporation One Kendall Square Cambridge, MA 02139 October 1988 In Constructive Methods in Computer Science, ed. M. Broy, NATO ASI Series F: Computer and Systems Sciences 55, Springer, 1989, pp 367-396 These notes describe a method for specifying concurrent and distributed systems, and illustrate it with a number of examples, mostly of storage systems. The specification method is due to Lamport (1983, 1988), and the notation is an extension due to Nelson (1987) of Dijkstra’s (1976) guarded commands. We begin by defining states and actions. Then we present the guarded command notation for composing actions, give an example, and define its semantics in several ways. Next we explain what we mean by a specification, and what it means for an implementation to satisfy a specification. A simple example illustrates these ideas. 
The rest of the notes apply these ideas to specifications and implementations for a number of interesting concurrent systems: Ordinary memory, with two implementations using caches; Write buffered memory, which has a considerably weaker specification chosen to facilitate concurrent implementations; Transactional memory, which has a weaker specification of a different kind chosen to facilitate fault-tolerant implementations; Distributed memory, which has a yet weaker specification than buffered memory chosen to facilitate highly available implementations. We give a brief account of how to use this memory with a tree-structured address space in a highly available naming service. Thread synchronization primitives. On-line Data Compression in a Log-structured File System Michael Burrows Charles Jerian Butler Lampson Timothy Mann DEC Systems Research Center Abstract We have incorporated on-line data compression into the low levels of a log-structured file system (Rosenblum’s Sprite LFS). Each block of data or meta-data is compressed as it is written to the disk and decompressed as it is read. The log-structuring overcomes the problems of allocation and fragmentation for variable-sized blocks. We observe compression factors ranging from 1.6 to 2.2, using algorithms running from 1.7 to 0.4 MBytes per second in software on a DECstation 5000/200. System performance is degraded by a few percent for normal activities (such as compiling or editing), and as much as a factor of 1.6 for file system intensive operations (such as copying multimegabyte files). Hardware compression devices mesh well with this design. Chips are already available that operate at speeds exceeding disk transfer rates, which indicates that hardware compression would not only remove the performance degradation we observed, but might well increase the effective disk transfer rate beyond that obtainable from a system without compression. 
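The idea in the abstract above, compressing each block as it is appended to a log so that variable-sized results need no in-place allocation, can be sketched as follows. This is a minimal in-memory illustration and not the Sprite LFS implementation: `zlib` stands in for whatever compression algorithm is used, and the class `CompressedLog`, its methods, and the dictionary index are hypothetical names chosen for the example.

```python
import zlib


class CompressedLog:
    """Append-only log of compressed blocks plus an index of their locations."""

    def __init__(self):
        self.log = bytearray()   # stands in for the sequentially written disk log
        self.index = {}          # block id -> (offset, compressed length)

    def write_block(self, block_id, data):
        compressed = zlib.compress(data)
        offset = len(self.log)
        self.log += compressed                       # always append, never overwrite
        self.index[block_id] = (offset, len(compressed))

    def read_block(self, block_id):
        offset, length = self.index[block_id]
        return zlib.decompress(bytes(self.log[offset:offset + length]))


log = CompressedLog()
log.write_block(7, b"a" * 4096)
log.write_block(7, bytes(range(256)) * 16)           # new version compresses differently
assert log.read_block(7) == bytes(range(256)) * 16
```

Because the second version of block 7 is appended and the index is simply repointed, it does not matter that it compresses to a different size from the first. The superseded copy remains in the log as garbage; reclaiming that space is outside this sketch.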
1 Introduction Building a file system that compresses the data it stores on disk is clearly an attractive idea. First, more data would fit on the disk. Also, if a fast hardware data compressor could be put into the data path to disk, it would increase the effective disk transfer rate by the compression factor, thus speeding up the system. Yet on-line data compression is seldom used in conventional file systems, for two reasons. First, compression algorithms do not provide uniform compression on all data. When a file block is overwritten, the new data may be compressed by a different amount from the data it supersedes. Therefore the file system cannot simply overwrite the original blocks—if the new data is larger than the old, it must be written to a place where there is more room; if it is smaller, the file system must either find some use for the freed space or see it go to waste. In either case, disk space tends to become fragmented, which reduces the effective compression. Second, the best compression algorithms are adaptive—they use patterns discovered in one part of a block to do a better job of compressing information in other parts. These algorithms work better on large blocks of data than on small blocks. The details vary for different compression algorithms and different data, but the overall trend is the same— larger blocks make for better compression. However, it is difficult to arrange for sufficiently large blocks of data to be compressed all at once. Most file systems use block sizes that are too small for good compression, and increasing the block size would waste disk space in fragmentation. Compressing multiple blocks at a time seems difficult to do efficiently, since adjacent blocks are often not written at the same time. Compressing whole files would also be less than ideal, since in many systems most files are only a few kilobytes. In a log-structured file system, the main data structure on disk is a sequentially written log. 
All new data, including modifications to existing files, is written to the end of the log. This technique has been demonstrated by Rosenblum and Ousterhout in a system called Sprite LFS. The main goal of LFS was to provide improved performance by eliminating disk seeks on writes. In addition, LFS is ideally suited for adding compression: we simply compress the log as it is written to disk. No blocks are overwritten, so we do not have to make special arrangements when new data does not compress as well as existing data. Because blocks are written sequentially, the compressed blocks can be packed tightly together on disk, eliminating fragmentation. Better still, we can choose to compress blocks of any size we like, and if many related small files are created at the same time, they will be compressed together, so any similarities between the files will lead to better compression. We do, however, need additional bookkeeping to keep track of where compressed blocks fall on the disk.

Evolving the High Performance Computing and Communications Initiative to Support the Nation’s Information Infrastructure Computer Science and Telecommunications Board 1995 (The Brooks-Sutherland report)

Executive Summary Information technology drives many of today’s innovations and offers still greater potential for further innovation in the next decade. It is also the basis for a domestic industry of about $500 billion, an industry that is critical to our nation’s international competitiveness. Our domestic information technology industry is thriving now, based to a large extent on an extraordinary 50-year track record of public research funded by the federal government, creating the ideas and people that have let industry flourish. This record shows that for a dozen major innovations, 10 to 15 years have passed between research and commercial application. Despite many efforts, commercialization has seldom been achieved more quickly.
Publicly funded research in information technology will continue to create important new technologies and industries, some of them unimagined today, and the process will continue to take 10 to 15 years. Without such research there will still be innovation, but the quantity and range of new ideas for U.S. industry to draw from will be greatly diminished. Public research, which creates new opportunities for private industry to use, should not be confused with industrial policy, which chooses firms or industries to support. Industry, with its focus mostly on the near term, cannot take the place of government in supporting the research that will lead to the next decade’s advances. The High Performance Computing and Communications Initiative (HPCCI) is the main vehicle for public research in information technology today and the subject of this report. By the early 1980s, several federal agencies had developed independent programs to advance many of the objectives of what was to become the HPCCI. The program received added impetus and more formal status when Congress passed the High Performance Computing Act of 1991 (Public Law 102-194) authorizing a 5-year program in high-performance computing and communications. The initiative began with a focus on high-speed parallel computing and networking and is now evolving to meet the needs of the nation for widespread use on a large scale as well as for high speed in computation and communications. To advance the nation’s information infrastructure there is much that needs to be discovered or invented, because a useful “information highway” is much more than wires to every house. As a prelude to examining the current status of the HPCCI, this report first describes the rationale for the initiative as an engine of U.S. leadership in information technology and outlines the contributions of ongoing publicly funded research to past and current progress in developing computing and communications technologies (Chapter 1).
It then describes and evaluates the HPCCI’s goals, accomplishments, management, and planning (Chapter 2). Finally, it makes recommendations aimed at ensuring continuing U.S. leadership in information technology through wise evolution and use of the HPCCI as an important lever (Chapter 3). Appendixes A through F of the report provide additional details on and documentation for points made in the main text.

IP Lookups using Multiway and Multicolumn Search
Butler Lampson, V. Srinivasan and George Varghese
ACM Transactions on Networking, 7, 3 (June 1999), pp 324-334 (also in Infocom 98, April 1998)

Abstract
IP address lookup is becoming critical because of increasing routing table size, speed, and traffic in the Internet. Our paper shows how binary search can be adapted for best matching prefix using two entries per prefix and by doing precomputation. Next we show how to improve the performance of any best matching prefix scheme using an initial array indexed by the first X bits of the address. We then describe how to take advantage of cache line size to do a multiway search with 6-way branching. Finally, we show how to extend the binary search solution and the multiway search solution for IPv6. For a database of N prefixes with address length W, a naive binary search scheme would take O(W log N); we show how to reduce this to O(W + log N) using multiple column binary search. Measurements using a practical (Mae-East) database of 30000 entries yield a worst case lookup time of 490 nanoseconds, five times faster than the Patricia trie scheme used in BSD UNIX. Our scheme is attractive for IPv6 because of its small storage requirement (2N nodes) and speed (estimated worst case of 7 cache line reads).

Keywords: Longest Prefix Match, IP Lookup

Introduction
Statistics show that the number of hosts on the Internet is tripling approximately every two years. Traffic on the Internet is also increasing exponentially.
The traffic increase can be traced not only to an increased number of hosts, but also to new applications (e.g., the Web, video conferencing, remote imaging) which have higher bandwidth needs than traditional applications. One can only expect further increases in users, hosts, domains, and traffic. The possibility of a global Internet with multiple addresses per user (e.g., for appliances) has necessitated a transition from the older Internet routing protocol (IPv4 with 32 bit addresses) to the proposed next generation protocol (IPv6 with 128 bit addresses). The difficulty of high speed packet forwarding is compounded by increasing routing database sizes (due to the increased number of hosts) and the increased size of each address in the database (due to the transition to IPv6). Our paper deals with the problem of increasing IP packet forwarding rates in routers. In particular, we deal with a component of high speed forwarding, address lookup, that is considered to be a major bottleneck. When an Internet router gets a packet P from an input link interface, it uses the destination address in packet P to perform a lookup in a routing database. The result of the lookup provides an output link interface, to which packet P is forwarded. There is some additional bookkeeping such as updating packet headers, but the major tasks in packet forwarding are address lookup and switching packets between link interfaces. For Gigabit routing, many solutions exist which do fast switching within the router box. Despite this, the problem of doing lookups at Gigabit speeds remains. For example, Ascend’s product has hardware assistance for lookups and can take up to 3 µs for a single lookup in the worst case and 1 µs on average. However, to support, say, 5 Gbps with an average packet size of 512 bytes, lookups need to be performed in roughly 800 nsec per packet. By contrast, our scheme can be implemented in software on an ordinary PC in a worst case time of 490 nsec.
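The per-packet figure quoted above follows from dividing the packet size in bits by the link rate. The short Python sketch below reproduces that arithmetic; the function name `lookup_budget_ns` is a hypothetical helper introduced only for illustration, and the calculation assumes back-to-back packets with no framing overhead.

```python
def lookup_budget_ns(link_rate_bps: float, packet_bytes: int) -> float:
    """Time available per packet, in nanoseconds, at a given line rate.

    Assumes packets arrive back to back and ignores framing overhead.
    """
    bits_per_packet = packet_bytes * 8
    return bits_per_packet / link_rate_bps * 1e9


# 5 Gbps link, 512-byte average packet, as in the text.
budget = lookup_budget_ns(5e9, 512)
print(f"{budget:.1f} ns per packet")
```

Under these assumptions the budget comes out slightly above the rounded 800 nsec figure, and the quoted 490 nsec worst case fits within it.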
The Best Matching Prefix Problem: Address lookup can be done at high speeds if we are looking for an exact match of the packet destination address to a corresponding address in the routing database. Exact matching can be done using standard techniques such as hashing or binary search. Unfortunately, most routing protocols (including OSI and IP) use hierarchical addressing to avoid scaling problems. Rather than have each router store a database entry for all possible destination IP addresses, the router stores address prefixes that represent a group of addresses reachable through the same interface. The use of prefixes allows scaling to worldwide networks, but it also introduces a new dimension to the lookup problem: multiple prefixes may match a given address. If a packet matches multiple prefixes, it is intuitive that the packet should be forwarded according to the most specific prefix, that is, the longest matching prefix.
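To make the problem statement concrete, here is a minimal Python sketch of best matching prefix done the naive way: scan every prefix and keep the longest one that contains the address. This is not the binary search or multiway scheme the paper develops; it only illustrates what a correct lookup must return. The function name `best_matching_prefix`, the table contents, and the interface labels are all assumptions made for the example.

```python
import ipaddress


def best_matching_prefix(table, address):
    """Return the interface of the longest prefix that contains `address`.

    `table` maps prefix strings (e.g. "10.1.0.0/16") to interface labels.
    This is a linear scan, O(N) per lookup, shown only to define the result.
    """
    addr = ipaddress.ip_address(address)
    best_net = None
    best_interface = None
    for prefix, interface in table.items():
        net = ipaddress.ip_network(prefix)
        # Keep this prefix if it matches and is more specific than the best so far.
        if addr in net and (best_net is None or net.prefixlen > best_net.prefixlen):
            best_net = net
            best_interface = interface
    return best_interface


# Hypothetical routing table: three prefixes of different lengths.
routes = {
    "0.0.0.0/0": "default",
    "10.0.0.0/8": "if0",
    "10.1.0.0/16": "if1",
}

print(best_matching_prefix(routes, "10.1.2.3"))     # matches all three; /16 wins
print(best_matching_prefix(routes, "10.2.0.1"))     # matches /0 and /8; /8 wins
print(best_matching_prefix(routes, "192.168.1.1"))  # matches only the default
```

The address 10.1.2.3 matches all three prefixes, which is exactly the ambiguity described above; the most specific prefix decides the outgoing interface.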