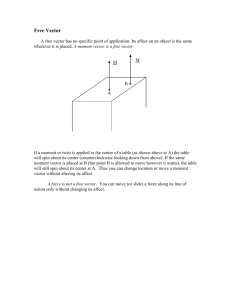

The Emotion Engine and Vector Unit Design
Kim L. Vu, 2002

The Sony Emotion Engine’s architecture is structured around the ability to achieve “emotion synthesis,” or in simpler terms, high-quality image expression, through the integration of wide internal buses and multiple arithmetic units. While most PCs are based on a 64-bit data structure, the Emotion Engine (EE) doubles its registers and data buses to 128 bits, allowing large amounts of multimedia data to be processed and transported at high speed. In addition, ten floating-point multiplier-accumulators (FMACs), four floating-point dividers (FDIVs) and an image-processing unit (IPU) are used to speed up calculations.

Before venturing into a detailed analysis of the EE, let us briefly examine the basic interactions of the EE with its surrounding components. The following figure is an overview of the Sony PlayStation 2 architecture. The I/O Processor sends controller input to the Emotion Engine, where the state of the game world is updated. The EE then produces a set of rendering commands called display lists and sends them to the Graphics Synthesizer, where elementary shapes are drawn and assembled into complex 3D graphics. Sound is omitted in this document.

The Emotion Engine is a two-instruction-issue, superscalar CPU based on the MIPS III architecture. It has three coprocessors: one floating-point unit (FPU) and two vector units (VUs). Other components include a 10-channel Direct Memory Access Controller (DMAC), a Graphics Interface unit (GIF), an MPEG2 decoder serving as the IPU, and two interfaces, one for the RDRAM and the other for the I/O. A 128-bit single instruction, multiple data (SIMD) instruction set and a scratchpad RAM (SPRAM) are added as multimedia enhancements. The following diagram displays these components.
Lighting calculations and perspective transformations are the keys to achieving emotion synthesis. With this purpose in mind, Sony combines a traditional CPU structure with digital signal processing (DSP) techniques. Multimedia applications process large data streams with relatively few instructions applied repetitively in loops, which is the workload pattern typical of DSP. Consequently, the EE is equipped with small caches and wide buses. The small caches have very low latency and can be accessed in one cycle, allowing rapid data access. Likewise, the widened data buses provide higher bandwidth and faster data movement.

MIPS III Core

The CPU core of the Emotion Engine, as mentioned earlier, is based on a RISC ISA. However, to take advantage of SIMD capabilities, the EE departs from the MIPS III ISA by defining an entirely new set of 128-bit integer instructions that extends the conventional 64-bit integer instruction set. To put this new set into practice, the registers in the EE are expanded to 128 bits and the two 64-bit ALUs are locked together to operate as a single unit. Used in parallel, the two integer units can perform four 32-bit, eight 16-bit, or sixteen 8-bit integer arithmetic operations each cycle.

The CPU core supports the following instruction types:

- MUL/DIV instructions
- 3-operand MUL/MADD instructions
- Arithmetic ADD/SUB instructions
- Pack and extend instructions
- Min/Max instructions
- Absolute instructions
- Shift instructions
- Logical instructions
- Compare instructions
- Quadword load/store instructions
- Other miscellaneous instructions

The core comprises a 16 KB instruction cache, an 8 KB data cache, a 16 KB SPRAM, 32 128-bit general-purpose registers and 5 logical pipes. The logical pipes are: two 64-bit integer ALUs (iALUs), a 128-bit load/store unit, 2 coprocessors and a branch execution unit.
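
As an illustration of the packed integer arithmetic described above, the following minimal Python sketch models a 128-bit register as an integer and adds two such registers lane by lane. The function names (`packed_add`, `pack`, `unpack`) are hypothetical, and wraparound on overflow is an assumption made for simplicity; the sketch does not reproduce any actual EE instruction.

```python
def pack(lanes, lane_bits):
    """Pack unsigned lane values (lane 0 = least significant) into one 128-bit integer."""
    lane_mask = (1 << lane_bits) - 1
    value = 0
    for index, lane in enumerate(lanes):
        value |= (lane & lane_mask) << (index * lane_bits)
    return value


def unpack(value, lane_bits):
    """Split a 128-bit integer back into its lanes (lane 0 first)."""
    lane_mask = (1 << lane_bits) - 1
    return [(value >> shift) & lane_mask for shift in range(0, 128, lane_bits)]


def packed_add(a, b, lane_bits):
    """Add two 128-bit values lane by lane (assumed wraparound semantics)."""
    if lane_bits not in (8, 16, 32):
        raise ValueError("lane width must be 8, 16, or 32 bits")
    lane_mask = (1 << lane_bits) - 1
    result = 0
    for shift in range(0, 128, lane_bits):
        lane_a = (a >> shift) & lane_mask
        lane_b = (b >> shift) & lane_mask
        # Masking the sum keeps any carry from spilling into the next lane.
        result |= ((lane_a + lane_b) & lane_mask) << shift
    return result
```

With `lane_bits` set to 32, 16 or 8, one call performs four, eight or sixteen independent additions, mirroring the per-cycle operation counts quoted above.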
The data cache is a two-way set-associative cache that supports non-blocking access. The instruction cache is also two-way set-associative and includes two bits of branch history tag (BHT) and three instruction steering bits. Both caches are virtually indexed and physically tagged. The SPRAM is accessed in the same pipeline stage as the data cache, so a flag is added to the MIPS-standard TLB to differentiate between the SPRAM and the cache address space. The CPU uses standard MIPS III load/store instructions to access the SPRAM once it has been loaded by the DMAC.

The CPU also has a 6-stage pipeline (PC | Fetch | Register | Exec | Cache Access | Write Back) and a speculative execution capability centered on a 64-entry branch target-address cache (BTAC) and the BHT integrated into the instruction cache. This simple branch prediction mechanism suffices for the EE because of the small misprediction penalty inherent in a short pipeline: the EE’s misprediction penalty is only 3 cycles.

Vector Unit Design Strategy

The VUs operate in two modes: coprocessor mode, for use as MIPS COP2, and VLIW mode, for use as a stand-alone processor. This arrangement gives the vector units the flexibility to optimize their performance with the CPU core. Coprocessor mode is available only in VU0; although VLIW mode is available in both vector units, VU0 operates mainly in coprocessor mode. In VLIW mode, each 64-bit instruction is split into an upper instruction and a lower instruction. The VLIW instruction set comprises 164 instructions, 95 upper and 69 lower. Coprocessor mode has 130 instructions, which include all of the COP2 instructions and most of the upper and lower VLIW instructions.

To help the vector units handle DMA access to and from memory, interfaces are employed. VU0 has a coprocessor interface and a vector unit interface (VIF0).
Along with its own vector unit interface (VIF1), VU1 also works with the GIF. These interfaces, in conjunction with the EE’s memory double-buffering techniques, are the means of continuous data processing.

VU Instruction Format

As noted above, the vector units are two-instruction SIMD/VLIW processors. To accommodate the 64-bit VLIW bundle, each vector processing unit (VPU) is divided into an upper “SIMD” execution unit and a lower execution unit; hence the term “SIMD/VLIW processor.” The upper and lower execution units have the following functionality.

Upper Instructions
- 4 parallel FP ADD/SUB
- 4 parallel FP MUL
- 4 parallel FP MADD/MSUB
- 4 parallel MAX/MIN
- Outer product calculation
- Clipping detection

Lower Instructions
- FP DIV/SQRT/RSQRT
- Load/Store 128-bit data
- EFU
- Jump/Branch
- Random number generator

Note that because VU0 operates mainly in coprocessor mode, which uses only 32-bit instructions, the lower opcode is often a NOP. This permits VU0 to execute 4 FP SIMD operations every clock cycle using the 4 FMACs available to it. However, VU0 can step out of coprocessor mode and into VLIW mode simply by taking the 64-bit instruction bundle, splitting it into two 32-bit COP2 instructions and running them in parallel.

VU0

VU0 chiefly acts as a coprocessor for the MIPS III core and thus, for the most part, appears to the programmer as another logical pipe. Like VU1, it has five kinds of computational units available to it: 4 FMACs, 1 FDIV, 1 load/store unit (LSU), 1 ALU and a random number generator. Of these, the FMACs and the FDIV play the most important roles. An FMAC can perform two types of calculations, each taking one cycle: min/max and floating-point multiply-accumulate. The FDIV, on the other hand, can perform three types of calculations: floating-point divide, square root, and inverse square root. These take 7, 7, and 13 cycles respectively.
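
To illustrate why four parallel FMACs suit the transformations mentioned earlier, the following Python sketch multiplies a 4x4 matrix by a 4-component vertex using four multiply-accumulate steps, each of which updates all four result components at once. The names `fmac4` and `transform` and the column-wise matrix layout are assumptions chosen for illustration; this is not actual VU microcode.

```python
def fmac4(acc, vec, scalar):
    """One step of four parallel multiply-accumulates: acc[i] + vec[i] * scalar."""
    return [a + v * scalar for a, v in zip(acc, vec)]


def transform(columns, vertex):
    """Multiply a 4x4 matrix, given as four column vectors, by a 4-component vertex."""
    acc = [0.0, 0.0, 0.0, 0.0]
    # Each vertex component scales one matrix column; four steps cover the product.
    for column, component in zip(columns, vertex):
        acc = fmac4(acc, column, component)
    return acc


# Hypothetical example: a translation by (5, 6, 7) applied to the point (1, 2, 3).
translation = [
    [1.0, 0.0, 0.0, 0.0],
    [0.0, 1.0, 0.0, 0.0],
    [0.0, 0.0, 1.0, 0.0],
    [5.0, 6.0, 7.0, 1.0],
]
moved = transform(translation, [1.0, 2.0, 3.0, 1.0])
```

In this model a full vertex transformation needs only four multiply-accumulate steps, because every step produces four partial results in parallel.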
VU1

As a stand-alone processor, VU1 is mainly responsible for simple 3D graphics calculations. These calculations require the transfer of large amounts of data. For this reason, VU1 is equipped with four times as much memory as VU0. In addition, it has an elementary function unit (EFU) added to the lower execution unit that uses one FMAC and one FDIV to perform basic calculations such as sin and exp. This EFU is architecturally analogous to the FPU coprocessor.

VU1 has three alternative data paths for transferring the display list to the GIF. It can work on the display list in its 16 KB data RAM and send it over the direct 128-bit bus from the data RAM to the GIF, or it can use one of the other two transfer paths, via the VIF or via the main data bus. For a visual of this, please refer ahead to Figure 4.

One other difference between VU0 and VU1 is VU1’s dependency on its VIF. Whereas in VU0 the VIF plays no crucial role, in VU1 it decodes and parses the commands within the 3D display list, checking that they have been transferred to the correct area. In doing so, the VIF frees VU1 from dependency on the CPU, allowing it to operate as a pure SIMD/VLIW processor.

The main internal blocks of VU1 are:

1) Upper execution unit with four parallel FMACs
2) Lower execution unit with FDIV, load/store, integer ALU, and branch logic
3) 32 128-bit floating-point registers
4) 16 16-bit integer registers

Although microarchitecturally nearly identical, the vector units are designed to fit certain roles and have been customized in advance to fill these roles under a logical unit design, or simply, a “team” design. Two main teams, each housing one vector unit (VU), were formed in advance by Toshiba; I call them “Team 1” and “Team 2.” They can be seen above in Figure 2.

Team 1: FPU, core CPU, VU0

As a side note, since the FPU is an uncomplicated structure, it has not been discussed at length before this point.
However, it is adequate for our purposes to know that the structure of the FPU is actually a reversion to early RISC designs in which the FPU was a separate unit from the CPU. In this construct, the FPU is simply a coprocessor that uses one FMAC and one FDIV to execute 32-bit MIPS coprocessor instructions.

The first team handles the physics, program control, AI and behavior calculations. For Team 1 to handle these complex calculations, each member of the team is in constant communication with the other members. Two main items make this constant interaction feasible. The first is the CPU’s two separate, dedicated 128-bit coprocessor data buses to the FPU and VU0. These buses allow the CPU to transfer data to the other members without relying on the main bus, and allow CPU instructions to manipulate VU0’s registers directly. The second is the CPU’s scratchpad RAM (SPRAM). The SPRAM is accessible in a single cycle and is available to the DMAC while the processor is busy executing instructions and accessing the data cache. Because of its ease of access, the SPRAM acts as a shared workspace for the CPU and VU0: before data is sent out over the 128-bit internal bus, it is first worked on in the SPRAM by the CPU and VU0.

Team 2: VU1 and GIF

Team 2’s main responsibility is simple geometric calculations. Unlike in Team 1, the members of this team are somewhat independent of each other; neither member controls the other, and they act as equal partners. However, as in Team 1, VU1 and the GIF share a dedicated 128-bit bus to ease communication. VU1 performs the calculations and sends the results as a display list to the GIF, which in turn passes it to the Graphics Synthesizer. The Graphics Synthesizer then draws the basic shapes, turning them into complex graphics.
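
The double-buffering technique mentioned earlier, in which one buffer is refilled while a processor works on the other, can be sketched in Python as follows. The function `double_buffered` and its parameters are hypothetical, and because the sketch runs sequentially it only shows how the two buffers alternate, not a true overlap of transfer and computation.

```python
_EMPTY = object()  # sentinel marking "no more data to transfer"


def double_buffered(chunks, work):
    """Process chunks using two buffers that swap roles after every chunk."""
    source = iter(chunks)
    buffers = [_EMPTY, _EMPTY]
    results = []
    active = 0
    # Prime the first buffer before processing starts.
    buffers[active] = next(source, _EMPTY)
    while buffers[active] is not _EMPTY:
        idle = active ^ 1
        # Stand-in for a DMA transfer that refills the idle buffer.
        buffers[idle] = next(source, _EMPTY)
        # Stand-in for the processor working on the buffer that is ready.
        results.append(work(buffers[active]))
        # Swap roles: the freshly filled buffer becomes the active one.
        active = idle
    return results
```

For example, `double_buffered([[1, 2], [3, 4], [5]], sum)` processes three chunks while only ever holding two of them at a time.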
Team Interoperation

Although Toshiba has pre-designed these two teams for specific jobs, the actual allocation of work among these components is quite flexible and is ultimately up to the individual game programmer. For example, in the following figure labeled “Serial Connection,” Team 1 is programmed to act as a preprocessor for VU1. In this organization, Team 1 processes conditional branches in the code, loads data from main memory and updates the state of the game world. This information is then passed on to VU1, where it is used to compose a display list.

The next figure, labeled “Parallel Connection,” depicts another example in which Team 1 and Team 2 are programmed to work in parallel to create display lists simultaneously. This design flexibility allows programmers to tailor the process of 3D creation and rendering to the specific needs of an application.

Conclusion

The Emotion Engine is an important entry into the game console world. Its unique architectural approach has permitted a design that is now one of the most powerful media engines on the market. As one of the first processors to combine a 128-bit architecture with a VLIW/SIMD design and vector units, it has “upped the ante” on emotion synthesis and real-time interactive 3D graphics, elevating the gaming world to a new level. Some have even argued that the Emotion Engine has transcended the gaming world and is now a serious contender in the PC market. It will be interesting to see what the future holds: whether or when PCs and gaming consoles will truly merge into one market, and what role the EE architecture will play in it.

Acronyms

- ALU: Arithmetic Logic Unit
- DSP: Digital Signal Processing
- EE: Emotion Engine
- EFU: Elementary Function Unit
- FDIV: Floating-Point Divider
- FMAC: Floating-Point Multiply Accumulator
- GIF: Graphics Interface
- IPU: Image Processing Unit
- SPRAM: Scratch-Pad RAM
- VPU: Vector Processing Unit
- VU: Vector Unit