Full project report

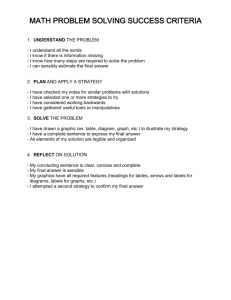


Using relaxation labeling for Hebrew text tagging

Final project for the course “Introduction to computational and biological vision”, Ben-Gurion University of the Negev, 2005

By Shai Shapira

Introduction

Relaxation labeling

Relaxation labeling is a general name for a group of methods that assign labels to a set of objects using contextual constraints. It was originally developed for computer vision, for tasks such as line drawing interpretation and image segmentation, but it can be applied to any problem of that type. The mechanism is a parallel iterative process: in each iteration, each object uses the labels of its neighboring objects, together with contextual constraints defined as a support function, to update its own label, which in turn affects its neighbors’ labels.

One way to approach the problem is to assign each object a single label from the set of possible labels and use only that label in the calculations, with a boolean support function: for every pair (or group) of objects and their labels, it returns true or false depending on whether they are compatible. This approach is known as unambiguous labeling. While it can be useful in some cases, it is usually not practical, because it does not allow for preferences: one cannot make one label “less likely” than another yet still possible, although that is often how initial measurements are received. The same goes for the support function: we usually want to define not only whether a group of labels is compatible, but also how compatible (or incompatible) it is. The more common approach is therefore ambiguous labeling, in which every object holds a probability for each label that may be assigned to it.
In addition, the support functions no longer return a boolean answer but a number, whose sign states whether the labels are compatible or incompatible and whose magnitude represents the importance of the constraint. Since this approach is better suited to the problem, it is the one used in this project.

Natural language text tagging

Natural language text tagging is a crucial problem in the field of natural language understanding. It involves assigning grammatical information, usually either lexical categories (e.g. noun, adjective) or grammatical functions (e.g. subject, predicate), to parts of a given text. While the question of when a computer truly “understands” a natural language sentence remains open, an absolutely necessary step is certainly to figure out the part every word plays in the sentence: understanding each word by itself is important, but it extracts hardly any information from the sentence. Even a simple sentence like “The cop saw the thief” says very little until we know which is the subject and which is the object.

One of the most important factors in tagging seems to be contextual information: in the previous example, while “the cop” on its own can have many meanings, the active verb after it gives a very strong clue that it is the subject. Since contextual information is so important in tagging, and relaxation labeling is a method for using contextual information, this project tries to use relaxation labeling methods for tagging natural language sentences.

The tagging system

Requirements

To test the suggested approach, an implementation of the idea had to be built. First, the problem must be defined more precisely:

- Language: This project works on the Hebrew language.
Hebrew places higher importance on context, as Hebrew words tend to be highly ambiguous, which makes it much more difficult to extract grammatical information from a word without its context. This makes Hebrew an especially interesting language to explore in such a project.
- Tagging type: The tagging assigns a grammatical function to each word. This is perhaps more difficult than division into lexical categories, but it is also more context-dependent: lexical category tagging can reach fairly good results by simply ignoring the context and assigning each word its most likely category (this is perhaps not as easy in Hebrew, with its high ambiguity, but still easier). Tagging with grammatical functions therefore seems the more interesting problem for this project.
- Label set: Having decided on grammatical functions as the label type, it is still necessary to decide what the actual labels will be, as there is no universal division of grammatical functions: one can divide all words into as few as four or five categories, or into as many as 1,000 or more. A finer division seems more likely to give accurate results, but it also greatly increases the complexity of operations such as choosing the initial measurements and creating the support function. Since this project mainly sets out to investigate the relaxation algorithm rather than Hebrew linguistics, such complexity would be too cumbersome, and a set of five labels was chosen: subject, predicate, object, modifier, and descriptor.

Label set

Grammatical functions don’t really have a complete, standard definition, but in most cases the division into our five labels is unambiguous.
A brief description of each label:

- Subject: The item about which the sentence mainly provides information, usually because it is the actor of the predicate (if the predicate is a verb) or is described by it (if the predicate is an adjective, a form that exists in Hebrew but not in English).
- Predicate: The main piece of information the sentence gives about the subject, usually either an action it performs or an adjective that describes it (again, the latter form does not exist in English).
- Object: Exists only in sentences where the predicate is a transitive verb. A transitive verb is a verb that requires arguments, usually the target of the action the verb describes, but sometimes others. A sentence may therefore have multiple objects or none.
- Modifier: Any word that adds information about a noun in the sentence and is not the main point of the sentence (that is, it is not an adjective predicate).
- Descriptor: Any word that adds information about the verb predicate of a sentence.

Structure of the system

The system is an application that reads a Hebrew sentence and tags it. It can be roughly divided into four parts: the reader, which reads the sentence and assigns each word an initial set of label probabilities; the support function, which represents the contextual information used by the algorithm; the relaxation algorithm itself; and the user interface.

Reader

The reader, having received the sentence from the UI, must examine each word and assign it initial probabilities. For that it requires a lexicon, a file that holds a list of words and their initial probabilities. Since the system, like a human, still has a chance of understanding a sentence without recognizing every word, the reader initializes an unknown word with a set of equal probabilities, in the hope that the contextual information will be enough to infer its grammatical role.
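
The reader's lookup, with its fallback for unknown words, can be sketched roughly as follows. This is a minimal Python illustration under stated assumptions, not the project's code: the in-memory `LEXICON` dictionary, its probability values, and the function name `initial_probabilities` are all hypothetical, and the report does not describe the format of the lexicon file.

```python
LABELS = ["subject", "predicate", "object", "modifier", "descriptor"]

# Hypothetical lexicon: word -> initial probability for each label in LABELS.
# The values are invented for illustration only.
LEXICON = {
    "השוטר": [0.45, 0.05, 0.40, 0.05, 0.05],  # "the cop"
    "ראה": [0.05, 0.80, 0.05, 0.05, 0.05],    # "saw"
    "הגנב": [0.45, 0.05, 0.40, 0.05, 0.05],   # "the thief"
}


def initial_probabilities(sentence, lexicon):
    """Return one (word, probabilities, known) triple per whitespace-separated word."""
    uniform = [1.0 / len(LABELS)] * len(LABELS)
    rows = []
    for word in sentence.split():
        if word in lexicon:
            rows.append((word, list(lexicon[word]), True))
        else:
            # Unknown word: every label starts out equally likely.
            rows.append((word, list(uniform), False))
    return rows


# "את" is not in the hypothetical lexicon, so it receives equal probabilities.
rows = initial_probabilities("השוטר ראה את הגנב", LEXICON)
for word, probs, known in rows:
    print(word, probs, "known" if known else "unknown")
```

The `known` flag is kept alongside the probabilities so that a later stage can tell which words were not found in the lexicon.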
Properly building the lexicon is not a simple problem, and many approaches are possible. One of the main problems is that the task is not well defined: the initial probabilities that would be helpful for one text might be disastrous for another. This is especially true for a language as ancient as Hebrew, with its many different styles and levels. The most efficient way would probably be to implement a learning mechanism and present it with pre-tagged sentences in a style as similar as possible to that of the sentences the system will later work on. However, such a solution is beyond the scope of this project, and the lexicon was built manually, mostly by trial and error.

Support function

The support function represents the contextual information used in the relaxation algorithm. For each group of assignments of labels to objects, it states how compatible they are. Much like the construction of the lexicon, building it is a difficult problem that suffers from much the same obstacles. The main contextual hints for understanding a sentence lie in the word order, but word order can differ greatly between styles of text: in older Hebrew text, for example, the basic sentence structure is usually <predicate> <subject> <object>, whereas in modern text it is usually <subject> <predicate> <object>. For this and other reasons, the support function would probably also be best created with a learning mechanism. Here too, that was not possible within this project, and the support function was built manually.

Relaxation algorithm

The relaxation process uses the classical algorithm suggested by Rosenfeld, Hummel, and Zucker in 1976. While much work has been done to improve it, it remains an important, widely used general-purpose relaxation algorithm, and it seems to be the one most suitable for this project.
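
As a rough illustration of that classical update, the Python sketch below multiplies each label probability by (1 + q), where q is the support the label receives from the other words, and then renormalizes. Several things here are assumptions rather than details from the report: the support values in `word_order_support` are invented to favor the modern <subject> <predicate> <object> order, every other word is given equal weight when q is averaged, and the number of iterations is fixed. The project's actual support function and stopping rule are not given.

```python
LABELS = ["subject", "predicate", "object", "modifier", "descriptor"]

# Hypothetical ordering constraints for modern <subject> <predicate> <object> text:
# each (left label, right label) pair is supported for two adjacent words.
SUPPORTED_PAIRS = {("subject", "predicate"), ("predicate", "object")}


def word_order_support(i, a, j, b):
    """Support in [-1, 1] that label b on word j lends to label a on word i."""
    if j == i + 1 and (LABELS[a], LABELS[b]) in SUPPORTED_PAIRS:
        return 1.0
    if j == i - 1 and (LABELS[b], LABELS[a]) in SUPPORTED_PAIRS:
        return 1.0
    return 0.0  # no opinion; a negative value would mark incompatibility


def relaxation_step(probs, support):
    """One parallel update: every word is updated from the previous iteration's values."""
    n = len(probs)
    updated = []
    for i in range(n):
        others = [j for j in range(n) if j != i]
        scores = []
        for a, p_ia in enumerate(probs[i]):
            # q = average support for label a on word i, weighted by the
            # current label probabilities of every other word.
            q = 0.0
            for j in others:
                for b, p_jb in enumerate(probs[j]):
                    q += support(i, a, j, b) * p_jb
            if others:
                q /= len(others)
            scores.append(p_ia * (1.0 + q))  # 1 + q >= 0 because q >= -1
        total = sum(scores)
        # Renormalize; keep the old row if every label was fully vetoed.
        updated.append([s / total for s in scores] if total > 0 else list(probs[i]))
    return updated


def tag(probs, support, iterations=10):
    """Run a fixed number of iterations and pick the most probable label per word."""
    for _ in range(iterations):
        probs = relaxation_step(probs, support)
    return [LABELS[row.index(max(row))] for row in probs], probs


# Three words; the first and last start out undecided between subject and object.
initial = [
    [0.40, 0.10, 0.40, 0.05, 0.05],
    [0.10, 0.70, 0.10, 0.05, 0.05],
    [0.40, 0.10, 0.40, 0.05, 0.05],
]
tags, final = tag(initial, word_order_support)
print(tags)
```

With these made-up numbers, the ordering constraints resolve the first word to subject and the last to object, even though the initial probabilities alone could not separate the two roles.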
The algorithm uses a simple, parallel iterative process in which each object updates its label probabilities according to the support it receives from the labels of other objects. This is how the contextual information (in our case, the support function) is combined with the non-contextual information (in our case, the initial probabilities from the lexicon) to form a (hopefully) consistent interpretation of the sentence. After the algorithm finishes, each word receives the label with the highest probability as its tag.

The user interface

A simple interface was made to enable testing of the system: the user types the sentence into the text box, presses the “tag” button, and views the result in the result table. The table shows each word from the sentence along with its tag, which is the label with the highest probability at the end of the algorithm. Words that were not recognized by the lexicon are marked with a trailing asterisk.

Results

The results seem adequate for the scope of the project. The system shows a significant ability to overcome cases where the correct tagging differs from the initial assumptions, which demonstrates that it can make use of contextual information. However, it is far from perfect, and it works only on relatively simple sentences.

One way to improve the performance would probably be to implement the reader and the support function as learning mechanisms, as suggested previously. Also, the binary (pairwise) support function could be extended with non-binary ones, perhaps even support functions that span the entire sentence, for example to reduce the likelihood of sentences being tagged with more than one subject or predicate, which is hard to prevent with binary support but is very often wrong. To allow the system to work with compound sentences, perhaps a multi-level approach could be used.
Since identifying a certain run of words within a sentence as a sub-sentence is largely context-dependent, a relaxation algorithm for identifying the sub-sentences could perhaps be combined with the algorithm for tagging each sub-sentence. These are only some of the options for improving the system. Unfortunately, the scope of this project is too small to attempt them. However, it is possible that they would allow the system to reach more impressive results.

Conclusions

It is hard to say at this point whether relaxation labeling methods are practically suitable for natural language tagging. This project could only begin to explore the many options this approach offers for the problem, and much more work is necessary to bring it to a level where it can be compared with other methods. However, given some promising results and many directions for improvement, it seems that this approach does have some potential and might be worth exploring further.

References

Relaxation labeling:
1. Relaxation Labeling Algorithms - A Review, by Kittler and Illingworth, 1985
2. On the Foundations of Relaxation Labeling Processes, by Hummel and Zucker, 1983

Text tagging:
3. Hidden Markov Model for Hebrew Part-of-speech Tagging, by Meni Adler, M.Sc. thesis, Ben-Gurion University, Israel, November 2001