The measurement and neural foundations


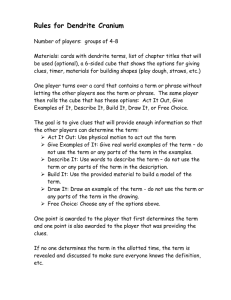

Collaborative Research on The Measurement and Neural Foundations of Strategic IQ

1. Introduction

We propose to create a system to measure how well different people reason in social (game-theoretic) situations, where the choices of other people (or organizations, including companies and nation-states) affect their own outcomes and choices unfold over time. Understanding these situations helps us understand human social dynamics and enhance human performance. The work is interdisciplinary, bringing together strands of mathematical game theory, psychology, and neurobiology, in order to create a measure of “strategic IQ” for calibrating how well people with different skills think strategically. This proposal combines our expertise in experimental studies of strategic thinking with access to special subject populations (patients with localized brain lesions and experienced business executives) and to technologies for brain imaging. The concept of strategic IQ has scientific value because these behavioral data, along with neuroscience data, can be used to understand the psychological and neural foundations of strategic thinking. A strategic IQ measure also has practical value: to help people evaluate their own skills, to aid in personnel selection (e.g., screening corporate strategists, diplomats, or military personnel), and to show people where their strategic thinking can be improved. Understanding the neural foundations of strategic thinking can also help with diagnosis and rehabilitation of disorders, and with protecting elderly populations from potential risks (since the prefrontal and hippocampal regions we focus on decline most rapidly with age). Our research starts with game theory. Game theory has proved enormously useful in economics, among the social sciences, and can potentially unify diverse disciplines by providing a common language.
Game theory provides a way to define strategic situations mathematically— viz., in terms of players, their information and strategies, an order of moves, and how players evaluate consequences which result from all the strategy choices. Most analyses of how people actually behave in games use some concept of equilibrium (i.e., players accurately guess by learning or introspection what others will do). While the concept of equilibrium is useful as an idealized model, hundreds of experiments with many groups of people (including subjects who are highly financially-motivated and trained in game theory) show that actual behaviors are often inconsistent with the equilibrium assumption (Camerer, 2003). The fact that people do not always make equilibrium choices implies that there may be reliable, measurable differences in the ability of people to think strategically, which we call strategic IQ. Strategic IQ measures a person's ability to guess accurately what others will do in situations where two or more parties do not have prior contractual agreements (agreeing on a contract may be the strategic situation of interest), each party can choose several possible courses of action, and each individual payoff depends on his or her actions, the actions of the other parties, and on chance events. Examples include war, international trade negotiation, rivalry between firms to gain market share, and child custody fights between divorced couples. A person's strategic IQ is her total normalized payoff when playing with others in a series of carefully designed competitive situations. Note that IQ is dynamic-- a person's IQ can increase or decrease as a result of others changing their choices, and can be improved by sharpening a person's understanding of typical behavior. 
A premise of the engineering applications of our approach is that people differ in their strategic IQ (this variation enables us to understand the neural foundations of strategic thinking) and that it is possible to improve strategic IQ by training. We propose to measure strategic IQ by how much people earn in a battery of games, when playing against the previous choices of a population of specified players. Measures of strategic IQ will be compared to three benchmarks: equilibrium choices from game theory analyses; a “clairvoyant” best guess about what others do (based on the data we collect), which is by definition an upper bound on strategic IQ; and a “cognitive hierarchy” model developed in previously supported NSF research. As noted, an important use of strategic IQ is basic scientific research. To this end, we will give the battery of games to five specially-selected populations: (i) undergraduates who are superbly skilled in mathematics (screened groups of Berkeley and Caltech students); (ii) undergraduate and graduate students specially trained in game theory[1]; (iii) chess players recruited at tournaments (since their numerical chess-skill ratings can be compared to their strategic IQs); (iv) highly-experienced business managers (available through executive education activities at Berkeley where PI Ho teaches); and (v) patients with specialized brain lesions (available through the University of Iowa’s unique Cognitive Neuroscience Patient Registry, which PI Adolphs has used extensively). Other interesting subject pools will be sampled as opportunities arise. Evidence about what games the lesion patients play poorly will help diagnose which brain circuitry is used in various types of strategic thinking.
These data complement the planned fMRI imaging: imaging is useful for seeing all the parts of neural circuitry (while lesion patient deficits show the “necessary” parts), and lesion patients are useful for studying parts of the brain that are not easily imaged (especially insula and orbitofrontal cortex), or where delicate designs are needed. This part of the project also contributes to basic neuroscience by providing a new set of tasks which can help refine our understanding of the functions of higher-order cognition in prefrontal cortex, which is not thoroughly understood (e.g., Wood and Grafman, 2003). Before we continue, it is important to note that strategizing is not merely outfoxing opponents in “zero-sum” games, where one player loses if another player wins. Strategic thinking generally refers to guessing correctly what others are likely to do. Therefore, strategic thinking can often be mutually beneficial, in games where more strategic thinking by both players can help them achieve joint gains. So raising one person’s strategic IQ may help others. Also, the term “IQ” just denotes a numerical measure of how well different people perform in a specific test. We hope to avoid debates about whether there is such a thing as general intelligence, or whether such tests are inherently biased or used for socially harmful purposes. The strategic IQ measure simply starts to put an important skill on a scientific basis, which may also have some practical use. Our approach follows Gardner (1983) and others who distinguish “multiple intelligences” (cf. Salovey, Mayer and Caruso, 2002 on “emotional intelligence”). Gardner's seven types of intelligence include logic/mathematical reasoning and interpersonal intelligence. Strategic IQ is a combination of these two subtypes.

2. Proposed Activities

a.
Strategic IQ measurement: First we will develop a battery of games and a database of how various groups play those games under conditions which are typical in experimental economics; namely, the game is described abstractly and subjects earn payoffs which depend on their choices and the choices of others whom they are paired with.

[1] Earlier research on the economic value of models (Camerer, Ho and Chong, 2004) showed that players could often earn more by following Nash equilibrium advice. This fact suggests that students trained in game theory will raise their strategic IQ (since earning more when playing others and strategic IQ are linearly related). However, in some games equilibrium choices are bad choices against a typical population. So it is conceivable that students trained in game theory will have lower strategic IQs than some other untrained group with better strategic intuition.

b. Creating theoretical benchmarks: It is useful to compare human performance on these games with two theoretical benchmarks: the game-theoretic advice from equilibrium models, and a simple descriptive model based on “cognitive hierarchy” (CH) models of naturally-limited cognition (extending earlier work by PIs Camerer and Ho). Doing this comparison requires extending the CH models to dynamic “extensive form” games, which is a basic scientific contribution.

c. Studying specially-selected groups: Experiments with lesion patients from the Iowa database will show which neural regions are important in the various components of strategic thinking identified in Table 1. Other experiments with specialized populations, including experts and others, will tell us more about likely regions used in different kinds of strategic thinking.

d. Imaging neural activity: fMRI imaging and tracking of eye movements with typical subjects will supplement what we learn from (c) about neural regions active in strategic thinking.
Imaging will be done at Caltech's Broad Imaging Center, where PI Camerer has been active (e.g., Tomlin et al, 2004) and there is good access to scanner time. The fMRI scanner goggles worn by subjects also include tiny cameras so the eye movements of subjects can be measured while they are in the scanner (see Camerer et al, 1994, for an early application of eye-tracking to game theory).

3. Strategic IQ measurement

To illustrate the skeleton of a strategic IQ measure, we will discuss five classes of games which we hypothesize to tap different dimensions of strategic IQ. We illustrate each class with only one exemplar game, but in practice we will use many games with similar strategic properties (some of which are enumerated in footnotes annotating the section that describes each exemplar game). Standard psychometric methods like factor analysis will be used to see which components of strategic IQ cluster statistically. That is, each game will be treated as a separate test item, and we will evaluate statistically which test items correlate into distinct factors. Assume there are J dimensions altogether and each dimension j has K(j) test questions (i.e., games). If N people take the test, each participant i's strategic IQ(i) is determined as follows. Each individual will have N-1 possible matches in each of the competitive situations. The total payoff for player i will be

$$\pi_i = \sum_{j=1}^{J} \sum_{k=1}^{K(j)} \sum_{m=1}^{N-1} \pi_i(j,k,m),$$

where $\pi_i(j,k,m)$ is player i’s payoff from test question k in dimension j, in match m with one of the N-1 other players. The payoffs will be scaled so each item receives equal weight. Strategic IQ(i) is then normalized by subtracting the mean and dividing by the variance. Of course, the items may cluster into categories which are different from the five we hypothesize.
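The scoring rule just described can be sketched in a few lines. This is only an illustration under stated assumptions: the nested-list layout `payoffs[i][j][k][m]`, the function names, and the toy numbers are hypothetical, and the payoffs are assumed to have already been scaled so that each item carries equal weight. The normalization follows the text literally (subtract the mean, divide by the variance); dividing by the standard deviation instead would give conventional z-scores.

```python
from statistics import mean, pvariance


def total_payoff(player_payoffs):
    """Sum one player's payoffs over dimensions j, items k, and matches m."""
    return sum(
        pay
        for dimension in player_payoffs   # j = 1..J
        for item in dimension             # k = 1..K(j)
        for pay in item                   # m = 1..N-1
    )


def strategic_iq(payoffs):
    """Return normalized scores, one per player.

    payoffs[i][j][k][m] is player i's (already scaled) payoff on item k of
    dimension j in match m. This layout is an assumption of the sketch.
    """
    totals = [total_payoff(p) for p in payoffs]
    mu = mean(totals)
    var = pvariance(totals, mu)  # population variance, as in the text
    return [(t - mu) / var for t in totals]


# Toy data: 3 players, 2 dimensions (1 item and 2 items), 2 matches each.
toy = [
    [[[1, 2]], [[3, 0], [1, 1]]],   # total 8
    [[[0, 1]], [[1, 1], [1, 0]]],   # total 4
    [[[2, 1]], [[1, 1], [0, 1]]],   # total 6
]
scores = strategic_iq(toy)
```

In this toy example the three totals are 8, 4, and 6, so the middle-earning player receives a normalized score of zero.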
The simple psychometrics is important because most recent mathematical models in behavioral game theory assume that players have distinct emotional or cognitive “types” which will lead to correlated behavior across games, but little is known about how reliable types are across a wide range of games. Psychometric testing of which components of game performance are correlated will reveal whether there are separate dimensions of strategic thinking: for example, whether anticipating how others will react emotionally to outcomes which give different payoffs to different players is correlated with planning ahead in dynamic games. We will also compare strategic IQ measures to a measure of general intelligence from a short form of the Wechsler scale (Satz and Mogel, 1962) and a measure of emotional intelligence (MSCEIT, e.g. Lopes et al, in press). Analyses with lesion patients who have localized brain damage, expert subgroups (e.g., the undergraduate chess players), and fMRI measures of brain activity in typical normal control subjects enable us to potentially link performance on different item-clusters to distinct neural regions which underlie components of strategic thinking. This analysis may also provide new ways to categorize brain function which might interest neuroscientists.

Figure 1: Photographs of the human brain schematizing some of the anatomical regions we are exploring. Color-coded regions are shown on lateral (A and B) and medial (middle, C) views of a human brain. A. The insula (purple) can only be revealed by dissection of the overlying frontal cortex. It is buried underneath the frontal lobe. B. Sectors of prefrontal cortex we will investigate include a cytoarchitectonic region, Brodmann’s area 10 (frontal polar cortex, orange), as well as more general anatomical regions that encompass several cytoarchitectonic regions: dorsolateral prefrontal cortex (blue) and ventromedial (VM) prefrontal cortex (green). The hippocampal formation is buried within the medial temporal lobe; its outline projected onto the lateral surface is indicated in red. C. Visible on the medial surface are the ventromedial prefrontal cortex (green) and anterior cingulate cortex (yellow).

These games have all been well-studied experimentally (see Camerer, 2003). In fact, the large amount of experimental data from previous studies is what permits the construction of a reliable IQ measure (since it calibrates a person’s choices against a large amount of historical play). Each section below describes one component of strategic thinking, an exemplar game which illustrates the strategic thinking component, some hypothesized psychological processes, and tentative candidate brain regions that can be explored as neural loci of the psychological process. Table 1 summarizes this exploratory structure. Note that very little is known about the brain circuitry that creates these processes (indeed, a major contribution of our proposed research is to learn more), so the hypothesized brain processes are tentative and the work will be exploratory. Figure 1 shows views of the brain with some regions of interest marked.

a. Strategic reasoning: The central feature of strategic thinking is the ability to forecast what other players will do purely by reasoning about their likely choices (and about the reasoning of others, and others’ reasoning about reasoning, etc.). An example which distinguishes pure strategic thinking from emotional forecasting and dynamic planning ahead (which are discussed separately below) is the “p-beauty contest game” (e.g., Ho et al, 1998, and named after a passage in John Maynard Keynes’s famous economics treatise about how the stock market is like a beauty contest). In this game each player i chooses a number $x_i \in [0,100]$. The player who is closest to p times the average (with p<1) wins a fixed payoff.
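To make the rules of the p-beauty contest concrete, the following minimal sketch picks the winner(s) from a list of choices and shows what repeated "reasoning about reasoning" does to a guess. The function names, the sample choices, and the default p=2/3 are illustrative assumptions, not part of the proposed test battery.

```python
def beauty_contest_winners(choices, p=2/3):
    """Return the indices of the players closest to p times the average."""
    target = p * sum(choices) / len(choices)
    best = min(abs(x - target) for x in choices)
    return [i for i, x in enumerate(choices) if abs(x - target) == best]


def iterated_guess(believed_average, steps, p=2/3):
    """Apply the 'others think like me' step repeatedly.

    Each step replaces the believed average by p times that belief, so the
    guess shrinks geometrically toward zero as the number of steps grows.
    """
    guess = believed_average
    for _ in range(steps):
        guess = p * guess
    return guess


# Four hypothetical players: the average is 26.25, so the target is 17.5.
winners = beauty_contest_winners([50, 33, 22, 0])

# Starting from a believed average of 50, one and two steps of reasoning.
one_step = iterated_guess(50, 1)
two_steps = iterated_guess(50, 2)
```

With these hypothetical choices the player who picked 22 wins, and one or two steps of reasoning from a believed average of 50 give guesses of roughly 33 and 22.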
The Nash equilibrium in this game is a number x which is closest to p times the average when everyone else also picks x; the only such number is zero. Put differently, the only stopping point to the introspective iteration “If the average was X, I would pick (2/3)X; but if everyone else is as smart as me, where do we all stop?” is zero. In 24 different subject pools with p=2/3, the average number picked ranges from 20 to 35. These choices suggest that people are only doing 1 to 3 steps of strategic thinking on average (Camerer, Ho, and Chong, 2004). The availability of a very large amount of data on these games enables us to construct an IQ item which gives a percentage chance that a person would win the game, if she played in a group sampled from previous data. The percentage chance of winning times the winning payoff gives a numerical score.[2] Reasoning in this game requires people to use working memory to store iterations of reasoning (e.g. “If I think the average will be 50, I should choose 33; but if people think like I do they will pick 33 so I should choose 22…”). It also presumably requires “theory of mind” (ToM, e.g. Baron-Cohen 1995), the capacity to form beliefs about what other minds know, as well as the ability to iterate theory-of-mind beliefs.

Table 1: Strategic principles, exemplar games, cognitive processes, and candidate brain regions

| Strategic principle | Exemplar game | Cognitive process | Brain regions to explore |
|---|---|---|---|
| Strategic reasoning | Beauty contest | Working memory, ToM | VM (pilot), DLPFC |
| Emotional anticipation | Trust | ToM (emotions), social emotion | VM, insula, cingulate (Sanfey et al 2003) |
| Strategic foresight | Shrinking-pie bargaining | Planning | BA 10, DLPFC |
| Coordination | Pure matching | ToM, social meta-knowledge | VM, BA8 |
| Learning | Iterated beauty contest | Reinforcement, regret, forgetting, novelty-detection | VM (pilot), Hippocampus |

b.
Emotional anticipation: In the beauty contest game the payoff is fixed (in game theory jargon, the game is “constant-sum”) so there is little scope for emotional reaction to inequality in payoffs. In most games, however, the distribution of payoffs (and their total) depends on the choices people make. A wide variety of data suggest people dislike unequal payoffs and will often sacrifice their own payoffs to reduce inequality (e.g., Fehr and Schmidt 1999), to harm a player who has treated them badly, or to help a player who has behaved nicely (e.g. Rabin, 1993). Therefore, a separate feature of strategic thinking is the ability to forecast how others will behave when emotions influence their reaction to unequal outcomes. A well-studied exemplar game[3] is the “trust game” (Camerer and Weigelt, 1988). In the simplest version of this game (Berg et al, 1995) one player starts with $10 which she can partly invest, or keep. The amount she invests is tripled (representing a productive return on investment) and given to a second player, the “trustee”. The trustee can repay as much as she wants to the first player, or keep as much as she wants. Presumably trustees repay money because they feel a sense of altruism toward the first player, or a sense of reciprocal moral obligation since the first player took a risk to enlarge the available “pie” for both players.

[2] Other games which measure strategic reasoning are those in which players delete strategies which are dominated iteratively (see Camerer, chapter 5). A deeper feature of strategic thinking is the realization that what other players know (in game theory terms, their “private information”) may affect how they behave, and making probabilistic inferences from what players do about what they know. This is important in “signaling games” and in auctions for objects of unknown common value, in which players must infer what the bids of other bidders tell them about the guesses those other bidders have about the object’s value.
Therefore, the first player must anticipate the emotional reaction of the trustee: the trustee’s sense of altruism or reciprocity. Many studies show that players invest about half of their $10 on average (although the initial investments are widely dispersed) and, on average, trustees repay about $5 so the first player just breaks even. Investing wisely in trust games requires theory of mind as well as anticipation of social emotion. Studies of autistics (who are thought to have poor ToM) show that about a third do not anticipate emotional reactions of others (Hill and Sally, 2003). An fMRI study by Sanfey et al (2003) of the related “ultimatum” game shows that when the second player is deciding what to do, there is activity in prefrontal cortex, insula (a region which is active in discomfort like disgusting odors and pain), and cingulate cortex (a “conflict resolution” region which weighs the desire to earn more money against emotional reactions to inequality). These studies provide candidate regions for emotional anticipation in strategic thinking, which we will explore in fMRI and with lesion patients.

c. Strategic foresight: The two games discussed so far are played simultaneously (the beauty contest game) or only require one step of planning (the trust game). Another component of strategic IQ is strategic foresight in games with many steps (in psychological terms, “planning”). Many studies suggest that players do not plan ahead more than a couple of steps. An exemplar game is alternating-offer “shrinking pie bargaining”[4], a workhorse example widely used in economics and political science. In a three-stage example studied experimentally, one player (P1) makes an offer of a division of $5 between herself and a second player, P2. If P2 accepts the offer they earn the proposed amounts and the game ends. However, if P2 rejects the offer then the available money “shrinks”, say to $2.50, and P2 has a chance to make an offer of how to divide the $2.50.
If P1 rejects P2’s offer the pie shrinks further, say to $1.25, and P1 makes a final offer. If P2 rejects that offer, the game ends and neither player earns anything.

[3] Other games we will study to measure emotional anticipation include “ultimatum” bargaining, in which one player makes a take-it-or-leave-it offer to another player (see Camerer, 2003, chapter 2). In ultimatum games players often reject low offers, presumably because they would prefer to get nothing than to accept an unequal share. Proposers must therefore anticipate how responding players will react emotionally, in order to choose offers which are likely to be accepted. Another emotionally-charged game is the well-known prisoner’s dilemma (PD), in which players can “defect” and earn more money, but if both players “cooperate” then both earn more. (The trust game is like a PD in which the players’ moves are sequential rather than simultaneous.) The economic analogue of the PD is “public goods contribution” in which players can keep tokens for private gain, or invest them in a way that benefits everybody. In an economic analogue of the trust game, “gift exchange”, firms prepay a wage to workers, who can decide how much effort to choose. Effort is costly to workers and valuable to firms, so firms must anticipate how much moral obligation a high or low wage offer will instill in workers.

[4] Another game which requires strategic foresight is the PD when it is repeated. For example, if the PD is played 10 times, and players know that, then players often cooperate for several periods until the end draws near, when one player typically defects. Maximizing payoffs requires emotional anticipation, as well as planning ahead to guess accurately when another player will defect. Many other repeated games can be used to test for strategic foresight as well.
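For the three-stage shrinking-pie game described above, the benchmark for purely self-interested players can be found by working backward from the last stage. The sketch below is a hedged illustration: the function name is hypothetical, and it assumes that players care only about their own money and that a responder accepts when indifferent.

```python
def backward_induction_offers(pies):
    """Solve an alternating-offer shrinking-pie game by backward induction.

    pies[t] is the money available at stage t (proposers alternate each
    stage). Returns one (offer_to_responder, proposer_keeps) pair per stage.
    Assumes self-interested players who accept when indifferent.
    """
    plan = []
    next_proposer_keeps = 0.0  # after the final rejection nobody earns anything
    for pie in reversed(pies):
        # The responder will propose at the next stage, so she accepts any
        # offer at least as large as what she would keep there.
        offer = min(next_proposer_keeps, pie)
        keep = pie - offer
        plan.append((offer, keep))
        next_proposer_keeps = keep
    plan.reverse()
    return plan


# Stages of $5.00, $2.50, and $1.25, as in the example in the text.
plan = backward_induction_offers([5.00, 2.50, 1.25])
first_offer, p1_keeps = plan[0]
```

With pies of $5.00, $2.50, and $1.25 the first-stage offer to P2 comes out to $1.25, with P1 keeping $3.75.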
The “subgame perfect” equilibrium of this game, assuming players have no emotional reaction to unequal outcomes (i.e., they don’t care how much the other player gets), is for P1 to offer $1.25. In experiments, however, offers are around $2.10; offers below $1.80 are rejected about half the time. Direct measurement of looking ahead (using a computer analogue to eye-tracking) shows that about 10-20% of the time, players do not even look at the third stage to see how much would be available if the first two offers were rejected (Camerer et al, 1994). These data suggest that strategic foresight is limited to only a couple of steps, due to constraints on working memory or a “truncation heuristic” which leads players to ignore steps far in the future (as in chess-playing programs that look ahead only a few steps because looking very far ahead is computationally difficult). Temporal planning requires players to have ToM beliefs (perhaps in Brodmann area 8; e.g. Fletcher et al, 1995; McCabe et al, 2001) and use prefrontal cortex and working memory.

d. Coordination: In the games above there is a unique equilibrium prediction. However, in many games of economic interest, there are multiple equilibria. In these games, the behavioral challenge for players is figuring out which equilibrium is likely to occur. This requires a kind of "social common sense", an understanding of norms of social convention. As Schelling (1960) famously put it, matching requires a sense of which “focal” outcomes are "psychologically prominent". An exemplar game[5] to study coordination is “pure matching”. In a pure matching game, players simultaneously choose objects from a set, and earn a fixed reward if their choice matches the choice of another player.
For example, in Mehta, Starmer and Sugden’s (1994) experiments players are asked to name a date of the year, a flower, a number, and a male name (you can test this component of your strategic IQ by thinking about what you would pick; the "answers" are below[6]). Pure matching requires ToM and a sense of what the conventional or well-known choice is, which requires a kind of “social meta-knowledge”, i.e., what people know people know. For example, in Mehta et al’s experiments, when people were simply asked to name a favorite day of the year, the 88 subjects chose 75 different dates, presumably their birthdates. But when they were trying to match the choices of others, those with healthy ToM realized that others are not likely to know and choose their birthdates. So most of them chose focal dates like December 25, rather than their birthdates. Of course, good choices in matching games can also depend heavily on the group you are playing with (non-Christian populations are not likely to choose December 25), so social meta-knowledge about what is culturally known and shared is an important component of strategic IQ. There is little guidance from neuroscience about where this sort of processing of shared knowledge is likely to occur, so fMRI studies of these games will be exploratory and may provoke some new thinking in neuroscience.

[5] Many other coordination games are described in Camerer (2003), chapter 7. An example is the “battle of the sexes”: Two players choose either 1 or 3; if the two numbers add up to 4 then each person receives their number, and if the numbers don’t add up to 4 they get nothing. This game pits the desire to coordinate on some pair of numbers which add to 4 against the private desire to get 3 rather than 1. Another interesting game is “stag hunt”: Both players choose H or L. L pays 5 for sure, and H pays X (say, X=10) only if the other player picks H as well, and zero otherwise.
This game pits the desire to coordinate on H, so both players can earn more, against the desire to avoid social risk in case the other player chooses L rather than H.

[6] In their experiments (conducted with UK students in the late 1980s), the most common choices were December 25 (44%), rose (67%), the number 1 (40%), and the name John (50%).

e. Learning from experience: An important component of strategic IQ is learning from experience, and anticipating how other players will learn from experience (i.e. understanding of human social dynamics). The "EWA" model pursued in earlier NSF-supported work (e.g., Camerer and Ho, 1999) is a benchmark for learning which is psychologically rich. The EWA model combines two kinds of learning mechanisms. One kind is direct reinforcement of chosen strategies according to their payoffs (which may be neurally instantiated by midbrain dopaminergic neurons, or parietal neurons; e.g., Schultz and Dickinson, 2000 and Glimcher, 2003). Another kind of learning is based on counterfactual reasoning, or "regret", about how much higher the payoff would have been if another strategy had been chosen (which probably requires cognition in frontal cortex).[7] The EWA model combines these two types of learning, putting a weight of 1 on the strength of reinforcement and δ on the counterfactual-learning mechanism (with 0<δ<1). The model also assumes that past reinforcements are weighted by a decay rate φ between zero and one. A lower φ corresponds to decaying the past more heavily and φ=1 corresponds to weighing all previous reinforcements equally. An exemplar game to study learning[8] from experience is the repeated beauty contest game, choosing a number from 0 to 100 and trying to get closest to 2/3 of the average, with feedback after each round. The thin line in Figure 2 shows a time series of choices by a single control subject with temporal-lobe damage. (The control subject’s learning path is similar to those usually seen in other subject populations.)
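The two learning mechanisms and the decay rate can be combined in a one-line update. The sketch below is a deliberately simplified illustration, not the full EWA specification: it omits the experience weights and the stochastic choice rule of the published model, and the function name, the argument names (`delta` for the counterfactual weight, `phi` for the decay rate), and the toy numbers are assumptions of this example.

```python
def simplified_ewa_update(attractions, chosen, payoffs, delta, phi):
    """One round of a simplified EWA-style reinforcement update.

    attractions[j]: current strength of strategy j.
    chosen: index of the strategy actually played this round.
    payoffs[j]: what strategy j earned, or would have earned, this round.
    delta: weight on counterfactual ("regret") payoffs of unchosen strategies.
    phi: decay rate applied to past reinforcement (1 keeps all history).
    """
    updated = []
    for j, (old, pay) in enumerate(zip(attractions, payoffs)):
        weight = 1.0 if j == chosen else delta  # weight 1 on the chosen strategy
        updated.append(phi * old + weight * pay)
    return updated


# Two strategies; strategy 0 was played and earned 2, strategy 1 would have earned 4.
mixed = simplified_ewa_update([0.0, 0.0], chosen=0, payoffs=[2.0, 4.0],
                              delta=0.5, phi=0.5)

# delta = 0 gives pure reinforcement: only the chosen strategy is updated.
pure = simplified_ewa_update([1.0, 2.0], chosen=1, payoffs=[3.0, 1.0],
                             delta=0.0, phi=1.0)
```

Setting `delta` to 1 reinforces every strategy by its payoff whether or not it was chosen, which is the purely regret-driven special case.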
The numbers this player picked fall steadily toward the Nash equilibrium of zero. Statistically, these paths are fit reasonably well by the EWA model with δ=.78 and φ=.36 (Ho, Camerer, and Chong, 2004). In an extension of the EWA model, Ho et al (2004) allow the parameters themselves to be functions of experience rather than fixed throughout the learning process. For example, the decay rate on the past can be interpreted as forgetting, or as a “self-tuning” response to the detection of novelty (which often activates the hippocampus). For example, if other players suddenly switch their behavior, a self-tuning learner will realize that old history may be a poor guide to action and will deliberately decay previous reinforcements. Note that such a learner is not “forgetting” per se (she may easily recall previous outcomes), but is instead suppressing old history on purpose. The EWA model suggests that learning involves psychological processes of reinforcement of received payoffs, regret-driven counterfactual reasoning about whether other strategies would have given higher payoffs (with strength δ), and forgetting and novelty-detection which affect φ. These processes may occur in VM, possibly in emotional regions which register regret, and in the hippocampus (which detects novelty and also creates long-term memories).

[7] Interestingly, "fictitious play" learning, based on updating beliefs of what other players are likely to do, is equivalent to a special parametric restriction of the EWA model in which there is only regret-driven learning, and no pure reinforcement (i.e., all strategies are reinforced equally, whether they were chosen or not, or δ=1).

[8] Repeating virtually any game provides a way to study learning and many such games have been studied experimentally (see Camerer 2003, chapter 6). However, it is important to choose games in which the “repeated game equilibria” (which presume strategic foresight, so players anticipate the effects of current choices on future actions of other players) are the same as the equilibria in one-shot games. (For example, in a repeated PD it is an equilibrium to cooperate until another person defects, but this is not an equilibrium in a single one-shot PD.) In practice, this can also be achieved by having players randomly rematched in each period with people who do not know the previous history.

4. Creating theoretical benchmarks

Measures of strategic IQ can be compared to three benchmarks. The first theoretical benchmark is the Nash equilibrium player. In each match of a test question, we can compute the payoff for a Nash player. Adding these up gives the IQ of an artificial equilibrium player. A key measure of interest is where the Nash player falls in the distribution of payoffs and whether it is above or below the average human strategic IQ (i.e. a normalized IQ score of 100). This is an indirect way to measure whether equilibrium models give good advice (by raising the strategic IQ of typical players; cf. "economic value" in Camerer, Ho and Chong (2004)). The second benchmark is a player who clairvoyantly guesses the actual distribution of choices by the other players and chooses the best response to that distribution. This benchmark is an upper bound: because strategic IQ is defined as the payoff earned by playing against the actual distribution, the best response to that distribution, which is what the clairvoyant benchmark player chooses, earns the maximum achievable strategic IQ. The third theoretical benchmark is an artificially-intelligent player who uses a "cognitive hierarchy" model to predict others' actions and best-respond to that forecast (e.g., Camerer, Ho, and Chong, 2004). The CH model assumes that there are frequencies f(k) of players who use k steps of reasoning in an iterative fashion. 0-step players choose all strategies with equal probability. One-step players believe they are facing 0-step players and best-respond to that belief.
Two-step players think they are playing a mixture of zero- and one-step players (with mixture frequencies f(0)/(f(0)+f(1)) and f(1)/(f(0)+f(1))) and best-respond to their belief, and so on. (In general, k-step players think they are facing a mixture of players using k-1 or fewer steps of reasoning.) For tractability, we assumed that f(k) follows a Poisson distribution, $f(k) = e^{-\tau}\tau^{k}/k!$, which has only one parameter, $\tau$, representing the average number of thinking steps (and also the variance). This simple model is easy to compute once $\tau$ is specified. In earlier work it was applied to about 120 different games and shown to explain both where Nash equilibrium predicts poorly and where it predicts surprisingly accurately. An artificial CH player uses the CH model to form beliefs and chooses a strategy which has the highest expected payoff given those beliefs. However, the CH model is only designed to apply to normal-form matrix games (in which players are assumed to choose simultaneously). Of course, many games unfold dynamically over time; these "extensive-form" games are often represented in the form of a tree with nodes that follow in temporal order. Extending the CH model to dynamic games is a challenge we will address in this research, in order to create an all-purpose CH benchmark that can be applied to any game. One way to apply the CH model to extensive-form games is to combine it with the backward induction principle and proceed recursively. That is, 0-step players randomize at all decision nodes. Then 1-step players optimize, starting from future information sets and working backward, and so forth for higher-step players. A different idea is to link the number of steps of strategic thinking that players do about other players to the number of steps ahead that they plan.
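The normal-form CH computation described above (before any extension to dynamic games) can be sketched in a few lines. This is a minimal illustration, not the proposal's estimation code: the function names, the value tau=1.5, the truncation of the Poisson distribution at max_k steps, and the equal splitting of ties are all assumptions made for the example.

```python
import math


def best_response(expected_payoffs):
    """Spread probability equally over all payoff-maximizing strategies."""
    top = max(expected_payoffs)
    hits = [1.0 if abs(v - top) < 1e-12 else 0.0 for v in expected_payoffs]
    return [h / sum(hits) for h in hits]


def poisson_ch(row_payoffs, col_payoffs, tau=1.5, max_k=6):
    """Predicted choice frequencies for a two-player matrix game.

    row_payoffs[i][j] / col_payoffs[i][j]: payoffs to the row / column
    player when row plays i and column plays j. tau and max_k are
    illustrative assumptions, not values taken from the proposal.
    """
    n_rows, n_cols = len(row_payoffs), len(row_payoffs[0])
    # Poisson frequencies f(k) = exp(-tau) * tau^k / k!, truncated at max_k.
    f = [math.exp(-tau) * tau ** k / math.factorial(k) for k in range(max_k + 1)]
    # 0-step players choose all strategies with equal probability.
    row_levels = [[1.0 / n_rows] * n_rows]
    col_levels = [[1.0 / n_cols] * n_cols]
    for k in range(1, max_k + 1):
        lower = sum(f[:k])  # normalizer for beliefs over steps 0..k-1
        col_belief = [sum(f[h] * col_levels[h][j] for h in range(k)) / lower
                      for j in range(n_cols)]
        row_belief = [sum(f[h] * row_levels[h][i] for h in range(k)) / lower
                      for i in range(n_rows)]
        row_ev = [sum(row_payoffs[i][j] * col_belief[j] for j in range(n_cols))
                  for i in range(n_rows)]
        col_ev = [sum(col_payoffs[i][j] * row_belief[i] for i in range(n_rows))
                  for j in range(n_cols)]
        row_levels.append(best_response(row_ev))
        col_levels.append(best_response(col_ev))
    total = sum(f)  # renormalize because the distribution is truncated
    row_mix = [sum(f[k] * row_levels[k][i] for k in range(max_k + 1)) / total
               for i in range(n_rows)]
    col_mix = [sum(f[k] * col_levels[k][j] for k in range(max_k + 1)) / total
               for j in range(n_cols)]
    return row_mix, col_mix


# Usage: a prisoner's dilemma (strategy 0 = cooperate, 1 = defect).
pd_row = [[3, 0], [5, 1]]
pd_col = [[3, 5], [0, 1]]
row_mix, col_mix = poisson_ch(pd_row, pd_col)
print(row_mix, col_mix)
```

In this example every player using one or more steps defects, so the only predicted cooperation comes from the 0-step players; an artificial CH benchmark player would best-respond to the returned mixture.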
The link between steps of thinking and steps of planning can be accomplished by a nested-logit procedure (footnote 9) which ties the number of steps of iterated thinking about other players to the number of steps of thinking ahead (and replaces a future subgame’s computed value by its “inclusive value”, an arithmetic average of possible future payoffs), in order to decide on the best current move. Preliminary estimates of this model indicate that it fits dynamic data reasonably well.

Footnote 9: The nested logit was developed to model stochastic choice in situations where there are natural hierarchies (nesting) of choice sets or features of choices.

5. Studying special groups: Fractionating Strategic IQ using experts and lesions

We plan to administer our strategic IQ battery to five special populations in order to gain insights into possible dissociations. To the extent that a given population shows a profile of expert, normal, or impaired performance across different tasks, this will provide evidence on whether the processes described in Table 1 are independent or are aspects of a single ability:

a) Undergraduates who are superbly skilled in mathematics (e.g., high SAT quantitative score): Since strategic IQ combines logic/mathematical reasoning and interpersonal intelligence, this group of subjects allows us to isolate strategic IQ from logic/mathematical reasoning intelligence. This group of subjects will not necessarily score high in the interpersonal component of the strategic IQ test (e.g., emotional anticipation).

b) Undergraduate and graduate students specially trained in game theory: To the extent that these subjects are more likely to behave like “equilibrium” players, their performance is likely to be close to the equilibrium benchmark. However, they will not earn high payoffs if they fail to understand that others may not be as rational as they are.

c) Chess players recruited at tournaments: Skilled chess players are good at planning many steps ahead, so they should do well in games along the planning dimension.
We plan to correlate their numerical chess-skill ratings with their scores along the planning dimension to determine whether the two scores are indeed correlated.

d) Highly-experienced business managers: Experienced business managers, who must consider how their competitors will react when choosing their managerial actions, may be more skilled in guessing what others are likely to do in social situations. Successful businesspeople are proud of their skills, but it is not clear whether those skills reflect only domain knowledge or generalizable strategic knowledge which will translate to high strategic IQ.

e) Lesion patients: Higher-order cognitive abilities depend on multiple distinct brain regions that participate in a "neural system" to produce behavior. The behavior of patients with lesions tells us which parts of the neural circuitry are necessary for the system to function properly, just as clipping one crucial wire in an electric circuit tells which wires are necessary for the circuit to function. We will use PI Adolphs's experience with the Iowa lesion patient database to correlate deficits in strategic thinking with lesion locations. Many subgroups could potentially be studied, but we have chosen two about which we have specific hypotheses, and which offer a reasonably large sample of patients with homogeneous lesions.

a. Hippocampal damage and learning

One interesting group is patients with damage restricted to the hippocampus (N=10) (without concomitant damage to the nearby amygdala, a small walnut-shaped region important in fear and learning) who have impaired declarative memory. The hippocampal patients usually have damage due to anoxia (lack of oxygen to the brain, e.g., following carbon monoxide poisoning). They have a very selective problem: their anterograde declarative memory is impaired, but their procedural memory and emotional memories are intact, as shown by prior studies with the Iowa patients (Bechara et al., 1995) and others.
(Their ability to form new episodic memories is impaired, like a buggy software program that “saves” your file every minute, but erases it by mistake rather than saving and “remembering” it.) The hippocampal-damage patients will not remember your name, or a list of numbers to memorize, but can learn and remember how to drive a car, and whether people and situations caused them emotional reactions (even if their declarative memory for those people and situations is absent). The film "Memento" dramatized patients of this sort. A large literature on the hippocampal-dependent memory system in humans and other animals has provided support for the idea that the hippocampus is critical for only one specific kind of memory. While there is some debate about the issue, it is those memories that we can consciously recollect, memories of particular episodes, called “explicit”, or “episodic” memories, that are most impaired (Squire and Zola-Morgan, 1991; Eichenbaum, 2000). Another description of this kind of memory calls it “relational memory,” to emphasize the idea that the hippocampus is critical for learning about the relations among objects and events presented on a particular occasion (Eichenbaum et al., 1994). A key role played by the hippocampus in strategic learning may be to abstract, from multiple particular learning episodes, an overall trend (Eichenbaum, 2003). Damage to the hippocampus may therefore limit the ability to recollect any specific episodic fact, or to form higher-level representations based on regularities across time in specific episodic facts. It does, however, permit memory for facts and skills, such as knowing how to do math, or knowing how to speak. It also permits normal episodic memory over a very short time span, as long as material is held in working memory (less than one minute in the absence of rehearsal).
Thus, patients with hippocampal damage who participate in an experimental game would remember what they need to do, and that they are playing a game, as long as they are engaged in the game. They would be able to understand the instructions and converse normally with the experimenter. However, a minute into the game, they would not remember what they did a minute ago, and following a minute’s break, they would not remember that they ever played the game. Whether hippocampal patients can learn in games is important because mathematical models of learning in games generally assume that people store memories of the quality of previous strategies in some numerical form, which is related statistically to the chance of choosing those strategies in the future (Camerer and Ho, 1999) and appears to be encoded, in some games, in the firing rates of neurons in the parietal lobe (e.g., Glimcher, 2003) and dorsolateral prefrontal cortex (Barraclough et al., in press). However, these models do not commit to any interpretation of where or how these memories and updated numerical ratings are stored in the brain. The hippocampal patients enable us to learn about an intriguing question that would not have occurred to scientists modeling learning in games: are memories about what happened previously in dynamic games explicit, declarative memories (which hippocampal patients cannot form), or procedural memories about physical acts, or emotional memories about whether a person harmed or helped you (which the hippocampal patients can form)? If people with hippocampal damage show evidence that a prior experience of being repaid a small amount in a repeated trust game leads them to invest less in future periods, then this would support the hypothesis that emotional, rather than declarative, memory is sufficient to guide normal behavior in such iterated games.
This would be a huge clue about the role that memory plays in iterative games and, hence, about how models of learning should be structured. Furthermore, the nature of learned memories about previous histories in games may depend on whether the histories are emotionally charged (as previous experiences in dilemma and trust games are likely to be) or more declarative and cognitive. It should be noted that such an experiment can give us insights that are difficult to obtain with normal subjects. A positive finding with the hippocampal-lesioned patients would suggest that implicit, emotional memories that are not consciously accessible can nonetheless guide our learning and behavior in iterated games. This conclusion would presumably apply as well to normal people, but it would be difficult to test, since a normal brain would invariably also have the declarative memory for the learning experience. The data from the lesion patients might show that such declarative memory, though normally present, is not necessary for performance in the game and does not in fact drive the learning changes measured in behavior.

b. Prefrontal Cortex

Multiple sectors of the prefrontal cortex are important for higher-order cognition, planning, and decision-making, abilities often grouped under the rubric “executive functions” (Wood and Grafman, 2003). There is some evidence of a hierarchical arrangement among these different prefrontal regions, such that “higher” regions (such as frontal polar cortex, Brodmann’s area 10) can direct the processing occurring in “lower” regions, such as ventromedial prefrontal cortex (Koechlin, Ody, and Kouneiher, 2003). Here we will explore the contributions made to strategic IQ of four distinct sectors of the frontal lobes: frontal pole (BA 10), ventromedial prefrontal cortex (VM), dorsolateral prefrontal cortex (DLPFC), and the insula (see Figure 1 for the locations of these different regions).
For brevity, we will describe in detail only studies with VM patients.

b.i. Ventromedial prefrontal cortex

Patients with bilateral damage to the ventromedial (VM) frontal lobe (N=10) are an important source of insight, because they may permit something like the converse dissociation to the one we hypothesize for the patients with hippocampal damage. While their declarative memory and logical reasoning abilities are intact, they are impaired in their ability to process emotional information (Damasio, 1994; Adolphs et al., 1995; Bechara et al., 2000). Patients with damage in the ventromedial prefrontal cortex are impaired in their ability to use emotions to guide their ability to plan ahead, so their behavior will be a useful way to measure the possible contributions of this mechanism to strategic IQ (see Bechara et al., 1998, for details on typical VM patient profiles). This patient population is also well suited to study because their lesions are fairly homogeneous and localized, and a lot is known about their skills on a wide range of tasks and psychometric measures. Furthermore, while we can study other sectors of the prefrontal cortex using fMRI, this region is difficult to study using fMRI due to a technical signal artifact (paramagnetic susceptibility due to the proximity of the air sinuses), making the lesion method potentially more powerful than imaging for this region.

Figure 2 shows pilot data in the beauty contest game repeated six times, from 4 VM patients and 1 control (with temporal-lobe damage), who were given a battery of games and risky choice tasks over the last four months in Iowa under the supervision of PI Adolphs.

[Figure 2: VM patients (n=4) and control, (2/3)-beauty contest game. Vertical axis: number chosen (0 to 60); horizontal axis: period (1 to 6); series: mean+1 SE, mean-1 SE, control.]

The four VM patients (three males, one female) range in age from 51-60 and range in measured IQ from 97-147. The control patient is a 49-year-old female with unknown IQ.
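As background for reading Figure 2, the iterated reasoning in a (2/3)-beauty contest can be sketched numerically. The sketch makes assumptions that this section does not state: choices are taken to lie in [0, 100], so that a 0-step player choosing at random averages 50; each further step best-responds only to the step just below it (a simplification of the CH mixture belief); and a player ignores her own small effect on the group average. The name k_step_choice and its parameters are illustrative.

```python
def k_step_choice(k, p=2 / 3, level0_mean=50.0):
    """Number chosen after k rounds of iterated best response.

    Assumes a 0-step player averages level0_mean and that each higher
    step targets p times the choice of the step below it.
    """
    return level0_mean * p ** k


# Usage: choices shrink geometrically toward the equilibrium of 0.
for k in range(5):
    print(k, round(k_step_choice(k), 1))
```

Under these assumptions, one step of thinking gives a choice of about 33, two steps about 22, and further steps approach the equilibrium prediction of 0.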
The samples are small, and obviously not random, but that is typical in lesion-patient studies (which is why triangulating results with fMRI and other measures is important). The control patient’s choices, shown by the thin line, are quite close to those in typical normal-subject samples (see Camerer, Ho and Chong, 2004): The control subject first chose 33 (reflecting one step of strategic thinking) and converged steadily downward over time toward the equilibrium prediction of 0. The two dotted lines show the mean, plus and minus one standard error, of the four VM patient choices. The VM patients’ number choices are significantly above the control in the first two periods. This is a suggestion, albeit with a limited sample, that VM is an area which is important for both strategic reasoning and learning over time, at least in the first period of learning (the first and fifth principles in our Table 1 list). Their slower learning raises the possibility that VM patients do not produce enough regret or counterfactual thinking to learn as rapidly from feedback other than their own payoffs, compared to normal subjects. These data show that it is possible to study strategic thinking using lesion patients, and are illustrative of what might be learned in larger samples of lesion patients doing a wider range of games.

6. Measuring neural activity

We can also use brain imaging (fMRI) and eye tracking to establish neural circuitry in normally-functioning adults (as well as fMRI imaging of lesion patients, which is a rather new combination of tools). Part of our research is to do fMRI scans of normal subjects playing a battery of strategic IQ tests. Using Caltech’s new (c. 2003) 3T Siemens scanner dedicated to research, PI Camerer has successfully collaborated in a "hyperscan" consortium with neuroscientists at Baylor, Emory, and Princeton, using subjects in two scanners making simultaneous, web-linked decisions (e.g., Tomlin et al., 2004).
The methods are fairly standard in fMRI work: Subjects see visually similar presentations of a task screen, which permits subtraction of activity during treatments X and Y to learn which brain regions are differentially active during task X relative to task Y. In our scanner setup, subjects wear a pair of goggles which show a computer screen. The goggles contain a tiny eye-tracking camera which records the movements of the subjects' eyes (by contrasting the whites of the eyes with the center). Eye tracking is ideally suited to studying strategic thinking, because the payoffs of players from combinations of strategies are usually shown in distinct cells of a matrix, and it is easy to relate rules for strategic thinking to the order in which different matrix cells are "looked up" visually (footnote 10). Eye-tracking has proved useful in measuring steps of strategic thinking (see Costa-Gomes et al. (2001) and Costa-Gomes and Crawford (2003), building on Camerer et al. (1994), Johnson et al. (2002) and Camerer and Johnson (in press)).

Footnote 10: For example, if players think others are completely random, then those players don't need to look up the payoffs of the other players. If players do look at payoffs of others, it follows that they are either thinking more strategically, or perhaps expressing social preferences about whether they are earning less or more money than other players (a necessary part of emotional forecasting). Furthermore, the regret-driven component of EWA learning requires players who chose one row of a matrix, for example, to look at how much other row choices would have earned, so we can use eye-tracking as a measure of how much counterfactual thinking is being used as part of learning.

7. Research plan and expected project significance

Year 1: Extend CH model to dynamic games to enhance CH as a benchmark for strategic IQ. Conduct experiments with many games that tap the five strategic dimensions. Perform psychometric factor analysis, and determine whether strategic thinking is dissociable from general intelligence (short-form Wechsler scale) and emotional intelligence (MSCEIT). Preliminary fMRI and eye-tracking work on the CH steps-of-thinking model to establish neural circuitry of higher-order strategic thinking.

Year 2: Web-based programming of a strategic IQ test that can be widely taken, capturing data from a wide range of demographic and educational groups. Administer the strategic IQ measure to specialized lesion patient populations. PI Adolphs budgets studies with 50 patients (10 each with VM, Hippocampal, DLPFC, BA10, and insula damage, and 10 controls).

Year 3: Continuing studies with lesion patients. Collect and assemble web-based data from strategic IQ test. Dissemination and publication of findings.

Expected Project Significance:

1. We will develop a database of games, and actual choices, that permit widespread use of the strategic IQ measure for personnel evaluation and training (footnote 11). The system can also be implemented on a website, which permits widespread data collection and outreach.

2. We will extend the CH model to dynamic games (to provide a strategic IQ benchmark).

3. We will provide new data on behavior of lesion patients, and expert populations, in a wide battery of games.

4. We will provide fMRI and other (e.g., eye-tracking) neurally-relevant evidence of behavior in many games. Little is known about the neural underpinning in a broad class of games. These data will therefore pose new questions in neuroscience about strategic thinking.

5. We will exploit the new capacity to “hyperscan” more than one brain at the same time. Simultaneous scans are useful for understanding dyadic activation. For example, the rejection of an ultimatum offer reflects the offer, the offerer’s guess about what offer would be rejected, and the behavior of the responder.
Understanding this behavior by scanning only one brain is like understanding a tennis match by watching only one player’s strokes.

Footnote 11: A former student of PI Ho reported that her tech company uses the beauty contest game as a kind of mini-IQ test of general intelligence to evaluate prospective employees. This suggests the potential corporate value of a broader test which is psychometrically calibrated.

8. Conclusion

The proposed work is an ambitious effort to develop a behavioral theory of human social dynamics and implement the theory as a strategic IQ measurement system. It brings together strands of mathematical game theory, psychology, and neurobiology, in order to create the system for calibrating how well different groups of people behave in social situations. It will be scientifically grounded because we will obtain survey data from special groups of participants (e.g., experts) as well as lesion patients who have localized brain damage, and fMRI measures of brain activity on typical normal control subjects. People can use it to evaluate their own strategic thinking skills, and it can aid in personnel selection and show people areas where their strategic thinking can be improved.

9. Summary of Work under Previous Most-Relevant Grant

NSF Grant No. SES-0078911, "Collaborative research: Sophisticated learning and strategic teaching in repeated games," Colin Camerer and Teck-Hua Ho, 8/1/2000-7/31/2003, $244,580.

In [2] and [3], PIs Camerer and Ho extended their prior work with Chong on “experience-weighted attraction” (EWA) learning ([1]) to include sophistication and strategic teaching in repeated games. Sophisticated players understand that other players are either learning or sophisticated like themselves; sophisticated teachers account for the way their current-round actions influence learners’ future-round behavior.
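For readers unfamiliar with the basic EWA rule that these extensions build on, one updating step can be sketched as follows. This is a minimal sketch based on the published EWA model in [1], not the PIs' estimation code. The parameters delta (weight on forgone payoffs) and phi (decay of past attractions) correspond to the regret and decay processes discussed in this proposal; rho (decay of the experience weight) and lam (response sensitivity in the logit choice rule) are not discussed in this section and are included here as assumptions, as are all function names and the numerical values in the usage example.

```python
import math


def ewa_update(attractions, experience, chosen, payoffs, delta, phi, rho):
    """One EWA updating step for a single player.

    attractions: A_j(t-1) for each strategy j
    experience:  N(t-1), the experience weight
    chosen:      index of the strategy actually played in period t
    payoffs:     payoffs[j] is what strategy j earned, or would have
                 earned, against the other players' actual choices
    """
    new_experience = rho * experience + 1.0
    new_attractions = []
    for j, (old, pay) in enumerate(zip(attractions, payoffs)):
        # The chosen strategy is reinforced fully; unchosen strategies
        # are reinforced by the fraction delta of their forgone payoff.
        weight = 1.0 if j == chosen else delta
        new_attractions.append(
            (phi * experience * old + weight * pay) / new_experience
        )
    return new_attractions, new_experience


def logit_choice(attractions, lam=1.0):
    """Map attractions to choice probabilities with a logit rule."""
    top = max(attractions)  # subtract the max for numerical stability
    weights = [math.exp(lam * (a - top)) for a in attractions]
    total = sum(weights)
    return [w / total for w in weights]


# Usage with illustrative values: strategy 0 was played and earned 2,
# while strategy 1 would have earned 4.
attr, n = ewa_update([0.0, 0.0], 1.0, chosen=0, payoffs=[2.0, 4.0],
                     delta=0.5, phi=1.0, rho=0.0)
print(attr, n, logit_choice(attr))
```

Setting delta to 1 reinforces chosen and unchosen strategies equally, while delta of 0 gives pure reinforcement of the chosen strategy only.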
Including teaching also allows an empirical learning-based approach to reputation formation which predicts better than a quantal-response extension of the standard Bayesian-Nash approach. The “self-tuning EWA” model ([4]) replaces fixed EWA parameters with functions of a subject’s earlier experience in the games. The self-tuning EWA model has only one parameter to be estimated, but it is as accurate as the fixed-parameter EWA. [5] adapts the EWA model to predict consumer choice using panel-level data from sixteen product categories and 133,492 purchases. The central feature of the model is that buying one product leads to partial reinforcement of “nearby” products with similar features (brand names, package sizes, flavors, etc.). The model is simpler than other leading models but forecasts more accurately out-of-sample. The models can also be used to forecast test-market results from products which combine existing features in a new way. In [6], we develop a one-parameter cognitive hierarchy (CH) model to predict behavior in one-shot games, and initial conditions in repeated games (as discussed in section 4 above). The CH model fits and predicts behavior better than Nash equilibrium in 130 experimental samples of matrix games, mixed-equilibrium games and entry games. [7] is a short published report describing this model. [8] is a published version of a Nobel Symposium summarizing all our previous work on the CH “thinking model”, the EWA learning model, and the teaching version of the EWA model. [9] and [10] introduce behavioral game theory to cognitive scientists and neuroscientists.

[1] Camerer and Ho, “Experience Weighted Attraction Learning in Normal Form Games,” Econometrica, 67 (1999), 827-873.
[2] Camerer, Ho and Chong, “Sophisticated EWA Learning and Strategic Teaching in Repeated Games,” Journal of Economic Theory, 104 (2002), 137-188.
[3] Chong, Ho, and Camerer, “Strategic Teaching and Equilibrium in Repeated Entry and Trust Games” (under revision for Games and Economic Behavior), 2003.
[4] Ho, Camerer and Chong, “Economics of Learning Models: A Self-tuning Theory of Learning in Games,” 2004.
[5] Ho and Chong, “A Parsimonious Model of SKU Choice,” Journal of Marketing Research, Vol. XL (Aug 2003), 351-365.
[6] Camerer, Ho and Chong, “A Cognitive Hierarchy Theory of One-shot Games,” Quarterly Journal of Economics, August 2004.
[7] Camerer, Ho and Chong, “Thinking, learning and teaching in games,” American Economic Review, 93 (2003), 182-186.
[8] Camerer, Ho and Chong, “Behavioral Game Theory: Thinking, learning and teaching in games,” in Essays in Honor of Werner Güth (Steffen Huck, Ed.) (in press).
[9] Camerer, “Behavioural game theory: Thinking and learning in games,” Trends in Cognitive Sciences, May 2003.
[10] Camerer, “Strategizing in the brain”, Science, March 2003.

References

Adolphs, R.; A. Bechara, D. Tranel, H. Damasio, A. Damasio (1995). "Neuropsychological Approaches to Reasoning and Decision-Making". In: Neurobiology of Decision-Making, A. Damasio, H. Damasio, Y. Christen (eds.), pp. 157-180. Springer Verlag, New York.
Baron-Cohen, Simon. Mindblindness: An Essay on Autism and Theory of Mind (Cambridge, Massachusetts: MIT Press, 1995).
Barraclough, D.; M. Conroy and D. Lee. (In press). Prefrontal cortex and decision making in a mixed-strategy game. Nature Neuroscience.
Bechara A, Damasio H, Damasio AR (2000) Emotion, decision-making, and the orbitofrontal cortex. Cerebral Cortex 10:295-307.
Bechara A, Damasio H, Tranel D, Anderson SW (1998) Dissociation of working memory from decision making within the human prefrontal cortex. The Journal of Neuroscience 18:428-437.
Bechara A, Tranel D, Damasio H, Adolphs R, Rockland C, Damasio AR (1995) Double dissociation of conditioning and declarative knowledge relative to the amygdala and hippocampus in humans. Science 269:1115-1118.
Berg, J., Dickhaut, J. and McCabe, K. “Trust, reciprocity, and social history,” Games and Economic Behavior, 10, 122-142.
Camerer, Colin F., and K. Weigelt. January 1988. “Experimental Tests of a Sequential Equilibrium Reputation Model.” Econometrica, 56, 1-36.
Camerer, C., E.J. Johnson, T. Rymon, and S. Sen, "Cognition and Framing in Sequential Bargaining for Gains and Losses," in K. Binmore, A. Kirman, and P. Tani (Eds.), Frontiers of Game Theory, MIT Press, 1994.
Camerer and Ho, “Experience Weighted Attraction Learning in Normal Form Games,” Econometrica, 67 (1999), 827-873.
Camerer, Colin F., Behavioral Game Theory: Experiments on Strategic Interaction, Princeton: Princeton University Press, (2003).
Camerer, Colin F., Teck-Hua Ho and Juin-Kuan Chong, “A Cognitive Hierarchy Model of Games”, Quarterly Journal of Economics, August 2004.
Camerer, C. and Johnson, E. (In press). “Thinking about attention in games: Backward and forward induction,” In I. Brocas and J. Carillo (Eds.) Psychology and Economics, Oxford University Press.
Costa-Gomes, Miguel, Vincent Crawford, and Bruno Broseta, "Cognition and Behavior in Normal-Form Games: An Experimental Study," Econometrica 69 (September 2001), 1193-1235.
Costa-Gomes, Miguel A. and Vincent P. Crawford, "Cognition and Behavior in Two-Person Guessing Games: An Experimental Study," UCSD Working Paper, 2003.
Damasio AR (1994) Descartes' Error: Emotion, Reason, and the Human Brain. New York: Grosset/Putnam.
Eichenbaum H (2000) A cortical-hippocampal system for declarative memory. Nature Reviews Neuroscience 1:41-50.
Eichenbaum H (2003) How does the hippocampus contribute to memory? TICS 7:427-429.
Eichenbaum H, Otto T, Cohen NJ (1994) Two functional components of the hippocampal memory system. Behavioral and Brain Sciences, 17:449-518.
Fehr, E. and K. Schmidt, “A Theory of Fairness, Competition and Cooperation," Quarterly Journal of Economics, 114, (1999), 817-868.
Fletcher, P. C., Happe, F., Frith, U., Baker, S. C., et al. Other minds in the brain: A functional imaging study of "theory of mind" in story comprehension. Cognition 57. 2 (Nov 1995), 109-128.
Gardner, H. Frames of Mind. New York: Basic Books Inc., 1983.
Glimcher, Paul. Decisions, Uncertainty and the Brain. MIT Press, 2003.
Hill, Elisabeth and David Sally. 2003. "Dilemmas and Bargains: Autism, Theory-of-mind, Cooperation and Fairness". Working paper. http://ssrn.com/abstract=407040
Ho, T-H., Camerer, C., and Weigelt, K., "Iterated Dominance and Iterated Best Response in Experimental P-Beauty Contests," American Economic Review, 88 (1998), 947-969.
Ho, T-H., Camerer, C. and Chong, J-K. "The Economics of Learning Models: A Self-tuning Theory of Learning in Games," UC, Berkeley Working Paper, 2004.
Jehiel, Philippe and Dov Samet, “Valuation Equilibria," Toulouse working paper, (2003).
Johnson, Eric, Colin F. Camerer, Sankar Sen, and Talia Rymon. “Detecting Failures of Backward Induction: Monitoring Information Search in Sequential Bargaining." Journal of Economic Theory, 104:1, 16-47, 2002.
Koechlin, Ody, and Kouneiher (2003), “The architecture of cognitive control in the human prefrontal cortex.” Science 302: 1181-1185.
Lopes, Paulo; Marc Brackett; John Nezlek; Astrid Schutz; and Peter Salovey. Emotional intelligence and social interaction. Personality and Social Psychology Bulletin, in press.
McCabe et al. (2003).
Mehta, Judith & Starmer, Chris & Sugden, Robert, 1994. "The Nature of Salience: An Experimental Investigation of Pure Coordination Games," American Economic Review, Vol. 84 (3) pp. 658-73.
Rabin, Matthew, “Incorporating Fairness into Game Theory and Economics," American Economic Review, 83, (1993), 1281-1302.
Salovey, P., Mayer, J.D., & Caruso, D. (2002). The positive psychology of emotional intelligence. In C.R. Snyder & S.J. Lopez (Eds.), The handbook of positive psychology (pp. 159-171). New York: Oxford University Press.
Sanfey, A.G., J.K. Rilling, J.A. Aronson, Leigh E. Nystrom and J.D. Cohen. 2003. “The Neural Basis of Economic Decision-Making in the Ultimatum Game.” Science, 300, 5626, pp. 1755-1758.
Satz, P. and S. Mogel. An abbreviation of the WAIS for clinical use. Journal of Clinical Psychology, 1962, 18, 77-79.
Schelling, Thomas, Strategy of Conflict. Harvard University Press, 1960.
Schultz, Wolfram and A. Dickinson. 2000. "Neuronal coding of prediction errors." Annual Review of Neuroscience, 23, pp. 473-500.
Squire LR, Zola-Morgan S (1991) The medial temporal lobe memory system. Science 253:1380-1386.
Tomlin, Damon; Cedric Anen; Brooks King-Casas; Colin Camerer; Steven Quartz; and P. Read Montague. Dynamic trust game reveals mapping of ‘self’ and ‘other’ responses along human cingulate cortex. Working paper, March 2004 (in submission to Science).
Wood, Jacqueline and Jordan Grafman. Human prefrontal cortex: Processing and representational perspectives. Nature Reviews Neuroscience, February 2003, 4, 139-147.