advertisement

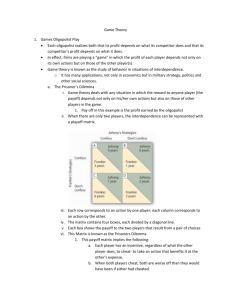

CHAPTER 8 MANAGERIAL ECONOMICS: GAME THEORY FOR MANAGERS

Game

A game is a formal description of a strategic situation. A game is a contest involving two or more decision makers, each of whom wants to win. There are two-person and multiperson games.

Game theory

Game theory has been variously defined as:

(1) a branch of mathematical analysis developed to study decision making in conflict situations. Game theory provides a mathematical process for selecting an optimum strategy (that is, an optimum decision or a sequence of decisions) in the face of an opponent who has a strategy of his own. Such a situation exists when two or more decision makers who have different objectives act on the same system or share the same resources.

(2) the study of how optimal strategies are formulated in conflict. Game theory is the formal study of decision-making where several players must make choices that potentially affect the interests of the other players.

(3) the formal study of conflict and cooperation. Game-theoretic concepts apply whenever the actions of several agents are interdependent. These agents may be individuals, groups, firms, or any combination of these. The concepts of game theory provide a language to formulate, structure, analyze, and understand strategic scenarios.

8.1.1. Assumptions of Game Theory

(1) Each decision maker (“PLAYER”) has available to him two or more well-specified choices or sequences of choices (called “PLAYS”).
(2) Every possible combination of plays available to the players leads to a well-defined end-state (win, loss, or draw) that terminates the game.
(3) A specified payoff for each player is associated with each end-state (a zero-sum game means that the sum of payoffs to all players is zero in each end-state).
(4) Each decision maker has perfect knowledge of the game and of his opposition; that is, he knows in full detail the rules of the game as well as the payoffs of all other players.
(5) All decision makers are rational; that is, each player, given two alternatives, will select the one that yields him the greater payoff.

The last two assumptions, in particular, restrict the application of game theory in real-world conflict situations. Nonetheless, game theory has provided a means for analyzing many problems of interest in economics, management science, and other fields.

The main purpose of game theory is to consider situations where, instead of making decisions as reactions to exogenous prices (“dead variables”), agents make decisions as strategic reactions to other agents’ actions (“live variables”). An agent faces a set of moves he can play and forms a strategy, a best response to his environment, by which he plays. Strategies can be either “pure” (always play a particular move) or “mixed” (choose among moves at random, with given probabilities).

Single-period and multiple-period (repeated) games

A game that is played only once is called a single-period game, while a game that is played more than once is called a multiple-period or repeated game. In repeated games, each firm may attempt to influence its rival’s behaviour by sending signals that promise to reward cooperative behaviour and threaten to punish non-cooperative behaviour.

Non-cooperative (or strategic) games

In a game in strategic form, a strategy is one of the given possible actions of a player. In an extensive game, a strategy is a complete plan of choices, one for each decision point of the player. A game in strategic form, also called normal form, is a compact representation of a game in which players simultaneously choose their strategies. The resulting payoffs are presented in a table with a cell for each strategy combination.

A game is non-cooperative if negotiation and enforcement of a binding contract are not possible. By contrast, an example of a cooperative game is bargaining between a buyer and a seller over the price of an item, where the two sides can reach a binding agreement.
The strategic form (also called normal form) is the basic type of game studied in non-cooperative game theory. A game in strategic form lists each player’s strategies and the outcomes that result from each possible combination of choices. An outcome is represented by a separate payoff for each player, which is a number (also called utility) that reflects the desirability of the outcome to that player, for whatever reason. When the outcome is random, payoffs are usually weighted with their probabilities. The expected payoff incorporates the player’s attitude towards risk.

Cooperative (or coalitional) games

A coalitional (or cooperative) game is a high-level description, specifying only what payoffs each potential group, or coalition, can obtain by the cooperation of its members. What is not made explicit is the process by which the coalition forms. As an example, the players may be several parties in parliament. Each party has a different strength, based upon the number of seats occupied by party members. The game describes which coalitions of parties can form a majority, but does not delineate, for example, the negotiation process through which an agreement to vote en bloc is achieved.

A game is cooperative if the players can negotiate binding contracts that allow them to plan joint strategies. Cooperative game theory investigates such coalitional games with respect to the relative amounts of power held by various players, or how a successful coalition should divide its proceeds. This is most naturally applied to situations arising in political science or international relations, where concepts like power are most important. For example, a solution for the division of gains from agreement in a bargaining problem depends solely on the relative strengths of the two parties’ bargaining positions.
The amount of power a side has is determined by the usually inefficient outcome that results when negotiations break down.

The fundamental difference between cooperative and non-cooperative games lies in the contracting possibilities: in cooperative games binding contracts are possible; in non-cooperative games they are not. With this approach, an oligopolist assumes that its competitors choose their optimal strategies and then develops its own best counterstrategy.

Extensive games

An extensive game (or extensive-form game) describes with a tree how a game is played. It depicts the order in which players make moves and the information each player has at each decision point. The extensive form, also called a game tree, is more detailed than the strategic form of a game. It is a complete description of how the game is played over time. This includes the order in which players take actions, the information that players have at the time they must take those actions, and the times at which any uncertainty in the situation is resolved.

8.3.1. Extensive games with imperfect information

Typically, players do not have full access to all the information that is relevant to their choices. Extensive games with imperfect information model exactly which information is available to the players when they make a move. Modeling and evaluating strategic information precisely is one of the strengths of game theory.

Consider the situation faced by a large software company after a small startup has announced deployment of a key new technology. The large company has a large research and development operation, and it is generally known that it has researchers working on a wide variety of innovations. However, only the large company knows for sure whether or not it has made any progress on a product similar to the startup’s new technology.
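When every move is observed (perfect information), a game tree can be solved by backward induction: start at the last decision, let that player choose his best reply, and then move up the tree. The sketch below is a minimal illustration under that assumption; it does not model the imperfect-information case just described. It uses the Firm A / Firm B new-product game from the sequential-games discussion, with Firm A moving first and payoffs in $ million listed as (Firm A, Firm B). The nested-dict layout and the name `solve_two_stage` are illustrative choices, not part of the original text.

```python
# Backward induction on a two-stage game tree with perfect information.
# Payoffs are ($ million to Firm A, $ million to Firm B).

# Firm A moves first; Firm B observes A's move and then replies.
TREE = {
    "No new product": {
        "No new product": (2, 2),
        "New product": (-5, 10),
    },
    "New product": {
        "No new product": (10, -5),
        "New product": (-7, -7),
    },
}


def solve_two_stage(tree):
    """Return (A's move, B's reply, payoffs) found by backward induction."""
    best = None
    for a_move, replies in tree.items():
        # Stage 2: B picks the reply that maximizes B's own payoff.
        b_reply = max(replies, key=lambda r: replies[r][1])
        payoffs = replies[b_reply]
        # Stage 1: A anticipates B's reply and keeps the move best for A.
        if best is None or payoffs[0] > best[2][0]:
            best = (a_move, b_reply, payoffs)
    return best


if __name__ == "__main__":
    a_move, b_reply, payoffs = solve_two_stage(TREE)
    print(f"A: {a_move}, B: {b_reply}, payoffs (A, B) = {payoffs}")
```

Run as a script, it prints `A: New product, B: No new product, payoffs (A, B) = (10, -5)`: Firm B’s best reply to a new product is not to launch one, so by moving first Firm A earns $10m, which illustrates the first-mover advantage.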
Sequential and simultaneous games

Sequential game: one of the players moves first, and the other player observes this move and then responds. Entry into a new market is an example of a sequential game. The new firm decides whether or not to enter, and the existing firm then decides whether to ignore the new firm or to try to prevent entry. In sequential games there may be an advantage to the player who acts first (first-mover advantage; for example, a firm that moves into a new market with a new product may quickly build product loyalty). The player who follows may be penalized with losses.

Payoffs are listed as (Firm A; Firm B):

| | Firm B: No new product | Firm B: Introduce new product |
|---|---|---|
| Firm A: No new product | $2m; $2m | -$5m; $10m |
| Firm A: Introduce new product | $10m; -$5m | -$7m; -$7m |

For a sequential game, it is convenient to map the choices facing the players in the form of a game tree. Game tree for the game above, with Firm A moving first (payoffs listed as Firm A, Firm B):
- Firm A: No new product; Firm B then chooses:
  - No new product: ($2m, $2m)
  - New product: (-$5m, $10m)
- Firm A: New product; Firm B then chooses:
  - No new product: ($10m, -$5m)
  - New product: (-$7m, -$7m)

Simultaneous game: each player has to make his decision without knowing what the other player has decided or will decide. The difference between a sequential and a simultaneous game has to do with lack of information. Without observable information, coordination is difficult to achieve.

First-mover advantage

Many games in strategic form exhibit what may be called the first-mover advantage. A player in a game becomes a first mover or “leader” when he can commit to a strategy, that is, choose a strategy irrevocably and inform the other players about it; this is a change of the “rules of the game.” The first-mover advantage states that a player who can become a leader is not worse off than in the original game where the players act simultaneously. In other words, if one of the players has the power to commit, he or she should do so.

8.4.
Zero-sum games and positive-sum games

The extreme case of players with fully opposed interests is embodied in the class of two-player zero-sum (or constant-sum) games. Familiar examples are games such as chess and poker. A two-person game is one in which only two parties play, as in the case of a union and a company in a bargaining session. Zero-sum means that the losses of one player must equal the gains of the other player. A game is said to be zero-sum if, for any outcome, the sum of the payoffs to all players is zero. In a two-player zero-sum game, one player’s gain is the other player’s loss, so their interests are diametrically opposed. There is no way for all players to become better off.

N.B. Some authors (notably Png and Lehman, 2007), however, argue that a game can be zero-sum even if the consequences for the various players do not add up to zero in every cell of the game in strategic form. If the consequences for the various players add up to the same number (whether positive, zero or negative) in every cell, then one player can become better off only if another is made worse off. Accordingly, such a situation is also a zero-sum game.

A positive-sum game is a strategic situation where one player can become better off without another being made worse off.

Example

Suppose X and Y are operating in a duopoly and their market shares have been stable until now. A new marketing manager for X has developed two distinct advertising strategies, one using radio spots and the other newspaper ads. Upon hearing this, the manager of Y also prepares radio and newspaper ads. X’s payoff matrix is shown below.

| | Player Y: Y1 (use radio) | Player Y: Y2 (use newspaper) |
|---|---|---|
| Player X: X1 (use radio) | 2 | 7 |
| Player X: X2 (use newspaper) | 6 | -4 |

The payoff matrix shows what will happen to current market shares if both X and Y begin advertising. By convention, payoffs are shown only for the first game player, X.
Game outcomes (% change in market share):

| X’s strategy | Y’s strategy | Outcome |
|---|---|---|
| X1 (use radio) | Y1 (use radio) | X wins 2 and Y loses 2 |
| X1 (use radio) | Y2 (use newspaper) | X wins 7 and Y loses 7 |
| X2 (use newspaper) | Y1 (use radio) | X wins 6 and Y loses 6 |
| X2 (use newspaper) | Y2 (use newspaper) | X loses 4 and Y wins 4 |

However, the payoffs can also be shown for both players (X; Y):

| | Player Y: Y1 (use radio) | Player Y: Y2 (use newspaper) |
|---|---|---|
| Player X: X1 (use radio) | 2; -2 | 7; -7 |
| Player X: X2 (use newspaper) | 6; -6 | -4; +4 |

Constant-sum game

In a constant-sum game the sum of the payoffs to all players is always the same, whatever strategies are chosen. An example is the game below, in which the payoffs in every cell add up to 5 (payoffs listed as Firm A, Firm B):

| | Firm B: X | Firm B: Y |
|---|---|---|
| Firm A: X | +1, +4 | +3, +2 |
| Firm A: Y | +4, +1 | 0, +5 |

8.5. Pure strategy

In some games, the strategy each player follows will always be the same regardless of the other player’s strategy. This is called a “pure” strategy. A saddle point is a situation where both players are playing pure strategies. Strategies for saddle-point games can be determined without performing any calculations. Consider the following game (payoffs listed as X; Y):

| | Y1 (use radio) | Y2 (use newspaper) |
|---|---|---|
| X1 | 3; -3 | 5; -5 |
| X2 | 1; -1 | -2; +2 |

Does the game have a saddle point? X will always play strategy X1, because X1 gives X more than X2 whatever Y does: the worst outcome for X playing X1 is +3 points, while the best outcome for X playing X2 is +1. Knowing that X will always play X1, Y will always play Y1: Y loses 3 points by playing Y1, but 5 points by playing Y2. X has a dominant strategy (X1), Y’s best reply to it is the pure strategy Y1, and therefore the game has a saddle point. The numerical value of the saddle point is the game outcome. For this example, the saddle point is the cell 3; -3.

Showing only X’s payoffs, the saddle point is the cell X1, Y1:

| | Y1 (use radio) | Y2 (use newspaper) |
|---|---|---|
| X1 | 3 (saddle point) | 5 |
| X2 | 1 | -2 |

Value of the game

The value of the game is the average or expected game outcome if the game is played an infinite number of times. The value of the game for this example is 3.
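The saddle-point test, and the minimax procedure for finding it, can be checked mechanically. The sketch below is a minimal illustration assuming a 2 x 2 zero-sum table whose entries are payoffs to X. It computes the lower value (largest row minimum) and the upper value (smallest column maximum); if they are equal it returns the saddle-point strategies, and otherwise it falls back on the standard 2 x 2 mixed-strategy formulas, in which each player mixes so that the opponent gains nothing by switching. The function names and the use of `fractions.Fraction` for exact probabilities are illustrative assumptions.

```python
from fractions import Fraction


def lower_upper_values(m):
    """Return (lower value, upper value) of a zero-sum payoff table.

    m[i][j] is the payoff to X (rows); Y (columns) receives -m[i][j].
    Lower value = largest row minimum (X's maximin choice).
    Upper value = smallest column maximum (Y's minimax choice).
    """
    lower = max(min(row) for row in m)
    upper = min(max(col) for col in zip(*m))
    return lower, upper


def solve_2x2(m):
    """Solve a 2 x 2 zero-sum game.

    Returns (p, q, v): p = probability that X plays X1 (row 1),
    q = probability that Y plays Y1 (column 1), v = value of the game to X.
    """
    (a, b), (c, d) = m
    lower, upper = lower_upper_values(m)
    if lower == upper:
        # Saddle point: an entry that is the smallest in its row and the
        # largest in its column; both players use pure strategies.
        for i, row in enumerate(m):
            for j, v in enumerate(row):
                if v == min(row) and v == max(r[j] for r in m):
                    return Fraction(1 - i), Fraction(1 - j), Fraction(v)
    # No saddle point. p makes Y indifferent between Y1 and Y2:
    #   a*p + c*(1-p) = b*p + d*(1-p)
    # q makes X indifferent between X1 and X2:
    #   a*q + b*(1-q) = c*q + d*(1-q)
    denom = a - b - c + d
    p = Fraction(d - c, denom)
    q = Fraction(d - b, denom)
    v = Fraction(a * d - b * c, denom)
    return p, q, v


if __name__ == "__main__":
    print(solve_2x2([[3, 5], [1, -2]]))    # saddle-point game of Section 8.5
    print(solve_2x2([[10, 6], [-12, 2]]))  # minimax table in the text
    print(solve_2x2([[2, 7], [6, -4]]))    # radio/newspaper game of Section 8.4
```

For the saddle-point game above, it returns probabilities 1 and 1 (X1 and Y1) with value 3; for the minimax table in this chapter it returns X1 and Y2 with value 6. The radio/newspaper game has lower value 2 and upper value 6, so there is no saddle point; the sketch returns X1 with probability 2/3, Y1 with probability 11/15, and a value of 10/3 (about 3.33 percentage points of market share to X).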
If a game has a saddle point, the value of the game is equal to the numerical value of the saddle point. An entry is a saddle point if both of the following conditions hold:
1. It is the largest number in its column.
2. It is the smallest number in its row.

Minimax criterion

In decision theory, this criterion described a pessimistic decision maker who maximizes his minimum gain. Minimising one’s maximum losses is identical to maximizing one’s minimum gains; in game theory this is the so-called minimax criterion. It is one approach to selecting strategies that will minimize losses for each player. The minimax procedure is carried out as follows:
1. Find the smallest number in each row. Pick the largest of these numbers. This number is called the lower value of the game, and that row is X’s maximin strategy.
2. Find the largest number in each column. Pick the smallest of these numbers. This number is called the upper value of the game, and that column is Y’s minimax strategy.
3. If the upper value and the lower value of the game are the same, there is a saddle point, which is equal to the upper or lower value. This is an alternative method of determining whether or not a saddle point exists.

| | Y1 | Y2 | Row minimum |
|---|---|---|---|
| X1 | 10 | 6 | 6 (lower value) |
| X2 | -12 | 2 | -12 |
| Column maximum | 10 | 6 (upper value) | |

Since the upper value equals the lower value of the game, the saddle point is 6. X’s strategy is to play X1, and Y’s strategy is to play Y2.

Mixed strategy games

In many games there is no strictly determined solution. For example, the radio and newspaper advertising game in Section 8.4 (payoffs 2, 7, 6, -4) has no saddle point: its lower value is 2, its upper value is 6, and neither player has a dominant strategy. The solution lies in the mixed strategy developed by von Neumann and Morgenstern in 1944. A player follows a mixed strategy by choosing his action randomly, using fixed probabilities.
Each player’s mixed strategy involves selecting the probabilities that maximise the expected payoff he can guarantee himself, whatever strategy the other player employs. Mixed strategies are a natural device for constant-sum games with imperfect information: leaving one’s own actions open reduces one’s vulnerability to malicious responses.

When there is no saddle point, the players will play each strategy for a certain percentage of the time. This is called a mixed strategy. For 2 x 2 games (where both players have only two possible strategies), an algebraic approach can be used to solve for the percentage of the time each strategy is played.

Nash equilibrium

A Nash equilibrium is a non-cooperative equilibrium: each firm makes the decisions that give it the highest possible profit, given the actions of its competitors. That is, each firm is doing the best it can given what its competitors are doing. A Nash equilibrium, also called a strategic equilibrium, is a list of strategies, one for each player, with the property that no player can unilaterally change his strategy and get a better payoff. A Nash equilibrium is reached when each agent’s action begets a reaction by all the other agents which, in turn, begets the same initial action. In other words, the best responses of all players are consistent with each other.

A Nash equilibrium recommends a strategy to each player that the player cannot improve upon unilaterally, that is, given that the other players follow the recommendation. Since the other players are also rational, it is reasonable for each player to expect his opponents to follow the recommendation as well. If a game has more than one Nash equilibrium, a theory of strategic interaction should guide players towards the “most reasonable” equilibrium upon which they should focus.
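The definition above suggests a direct test for pure-strategy Nash equilibria: a cell qualifies if neither player can raise his own payoff by changing only his own strategy. The sketch below applies this best-response check to every cell of a two-player payoff table. The dictionary layout, the strategy labels and the function name are illustrative assumptions; the payoffs are those of the airline pricing example and the prisoner’s dilemma in this chapter. It finds pure-strategy equilibria only; mixed equilibria need a separate calculation.

```python
from itertools import product


def pure_nash_equilibria(payoffs):
    """List every pure-strategy Nash equilibrium of a two-player game.

    payoffs maps (row_strategy, col_strategy) -> (row_payoff, col_payoff).
    """
    # Keep strategies in the order they first appear in the table.
    rows = list(dict.fromkeys(r for r, _ in payoffs))
    cols = list(dict.fromkeys(c for _, c in payoffs))
    equilibria = []
    for r, c in product(rows, cols):
        row_pay, col_pay = payoffs[(r, c)]
        # Row player cannot gain by switching rows while the column is fixed.
        row_ok = all(payoffs[(r2, c)][0] <= row_pay for r2 in rows)
        # Column player cannot gain by switching columns while the row is fixed.
        col_ok = all(payoffs[(r, c2)][1] <= col_pay for c2 in cols)
        if row_ok and col_ok:
            equilibria.append((r, c))
    return equilibria


# Airline pricing game: profits listed as (Airline A, Airline B).
AIRLINES = {
    ("High", "High"): (9000, 9000),
    ("High", "Low"): (0, 3600),
    ("Low", "High"): (3600, 0),
    ("Low", "Low"): (1800, 1800),
}

# Prisoner's dilemma: negative years in prison, listed as (A, B).
PRISONERS = {
    ("Confess", "Confess"): (-5, -5),
    ("Confess", "Don't confess"): (-1, -10),
    ("Don't confess", "Confess"): (-10, -1),
    ("Don't confess", "Don't confess"): (-2, -2),
}

if __name__ == "__main__":
    print(pure_nash_equilibria(AIRLINES))
    print(pure_nash_equilibria(PRISONERS))
```

It reports two equilibria for the airline game, (High, High) and (Low, Low), and a single equilibrium, (Confess, Confess), for the prisoner’s dilemma, in line with the reasoning in the text.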
In many games, however, there are no dominated strategies, so these considerations are not enough to rule out any outcomes or to provide more specific advice on how to play the game. The central concept of Nash equilibrium is much more general.

Nash equilibria example

Suppose there are two airlines, A and B. Two price strategies are available to each firm: set a high price of $500 or a low price of $220. There are four possible strategy combinations; the table shows the profits for each airline (listed as A; B):

| | Airline B: PH = $500 | Airline B: PL = $220 |
|---|---|---|
| Airline A: PH = $500 | $9000; $9000 | $0; $3600 |
| Airline A: PL = $220 | $3600; $0 | $1800; $1800 |

There is no dominant or dominated strategy for either firm. If A selects a high price, B should also select a high price. But if A selects a low price, B’s best bet is to match this price reduction.

To solve this game, look at B, who, after studying the matrix, reasons that its best bet is to choose the same fare as A. Suppose that B expects A to set a low fare. Then B’s best bet is to choose the low fare, and this expectation is self-consistent, because a low fare is also A’s best reply to a low fare by B. The low-fare strategy is B’s best response to its prediction of A’s strategy, and that predicted strategy is in turn A’s best response to B’s low fare. In the language of game theory, the strategy combination (low fare, low fare) is a Nash equilibrium. Following the same reasoning, the strategy combination (high price, high price) is also a Nash equilibrium, so there are two Nash equilibria.

Which one will be the ultimate equilibrium depends on past experience and on learning by each firm’s managers. If the managers on both sides are old ‘pros’ who have dealt with each other for some time, they may be able to avoid the ‘price war’ outcome and coordinate to achieve the more profitable (high fare, high fare) outcome.
(Strategic situations that involve elements of both competition and coordination have been described as co-opetition.) But if the management of either or both sides is new and inexperienced, it will be harder to determine which Nash equilibrium will occur.

Mixed strategies

A game in strategic form does not always have a Nash equilibrium in which each player deterministically chooses one of his strategies. However, players may instead randomly select from among these pure strategies with certain probabilities. Randomizing one’s own choice in this way is called a mixed strategy. An equilibrium is defined by a (possibly mixed) strategy for each player such that no player can gain on average by unilateral deviation. Average (that is, expected) payoffs must be considered because the outcome of the game may be random.

Dominant strategies

Since all players are assumed to be rational, they make choices that result in the outcome they prefer most, given what their opponents do. In the extreme case, a player may have two strategies A and B such that, given any combination of strategies of the other players, the outcome resulting from A is better than the outcome resulting from B. Then strategy A is said to dominate strategy B. A rational player will never choose to play a dominated strategy.
In the Prisoner’s Dilemma game, “defect” is a strategy that dominates “cooperate.” A strategy is dominated if it generates worse consequences than some other strategy, regardless of the other players’ choices.

Prisoner’s dilemma

The prisoner’s dilemma arises when two or more parties are motivated to behave in a self-serving manner and assume that their rivals or adversaries will act similarly. The result is that the outcome for all parties is inferior to what could have been attained had the parties been able to assume that their rivals would not act in a way detrimental to them. The situation is named after the supposed case of two bank robbers caught with the proceeds of a robbery, but with no more than circumstantial evidence of their involvement in that robbery.

Two prisoners have been accused of collaborating in a crime. There is no judicial evidence for this crime unless one of the prisoners testifies against the other. They are in separate jail cells and cannot communicate with each other. Each has been asked to confess to the crime. If both prisoners confess, each will receive a prison term of 5 years. If neither confesses, the prosecution’s case will be difficult to make, so the prisoners can expect to plea bargain (for example, to a lesser charge such as illegal weapons possession) and receive a term of two years. On the other hand, if one prisoner confesses and the other does not, the one who confesses will receive a term of only one year, while the other will go to prison for ten years. If you were one of the prisoners, what would you do: confess or not confess?

The mutually beneficial outcome is for neither prisoner to confess. The “defection” from that outcome is to testify, which gives a higher payoff no matter what the other prisoner does, with a resulting lower payoff to both. This constitutes their “dilemma.” The payoff matrix summarizes the possible outcomes.
The payoffs are negative (years in prison); for example, the payoff in the lower right-hand corner of the payoff matrix means a two-year sentence for each prisoner. Payoffs are listed as (Prisoner A, Prisoner B):

| | Prisoner B: Confess | Prisoner B: Don’t confess |
|---|---|---|
| Prisoner A: Confess | -5, -5 | -1, -10 |
| Prisoner A: Don’t confess | -10, -1 | -2, -2 |

If Prisoner A does not confess, he risks being taken advantage of by his former accomplice. After all, no matter what Prisoner A does, Prisoner B comes out ahead by confessing. Similarly, Prisoner A always comes out ahead by confessing, so Prisoner B must worry that by not confessing, he will be taken advantage of. Therefore both prisoners will probably confess and go to jail for five years. The two prisoners are playing a non-cooperative game (confessing is the dominant strategy for each prisoner).

Given the inability of the prisoners to communicate with each other, and given that each prisoner dislikes time spent in jail, each will be motivated to avoid the worst possible outcome. Since the worst possible outcome is not confessing while the other does confess, the maximin strategy for each prisoner is to confess. Since each prisoner confesses, they each end up with a relatively long jail sentence, whereas if they had been able to communicate and coordinate their strategies, they would have been sentenced to the relatively short prison term for possession of stolen goods.

Firms and the prisoner’s dilemma

The essence of the prisoner’s dilemma applies to firms in situations of advertising rivalry. A lack of communication and coordination between parties with conflicting self-interest can lead to a situation in which both parties are worse off than they would have been had there been communication and coordination between them. In the advertising-budget example discussed under Oligopoly below, had the firms agreed to limit their advertising expenditures to $4m each, their net profits would have remained at the $10m level.
In pursuit of independent profit gains, however, and without knowing whether or not the other firm was simultaneously planning an increase in its promotional budget, both firms find themselves at a reduced level of profit. Note that when both firms have increased their promotional budgets to the $6m level, there is no incentive for either firm to reduce its advertising budget independently, since this would lead to a loss of market share and net profits.

A dominant strategy is one that is optimal for a player no matter what an opponent does. For example, suppose that in a duopoly, firms A and B sell competing products and are deciding whether to undertake advertising campaigns. Each firm will be affected by its competitor’s decision. The payoff matrix (showing possible profits, listed as Firm A; Firm B) is given below:

| | Firm B: Advertise | Firm B: Don’t advertise |
|---|---|---|
| Firm A: Advertise | $10; $5 | $15; $0 |
| Firm A: Don’t advertise | $6; $8 | $10; $2 |

What strategy should Firm A choose? It should clearly advertise, because no matter what Firm B does, Firm A does best by advertising. Advertising is thus a dominant strategy for Firm A. The same is true for Firm B: no matter what Firm A does, Firm B does best by advertising. Therefore, assuming that both firms are rational, the outcome of this game is that both firms will advertise. This outcome is easy to determine because both firms have dominant strategies.

Not every game has a dominant strategy. Look at the modified game below:

| | Firm B: Advertise | Firm B: Don’t advertise |
|---|---|---|
| Firm A: Advertise | $10; $5 | $15; $0 |
| Firm A: Don’t advertise | $6; $8 | $20; $2 |

Firm A now has no dominant strategy. Its optimal decision depends on what Firm B does. If Firm B advertises, Firm A does best by advertising; but if Firm B does not advertise, Firm A does best by not advertising.

Suppose that both firms must make their decisions at the same time. Firm A must put itself in Firm B’s shoes. Firm B has a dominant strategy, advertise, no matter what Firm A does.
Firm A can therefore conclude that Firm B will advertise. This means that Firm A should itself advertise (and earn $10 instead of $6). The equilibrium is that both firms will advertise. It is the logical outcome of the game because Firm A is doing the best it can, given Firm B’s decision, and Firm B is doing the best it can, given Firm A’s decision.

Duopoly example

Suppose there are two firms, 1 and 2 (a duopoly), and each duopolist must decide whether to spend a little or a lot on advertising, so that there are four outcomes (profit levels in $ million), as indicated in the table (listed as Firm 1; Firm 2):

| | Firm 2: Low advertising expenditure | Firm 2: High advertising expenditure |
|---|---|---|
| Firm 1: Low advertising expenditure | Case A: $10; $10 | Case B: $4; $17 |
| Firm 1: High advertising expenditure | Case C: $17; $4 | Case D: $8; $8 |

Each duopolist can raise its profits to $17 million by increasing its advertising expenditure, provided its competitor does not (cases B and C). Cases B and C are not points of stable equilibrium, because the low-advertising, low-profit firm can increase its profits from $4m to $8m by increasing its advertising expenditure. As a result, both firms choose the strategy of high advertising expenditure, thus neutralizing each other’s efforts, so that each ends up with only $8m in profits. There is now too much advertising, but neither firm will return unilaterally to low advertising expenditure, since doing so does not pay unless its competitor does the same.

Example

Suppose there is another duopoly. Each firm can charge a price of $4 and earn a profit of $12. If the firms can collude, they will charge a price of $6 and earn a profit of $16. Suppose that the firms do not collude, but that Firm 1 charges the $6 collusive price, hoping that Firm 2 will do the same. If Firm 2 does the same, it will earn a profit of $16. If instead Firm 2 charges only $4, its profit will increase to $20.
This will be at the expense of Firm 1’s profits, which will fall to $4. In deciding what price to set, the two firms are playing a non-cooperative game: each firm independently does the best it can, taking its competitor into account. The payoff matrix (listed as Firm 1; Firm 2) is given below:

| | Firm 2: Charge $4 | Firm 2: Charge $6 |
|---|---|---|
| Firm 1: Charge $4 | $12; $12 | $20; $4 |
| Firm 1: Charge $6 | $4; $20 | $16; $16 |

In this case, if the firms cooperate, both charge $6 instead of $4 and thereby earn $16 instead of $12. The problem is that each firm always makes more money by charging $4, no matter what its competitor does. As the payoff matrix shows, if Firm 2 charges $6, Firm 1 still does best by charging $4. Similarly, Firm 2 always does best by charging $4, no matter what Firm 1 does. As a result, unless the two firms can sign an enforceable agreement to charge $6, neither firm can expect its competitor to charge $6, and both will charge $4.

While game theory is useful in analysing single-move decision-making situations under duopoly, it has not been very useful in analysing the multiple-move decision-making situations with several oligopolists that are often encountered in the real world. Furthermore, by examining only the worst outcome of each strategy for the purpose of avoiding the worst of all possible outcomes, game theory views the world in an excessively pessimistic light. Game theory has nevertheless added a new and useful dimension to the analysis of oligopoly. The criticism that the theory applies only to one-move duopoly problems is now being overcome by recent expansions of the theory. It has been found that in many-move situations, a highly effective strategy for playing the prisoner’s dilemma is “tit-for-tat” behaviour, which can be summed up as “do to your opponent what he has just done to you.” That is, as long as your opponent cooperates, you cooperate. If he betrays you, the next time you betray him back. If he cooperates again, next time you cooperate also.
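The tit-for-tat rule is easy to simulate. The sketch below repeats the $4/$6 pricing game above for a fixed number of rounds (10 is an arbitrary assumption) and compares tit-for-tat with two hypothetical benchmark strategies: always charging $4 and always charging $6. It only illustrates the mechanics of the rule; it is not a reproduction of any published experiment or tournament.

```python
# Pricing game from the text: (Firm 1 price, Firm 2 price) -> (profit 1, profit 2).
PRICE_GAME = {
    (4, 4): (12, 12),
    (4, 6): (20, 4),
    (6, 4): (4, 20),
    (6, 6): (16, 16),
}
COOPERATE, DEFECT = 6, 4  # $6 is the collusive price, $4 the competitive one


def tit_for_tat(rival_history):
    """Cooperate in the first round, then copy the rival's last price."""
    return rival_history[-1] if rival_history else COOPERATE


def always_defect(rival_history):
    """Hypothetical benchmark: always undercut with the $4 price."""
    return DEFECT


def always_cooperate(rival_history):
    """Hypothetical benchmark: always charge the collusive $6 price."""
    return COOPERATE


def play(strategy1, strategy2, rounds=10):
    """Repeat the pricing game and return total profits (Firm 1, Firm 2)."""
    prices1, prices2 = [], []
    total1 = total2 = 0
    for _ in range(rounds):
        # Both firms set prices simultaneously, seeing only past rival prices.
        p1 = strategy1(prices2)
        p2 = strategy2(prices1)
        profit1, profit2 = PRICE_GAME[(p1, p2)]
        total1 += profit1
        total2 += profit2
        prices1.append(p1)
        prices2.append(p2)
    return total1, total2


if __name__ == "__main__":
    print("TFT vs TFT:", play(tit_for_tat, tit_for_tat))
    print("TFT vs always $4:", play(tit_for_tat, always_defect))
    print("Always $4 vs always $4:", play(always_defect, always_defect))
```

With 10 rounds, two tit-for-tat players both charge $6 throughout and earn $160 each, against $120 each when both always charge $4. Against a firm that always charges $4, tit-for-tat loses only the first round, earning $112 to the rival’s $128.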
Controlled experiments with human participants have shown that such tit-for-tat behaviour leads people to cooperate and reach a better outcome than with any other form of behaviour.

Oligopoly

Oligopolistic firms often find themselves in a prisoners’ dilemma. They must decide whether to compete aggressively, attempting to capture a larger share of the market at their competitors’ expense, or to “cooperate” and compete more passively, coexisting with their competitors and settling for the market share they currently hold, perhaps even colluding implicitly. If the firms compete passively, setting high prices and limiting output, they will make higher profits than if they compete aggressively.

Under conditions of oligopoly, the firm is expected to recognize that its advertising and other marketing strategies will have a noticeable impact on the sales and profits of rivals, unless the other firms are simultaneously carrying out new promotional campaigns. Unlike price adjustments, promotional campaigns require a significant lead time, in which they must be planned and coordinated with the availability of time and space from the various advertising agencies and media channels. This means that if a firm is caught napping by a competitor’s new advertising campaign, there will be a significant lag before it can produce its own retaliatory campaign, during which time it may lose a significant market share, which may in turn prove difficult if not impossible to retrieve. The existence of this lag thus motivates firms to have an ongoing involvement in promotional activity. Given that firms tend to have continual advertising and promotional strategies, changes in market shares should only be expected to occur when the relative advertising and promotional effectiveness of firms is suddenly altered by an increase in the relative size of an individual firm’s advertising budget and/or in the relative effectiveness of a firm’s advertising expenditures.
Suppose two large firms share the major part of a particular market, and each budgets approximately $4m for promotional expenditures each year. When both firms spend $4m, net profits to each firm are $10m. The payoff matrix shows all the information (profits in $m; Firm A's profit is listed first in each cell).

Payoff matrix for advertising strategies

| | Firm B: $4m budget | Firm B: $6m budget |
|---|---|---|
| Firm A: $4m budget | $10; $10 | $6; $12 |
| Firm A: $6m budget | $12; $6 | $8.7; $8.7 |

In this situation, it is not unreasonable to expect each firm to follow a "Maximin" strategy. The firms are likely to be risk averse, and there will be a significant lag before a firm can retaliate against an increase in a rival's advertising expenditure. We might therefore expect each firm to wish to avoid the worst possible outcome. For each firm, the worst outcome associated with the $4m expenditure is a $6m profit. The worst outcome associated with the $6m promotional budget is an $8.7m profit. The best of these worst outcomes is the $8.7m profit associated with the $6m promotional budget. Thus the Maximin strategy for each firm is to increase its promotional budget to the higher level.

The above example shows what happens when firms independently increase their expenditures in pursuit of private gain. The result ($8.7m each) turns out to be inferior to what they enjoyed at the earlier promotional levels ($10m each). The firms are thus caught in a prisoner's dilemma. Whatever the rival does, spending $6m yields the higher profit: $12m rather than $10m, or $8.7m rather than $6m.

Elimination of dominated strategies

When there is no dominant strategy equilibrium, we have to resort to the idea of Nash equilibrium. There will often be more than one Nash equilibrium, however. Our problem is then to eliminate some of the Nash equilibria as "unreasonable". One sensible belief about players' behaviour is that they will not play strategies that are dominated by other strategies. This suggests a procedure: given a game, we should first eliminate all strategies that are dominated and then calculate the Nash equilibria of the remaining game.
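The sketch below does two things. First, it computes Firm A's Maximin budget. Second, it carries out the procedure just described: repeatedly delete strictly dominated strategies, then list the pure-strategy Nash equilibria of what remains. It assumes the advertising payoff table above, with profits in $m and Firm A's profit listed first. The dictionary layout and the function names are hypothetical choices made for illustration. Firm B's case is symmetric, so only Firm A's Maximin is computed.

```python
from itertools import product

# Advertising game from the text, profits in $m: (Firm A, Firm B),
# indexed by (Firm A budget, Firm B budget) in $m.
ADVERTISING = {
    (4, 4): (10.0, 10.0),
    (4, 6): (6.0, 12.0),
    (6, 4): (12.0, 6.0),
    (6, 6): (8.7, 8.7),
}


def maximin(payoffs, rows, cols):
    """Row player's Maximin strategy: the row whose worst payoff is largest."""
    worst = {r: min(payoffs[(r, c)][0] for c in cols) for r in rows}
    best_row = max(rows, key=lambda r: worst[r])
    return best_row, worst


def strictly_dominated(payoffs, mine, theirs, player):
    """Strategies in `mine` strictly beaten by another of `mine` against every
    strategy in `theirs`. player = 0 for the row player, 1 for the column player."""

    def pay(s, t):
        cell = (s, t) if player == 0 else (t, s)
        return payoffs[cell][player]

    return {
        s
        for s in mine
        if any(all(pay(d, t) > pay(s, t) for t in theirs) for d in mine if d != s)
    }


def eliminate_then_nash(payoffs, rows, cols):
    """Iteratively delete strictly dominated strategies, then find pure Nash equilibria."""
    rows, cols = list(rows), list(cols)
    while True:
        bad_rows = strictly_dominated(payoffs, rows, cols, 0)
        bad_cols = strictly_dominated(payoffs, cols, rows, 1)
        if not bad_rows and not bad_cols:
            break
        rows = [r for r in rows if r not in bad_rows]
        cols = [c for c in cols if c not in bad_cols]
    # A cell is an equilibrium if neither player can gain by deviating alone.
    equilibria = [
        (r, c)
        for r, c in product(rows, cols)
        if payoffs[(r, c)][0] == max(payoffs[(x, c)][0] for x in rows)
        and payoffs[(r, c)][1] == max(payoffs[(r, y)][1] for y in cols)
    ]
    return rows, cols, equilibria


if __name__ == "__main__":
    print("Firm A Maximin:", maximin(ADVERTISING, [4, 6], [4, 6]))
    print("After elimination:", eliminate_then_nash(ADVERTISING, [4, 6], [4, 6]))
```

For the advertising game, the sketch returns $6m as Firm A's Maximin budget, since the worst cases are $6m profit at the low budget and $8.7m at the high one. The $4m budget is strictly dominated for both firms, so removing it leaves ($6m, $6m) as the only equilibrium. The sketch deliberately removes only strictly dominated strategies. Removing weakly dominated ones would need a different test: as good everywhere and strictly better somewhere.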
The procedure of first removing dominated strategies and then finding the Nash equilibria of what remains is called elimination of dominated strategies. It can sometimes result in a significant reduction in the number of Nash equilibria. For example, consider the following game with dominated strategies (X chooses the row, Y the column; X's payoff is listed first):

| | Y1 | Y2 |
|---|---|---|
| X1 | 2, 2 | 0, 2 |
| X2 | 2, 0 | 1, 1 |

Note that there are two pure-strategy Nash equilibria, (X1, Y1) and (X2, Y2). However, the strategy Y2 weakly dominates the strategy Y1 for Y. It gives Y the same payoff (2) against X1 and a higher payoff (1 rather than 0) against X2. If X assumes that Y will never play this dominated strategy, X's best reply to Y2 is X2. The only equilibrium of the game is then (X2, Y2). Elimination of strictly dominated strategies is generally agreed to be an acceptable procedure for simplifying the analysis of a game.

The principle of dominance can be used to reduce the size of games by eliminating strategies that would never be played. A strategy for a player can be eliminated if the player can always do as well or better by playing another strategy. In other words, a strategy can be eliminated if each of its outcomes is the same as or worse than the corresponding outcome of another strategy. Using the principle of dominance, we can reduce the size of the following game. The entries are payoffs to X, so X prefers larger numbers and Y prefers smaller ones:

| | Y1 | Y2 |
|---|---|---|
| X1 | 4 | 3 |
| X2 | 2 | 20 |
| X3 | 1 | 1 |

In this game, X3 will never be played because X can always do better by playing X1 or X2. The new game is:

| | Y1 | Y2 |
|---|---|---|
| X1 | 4 | 3 |
| X2 | 2 | 20 |

Here is another example:

| | Y1 | Y2 | Y3 | Y4 |
|---|---|---|---|---|
| X1 | -5 | 4 | 6 | -3 |
| X2 | -2 | 6 | 2 | -20 |

In this game, Y would never play Y2 or Y3, because Y could always do better by playing Y1 or Y4. The new game is:

| | Y1 | Y4 |
|---|---|---|
| X1 | -5 | -3 |
| X2 | -2 | -20 |

A number of critical issues can be raised with the Prisoners' Dilemma, and each has been the basis of a large scholarly literature. (1) The Prisoners' Dilemma is a two-person game, but many of the applications of the idea are really many-person interactions. (2) We have assumed that there is no communication between the two prisoners. If they could communicate and commit themselves to coordinated strategies, we would expect a quite different outcome. (3) In the Prisoners' Dilemma, the two prisoners interact only once.
Repetition of the interactions might lead to quite different results.

8.10. Repeated Games and Coordination

One simple and famous strategy for preserving cooperation in repeated Prisoner's Dilemmas is called tit-for-tat. Contrary to widespread myth, it is not necessarily optimal. This strategy tells each player to behave as follows: i. Always cooperate in the first round. ii. Thereafter, take whatever action your opponent took in the previous round. iii. Do not be envious. A group of players all playing tit-for-tat will never see any defections. In a population where others play tit-for-tat, tit-for-tat is the rational response for each player. Everyone playing tit-for-tat is therefore a Nash equilibrium.

There are two complications. First, the players must be uncertain as to when their interaction ends. Suppose the players know when the last round comes. In that round, it will be rational for players to defect, since no punishment will be possible. Now consider the second-last round. In this round, players also face no punishment for defection, since they know they will defect in the last round anyway. So they defect in the second-last round. But this means they face no threat of punishment in the third-last round, and they defect there too. We can simply iterate this reasoning backwards through the game tree until we reach the first round. Cooperation is not rational even in that round, so tit-for-tat is no longer a rational strategy. We get the same outcome, mutual defection, as in the one-shot Prisoner's Dilemma. Therefore, cooperation is only possible in repeated Prisoner's Dilemmas where the players cannot be sure when the final round will come.

Philosophical and Historical Motivation

Game theory has been rendered mathematically and logically systematic only recently. Even so, game-theoretic insights can be found among philosophers and political commentators going back to ancient times.
For example, in two of Plato's texts, the Laches and the Symposium, Socrates recalls an episode from the Battle of Delium that involved the following situation. Consider a soldier at the front, waiting with his comrades to repulse an enemy attack. It may occur to him that if the defense is likely to be successful, then it isn't very probable that his own personal contribution will be essential. But if he stays, he runs the risk of being killed or wounded, apparently to no purpose. On the other hand, if the enemy is going to win the battle, then his chances of death or injury are higher still. Now they are quite clearly to no purpose, since the line will be overwhelmed anyway. Based on this reasoning, it would appear that the soldier is better off running away regardless of who is going to win the battle. Of course, all of the soldiers are in identical situations, so they all apparently should reason this way. If they do, this will certainly bring about the outcome in which the battle is lost.

The Spanish conqueror Cortez landed in Mexico with a small force who had good reason to fear their capacity to repel attack from the far more numerous Aztecs. He removed the risk that his troops might think their way into a retreat by burning the ships on which they had landed. With retreat thus rendered physically impossible, the Spanish soldiers had no better course of action than to stand and fight. Furthermore, they had every reason to fight with as much determination as they could muster. Better still, from Cortez's point of view, his action had a discouraging effect on the motivation of the Aztecs. He took care to burn his ships very visibly, so that the Aztecs would be sure to see what he had done. They then reasoned as follows. Any commander who could be so confident as to willfully destroy his own option to be prudent, if the battle went badly for him, must have good reasons for such extreme optimism.
It cannot be wise to attack an opponent who has a good reason (whatever, exactly, it might be) for being sure that he can't lose. The Aztecs therefore retreated into the surrounding hills, and Cortez had his victory bloodlessly.