network strategies


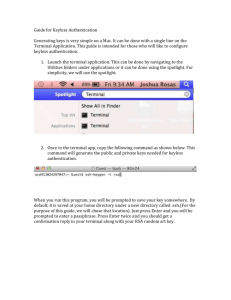

INFORMATION SYSTEM AND TECHNOLOGY
ASSIGNMENT 2
NETWORK STRATEGIES

LECTURER’S NAME: DR. NOORMINSHAH BT A. IAHAD
NAME: NUR ANASUHA BT ILIAS
MATRIC NO: A11HA0101
I/C: 921120-03-5352

CONTENT

Terminal Server Network: Definition, Advantages, Disadvantages
Client Server Network: Definition, Advantages, Disadvantages
Peer-to-Peer Network: Definition, Advantages, Disadvantages
Distributed Processing Network: Definition, Advantages, Disadvantages
References

TERMINAL SERVER NETWORK

DEFINITION

Generally, in information technology, a terminal server is a hardware device or server that provides terminals (such as PCs, printers, and other devices) with a common connection point to a local or wide area network. The terminals connect to the terminal server through their RS-232C or RS-423 serial ports. The other side of the terminal server connects through network interface cards (NICs) to a local area network (LAN), usually an Ethernet or token ring LAN; through modems to a dial-in or dial-out wide area network; or to an X.25 network or a 3270 gateway. Different makes of terminal server offer different kinds of interconnection, and some can be ordered in different configurations based on customer need. Using a terminal server means that each terminal does not need its own network interface card or modem. The connection resources inside the terminal server are usually shared dynamically by all attached terminals.

ADVANTAGE

When Terminal Services is used on the server, all activity on the network and all development and management issues related to the network are handled by the central computer or server. Once Terminal Services is deployed, clients can connect over a local area network connection, a remote dial-up connection, or a wide area network connection. Clients can be Windows-based or non-Windows-based; they do not have to run Windows to use Terminal Services. Terminal Services provides three advantages.
First, rapid centralized deployment: Terminal Services can efficiently deploy Office 2003 to computing devices across an entire network. When Office 2003 runs on a terminal server, every information worker is guaranteed to be running the same, most recent version of the application.

Second, low-bandwidth access to data: Terminal Services reduces the network bandwidth required to access data on the remote computer, because only screen views are transmitted to the client. This makes it a very effective way to manipulate large amounts of data remotely over bandwidth-constrained connections.

Third, Office 2003 anytime and anywhere: a Terminal Services environment helps workers achieve higher productivity by allowing them to access their applications from any platform, on computers running either Windows or non-Windows operating systems.

DISADVANTAGE

Graphically intensive applications: server-based computing (SBC) does not deliver a satisfactory end-user experience for graphically intensive applications delivered over the RDP or ICA protocol.

Resource-intensive applications: applications that require a lot of memory are not well suited to an x86 Terminal Server environment, and applications that claim a lot of CPU resources are not well suited to an SBC environment. This disadvantage can be mitigated by designing a Terminal Server infrastructure with multiple CPUs and multiple cores and by using x64 Terminal Services.

Peripherals: access to peripherals within a terminal session is limited. The extent of the limitation depends on the type of peripheral, the client operating system, and any additional software used on top of Terminal Services. PDA synchronization, scanning, bi-directional audio, and printing are difficult to use within a terminal server session.

Application compatibility:
Application conflicts can occur when multiple applications or subcomponents are installed on the same Terminal Server, so regression testing is needed to address this risk, and applications must be Terminal Server-aware. Application virtualization can address these conflicts and provide a dynamic application delivery platform.

Limited freedom within the workspace: a shared desktop does not offer a ‘PC-like’ Windows XP/Vista experience. In a shared desktop infrastructure, the freedom to install applications and change desktop configuration settings is restricted for performance, reliability, and stability reasons.

No offline application delivery: all program execution, data processing, and data storage occur centrally on a terminal server in the datacenter, and the client only sends mouse movements and keystrokes to the Terminal Server. A reliable, available network connection is therefore required for application delivery.

Legacy applications: legacy applications (DOS and Win16 applications on an x86 terminal server platform, or Win32 applications on an x64 platform) consume considerably more system resources. This is perhaps less a disadvantage than a fact, but it is important to design the SBC infrastructure with these applications in mind when they are in use.

CLIENT SERVER NETWORK

DEFINITION

Client/server describes the relationship between two computer programs in which one program, the client, makes a service request to another program, the server, which fulfills the request. Although the client/server idea can be used by programs within a single computer, it is a more important idea in a network, where the client/server model provides a convenient way to interconnect programs that are distributed efficiently across different locations. Computer transactions using the client/server model are very common. For example, to check your bank account from your computer, a client program in your computer forwards your request to a server program at the bank.
That program may in turn forward the request to its own client program, which sends a request to a database server at another bank computer to retrieve your account balance. The balance is returned to the bank data client, which in turn serves it back to the client in your personal computer, which displays the information for you.

The client/server model has become one of the central ideas of network computing. Most business applications being written today use the client/server model, as does the Internet's main protocol suite, TCP/IP. In marketing, the term has been used to distinguish distributed computing by smaller, dispersed computers from the "monolithic" centralized computing of mainframe computers. This distinction has largely disappeared, however, as mainframes and their applications have also turned to the client/server model and become part of network computing.

In the usual client/server model, one server, sometimes called a daemon, is activated and awaits client requests. Typically, multiple client programs share the services of a common server program. Both client programs and server programs are often part of a larger program or application. On the Internet, your Web browser is a client program that requests services (the sending of Web pages or files) from a Web server (technically called a Hypertext Transfer Protocol, or HTTP, server) on another computer somewhere on the Internet. Similarly, a computer with TCP/IP installed allows you to make client requests for files from File Transfer Protocol (FTP) servers on other computers on the Internet.

ADVANTAGE

Centralization: unlike P2P, where there is no central administration, this architecture has centralized control. Servers help administer the whole set-up, and access rights and resource allocation are handled by the servers.

Proper management: all files are stored in the same place, which makes file management easier and files easier to find.
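The daemon pattern described in this section (one server process that waits for requests and answers many clients from a single, central store) can be sketched with Python's standard `socketserver` module. This is a minimal illustration, not a banking system: the account IDs, balances, handler name, and the one-line text protocol are all hypothetical, and the server listens only on the local machine.

```python
import socket
import socketserver
import threading

# Hypothetical account data held centrally by the server (illustration only).
BALANCES = {"ACC-001": "1520.75", "ACC-002": "88.10"}


class BalanceHandler(socketserver.StreamRequestHandler):
    """Serves one client connection: reads an account ID line, replies with its balance."""

    def handle(self):
        account_id = self.rfile.readline().decode().strip()
        balance = BALANCES.get(account_id, "UNKNOWN")
        self.wfile.write((balance + "\n").encode())


def start_server():
    """Start the server (the 'daemon') in a background thread.

    Port 0 asks the operating system for any free port; ThreadingTCPServer
    handles each client connection in its own thread, so many clients can
    share the one server.
    """
    server = socketserver.ThreadingTCPServer(("127.0.0.1", 0), BalanceHandler)
    threading.Thread(target=server.serve_forever, daemon=True).start()
    return server


def request_balance(address, account_id):
    """Client side: connect to the server, send one request, return the reply."""
    with socket.create_connection(address, timeout=5) as conn:
        conn.sendall((account_id + "\n").encode())
        with conn.makefile("r") as reply:
            return reply.readline().strip()


if __name__ == "__main__":
    server = start_server()
    try:
        for account in ("ACC-001", "ACC-002", "ACC-999"):
            print(account, request_balance(server.server_address, account))
    finally:
        server.shutdown()      # stop the serve_forever loop
        server.server_close()  # release the listening socket
```

Every client talks to the same server, so the data lives in one place; the third request returns `UNKNOWN` because that account is not in the hypothetical table.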
Backup and recovery: as all the data is stored on the server, it is easy to back it up, and if data is lost in a breakdown, it can be recovered easily and efficiently. In peer-to-peer computing, by contrast, a backup has to be taken at every workstation.

Upgrades and scalability: in a client/server set-up, changes can be made simply by upgrading the server, and new resources and systems can be added by making the necessary changes on the server.

Accessibility: the server can be accessed remotely from various platforms on the network. As new information is uploaded to the database, workstations do not need their own storage capacity increased (as may be the case in peer-to-peer systems); all changes are made only on the central computer that hosts the server database.

Security: rules defining security and access rights can be defined when the server is set up, and servers can play different roles for different clients.

DISADVANTAGE

Cost: a client/server network can be expensive to implement and maintain. First, at least one server is required to create a client/server network. This requires server hardware and software, a server operating system, and appropriate licenses allowing the end users to use the network software. If data will be stored centrally, a backup system is needed, which requires backup server software and backup media. Maintaining a client/server network also requires at least one network administrator, which means an additional salary. For a smaller network, cost alone may be the deciding factor in choosing a different network architecture.

Complexity: a client/server network is inherently more complex, which can be both an advantage and a disadvantage. For instance, data stored remotely can be more secure than data stored locally, but more configuration is required both to secure that data and to give the appropriate users appropriate access to it.
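The configuration work of giving the appropriate users appropriate access to centrally stored data can be sketched as a small access-control table kept on the server and checked on every request. The class name `FileServer`, the rights `"read"` and `"write"`, and the example users are hypothetical; real server operating systems use their own, richer permission models.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class FileServer:
    """Hypothetical central file server that stores files and per-user access rights."""

    files: dict[str, str] = field(default_factory=dict)                # path -> contents
    acl: dict[str, dict[str, set[str]]] = field(default_factory=dict)  # path -> user -> rights

    def grant(self, path: str, user: str, *rights: str) -> None:
        """Administrator step: give a user one or more rights on a path."""
        self.acl.setdefault(path, {}).setdefault(user, set()).update(rights)

    def _check(self, user: str, path: str, right: str) -> None:
        # Every request is checked centrally, on the server, before it is served.
        if right not in self.acl.get(path, {}).get(user, set()):
            raise PermissionError(f"{user} has no {right} right on {path}")

    def read(self, user: str, path: str) -> str:
        self._check(user, path, "read")
        return self.files[path]

    def write(self, user: str, path: str, contents: str) -> None:
        self._check(user, path, "write")
        self.files[path] = contents


if __name__ == "__main__":
    server = FileServer(files={"/reports/q1.txt": "draft"})
    server.grant("/reports/q1.txt", "alice", "read", "write")
    server.grant("/reports/q1.txt", "bob", "read")
    server.write("alice", "/reports/q1.txt", "final")
    print(server.read("bob", "/reports/q1.txt"))  # final
    try:
        server.write("bob", "/reports/q1.txt", "edit")
    except PermissionError as err:
        print("denied:", err)
```

Because the rules live only on the server, changing a user's access means editing one table rather than every workstation; the cost is that someone has to set up and maintain that table correctly.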
The very nature of a client/server environment creates challenges in the delivery and accessibility of remote resources.

Besides that, a client/server network requires experienced network personnel to maintain the server, manage security and backup systems, and recover quickly from unexpected outages. Depending on the size and complexity of the network, this could require network administrators, IT security professionals, and/or other IT professionals. A smaller network may be able to combine several of these roles into one position, but additional training is often required as a result.

When a computer in a simple peer-to-peer network goes down, the only users affected are the user of that particular computer and perhaps any users trying to access data stored on it. In contrast, when a server in a client/server network goes down, all users are affected. If security is maintained centrally on that server, workstations cannot authenticate login information and users cannot gain access to their own systems. If user data is stored centrally, users cannot access their data. If applications are managed centrally, users cannot run their applications. Any of these scenarios can result in a significant loss of productivity and/or revenue.

PEER-TO-PEER NETWORK

DEFINITION

Peer-to-peer is a communications model in which each party has the same capabilities and either party can initiate a communication session. Other models with which it might be contrasted include the client/server model and the master/slave model. In some cases, peer-to-peer communication is implemented by giving each communication node both server and client capabilities. In recent usage, peer-to-peer has come to describe applications in which users can use the Internet to exchange files with each other directly or through a mediating server.
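The idea of giving each node both server and client capabilities can be sketched in a few lines. This is an in-process illustration under stated assumptions: the `Peer` class and its methods are hypothetical, method calls stand in for network messages, a peer only asks its direct neighbours (real P2P software such as Gnutella also forwards searches further), and keeping a copy of each fetched file is an added assumption that shows how copies spread across peers.

```python
class Peer:
    """Hypothetical peer node with both server and client capabilities."""

    def __init__(self, name):
        self.name = name
        self.shared = {}      # files this peer offers to others (server side)
        self.neighbours = []  # peers this peer knows about

    def connect(self, other):
        # Links are symmetric, so either side can initiate a session later.
        self.neighbours.append(other)
        other.neighbours.append(self)

    def serve(self, filename):
        """Server role: answer another peer's request, or None if not held."""
        return self.shared.get(filename)

    def fetch(self, filename):
        """Client role: ask each directly connected peer in turn."""
        for peer in self.neighbours:
            data = peer.serve(filename)
            if data is not None:
                # Keep a copy so this peer can now serve the file as well.
                self.shared[filename] = data
                return data
        return None


if __name__ == "__main__":
    a, b, c = Peer("A"), Peer("B"), Peer("C")
    a.connect(b)
    b.connect(c)
    c.shared["notes.txt"] = "hello"
    print(a.fetch("notes.txt"))  # None: A only knows B, and B has no copy yet
    print(b.fetch("notes.txt"))  # hello: B (acting as client) gets it from C
    print(a.fetch("notes.txt"))  # hello: B now acts as a server for A
```

No node is special: B is a client when it asks C and a server when A asks it, which is the property that removes the need for a dedicated server.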
On the Internet, peer-to-peer (referred to as P2P) is a type of transient Internet network that allows a group of computer users with the same networking program to connect with each other and directly access files on one another's hard drives. Napster and Gnutella are examples of this kind of peer-to-peer software. Major producers of content, including record companies, have shown their concern about what they consider illegal sharing of copyrighted content by suing some P2P users.

ADVANTAGE

The main advantage of a peer-to-peer network is that it is easier to set up and use than a network with a dedicated server. In a peer-to-peer network, every node acts as both a server and a client, so no dedicated server is needed. Because each peer that joins also contributes its own resources, the total capacity of the system grows as demand on it increases. The network also becomes more robust when the same data is duplicated on multiple peers.

A peer-to-peer network is also less expensive, for the following reasons. It is easier to set up and use, so less time is spent configuring and implementing it. It does not require a dedicated server computer, since any computer on the network can function as both a network server and a user workstation.

DISADVANTAGE

File sharing over peer-to-peer networks is largely viewed as taboo by most people in the entertainment industry, because copyright holders are not paid for the use of their material. For example, recording artists usually receive what is known as a record advance from their record company, which is really a recoupable loan for which the artist is liable. Out of this advance, the artist is expected to pay management fees, recording sessions, and marketing fees.
When the artist goes on to release an album or single, the average recording artist earns 20 to 25p per CD sold, and the rest goes to the record company. The artist has to pay back the advance out of these earnings. From the artist's point of view, therefore, if an album or single is available on the Internet and people are sharing it, people usually will not go out and buy it, which costs both the artist and the record company money and in some cases can lead to the artist being dropped by the record company.

Another disadvantage for end users is that viruses are common on peer-to-peer networks, usually disguised as music or video files; they can severely damage computers and in some cases make them inoperable. According to Clark (1995), there are usually three types of computer virus. Boot viruses affect the master boot record (MBR), the file allocation table (FAT), and the partition table. Program viruses affect files with extensions such as .com, .exe, .ovl, .drv, .sys, and .bin. The third type, the multipartite virus, affects both program files and boot sectors.

People who use peer-to-peer software also leave themselves open to unauthorized access, which usually takes place when a computer is left unattended for long periods of time. Unauthorized access is in most cases hard to spot, but many programs are available that try to prevent it.

DISTRIBUTED PROCESSING NETWORK

DEFINITION

Distributed processing is a phrase used to refer to a variety of computer systems that use more than one computer (or processor) to run an application. This includes parallel processing, in which a single computer uses more than one CPU to execute programs.
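Splitting one application's work across several processors and combining the partial results (a scatter/gather pattern) can be sketched with Python's standard `concurrent.futures` module. This is an assumption-laden illustration: the workload (a sum of squares) and the function names are hypothetical, and threads stand in for processors to keep the sketch self-contained. In CPython, threads do not execute Python code on several CPUs at once, so for real CPU parallelism `ProcessPoolExecutor`, which has the same `map` interface, or separate machines would be used.

```python
from concurrent.futures import ThreadPoolExecutor


def partial_sum_of_squares(chunk):
    """The share of the work done by one worker."""
    return sum(x * x for x in chunk)


def split(data, parts):
    """Divide the input into at most `parts` contiguous chunks of near-equal size."""
    size = max(1, -(-len(data) // parts))  # ceiling division, never zero
    return [data[i:i + size] for i in range(0, len(data), size)]


def distributed_sum_of_squares(data, workers=4):
    """Scatter the chunks to the workers, then gather and combine the partial results."""
    chunks = split(data, workers)
    with ThreadPoolExecutor(max_workers=workers) as pool:
        return sum(pool.map(partial_sum_of_squares, chunks))


if __name__ == "__main__":
    numbers = list(range(1, 101))
    print(distributed_sum_of_squares(numbers))  # 338350
```

The answer does not depend on how many workers are used; only how the work is divided changes, which is what lets such systems hand chunks to whichever processors are free.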
More often, however, distributed processing refers to local-area networks (LANs) designed so that a single program can run simultaneously at various sites. Most distributed processing systems contain sophisticated software that detects idle CPUs on the network and parcels out programs to utilize them.

Another form of distributed processing involves distributed databases, in which the data is stored across two or more computer systems. The database system keeps track of where the data is, so that the distributed nature of the database is not apparent to users.

ADVANTAGE

Quicker response time: locating processing power close to users typically improves response time, which means that the system responds rapidly to commands entered by users.

Lower costs: long-distance communication costs are declining at a slower rate than the cost of computing power. Distributed processing can reduce the volume of data that must be transmitted over long distances and thereby reduce long-distance costs.

Improved data integrity: high degrees of accuracy and correctness may be achieved by giving users control over data entry and storage.

Reduced host processor costs: the productive life of a costly mainframe can be extended by off-loading some of its processing tasks to other, less expensive machines, whose total cost is usually a fraction of the cost of upgrading the central processor.

Resource sharing: one of the main advantages of developing microcomputer networks is that they make it possible to share expensive resources such as high-speed color laser printers, fast data storage devices, and high-priced software packages.

DISADVANTAGE

Management and control are complex, and security is weaker because data is held at so many different sites. Distributed databases provide more flexible access, which increases the chance of security violations, since the database can be accessed from every site in the network.
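The location transparency of a distributed database (users ask for a record without knowing which site holds it) is also what makes every site a possible route to all of the data. A minimal sketch, assuming a key-value model, hypothetical site names, and simple hash partitioning of keys with `zlib.crc32`; real distributed database systems use their own catalogs and data placement rules.

```python
import zlib


class DistributedStore:
    """Hypothetical key-value store whose records are spread over several sites."""

    def __init__(self, site_names):
        self.site_names = list(site_names)
        self.sites = {name: {} for name in self.site_names}  # each site's local storage

    def locate(self, key):
        """Catalog lookup: a stable hash of the key decides which site holds it."""
        index = zlib.crc32(key.encode("utf-8")) % len(self.site_names)
        return self.site_names[index]

    def put(self, key, value):
        self.sites[self.locate(key)][key] = value

    def get(self, key):
        # The caller never names a site; the store finds it (location transparency).
        # This also means any entry point can reach data held at every site,
        # which is why access must be controlled at every site.
        return self.sites[self.locate(key)].get(key)


if __name__ == "__main__":
    store = DistributedStore(["site-A", "site-B", "site-C"])
    for n in range(6):
        store.put(f"customer-{n}", {"id": n})
    print(store.get("customer-4"))
    print({site: sorted(records) for site, records in store.sites.items()})
```

A stable hash such as CRC-32 is used instead of Python's built-in `hash()`, whose values for strings change between runs, so the same key always maps to the same site.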
Ensuring the integrity of the database in the presence of unpredictable failures of both hardware and software components is also an important feature of any distributed database management system, and one that adds to its complexity. The integrity of a database is concerned with its consistency, correctness, validity, and accuracy, and integrity controls must be built into the structure of the software, the databases, and the personnel involved. If there are multiple copies of the same data, this duplicated data adds complexity in ensuring that all copies are changed by every update. Concurrency control and recoverability consume much of the research effort in distributed database theory: increased reliability and performance are the goal, not the status quo.

REFERENCES

http://searchcio-midmarket.techtarget.com/definition/terminal-server
http://www.techfuels.com/everything-else/11485-advantage-terminalservices.html
http://searchnetworking.techtarget.com/definition/client-server
http://www.ianswer4u.com/2011/05/client-server-network-advantagesand.html
http://www.ehow.com/list_6368002_disadvantages-client_servernetworks.html
http://searchnetworking.techtarget.com/definition/peer-to-peer
http://www.techfuels.com/general-networking/10266-advantages-peer-peernetworks.html
http://peer2peer.wikidot.com/disadvantages
http://www.webopedia.com/TERM/D/distributed_processing.html
http://www.blurtit.com/q471790.html
http://answers.yahoo.com/question/index?qid=20081103035005AAdZlRL