2010

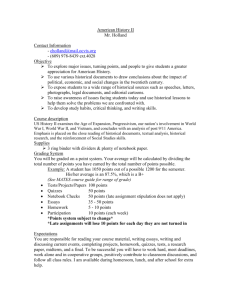

English/Communication Subcommittee Assessment Report
Originally submitted February 2009 (Been, Delaney-Lehman, O’Shea)
Updated December 2009 (Been, Balfantz, Hronek, Wright)

Currently, both English (the writing classes ENGL091, 110, and 111) and Communication (COMM101), the classes that collectively fulfill the Gen Ed Communication Outcomes, are being assessed. Original assessment plans, with updates and changes in methodology, are noted in the tables below. Summaries of assessment results and responses thus far are attached.

ENGLISH

The table below identifies the three assessment tools currently in place, with the purpose and the administration method/schedule of each.

ENGL091/110/111 Outcomes Assessment

Reliability testing

Administered to all students enrolled in the above classes once a year over a three-year period. Provides the ability to compare scores between 091/110/111 classes to measure differences in basic language usage and rhetorical skills. First exam completed Fall 08. Attached are copies of outcomes for ENGL 091, 110, and 111, indicating which outcomes are measured by this assessment tool.

Instrument title: CAAP: Writing Skills

Administration: 72 items. Language usage skills; rhetorical skills. Multiple choice. Faculty distribute exams during class. Original plan: Once/yr.

Administration dates/numbers assessed: Fall 2008. Numbers: Total: 613; ENGL091: 102; ENGL110: 368; ENGL111: 143. Summarized results are attached.

Changes/updates in plans as of February 2009: Budgetary restrictions have not allowed administration to pay for additional CAAP assessment past the first use of the CAAP exam. An interim plan had been designed to charge a student fee to pay for the next CAAP exam in Spring 2010, but the Chair of English has decided that this is an unacceptable option.
We could consider using the MAPP English scores as a new “reliability” measure, but we will not be able to use MAPP scores to compare results between ENGL091, 110, and 111 students as we were able to do with the CAAP. We also will not be able to compare the Fall 08 CAAP data with the Fall 09 MAPP data, as they are different instruments. If we do decide to use the MAPP as our new reliability measure, we will need to discard the CAAP data we gathered in Fall 2008 and begin this section of assessment again from the start.

Validity testing

This tool assesses students’ essay and research-writing skills. All faculty originally submitted second copies of students’ final papers in their classes. (See note in “Changes” column about mid-assessment shift in methodology of determining student essay pool.) After faculty submit students’ essays, 30 randomly selected student essays (generally final essays) are pulled from each class level (ENGL091, 110, 111) and assessed via blind (no instructor name, no student name) holistic reads by writing faculty on a Likert scale. Assessment rubrics become increasingly complex from class level to class level. (See note in “Changes” column about mid-assessment shift in methodology of selecting essays.) Attached are copies of outcomes for ENGL 091, 110, and 111, indicating which outcomes are measured by this assessment tool.

Instrument title: Holistic, blind essay assessment based on faculty-constructed rubrics.

Administration: This assessment is performed once each Fall and Spring semester over a three-year period.

Administration dates/numbers assessed: The first holistic reading was held in March 2009. Essays collected from the Fall 2008 semester were read and assessed. The Saturday reading was attended by five full-time faculty and three part-time faculty.
Each of ninety essays (30 ENGL091 essays; 30 ENGL110 essays; 30 ENGL111 essays) received three separate readings (that’s 270 readings in all), requiring six and one-half hours. Lots of bloodshot eyes on Monday. Summarized results are attached.

Changes/updates in plans: Due to medical leave by the assessment coordinator during the second half of Fall 2009 as well as the resignation of the writing program coordinator during that same semester, a holistic reading session was not held in the Fall of 2009. Essays that were collected from the Spring 2009 classes will therefore be assessed in the Spring of 2010.

Shifts in methodology:

1. Essay pool. In the Fall of 2009, the Chair decided to narrow the essay pool and collect student essays only from full-time faculty, whereas essays had previously been collected from all faculty (full and part time) for the first two readings (Fall 2008 and Spring 2009). The pool of essays to be assessed therefore changed from 100 percent of student essays being part of the assessment pool to 75 percent of student essays being part of the pool (using the part-time to full-time ratio from Fall of 2009) for the third round of assessment.

2. Process of choosing essays to be assessed. The Chair is also examining the possibility of determining in advance which essays will be assessed and notifying faculty of those students’ names, probably beginning in the Fall of 2010. In previous readings, essays were collected from all students and a random sample chosen after collection so that neither faculty nor students would know in advance which essays were being assessed. This is another mid-assessment shift in methodology.

The Chair of English would also like to establish an “assessment team” that will conduct all assessment work and do all the readings. (To my knowledge, this team has not yet been assembled. MB)

Process measure

This questionnaire gathers information on types, repetitions, and student-determined effectiveness of various process pedagogies.
This tool assesses those “process” outcomes that are not measurable by either standard multiple choice (CAAP or MAPP) exams or by holistic essay assessment. The questionnaire is distributed to all students in writing classes in a given semester. Questionnaires were distributed in the Spring 2009 semester for the first time. Future distributions: TBD. Attached are copies of outcomes for ENGL 091, 110, and 111, indicating which outcomes are measured by this assessment tool.

Instrument title: Assessment coordinator-designed questionnaire

Administration: Questionnaire: 22 questions, multiple choice. Questions determined frequency of process strategy use (numerical scale) and satisfaction with strategy use (Likert scale). These questions asked students:

1. how often they had used various named process/collaborative strategies
2. to what degree they felt the use of each of those strategies strengthened their writing

Distributed: Once or twice/yr (TBD). Questionnaires are distributed by faculty sometime during the last two weeks of class.

Administration dates/numbers assessed: First distribution: Spring 2009. Numbers: Total: 333 returned questionnaires of 517 enrolled. ENGL091: 14 (39% of all 091 students); ENGL110: 46 (33% of all 110 students); ENGL111: 273 (80% of all 111 students). Copy of questionnaire and tabulated results are attached.

Changes/updates in plans: Additional distribution dates TBD. Questionnaires were not distributed in the Fall of 2009 due to staffing constraints (noted above).

COMMUNICATION

Since the COMM101 course is intended to pursue three outcomes, three scales are being used. The new program will be implemented in Spring 2009. The table below shows the scales which have been selected.

Note from Mary Been: The table below is the same as that submitted to the General Education Committee by Dr. George Denger in February of 2009, outlining assessment plans for Communication. Based on my emails and conversations with Dr. Denger this December, I have summarized the current degree of assessment completion and future plans for Communication below the table.
COMM 101 Outcomes Assessment

Interpersonal
Instrument title: Interpersonal communication competency scale
Administration: 30 items, Likert type scale. The interpersonal pretest data have been collected for Spring 09 semester.

Small group
Instrument title: Relational satisfaction scale
Administration: 12 items, Likert type scale. The small group pretest is scheduled for Feb 09.

Public speaking
Instrument title: Public speaking competency instrument
Administration: Rubric

For this inaugural wave of data collection, we are using four sections of COMM 101 as our sample. That means that we could have as many as (roughly) ninety pre- and posttests. This is quite manageable for the paper and pencil tests. But for the public speaking component, which involves viewing of recorded speeches and completing the standardized rubric, it could entail the expenditure of roughly thirty hours of faculty time to produce a single semester's data. Thus, we are using a smaller sample drawn from the same sections used for assessment of interpersonal and small group skill development.

We are on the verge of pretesting on the public speaking component. In the future, it will be desirable to do this earlier. In this case, though, it was not possible. While the contrast between pre- and posttest will be attenuated to a greater than desirable degree, some contrast will still exist, and this pilot phase of the public speaking assessment will provide highly useful opportunities to work out the logistical challenges presented by this most complex of the three instruments.

Current report (December 2009): In Spring 2009, Communication faculty did a first run-through (validation testing, or testing the test) of the tools developed (as noted above), then worked on finalizing those tools and scheduling a first full round of pre- and post-testing in the Fall of 2009. That full three-instrument testing is now being completed and the data accumulated. The first report, with Fall 09 data and analysis, should be available in the Spring of 2010.
A later report on planned responses to that analyzed data should be available shortly afterwards.

Update from Dr. George Denger, Feb 2010

On assessment: I notified our work study person, and she reports rapid progress on 2/3 of the assessment data from last semester [Fall 2009]. Specifically, we will have the interpersonal and small group assessment data entered and (in a preliminary way) analyzed by next week's gen-ed meeting, so you will have something to pass along to that august body.

The public speaking component will follow in the next few weeks. This element is considerably more cumbersome, since it involves the viewing (and scoring by standardized rubric) of all the videotaped speeches gathered for this purpose last semester. The viewing/scoring is done by all of the communication faculty, which we suspect will complicate the process. Nevertheless, we suspect that this broad-based involvement will provide benefits far outweighing the costs.