Notes 14 - Wharton Statistics Department

Statistics 550 Notes 14

Reading: Section 2.3-2.4

I. Review from last class (Conditions for Uniqueness and Existence of the MLE).

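Lemma 2.3.1: Suppose we are given a function $l : \Theta \to \mathbb{R}$, where $\Theta \subset \mathbb{R}^p$ is open and $l$ is continuous. Suppose also that

$$\lim\{l(\theta) : \theta \to \partial\Theta\} = -\infty.$$

Then there exists $\hat{\theta} \in \Theta$ such that

$$l(\hat{\theta}) = \max\{l(\theta) : \theta \in \Theta\}.$$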

Proposition 2.3.1: Suppose our model is that $X$ has pdf or pmf $p(x \mid \theta)$, $\theta \in \Theta$, and that (i) $l_x(\theta)$ is strictly concave; (ii) $l_x(\theta) \to -\infty$ as $\theta \to \partial\Theta$. Then the maximum likelihood estimator exists and is unique.

Corollary: If the conditions of Proposition 2.3.1 are satisfied and $l_x(\theta)$ is differentiable in $\theta$, then $\hat{\theta}_{MLE}$ is the unique solution to the estimating equation:

$$\nabla_\theta l_x(\theta) = 0 \qquad (1.1)$$

Note: It is the strict concavity of $l_x(\theta)$ that guarantees that $\nabla_\theta l_x(\theta) = 0$ has a unique solution.
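As a quick illustration of how the proposition and corollary are used, here is a worked example that is not part of the original notes. It assumes an i.i.d. Poisson($\lambda$) sample $x_1, \ldots, x_n$ with at least one $x_i > 0$:

```latex
\begin{align*}
l_x(\lambda) &= \Big(\sum_{i=1}^n x_i\Big)\log\lambda - n\lambda - \sum_{i=1}^n \log(x_i!),
  \qquad \lambda \in \Theta = (0,\infty),\\
l_x''(\lambda) &= -\frac{\sum_{i=1}^n x_i}{\lambda^2} < 0
  \quad\text{(strictly concave)},\\
l_x(\lambda) &\to -\infty \quad\text{as } \lambda \to 0 \text{ or } \lambda \to \infty,\\
l_x'(\lambda) &= \frac{\sum_{i=1}^n x_i}{\lambda} - n = 0
  \;\Longrightarrow\; \hat{\lambda}_{MLE} = \bar{x}.
\end{align*}
```

If every $x_i = 0$, condition (ii) fails as $\lambda \to 0$, and the log likelihood $-n\lambda$ has no maximizer in the open parameter space.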

II. Application to Exponential Families.

1. Theorem 1.6.4, Corollary 1.6.5: For a full exponential

family, the log likelihood is strictly concave.

Consider the exponential family

$$p(x \mid \eta) = h(x)\exp\Big\{\sum_{i=1}^k \eta_i T_i(x) - A(\eta)\Big\}.$$

Note that if $A(\eta)$ is convex, then the log likelihood

$$\log p(x \mid \eta) = \log h(x) + \sum_{i=1}^k \eta_i T_i(x) - A(\eta)$$

is concave in $\eta$.

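Proof that $A(\eta)$ is convex:

Recall that $A(\eta) = \log \int h(x)\exp\big[\sum_{i=1}^k \eta_i T_i(x)\big]\,dx$. To show that $A(\eta)$ is convex, we want to show that

$$A(\alpha\eta_1 + (1-\alpha)\eta_2) \le \alpha A(\eta_1) + (1-\alpha)A(\eta_2) \quad \text{for } 0 \le \alpha \le 1,$$

or equivalently

$$\exp\{A(\alpha\eta_1 + (1-\alpha)\eta_2)\} \le \exp\{\alpha A(\eta_1)\}\exp\{(1-\alpha)A(\eta_2)\}.$$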

We use Hölder’s Inequality to establish this. Hölder’s Inequality (B.9.4 on page 518 of Bickel and Doksum) states that for any two numbers $r$ and $s$ with $r, s > 1$, $r^{-1} + s^{-1} = 1$,

$$E|XY| \le \{E|X|^r\}^{1/r}\{E|Y|^s\}^{1/s}.$$

More generally, Hölder’s inequality states

$$\int |f(x)g(x)|\,h(x)\,dx \le \Big(\int |f(x)|^r h(x)\,dx\Big)^{1/r}\Big(\int |g(x)|^s h(x)\,dx\Big)^{1/s}.$$

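We have, applying Hölder’s inequality with $r = 1/\alpha$ and $s = 1/(1-\alpha)$ for $0 < \alpha < 1$ (the cases $\alpha = 0$ and $\alpha = 1$ are trivial),

$$\begin{aligned}
\exp\{A(\alpha\eta_1 + (1-\alpha)\eta_2)\}
&= \int \exp\Big[\sum_{i=1}^k (\alpha\eta_{1i} + (1-\alpha)\eta_{2i})T_i(x)\Big]h(x)\,dx \\
&= \int \exp\Big[\sum_{i=1}^k \alpha\eta_{1i}T_i(x)\Big]\exp\Big[\sum_{i=1}^k (1-\alpha)\eta_{2i}T_i(x)\Big]h(x)\,dx \\
&\le \Big(\int \Big(\exp\Big[\sum_{i=1}^k \alpha\eta_{1i}T_i(x)\Big]\Big)^{1/\alpha} h(x)\,dx\Big)^{\alpha}
     \Big(\int \Big(\exp\Big[\sum_{i=1}^k (1-\alpha)\eta_{2i}T_i(x)\Big]\Big)^{1/(1-\alpha)} h(x)\,dx\Big)^{1-\alpha} \\
&= \exp\{\alpha A(\eta_1)\}\exp\{(1-\alpha)A(\eta_2)\}.
\end{aligned}$$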
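The convexity inequality can also be checked numerically. The sketch below is only an illustration: it assumes a hypothetical one-parameter family with $h(x) = e^{-x^4}$ and $T(x) = x$ (neither appears in the notes), computes $A(\eta)$ by numerical integration, and compares both sides of the inequality on a grid of $\alpha$ values.

```r
# Hypothetical family chosen only for illustration: h(x) = exp(-x^4), T(x) = x.
A <- function(eta) {
  integrand <- function(x) exp(eta * x - x^4)
  log(integrate(integrand, lower = -Inf, upper = Inf)$value)
}

eta1 <- -1.5
eta2 <- 2.0
alpha <- seq(0, 1, by = 0.1)

# Left and right sides of A(a*eta1 + (1-a)*eta2) <= a*A(eta1) + (1-a)*A(eta2).
lhs <- sapply(alpha, function(a) A(a * eta1 + (1 - a) * eta2))
rhs <- alpha * A(eta1) + (1 - alpha) * A(eta2)

# Allow a small slack for quadrature error.
convex_ok <- all(lhs <= rhs + 1e-8)
print(convex_ok)
```

A check like this does not prove convexity; it only confirms that the inequality holds at the sampled points for this particular family.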

For a full exponential family, the log likelihood is strictly

concave.

For a curved exponential family, the log likelihood is

concave but not strictly concave.

2. Theorem 2.3.1, Corollary 2.3.2 spell out specific conditions under which $l_x(\theta) \to -\infty$ as $\theta \to \partial\Theta$ for exponential families.

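Example 1: Gamma distribution

$$f(x; \alpha, \beta) = \begin{cases} \dfrac{1}{\Gamma(\alpha)\beta^{\alpha}}\, x^{\alpha-1}e^{-x/\beta}, & 0 < x < \infty \\ 0, & \text{elsewhere} \end{cases}$$

$$l(\alpha, \beta) = \sum_{i=1}^n \big[-\log\Gamma(\alpha) - \alpha\log\beta + (\alpha-1)\log X_i - X_i/\beta\big]$$

for the parameter space $\alpha > 0$, $\beta > 0$.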
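The log likelihood above can be written directly as an R function and checked against R's built-in gamma density. This is a minimal sketch on simulated data; the function name `gamma_loglik` and the data `x_sim` are made up for illustration.

```r
# Log likelihood l(alpha, beta) for an i.i.d. gamma sample (shape alpha, scale beta).
gamma_loglik <- function(alpha, beta, x) {
  sum(-lgamma(alpha) - alpha * log(beta) + (alpha - 1) * log(x) - x / beta)
}

set.seed(1)                                   # simulated data, for illustration only
x_sim <- rgamma(50, shape = 2, scale = 1.5)

# Compare the hand-written formula with the built-in density.
ll_formula <- gamma_loglik(2, 1.5, x_sim)
ll_builtin <- sum(dgamma(x_sim, shape = 2, scale = 1.5, log = TRUE))
print(c(ll_formula, ll_builtin))
```

The two numbers should agree up to rounding, which confirms that $\beta$ enters as a scale parameter in this parametrization.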

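The gamma distribution is a full two-dimensional exponential family, so the log likelihood is strictly concave in the natural parameters. Because the natural parameters are a one-to-one function of $(\alpha, \beta)$, a unique maximizer in one parametrization gives a unique maximizer in the other.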

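The boundary of the parameter space is

$$\{(a, b) : a = \infty,\ 0 \le b \le \infty\} \cup \{(a, b) : a = 0,\ 0 \le b \le \infty\} \cup \{(a, b) : 0 \le a \le \infty,\ b = \infty\} \cup \{(a, b) : 0 \le a \le \infty,\ b = 0\}.$$

One can check that $\lim\{l(\theta) : \theta \to \partial\Theta\} = -\infty$.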

Thus, by Proposition 2.3.1, the MLE is the unique solution

to the likelihood equation.

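The partial derivatives of the log likelihood are

$$\frac{\partial l}{\partial \alpha} = \sum_{i=1}^n \Big[-\frac{\Gamma'(\alpha)}{\Gamma(\alpha)} - \log\beta + \log X_i\Big]$$

$$\frac{\partial l}{\partial \beta} = \sum_{i=1}^n \Big[-\frac{\alpha}{\beta} + \frac{X_i}{\beta^2}\Big]$$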

Setting the second of these partial derivatives (the one with respect to $\beta$) equal to zero, we find

$$\hat{\beta}_{MLE} = \frac{\sum_{i=1}^n X_i}{n\,\hat{\alpha}_{MLE}}.$$

When this solution is substituted into the first partial derivative, we obtain a nonlinear equation for the MLE of $\alpha$:

$$-n\frac{\Gamma'(\hat{\alpha}_{MLE})}{\Gamma(\hat{\alpha}_{MLE})} - n\log\frac{\sum_{i=1}^n X_i}{n} + n\log\hat{\alpha}_{MLE} + \sum_{i=1}^n \log X_i = 0.$$

This equation cannot be solved in closed form.

III. Numerical Methods for Finding MLEs

The Bisection Method

The bisection method is a method for finding the root of a one-dimensional function $f$ that is continuous and increasing on $(a, b)$ with $f(a) < 0 < f(b)$ (an analogous method can be used for $f$ decreasing).

Note: There is a root $x^*$ with $f(x^*) = 0$ by the intermediate value theorem.

Bisection Algorithm:

Decide on a tolerance $\epsilon > 0$ for $|x_{final} - x^*|$. Stop the algorithm when we find $x_{final}$.

1. Find $x_0, x_1$ such that $f(x_0) < 0$, $f(x_1) > 0$. Initialize $x^+_{old} = x_1$, $x^-_{old} = x_0$.

2. If $|x^+_{old} - x^-_{old}| < 2\epsilon$, set $x_{final} = \frac{1}{2}(x^+_{old} + x^-_{old})$ and return $x_{final}$. Else set $x_{new} = \frac{1}{2}(x^+_{old} + x^-_{old})$.

3. If $f(x_{new}) = 0$, set $x_{final} = x_{new}$. If $f(x_{new}) < 0$, set $x^-_{old} = x_{new}$ and go to step 2. If $f(x_{new}) > 0$, set $x^+_{old} = x_{new}$ and go to step 2.
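The steps above can be sketched in a few lines of R. This is an illustrative implementation, not the code used in the notes; the function name `bisect` and the test function $x^3 - 2$ are made up for the example.

```r
# Bisection for a continuous increasing f with f(x0) < 0 < f(x1).
bisect <- function(f, x0, x1, eps = 1e-8) {
  stopifnot(f(x0) < 0, f(x1) > 0)                  # step 1
  x_minus <- x0                                    # current point with f < 0
  x_plus  <- x1                                    # current point with f > 0
  while (abs(x_plus - x_minus) >= 2 * eps) {       # step 2
    x_new <- (x_plus + x_minus) / 2
    f_new <- f(x_new)
    if (f_new == 0) return(x_new)                  # step 3: exact root found
    if (f_new < 0) x_minus <- x_new else x_plus <- x_new
  }
  (x_plus + x_minus) / 2                           # midpoint of the final bracket
}

# Example: the root of x^3 - 2 on (0, 2) is the cube root of 2.
root <- bisect(function(x) x^3 - 2, x0 = 0, x1 = 2)
print(root)
```

The returned midpoint is within `eps` of the true root because the final bracket has width less than `2 * eps`.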

Lemma 2.4.1: The bisection algorithm stops at a solution $x_{final}$ such that

$$|x_{final} - x^*| \le \epsilon.$$

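Proof: If $x_m$ is the $m$th iterate of $x_{new}$,

$$|x_m - x_{m-1}| \le \frac{1}{2}|x_{m-1} - x_{m-2}| \le \cdots \le \frac{1}{2^{m-1}}|x_1 - x_0|.$$

Moreover, by the intermediate value theorem, $x^*$ always lies in the current bracketing interval, which has $x_m$ as an endpoint and $x_{m+1}$ as its midpoint. Therefore,

$$|x_{m+1} - x^*| \le |x_{m+1} - x_m| \le 2^{-m}|x_1 - x_0|.$$

For $m \ge \log_2(|x_1 - x_0| / \epsilon)$, we have $|x_{m+1} - x^*| \le \epsilon$.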

Note: Bisection can be much more efficient than the approach of specifying a grid of points between $a$ and $b$ and evaluating $f$ at each grid point: to find the root to within $\epsilon$, a grid of size $|x_1 - x_0| / \epsilon$ is required, while bisection requires only about $\log_2(|x_1 - x_0| / \epsilon)$ evaluations of $f$.
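To make the comparison concrete, the two evaluation counts can be computed for a hypothetical bracket of width 1 and tolerance $10^{-6}$ (both values are chosen only for illustration):

```r
width <- 1        # |x1 - x0|, hypothetical value
eps   <- 1e-6     # tolerance, hypothetical value

grid_evals      <- ceiling(width / eps)          # grid search
bisection_evals <- ceiling(log2(width / eps))    # bisection
print(c(grid = grid_evals, bisection = bisection_evals))
```

With these values the grid needs about a million evaluations of $f$, while bisection needs about twenty.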

Coordinate Ascent Method

The coordinate ascent method is an approach to finding the maximum likelihood estimate in a multidimensional family. Suppose we have a k-dimensional parameter $\theta = (\theta_1, \ldots, \theta_k)$. The coordinate ascent method is:

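Choose an initial estimate $(\hat{\theta}_1, \ldots, \hat{\theta}_k)$.

0. Set $(\hat{\theta}_1, \ldots, \hat{\theta}_k)_{old} = (\hat{\theta}_1, \ldots, \hat{\theta}_k)$.

1. Maximize $l_x(\theta_1, \hat{\theta}_2, \ldots, \hat{\theta}_k)$ over $\theta_1$ using the bisection method by solving $\frac{\partial}{\partial\theta_1} l_x(\theta_1, \hat{\theta}_2, \ldots, \hat{\theta}_k) = 0$ (assuming the log likelihood is differentiable). Reset $\hat{\theta}_1$ to the $\theta_1$ that maximizes $l_x(\theta_1, \hat{\theta}_2, \ldots, \hat{\theta}_k)$.

2. Maximize $l_x(\hat{\theta}_1, \theta_2, \hat{\theta}_3, \ldots, \hat{\theta}_k)$ over $\theta_2$ using the bisection method. Reset $\hat{\theta}_2$ to the $\theta_2$ that maximizes $l_x(\hat{\theta}_1, \theta_2, \hat{\theta}_3, \ldots, \hat{\theta}_k)$.

....

K. Maximize $l_x(\hat{\theta}_1, \hat{\theta}_2, \hat{\theta}_3, \ldots, \hat{\theta}_{k-1}, \theta_k)$ over $\theta_k$ using the bisection method. Reset $\hat{\theta}_k$ to the $\theta_k$ that maximizes $l_x(\hat{\theta}_1, \hat{\theta}_2, \hat{\theta}_3, \ldots, \hat{\theta}_{k-1}, \theta_k)$.

K+1. Stop if the distance between $(\hat{\theta}_1, \ldots, \hat{\theta}_k)_{old}$ and $(\hat{\theta}_1, \ldots, \hat{\theta}_k)$ is less than some tolerance $\epsilon$. Otherwise return to step 0.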

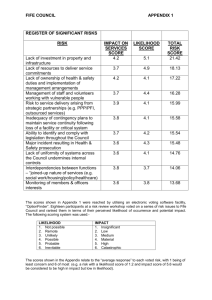

The coordinate ascent method converges to the maximum

likelihood estimate when the log likelihood function is

strictly concave on the parameter space (see diagram

below).
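As an illustration of the coordinate ascent steps, the sketch below applies them to the gamma model of Example 1. It runs on simulated data (`x_sim` is a made-up stand-in, not the data from the notes), uses `uniroot` for each one-dimensional maximization, and assumes the search interval `c(1e-3, 1e3)` brackets each root.

```r
# Coordinate ascent for the gamma(alpha, beta) log likelihood on simulated data.
set.seed(2)
x_sim <- rgamma(200, shape = 3, scale = 2)     # hypothetical data
n <- length(x_sim)

# Partial derivatives of the log likelihood in each coordinate.
score_alpha <- function(alpha, beta) -n * digamma(alpha) - n * log(beta) + sum(log(x_sim))
score_beta  <- function(beta, alpha) -n * alpha / beta + sum(x_sim) / beta^2

theta <- c(alpha = 1, beta = mean(x_sim))      # initial estimate
for (iter in 1:5000) {
  theta_old <- theta                                              # step 0
  theta[["alpha"]] <- uniroot(score_alpha, c(1e-3, 1e3),          # step 1
                              beta = theta[["beta"]], tol = 1e-12)$root
  theta[["beta"]]  <- uniroot(score_beta, c(1e-3, 1e3),           # step 2
                              alpha = theta[["alpha"]], tol = 1e-12)$root
  if (sqrt(sum((theta - theta_old)^2)) < 1e-8) break              # step K+1
}
print(theta)
print(iter)
```

Convergence can take many sweeps here because $\alpha$ and $\beta$ are strongly dependent in the likelihood; at the limit the estimates should satisfy $\hat{\alpha}\hat{\beta} = \bar{X}$.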

Newton’s Method

Newton’s method is a numerical method for approximating solutions to equations. The method produces a sequence of values $\theta^{(0)}, \theta^{(1)}, \ldots$ that, under ideal conditions, converges to the MLE $\hat{\theta}_{MLE}$.

To motivate the method, we expand the derivative of the log likelihood around $\theta^{(j)}$:

$$0 = l'(\hat{\theta}_{MLE}) \approx l'(\theta^{(j)}) + (\hat{\theta}_{MLE} - \theta^{(j)})\,l''(\theta^{(j)}).$$

Solving for $\hat{\theta}_{MLE}$ gives

$$\hat{\theta}_{MLE} \approx \theta^{(j)} - \frac{l'(\theta^{(j)})}{l''(\theta^{(j)})}.$$

This suggests the following iterative scheme:

$$\theta^{(j+1)} = \theta^{(j)} - \frac{l'(\theta^{(j)})}{l''(\theta^{(j)})}.$$

Newton’s method can be extended to more than one dimension (usually called Newton-Raphson):

$$\theta^{(j+1)} = \theta^{(j)} - \big[\nabla^2 l(\theta^{(j)})\big]^{-1}\nabla l(\theta^{(j)}),$$

where $\nabla l$ denotes the gradient vector of the log likelihood and $\nabla^2 l$ denotes the Hessian.
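A one-dimensional version of the iteration can be sketched for the gamma shape parameter, using the likelihood equation for $\alpha$ obtained after substituting $\hat{\beta} = \bar{X}/\alpha$. This is an illustration on simulated data (`x_sim` is a made-up stand-in); the function `g` plays the role of $l'$ and `dg` the role of $l''$, and the starting value is the method of moments estimate.

```r
# Newton's method for the profile likelihood equation of the gamma shape parameter.
set.seed(3)
x_sim <- rgamma(200, shape = 3, scale = 2)     # hypothetical data
n <- length(x_sim)

g  <- function(a) -n * digamma(a) - n * log(mean(x_sim)) + n * log(a) + sum(log(x_sim))
dg <- function(a) -n * trigamma(a) + n / a     # derivative of g

# Start from the method of moments estimate of alpha.
a <- mean(x_sim)^2 / (mean(x_sim^2) - mean(x_sim)^2)
for (j in 1:100) {
  step <- g(a) / dg(a)
  a <- a - step                                # Newton update
  if (abs(step) < 1e-10) break
}
alpha_newton <- a
beta_newton  <- mean(x_sim) / alpha_newton
print(c(alpha = alpha_newton, beta = beta_newton, iterations = j))
```

From a reasonable starting value the iteration typically needs only a handful of steps, far fewer than bisection needs for the same accuracy.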

Comments on methods for finding the MLE:

1. The bisection method is guaranteed to converge if there

is a unique root in the interval being searched over but is

slower than Newton’s method.

2. Newton’s method:

A. The method does not work if $l''(\theta^{(j)}) = 0$.

B. The method does not always converge.

See attached pages from Numerical Recipes in C book.

3. For the coordinate ascent method and Newton’s method,

a good choice of starting values is often the method of

moments estimator.

4. When there are multiple roots to the likelihood equation,

the solution found by the bisection method, the coordinate

ascent method and Newton’s method depends on the

starting value. These algorithms might converge to a local

maximum (or a saddlepoint) rather than a global maximum.

5. The EM (Expectation/Maximization) algorithm (Section

2.4.4) is another approach to finding the MLE that is

particularly suitable when part of the data is missing.

Numerical Examples:

Example 1: MLE for the gamma distribution

In a study of the natural variability of rainfall, the rainfall

of summer storms was measured by a network of rain

gauges in southern Illinois for the years 1960-1964. 227

measurements were taken.

R program for finding the maximum likelihood estimate

using the bisection method.

digamma(x) = function in R that computes the derivative of the log of the gamma function of x, $\Gamma'(x)/\Gamma(x)$.

uniroot(f,interval) = function in R that finds the approximate zero of a function in the interval using a bisection-type method (a bracketing root finder based on Brent's algorithm).

alphahatfunc=function(alpha,xvec){

n=length(xvec);

eq=-n*digamma(alpha)-n*log(mean(xvec))+n*log(alpha)+sum(log(xvec));

eq;

}

> alphahatfunc(.3779155,illinoisrainfall)

[1] 65.25308

> alphahatfunc(.5,illinoisrainfall)

[1] -45.27781

alpharoot=uniroot(alphahatfunc,interval=c(.377,.5),xvec=illinoisrainfall)

> alpharoot

$root

[1] 0.4407967

$f.root

[1] -0.004515694

$iter

[1] 4

$estim.prec

[1] 6.103516e-05

betahatmle=mean(illinoisrainfall)/.4407967

[1] 0.5090602

$\hat{\alpha}_{MLE} = .4408$

$\hat{\beta}_{MLE} = .5091$

Comparison with method of moments:

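$E(X_i) = \alpha\beta$

$Var(X_i) = \alpha\beta^2$, $E(X_i^2) = \alpha\beta^2 + \alpha^2\beta^2$

Substituting $\alpha = E(X_i)/\beta$ into the expression for $E(X_i^2)$, we obtain

$$E(X_i^2) = E(X_i)\,\beta + [E(X_i)]^2, \qquad \beta = \frac{E(X_i^2) - [E(X_i)]^2}{E(X_i)}.$$

Thus,

$$\hat{\beta}_{MOM} = \frac{\frac{1}{n}\sum_{i=1}^n X_i^2 - \big(\frac{1}{n}\sum_{i=1}^n X_i\big)^2}{\frac{1}{n}\sum_{i=1}^n X_i}$$

$$\hat{\alpha}_{MOM} = \frac{\bar{X}}{\hat{\beta}_{MOM}} = \frac{\big(\frac{1}{n}\sum_{i=1}^n X_i\big)^2}{\frac{1}{n}\sum_{i=1}^n X_i^2 - \big(\frac{1}{n}\sum_{i=1}^n X_i\big)^2}$$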

betahatmom=(mean(illinoisrainfall^2)-(mean(illinoisrainfall))^2)/mean(illinoisrainfall)

> betahatmom

[1] 0.5937626

alphahatmom=(mean(illinoisrainfall))^2/(mean(illinoisrainfall^2)-(mean(illinoisrainfall))^2)

> alphahatmom

[1] 0.3779155
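The two method of moments formulas can be wrapped in a small helper. The sketch below is illustrative only: the function name `gamma_mom` is made up, and because the rainfall data are not reproduced in these notes it runs on simulated data.

```r
# Method of moments estimates for the gamma(alpha, beta) model.
gamma_mom <- function(x) {
  m1 <- mean(x)          # first sample moment
  m2 <- mean(x^2)        # second sample moment
  beta_hat  <- (m2 - m1^2) / m1
  alpha_hat <- m1^2 / (m2 - m1^2)
  c(alpha = alpha_hat, beta = beta_hat)
}

set.seed(4)
x_sim <- rgamma(500, shape = 0.5, scale = 0.5)   # simulated stand-in data
mom <- gamma_mom(x_sim)
print(mom)
```

By construction the product of the two estimates equals the sample mean, which gives a quick sanity check on any implementation.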

Example 2: MLE for Cauchy Distribution

Cauchy model:

$$p(x \mid \theta) = \frac{1}{\pi\,(1 + (x - \theta)^2)}, \quad -\infty < x < \infty$$

Suppose $X_1, X_2, X_3$ are iid Cauchy($\theta$) and we observe $X_1 = 0$, $X_2 = 1$, $X_3 = 10$.

The log likelihood is not concave: it has two local maxima between 0 and 10, with a local minimum between them.

The likelihood equation is

$$l_x'(\theta) = \sum_{i=1}^3 \frac{2(x_i - \theta)}{1 + (x_i - \theta)^2} = 0$$

The local maximum (i.e., the solution to the likelihood

equation) that the bisection method finds depends on the

interval searched over.

R program to use bisection method

derivloglikfunc=function(theta,x1,x2,x3){

dloglikx1=2*(x1-theta)/(1+(x1-theta)^2);

dloglikx2=2*(x2-theta)/(1+(x2-theta)^2);

dloglikx3=2*(x3-theta)/(1+(x3-theta)^2);

dloglikx1+dloglikx2+dloglikx3;

}

When the starting points for the bisection method are $x_0 = 0$, $x_1 = 5$, the bisection method finds the MLE:

uniroot(derivloglikfunc,interval=c(0,5),x1=0,x2=1,x3=10);

$root

[1] 0.6092127

When the starting points for the bisection method are $x_0 = 0$, $x_1 = 10$, the bisection method finds a local maximum but not the MLE:

uniroot(derivloglikfunc,interval=c(0,10),x1=0,x2=1,x3=10);

$root

[1] 9.775498
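One way to guard against this dependence on the search interval is to locate every root of the likelihood equation and compare log likelihood values. The sketch below is an illustration rather than part of the original program: it scans a grid (the range and spacing are arbitrary choices) for sign changes of the score, refines each bracket with `uniroot`, and picks the root with the largest log likelihood.

```r
x_obs <- c(0, 1, 10)

# Cauchy log likelihood and its derivative for the three observations.
loglik <- function(theta) sum(-log(pi) - log(1 + (x_obs - theta)^2))
score  <- function(theta) sum(2 * (x_obs - theta) / (1 + (x_obs - theta)^2))

# Locate sign changes of the score on a grid, then refine each with uniroot.
grid <- seq(-1, 11, by = 0.01)
s <- sapply(grid, score)
idx <- which(s[-1] * s[-length(s)] < 0)
roots <- sapply(idx, function(i) uniroot(score, c(grid[i], grid[i + 1]), tol = 1e-10)$root)

result <- data.frame(root = roots, loglik = sapply(roots, loglik))
print(result)

# The MLE is the root with the largest log likelihood.
theta_mle <- roots[which.max(result$loglik)]
print(theta_mle)
```

A grid scan like this can miss roots that lie closer together than the grid spacing, so it is a practical check rather than a guarantee.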
